Installing a Pseudo-Distributed Hadoop Environment on Ubuntu 18.04.4

Install and configure the Java environment

Download and extract the JDK

Download link: https://pan.baidu.com/s/1OAkGjw5g2r5zkUu7h4Zqow

Extraction code: 66jh

Create the /usr/java directory

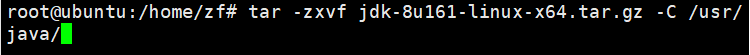

sudo tar -zxvf jdk-8u161-linux-x64.tar.gz -C /usr/java/

配置环境变量

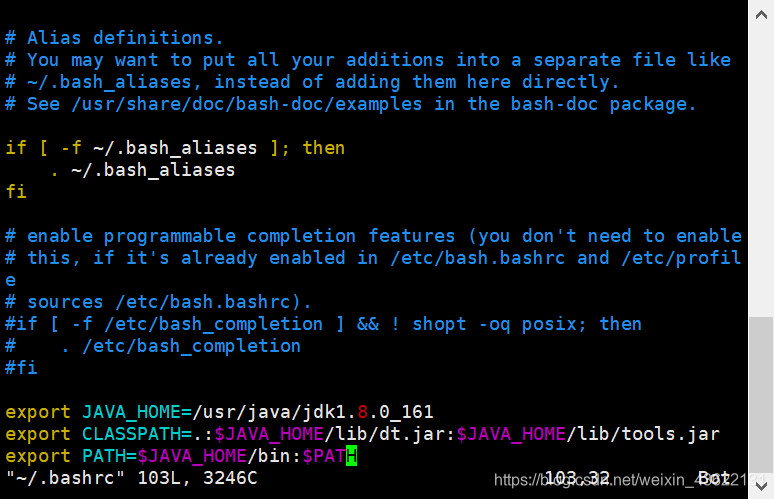

sudo vim ~/.bashrc

在最后加上下列内容(一定注意环境变量后面不要加空格,否则容易出问题,jdk版本号看自己的版本,如果用网盘里的就不用更改)

export JAVA_HOME=/usr/java/jdk1.8.0_161

export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

export PATH=$JAVA_HOME/bin:$PATH

Run the following command to apply the environment variables to the current shell

source ~/.bashrc
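Editing ~/.bashrc by hand works, but repeating the tutorial can leave duplicate export lines behind. The following is a minimal sketch of an idempotent alternative; the helper name append_once is hypothetical and not part of any standard tool.

```shell
#!/usr/bin/env bash
# append_once LINE FILE
# Hypothetical helper: append LINE to FILE only if that exact line
# is not already present, so re-running the setup stays harmless.
append_once() {
  local line="$1" file="$2"
  touch "$file"
  # -x: match the whole line, -F: treat LINE as a fixed string, -q: quiet
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}
```

For example, append_once 'export JAVA_HOME=/usr/java/jdk1.8.0_161' ~/.bashrc adds the line on the first call and does nothing on later calls.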

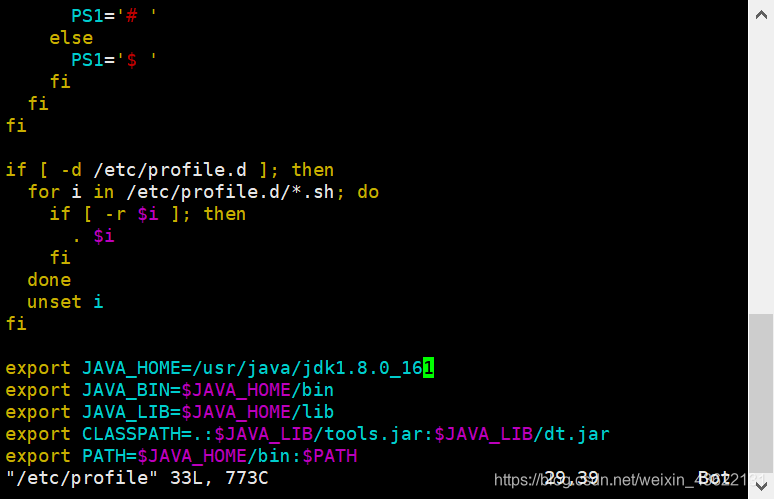

sudo vim /etc/profile

Insert the following lines (again, check the JDK version number)

export JAVA_HOME=/usr/java/jdk1.8.0_161

export JAVA_BIN=$JAVA_HOME/bin

export JAVA_LIB=$JAVA_HOME/lib

export CLASSPATH=.:$JAVA_LIB/tools.jar:$JAVA_LIB/dt.jar

export PATH=$JAVA_HOME/bin:$PATH

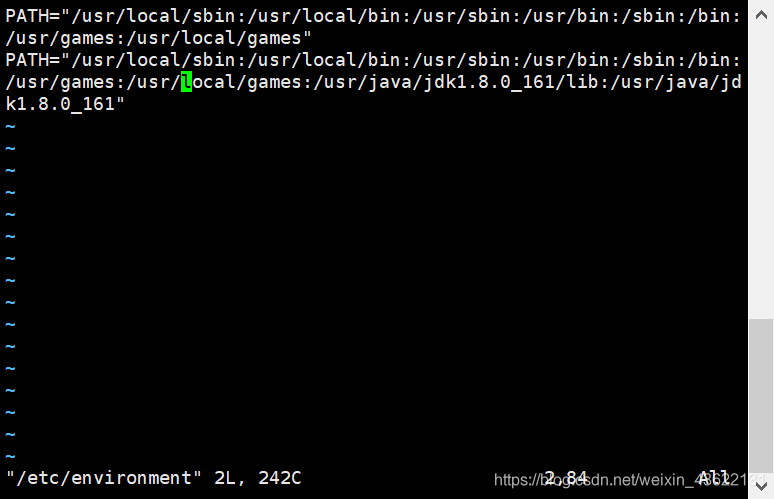

sudo vim /etc/environment

Append the JDK bin directory to the PATH line so that it reads as follows

PATH="/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games:/usr/java/jdk1.8.0_161/bin"

Run the following command to apply the setting

source /etc/environment

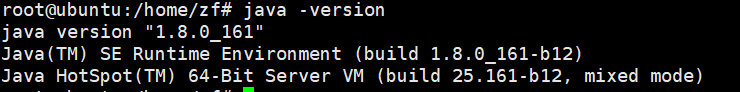

Verify that the Java environment is configured correctly

java -version
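If java -version fails, the usual cause is a JAVA_HOME that does not point at the extracted JDK. As a rough sketch, the check below tests whether a java executable exists under the given directory; the function name check_java_home is an assumption made for illustration.

```shell
#!/usr/bin/env bash
# check_java_home [DIR]
# Hypothetical helper: succeeds if DIR (default: $JAVA_HOME) contains
# an executable bin/java, and prints a hint on stderr otherwise.
check_java_home() {
  local home="${1:-$JAVA_HOME}"
  if [ -z "$home" ]; then
    echo "JAVA_HOME is not set" >&2
    return 1
  fi
  if [ ! -x "$home/bin/java" ]; then
    echo "no executable java found under $home/bin" >&2
    return 1
  fi
  echo "JAVA_HOME looks valid: $home"
}
```

Calling check_java_home with no argument checks the value exported in ~/.bashrc for the current shell.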

Install ssh-server and set up passwordless login

Install ssh-server

sudo apt-get install openssh-server

Start ssh

sudo /etc/init.d/ssh start

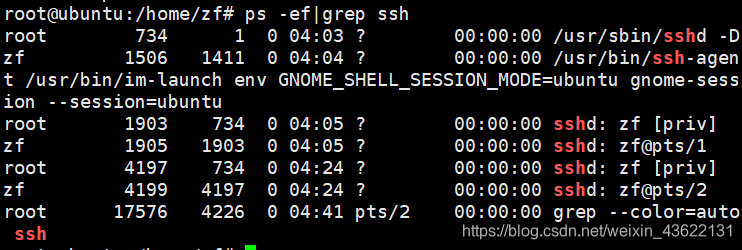

Check that the ssh service is running; if the output lists an sshd process, the service started successfully

ps -ef|grep ssh

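Set up passwordless login

Run the following command and press Enter at every prompt to accept the defaults

ssh-keygen -t rsa

Append the public key to authorized_keys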

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

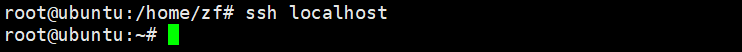

Test that login no longer asks for a password

ssh localhost
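If ssh localhost still prompts for a password, one common cause is overly open permissions: with its default StrictModes setting, sshd ignores an authorized_keys file that other users can write to. The sketch below tightens the permissions; the function name secure_ssh_dir is hypothetical.

```shell
#!/usr/bin/env bash
# secure_ssh_dir [DIR]
# Hypothetical helper: restrict DIR (default: ~/.ssh) to the owner and
# make authorized_keys readable and writable by the owner only.
secure_ssh_dir() {
  local dir="${1:-$HOME/.ssh}"
  chmod 700 "$dir" || return 1
  if [ -f "$dir/authorized_keys" ]; then
    chmod 600 "$dir/authorized_keys" || return 1
  fi
}
```

After running secure_ssh_dir, the command ssh -o BatchMode=yes localhost true gives a non-interactive check: it fails instead of prompting when key-based login is not working.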

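Install Hadoop in standalone and pseudo-distributed mode

Download hadoop-2.7.4.tar.gz

Download link: https://pan.baidu.com/s/1MnRYcE22faZpFxS6BM6yWQ

Extraction code: nu01

Extract it to /usr/local

Then change to /usr/local, rename hadoop-2.7.4 to hadoop, and set the access permissions on /usr/local/hadoop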

cd /usr/local

sudo mv hadoop-2.7.4 hadoop

sudo chmod 777 -R /usr/local/hadoop
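The extract and rename steps above can be sketched as a single helper. This is only an illustration under the assumption that the archive contains exactly one top-level directory (as hadoop-2.7.4.tar.gz does); the function name install_tarball is hypothetical, and writing to /usr/local requires running it from a shell with root privileges.

```shell
#!/usr/bin/env bash
# install_tarball ARCHIVE PARENT NAME
# Hypothetical helper: extract a .tar.gz ARCHIVE into PARENT and rename
# its single top-level directory to NAME.
install_tarball() {
  local archive="$1" parent="$2" name="$3" top
  # Take the first path component of the first archive entry.
  top=$(tar -tzf "$archive" | awk -F/ 'NR == 1 { print $1 }') || return 1
  [ -n "$top" ] || return 1
  tar -xzf "$archive" -C "$parent" || return 1
  # Rename only when the extracted directory is not already called NAME.
  [ "$top" = "$name" ] || mv "$parent/$top" "$parent/$name"
}
```

For this tutorial the call would be install_tarball hadoop-2.7.4.tar.gz /usr/local hadoop. As a design note, handing ownership to your own user with sudo chown -R "$USER" /usr/local/hadoop is a narrower alternative to mode 777.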

Configure the .bashrc file

vim ~/.bashrc

Append the following lines at the end of the file

export HADOOP_INSTALL=/usr/local/hadoop

export PATH=$PATH:$HADOOP_INSTALL/bin

export PATH=$PATH:$HADOOP_INSTALL/sbin

export HADOOP_MAPRED_HOME=$HADOOP_INSTALL

export HADOOP_COMMON_HOME=$HADOOP_INSTALL

export HADOOP_HDFS_HOME=$HADOOP_INSTALL

export YARN_HOME=$HADOOP_INSTALL

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_INSTALL/lib/native

export HADOOP_OPTS="-Djava.library.path=$HADOOP_INSTALL/lib"

Run the following command to apply the environment variables

source ~/.bashrc

Set up pseudo-distributed mode

sudo vim /usr/local/hadoop/etc/hadoop/hadoop-env.sh

Append the following lines at the end of the file

export JAVA_HOME=/usr/java/jdk1.8.0_161

export HADOOP=/usr/local/hadoop

export PATH=$PATH:/usr/local/hadoop/bin

sudo vim /usr/local/hadoop/etc/hadoop/yarn-env.sh

Append the following line at the end of the file

JAVA_HOME=/usr/java/jdk1.8.0_161

sudo vim /usr/local/hadoop/etc/hadoop/core-site.xml

Replace the existing empty <configuration></configuration> element with the following

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/usr/local/hadoop/tmp</value>

<description>Abase for other temporary directories.</description>

</property>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

</configuration>

sudo vim /usr/local/hadoop/etc/hadoop/hdfs-site.xml

Likewise, replace the existing empty <configuration></configuration> element with the following

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/usr/local/hadoop/tmp/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/usr/local/hadoop/tmp/dfs/data</value>

</property>

</configuration>

sudo vim /usr/local/hadoop/etc/hadoop/yarn-site.xml

Again, replace the existing empty <configuration></configuration> element with the following

<configuration>

<!-- Site specific YARN configuration properties -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>127.0.0.1:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>127.0.0.1:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>127.0.0.1:8031</value>

</property>

</configuration>
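A typo in one of the site files usually only surfaces when the daemons fail to start, so it can help to read a value back right after editing. The sketch below is a rough, hypothetical helper (get_property is not a Hadoop command) and assumes each name and value tag sits on its own line, as in the listings above.

```shell
#!/usr/bin/env bash
# get_property FILE NAME
# Hypothetical helper: print the <value> that follows <name>NAME</name>
# in a Hadoop site file. Assumes one tag per line; not a real XML parser.
get_property() {
  awk -v name="$2" '
    /<name>/  { gsub(/.*<name>|<\/name>.*/, "");  current = $0 }
    /<value>/ { gsub(/.*<value>|<\/value>.*/, "")
                if (current == name) { print; exit } }
  ' "$1"
}
```

For example, get_property /usr/local/hadoop/etc/hadoop/core-site.xml fs.defaultFS should print hdfs://localhost:9000 with the configuration above. Once the Hadoop commands are on the PATH, hdfs getconf -confKey fs.defaultFS reports the value Hadoop itself resolves.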

Reboot the machine

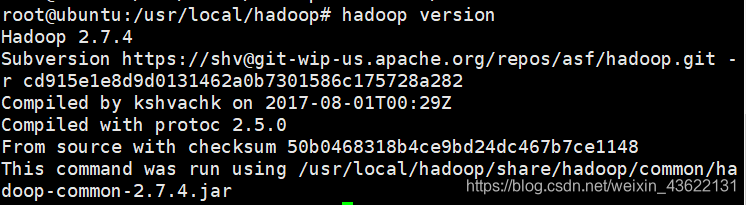

Verify that Hadoop is installed and configured correctly

Verify that Hadoop is installed correctly in standalone mode

hadoop version

Start HDFS in pseudo-distributed mode

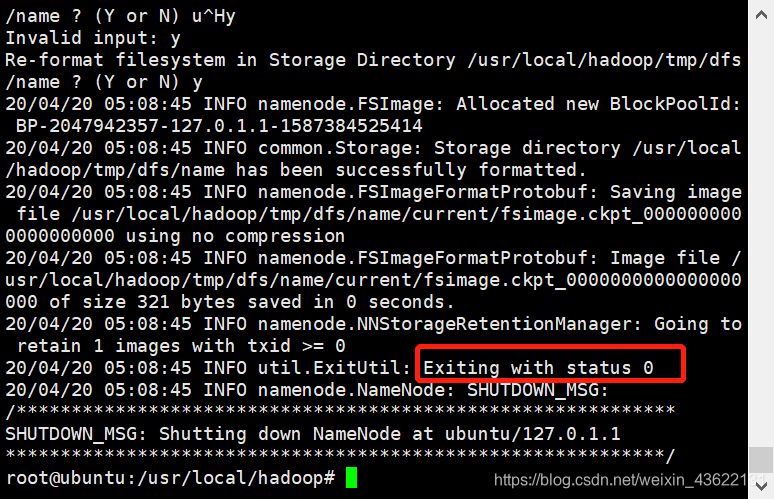

hdfs namenode -format

"Exiting with status 0" in the output indicates success
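Formatting is meant to be done once. Running it again on an existing installation generates a new cluster ID, after which a DataNode holding data from the old one typically refuses to start. As a hedged sketch, the guard below checks for the VERSION file that a formatted NameNode directory contains; the function name namenode_formatted is hypothetical, and the default path is the dfs.namenode.name.dir configured earlier.

```shell
#!/usr/bin/env bash
# namenode_formatted [DIR]
# Hypothetical helper: succeeds if the NameNode directory DIR already
# holds a current/VERSION file, i.e. it has been formatted before.
namenode_formatted() {
  local name_dir="${1:-/usr/local/hadoop/tmp/dfs/name}"
  [ -f "$name_dir/current/VERSION" ]
}
```

With this in place, namenode_formatted || hdfs namenode -format formats only a fresh installation.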

Start HDFS and YARN

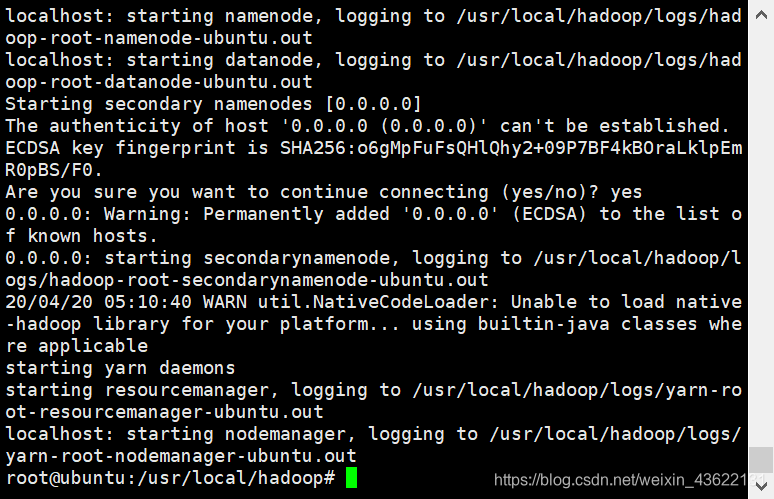

start-all.sh

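List the running Java processes

jps

Six processes should be listed: NameNode, DataNode, SecondaryNameNode, ResourceManager, NodeManager, and Jps itself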
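Rather than counting lines by eye, the jps output can be checked for each expected daemon by name. This is a small sketch; missing_daemons is a hypothetical helper that reads jps output on standard input and prints whichever of the five Hadoop daemons is absent.

```shell
#!/usr/bin/env bash
# missing_daemons
# Hypothetical helper: read `jps` output ("PID Name" per line) from
# stdin and print every expected Hadoop daemon that is not listed.
missing_daemons() {
  local output daemon
  output=$(cat)
  for daemon in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
    # Compare the second column exactly, so NameNode does not match
    # SecondaryNameNode.
    printf '%s\n' "$output" \
      | awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }' \
      || echo "$daemon"
  done
}
```

Usage: jps | missing_daemons. Empty output means all five daemons are running; a printed name points at the log file to inspect under /usr/local/hadoop/logs.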

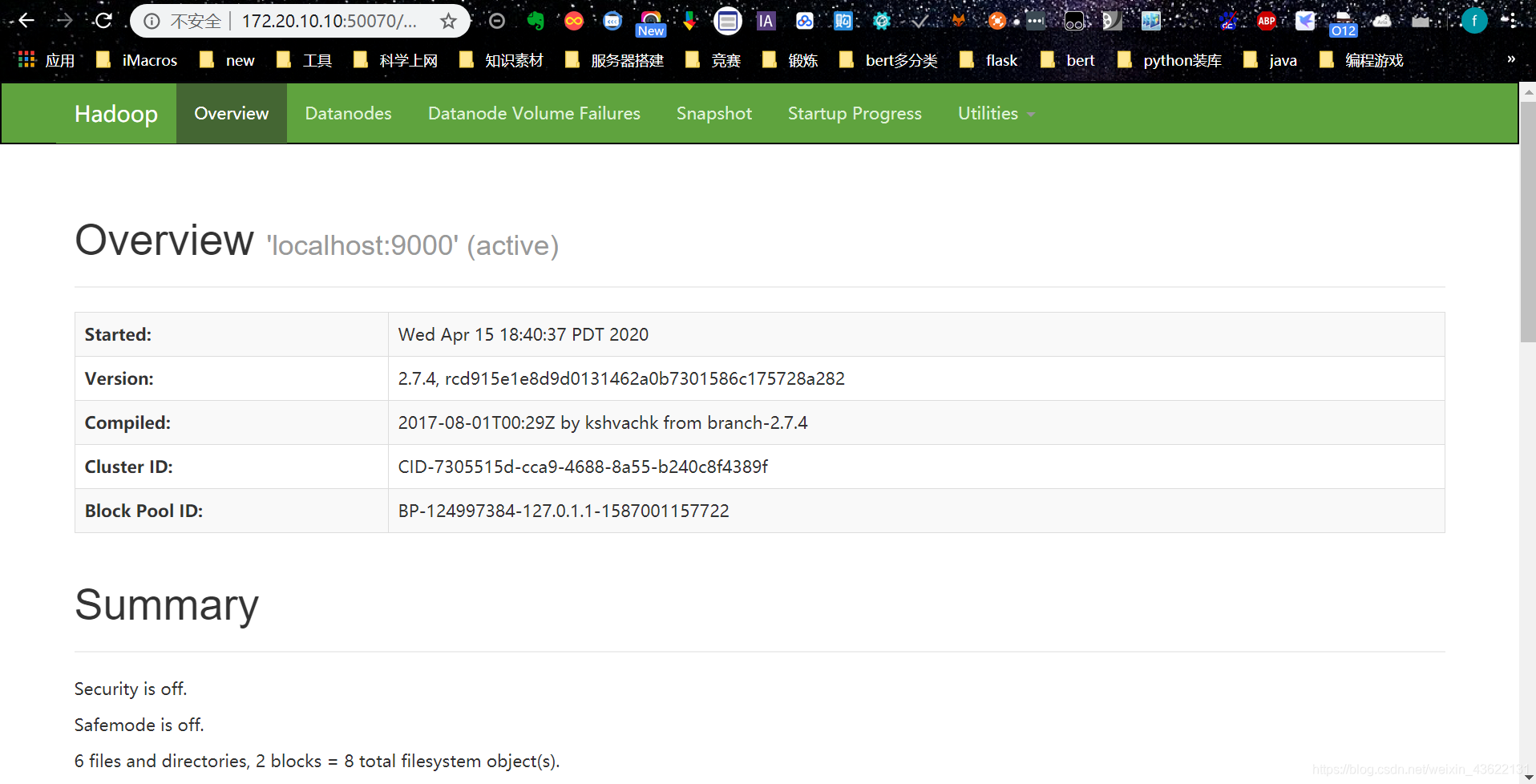

Open http://localhost:50070/ in a browser; the NameNode page shown below should appear

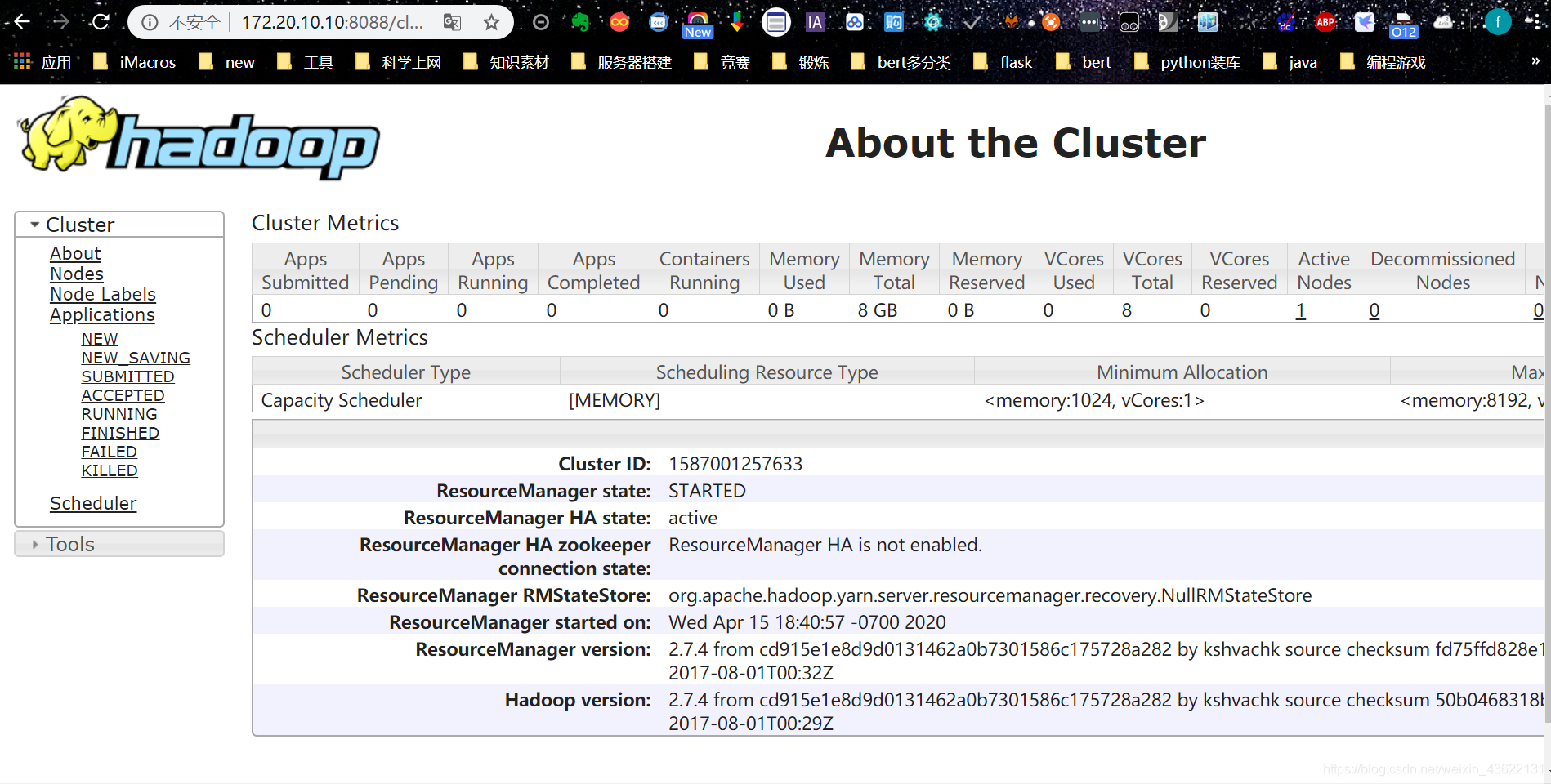

Open http://localhost:8088/ in a browser; the YARN ResourceManager page should appear

Word count with Hadoop

Start HDFS

start-all.sh

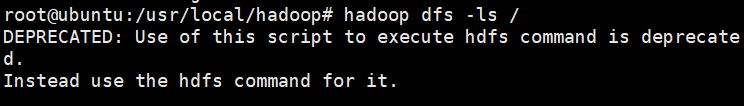

List the files and directories in the HDFS root

hdfs dfs -ls /

On the first run the listing is empty

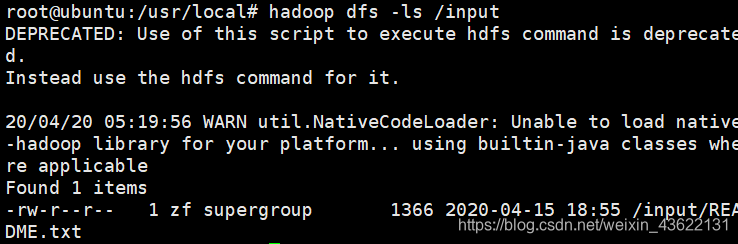

Create a directory named input in HDFS and upload /usr/local/hadoop/README.txt into it; listing the root again now shows the new input directory

hdfs dfs -mkdir /input

hadoop fs -put /usr/local/hadoop/README.txt /input

hdfs dfs -ls /input

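Run the following command to execute wordcount and write the result to /output. The /output directory must not exist yet, otherwise the job fails; remove an old one first with hdfs dfs -rm -r /output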

hadoop jar /usr/local/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.4.jar wordcount /input /output

View the word count result

hadoop fs -cat /output/part-r-00000
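To sanity-check the job result, the same count can be approximated locally with standard tools. The sketch below assumes the example job splits words on whitespace only and writes word, tab, count lines sorted by byte order; local_wordcount is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# local_wordcount FILE
# Hypothetical helper: count whitespace-separated words in FILE and
# print "word<TAB>count" lines sorted in byte order.
local_wordcount() {
  tr -s '[:space:]' '\n' < "$1" \
    | grep -v '^$' \
    | LC_ALL=C sort \
    | uniq -c \
    | awk '{ print $2 "\t" $1 }'
}
```

Running local_wordcount /usr/local/hadoop/README.txt and comparing it against the HDFS output with diff is a quick way to confirm the job processed the whole file.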