Seata

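Seata is an open-source distributed transaction solution that aims to provide high-performance, easy-to-use distributed transaction services for microservice architectures.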

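This post records and summarizes the pitfalls I ran into while developing with Seata. It does not cover how Seata works; if you are interested, see the official documentation.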

Background

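When you adopt a distributed architecture, you inevitably run into distributed transactions. There are many mature solutions on the market; for this project I chose Seata, open-sourced by Alibaba. The main reasons are that Alibaba is currently committed to building out its own distributed ecosystem, that many Spring Cloud components are no longer being updated, and that Alibaba's open-source components are more stable and still actively maintained. So I went with the Alibaba stack.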

Choosing a Seata version

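At the time of writing, the latest Seata release is 1.2.0 (2020-04-20). This project uses Seata 1.1.0 (2020-02-19), out of concern that the newest release might not yet be stable.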

Seata download page:

Link: http://seata.io/zh-cn/blog/download.html

Seata in practice

I ran into a problem while developing with Seata. The notes below record it and summarize what I learned.

1. Problem description

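I installed and configured Seata according to the official documentation and registered three microservices under the same global transaction group, named fsp_tx_group. The Seata server and all the microservices started normally, yet global transaction rollback failed. The details are as follows: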

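The business logic is: microservice A writes to database A, then calls microservice B, which writes to database B, and finally microservice C is called and writes to database C. These three calls make up one global transaction, so if any step fails, databases A, B, and C must all roll back. Even with everything configured as documented, database C consistently failed to roll back: databases A and B were rolled back, but the changes in database C remained.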

2. Locating the problem

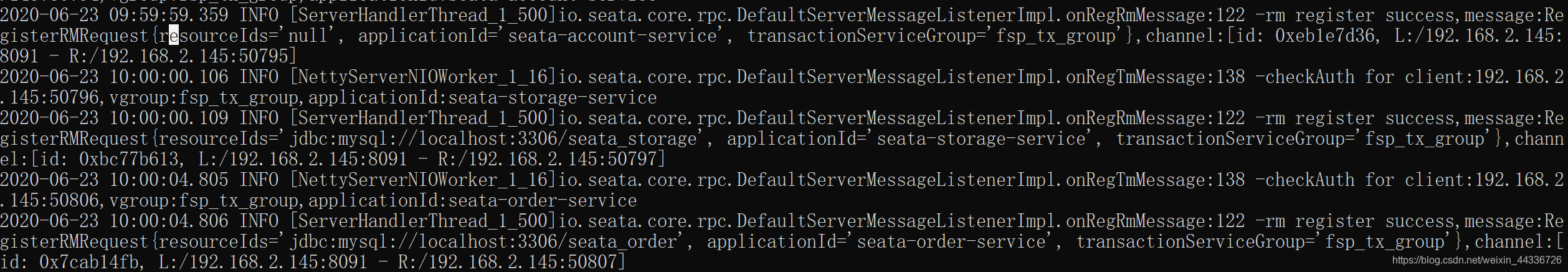

经排查,代码和配置文件并未出错,但是在Seata服务端的启动日志里发现一些端倪,如下图:

大图如下:

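The address registered by one RM (Resource Manager) was wrong. In the log, the lower two microservices registered against their MySQL databases, but the RM at the top did not. The RM is one of Seata's three main components; see the official documentation for the components and how they work.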

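But why does failing to register against the database make rollback impossible? The database contains a table named undo_log, which records a snapshot of the data before the change, the global transaction ID, and related information. When the RM cannot connect to the database, it cannot write this information to undo_log, and the rollback therefore fails.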

The undo_log table definition is given in the official documentation and is created when the development environment is set up.
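The reasoning above can be illustrated with a toy model. The sketch below is not Seata's implementation: the `Branch` class, the `undoLogWritable` flag, and the in-memory maps are all hypothetical stand-ins, assuming only that a branch can restore data for which it recorded a before-image.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.HashMap;
import java.util.Map;

/** Toy model of undo-log based rollback. Hypothetical; not Seata's actual code. */
public class UndoLogSketch {

    /** One branch transaction: an in-memory "database" plus its own undo log. */
    static class Branch {
        final String name;
        final Map<String, String> data = new HashMap<>();
        // Each record is {key, valueBeforeChange}; a null value means the key did not exist.
        final Deque<String[]> undoLog = new ArrayDeque<>();
        // Hypothetical flag standing in for "the RM can reach its undo_log table".
        final boolean undoLogWritable;

        Branch(String name, boolean undoLogWritable) {
            this.name = name;
            this.undoLogWritable = undoLogWritable;
        }

        /** Applies a write, recording the before-image first when the undo log is reachable. */
        void write(String key, String value) {
            if (undoLogWritable) {
                undoLog.push(new String[] {key, data.get(key)});
            }
            data.put(key, value);
        }

        /** Restores every recorded before-image, newest first. */
        void rollback() {
            while (!undoLog.isEmpty()) {
                String[] record = undoLog.pop();
                if (record[1] == null) {
                    data.remove(record[0]);
                } else {
                    data.put(record[0], record[1]);
                }
            }
        }
    }

    public static void main(String[] args) {
        Branch a = new Branch("A", true);
        Branch b = new Branch("B", true);
        Branch c = new Branch("C", false); // models the RM that failed to register

        a.write("row", "written by A");
        b.write("row", "written by B");
        c.write("row", "written by C");

        // The global transaction fails, so every branch is asked to roll back.
        for (Branch branch : new Branch[] {a, b, c}) {
            branch.rollback();
            System.out.println(branch.name + " after rollback: " + branch.data);
        }
    }
}
```

In this model, branches A and B end up empty after the rollback, while branch C keeps its write because it never recorded a before-image. That matches the symptom described in the problem description.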

3. Solution

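As for why the RM failed when connecting to the database: analysis showed that the database mapping in application.yml was configured correctly and the database itself was healthy, yet registration still failed.

So I tried the following three fixes: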

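1. Drop the database, then recreate the database and its tables. Verified: this did not solve the problem.

2. Rewrite the Seata client's file.conf configuration file. Verified: this did not solve the problem.

3. Delete the entire module in the IDE, create a new module, and copy in the same code and configuration files. Verified: this solved the problem.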

The Seata configuration files and application.yml are attached below.

file.conf:

transport {
  # tcp udt unix-domain-socket
  type = "TCP"
  #NIO NATIVE
  server = "NIO"
  #enable heartbeat
  heartbeat = true
  #thread factory for netty
  thread-factory {
    boss-thread-prefix = "NettyBoss"
    worker-thread-prefix = "NettyServerNIOWorker"
    server-executor-thread-prefix = "NettyServerBizHandler"
    share-boss-worker = false
    client-selector-thread-prefix = "NettyClientSelector"
    client-selector-thread-size = 1
    client-worker-thread-prefix = "NettyClientWorkerThread"
    # netty boss thread size,will not be used for UDT
    boss-thread-size = 1
    #auto default pin or 8
    worker-thread-size = 8
  }
  shutdown {
    # when destroy server, wait seconds
    wait = 3
  }
  serialization = "seata"
  compressor = "none"
}
service {
  #vgroup->rgroup
  vgroupMapping.fsp_tx_group = "default"
  #only support single node
  default.grouplist = "127.0.0.1:8091"
  #degrade current not support
  enableDegrade = false
  #disable
  disable = false
  #unit ms,s,m,h,d represents milliseconds, seconds, minutes, hours, days, default permanent
  max.commit.retry.timeout = "-1"
  max.rollback.retry.timeout = "-1"
  disableGlobalTransaction = false
}
client {
  async.commit.buffer.limit = 10000
  lock {
    retry.internal = 10
    retry.times = 30
  }
  report.retry.count = 5
  tm.commit.retry.count = 1
  tm.rollback.retry.count = 1
}
## transaction log store
store {
  ## store mode: file、db
  mode = "db"
  ## file store
  file {
    dir = "sessionStore"
    # branch session size , if exceeded first try compress lockkey, still exceeded throws exceptions
    max-branch-session-size = 16384
    # globe session size , if exceeded throws exceptions
    max-global-session-size = 512
    # file buffer size , if exceeded allocate new buffer
    file-write-buffer-cache-size = 16384
    # when recover batch read size
    session.reload.read_size = 100
    # async, sync
    flush-disk-mode = async
  }
  ## database store
  db {
    ## the implement of javax.sql.DataSource, such as DruidDataSource(druid)/BasicDataSource(dbcp) etc.
    datasource = "dbcp"
    ## mysql/oracle/h2/oceanbase etc.
    db-type = "mysql"
    driver-class-name = "com.mysql.jdbc.Driver"
    url = "jdbc:mysql://127.0.0.1:3306/seata"
    user = "root"
    password = "phoenix1991mh"
    min-conn = 1
    max-conn = 3
    global.table = "global_table"
    branch.table = "branch_table"
    lock-table = "lock_table"
    query-limit = 100
  }
}
lock {
  ## the lock store mode: local、remote
  mode = "remote"
  local {
    ## store locks in user's database
  }
  remote {
    ## store locks in the seata's server
  }
}
recovery {
  #schedule committing retry period in milliseconds
  committing-retry-period = 1000
  #schedule asyn committing retry period in milliseconds
  asyn-committing-retry-period = 1000
  #schedule rollbacking retry period in milliseconds
  rollbacking-retry-period = 1000
  #schedule timeout retry period in milliseconds
  timeout-retry-period = 1000
}
transaction {
  undo.data.validation = true
  undo.log.serialization = "jackson"
  undo.log.save.days = 7
  #schedule delete expired undo_log in milliseconds
  undo.log.delete.period = 86400000
  undo.log.table = "undo_log"
}
## metrics settings
metrics {
  enabled = false
  registry-type = "compact"
  # multi exporters use comma divided
  exporter-list = "prometheus"
  exporter-prometheus-port = 9898
}
support {
  ## spring
  spring {
    # auto proxy the DataSource bean
    datasource.autoproxy = false
  }
}

registry.conf:

registry {
  # file 、nacos 、eureka、redis、zk
  type = "nacos"
  nacos {
    serverAddr = "localhost:8848"
    namespace = ""
    cluster = "default"
  }
  eureka {
    serviceUrl = "http://localhost:8761/eureka"
    application = "default"
    weight = "1"
  }
  redis {
    serverAddr = "localhost:6381"
    db = "0"
  }
  zk {
    cluster = "default"
    serverAddr = "127.0.0.1:2181"
    session.timeout = 6000
    connect.timeout = 2000
  }
  file {
    name = "file.conf"
  }
}
config {
  # file、nacos 、apollo、zk
  type = "file"
  nacos {
    serverAddr = "localhost"
    namespace = ""
    cluster = "default"
  }
  apollo {
    app.id = "fescar-server"
    apollo.meta = "http://192.168.1.204:8801"
  }
  zk {
    serverAddr = "127.0.0.1:2181"
    session.timeout = 6000
    connect.timeout = 2000
  }
  file {
    name = "file.conf"
  }
}

application.yml

server:
  port: 2004

spring:
  application:
    name: seata-account-service
  cloud:
    alibaba:
      seata:
        tx-service-group: fsp_tx_group
    nacos:
      discovery:
        server-addr: localhost:8848
  datasource:
    driver-class-name: com.mysql.jdbc.Driver
    url: jdbc:mysql://localhost:3306/seata_account
    username: root
    password: phoenix1991mh

logging:
  level:
    io:
      seata: info

mybatis:
  mapperLocations: classpath:mapper/*.xml
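The tx-service-group value in application.yml (fsp_tx_group) has to match the key suffix of vgroupMapping.fsp_tx_group in file.conf, which in turn names the cluster ("default"). The sketch below shows one way to check that link before starting a service. The `TxGroupCheck` class, the `resolveCluster` helper, and the flattened-map view of file.conf are all hypothetical; none of them is part of Seata.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical consistency check between application.yml and file.conf values. */
public class TxGroupCheck {

    /**
     * Looks up the cluster name mapped to a transaction service group.
     * The map is an assumed flattened view of file.conf, e.g.
     * "service.vgroupMapping.fsp_tx_group" -> "default".
     */
    static String resolveCluster(String txServiceGroup, Map<String, String> fileConf) {
        String key = "service.vgroupMapping." + txServiceGroup;
        String cluster = fileConf.get(key);
        if (cluster == null) {
            throw new IllegalStateException("file.conf has no entry for " + key);
        }
        return cluster;
    }

    public static void main(String[] args) {
        // Values copied from the configuration files in this post.
        Map<String, String> fileConf = new HashMap<>();
        fileConf.put("service.vgroupMapping.fsp_tx_group", "default");

        String txServiceGroup = "fsp_tx_group"; // from application.yml
        String registryCluster = "default";     // from registry.conf, nacos.cluster

        String cluster = resolveCluster(txServiceGroup, fileConf);
        System.out.println("group " + txServiceGroup + " maps to cluster " + cluster);
        System.out.println("matches registry cluster: " + cluster.equals(registryCluster));
    }
}
```

With the values from this post the lookup succeeds and the cluster names agree, which is consistent with the finding that the configuration itself was not the cause of the failure.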