Inserting data directly into a partitioned table

create table score3 like score;

insert into table score3 partition(month='201807')
values ('001','002','100');
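To confirm that the row landed in the intended partition, one can list the table's partitions and filter on the partition column. This is a minimal sketch, assuming score3 was created as above and that score (and therefore score3) is partitioned by month, as the partition clause implies.

```sql
-- List the partitions that exist for score3; month=201807 should appear after the insert.
show partitions score3;

-- Read back only the rows stored in that partition.
select * from score3 where month = '201807';
```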

Inserting data via queries

Loading data with load

load data local inpath '/export/servers/hivedatas/score.csv'
overwrite into table score partition(month='201806');
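For comparison, here is a sketch of two variations on this statement. Without overwrite, the file is added to the data already in the partition. Without local, the path is read from HDFS and the file is moved (not copied) into the table's directory. The HDFS path below is a hypothetical placeholder, not one taken from this tutorial.

```sql
-- Append a local file to the partition instead of replacing its contents.
load data local inpath '/export/servers/hivedatas/score.csv'
into table score partition(month='201806');

-- Load from HDFS (no "local"). The path is an assumed example;
-- Hive moves the file from this location into the table's directory.
load data inpath '/hivedatas/score.csv'
overwrite into table score partition(month='201806');
```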

Loading data with a query

create table score4 like score;

insert overwrite table score4 partition(month='201806')
select s_id,c_id,s_score from score;

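Note: the overwrite keyword is needed here to replace the existing contents of the partition. In Hive 0.8 and later, insert into can be used instead to append rows.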
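The difference between replacing and appending can be sketched as follows. The where clause is an addition for illustration, so that only the matching month is copied; the original statement copies every row of score into the one target partition.

```sql
-- Replace whatever is currently in partition month=201806 of score4.
insert overwrite table score4 partition(month = '201806')
select s_id, c_id, s_score from score where month = '201806';

-- Append to the same partition instead of replacing it (Hive 0.8 and later).
-- After this second statement the partition holds two copies of the rows.
insert into table score4 partition(month = '201806')
select s_id, c_id, s_score from score where month = '201806';
```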

Multi-insert mode

Commonly used in production to split one table into two or more parts.

Load data into the score table:

load data local inpath '/export/servers/hivedatas/score.csv'
overwrite into table score partition(month='201806');

Create the table for the first part:

create table score_first(s_id string,c_id string)
partitioned by (month string)
row format delimited fields terminated by '\t';

Create the table for the second part:

create table score_second(c_id string,s_score int)
partitioned by (month string)
row format delimited fields terminated by '\t';

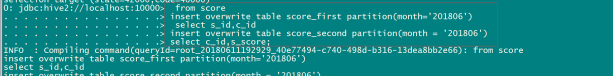

Load data into the first-part and second-part tables in a single statement:

from score
insert overwrite table score_first partition(month='201806')
select s_id,c_id
insert overwrite table score_second partition(month='201806')
select c_id,s_score;
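The from-first form lets both inserts share one read of score. A quick sanity check, assuming the statement above completed successfully, is to compare row counts between the source and the two targets.

```sql
-- Neither insert filters rows, so each target partition should hold
-- as many rows as the whole of score.
select count(*) from score;
select count(*) from score_first  where month = '201806';
select count(*) from score_second where month = '201806';
```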

Creating a table and loading data from a query (as select)

Save the result of a query into a new table:

create table score5 as select * from score;
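Because score5 takes its schema from the query result, it is an ordinary non-partitioned table in this form, and the month partition column of score arrives as a regular column. The sketch below inspects that, then shows a variant that picks specific rows and columns and sets a delimiter. The table name score5_tab is hypothetical, and the column names are taken from the earlier select on score.

```sql
-- Inspect the schema produced by the statement above; month is listed as a normal column.
describe score5;

-- A create-table-as-select can also choose rows, columns, and the row format.
create table score5_tab
row format delimited fields terminated by '\t'
as
select s_id, c_id, s_score from score where month = '201806';
```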

Specifying the data path with location when creating a table

score.csv (fields must be tab-separated to match the table definition)

01 01 80
01 02 90
01 03 99
02 01 70
02 02 60
02 03 80
03 01 80
03 02 80
03 03 80
04 01 50
04 02 30
04 03 20
05 01 76
05 02 87
06 01 31
06 03 34
07 02 89
07 03 98

1) Create the table and specify its location on HDFS

create external table score6 (s_id string,c_id string,s_score int)
row format delimited fields terminated by '\t' location '/myscore6';

2) Upload the data to HDFS

hdfs dfs -mkdir -p /myscore6
hdfs dfs -put score.csv /myscore6

3) Query the data

select * from score6;
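Since score6 is an external table, Hive tracks only its metadata and leaves the files under /myscore6 alone. The sketch below illustrates this, assuming it is run in the Hive CLI, where dfs commands are available. Run the drop only if score6 is no longer needed; re-running the create statement from step 1 should make the same data visible again.

```sql
-- The Table Type field should read EXTERNAL_TABLE, and Location should point at /myscore6.
describe formatted score6;

-- Dropping an external table removes only the metadata from the metastore.
drop table score6;

-- The uploaded file is still present on HDFS.
dfs -ls /myscore6;
```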