第一节:线性回归

向量运算时,矢量直接运算比循环算法效率高

- pytorch构建神经网络代码:

方法1:class方法

#ways to init a multilayer network

#method one

net = nn.Sequential(

nn.Linear(num_inputs, 1)

# other layers can be added here

)

#method two

net = nn.Sequential()

net.add_module('linear', nn.Linear(num_inputs, 1))

#net.add_module ......

#method three

from collections import OrderedDict

net = nn.Sequential(OrderedDict([

('linear', nn.Linear(num_inputs, 1))

# ......

]))

print(net)

print(net[0])

第二节:Softmax与分类

1.Softmax回归: ![[公式][公式]](https://img-blog.csdnimg.cn/20200214205118847.png)

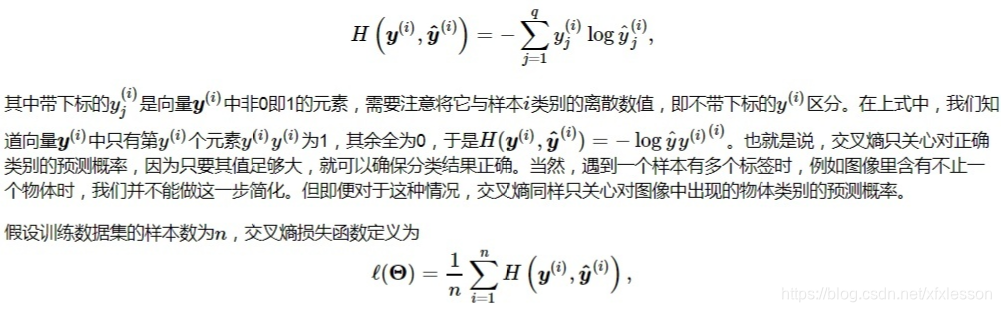

2.交叉熵损失函数:

3. 训练神经网络代码:

num_epochs, lr = 5, 0.1

# 本函数已保存在d2lzh_pytorch包中方便以后使用

def train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size,

params=None, lr=None, optimizer=None):

for epoch in range(num_epochs):

train_l_sum, train_acc_sum, n = 0.0, 0.0, 0

for X, y in train_iter:

y_hat = net(X)

l = loss(y_hat, y).sum()

# 梯度清零

if optimizer is not None:

optimizer.zero_grad()

elif params is not None and params[0].grad is not None:

for param in params:

param.grad.data.zero_()

l.backward()

if optimizer is None:

d2l.sgd(params, lr, batch_size)

else:

optimizer.step()

train_l_sum += l.item()

train_acc_sum += (y_hat.argmax(dim=1) == y).sum().item()

n += y.shape[0]

test_acc = evaluate_accuracy(test_iter, net)

print('epoch %d, loss %.4f, train acc %.3f, test acc %.3f'

% (epoch + 1, train_l_sum / n, train_acc_sum / n, test_acc))

train_ch3(net, train_iter, test_iter, cross_entropy, num_epochs, batch_size, [W, b], lr)

反向传递求梯度前一定要梯度清零,以免累增。

首先初始化梯度,计算完一次梯度,更新完之后,清零梯度,进行下一次的计算。

第三节: 多层感知机

1.pytorch搭建多层感知机

num_inputs, num_outputs, num_hiddens = 784, 10, 256

net = nn.Sequential(

d2l.FlattenLayer(),

nn.Linear(num_inputs, num_hiddens),

nn.ReLU(),

nn.Linear(num_hiddens, num_outputs),

)

for params in net.parameters():

init.normal_(params, mean=0, std=0.01)