Environment: Hadoop + Java JDK + Ubuntu

Prepare the data file

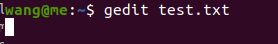

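Create a text file named test.txt.

Note: gedit is a handy text editor; if it is not installed, use vi or vim instead.

The content can be anything, for example: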

a b d aaa

das fs aa

ddd fssf

fsa aa

www werf

faa

Write the code

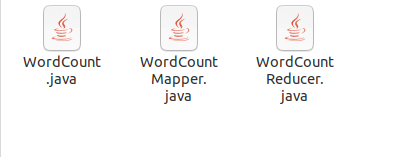

In the same way, create three files, WordCountMapper.java, WordCountReducer.java, and WordCount.java, and copy the following code into them.

WordCountMapper.java

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;
import java.util.StringTokenizer;

public class WordCountMapper extends Mapper<LongWritable, Text, Text, IntWritable> {
    @Override
    protected void map(LongWritable key, Text value, Context context)
            throws IOException, InterruptedException {
        // Each call receives one line of the input file
        String line = value.toString();
        // Split the line into words on whitespace
        StringTokenizer st = new StringTokenizer(line);
        while (st.hasMoreTokens()) {
            String word = st.nextToken();
            // Emit (word, 1) for every word found
            context.write(new Text(word), new IntWritable(1));
        }
    }
}
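To make the mapper's behavior concrete, the following is a minimal plain-Java sketch of what its loop does to a single line. It does not use the Hadoop API; the class name MapSketch and the method mapLine are hypothetical names introduced only for this illustration, and the "word, tab, 1" string format is an assumption chosen for readability.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.StringTokenizer;

// Plain-Java illustration only: MapSketch and mapLine are hypothetical names,
// not part of Hadoop.
class MapSketch {
    // Mimics the body of WordCountMapper.map: split the line on whitespace
    // and produce one "word<TAB>1" pair per token.
    static List<String> mapLine(String line) {
        List<String> pairs = new ArrayList<>();
        StringTokenizer st = new StringTokenizer(line);
        while (st.hasMoreTokens()) {
            pairs.add(st.nextToken() + "\t1");
        }
        return pairs;
    }

    public static void main(String[] args) {
        // First line of the sample test file
        for (String pair : mapLine("a b d aaa")) {
            System.out.println(pair);
        }
    }
}
```

For the first sample line this yields four pairs, one per word. In the real job, Hadoop calls map once per input line and collects the emitted pairs for the reducer.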

WordCountReducer.java

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class WordCountReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
    @Override
    protected void reduce(Text key, Iterable<IntWritable> iterable, Context context)
            throws IOException, InterruptedException {
        // Add up all the 1s emitted by the mappers for this word
        int sum = 0;
        for (IntWritable i : iterable) {
            sum = sum + i.get();
        }
        // Emit (word, total count)
        context.write(key, new IntWritable(sum));
    }
}
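The reducer only sees values that have already been grouped by key. As a rough illustration of that grouping and summing, here is a plain-Java sketch run over the sample file's lines. It does not use the Hadoop API: ReduceSketch, shuffle, reduce, and wordCount are hypothetical names, and the TreeMap is merely a stand-in for Hadoop's sort-and-group step (for plain ASCII words like these the key order should match).

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Plain-Java illustration only: ReduceSketch and its methods are hypothetical
// names and do not use the Hadoop API.
class ReduceSketch {
    // Stand-in for the shuffle: collect the 1s produced by the map phase
    // under each word, with keys kept in sorted order.
    static Map<String, List<Integer>> shuffle(String[] lines) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String line : lines) {
            StringTokenizer st = new StringTokenizer(line);
            while (st.hasMoreTokens()) {
                grouped.computeIfAbsent(st.nextToken(), k -> new ArrayList<>()).add(1);
            }
        }
        return grouped;
    }

    // Mirrors the loop in WordCountReducer.reduce: add up the values of one key.
    static int reduce(Iterable<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    // Runs the stand-in shuffle, then reduces each group to a single count.
    static Map<String, Integer> wordCount(String[] lines) {
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> e : shuffle(lines).entrySet()) {
            counts.put(e.getKey(), reduce(e.getValue()));
        }
        return counts;
    }

    public static void main(String[] args) {
        // The lines of the sample test file
        String[] lines = {"a b d aaa", "das fs aa", "ddd fssf", "fsa aa", "www werf", "faa"};
        wordCount(lines).forEach((word, n) -> System.out.println(word + "\t" + n));
    }
}
```

With the sample data, "aa" appears twice and every other word once, so the job's output should contain 13 distinct words.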

WordCount.java

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class WordCount {
    public static void main(String[] args) {
        // Create the configuration object
        Configuration conf = new Configuration();
        try {
            // Create the job object: Job.getInstance(Configuration conf, String jobName)
            Job job = Job.getInstance(conf, "word count");
            // Set the class used to locate the job's JAR
            job.setJarByClass(WordCount.class);
            // Set the mapper class
            job.setMapperClass(WordCountMapper.class);
            // Set the reducer class
            job.setReducerClass(WordCountReducer.class);
            // Set the key and value types of the map output
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(IntWritable.class);
            // Set the key and value types of the reduce output
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            // Set the input and output paths from the command-line arguments
            FileInputFormat.setInputPaths(job, new Path(args[0]));
            FileOutputFormat.setOutputPath(job, new Path(args[1]));
            // Submit the job and wait for it to finish
            boolean flag = job.waitForCompletion(true);
            if (!flag) {
                System.out.println("The job failed");
                // Report the failure to the calling shell
                System.exit(1);
            }
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}
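The driver reads args[0] and args[1] directly, so starting it without both paths ends in an ArrayIndexOutOfBoundsException rather than a helpful message. One possible guard, sketched here in plain Java, checks the argument count first. ArgsCheckSketch and usageError are hypothetical names, and the exit code 2 is an arbitrary choice for this illustration.

```java
// Plain-Java illustration only: ArgsCheckSketch and usageError are
// hypothetical names, not part of Hadoop or of the driver above.
class ArgsCheckSketch {
    // Returns null when exactly two arguments (input path, output path)
    // were given, otherwise a usage message.
    static String usageError(String[] args) {
        if (args.length != 2) {
            return "Usage: hadoop jar WordCount.jar WordCount <input path> <output path>";
        }
        return null;
    }

    public static void main(String[] args) {
        String error = usageError(args);
        if (error != null) {
            System.err.println(error);
            System.exit(2); // arbitrary non-zero exit code for bad usage
        }
        // In the real driver, these two values go to
        // FileInputFormat.setInputPaths and FileOutputFormat.setOutputPath.
        System.out.println("input=" + args[0] + ", output=" + args[1]);
    }
}
```

A check like this could sit at the top of WordCount.main, before the Job is created.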

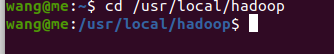

hadoop

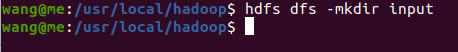

Change to the Hadoop installation directory (adjust the path to match your own installation):

cd /usr/local/hadoop

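Create an input directory on HDFS; skip this step if it already exists. A relative path such as input is resolved against your HDFS home directory, /user/<username>.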

hdfs dfs -mkdir input

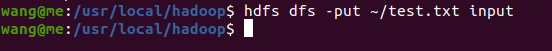

Upload the data file created earlier to the input directory on HDFS (adjust the local file path as needed):

hdfs dfs -put ~/test.txt input

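Add the JARs that the Java code depends on to the classpath (adjust the version numbers and paths to match your installation):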

export CLASSPATH="/usr/local/hadoop/share/hadoop/common/hadoop-common-2.7.3.jar:/usr/local/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-client-core-2.7.3.jar:/usr/local/hadoop/share/hadoop/common/lib/commons-cli-1.2.jar:$CLASSPATH"

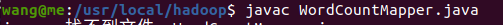

Copy the three Java files created earlier into the Hadoop directory.

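Compile the Java files. If the compiler reports many errors, the classpath set above is probably wrong; go back and set it again.

javac WordCountMapper.java WordCountReducer.java WordCount.java

This compiles all three source files and produces three .class files.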

Package all the .class files into a single WordCount.jar:

jar -cvf WordCount.jar *.class

Run the job:

hadoop jar WordCount.jar WordCount input/test.txt output

Here WordCount.jar is the JAR file, WordCount is the main class, input/test.txt is the input path on HDFS, and output is the output path.

A screen like the following means the job succeeded.

View the results:

hdfs dfs -cat output/*

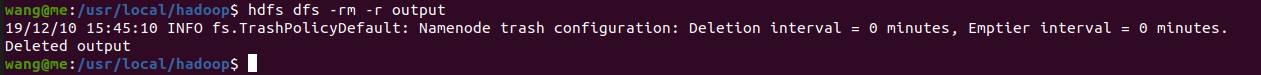

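After each run, delete the output directory on HDFS (for example with hdfs dfs -rm -r output); otherwise the next run will fail because the output path already exists.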