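Earlier we set up the cluster, learned how to manage files with command-line commands, and saw how to view uploaded files in the web control panel. In a real application, though, you would not do these operations by hand on the command line.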

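Some people may not know that Hadoop started out as a subproject of Lucene, so it naturally comes with a Java API. Let's use it to implement some of the more common operations.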

I use pseudo-distributed mode for the demo; the code is the same for a fully distributed cluster.

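For Maven, I recommend using a mirror hosted in China.

Switching the Maven mirror is easy to look up online, so I won't go into detail here; in short, you edit the settings.xml file.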

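- Maven dependencies

First download Maven from the official website; I use Maven 3.6.

You get an archive; extract it to a folder, then in IDEA click File in the top-left corner and open Settings.

There, set the Maven home directory first, then the settings file, and then change the local repository location (I generally advise against keeping it on the C: drive). That's all.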

<dependencies>

<!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-common -->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>2.7.3</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-client -->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>2.7.3</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-hdfs -->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-hdfs</artifactId>

<version>2.7.3</version>

</dependency>

<dependency>

<groupId>log4j</groupId>

<artifactId>log4j</artifactId>

<version>1.2.17</version>

</dependency>

<!-- https://mvnrepository.com/artifact/junit/junit -->

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.12</version>

<scope>test</scope>

</dependency>

</dependencies>

Just copy these into your pom.xml; you will get to know what each dependency does as you gain hands-on practice.

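- Configure the local Hadoop environment

I use Hadoop 2.7.3, which lacks two files that are needed to run on Windows: winutils.exe and hadoop.dll. I have put them on a network drive at the following address:

Click to download (extraction code: 5n2g)

Note: put both files into the bin folder of your Hadoop directory.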

There are two ways to bring the local Hadoop environment into the project.

- Set it directly in code

System.setProperty("hadoop.home.dir","F:/bigdata_software/hadoop-2.7.3");

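- The second way is to set the HADOOP_HOME environment variable and add %HADOOP_HOME%\bin to PATH. (Recommended)

Note: the demos below use the first method, but I have also configured the second one, so you can test either way.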
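Before running the demos, it can help to confirm that the Hadoop home you configured really contains the two extra files. The following is only a minimal sketch using plain Java: the class name `HadoopHomeCheck` and its helper methods are made up for illustration, and the lookup order (system property first, then environment variable) is my assumption for this sketch.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper, not part of Hadoop.
class HadoopHomeCheck {

    // The two files this article says must be placed in <hadoop home>/bin.
    static final String[] REQUIRED = {"winutils.exe", "hadoop.dll"};

    // Prefer the value set in code (method 1); fall back to the environment variable (method 2).
    static String resolveHome(String propertyValue, String envValue) {
        if (propertyValue != null && !propertyValue.isEmpty()) {
            return propertyValue;
        }
        return envValue;
    }

    // Returns the names of the required files that are missing from <home>/bin.
    static List<String> missingFiles(Path home) {
        List<String> missing = new ArrayList<>();
        for (String name : REQUIRED) {
            if (!Files.isRegularFile(home.resolve("bin").resolve(name))) {
                missing.add(name);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        String home = resolveHome(System.getProperty("hadoop.home.dir"),
                                  System.getenv("HADOOP_HOME"));
        if (home == null || home.isEmpty()) {
            System.out.println("Neither hadoop.home.dir nor HADOOP_HOME is set");
            return;
        }
        System.out.println("Hadoop home: " + home);
        System.out.println("Missing from bin: " + missingFiles(Paths.get(home)));
    }
}
```

If the second line prints an empty list, both files are in place.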

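- Now we can start writing code

The snippets below are methods of a JUnit test class and need the following imports:

import java.io.File;

import java.io.FileInputStream;

import java.io.FileOutputStream;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.*;

import org.apache.hadoop.io.IOUtils;

import org.junit.Test;

- Uploading a file: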

@Test

public void copyFile() throws IOException {

System.setProperty("hadoop.home.dir","F:/bigdata_software/hadoop-2.7.3");

Configuration conf = new Configuration();

conf.set("fs.defaultFS","hdfs://XXX.XXX.XXX.XXX:9000");// your cluster IP

FileSystem fs = FileSystem.get(conf);

fs.copyFromLocalFile(new Path("D:/idea/HDFS/src/main/resources/new.txt"), new Path("/1222_1.txt"));

fs.close();

System.out.println("done");

}

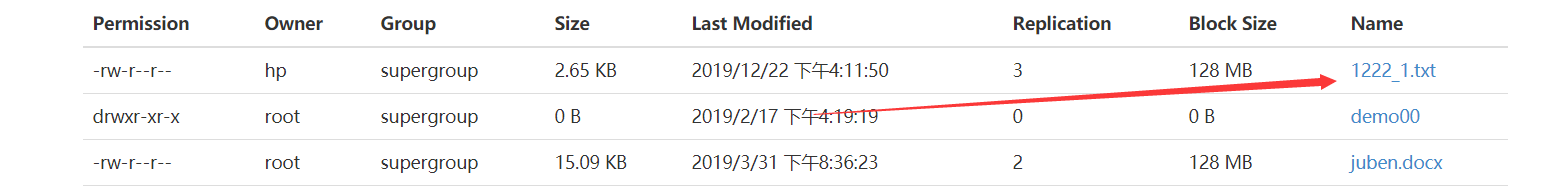

Check the result in the web dashboard:

- Deleting a file

@Test

public void deleteFile() throws IOException {

System.setProperty("hadoop.home.dir","F:/bigdata_software/hadoop-2.7.3");

Configuration conf = new Configuration();

conf.set("fs.defaultFS","hdfs://:9000");

FileSystem fs = FileSystem.get(conf);

fs.delete(new Path("/user"), true);// second argument: whether to delete recursively

fs.close();

System.out.println("done");

}
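Every example sets `fs.defaultFS` to a URI of the form `hdfs://host:port`, and a typo there tends to surface only later as a confusing connection error. As a small sketch in plain Java, the value could be built and checked up front; the class `DefaultFsUri` and the address `192.168.1.10` are made up for illustration.

```java
import java.net.URI;
import java.net.URISyntaxException;

// Hypothetical helper, not part of Hadoop.
class DefaultFsUri {

    // Builds "hdfs://host:port" and fails early if the result has no usable host or port.
    static String build(String host, int port) {
        if (host == null || host.trim().isEmpty()) {
            throw new IllegalArgumentException("NameNode host must not be empty");
        }
        String value = "hdfs://" + host.trim() + ":" + port;
        try {
            URI uri = new URI(value);
            if (uri.getHost() == null || uri.getPort() != port) {
                throw new IllegalArgumentException("Not a valid host:port pair: " + value);
            }
        } catch (URISyntaxException e) {
            throw new IllegalArgumentException("Malformed URI: " + value, e);
        }
        return value;
    }

    public static void main(String[] args) {
        // 192.168.1.10 is a made-up address; use your own NameNode host.
        System.out.println(build("192.168.1.10", 9000));
    }
}
```

The returned string is what you would pass to `conf.set("fs.defaultFS", ...)`.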

- Downloading a file

@Test

public void getFiles() throws IOException {

System.setProperty("hadoop.home.dir","F:/bigdata_software/hadoop-2.7.3");

Configuration conf = new Configuration();

conf.set("fs.defaultFS","hdfs://:9000");

FileSystem fs = FileSystem.get(conf);

fs.copyToLocalFile(false, new Path("/juben.docx"), new Path("D:/idea/HDFS/src/main/resources/juben.copy.docx"), true);

fs.close();

System.out.println("done");

}

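copyToLocalFile takes four parameters:

delSrc: whether to delete the source file on HDFS after copying

src: the source path on HDFS

dst: the destination path on the local file system

useRawLocalFileSystem: whether to write through the raw local file system; when true, no local .crc checksum file is created next to the download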
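If you skip the local checksum file by passing true as the last argument, you may still want a quick way to confirm that a downloaded copy matches a file you already have. The sketch below compares plain CRC32 values using only the JDK; it is not the format of Hadoop's own .crc files, and the class name `FileCrc` is made up for illustration.

```java
import java.io.IOException;
import java.io.InputStream;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.zip.CRC32;

// Hypothetical helper, not part of Hadoop.
class FileCrc {

    // CRC32 of an in-memory byte array.
    static long crc32(byte[] data) {
        CRC32 crc = new CRC32();
        crc.update(data, 0, data.length);
        return crc.getValue();
    }

    // CRC32 of a stream, read in chunks so large files do not need to fit in memory.
    static long crc32(InputStream in) throws IOException {
        CRC32 crc = new CRC32();
        byte[] buffer = new byte[8192];
        int n;
        while ((n = in.read(buffer)) != -1) {
            crc.update(buffer, 0, n);
        }
        return crc.getValue();
    }

    public static void main(String[] args) throws IOException {
        if (args.length == 2) {
            // Compare two local files given on the command line.
            try (InputStream a = Files.newInputStream(Paths.get(args[0]));
                 InputStream b = Files.newInputStream(Paths.get(args[1]))) {
                System.out.println(crc32(a) == crc32(b) ? "same checksum" : "checksums differ");
            }
        } else {
            // Standard CRC32 check string; prints cbf43926
            System.out.println(Long.toHexString(
                    crc32("123456789".getBytes(StandardCharsets.US_ASCII))));
        }
    }
}
```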

- Viewing file details

@Test

public void checkFileInfo() throws IOException {

System.setProperty("hadoop.home.dir","F:/bigdata_software/hadoop-2.7.3");

Configuration conf = new Configuration();

conf.set("fs.defaultFS","hdfs://:9000");

FileSystem fs = FileSystem.get(conf);

RemoteIterator<LocatedFileStatus> files = fs.listFiles(new Path("/"), true);

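// iterate over all files under the root directory

while (files.hasNext()) {

LocatedFileStatus file = files.next();

System.out.println(file.getPath().getName());

// print the block information; other file attributes are omitted here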

BlockLocation[] locations = file.getBlockLocations();

for (BlockLocation bl : locations) {

System.out.println("block-offset:" + bl.getOffset());

String[] hosts = bl.getHosts();

for (String host : hosts) {

System.out.println(host);

}

}

System.out.println("——————————————————");

}

}
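To make sense of the `block-offset` values printed above, it helps to see how a file length maps to blocks. The sketch below is plain Java arithmetic, not a Hadoop call: it assumes the 128 MB block size mentioned later in this article, and the class name `BlockLayout` is made up for illustration.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper, not part of Hadoop.
class BlockLayout {

    // Assumed block size: 128 MB.
    static final long BLOCK_SIZE = 128L * 1024 * 1024;

    // Returns one {offset, length} pair per block for a file of the given length.
    static List<long[]> split(long fileLength, long blockSize) {
        if (fileLength < 0 || blockSize <= 0) {
            throw new IllegalArgumentException("fileLength must be >= 0 and blockSize > 0");
        }
        List<long[]> blocks = new ArrayList<>();
        for (long offset = 0; offset < fileLength; offset += blockSize) {
            // The last block may be shorter than blockSize.
            blocks.add(new long[] {offset, Math.min(blockSize, fileLength - offset)});
        }
        return blocks;
    }

    public static void main(String[] args) {
        long fileLength = 300L * 1024 * 1024; // a 300 MB example file
        for (long[] block : split(fileLength, BLOCK_SIZE)) {
            System.out.println("block-offset:" + block[0] + " length:" + block[1]);
        }
    }
}
```

A 300 MB file comes out as three blocks: two full 128 MB blocks and a final 44 MB one.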

- Uploading with I/O streams

@Test

public void putFileStream() throws IOException {

System.setProperty("hadoop.home.dir","F:/bigdata_software/hadoop-2.7.3");

Configuration conf = new Configuration();

conf.set("fs.defaultFS","hdfs://:9000");

FileSystem fs = FileSystem.get(conf);

FileInputStream fis = new FileInputStream(new File("D:/idea/HDFS/src/main/resources/log4j.properties"));

FSDataOutputStream fos = fs.create(new Path("/log4j.copy.properties"));

try {

IOUtils.copyBytes(fis, fos, 4 * 1024, false);// last argument: whether to close the streams when the copy finishes

} catch (IOException e) {

e.printStackTrace();

}finally {

IOUtils.closeStream(fos);

IOUtils.closeStream(fis);

}

System.out.println("done");

}

- Downloading with I/O streams

@Test

public void getFileStream() throws IOException {

System.setProperty("hadoop.home.dir","F:/bigdata_software/hadoop-2.7.3");

Configuration conf = new Configuration();

conf.set("fs.defaultFS","hdfs://:9000");

FileSystem fs = FileSystem.get(conf);

FSDataInputStream fis = fs.open(new Path("/juben.docx"));

FileOutputStream fos = new FileOutputStream(new File("D:/idea/HDFS/src/main/resources/juben.copy.docx"));

try {

IOUtils.copyBytes(fis, fos, 4 * 1024, false);

} catch (IOException e) {

e.printStackTrace();

}finally {

IOUtils.closeStream(fos);

IOUtils.closeStream(fis);

}

System.out.println("done");

}
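The two stream examples both hand the actual copying to `IOUtils.copyBytes`. To show what a buffered stream copy of this kind does, here is a rough plain-Java sketch of the same idea; it is my own illustration, not Hadoop's implementation, and the class name `StreamCopy` is made up.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;

// Hypothetical helper, not part of Hadoop.
class StreamCopy {

    // Copies everything from in to out through a fixed-size buffer; returns the byte count.
    static long copy(InputStream in, OutputStream out, int bufferSize) throws IOException {
        if (bufferSize <= 0) {
            throw new IllegalArgumentException("bufferSize must be positive");
        }
        byte[] buffer = new byte[bufferSize];
        long total = 0;
        int n;
        while ((n = in.read(buffer)) != -1) {
            out.write(buffer, 0, n); // write only the bytes that were actually read
            total += n;
        }
        return total;
    }

    public static void main(String[] args) throws IOException {
        byte[] data = "hello hdfs".getBytes(StandardCharsets.UTF_8);
        // try-with-resources closes both streams even if the copy fails.
        try (InputStream in = new ByteArrayInputStream(data);
             ByteArrayOutputStream out = new ByteArrayOutputStream()) {
            long copied = copy(in, out, 4 * 1024);
            System.out.println("copied " + copied + " bytes");
        }
    }
}
```

The try-with-resources form plays the same role as the finally blocks in the examples above.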

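- When a file is stored in multiple blocks (size > 128 MB)

Download it in parts with positioned reads: the first method copies only the first 128 MB block, and the second seeks past that block and copies the rest.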

@Test

public void readFileSeek1() throws IOException {

System.setProperty("hadoop.home.dir","F:/bigdata_software/hadoop-2.7.3");

Configuration conf = new Configuration();

conf.set("fs.defaultFS","hdfs://:9000");

FileSystem fs = FileSystem.get(conf);

FSDataInputStream fis = fs.open(new Path(""));

FileOutputStream fos = new FileOutputStream(new File(""));

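// copy only the first block (128 MB) from the input stream to the output stream

byte[] bytes = new byte[1024];

long remaining = 128L * 1024 * 1024;

while (remaining > 0) {

int len = fis.read(bytes, 0, (int) Math.min(bytes.length, remaining));

if (len == -1) break;// end of file reached before 128 MB

fos.write(bytes, 0, len);// write only the bytes actually read

remaining -= len;

}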

IOUtils.closeStream(fos);

IOUtils.closeStream(fis);

System.out.println("done");

}

@Test

public void readFileSeek2() throws IOException {

System.setProperty("hadoop.home.dir","F:/bigdata_software/hadoop-2.7.3");

Configuration conf = new Configuration();

conf.set("fs.defaultFS","hdfs://:9000");

FileSystem fs = FileSystem.get(conf);

FSDataInputStream fis = fs.open(new Path(""));

FileOutputStream fos = new FileOutputStream(new File(""));

fis.seek(128L * 1024 * 1024);// skip the first 128 MB block and start reading at the second

IOUtils.copyBytes(fis, fos, 1024, false);

IOUtils.closeStream(fos);

IOUtils.closeStream(fis);

System.out.println("done");

}
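The pattern in these two methods is "copy the first N bytes, then copy whatever is left". Here is a small plain-Java sketch of that pattern on an in-memory stream, so it can be tried without a cluster. The class name `RangeCopy` is made up, and a 4-byte split stands in for the 128 MB block boundary.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;

// Hypothetical helper, not part of Hadoop.
class RangeCopy {

    // Copies at most count bytes from in to out; returns how many bytes were copied.
    static long copyRange(InputStream in, OutputStream out, long count) throws IOException {
        byte[] buffer = new byte[4096];
        long remaining = count;
        while (remaining > 0) {
            int n = in.read(buffer, 0, (int) Math.min(buffer.length, remaining));
            if (n == -1) {
                break; // the stream ended before count bytes were available
            }
            out.write(buffer, 0, n);
            remaining -= n;
        }
        return count - remaining;
    }

    public static void main(String[] args) throws IOException {
        InputStream in = new ByteArrayInputStream("0123456789".getBytes(StandardCharsets.US_ASCII));
        ByteArrayOutputStream part1 = new ByteArrayOutputStream();
        ByteArrayOutputStream part2 = new ByteArrayOutputStream();
        copyRange(in, part1, 4);              // the "first block"
        copyRange(in, part2, Long.MAX_VALUE); // everything after it
        System.out.println(new String(part1.toByteArray(), StandardCharsets.US_ASCII)); // prints 0123
        System.out.println(new String(part2.toByteArray(), StandardCharsets.US_ASCII)); // prints 456789
    }
}
```

Appending the second part to the first gives back the original bytes, which is how the two downloaded pieces would be rejoined.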

If you have any questions, feel free to ask in the comments at any time; I reply every day. Let's keep learning together!