Previous: Chapter 1, Kafka Overview

1. Environment Preparation

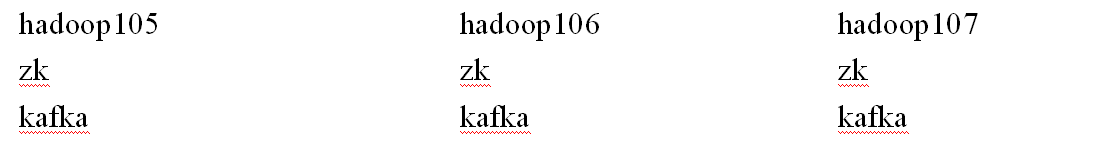

1.1 Cluster Planning

1.2 Downloading the Package

http://kafka.apache.org/downloads.html

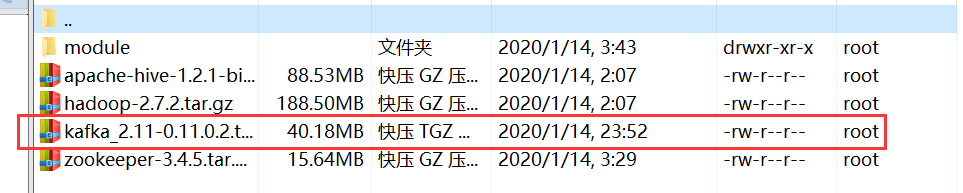

2. Kafka Cluster Deployment

(1) Upload the Kafka archive kafka_2.11-0.11.0.2.tgz to the /usr/local/hadoop directory.

(2) Extract kafka_2.11-0.11.0.2.tgz into the /usr/local/hadoop/module directory

[root@hadoop105 hadoop]# tar -zxvf kafka_2.11-0.11.0.2.tgz -C /usr/local/hadoop/module/

(3) Rename kafka_2.11-0.11.0.2 to kafka

[root@hadoop105 module]# mv kafka_2.11-0.11.0.2/ kafka

(4) Create a logs folder under /usr/local/hadoop/module/kafka

[root@hadoop105 kafka]# mkdir logs

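(5) Edit the configuration file server.properties

The settings that need to be changed are shown in the figure below:

Note: Log Retention Policy (background only)

Summary: server.properties parameters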

[root@hadoop105 kafka]$ cd config/

[root@hadoop105 config]$ vim server.properties

The settings to change are:

# Server Basics

# Globally unique broker ID, must not repeat across brokers; also enable topic deletion

broker.id=0

delete.topic.enable=true

# Log Basics: directory where Kafka stores its topic data (log segments)

log.dirs=/usr/local/hadoop/module/kafka/logs

# Zookeeper: addresses of the ZooKeeper cluster to connect to

zookeeper.connect=hadoop105:2181,hadoop106:2181,hadoop107:2181
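The same four settings can also be applied without opening an editor. The following is a minimal sketch, not part of the original procedure: `set_prop` is a hypothetical helper that rewrites an existing `KEY=...` line (commented out or not) or appends one if the key is missing.

```shell
# set_prop FILE KEY VALUE  (hypothetical helper, not shipped with Kafka)
# Rewrites "KEY=..." or "#KEY=..." in FILE, or appends KEY=VALUE if absent.
set_prop() {
  local file="$1" key="$2" value="$3"
  awk -v k="$key" -v v="$value" '
    BEGIN { FS = "="; found = 0 }
    # Match the key exactly, with or without a leading "#"
    $1 == k || $1 == ("#" k) { print k "=" v; found = 1; next }
    { print }
    END { if (!found) print k "=" v }
  ' "$file" > "$file.tmp" && mv "$file.tmp" "$file"
}

# Example usage (values taken from this article):
# set_prop config/server.properties broker.id 0
# set_prop config/server.properties delete.topic.enable true
# set_prop config/server.properties log.dirs /usr/local/hadoop/module/kafka/logs
# set_prop config/server.properties zookeeper.connect hadoop105:2181,hadoop106:2181,hadoop107:2181
```

Writing to a temporary file and moving it into place avoids relying on `sed -i`, whose syntax differs between GNU and BSD systems.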

(6) Once configured, distribute the kafka directory to the other two machines in the cluster (hadoop106 and hadoop107)

[root@hadoop105 module]# scp -r kafka/ hadoop106:/usr/local/hadoop/module/

[root@hadoop105 module]# scp -r kafka/ hadoop107:/usr/local/hadoop/module/

After distribution, hadoop106 and hadoop107 each have a copy of the kafka directory. Next, adjust the configuration on these two machines.

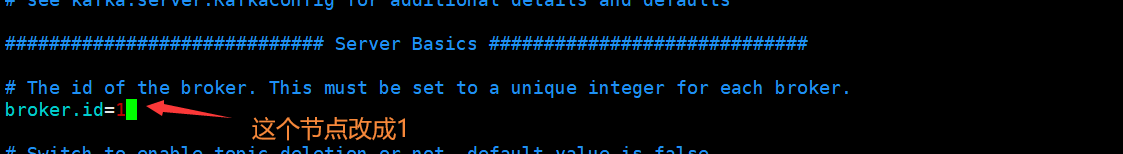

(7) First, change the configuration on hadoop106

[root@hadoop106 module]# cd kafka/config/

[root@hadoop106 config]# vim server.properties

broker.id=1

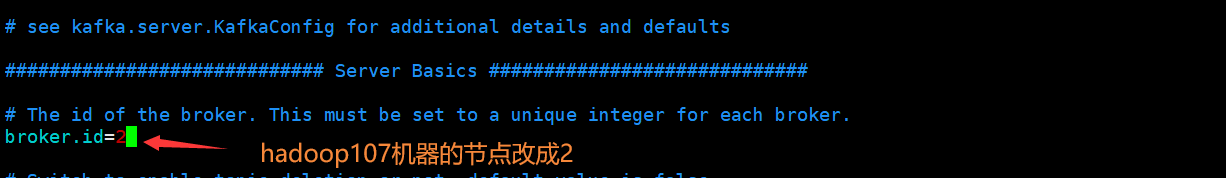

(8) Next, change the configuration on hadoop107

[root@hadoop107 module]# cd kafka/config/

[root@hadoop107 config]# vim server.properties

broker.id=2

(9) Configure environment variables (on hadoop105, hadoop106, and hadoop107)

[root@hadoop105 kafka]# vim /etc/profile

#KAFKA_HOME

export KAFKA_HOME=/usr/local/hadoop/module/kafka

export PATH=$PATH:$KAFKA_HOME/bin

[root@hadoop105 kafka]# source /etc/profile
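If this step is repeated, the export lines end up in the profile more than once. As a hedged sketch, the hypothetical helper `add_kafka_env` below appends them only when `KAFKA_HOME` is not yet exported in the given file; the profile path and install path are passed in as arguments.

```shell
# add_kafka_env PROFILE KAFKA_DIR  (hypothetical helper)
# Appends the KAFKA_HOME exports to PROFILE unless they are already present.
add_kafka_env() {
  local profile="$1" home="$2"
  if grep -qF 'export KAFKA_HOME=' "$profile" 2>/dev/null; then
    return 0  # already configured; leave the file untouched
  fi
  {
    echo '#KAFKA_HOME'
    echo "export KAFKA_HOME=$home"
    # Single quotes keep $PATH and $KAFKA_HOME unexpanded in the file
    echo 'export PATH=$PATH:$KAFKA_HOME/bin'
  } >> "$profile"
}

# Example usage:
# add_kafka_env /etc/profile /usr/local/hadoop/module/kafka && source /etc/profile
```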

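Once configured, distribute the change to the other two machines (hadoop106 and hadoop107)

Start the ZooKeeper cluster

Start the Kafka cluster

Note:

The ZooKeeper cluster must be running before the Kafka cluster is started.

Start Kafka on hadoop105, hadoop106, and hadoop107 in turn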

[root@hadoop105 kafka]$ bin/kafka-server-start.sh config/server.properties &

[root@hadoop106 kafka]$ bin/kafka-server-start.sh config/server.properties &

[root@hadoop107 kafka]$ bin/kafka-server-start.sh config/server.properties &

Check that startup succeeded:

hadoop106

[2020-01-14 23:18:50,346] INFO [Kafka Server 1], started (kafka.server.KafkaServer)

hadoop107

[2020-01-14 23:31:22,569] INFO [Group Metadata Manager on Broker 2]: Removed 0 expired offsets in 1 milliseconds. (kafka.coordinator.group.GroupMetadataManager)
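Instead of watching the console for the `started (kafka.server.KafkaServer)` line shown above, a script can poll a log file for it. This is a minimal sketch: `wait_for_broker` is a hypothetical helper, and the log file path is an assumption (with the default log4j setup the broker's server.log is normally written under the kafka/logs directory).

```shell
# wait_for_broker LOGFILE [TRIES]  (hypothetical helper)
# Polls LOGFILE once per second until the broker's startup line appears.
# Returns 0 on success, 1 if the line is not seen within TRIES attempts.
wait_for_broker() {
  local log="$1" tries="${2:-30}"
  while [ "$tries" -gt 0 ]; do
    if grep -qF 'started (kafka.server.KafkaServer)' "$log" 2>/dev/null; then
      return 0
    fi
    tries=$((tries - 1))
    sleep 1
  done
  return 1
}

# Example usage (log path is an assumption):
# wait_for_broker /usr/local/hadoop/module/kafka/logs/server.log 60 \
#   && echo "broker is up" || echo "broker did not start in time"
```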

Errors reported:

hadoop105:

[2020-01-16 17:59:52,847] INFO [Group Metadata Manager on Broker 0]: Removed 0 expired offsets in 0 milliseconds. (kafka.coordinator.group.GroupMetadataManager)

hadoop106:

kafka.common.InconsistentBrokerIdException: Configured broker.id 1 doesn't match stored broker.id 0 in meta.properties. If you moved your data, make sure your configured broker.id matches. If you intend to create a new broker, you should remove all data in your data directories (log.dirs).

hadoop107:

kafka.common.InconsistentBrokerIdException: Configured broker.id 2 doesn't match stored broker.id 0 in meta.properties. If you moved your data, make sure your configured broker.id matches. If you intend to create a new broker, you should remove all data in your data directories (log.dirs).

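Note:

Before starting the Kafka cluster, change the broker.id in meta.properties (located in the log.dirs directory) so that it matches the one in server.properties. The mismatch most likely arises because the kafka directory copied from hadoop105 already contained a logs/meta.properties file recording broker.id=0.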
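The InconsistentBrokerIdException can be caught before startup by comparing the two files. The sketch below assumes the layout used in this article (config/server.properties and logs/meta.properties under the Kafka directory); `prop_value` and `check_broker_id` are hypothetical helper names.

```shell
# prop_value FILE KEY: prints the value of the last uncommented KEY=... line.
prop_value() {
  awk -F= -v k="$2" '$1 == k { v = substr($0, length(k) + 2) } END { print v }' "$1"
}

# check_broker_id KAFKA_DIR  (hypothetical helper)
# Compares broker.id in config/server.properties with logs/meta.properties.
check_broker_id() {
  local home="$1"
  local meta="$home/logs/meta.properties"
  if [ ! -f "$meta" ]; then
    # A broker that has never started has no meta.properties yet
    echo "no meta.properties yet"
    return 0
  fi
  local configured stored
  configured=$(prop_value "$home/config/server.properties" broker.id)
  stored=$(prop_value "$meta" broker.id)
  if [ "$configured" = "$stored" ]; then
    echo "ok: broker.id=$configured"
  else
    echo "mismatch: configured=$configured stored=$stored"
    return 1
  fi
}

# Example usage:
# check_broker_id /usr/local/hadoop/module/kafka
```

On a mismatch, either edit meta.properties as described above or, for a brand-new broker with no data worth keeping, clear the log.dirs directory as the exception message itself suggests.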

Successful startup, as shown:

hadoop105

hadoop106

hadoop107

Shut down the Kafka cluster

[root@hadoop105 kafka]$ bin/kafka-server-stop.sh stop

[root@hadoop106 kafka]$ bin/kafka-server-stop.sh stop

[root@hadoop107 kafka]$ bin/kafka-server-stop.sh stop

3. Kafka Command-Line Operations

(1) List Java processes with their full class names: jps -l (a command used often when checking processes)

[root@hadoop105 ~]# jps -l

8184 org.apache.zookeeper.server.quorum.QuorumPeerMain

8236 kafka.Kafka

8556 sun.tools.jps.Jps

[root@hadoop105 kafka]#

(2) List all topics on the current server (the empty output shows that no topic exists yet, so one has to be created)

[root@hadoop105 kafka]# bin/kafka-topics.sh --zookeeper hadoop105:2181 --list

[root@hadoop105 kafka]#

(3) Create a topic named first with 2 partitions and 2 replicas

[root@hadoop105 kafka]# bin/kafka-topics.sh --create --zookeeper hadoop105:2181 --partitions 2 --replication-factor 2 --topic first

Created topic "first".

The topic first was created successfully.

(4) List all topics on the current server

[root@hadoop105 kafka]# bin/kafka-topics.sh --list --zookeeper hadoop105:2181

first

[root@hadoop105 kafka]#

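Option descriptions:

--topic: the topic name

--replication-factor: the number of replicas

--partitions: the number of partitions

Note:

a. After the topic is created, its partition replicas are spread across the brokers, as shown:

On hadoop105, a first-0 folder (holding no messages yet) appears under kafka/logs

On hadoop106, first-0 and first-1 folders (holding no messages yet) appear under kafka/logs

On hadoop107, a first-1 folder (holding no messages yet) appears under kafka/logs

As this shows, each replica of a partition is placed on a different broker, so the replication factor cannot exceed the number of brokers.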

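(5) Delete a topic

[root@hadoop105 kafka]$ bin/kafka-topics.sh --zookeeper hadoop105:2181 --delete --topic first

Note:

delete.topic.enable=true must be set in server.properties; otherwise the topic is only marked for deletion, or the brokers have to be restarted.

If a message is still printed after the deletion, it is only a display quirk of the tool.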

(6) Send messages

[root@hadoop105 kafka]# bin/kafka-console-producer.sh --broker-list hadoop105:9092 --topic first

>hello world

>hi KafKa!

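Because this is a cluster, the consumer can be started on a different machine or on the same machine. Next, we consume the messages.

(7) Consume messages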

[root@hadoop106 kafka]# bin/kafka-console-consumer.sh --zookeeper hadoop105:2181 --from-beginning --topic first

Using the ConsoleConsumer with old consumer is deprecated and will be removed in a future major release. Consider using the new consumer by passing [bootstrap-server] instead of [zookeeper].

hello world

He!

HelloWord,KaKa

--from-beginning: reads all existing data in the first topic from the start. Whether to add this option depends on the use case.

Another way to consume (no warning):

[root@hadoop106 kafka]# bin/kafka-console-consumer.sh --bootstrap-server hadoop105:9092 --from-beginning --topic first

HelloWord,KaKa

hello world

He!

Replacing --zookeeper with --bootstrap-server and changing the port to 9092 (the broker port rather than the ZooKeeper port) removes the warning.

(8) Describe a topic

[root@hadoop107 kafka]# bin/kafka-topics.sh --zookeeper hadoop105:2181 --describe --topic first

Topic:first PartitionCount:2 ReplicationFactor:2 Configs:

Topic: first Partition: 0 Leader: 0 Replicas: 0,1 Isr: 0,1

Topic: first Partition: 1 Leader: 1 Replicas: 1,2 Isr: 1,2

[root@hadoop107 kafka]#
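In the describe output, Replicas lists the brokers assigned to a partition and Isr lists those currently in sync. As a small sketch, the hypothetical helper `under_replicated` reads that output on stdin and reports partitions whose Isr list is shorter than their Replicas list. It compares list lengths rather than the strings themselves, since the two lists may appear in different orders.

```shell
# under_replicated  (hypothetical helper)
# Reads `kafka-topics.sh --describe` output on stdin and prints one line
# for every partition with fewer in-sync replicas than assigned replicas.
under_replicated() {
  awk '
    /Partition: / {
      for (i = 1; i < NF; i++) {
        if ($i == "Partition:") p = $(i + 1)
        if ($i == "Replicas:")  r = $(i + 1)
        if ($i == "Isr:")       s = $(i + 1)
      }
      n = split(r, a, ",")   # number of assigned replicas
      m = split(s, b, ",")   # number of in-sync replicas
      if (m < n) print "partition " p ": " m " of " n " replicas in sync"
    }'
}

# Example usage:
# bin/kafka-topics.sh --zookeeper hadoop105:2181 --describe --topic first | under_replicated
```

For the output shown above the helper prints nothing, because both partitions have two replicas and both are in sync.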