I. Design of the Topic-Focused Web Crawler (15 points)

1. Name of the topic-focused crawler

Crawling a Chinese city weather website with Python

2. Content crawled and analysis of its data characteristics

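The crawler collects weather data from the China weather website for every month of each year for each city, including the city name, the daily high and low temperatures, and the weather conditions.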
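These fields correspond to the CSV columns written by the complete program (`date`, `weather`, `temperature_high`, `temperature_low`). A small record type, offered here as a suggestion rather than part of the original code, makes the column positions explicit; mixing up the indices is easy when the CSV is read back by position. The name `WeatherRow` is hypothetical.

```python
from typing import NamedTuple


class WeatherRow(NamedTuple):
    """One day of data, in the column order the crawler writes to CSV."""
    date: str
    weather: str
    temperature_high: str
    temperature_low: str


# Positions follow from the field order:
#   row[0] date, row[1] weather, row[2] temperature_high, row[3] temperature_low
```

A row from `csv.reader` can be converted with `WeatherRow._make(row)`, after which `record.temperature_high` replaces the bare index `row[2]`.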

3. Overview of the crawler design (implementation approach and technical difficulties)

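Implementation approach: fetch each monthly page, extract the weather data with regular expressions and HTML parsing, save it to CSV files, then read the CSV data back and turn it into charts.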

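Technical difficulties: early versions of the code were buggy. At first the crawler returned only province names instead of city names. A later version was still wrong: after the city entries, the extracted values were weather data rather than further cities, so the results were unusable.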

II. Structural Analysis of the Target Pages (15 points)

1. Structural features of the target pages

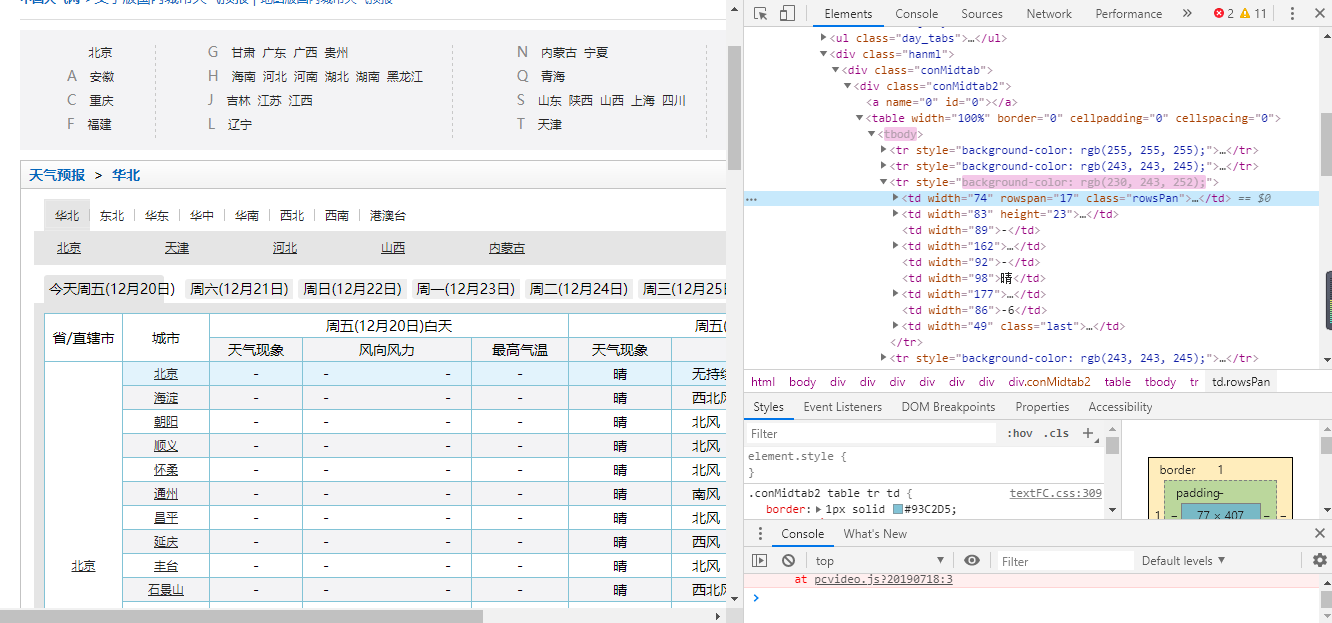

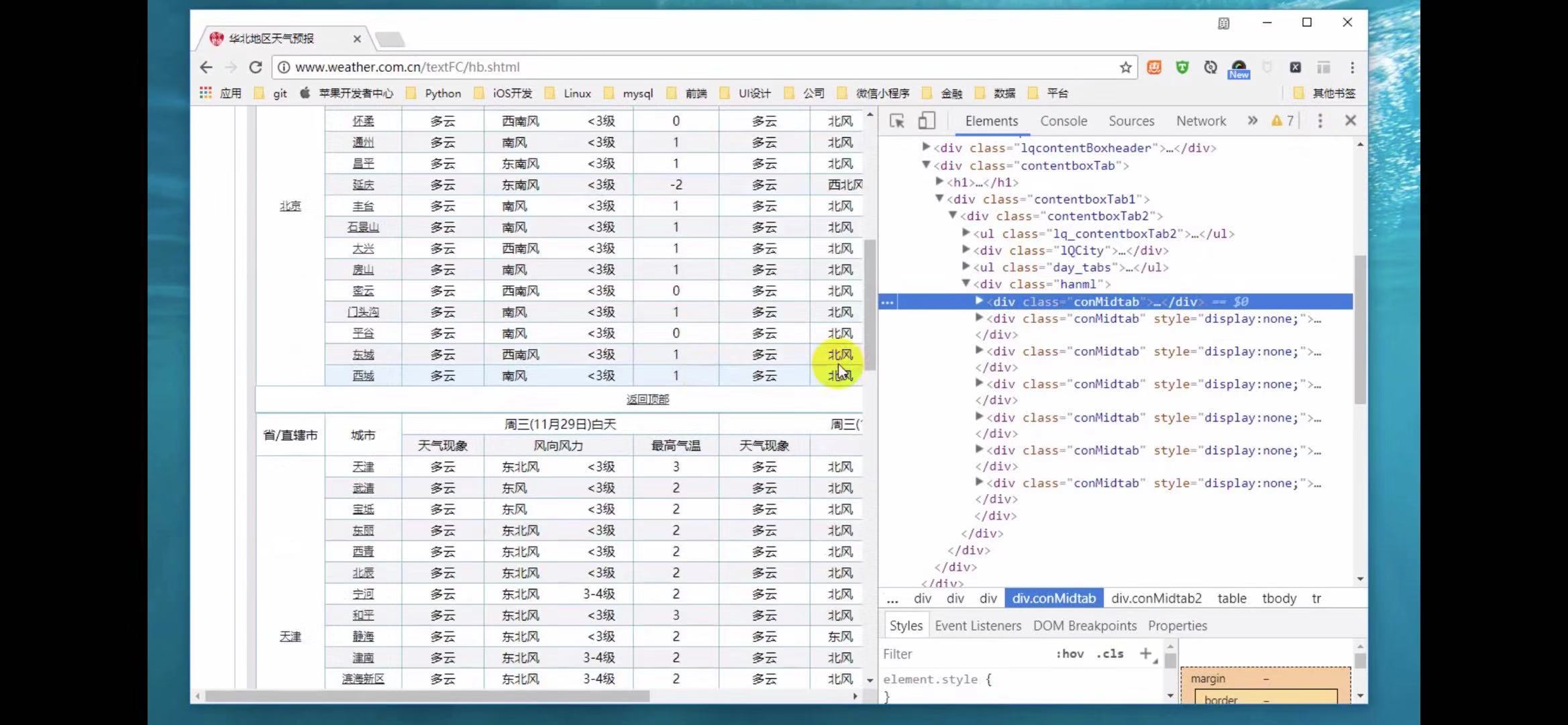

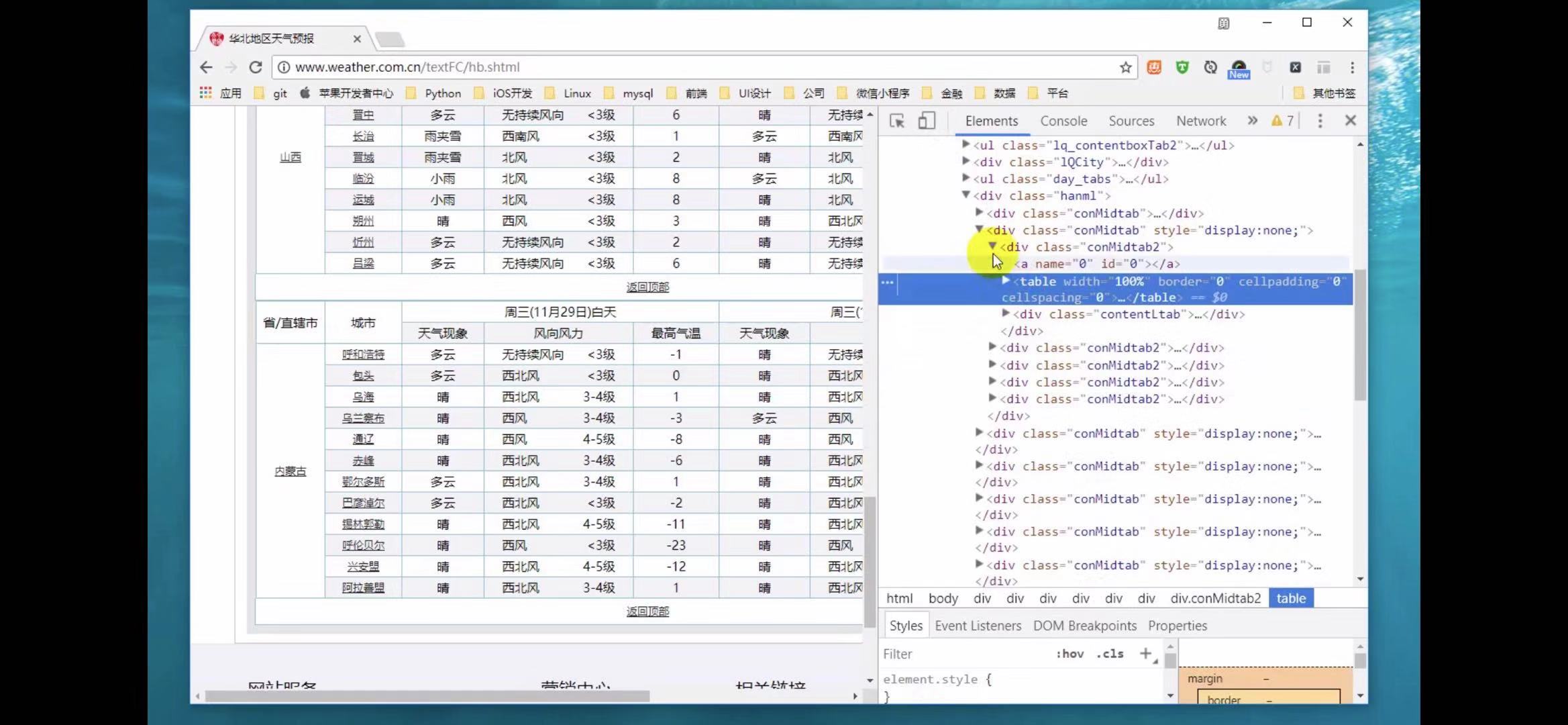

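The weather information is extracted from the site's HTML pages, which are built from `div` elements (including the `conMidtab` blocks), `table` and `tr` elements, and sections hidden with `display: none`.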

2. HTML page parsing

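The following is an analysis of the HTML for some regions of the site. Each province is wrapped in its own `table`, so selecting each table selects all the cities inside it. Within each table, every city's weather information sits in its own `tr`: the first two `tr` tags are table headers, and the data starts from the third.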
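A minimal sketch of this per-province table walk, using regular expressions since the report mentions them. It assumes that data cells are `td` elements and that exactly two header rows come first; the function name `table_rows` is hypothetical. Regular expressions are fragile on real-world HTML, which is why the program below relies on BeautifulSoup instead.

```python
import re

ROW_RE = re.compile(r'<tr[^>]*>(.*?)</tr>', re.S | re.I)   # one <tr>...</tr> row
CELL_RE = re.compile(r'<td[^>]*>(.*?)</td>', re.S | re.I)  # one <td>...</td> cell
TAG_RE = re.compile(r'<[^>]+>')                             # any remaining tag


def table_rows(table_html, header_rows=2):
    """Return the cell texts of each data row, skipping the header rows."""
    rows = []
    for row_html in ROW_RE.findall(table_html)[header_rows:]:
        # Strip inner tags such as <a> links, then surrounding whitespace.
        cells = [TAG_RE.sub('', cell).strip() for cell in CELL_RE.findall(row_html)]
        rows.append(cells)
    return rows
```

Skipping by position keeps header cells out of the results, which is one way to avoid the province-versus-city mix-up described in the technical difficulties.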


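In addition, the `conMidtab` div wraps the information for every city in the region shown on the page. Expanding it reveals another table that again contains a `conMidtab`, but this one is not displayed on the page because it is hidden with `display: none`. The site uses this when you choose which day's weather to view: the block for the previously shown day is hidden.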
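A sketch of skipping the hidden blocks, assuming `class="conMidtab"` is the first attribute in the tag and that the blocks contain no nested `div` (a regular expression cannot match nested tags reliably). The function name `visible_blocks` is hypothetical.

```python
import re

# Captures the remaining attributes of the <div> and its inner HTML.
BLOCK_RE = re.compile(r'<div\s+class="conMidtab"([^>]*)>(.*?)</div>', re.S | re.I)


def visible_blocks(page_html):
    """Return the inner HTML of conMidtab blocks not hidden by display:none."""
    visible = []
    for attrs, inner in BLOCK_RE.findall(page_html):
        style = attrs.replace(' ', '').lower()  # normalise "display: none"
        if 'display:none' not in style:
            visible.append(inner)
    return visible
```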

3. Node (tag) lookup and traversal methods

(Draw the node tree structure where necessary.)

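Each city's weather information is located through its wrappers, going from the `div` to the `table` and then to the `tr` rows, using regular expressions and BeautifulSoup lookups.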

III. Crawler Program Design (60 points)

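The main crawler program must include the following parts, with source code and fairly detailed comments, and a screenshot of the output after each part.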


1. Data crawling and collection

We crawl each page by requesting its URL:

```python
import requests
from requests.exceptions import RequestException


def get_one_page(url):  # fetch the page at the given URL
    print('Crawling ' + url)
    headers = {'User-Agent': 'Mozilla/5.0'}  # User-Agent request header
    try:
        response = requests.get(url, headers=headers)
        if response.status_code == 200:
            return response.content
        return None
    except RequestException:
        return None
```
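`get_one_page` gives up after a single failed request, and the caller then receives `None`. A hypothetical helper `fetch_with_retries`, not part of the original program, can wrap it and try again a few times with a pause between attempts:

```python
import time


def fetch_with_retries(fetch, url, attempts=3, delay=2.0):
    """Call fetch(url) up to `attempts` times; return the first non-None result.

    `fetch` is any function shaped like get_one_page: it returns the page
    content, or None on failure.
    """
    for attempt in range(attempts):
        content = fetch(url)
        if content is not None:
            return content
        if attempt < attempts - 1:
            time.sleep(delay)  # back off before trying again
    return None
```

Usage: `html = fetch_with_retries(get_one_page, url)`. Separately, `requests.get` accepts a `timeout=` argument, which keeps a stalled connection from hanging the whole crawl.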

2. Data cleaning and processing

```python
from bs4 import BeautifulSoup


def parse_one_page(html):  # parse and clean the page
    if html is None:  # get_one_page returns None when the request fails
        return []
    soup = BeautifulSoup(html, "lxml")
    info = soup.find('div', class_='wdetail')
    if info is None:  # no weather table on this page
        return []
    rows = []
    tr_list = info.find_all('tr')[1:]  # start from the second tr (skip the header row)
    for index, tr in enumerate(tr_list):  # enumerate yields each element's position and content
        td_list = tr.find_all('td')
        date = td_list[0].text.strip().replace("\n", "")  # cell text with newlines removed
        weather = td_list[1].text.strip().replace("\n", "").split("/")[0].strip()
        temperature_high = td_list[2].text.strip().replace("\n", "").split("/")[0].strip()
        temperature_low = td_list[2].text.strip().replace("\n", "").split("/")[1].strip()
        rows.append((date, weather, temperature_high, temperature_low))
    return rows
```
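`parse_one_page` stores the temperatures as strings, while plotting needs numbers. Since the code splits the temperature cell on `/`, the cell presumably looks like `18℃ / 10℃`; the `℃` suffix is an assumption about the site. A hypothetical converter to integers:

```python
import re


def to_celsius_int(text):
    """Convert a temperature string such as '18℃' or ' -3 ℃' to an int."""
    match = re.search(r'-?\d+', text)
    if match is None:
        raise ValueError(f'no temperature found in {text!r}')
    return int(match.group())


def split_temperatures(cell_text):
    """Split a 'high / low' cell such as '18℃ / 10℃' into (18, 10)."""
    high, low = cell_text.split('/')
    return to_celsius_int(high), to_celsius_int(low)
```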

3. Text analysis (optional): jieba word segmentation and wordcloud visualization

Next, we choose the cities, years, and months to crawl. The relevant code:

```python
cities = ['quanzhou','beijing','shanghai','tianjin','chongqing','akesu','anning','anqing',
          'anshan','anshun','anyang','baicheng','baishan','baiyiin','bengbu','baoding',
          'baoji','baoshan','bazhong','beihai','benxi','binzhou','bole','bozhou',
          'cangzhou','changde','changji','changshu','changzhou','chaohu','chaoyang',
          'chaozhou','chengde','chengdu','chenggu','chengzhou','chibi','chifeng','chishui',
          'chizhou','chongzuo','chuxiong','chuzhou','cixi','conghua']  # names of the cities to crawl

years = ['2011','2012','2013','2014','2015','2016','2017','2018']  # years to crawl
months = ['01','02','03','04','05','06','07','08','09','10','11','12']  # months to crawl
```
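The crawl covers every combination of city, year, and month, so it makes len(cities) × 8 × 12 requests, i.e. 96 per city. With the `time.sleep(2)` pause in the complete program, each city spends at least 96 × 2 = 192 seconds (about 3.2 minutes) sleeping alone. A sketch that generates the same URLs the main program builds, using `itertools.product` instead of nested loops (the name `month_urls` is hypothetical):

```python
from itertools import product

BASE_URL = 'http://www.tianqihoubao.com/lishi/{city}/month/{year}{month}.html'


def month_urls(cities, years, months):
    """Yield (city, year, month, url) for every combination, in crawl order."""
    for city, year, month in product(cities, years, months):
        yield city, year, month, BASE_URL.format(city=city, year=year, month=month)
```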

```python
def get_one_page(url):  # fetch the page at the given URL
    ...


def parse_one_page(html):  # parse, clean and process the page
    soup = BeautifulSoup(html, "lxml")  # parse with the lxml parser
    info = soup.find('div', class_='wdetail')  # first <div> whose class is "wdetail"
    ...
    date = td_list[0].text.strip().replace("\n", "")  # each cell's text with newlines removed
    ...


if __name__ == '__main__':  # entry point
    # os.chdir()  # set the working directory
    ...

# In the plotting script:
filename = 'quanzhou_weather.csv'  # CSV file to read
current_date = datetime.strptime(row[0], '%Y-%m-%d')  # convert the date string to a datetime object
```

4. Data analysis and visualization

(e.g. bar charts, histograms, scatter plots, box plots, distribution plots, regression analysis)

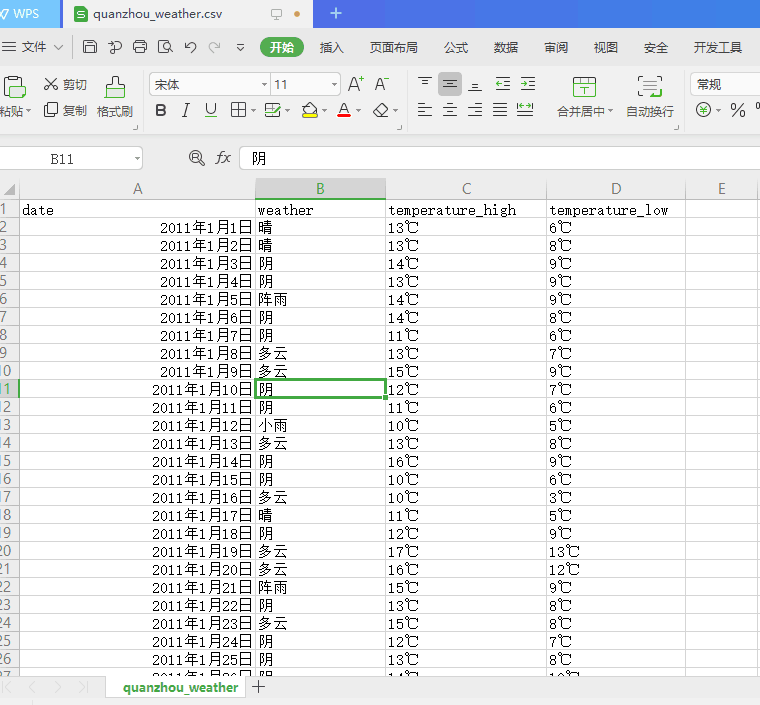

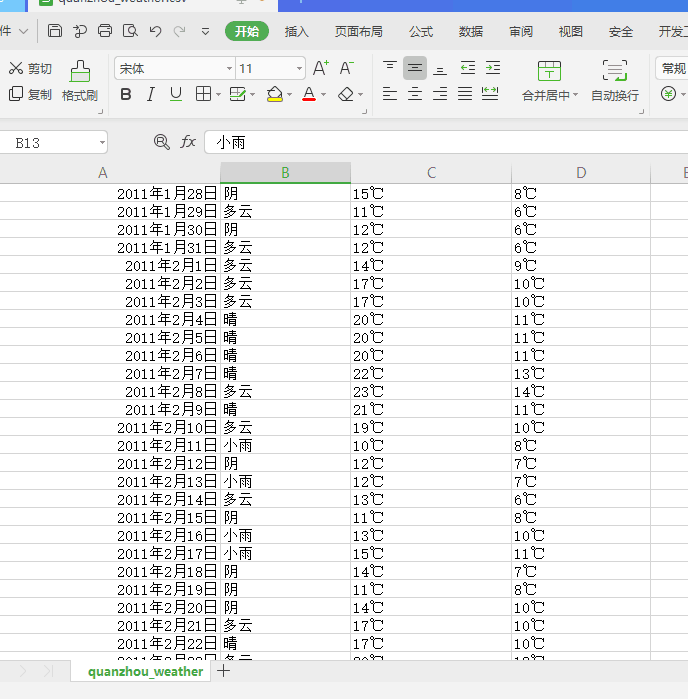

The screenshots show the crawl for the representative city, Quanzhou, completing successfully.

The figure below shows Quanzhou's daily weather data for every month of the years crawled.

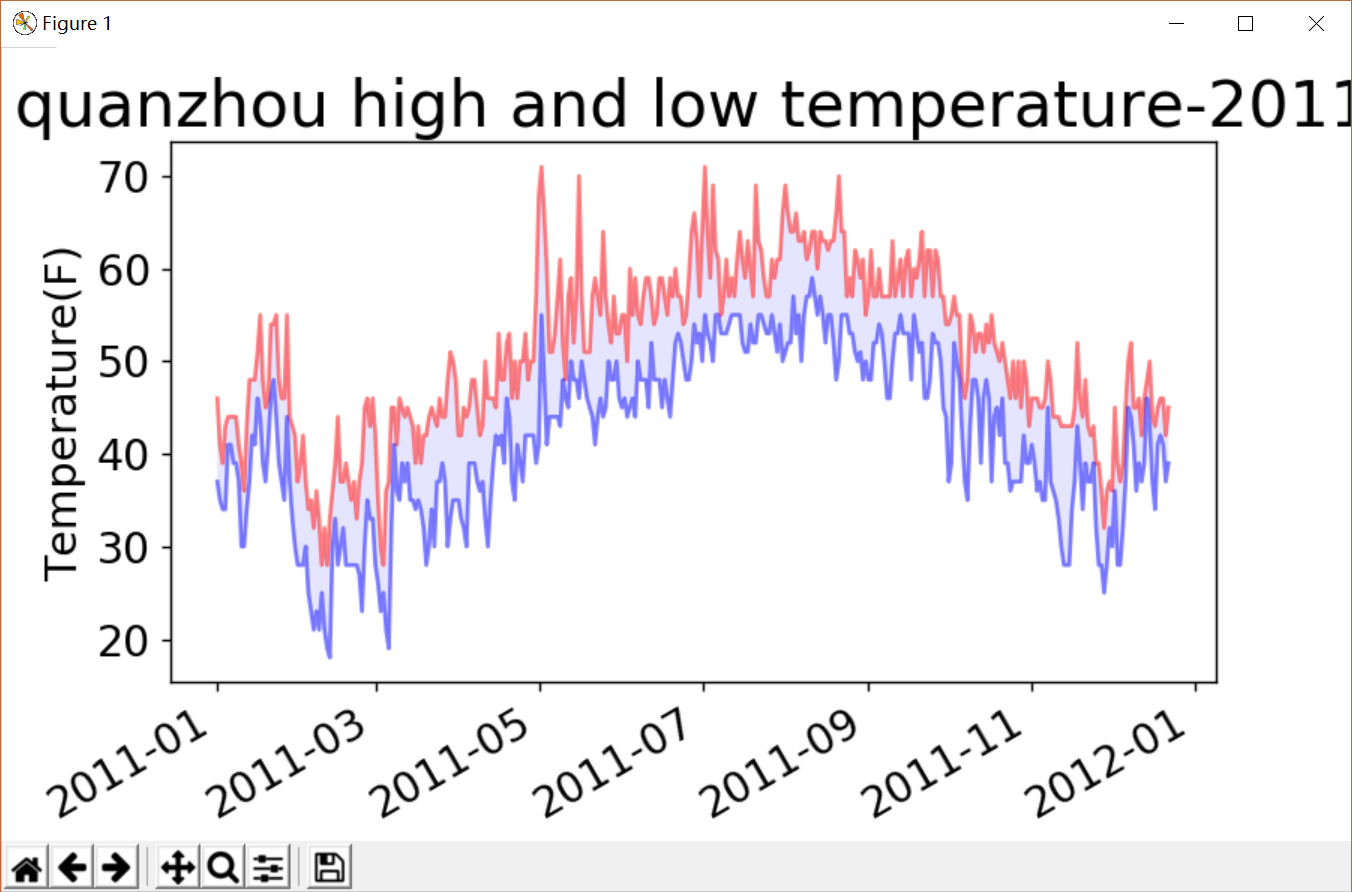

The following is the temperature visualization for the representative city, together with the code that produces it.

```python
import csv
from datetime import datetime

from matplotlib import pyplot as plt

# Read the CSV data
filename = 'quanzhou_weather.csv'
with open(filename) as f:  # open the file and store the file object in f
    reader = csv.reader(f)  # create a reader
    header_row = next(reader)  # next() returns the next line: here the header row, which is skipped
    dates, highs, lows = [], [], []  # lists for the dates, daily highs and daily lows
    for row in reader:
        # Columns: date, weather, temperature_high, temperature_low
        current_date = datetime.strptime(row[0], '%Y-%m-%d')  # adjust the format to match the CSV
        dates.append(current_date)
        high = int(row[2].rstrip('℃'))  # high is column 2; drop any ℃ suffix before int()
        highs.append(high)
        low = int(row[3].rstrip('℃'))  # low is column 3
        lows.append(low)

# Plot the data
fig = plt.figure(dpi=128, figsize=(10, 6))
plt.plot(dates, highs, c='red', alpha=0.5)  # alpha sets opacity: 0 fully transparent, 1 (default) fully opaque
plt.plot(dates, lows, c='blue', alpha=0.5)
plt.fill_between(dates, highs, lows, facecolor='blue', alpha=0.1)  # shade the area between the curves
plt.title('quanzhou high and low temperature-2011', fontsize=24)
plt.xlabel('', fontsize=16)
plt.ylabel('Temperature (°C)', fontsize=16)
plt.tick_params(axis='both', which='major', labelsize=16)
fig.autofmt_xdate()  # draw slanted date labels
plt.show()
```
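The chart shows daily values; monthly averages are a natural summary of the same data. A hypothetical helper `monthly_means` reads the crawler's CSV with `csv.DictReader`. It assumes dates in `YYYY-MM-DD` form (as the plotting code does) and temperatures with an optional `℃` suffix, and it skips the repeated header rows that the append-mode writes in the complete program can leave in a file.

```python
import csv
from collections import defaultdict


def monthly_means(lines):
    """Average daily high/low per 'YYYY-MM' from the crawler's CSV lines.

    `lines` is an open file or any iterable of CSV lines whose header is
    date, weather, temperature_high, temperature_low.
    """
    sums = defaultdict(lambda: [0, 0, 0])  # month -> [sum_high, sum_low, count]
    for row in csv.DictReader(lines):
        if row['date'] == 'date':
            continue  # a header row repeated by an earlier append-mode run
        entry = sums[row['date'][:7]]  # 'YYYY-MM' prefix of the date
        entry[0] += int(row['temperature_high'].rstrip('℃'))
        entry[1] += int(row['temperature_low'].rstrip('℃'))
        entry[2] += 1
    return {m: (h / n, l / n) for m, (h, l, n) in sorted(sums.items())}
```

Usage: `with open('quanzhou_weather.csv', newline='') as f: means = monthly_means(f)`; the result can feed a bar chart of monthly highs and lows.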

5. Data persistence
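The complete program below persists the data by opening `<city>_weather.csv` in append mode (`'a'`) and writing the header row each time a city's file is opened, so re-running the crawl leaves duplicate header rows in the file. A sketch of a hypothetical helper `append_rows` that writes the header only when the file is new or empty. The `encoding='utf-8'` argument is an added assumption; whichever encoding is used here must also be passed to `open` when the CSV is read back for plotting.

```python
import csv
import os

HEADER = ['date', 'weather', 'temperature_high', 'temperature_low']


def append_rows(path, rows):
    """Append rows to a CSV file, writing the header only if the file is new or empty."""
    needs_header = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, 'a', newline='', encoding='utf-8') as f:
        writer = csv.writer(f)
        if needs_header:
            writer.writerow(HEADER)
        writer.writerows(rows)
```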

6. Complete program code

Complete program for crawling the weather data of each city from the China weather website:

```python
import requests
from requests.exceptions import RequestException  # exception raised by failed requests
from bs4 import BeautifulSoup
import os    # operating-system interface (needed only for the optional os.chdir below)
import csv   # save the crawled data as CSV files
import time


def get_one_page(url):  # fetch the page at the given URL
    print('Crawling ' + url)
    headers = {'User-Agent': 'Mozilla/5.0'}  # User-Agent request header
    try:
        response = requests.get(url, headers=headers)
        if response.status_code == 200:
            return response.content
        return None
    except RequestException:
        return None


def parse_one_page(html):  # parse, clean and process the page
    if html is None:  # the request failed
        return []
    soup = BeautifulSoup(html, "lxml")  # parse with the lxml parser
    info = soup.find('div', class_='wdetail')  # first <div> whose class is "wdetail"
    if info is None:  # no weather table on this page
        return []
    rows = []
    tr_list = info.find_all('tr')[1:]  # skip the header row
    for index, tr in enumerate(tr_list):  # enumerate yields each element's position and content
        td_list = tr.find_all('td')
        date = td_list[0].text.strip().replace("\n", "")  # cell text with newlines removed
        weather = td_list[1].text.strip().replace("\n", "").split("/")[0].strip()
        temperature_high = td_list[2].text.strip().replace("\n", "").split("/")[0].strip()
        temperature_low = td_list[2].text.strip().replace("\n", "").split("/")[1].strip()
        rows.append((date, weather, temperature_high, temperature_low))
    return rows


cities = ['quanzhou','beijing','shanghai','tianjin','chongqing','akesu','anning','anqing',
          'anshan','anshun','anyang','baicheng','baishan','baiyiin','bengbu','baoding',
          'baoji','baoshan','bazhong','beihai','benxi','binzhou','bole','bozhou',
          'cangzhou','changde','changji','changshu','changzhou','chaohu','chaoyang',
          'chaozhou','chengde','chengdu','chenggu','chengzhou','chibi','chifeng','chishui',
          'chizhou','chongzuo','chuxiong','chuzhou','cixi','conghua',
          'dali','dalian','dandong','danyang','daqing','datong','dazhou',
          'deyang','dezhou','dongguan','dongyang','dongying','douyun','dunhua',
          'eerduosi','enshi','fangchenggang','feicheng','fenghua','fushun','fuxin',
          'fuyang','fuyang1','fuzhou','fuzhou1','ganyu','ganzhou','gaoming','gaoyou',
          'geermu','gejiu','gongyi','guangan','guangyuan','guangzhou','gubaotou',
          'guigang','guilin','guiyang','guyuan','haerbin','haicheng','haikou',
          'haimen','haining','hami','handan','hangzhou','hebi','hefei','hengshui',
          'hengyang','hetian','heyuan','heze','huadou','huaian','huainan','huanggang',
          'huangshan','huangshi','huhehaote','huizhou','huludao','huzhou','jiamusi',
          'jian','jiangdou','jiangmen','jiangyin','jiaonan','jiaozhou','jiaozou',
          'jiashan','jiaxing','jiexiu','jilin','jimo','jinan','jincheng','jingdezhen',
          'jinghong','jingjiang','jingmen','jingzhou','jinhua','jining1','jining',
          'jinjiang','jintan','jinzhong','jinzhou','jishou','jiujiang','jiuquan','jixi',
          'jiyuan','jurong','kaifeng','kaili','kaiping','kaiyuan','kashen','kelamayi',
          'kuerle','kuitun','kunming','kunshan','laibin','laiwu','laixi','laizhou',
          'langfang','lanzhou','lasa','leshan','lianyungang','liaocheng','liaoyang',
          'liaoyuan','lijiang','linan','lincang','linfen','lingbao','linhe','linxia',
          'linyi','lishui','liuan','liupanshui','liuzhou','liyang','longhai','longyan',
          'loudi','luohe','luoyang','luxi','luzhou','lvliang','maanshan','maoming',
          'meihekou','meishan','meizhou','mianxian','mianyang','mudanjiang','nanan',
          'nanchang','nanchong','nanjing','nanning','nanping','nantong','nanyang',
          'neijiang','ningbo','ningde','panjin','panzhihua','penglai','pingdingshan',
          'pingdu','pinghu','pingliang','pingxiang','pulandian','puning','putian','puyang',
          'qiannan','qidong','qingdao','qingyang','qingyuan','qingzhou','qinhuangdao',
          'qinzhou','qionghai','qiqihaer','quanzhou','qujing','quzhou','rikaze','rizhao',
          'rongcheng','rugao','ruian','rushan','sanmenxia','sanming','sanya','xiamen',
          'foushan','shangluo','shangqiu','shangrao','shangyu','shantou','ankang','shaoguan',
          'shaoxing','shaoyang','shenyang','shenzhen','shihezi','shijiazhuang','shilin',
          'shishi','shiyan','shouguang','shuangyashan','shuozhou','shuyang','simao',
          'siping','songyuan','suining','suizhou','suzhou','tacheng','taian','taicang',
          'taixing','taiyuan','taizhou','taizhou1','tangshan','tengchong','tengzhou',
          'tianmen','tianshui','tieling','tongchuan','tongliao','tongling','tonglu','tongren',
          'tongxiang','tongzhou','tonghua','tulufan','weifang','weihai','weinan','wendeng',
          'wenling','wenzhou','wuhai','wuhan','wuhu','wujiang','wulanhaote','wuwei','wuxi','wuzhou',
          'xian','xiangcheng','xiangfan','xianggelila','xiangshan','xiangtan','xiangxiang',
          'xianning','xiantao','xianyang','xichang','xingtai','xingyi','xining','xinxiang','xinyang',
          'xinyu','xinzhou','suqian','suyu','suzhou1','xuancheng','xuchang','xuzhou','yaan','yanan',
          'yanbian','yancheng','yangjiang','yangquan','yangzhou','yanji','tantai','yanzhou','yibin',
          'yichang','yichun','yichun1','yili','yinchuan','yingkou','yulin1','yulin','yueyang','yongkang',
          'yongzhou','yuxi','changchun','zaozhuang','zhangjiajie','zhangjiakou','changsha','changle',
          'zhangzhou','zhuhai','zhengzhou','zunyi','fuqing','foshan']  # names of the cities to crawl

years = ['2011','2012','2013','2014','2015','2016','2017','2018']  # years to crawl
months = ['01','02','03','04','05','06','07','08','09','10','11','12']  # months to crawl

if __name__ == '__main__':  # entry point
    # os.chdir()  # set the working directory
    for city in cities:
        with open(city + '_weather.csv', 'a', newline='') as f:
            writer = csv.writer(f)
            writer.writerow(['date', 'weather', 'temperature_high', 'temperature_low'])
            for year in years:
                for month in months:
                    url = 'http://www.tianqihoubao.com/lishi/' + city + '/month/' + year + month + '.html'
                    html = get_one_page(url)
                    content = parse_one_page(html)
                    writer.writerows(content)
                    print(city + ' ' + year + '-' + month + ': data crawled')
                    time.sleep(2)  # pause between requests
```

IV. Conclusions (10 points)

1. What conclusions can be drawn from analyzing and visualizing the topic data?

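The data crawled for the representative city is detailed, recording the weather for every single day. The charts also show a large gap between the daily high and low temperatures, i.e. a large diurnal temperature range.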
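To put a number on the diurnal-range observation, the `highs` and `lows` lists built by the plotting script can be reduced to a mean daily range. A minimal sketch; the function name `mean_diurnal_range` is hypothetical.

```python
def mean_diurnal_range(highs, lows):
    """Mean of (daily high - daily low) over paired days."""
    if len(highs) != len(lows) or not highs:
        raise ValueError('need two non-empty lists of equal length')
    return sum(h - l for h, l in zip(highs, lows)) / len(highs)
```

Usage, after the plotting script has filled the lists: `print(mean_diurnal_range(highs, lows))`.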

2. Give a brief summary of how well the programming task was completed.

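Working on this project of crawling the China weather website taught me a lot. For example, I learned that the crawled data must first be saved in CSV format, otherwise the later visualization code cannot run; that each page's URL is built and then crawled (the request must also carry a User-Agent header); that the rows are extracted starting from the `tr` tags; and that `enumerate` returns each element's position together with its content.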