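To scrape data from a page, we first have to visit it by sending an HTTP request. Below are a few simple ways to send requests in Python:

Libraries used: urllib and requests.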

1.urlopen

import urllib.request
import urllib.parse
import urllib.error
import socket

# Encode the form fields as bytes; passing data makes urlopen send a POST.
data = bytes(urllib.parse.urlencode({"hello": "world"}), encoding='utf8')

try:
    response = urllib.request.urlopen('http://httpbin.org/post', data=data, timeout=10)
    print(response.status)
    print(response.read().decode('utf-8'))
except urllib.error.URLError as e:
    # e.reason is a socket.timeout instance when the connection timed out.
    if isinstance(e.reason, socket.timeout):
        print("TIMEOUT")
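As a complement to the POST example above, here is a minimal sketch of a GET request with urllib: the query parameters are appended to the URL with urlencode, and a User-Agent header is attached through urllib.request.Request. The helper name build_request and the User-Agent value my-crawler/0.1 are illustrative assumptions, not part of the original post. The request is only constructed here, not sent.

```python
import urllib.parse
import urllib.request


def build_request(base_url, params, user_agent):
    """Build (but do not send) a GET request with a query string and a User-Agent."""
    query = urllib.parse.urlencode(params)
    url = f"{base_url}?{query}"
    return urllib.request.Request(
        url,
        headers={"User-Agent": user_agent},  # hypothetical value, replace with your own
        method="GET",
    )


req = build_request("http://httpbin.org/get", {"hello": "world"}, "my-crawler/0.1")
print(req.full_url)      # the URL including the encoded query string
print(req.get_method())  # the HTTP method that urlopen would use
```

To actually send it, pass the object to urllib.request.urlopen(req, timeout=10) inside the same try/except shown above.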

2.requests

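The requests library provides get, post, delete, and put functions for sending requests, which is somewhat simpler than the first approach.

Each function accepts its own set of parameters. For get, for example: params (query-string parameters), proxies (proxy settings), auth (authentication), timeout (how long to wait before giving up), and so on.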
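The parameters listed above can be sketched as follows. This is a minimal illustration, assuming the requests package is installed; the helper names fetch and preview_url and the sample query values are made up for the example. preview_url only prepares the request, so the effect of params on the final URL can be inspected without touching the network.

```python
import requests


def fetch(url, params=None, proxies=None, auth=None, timeout=10):
    """Send a GET request and return the body text (performs a real network call)."""
    response = requests.get(
        url,
        params=params,    # dict appended to the URL as a query string
        proxies=proxies,  # e.g. {"http": "http://host:port"}, or None
        auth=auth,        # e.g. a (user, password) tuple for basic auth, or None
        timeout=timeout,  # seconds to wait before raising a timeout error
    )
    response.raise_for_status()  # raise an exception on 4xx/5xx responses
    return response.text


def preview_url(url, params):
    """Prepare a GET request without sending it and return the final URL."""
    return requests.Request("GET", url, params=params).prepare().url


final_url = preview_url("http://httpbin.org/get", {"q": "python", "page": 2})
print(final_url)
```

Calling fetch("http://httpbin.org/get", params={"q": "python"}) would then send the request for real.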

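import requests

# Download a binary file; .content holds the raw response bytes.
ico = requests.get("https://github.com/favicon.ico")

# Write the bytes to disk in binary mode.
with open("favicon.ico", "wb") as file:
    file.write(ico.content)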

3.Request Session

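from requests import Session, Request

url = "https://home.cnblogs.com/u/qiutian-guniang/"

# Minimal request headers; the last example in this post builds a fuller dict with a Cookie.
headers = {'User-Agent': 'Mozilla/5.0'}

s = Session()
req = Request('GET', url=url, headers=headers)

# Let the session merge its own settings into the request, then send it.
pred = s.prepare_request(req)
r = s.send(pred)
print(r.text)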

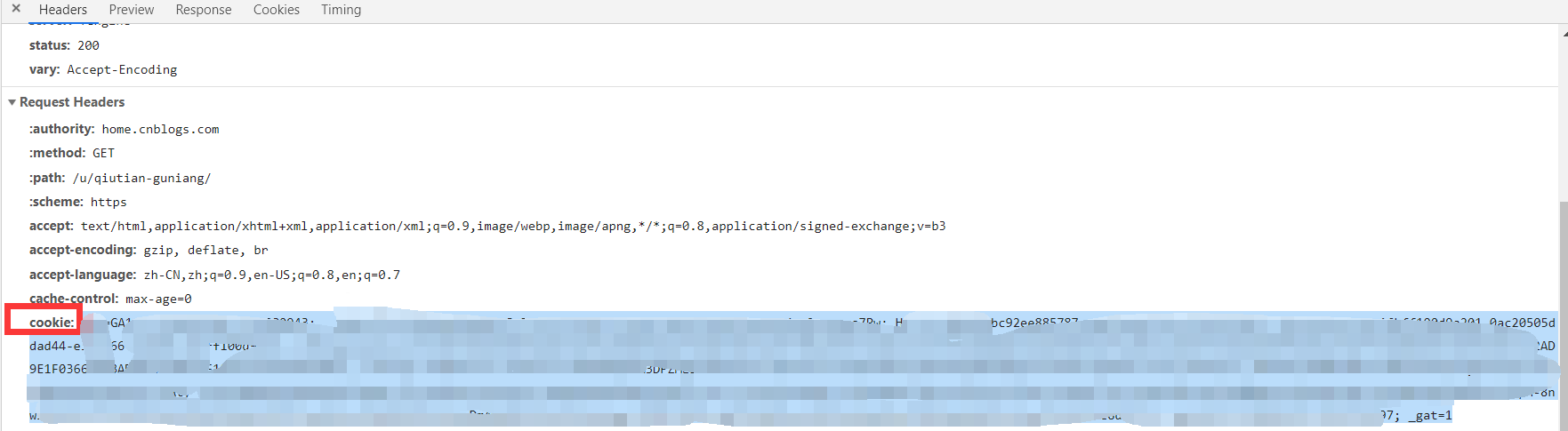

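Some sites block scraping. We can work around this by setting the User-Agent header, and we can use cookies to keep a logged-in session. For example, the cookie value below can be copied from the browser's developer tools (F12) and pasted into the headers: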

cookies = "_gat=1"

headers = {
    "Cookie": cookies,
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; '
                  'x64) AppleWebKit/537.36 (KHTML, like Gecko) '
                  'Chrome/68.0.3440.106 Safari/537.36'
}
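A cookie string copied from the browser usually holds several name=value pairs separated by semicolons. As an alternative to placing the raw string in the Cookie header, here is a minimal sketch that splits it into a dict. The helper name cookie_string_to_dict and the sessionid value are hypothetical, used only for illustration.

```python
def cookie_string_to_dict(raw):
    """Split a 'name=value; name2=value2' cookie string into a dict."""
    cookies = {}
    for pair in raw.split(";"):
        # partition keeps any '=' characters inside the value intact
        name, sep, value = pair.strip().partition("=")
        if sep and name:
            cookies[name] = value
    return cookies


# "sessionid=abc123" is a made-up cookie for the example.
cookie_dict = cookie_string_to_dict("_gat=1; sessionid=abc123")
print(cookie_dict)
```

The resulting dict can be passed to requests through the cookies argument, for example requests.get(url, headers=headers, cookies=cookie_dict).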