Kubernetes Binary Deployment

1. Environment Planning

| Software | Version |
| --- | --- |
| Linux OS | CentOS Linux release 7.6.1810 (Core) |
| Kubernetes | Kubernetes v1.13.4, Dashboard v1.8.3 |
| Docker | Docker version 18.09.1, build 4c52b90 |
| etcd | etcd-v3.3.10 |

| Role | IP | Components | Recommended spec |
| --- | --- | --- | --- |
| k8s_master, etcd01 | 192.168.22.141 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd | 2+ CPU cores, 2 GB+ RAM |
| k8s_node01, etcd02 | 192.168.22.142 | kubelet, kube-proxy, docker, flannel, etcd | 2+ CPU cores, 2 GB+ RAM |
| k8s_node02, etcd03 | 192.168.22.143 | kubelet, kube-proxy, docker, flannel, etcd | 2+ CPU cores, 2 GB+ RAM |

2. Single-Master Cluster Architecture

3. General System Configuration

3.1 Disable SELinux

sed -i 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

setenforce 0

3.2 Adjust open-file limits

sed -i '/* soft nproc 4096/d' /etc/security/limits.d/20-nproc.conf

echo '* - nofile 65536' >> /etc/security/limits.conf

echo '* soft nofile 65535' >> /etc/security/limits.conf

echo '* hard nofile 65535' >> /etc/security/limits.conf

echo 'fs.file-max = 65536' >> /etc/sysctl.conf

3.3 Disable the firewall

systemctl disable firewalld.service

systemctl stop firewalld.service

3.4 Install common tools and synchronize time

yum -y install vim telnet iotop openssh-clients openssh-server ntp net-tools.x86_64 wget

ntpdate time.windows.com

3.5 Configure the hosts file (on all 3 nodes)

vim /etc/hosts

192.168.22.141 k8s_master

192.168.22.142 k8s_node01

192.168.22.143 k8s_node02

192.168.22.144 k8s_node03

192.168.22.145 k8s_node05

vim /etc/hostname    # on the master

k8s_master

vim /etc/hostname    # on node01

k8s_node01

vim /etc/hostname    # on node02

k8s_node02

3.6 Passwordless SSH between servers

ssh-keygen

ssh-copy-id -i ~/.ssh/id_rsa.pub [email protected]

ssh-copy-id -i ~/.ssh/id_rsa.pub [email protected]

4. Self-Signed SSL Certificates

4.1 Generate the etcd certificates

| cfssl.sh | etcd-cert.sh | etcd.sh |

4.1.1 Install the cfssl tools (cfssl.sh)

[root@k8s_master ssl_etcd]# cd /home/k8s_install/Deploy/ssl_etcd

[root@k8s_master ssl_etcd]# chmod +x cfssl.sh

[root@k8s_master ssl_etcd]# ./cfssl.sh

[root@k8s_master ssl_etcd]# cat cfssl.sh

curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl

curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson

curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo

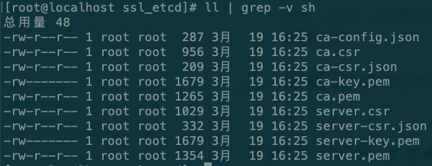

4.1.2 Generate the self-signed etcd CA certificate (etcd-cert.sh)

chmod +x etcd-cert.sh

./etcd-cert.sh

[root@k8s_master ssl_etcd]# cat etcd-cert.sh

cat > ca-config.json <<EOF
{
  "signing": {
    "default": {
      "expiry": "87600h"
    },
    "profiles": {
      "www": {
        "expiry": "87600h",
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ]
      }
    }
  }
}
EOF

cat > ca-csr.json <<EOF
{
  "CN": "etcd CA",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "Beijing",
      "ST": "Beijing"
    }
  ]
}
EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#-----------------------

cat > server-csr.json <<EOF
{
  "CN": "etcd",
  "hosts": [
    "10.206.240.188",
    "10.206.240.189",
    "10.206.240.111"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing"
    }
  ]
}
EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

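Note: hosts must contain the IP of every etcd node. The addresses in the script above are examples; replace them with your own (192.168.22.141 to 192.168.22.143 in this environment). It is worth listing a few reserved addresses for later expansion; otherwise the certificate has to be regenerated when nodes are added.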
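Hand-editing the hosts list is easy to get wrong, so one option is to generate it. The following is a minimal sketch and not part of etcd-cert.sh: the helper name csr_hosts is hypothetical, and the two extra addresses (.144 and .145) are assumed to be the reserved nodes taken from the hosts file in section 3.5.

```shell
#!/bin/sh
# Sketch: print a JSON "hosts" entry for server-csr.json from a list of IPs.
# csr_hosts is a hypothetical helper, not something etcd-cert.sh provides.
csr_hosts() {
    out=""
    for ip in "$@"; do
        # Quote each address and join the entries with commas.
        if [ -z "$out" ]; then
            out="\"$ip\""
        else
            out="$out, \"$ip\""
        fi
    done
    printf '"hosts": [%s]\n' "$out"
}

# Three current etcd members plus two addresses assumed to be reserved.
csr_hosts 192.168.22.141 192.168.22.142 192.168.22.143 \
          192.168.22.144 192.168.22.145
```

The printed line can be pasted over the hosts entry in server-csr.json before running cfssl gencert.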

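4.1.3 Install etcd from the binary package

# Directories for config files, binaries, and certificates

mkdir /opt/etcd/{cfg,bin,ssl} -p

# Be sure to copy the SSL certificates into /opt/etcd/ssl/

cp {ca,server-key,server}.pem /opt/etcd/ssl/

# Deploy etcd and register the etcd service (etcd.sh)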

cd /home/k8s_install/soft/

tar -zxvf etcd-v3.3.10-linux-amd64.tar.gz

cd etcd-v3.3.10-linux-amd64

mv etcd etcdctl /opt/etcd/bin/

cd /home/k8s_install/ssl_etcd

chmod +x etcd.sh

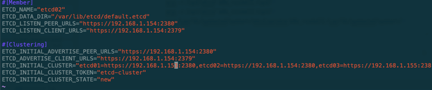

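Arguments: (1) etcd member name, (2) this host's IP, (3) names and peer URLs of the other two etcd members

./etcd.sh etcd01 192.168.22.141 etcd02=https://192.168.22.142:2380,etcd03=https://192.168.22.143:2380

The command appears to hang after it starts; it is actually waiting for the other two members to join.

Deploy etcd on the other two nodes: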

scp -r /opt/etcd/ k8s_node01:/opt/

scp -r /opt/etcd/ k8s_node02:/opt/

scp /usr/lib/systemd/system/etcd.service k8s_node01:/usr/lib/systemd/system/

scp /usr/lib/systemd/system/etcd.service k8s_node02:/usr/lib/systemd/system/

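# Edit the config file on the nodes (required on both nodes)

ssh k8s_node01

vim /opt/etcd/cfg/etcd

Change ETCD_NAME and the IP address in the listen and advertise URLs; ETCD_INITIAL_CLUSTER must keep listing all three members unchanged.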

systemctl daemon-reload

systemctl start etcd.service
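The manual edit of /opt/etcd/cfg/etcd on each node (done before starting the service) could also be scripted. This is a minimal sketch under stated assumptions: retarget_etcd_cfg is a hypothetical helper, and the sample file below contains only a few of the keys that etcd.sh writes. It rewrites ETCD_NAME and swaps the master's IP for the node's IP on every line except ETCD_INITIAL_CLUSTER.

```shell
#!/bin/sh
# Sketch: adapt a copied etcd config file for another cluster member.
# Usage: retarget_etcd_cfg FILE NEW_NAME OLD_IP NEW_IP   (hypothetical helper)
retarget_etcd_cfg() {
    file=$1 name=$2 old_ip=$3 new_ip=$4
    # Escape the dots so sed matches the address literally.
    old_re=$(printf '%s' "$old_ip" | sed 's/\./\\./g')
    sed -e 's/^ETCD_NAME=.*/ETCD_NAME="'"$name"'"/' \
        -e '/^ETCD_INITIAL_CLUSTER=/!s/'"$old_re"'/'"$new_ip"'/g' \
        "$file" > "$file.tmp" && mv "$file.tmp" "$file"
}

# Demonstration on a temporary copy instead of /opt/etcd/cfg/etcd.
cfg=$(mktemp)
cat > "$cfg" <<'EOF'
ETCD_NAME="etcd01"
ETCD_LISTEN_PEER_URLS="https://192.168.22.141:2380"
ETCD_INITIAL_CLUSTER="etcd01=https://192.168.22.141:2380,etcd02=https://192.168.22.142:2380,etcd03=https://192.168.22.143:2380"
EOF
retarget_etcd_cfg "$cfg" etcd02 192.168.22.141 192.168.22.142
cat "$cfg"
rm -f "$cfg"
```

On node01 the call would target /opt/etcd/cfg/etcd with etcd02 and 192.168.22.142; on node02, etcd03 and 192.168.22.143.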

The etcd.sh script is as follows:

[root@k8s_master ssl_etcd]# cat etcd.sh

#!/bin/bash
# example: ./etcd.sh etcd01 192.168.22.141 etcd02=https://192.168.22.142:2380,etcd03=https://192.168.22.143:2380

ETCD_NAME=$1
ETCD_IP=$2
ETCD_CLUSTER=$3

WORK_DIR=/opt/etcd

# Write the environment file; this member is listed first in the initial
# cluster, followed by the peers passed as the third argument.
cat <<EOF >$WORK_DIR/cfg/etcd
#[Member]
ETCD_NAME="${ETCD_NAME}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ETCD_IP}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ETCD_IP}:2379"

#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ETCD_IP}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ETCD_IP}:2379"
ETCD_INITIAL_CLUSTER="${ETCD_NAME}=https://${ETCD_IP}:2380,${ETCD_CLUSTER}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF

# Write the systemd unit; \${...} is left for systemd to expand from the
# environment file, while ${WORK_DIR} is expanded now by the shell.
cat <<EOF >/usr/lib/systemd/system/etcd.service
[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target

[Service]
Type=notify
EnvironmentFile=${WORK_DIR}/cfg/etcd
ExecStart=${WORK_DIR}/bin/etcd \
--name=\${ETCD_NAME} \
--data-dir=\${ETCD_DATA_DIR} \
--listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \
--listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \
--advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \
--initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \
--initial-cluster=\${ETCD_INITIAL_CLUSTER} \
--initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \
--initial-cluster-state=new \
--cert-file=${WORK_DIR}/ssl/server.pem \
--key-file=${WORK_DIR}/ssl/server-key.pem \
--peer-cert-file=${WORK_DIR}/ssl/server.pem \
--peer-key-file=${WORK_DIR}/ssl/server-key.pem \
--trusted-ca-file=${WORK_DIR}/ssl/ca.pem \
--peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable etcd
systemctl restart etcd

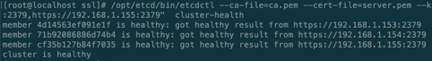

4.1.4 Check etcd cluster health

cd /opt/etcd/ssl

/opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.22.141:2379,https://192.168.22.142:2379,https://192.168.22.143:2379" cluster-health
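The same comma-separated endpoint list is typed out again for flannel and kube-apiserver later on, so it may help to build it once. A minimal sketch, assuming the client port 2379 used throughout this guide; etcd_endpoints is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: build the comma-separated etcd endpoint list from node IPs.
# etcd_endpoints is a hypothetical helper; 2379 is the client port used above.
etcd_endpoints() {
    list=""
    for ip in "$@"; do
        # Add a comma separator only when the list is already non-empty.
        list="${list:+$list,}https://$ip:2379"
    done
    printf '%s\n' "$list"
}

ENDPOINTS=$(etcd_endpoints 192.168.22.141 192.168.22.142 192.168.22.143)
echo "$ENDPOINTS"
```

The value can then be passed as --endpoints="$ENDPOINTS" to the etcdctl health check above.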

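5. Install Docker (on the nodes)

5.1 Install dependencies

yum install -y yum-utils device-mapper-persistent-data lvm2

5.2 Configure the package repository (using the Aliyun mirror instead of the official one)

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

5.3 Refresh the cache and install Docker CE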

yum makecache fast

yum install docker-ce -y

5.4 Configure a Docker registry mirror

curl -sSL https://get.daocloud.io/daotools/set_mirror.sh | sh -s http://f1361db2.m.daocloud.io

5.5 Start Docker

systemctl restart docker.service

systemctl enable docker.service

6. Deploy the Flannel Network

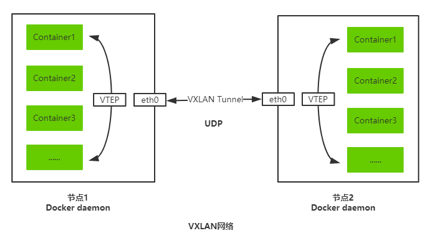

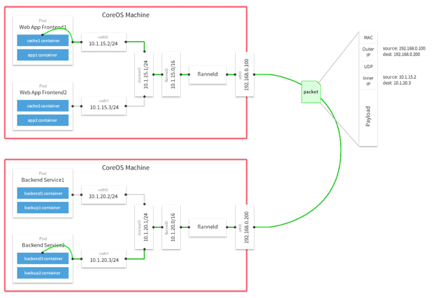

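Overlay network: a virtual network layered on top of the underlying network; hosts in the overlay are connected through virtual links.

VXLAN: encapsulates the original packet in UDP, using the underlying network's IP/MAC as the outer header, and sends it over Ethernet. At the destination, the tunnel endpoint decapsulates the packet and delivers the data to the target address.

Flannel: one kind of overlay network. It likewise wraps the original packet inside another network packet for routing and forwarding, and currently supports UDP, VXLAN, AWS VPC, and GCE routes as forwarding backends.

Other mainstream options for multi-host container networking include tunnel-based solutions (Weave, Open vSwitch) and routing-based solutions (Calico).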

6.1 Write the allocated subnet to etcd for flanneld to use (on the master)

/opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints=https://192.168.22.141:2379,https://192.168.22.142:2379,https://192.168.22.143:2379 set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

6.2 Install Flannel from the binary package (on the nodes, flannel.sh)

# Download: https://github.com/coreos/flannel/releases/download/

mkdir /opt/kubernetes/{bin,cfg,ssl} -p

cd /home/k8s_install/flannel_install/

tar -zxvf flannel-v0.10.0-linux-amd64.tar.gz

mv {flanneld,mk-docker-opts.sh} /opt/kubernetes/bin/

cd /home/k8s_install/flannel_install

chmod +x flannel.sh

chmod +x /opt/kubernetes/bin/{flanneld,mk-docker-opts.sh}

./flannel.sh https://192.168.22.141:2379,https://192.168.22.142:2379,https://192.168.22.143:2379

The script is as follows:

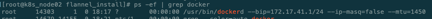

6.3 Verify that flannel deployed successfully

7. Deploy the Master Components

mkdir /opt/kubernetes/{bin,cfg,ssl} -p

cd /home/k8s_install/k8s_master_componet

tar -zxvf kubernetes-server-linux-amd64.tar.gz

cp -r kubernetes/server/bin/{kube-scheduler,kube-controller-manager,kube-apiserver} /opt/kubernetes/bin

cp kubernetes/server/bin/kubectl /usr/bin/

7.1 Deploy kube-apiserver

chmod +x apiserver.sh

./apiserver.sh 192.168.22.141 https://192.168.22.141:2379,https://192.168.22.142:2379,https://192.168.22.143:2379

Script contents:

7.1.1 Generate the self-signed kube-apiserver certificates (k8s-cert.sh)

chmod +x k8s-cert.sh

./k8s-cert.sh

cp ca.pem server.pem ca-key.pem server-key.pem /opt/kubernetes/ssl/

The script is as follows:

Note: include in hosts any nodes you may add later, so that the self-signed certificate does not have to be regenerated.

7.1.2 Generate the token file (kube_token.sh)

sh kube_token.sh

mv token.csv /opt/kubernetes/cfg/

The script (kube_token.sh) is as follows:

# Create the TLS bootstrapping token
BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')

cat > token.csv <<EOF
${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"
EOF
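Each line of token.csv has the form token,user,uid,"group". As a minimal sketch (not part of kube_token.sh), the token can be sanity-checked before the file is written; the 32-hex-character expectation follows from reading 16 random bytes, and the variable name line is only for illustration.

```shell
#!/bin/sh
# Sketch: generate a token the same way kube_token.sh does, then check
# that it is 32 lowercase hex characters before building the CSV line.
BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' \n')

case $BOOTSTRAP_TOKEN in
    ""|*[!0-9a-f]*)
        echo "unexpected token format" >&2
        exit 1
        ;;
esac
[ "${#BOOTSTRAP_TOKEN}" -eq 32 ] || { echo "unexpected token length" >&2; exit 1; }

# Fields: token, user name, user ID, quoted group name.
line="${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,\"system:kubelet-bootstrap\""
echo "$line"
```

The user name in the second field is the one bound to system:node-bootstrapper in section 8.1.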

7.1.3 Deploy the kube-apiserver config file and service

cd /home/k8s_install/k8s_master_componet

chmod +x apiserver.sh

./apiserver.sh 192.168.22.141 https://192.168.22.141:2379,https://192.168.22.142:2379,https://192.168.22.143:2379

The script (apiserver.sh) is as follows:

7.2 Deploy kube-controller-manager

7.2.1 Deploy the controller-manager config file and service

cd /home/k8s_install/k8s_master_componet

chmod +x controller-manager.sh

./controller-manager.sh 127.0.0.1

The script is as follows:

7.3 Deploy kube-scheduler

7.3.1 Deploy the kube-scheduler config file and service

cd /home/k8s_install/k8s_master_componet

chmod +x scheduler.sh

./scheduler.sh 127.0.0.1

The script is as follows:

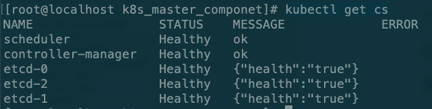

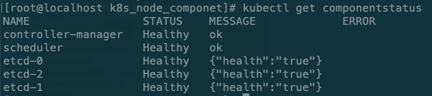

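7.4 Check master status

kubectl get cs

8. Deploy the Node Components

8.1 Bind the kubelet-bootstrap user to the system cluster role (run on the master)

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

8.2 Create the kubeconfig files (they hold the credentials for connecting to the apiserver; run on the master)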

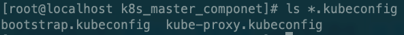

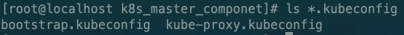

cd /home/k8s_install/k8s_master_componet

chmod +x kubeconfig.sh

# Before running, make sure BOOTSTRAP_TOKEN in the script matches the token in token.csv; edit the script if it does not

cat /opt/kubernetes/cfg/token.csv

./kubeconfig.sh 192.168.22.141 /home/k8s_install/k8s_master_componet/

Arguments: param1 is the apiserver address; param2 is the directory containing the generated certificates
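Copying the token by hand from token.csv into kubeconfig.sh is error-prone. A minimal sketch of reading it instead: read_bootstrap_token is a hypothetical helper, and how kubeconfig.sh consumes the value is not shown in this guide, so the final assignment is an assumption about how it might be used.

```shell
#!/bin/sh
# Sketch: read the token (first comma-separated field of the first
# non-empty line) from a token file. read_bootstrap_token is hypothetical.
read_bootstrap_token() {
    sed -n '/./{p;q;}' "$1" | cut -d, -f1
}

# Demonstration on a sample file; on the master the argument would be
# /opt/kubernetes/cfg/token.csv.
sample=$(mktemp)
printf '%s\n' '0123456789abcdef0123456789abcdef,kubelet-bootstrap,10001,"system:kubelet-bootstrap"' > "$sample"
BOOTSTRAP_TOKEN=$(read_bootstrap_token "$sample")
echo "$BOOTSTRAP_TOKEN"
rm -f "$sample"
```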

Script notes:

8.3 Copy the kubeconfig files to the nodes

scp -r *.kubeconfig k8s_node01:/opt/kubernetes/cfg/

scp -r *.kubeconfig k8s_node02:/opt/kubernetes/cfg/

8.4 Deploy kubelet

# Copy the binaries to the node

scp kubelet kube-proxy k8s_node01:/opt/kubernetes/bin/

cd /home/k8s_install/k8s_node_componet

chmod +x kubelet.sh

./kubelet.sh 192.168.22.142

The script is as follows:

8.5 Deploy kube-proxy

cd /home/k8s_install/k8s_node_componet

chmod +x proxy.sh

./proxy.sh 192.168.22.142

The script is as follows:

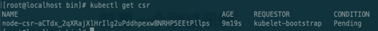

8.6 Approve the node certificate signing requests on the master

kubectl get csr

kubectl certificate approve node-csr-aCTdx_2qXRajXlHrIlg2uPddhpexw8NRHP5EEtPllps

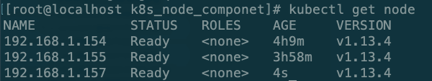

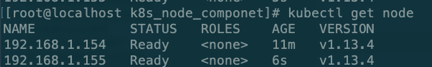
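When several nodes join at once, copying each request name by hand gets tedious. A minimal sketch of filtering the pending requests: pending_csrs is a hypothetical helper, and it assumes the tabular output of kubectl get csr ends with a CONDITION column, as in the sample rows below (illustrative data, apart from the request name taken from the command above).

```shell
#!/bin/sh
# Sketch: print the names of CSRs whose last column (CONDITION) is Pending.
# pending_csrs is a hypothetical helper that reads `kubectl get csr`
# output on stdin and skips the header row.
pending_csrs() {
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Illustrative input; on the master this would be: kubectl get csr | pending_csrs
pending_csrs <<'EOF'
NAME                                                   AGE   REQUESTOR           CONDITION
node-csr-aCTdx_2qXRajXlHrIlg2uPddhpexw8NRHP5EEtPllps   2m    kubelet-bootstrap   Pending
node-csr-example-already-approved                      9m    kubelet-bootstrap   Approved,Issued
EOF
```

Each printed name can then be passed to kubectl certificate approve after checking that the request comes from a node you expect.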

9. Check Cluster Status

# kubectl get node

# kubectl get componentstatus

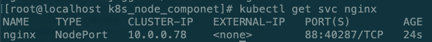

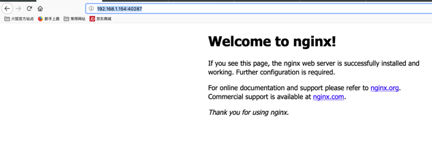

10. Run a Test Workload

# kubectl create deployment nginx --image=nginx

# kubectl get pod -o wide

# kubectl expose deployment nginx --port=88 --target-port=80 --type=NodePort

# kubectl get svc nginx

http://192.168.1.154:40287/

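Authorization (this grants cluster-admin to anonymous users, so use it only in an isolated test environment):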

kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=system:anonymous

11. Adding a New Node

This section uses 157 as the example.

11.1 Apply the general system configuration from Section 3

Update hosts (on all nodes)

vim /etc/hosts

192.168.22.141 k8s_master

192.168.22.142 k8s_node01

192.168.22.143 k8s_node02

192.168.22.144 k8s_node03

11.2 Install Docker as described in Section 5

11.3 Copy files from node01 to node03

scp -r /opt/kubernetes/ k8s_node03:/opt/

scp /usr/lib/systemd/system/{kubelet,kube-proxy}.service k8s_node03:/usr/lib/systemd/system/

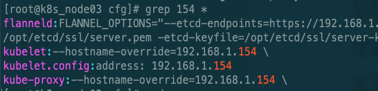

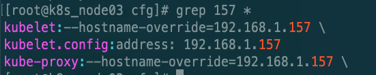

11.3.1 Replace the node IP

cd /opt/kubernetes/cfg

Update the IP in these files: kubelet, kubelet.config, kube-proxy
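The three files can be updated in one pass rather than edited one by one. A minimal sketch, assuming node01's address (192.168.22.142) appears literally in the copied files; replace_node_ip is a hypothetical helper, and the sample file contents in the demonstration are illustrative, since the real files are not shown in this guide.

```shell
#!/bin/sh
# Sketch: replace the old node IP in kubelet, kubelet.config and kube-proxy.
# Usage: replace_node_ip DIR OLD_IP NEW_IP   (hypothetical helper)
replace_node_ip() {
    dir=$1 old_ip=$2 new_ip=$3
    # Escape the dots so sed matches the address literally.
    old_re=$(printf '%s' "$old_ip" | sed 's/\./\\./g')
    for f in kubelet kubelet.config kube-proxy; do
        [ -f "$dir/$f" ] || continue
        sed "s/$old_re/$new_ip/g" "$dir/$f" > "$dir/$f.tmp" &&
            mv "$dir/$f.tmp" "$dir/$f"
    done
    # List any file that still mentions the old address (normally none).
    grep -l -F "$old_ip" "$dir/kubelet" "$dir/kubelet.config" \
        "$dir/kube-proxy" 2>/dev/null || true
}

# Demonstration in a temporary directory instead of /opt/kubernetes/cfg.
demo=$(mktemp -d)
printf '%s\n' '--hostname-override=192.168.22.142' > "$demo/kubelet"
replace_node_ip "$demo" 192.168.22.142 192.168.22.144
cat "$demo/kubelet"
rm -rf "$demo"
```

On node03 the call would use /opt/kubernetes/cfg as the directory. The match is a plain substring replacement, so an old address that is a prefix of another address in the same file would need a stricter pattern.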

11.3.2 Delete node01's credentials

rm -rf /opt/kubernetes/ssl/*

11.3.3 Start the kubelet and kube-proxy services

systemctl start kubelet.service

systemctl enable kubelet.service

systemctl start kube-proxy.service

systemctl enable kube-proxy.service

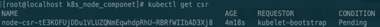

11.3.4 Approve the new node on the master

# kubectl get csr

# kubectl certificate approve node-csr-tE3KOFUjDDu1VLUZQNmEqwhdpRhU-RBRfWIIbAD3Xj8