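The C++ code for KNN background modeling (including a comparison with OpenCV's built-in BackgroundSubtractorKNN and MOG2) is as follows: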

#include "opencv2/imgcodecs.hpp"

#include "opencv2/imgproc.hpp"

#include "opencv2/videoio.hpp"

#include <opencv2/highgui.hpp>

#include <opencv2/video.hpp>

#include <cmath>    // fabs

#include <cstdlib>  // malloc, exit

#include <cstring>  // memset

#include <iostream>

#include <sstream>

using namespace cv;

using namespace std;

#define RATIO 2

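const int HISTORY_NUM = 14; // number of history frames kept per pixel

const int nKNN = 3; // k: minimum number of matching samples for a pixel to be judged background

const float defaultDist2Threshold = 20.0f; // gray-level match threshold (compared against the absolute difference, not its square)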

struct PixelHistory

{

unsigned char *gray; // history of gray values

unsigned char *IsBG; // background flag for each stored gray value: 1 = judged background, 0 = judged foreground

};

int main()

{

PixelHistory* framePixelHistory = NULL; // per-pixel history for every pixel in the frame

cv::Mat frame, FGMask, FGMask_KNN;

int keyboard = 0;

int rows, cols;

rows = cols = 0;

bool InitFlag = false;

int frameCnt = 0;

int gray = 0;

const char* file_path = "..//Data//in_man.avi"; // string literals must bind to const char* in C++11 and later

// Foreground mask generated by MOG2 method

Mat fgMaskMOG2;

// Background

Mat bgImg;

VideoCapture capture(file_path);

Ptr<BackgroundSubtractorKNN> pBackgroundKnn =

createBackgroundSubtractorKNN();

pBackgroundKnn->setHistory(200);

pBackgroundKnn->setDist2Threshold(600);

pBackgroundKnn->setShadowThreshold(0.5);

Ptr<BackgroundSubtractorMOG2> pMOG2 = createBackgroundSubtractorMOG2(200, 36.0, false);

while ((char)keyboard != 'q' && (char)keyboard != 27)

{

// Read the current frame

if (!capture.read(frame))

break; // end of video or read error: leave the loop so capture.release() still runs

resize(frame, frame, Size(frame.size().width / RATIO, frame.size().height / RATIO));

imshow("Frame", frame);

pMOG2->apply(frame, fgMaskMOG2);

pMOG2->getBackgroundImage(bgImg);

medianBlur(fgMaskMOG2, fgMaskMOG2, 5);

// imshow("medianBlur", fgMaskMOG2);

// Closing: fill small black holes inside the foreground blobs

morphologyEx(fgMaskMOG2, fgMaskMOG2, MORPH_CLOSE, getStructuringElement(MORPH_RECT, Size(5, 5)));

// Opening: remove small white specks (noise) from the background

morphologyEx(fgMaskMOG2, fgMaskMOG2, MORPH_OPEN, getStructuringElement(MORPH_RECT, Size(5, 5)));

imshow("morphologyEx", fgMaskMOG2);

cvtColor(frame, frame, COLOR_BGR2GRAY); // the legacy CV_BGR2GRAY macro is not defined by the C++ headers included here

if (!InitFlag)

{

// Initialize variables on the first frame

rows = frame.rows;

cols = frame.cols;

FGMask.create(rows, cols, CV_8UC1); // allocate the output mask

// Allocate framePixelHistory

framePixelHistory = (PixelHistory*)malloc(rows*cols * sizeof(PixelHistory));

for (int i = 0; i < rows*cols; i++)

{

framePixelHistory[i].gray = (unsigned char*)malloc(HISTORY_NUM * sizeof(unsigned char));

framePixelHistory[i].IsBG = (unsigned char*)malloc(HISTORY_NUM * sizeof(unsigned char));

memset(framePixelHistory[i].gray, 0, HISTORY_NUM * sizeof(unsigned char));

memset(framePixelHistory[i].IsBG, 0, HISTORY_NUM * sizeof(unsigned char));

}

InitFlag = true;

}

if (InitFlag)

{

FGMask.setTo(Scalar(255));

for (int i = 0; i < rows; i++)

{

for (int j = 0; j < cols; j++)

{

gray = frame.at<unsigned char>(i, j);

int fit = 0;

int fit_bg = 0;

// Compare against the history to decide foreground/background

for (int n = 0; n < HISTORY_NUM; n++)

{

if (fabs(gray - framePixelHistory[i*cols + j].gray[n]) < defaultDist2Threshold) // is the gray-level difference within the threshold?

{

fit++;

if (framePixelHistory[i*cols + j].IsBG[n]) // this history sample was itself judged background

{

fit_bg++;

}

}

}

if (fit_bg >= nKNN) // the current pixel is judged background

{

FGMask.at<unsigned char>(i, j) = 0;

}

// Update the history

int index = frameCnt % HISTORY_NUM;

framePixelHistory[i*cols + j].gray[index] = gray;

framePixelHistory[i*cols + j].IsBG[index] = fit >= nKNN ? 1 : 0; // store whether the current sample counts as a background sample

}

}

}

pBackgroundKnn->apply(frame, FGMask_KNN);

imshow("yuanFGMask", FGMask);

medianBlur(FGMask, FGMask, 5);

imshow("medianBlur", FGMask);

cv::Mat structuringElement2x2 = cv::getStructuringElement(cv::MORPH_RECT, cv::Size(2, 2));

erode(FGMask, FGMask, structuringElement2x2); // one erosion pass to remove noise

cv::threshold(FGMask, FGMask, 20, 255.0, cv::THRESH_BINARY); // threshold to obtain a binary mask

cv::dilate(FGMask, FGMask, structuringElement2x2);

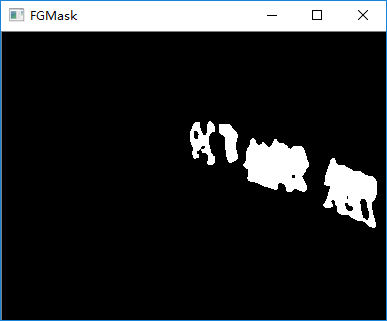

imshow("FGMask", FGMask);

// Structuring element for the morphological operations (the 2x2 element defined above is reused)

//cv::Mat structuringElement2x2 = cv::getStructuringElement(cv::MORPH_RECT, cv::Size(2, 2));

erode(FGMask_KNN, FGMask_KNN, structuringElement2x2); // one erosion pass to remove noise

cv::threshold(FGMask_KNN, FGMask_KNN, 20, 255.0, cv::THRESH_BINARY); // threshold to obtain a binary mask

cv::dilate(FGMask_KNN, FGMask_KNN, structuringElement2x2);

imshow("FGMask_KNN", FGMask_KNN);

keyboard = waitKey(30);

frameCnt++;

}

capture.release();

return 0;

}
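The per-pixel rule buried in the nested loops above can be pulled out into a small function, which makes it easier to read and to test on its own. The following is only a sketch: `PixelSamples` and `classifyAndUpdate` are hypothetical names that do not appear in the original listing, and `std::vector` is swapped in for the `malloc`'d buffers. The decision logic is meant to mirror the listing (count matches within the gray threshold, require at least k matching background samples, then overwrite the oldest ring-buffer slot).

```cpp
#include <cstdlib>
#include <vector>

// Hypothetical helper type: one pixel's ring buffer of past samples.
struct PixelSamples {
    std::vector<unsigned char> gray;  // past gray values
    std::vector<unsigned char> isBG;  // 1 if that sample counted as background
    explicit PixelSamples(int n) : gray(n, 0), isBG(n, 0) {}
};

// Classifies one gray value against the pixel's history, then overwrites slot
// (frameCnt % history size) with the new sample. Returns true for foreground.
bool classifyAndUpdate(PixelSamples& px, int gray, int frameCnt,
                       int k = 3, int distThreshold = 20) {
    int fit = 0;    // history samples close to the current gray value
    int fitBg = 0;  // ...of which were themselves flagged as background
    for (std::size_t n = 0; n < px.gray.size(); ++n) {
        if (std::abs(gray - static_cast<int>(px.gray[n])) < distThreshold) {
            ++fit;
            if (px.isBG[n]) ++fitBg;
        }
    }
    const std::size_t slot = static_cast<std::size_t>(frameCnt) % px.gray.size();
    px.gray[slot] = static_cast<unsigned char>(gray);
    px.isBG[slot] = (fit >= k) ? 1 : 0;
    return fitBg < k;  // fewer than k background matches: foreground
}
```

Feeding a constant gray value of 100 into a fresh `PixelSamples px(14)` with the default k = 3, the first six calls return foreground and the seventh returns background: three frames are needed before any sample is flagged as background, and three more before enough flagged samples exist.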

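By comparison, KNN detects objects entering the frame very quickly and also picks up fairly small objects, but it leaves a pronounced "tail" behind moving objects (especially when k is large, e.g. 5).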
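One possible way to see how k affects the tail in the hand-written rule is a toy simulation of a single pixel. This is an illustration only, not a measurement on the video: `tailFrames` is a hypothetical function, and the gray levels (50 for background, 200 for the object), the 30 warm-up frames, and the dwell time are all made-up values. It counts how many frames the pixel stays flagged as foreground after the object has left.

```cpp
#include <cstdlib>
#include <vector>

// Toy model of one pixel: returns how many consecutive frames stay flagged as
// foreground after an object has left and the background value has returned.
// All gray levels and frame counts here are made-up illustration values.
int tailFrames(int k, int dwellFrames, int historyNum = 14, int distThreshold = 20) {
    std::vector<int> gray(historyNum, 0), isBG(historyNum, 0);
    int frameCnt = 0;

    // Same rule as the hand-written loop: returns true if `value` is foreground.
    auto step = [&](int value) {
        int fit = 0, fitBg = 0;
        for (int n = 0; n < historyNum; ++n) {
            if (std::abs(value - gray[n]) < distThreshold) {
                ++fit;
                if (isBG[n]) ++fitBg;
            }
        }
        const int slot = frameCnt++ % historyNum;  // overwrite the oldest slot
        gray[slot] = value;
        isBG[slot] = (fit >= k) ? 1 : 0;
        return fitBg < k;
    };

    const int background = 50, object = 200;
    for (int i = 0; i < 30; ++i) step(background);       // let the model settle
    for (int i = 0; i < dwellFrames; ++i) step(object);  // object covers the pixel

    int tail = 0;
    while (tail < 100 && step(background)) ++tail;       // count lingering foreground
    return tail;
}
```

Under these assumptions, an object that covers the pixel for 20 frames (longer than the 14-frame history) gives `tailFrames(3, 20) == 6` and `tailFrames(5, 20) == 10`, i.e. the tail lasts 2k frames, while a short dwell such as `tailFrames(3, 5)` gives 0 because enough background samples survive in the history. This is consistent with the observation that a larger k makes the tail worse, though the real video may involve other effects as well.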

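Reference article: "OpenCV3学习(10.4)基于KNN的背景/前景分割算法BackgroundSubtractorKNN算法" (OpenCV3 study notes 10.4: the KNN-based background/foreground segmentation algorithm BackgroundSubtractorKNN)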
