kafka-node on npm: https://www.npmjs.com/package/kafka-node

The code below only consumes messages; there is no producer part.

const kafka = require("kafka-node");

const Client = kafka.KafkaClient;

const Offset = kafka.Offset;

const Consumer = kafka.Consumer;

function toKafka() {

const client = new Client({ kafkaHost: "192.168.100.129:9092" });

const offset = new Offset(client);

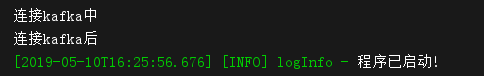

console.log("连接kafka中");

const topics = [

{

topic: "test",

partition: 0,

offset: 0,

},

];

const options = {

// 自动提交配置 (false 不会提交偏移量,每次都从头开始读取)

autoCommit: true,

autoCommitIntervalMs: 5000,

// 如果设置为true,则consumer将从有效负载中的给定偏移量中获取消息

fromOffset: false,

};

const consumer = new Consumer(client, topics, options);

consumer.on("message", function(message) {

console.log(message);

});

consumer.on("error", function(message) {

console.log(message);

console.log("kafka错误");

});

//addTopics(topics, cb, fromOffset) fromOffset:true, 从指定的offset中获取消息,否则,将从主题最后提交的offset中获取消息。

// consumer.addTopics(

// [{ topic: "", offset: 1 }],

// function(err, data) {

// console.log("根据指定的偏移量获取值" + data);

// },

// true,

// );

consumer.on("offsetOutOfRange", function(topic) {

topic.maxNum = 2;

offset.fetch([topic], function(err, offsets) {

const min = Math.min.apply(null, offsets[topic.topic][topic.partition]);

consumer.setOffset(topic.topic, topic.partition, min);

});

});

console.log("连接kafka后");

}

module.exports = toKafka;
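In the `offsetOutOfRange` handler, `Math.min.apply` returns `Infinity` when the partition's offset array is empty, and `offsets[topic.topic][topic.partition]` throws a `TypeError` when the topic is missing from the response; either way `consumer.setOffset` gets nothing usable. Below is a minimal sketch of a guarded version of that lookup, assuming the `offset.fetch` result has the shape shown in the kafka-node README (`{ topicName: { partition: [offsets...] } }`). `pickEarliestOffset` is a hypothetical helper name, not part of kafka-node.

```javascript
// Hypothetical helper (not a kafka-node API): pick the smallest offset for one
// topic/partition from an Offset#fetch result.
// Assumed shape: { test: { "0": [120, 0] } }  (topic -> partition -> offsets)
function pickEarliestOffset(offsets, topic, partition) {
  const byPartition = offsets ? offsets[topic] : undefined;
  const candidates = byPartition ? byPartition[partition] : undefined;
  if (!Array.isArray(candidates) || candidates.length === 0) {
    // Math.min() of an empty list is Infinity; signal "no usable offset" instead
    return null;
  }
  return Math.min(...candidates);
}
```

Inside the `offset.fetch` callback it would replace the `Math.min.apply(...)` line, and `consumer.setOffset` would only be called when the result is not `null`.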

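Other problems will probably come up once this runs in a real production environment; I'll keep recording them here as they do.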