本案例中主要讲述三种不同方式的scrapy框架模拟用户登陆方式。

- 人人网案例-采用传统cookies设置方式进行模拟登陆。

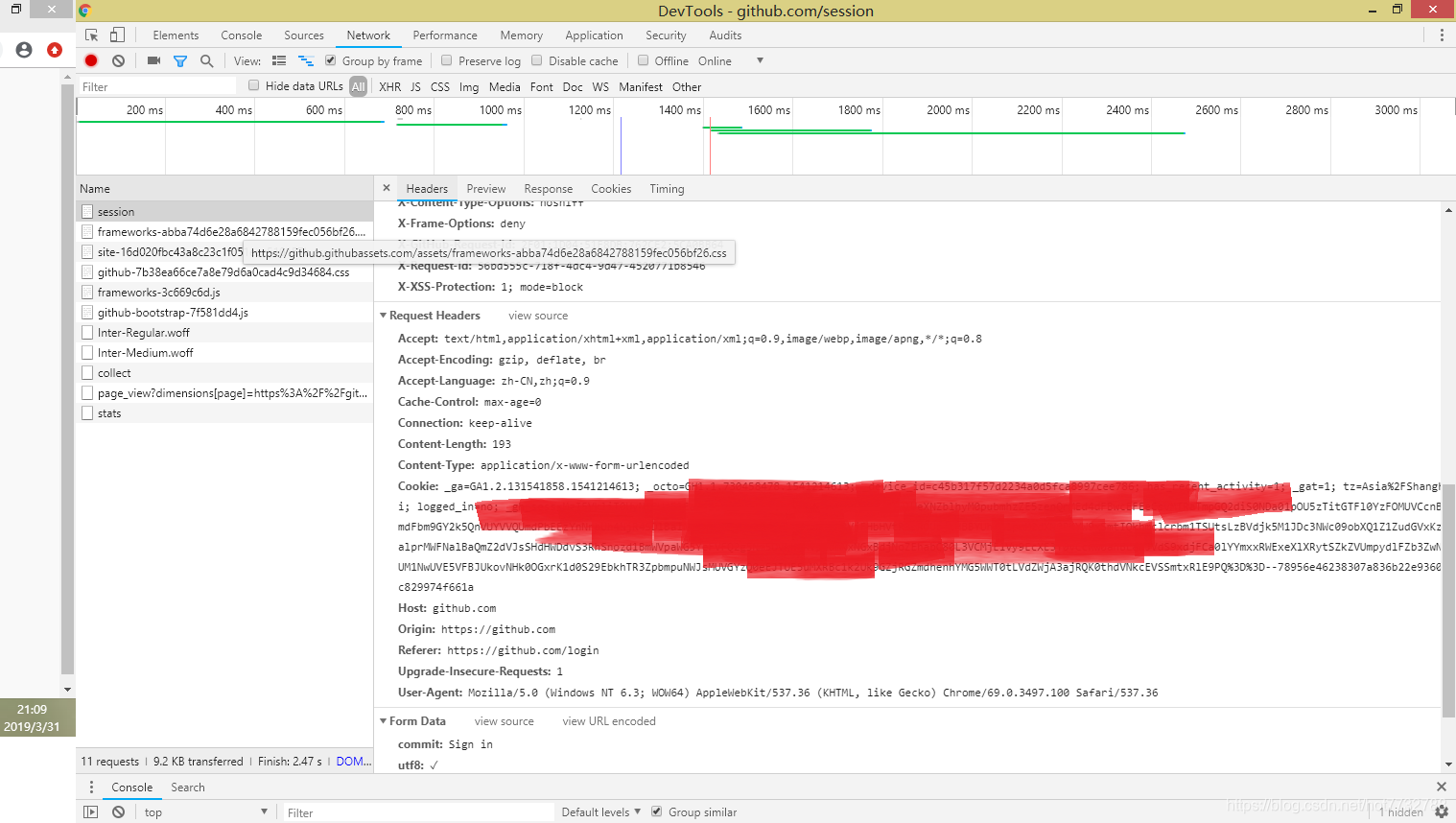

- post表单请求方式(自行定位表单位置)

- post方式调用scrapy.FormRequest.from_response函数进行自动定位表单方式进行模拟登陆.

1.案例框架结构

2.案例模拟登陆界面以及cookies获取界面

3.三种爬虫文件

# -*- coding: utf-8 -*-

import scrapy

import re

class RenrenSpider(scrapy.Spider):

name = 'renren'

allowed_domains = ['renren.com']

start_urls = ['http://www.renren.com/970254374/profile']

def start_requests(self):

cookies = "输入cookies"

cookies = {i.split("=")[0]:i.split("=")[1] for i in cookies.split("; ")}#cookies字符串转化为字典

yield scrapy.Request(

self.start_urls[0],

callback=self.parse,

cookies=cookies

)

def parse(self, response):

print(re.findall("物是人非", response.body.decode()))#如果能够输出用户名说明请求成功了

yield scrapy.Request(

"http://www.renren.com/970254374/profile?v=info_timeline",#以后的请求都会带上cookies

callback=self.parse_detail

)

def parse_detail(self, response):

print(re.findall("物是人非", response.body.decode()))

# -*- coding: utf-8 -*-

import scrapy

import re

class GithubSpider(scrapy.Spider):

name = 'github'

allowed_domains = ['github.com']

start_urls = ['http://github.com/login']

def parse(self, response):

authenticity_token = response.xpath("//input[@name='authenticity_token']/@value").extract_first()

utf8 = response.xpath("//input[@name='utf8']/@value").extract_first()

commit = response.xpath("//input[@name='commit']/@value").extract_first()

post_data = dict(

login="***",

password="***",

authenticity_token=authenticity_token,

utf8=utf8,

commit=commit

)

yield scrapy.FormRequest(

"https://github.com/session",

formdata = post_data,

callback = self.after_login,

)

def after_login(self, response):

with open("login.html", "w", encoding="utf-8") as f:

f.write(response.body.decode())

print(re.findall("songjianguo12", response.body.decode()))

# -*- coding: utf-8 -*-

import scrapy

import re

class Github2Spider(scrapy.Spider):

name = 'github2'

allowed_domains = ['github.com']

start_urls = ['http://github.com/login']

def parse(self, response):

yield scrapy.FormRequest.from_response(

response, #自动的从rsponse中寻找from表单-自动定位表单

formdata = {"login":"***", "password":"***"},

callback = self.after_login,

)

def after_login(self, response):

print(re.findall("songjianguo12", response.body.decode()))

4.settings.py文件

# -*- coding: utf-8 -*-

# Scrapy settings for login project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# https://doc.scrapy.org/en/latest/topics/settings.html

# https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

# https://doc.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = 'login'

SPIDER_MODULES = ['login.spiders']

NEWSPIDER_MODULE = 'login.spiders'

# Crawl responsibly by identifying yourself (and your website) on the user-agent

USER_AGENT = 'Mozilla/5.0 (Windows NT 6.3; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/69.0.3497.100 Safari/537.36'

# USER_AGENTS = [ "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Win64; x64; Trident/5.0; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 2.0.50727; Media Center PC 6.0)", "Mozilla/5.0 (compatible; MSIE 8.0; Windows NT 6.0; Trident/4.0; WOW64; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 1.0.3705; .NET CLR 1.1.4322)", "Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 5.2; .NET CLR 1.1.4322; .NET CLR 2.0.50727; InfoPath.2; .NET CLR 3.0.04506.30)", "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN) AppleWebKit/523.15 (KHTML, like Gecko, Safari/419.3) Arora/0.3 (Change: 287 c9dfb30)", "Mozilla/5.0 (X11; U; Linux; en-US) AppleWebKit/527+ (KHTML, like Gecko, Safari/419.3) Arora/0.6", "Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.2pre) Gecko/20070215 K-Ninja/2.1.1", "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN; rv:1.9) Gecko/20080705 Firefox/3.0 Kapiko/3.0", "Mozilla/5.0 (X11; Linux i686; U;) Gecko/20070322 Kazehakase/0.4.5" ]

# Obey robots.txt rules

ROBOTSTXT_OBEY = False

LOG_LEVEL = "WARNING"

COOKIES_DEBUG = True #查看cookies发送方向

# Configure maximum concurrent requests performed by Scrapy (default: 16)

#CONCURRENT_REQUESTS = 32

# Configure a delay for requests for the same website (default: 0)

# See https://doc.scrapy.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

#DOWNLOAD_DELAY = 3

# The download delay setting will honor only one of:

#CONCURRENT_REQUESTS_PER_DOMAIN = 16

#CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)

#COOKIES_ENABLED = False

# Disable Telnet Console (enabled by default)

#TELNETCONSOLE_ENABLED = False

# Override the default request headers:

#DEFAULT_REQUEST_HEADERS = {

# 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

# 'Accept-Language': 'en',

#}

# Enable or disable spider middlewares

# See https://doc.scrapy.org/en/latest/topics/spider-middleware.html

#SPIDER_MIDDLEWARES = {

# 'login.middlewares.LoginSpiderMiddleware': 543,

#}

# Enable or disable downloader middlewares

# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

#DOWNLOADER_MIDDLEWARES = {

# 'login.middlewares.LoginDownloaderMiddleware': 543,

#}

# Enable or disable extensions

# See https://doc.scrapy.org/en/latest/topics/extensions.html

#EXTENSIONS = {

# 'scrapy.extensions.telnet.TelnetConsole': None,

#}

# Configure item pipelines

# See https://doc.scrapy.org/en/latest/topics/item-pipeline.html

#ITEM_PIPELINES = {

# 'login.pipelines.LoginPipeline': 300,

#}

# Enable and configure the AutoThrottle extension (disabled by default)

# See https://doc.scrapy.org/en/latest/topics/autothrottle.html

#AUTOTHROTTLE_ENABLED = True

# The initial download delay

#AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

#AUTOTHROTTLE_MAX_DELAY = 60

# The average number of requests Scrapy should be sending in parallel to

# each remote server

#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

#AUTOTHROTTLE_DEBUG = False

# Enable and configure HTTP caching (disabled by default)

# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

#HTTPCACHE_ENABLED = True

#HTTPCACHE_EXPIRATION_SECS = 0

#HTTPCACHE_DIR = 'httpcache'

#HTTPCACHE_IGNORE_HTTP_CODES = []

#HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

- 本案例不进行效果展示,请读者自行实验效果,案例完全可行,如遇到问题无法结局可以给我留言,我看到会立即回复。