Reposted from lyl

1. First, add the following properties to the HDFS core-site.xml, for example:

<property>
  <name>ha.zookeeper.quorum</name>
  <value>hadoop001:2181,hadoop012:2181,hadoop011:2181</value>
</property>
<property>
  <name>ha.zookeeper.auth</name>
  <value>@/opt/beh/core/zk-auth.txt</value>
</property>
<property>
  <name>ha.zookeeper.acl</name>
  <value>@/opt/beh/core/zk-acl.txt</value>
</property>
<property>
  <name>ha.zookeeper.parent-znode</name>
  <value>/hadoop-ha</value>
</property>
<property>
  <name>ha.zookeeper.session-timeout.ms</name>
  <value>5000</value>
</property>
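Before restarting anything, it can help to confirm that the edited file really contains all five properties. The following is a minimal sketch and not part of the original procedure: the function name `check_ha_zk_props` is hypothetical, and it only greps for the `<name>` elements, so it validates neither the values nor the XML itself.

```shell
# Hypothetical helper: report which of the ha.zookeeper.* properties used
# above are missing from a given core-site.xml. It only looks for the
# <name> elements; it does not parse XML or check the values.
check_ha_zk_props() {
    conf_file=$1
    missing=0
    for prop in ha.zookeeper.quorum ha.zookeeper.auth ha.zookeeper.acl \
                ha.zookeeper.parent-znode ha.zookeeper.session-timeout.ms; do
        if ! grep -qF "<name>${prop}</name>" "$conf_file"; then
            echo "missing: ${prop}"
            missing=1
        fi
    done
    # Return 0 when every property was found, 1 otherwise.
    return "$missing"
}
```

It would be called with the path to the file, for example `check_ha_zk_props /path/to/core-site.xml && echo "all properties present"`; the path here is a placeholder for wherever the cluster keeps its Hadoop configuration.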

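2. On the zk, zkfc, nn, and jn nodes, create zk-auth.txt and zk-acl.txt at the paths configured above.

zk-auth.txt contains: digest:wkz:123

The digest for zk-acl.txt is generated with DigestAuthenticationProvider. The jar name depends on the installed ZooKeeper version, and the argument is a user:password pair (userwen03:pwd03 is just a sample):

java -cp "${ZOOKEEPER_HOME}/lib/*:${ZOOKEEPER_HOME}/zookeeper-3.4.5-cdh5.2.0.jar" org.apache.zookeeper.server.auth.DigestAuthenticationProvider userwen03:pwd03

For the wkz:123 credentials used here:

java -cp "/opt/beh/core/zookeeper/lib/*:/opt/beh/core/zookeeper/zookeeper-3.4.5-cdh5.1.3.jar" org.apache.zookeeper.server.auth.DigestAuthenticationProvider wkz:123

Take the part of the output after "->" (the user:digest string), prefix it with "digest:", and append the permissions. Here rwcda grants read, write, create, delete, and admin. zk-acl.txt then contains:

digest:wkz:0d/doc2K/fcCANZOkv2jcT7Gf9s=:rwcda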
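As far as I know, the digest printed by DigestAuthenticationProvider is the Base64 encoding of the SHA-1 hash of the `user:password` string, so both files can also be produced without the ZooKeeper jars. The sketch below assumes the `openssl` command-line tool is installed. The function names `zk_digest` and `write_zk_auth_files` are hypothetical, and it is worth comparing the result with the Java command once before relying on it.

```shell
# Print "user:digest", where digest = Base64(SHA-1("user:password")).
# Assumes the openssl command-line tool is available.
zk_digest() {
    user=$1
    password=$2
    digest=$(printf '%s' "${user}:${password}" | openssl dgst -binary -sha1 | openssl base64)
    printf '%s:%s\n' "$user" "$digest"
}

# Write zk-auth.txt and zk-acl.txt into the given directory.
# printf without a trailing \n keeps each file to exactly the one entry.
write_zk_auth_files() {
    dir=$1 user=$2 password=$3
    printf 'digest:%s:%s' "$user" "$password" > "${dir}/zk-auth.txt"
    printf 'digest:%s:rwcda' "$(zk_digest "$user" "$password")" > "${dir}/zk-acl.txt"
}
```

For the setup above, the call would be `write_zk_auth_files /opt/beh/core wkz 123`, repeated on (or copied to) every node that reads these files.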

3. Stop HDFS, re-format the ZKFC state in ZooKeeper with hdfs zkfc -formatZK, then start zkfc: hadoop-daemon.sh start zkfc

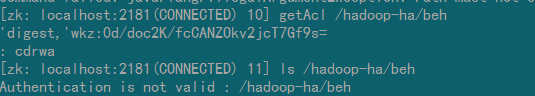

Start HDFS again, connect with zkCli, and inspect the result.
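One way to inspect the result in zkCli is to authenticate with the same digest credentials and then read the ACL of the parent znode. The sketch below only prints the zkCli commands for that. The function name `zk_acl_check_cmds` is hypothetical, and the credentials and znode are the ones from the steps above.

```shell
# Hypothetical helper: print the zkCli commands that authenticate with the
# digest credentials and then show the ACL and children of the HA znode.
zk_acl_check_cmds() {
    user_pass=$1   # e.g. wkz:123, the same pair as in zk-auth.txt
    znode=$2       # e.g. /hadoop-ha, the value of ha.zookeeper.parent-znode
    printf 'addauth digest %s\n' "$user_pass"
    printf 'getAcl %s\n' "$znode"
    printf 'ls %s\n' "$znode"
    printf 'quit\n'
}
```

The printed commands can be typed into a `zkCli.sh -server hadoop001:2181` session. Piping them in with `zk_acl_check_cmds wkz:123 /hadoop-ha | zkCli.sh -server hadoop001:2181` is an assumption about how the installed zkCli handles standard input. If the ACL was applied, `getAcl` should list a digest entry for wkz rather than world:anyone.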

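4. Cluster operations

Starting the cluster

1. Start ZooKeeper: zkServer.sh start. A ZooKeeper ensemble normally has an odd number of servers.

2. Format the HA state in ZooKeeper. Running this on one NameNode is enough: hdfs zkfc -formatZK

3. Start the JournalNode process (run this on the zk nodes): hadoop-daemon.sh start journalnode

4. Format the Hadoop cluster: hdfs namenode -format ns1

5. Start the NameNode that was just formatted: hadoop-daemon.sh start namenode

6. On the other NameNode, run:

hdfs namenode -bootstrapStandby

hadoop-daemon.sh start namenode

7. On a NameNode, run the following to start all remaining processes:

start-dfs.sh

start-yarn.sh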

8. After startup, check whether each NameNode is currently active or standby:

hdfs haadmin -getServiceState nn1

standby

hdfs haadmin -getServiceState nn2

active

9. Start the history server:

sh mr-jobhistory-daemon.sh start historyserver
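The state check in step 8 can be wrapped in a small helper that queries every NameNode ID and confirms that exactly one of them is active. This is a minimal sketch: the function name `check_single_active` is hypothetical, and it relies only on the `hdfs haadmin -getServiceState` command shown above, with nn1 and nn2 as the IDs.

```shell
# Hypothetical helper: print the HA state of each NameNode ID and succeed
# only if exactly one of them reports "active".
check_single_active() {
    active=0
    for nn in "$@"; do
        # A NameNode that cannot be reached is reported as "unknown".
        state=$(hdfs haadmin -getServiceState "$nn" 2>/dev/null) || state=unknown
        echo "${nn}: ${state}"
        if [ "$state" = "active" ]; then
            active=$((active + 1))
        fi
    done
    [ "$active" -eq 1 ]
}
```

A call such as `check_single_active nn1 nn2 || echo "expected exactly one active NameNode"` makes a missing or duplicated active state visible right after startup.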

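Startup order of ZooKeeper and HDFS

1. Start the ZK ensemble by running the following on every machine where zk is deployed:

sh bin/zkServer.sh start

2. Create the znode in ZK that stores the automatic failover data, that is, format the HA state in ZooKeeper. Running this on any one NN is enough:

sh bin/hdfs zkfc -formatZK

3. Start zkfc by running the following on all NN nodes:

sh sbin/hadoop-daemon.sh start zkfc

4. Start the cluster:

sh sbin/start-dfs.sh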