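Copyright notice: this is an original article. When reposting, please cite the source: https://blog.csdn.net/qq_40374604 https://blog.csdn.net/qq_40374604/article/details/85224780

Introduction to Lucene: https://baike.baidu.com/item/Lucene/6753302?fr=aladdin

Extending the stop-word list and adding new words: https://blog.csdn.net/u010357298/article/details/80776902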

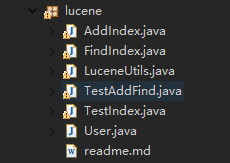

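Directory structure (working through the code yourself is more effective). The code below targets the Lucene 3.0 API (Version.LUCENE_30); summaries, sorting, and highlighting changed somewhat from Lucene 4 onward.

Straight to the code:

1: Create the JavaBean

/** Create the JavaBean */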

package lucene;

import com.alibaba.fastjson.JSON;

public class User {

private String id;

private String userName;

private String sal;

public User() {

}

public User(String id, String userName, String sal) {

this.id = id;

this.userName = userName;

this.sal = sal;

}

public String getId() {

return id;

}

public void setId(String id) {

this.id = id;

}

public String getUserName() {

return userName;

}

public void setUserName(String userName) {

this.userName = userName;

}

public String getSal() {

return sal;

}

public void setSal(String sal) {

this.sal = sal;

}

// @Override

// public String toString() {

// return "User[id='" + id + "',userName='" + userName + "',sal='" + sal

// + "']";

// }

/** Serialize this object to a JSON string */

@Override

public String toString() {

return JSON.toJSONString(this);

}

}

2: Create the index and insert data

/** Create the index and insert data */

package lucene;

import java.io.BufferedReader;

import java.io.File;

import java.io.FileInputStream;

import java.io.InputStreamReader;

import org.apache.lucene.analysis.Analyzer;

import org.apache.lucene.analysis.standard.StandardAnalyzer;

import org.apache.lucene.document.Document;

import org.apache.lucene.document.Field;

import org.apache.lucene.index.IndexWriter;

import org.apache.lucene.store.Directory;

import org.apache.lucene.store.FSDirectory;

import org.apache.lucene.util.Version;

public class AddIndex {

public void createIndexDB(String id, String userName, String sal)

throws Exception {

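// Populate a JavaBean with the data

User user = new User(id, userName, sal);

// Create a Document object (the Lucene class org.apache.lucene.document.Document)

Document document = new Document();

// Copy every JavaBean property into the Document; field names may match the JavaBean property names or differ

/**

* Add a field to the Document. Argument 1: the field name. Argument 2: the field value.

* Argument 3: whether to store the original value (Store.YES stores it, Store.NO does not).

* Argument 4: whether to split the value into terms for the index (ANALYZED splits it, NOT_ANALYZED indexes it as a single term).

*

* */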

document.add(new Field("id", user.getId(), Field.Store.YES,

Field.Index.ANALYZED));

document.add(new Field("userName", user.getUserName(), Field.Store.YES,

Field.Index.ANALYZED));

document.add(new Field("sal", user.getSal(), Field.Store.YES,

Field.Index.ANALYZED));

// Create the IndexWriter

// The index directory is E:/createIndexDB

Directory directory = FSDirectory.open(new File("E:/createIndexDB"));

// Use the standard analyzer to split field values into terms

Analyzer analyzer = new StandardAnalyzer(Version.LUCENE_30);

// LIMITED caps each field at 10,000 terms

IndexWriter.MaxFieldLength maxFieldLength = IndexWriter.MaxFieldLength.LIMITED;

/**

* IndexWriter writes the Document to disk.

*

* Argument 1: Directory d, the directory the index is written to.

* Argument 2: Analyzer a, the algorithm that splits the original field values into terms.

* Argument 3: MaxFieldLength mfl, the maximum number of terms indexed per field.

*

* */

IndexWriter indexWriter = new IndexWriter(directory, analyzer,

maxFieldLength);

// Write the Document to the index through the IndexWriter

indexWriter.addDocument(document);

// Close the IndexWriter

indexWriter.close();

}

public static void main(String[] args) {

String id = "2";

String userName = "李四";

String sal = "运维工程师";

AddIndex testIndex = new AddIndex();

try {

testIndex.createIndexDB(id, userName, sal);

System.out.println("Added: " + id);

} catch (Exception e) {

e.printStackTrace();

System.out.println("createIndexDB error");

}

/** Read the file line by line and add each line to the index */

try {

String classPath = System.getProperties().getProperty("user.dir");

String sep = System.getProperties().getProperty("file.separator");

String pathName = classPath + sep + "date" + sep + "testtitle.txt";

File file = new File(pathName);

InputStreamReader reader = new InputStreamReader(

new FileInputStream(file), "gbk");

BufferedReader br = new BufferedReader(reader);

String stringLine;

int count = 0;

while ((stringLine = br.readLine()) != null) {

count += 1;

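// Use at most the first three characters as userName; a shorter line would otherwise make substring throw

String prefix = stringLine.substring(0, Math.min(3, stringLine.length()));

testIndex.createIndexDB(String.valueOf(count), prefix, stringLine);

System.out.println(stringLine);

}

br.close();

reader.close();

} catch (Exception e) {

// Print the cause instead of swallowing it

e.printStackTrace();

System.out.println("createIndexDB error");

}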

}

}
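The comments in this section distinguish between the original record table (what `Field.Store.YES` keeps) and the vocabulary table (what `Field.Index.ANALYZED` builds). As a rough illustration of that idea, and not of Lucene's actual internals, here is a minimal plain-Java sketch. The class `ToyIndex` is hypothetical, its tokenizer simply assumes one term per character (a simplification of how Chinese text ends up split into small terms), and the AND semantics in `search` is a choice made for this sketch only.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeSet;

/** Hypothetical toy index; it illustrates the idea only and is not Lucene code. */
final class ToyIndex {
    // "Original record table": document number -> stored text.
    private final List<String> stored = new ArrayList<>();
    // "Vocabulary table": term -> sorted document numbers containing it.
    private final Map<String, TreeSet<Integer>> postings = new LinkedHashMap<>();

    /** Assumed tokenizer: one term per non-whitespace character. */
    static List<String> tokenize(String text) {
        List<String> terms = new ArrayList<>();
        for (int i = 0; i < text.length(); ) {
            int cp = text.codePointAt(i);
            if (!Character.isWhitespace(cp)) {
                terms.add(new String(Character.toChars(cp)));
            }
            i += Character.charCount(cp);
        }
        return terms;
    }

    /** Stores the text and indexes its terms; returns the document number. */
    int add(String text) {
        int docNo = stored.size();
        stored.add(text);
        for (String term : tokenize(text)) {
            postings.computeIfAbsent(term, k -> new TreeSet<>()).add(docNo);
        }
        return docNo;
    }

    /** Returns the documents that contain every term of the query. */
    List<Integer> search(String query) {
        TreeSet<Integer> result = null;
        for (String term : tokenize(query)) {
            TreeSet<Integer> docs = postings.getOrDefault(term, new TreeSet<>());
            if (result == null) {
                result = new TreeSet<>(docs);
            } else {
                result.retainAll(docs);
            }
        }
        return result == null ? new ArrayList<>() : new ArrayList<>(result);
    }

    /** Looks up the stored original text by document number. */
    String get(int docNo) {
        return stored.get(docNo);
    }

    public static void main(String[] args) {
        ToyIndex index = new ToyIndex();
        index.add("运维工程师");
        index.add("中国工程师");
        for (int docNo : index.search("中国")) {
            System.out.println(docNo + ": " + index.get(docNo));
        }
    }
}
```

The search consults only the vocabulary table; the stored text is read afterwards by document number, which mirrors the two-step lookup used in the search code of the next section.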

3: Search

package lucene;

import java.io.File;

import java.net.URLDecoder;

import java.net.URLEncoder;

import org.apache.lucene.analysis.Analyzer;

import org.apache.lucene.analysis.standard.StandardAnalyzer;

import org.apache.lucene.document.Document;

import org.apache.lucene.queryParser.QueryParser;

import org.apache.lucene.search.IndexSearcher;

import org.apache.lucene.search.Query;

import org.apache.lucene.search.ScoreDoc;

import org.apache.lucene.search.TopDocs;

import org.apache.lucene.store.Directory;

import org.apache.lucene.store.FSDirectory;

import org.apache.lucene.util.Version;

import org.junit.Test;

public class FindIndex {

@Test

public void findIndexDB() throws Exception {

/**

* IndexSearcher(Directory path): searches the index stored in the given directory

*

* */

Directory directory = FSDirectory.open(new File("E:/createIndexDB"));

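// Create the IndexSearcher

IndexSearcher indexSearcher = new IndexSearcher(directory);

// Create the QueryParser

/**

* Argument 1: Version matchVersion, the version (the same one used when indexing). Argument 2: String f, the field to search.

* Argument 3: Analyzer a, the analyzer used to split the query into terms.

* */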

Analyzer analyzer = new StandardAnalyzer(Version.LUCENE_30);

QueryParser queryParser = new QueryParser(Version.LUCENE_30,

"sal", analyzer);

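// The keyword to search for

String keyWords = "中国";

// Wrap the keyword in a Query object

Query query = queryParser.parse(keyWords);

// Fetch the top 100 matching documents from the index; if fewer than 100 match, all of them are returned

TopDocs topDocs = indexSearcher.search(query, 100);

// Iterate over the document numbers of the matches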

for (int i = 0; i < topDocs.scoreDocs.length; i++) {

ScoreDoc scoreDoc = topDocs.scoreDocs[i];

int no = scoreDoc.doc;

// Load the Document with this number from the index

Document document = indexSearcher.doc(no);

// Read the stored fields from the Document and wrap them in a JavaBean again

String id = document.get("id");

String userName = document.get("userName");

String sal = document.get("sal");

User user = new User(id, userName, sal);

System.out.println(user);

}

indexSearcher.close();

}

}
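The search above asks for the top 100 hits and receives fewer when fewer documents match. The sketch below shows one common way to get that behavior, a bounded min-heap, in plain Java. It is only an illustration: `TopHits` and `Hit` are hypothetical names, and nothing here is taken from Lucene's own implementation.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;
import java.util.PriorityQueue;

/** Hypothetical sketch of "top N hits" selection; not Lucene's implementation. */
final class TopHits {
    /** A document number paired with its relevance score. */
    static final class Hit {
        final int doc;
        final float score;

        Hit(int doc, float score) {
            this.doc = doc;
            this.score = score;
        }
    }

    /** Returns at most n hits, highest score first. */
    static List<Hit> top(List<Hit> hits, int n) {
        if (n <= 0) {
            return new ArrayList<>();
        }
        Comparator<Hit> byScore = Comparator.comparingDouble((Hit h) -> h.score);
        // Min-heap on score: the weakest retained hit sits at the head.
        PriorityQueue<Hit> heap = new PriorityQueue<>(byScore);
        for (Hit hit : hits) {
            heap.offer(hit);
            if (heap.size() > n) {
                heap.poll(); // drop the current weakest hit
            }
        }
        List<Hit> result = new ArrayList<>(heap);
        result.sort(byScore.reversed());
        return result;
    }

    public static void main(String[] args) {
        List<Hit> hits = Arrays.asList(new Hit(0, 0.2f), new Hit(1, 0.9f), new Hit(2, 0.5f));
        for (Hit hit : top(hits, 2)) {
            System.out.println(hit.doc + " " + hit.score);
        }
    }
}
```

Asking for 100 hits from these three candidates simply returns all three, which matches the "fewer than 100" case described in the comment.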

4: Delete

package lucene;

import java.io.File;

import java.io.IOException;

import java.io.Reader;

import org.apache.lucene.analysis.Analyzer;

import org.apache.lucene.analysis.TokenStream;

import org.apache.lucene.analysis.standard.StandardAnalyzer;

import org.apache.lucene.index.IndexWriter;

import org.apache.lucene.index.Term;

import org.apache.lucene.store.FSDirectory;

import org.apache.lucene.util.Version;

import org.junit.Test;

public class TestIndex {

/** Delete documents from the index */

@Test

public void TestIndexDel() throws IOException {

Analyzer analyzer = new StandardAnalyzer(Version.LUCENE_30);

FSDirectory directory = FSDirectory.open(new File("E:/createIndexDB"));

// Note: Lucene 3.1 and later configure the writer through IndexWriterConfig instead of MaxFieldLength

IndexWriter.MaxFieldLength maxFieldLength = IndexWriter.MaxFieldLength.LIMITED;

IndexWriter indexWriter = new IndexWriter(directory, analyzer,

maxFieldLength);

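// Delete every document in the index

indexWriter.deleteAll();

// Delete by exact term. This is redundant after deleteAll() and is shown only to illustrate the call.

// A Term matches only a term exactly as it was indexed, so a value that the analyzer split may not match as a whole.

indexWriter.deleteDocuments(new Term("userName", "李四"));

indexWriter.commit();

System.out.println("good -- all documents deleted from the index");

indexWriter.close();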

}

}
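Deleting by `Term` compares against terms exactly as they were written to the index. The plain-Java sketch below illustrates why that matters for a field indexed as ANALYZED. It assumes the analyzer split "李四" into the two terms "李" and "四"; `TermDeleteDemo` is a hypothetical class, and each document is modeled only as the set of its indexed terms.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

/** Hypothetical illustration of exact-term deletion; not Lucene code. */
final class TermDeleteDemo {
    /** Each document is represented only by the set of terms indexed for it. */
    private final List<Set<String>> docs = new ArrayList<>();

    void addDocument(String... indexedTerms) {
        docs.add(new HashSet<>(Arrays.asList(indexedTerms)));
    }

    /** Removes documents that contain exactly this term; returns how many were removed. */
    int deleteByTerm(String term) {
        int before = docs.size();
        docs.removeIf(terms -> terms.contains(term));
        return before - docs.size();
    }

    int size() {
        return docs.size();
    }

    public static void main(String[] args) {
        TermDeleteDemo analyzed = new TermDeleteDemo();
        analyzed.addDocument("李", "四"); // value assumed to be split into per-character terms
        System.out.println(analyzed.deleteByTerm("李四")); // the whole value is not a term

        TermDeleteDemo notAnalyzed = new TermDeleteDemo();
        notAnalyzed.addDocument("李四"); // value kept as a single term
        System.out.println(notAnalyzed.deleteByTerm("李四"));
    }
}
```

If the same thing happens in a real index, one option is to index identifying fields such as `id` with `Field.Index.NOT_ANALYZED` and delete by that field.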

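5: Optimization

// Search across multiple fields (results are sorted by relevance)

QueryParser queryParser1 = new MultiFieldQueryParser(

Version.LUCENE_30, new String[] { "userName", "sal" },

LuceneUtils.getAnalyzer());

The code below wraps Lucene in a utility class (reposted from another source).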

package lucene;

import org.apache.commons.beanutils.BeanUtils;

import org.apache.lucene.analysis.Analyzer;

import org.apache.lucene.analysis.standard.StandardAnalyzer;

import org.apache.lucene.document.Document;

import org.apache.lucene.index.IndexWriter;

import org.apache.lucene.store.Directory;

import org.apache.lucene.store.FSDirectory;

import org.apache.lucene.util.Version;

import org.junit.Test;

import java.io.File;

import java.lang.reflect.Field;

import java.lang.reflect.Method;

/**

* Utility class that shares one Directory, Analyzer, and MaxFieldLength (singleton-style)

* */

public class LuceneUtils {

private static Directory directory;

private static Analyzer analyzer;

private static IndexWriter.MaxFieldLength maxFieldLength;

private LuceneUtils() {

}

static {

try {

directory = FSDirectory.open(new File("E:/createIndexDB"));

analyzer = new StandardAnalyzer(Version.LUCENE_30);

maxFieldLength = IndexWriter.MaxFieldLength.LIMITED;

} catch (Exception e) {

e.printStackTrace();

}

}

public static Directory getDirectory() {

return directory;

}

public static Analyzer getAnalyzer() {

return analyzer;

}

public static IndexWriter.MaxFieldLength getMaxFieldLength() {

return maxFieldLength;

}

/**

* @param object

* the JavaBean to convert

* @return the resulting Document

*/

public static Document javaBean2Document(Object object) {

try {

Document document = new Document();

// Get the Class object of the JavaBean

Class<?> aClass = object.getClass();

// Get all declared fields, including private ones (getFields() returns only public fields)

Field[] fields = aClass.getDeclaredFields();

// Process each field by name

for (Field field : fields) {

String name = field.getName();

// Build the getter name, for example userName -> getUserName

String method = "get" + name.substring(0, 1).toUpperCase()

+ name.substring(1);

// Look up the getter (it takes no parameters) and invoke it

Method aClassMethod = aClass.getDeclaredMethod(method);

Object raw = aClassMethod.invoke(object);

// Skip unset properties; calling toString() on a null value would throw

if (raw == null) {

continue;

}

String value = raw.toString();

System.out.println(value);

// Add the value to the Document

document.add(new org.apache.lucene.document.Field(name, value,

org.apache.lucene.document.Field.Store.YES,

org.apache.lucene.document.Field.Index.ANALYZED));

}

return document;

} catch (Exception e) {

e.printStackTrace();

}

return null;

}

/**

* @param aClass

* the JavaBean type to create, supplied by the caller

* @param document

* the Document to read from

* @return the populated JavaBean

*/

public static Object Document2JavaBean(Document document, Class<?> aClass) {

try {

// Create an instance of the JavaBean

Object obj = aClass.newInstance();

// Get all declared fields of the JavaBean

Field[] fields = aClass.getDeclaredFields();

for (Field field : fields) {

// Allow access to private fields

field.setAccessible(true);

String name = field.getName();

String value = document.get(name);

// Use BeanUtils to set the property on the bean

BeanUtils.setProperty(obj, name, value);

}

return obj;

} catch (Exception e) {

e.printStackTrace();

}

return null;

}

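// Quick manual check. A main method is used because JUnit cannot instantiate a class whose only constructor is private.

public static void main(String[] args) {

User user = new User("2", "李四", "运维工程师");

System.out.println(LuceneUtils.javaBean2Document(user));

}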

}
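The reflection round trip in `javaBean2Document` and `Document2JavaBean` can be tried without Lucene on the classpath. The sketch below is a hypothetical stand-in: a `Map<String, String>` plays the role of `Document`, `BeanMapper` and `Person` are names invented for this example, and fields are read directly instead of through getters, which is a deliberate simplification.

```java
import java.lang.reflect.Constructor;
import java.lang.reflect.Field;
import java.lang.reflect.Modifier;
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical stand-in for the bean/Document conversion; a Map replaces Document. */
final class BeanMapper {

    /** Hypothetical bean used only for this example. */
    static final class Person {
        String id;
        String userName;

        Person() {
        }

        Person(String id, String userName) {
            this.id = id;
            this.userName = userName;
        }
    }

    private static boolean isBeanField(Field field) {
        return !Modifier.isStatic(field.getModifiers()) && !field.isSynthetic();
    }

    /** Copies every non-null field into a name -> value map. */
    static Map<String, String> toMap(Object bean) {
        Map<String, String> map = new LinkedHashMap<>();
        try {
            for (Field field : bean.getClass().getDeclaredFields()) {
                if (!isBeanField(field)) {
                    continue;
                }
                field.setAccessible(true);
                Object value = field.get(bean);
                if (value != null) {
                    map.put(field.getName(), value.toString());
                }
            }
        } catch (IllegalAccessException e) {
            throw new IllegalStateException(e);
        }
        return map;
    }

    /** Creates a bean of the given type and fills its String fields from the map. */
    static <T> T fromMap(Map<String, String> map, Class<T> type) {
        try {
            Constructor<T> constructor = type.getDeclaredConstructor();
            constructor.setAccessible(true);
            T bean = constructor.newInstance();
            for (Field field : type.getDeclaredFields()) {
                if (!isBeanField(field) || field.getType() != String.class) {
                    continue;
                }
                String value = map.get(field.getName());
                if (value != null) {
                    field.setAccessible(true);
                    field.set(bean, value);
                }
            }
            return bean;
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        Map<String, String> map = toMap(new Person("2", "李四"));
        Person copy = fromMap(map, Person.class);
        System.out.println(copy.id + " " + copy.userName);
    }
}
```

Skipping null values means a freshly constructed bean converts to an empty map instead of failing, which is the case a bean created with the no-argument constructor runs into.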