Copyright notice: The road ahead is long and far; I shall search high and low. https://blog.csdn.net/Happy_Sunshine_Boy/article/details/85049006

Java code

    package com.test.Job;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    import java.io.IOException;

    // Word-count job: counts how often each space-separated token occurs in the input.
    public class jobSubmit {

        public static void main(String[] args) throws Exception {
            Configuration entries = new Configuration();
            Job job = Job.getInstance(entries, "JobSubmit");
            job.setJarByClass(jobSubmit.class);

            // args[0]: input path
            FileInputFormat.setInputPaths(job, new Path(args[0]));

            job.setMapperClass(MyMapper.class);
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(LongWritable.class);

            job.setReducerClass(MyReduce.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(LongWritable.class);

            // args[1]: output path. The job fails if it already exists, so delete it first.
            Path outFilePath = new Path(args[1]);
            // Resolve the file system from the path itself so a full hdfs:// URI works
            // even when it differs from the default file system in the configuration.
            FileSystem fileSystem = outFilePath.getFileSystem(entries);
            if (fileSystem.exists(outFilePath)) {
                fileSystem.delete(outFilePath, true);
            }
            FileOutputFormat.setOutputPath(job, outFilePath);

            // Submit the job, print progress, and exit with 0 on success or 1 on failure.
            boolean b = job.waitForCompletion(true);
            System.exit(b ? 0 : 1);
        }

        public static class MyMapper extends Mapper<LongWritable, Text, Text, LongWritable> {

            private final LongWritable one = new LongWritable(1);
            // Reused for every token to avoid allocating a new Text per record.
            private final Text word = new Text();

            @Override
            protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
                // Emit (token, 1) for every space-separated token in the line.
                String[] split = value.toString().split(" ");
                for (String sp : split) {
                    if (sp.isEmpty()) {
                        continue; // consecutive spaces produce empty tokens
                    }
                    word.set(sp);
                    context.write(word, one);
                }
            }
        }

        public static class MyReduce extends Reducer<Text, LongWritable, Text, LongWritable> {

            @Override
            protected void reduce(Text key, Iterable<LongWritable> values, Context context) throws IOException, InterruptedException {
                // Use long, not int, so the sum matches LongWritable and cannot overflow early.
                long sum = 0;
                for (LongWritable writable : values) {
                    sum += writable.get();
                }
                context.write(key, new LongWritable(sum));
            }
        }
    }
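
To see what the mapper and reducer compute without a cluster, the same logic can be approximated in plain Java. This is only a rough local sketch: `LocalWordCount` and `countWords` are hypothetical names that do not appear in the job, no Hadoop classes are involved, and skipping empty tokens is a choice made for this sketch.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical local stand-in for the word-count job; it uses no Hadoop classes.
final class LocalWordCount {

    // Counts space-separated tokens across all lines.
    static Map<String, Long> countWords(List<String> lines) {
        // TreeMap keeps the keys sorted, similar to the sorted keys a reducer receives.
        Map<String, Long> counts = new TreeMap<>();
        for (String line : lines) {
            // "Map" step: split each line on a single space.
            for (String token : line.split(" ")) {
                if (token.isEmpty()) {
                    continue; // consecutive spaces produce empty tokens
                }
                // "Reduce" step: add 1 to the running total for this token.
                counts.merge(token, 1L, Long::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // Made-up sample input, not taken from hello.txt.
        List<String> lines = Arrays.asList("hello world", "hello hadoop");
        countWords(lines).forEach((word, count) -> System.out.println(word + "\t" + count));
    }
}
```

On a real cluster the grouping by key happens in the shuffle between the map and reduce phases; here a single map plays both roles.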

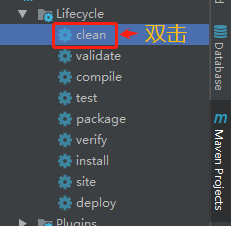

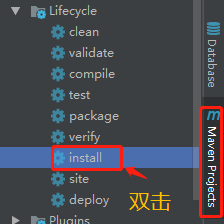

Build the Java code into a JAR (in IDEA)

- clean

- install

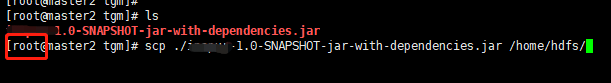

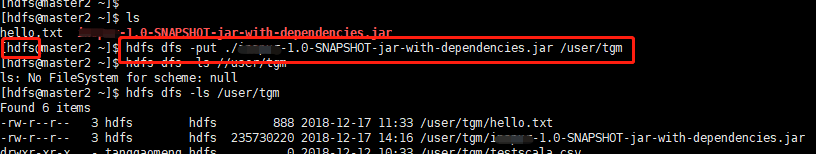

Upload to HDFS

- Upload the JAR to the Linux server

rz

- Copy it to the /home/hdfs directory

- Upload to HDFS

- Run the program with the hadoop jar command

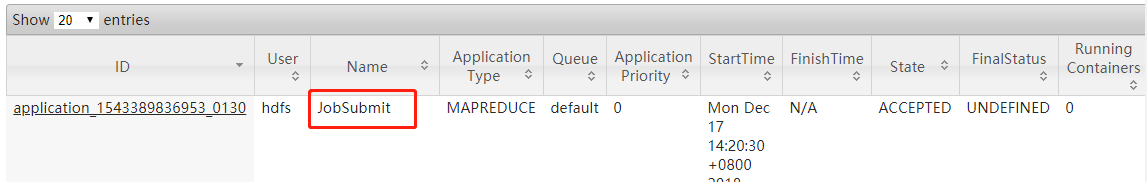

hadoop jar

/home/hdfs/XXXX-1.0-SNAPSHOT-jar-with-dependencies.jar (path to the local JAR)

com.test.Job.jobSubmit (class containing the main function)

hdfs://192.168.1.37:8020/user/tgm/hello.txt (input file)

hdfs://192.168.1.37:8020/user/tgm/hello_result.txt (output path for the results)
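
Assuming the JAR's manifest does not declare its own main class, the two paths that follow the class name reach `main` as `args[0]` and `args[1]`. The job reads them without checking, so a missing path would surface as an `ArrayIndexOutOfBoundsException`. A small guard could look like the sketch below; `JobArgs` and `parse` are hypothetical names and not part of the original job.

```java
// Hypothetical helper that validates the two path arguments before a job is configured.
final class JobArgs {
    final String inputPath;
    final String outputPath;

    private JobArgs(String inputPath, String outputPath) {
        this.inputPath = inputPath;
        this.outputPath = outputPath;
    }

    // Expects exactly two arguments: the input path and the output path.
    static JobArgs parse(String[] args) {
        if (args.length != 2) {
            throw new IllegalArgumentException(
                "Usage: hadoop jar <jar> com.test.Job.jobSubmit <input path> <output path>");
        }
        return new JobArgs(args[0], args[1]);
    }

    public static void main(String[] args) {
        // The same two paths that the command passes after the class name.
        JobArgs parsed = parse(new String[] {
            "hdfs://192.168.1.37:8020/user/tgm/hello.txt",
            "hdfs://192.168.1.37:8020/user/tgm/hello_result.txt"
        });
        System.out.println("input  = " + parsed.inputPath);
        System.out.println("output = " + parsed.outputPath);
    }
}
```
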

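- When the program finishes, check the result: download hello_result.txt from HDFS and view its contents. (MapReduce creates the output path as a directory; the counts are in the part-r-* files inside it.)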
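
The job does not set an output format, so the default `TextOutputFormat` applies and each result line has the form key, tab, value. Once the result is downloaded, it could be read back with something like the following sketch. `ResultParser` is a hypothetical name, and the sample lines are made up for illustration rather than taken from a real run.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical reader for downloaded result lines of the form word<TAB>count.
final class ResultParser {

    static Map<String, Long> parse(List<String> lines) {
        // LinkedHashMap preserves the order in which the lines appear.
        Map<String, Long> counts = new LinkedHashMap<>();
        for (String line : lines) {
            int tab = line.lastIndexOf('\t');
            if (tab < 0) {
                continue; // skip anything that is not key<TAB>value
            }
            String word = line.substring(0, tab);
            long count = Long.parseLong(line.substring(tab + 1).trim());
            counts.put(word, count);
        }
        return counts;
    }

    public static void main(String[] args) {
        // Made-up sample lines in the key<TAB>value layout.
        List<String> sample = Arrays.asList("hadoop\t1", "hello\t2", "world\t1");
        parse(sample).forEach((word, count) -> System.out.println(word + " -> " + count));
    }
}
```
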