Copyright notice: this is an original article by the blog author and may not be reproduced without the author's permission. https://blog.csdn.net/qq_38038143/article/details/84203344

1. Download

http://apache.fayea.com/zookeeper/

Pick the release in the stable directory, download it, and upload it to the Linux machine.

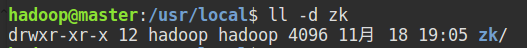

Extract the archive into /usr/local/ (watch out for permissions), rename the extracted directory to zk, and make the login user its owner:
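The exact commands for this step are not preserved in the text, so the following is only a minimal sketch of one way to do it. install_zk is a hypothetical helper, the archive name in the usage comment is a placeholder, and the sketch assumes the tarball contains a single top-level directory.

```shell
#!/usr/bin/env bash
# Sketch: unpack a ZooKeeper tarball under a prefix and rename it to "zk".
# install_zk is a hypothetical helper, not part of ZooKeeper.
install_zk() {
    local archive="$1" prefix="$2" owner="${3:-$(id -un)}"
    local top
    # Name of the top-level directory inside the archive.
    top="$(tar -tzf "$archive" | awk -F/ 'NR == 1 { print $1 }')" || return 1
    [ -n "$top" ] || return 1
    tar -xzf "$archive" -C "$prefix" || return 1
    mv "$prefix/$top" "$prefix/zk" || return 1
    # Hand the whole tree to the login user.
    chown -R "$owner" "$prefix/zk"
}

# Usage (writing to /usr/local usually needs root):
#   install_zk zookeeper-<version>.tar.gz /usr/local hadoop
```

Passing the owner explicitly matters when the function runs as root, because the default would otherwise resolve to root.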

Append the following to the end of ~/.bashrc:

export ZOOKEEPER_HOME=/usr/local/zk

export PATH=$PATH:$ZOOKEEPER_HOME/bin/

Run source ~/.bashrc; the ZooKeeper commands can then be run from any directory.
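If the setup is scripted, the two export lines can be appended so that re-running the script does not duplicate them. This is a sketch only; add_zk_env is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: append the ZooKeeper environment lines to an rc file exactly once.
# add_zk_env is a hypothetical helper; the rc file defaults to ~/.bashrc.
add_zk_env() {
    local rc="${1:-$HOME/.bashrc}"
    # Skip if ZOOKEEPER_HOME is already exported in this file.
    if ! grep -q '^export ZOOKEEPER_HOME=' "$rc" 2>/dev/null; then
        {
            echo 'export ZOOKEEPER_HOME=/usr/local/zk'
            echo 'export PATH=$PATH:$ZOOKEEPER_HOME/bin/'
        } >> "$rc"
    fi
}

# Usage:
#   add_zk_env && source ~/.bashrc && command -v zkServer.sh
```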

2. Configuration

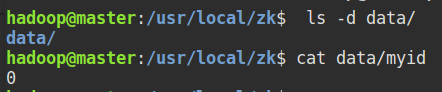

Go to the /usr/local/zk/ directory:

- Create a data directory and add a file named myid whose content is 0:

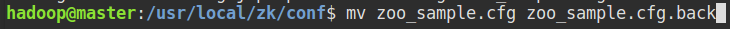
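In case the screenshot is unavailable, the step above amounts to something like the following sketch. init_myid is a hypothetical helper; on the master node the id is 0.

```shell
#!/usr/bin/env bash
# Sketch: create the data directory and write this node's id into myid.
# init_myid is a hypothetical helper, not part of ZooKeeper.
init_myid() {
    local zk_home="$1" id="$2"
    mkdir -p "$zk_home/data" || return 1
    # myid holds just the server number, matching server.N in zoo.cfg.
    printf '%s\n' "$id" > "$zk_home/data/myid"
}

# Usage on the master node:
#   init_myid /usr/local/zk 0
```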

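Go to the /usr/local/zk/conf/ directory:

- Delete zoo_sample.cfg (or rename it).

- Create the configuration file zoo.cfg with the content below. The number and names of the server entries at the end depend on your own cluster (four nodes are configured here):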

hadoop@master:/usr/local/zk/conf$ cat zoo.cfg

# The number of milliseconds of each tick

tickTime = 2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

dataDir=/usr/local/zk/data

# the port at which the clients will connect

clientPort=2183

# the location of the log file

dataLogDir=/usr/local/zk/log

server.0=master:2288:3388

server.1=slave4:2288:3388

server.2=slave5:2288:3388

server.3=slave7:2288:3388
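To make the numbers in this file concrete: initLimit and syncLimit are counted in ticks, so they translate into milliseconds through tickTime, and in each server.N=host:port1:port2 line the first port is used by followers to connect to the leader and the second for leader election. The sketch below only does the arithmetic for the values above, plus the majority size for a four-server ensemble; the variable names are illustrative.

```shell
#!/usr/bin/env bash
# Sketch: derive timeouts and quorum size from the zoo.cfg values above.
tick_ms=2000      # tickTime
init_limit=10     # initLimit, in ticks
sync_limit=5      # syncLimit, in ticks
servers=4         # number of server.N lines

init_ms=$(( tick_ms * init_limit ))   # time allowed for initial sync
sync_ms=$(( tick_ms * sync_limit ))   # time allowed between request and ack
quorum=$(( servers / 2 + 1 ))         # majority needed to serve requests
tolerated=$(( servers - quorum ))     # servers that may fail

echo "initLimit = ${init_ms} ms, syncLimit = ${sync_ms} ms"
echo "quorum = ${quorum} of ${servers}, tolerated failures = ${tolerated}"
```

With four servers the ensemble tolerates one failure, the same as with three, which is why odd ensemble sizes are the common choice.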

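- Copy the zk directory to the other nodes:

Note: this may run into permission problems. If it does, copy zk to /home/hadoop/ on the target node first and move it to /usr/local/ from there.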

scp -r /usr/local/zk slave4:/usr/local/

scp -r /usr/local/zk slave5:/usr/local/

scp -r /usr/local/zk slave7:/usr/local/
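With more nodes, the same copy can be written as a loop. This is a sketch; copy_zk and the DRY_RUN switch are hypothetical, and the host names are the ones from zoo.cfg.

```shell
#!/usr/bin/env bash
# Sketch: copy /usr/local/zk to each host given as an argument.
# copy_zk and DRY_RUN are hypothetical; DRY_RUN=1 only prints the commands.
copy_zk() {
    local host
    for host in "$@"; do
        if [ "${DRY_RUN:-0}" = 1 ]; then
            echo "scp -r /usr/local/zk $host:/usr/local/"
        else
            scp -r /usr/local/zk "$host:/usr/local/" || return 1
        fi
    done
}

# Usage:
#   DRY_RUN=1 copy_zk slave4 slave5 slave7   # preview
#   copy_zk slave4 slave5 slave7             # actually copy
```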

On each node, change the content of zk/data/myid to the number N from that node's server.N line at the end of zoo.cfg. For the three slave nodes here:

[hadoop@slave4 ~]$ cat /usr/local/zk/data/myid

1

[hadoop@slave5 ~]$ cat /usr/local/zk/data/myid

2

[hadoop@slave7 ~]$ cat /usr/local/zk/data/myid

3
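Since the id must agree with zoo.cfg, it can also be looked up from the file instead of typed by hand. The sketch below assumes the host name passed in is spelled exactly as in the server.N lines; myid_for_host is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: print the N of the "server.N=host:..." line that names a host.
# myid_for_host is a hypothetical helper, not part of ZooKeeper.
myid_for_host() {
    local cfg="$1" host="$2"
    awk -v h="$host" '
        /^server\.[0-9]+=/ {
            split($0, kv, "=")      # kv[1] = "server.N", kv[2] = "host:p1:p2"
            split(kv[1], k, ".")    # k[2]  = N
            split(kv[2], v, ":")    # v[1]  = host
            if (v[1] == h) print k[2]
        }' "$cfg"
}

# Usage on a node (check the output before redirecting it into myid):
#   myid_for_host /usr/local/zk/conf/zoo.cfg "$(hostname)"
```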

3. Startup

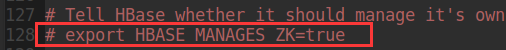

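- Disable the ZooKeeper instance bundled with HBase

Comment out the following line in hbase-env.sh:

- Start

- Start HBase on the cluster

- Start ZooKeeper on every configured node

Run the command below on each node. If the PATH setup from step 1 did not take effect, use the full path /usr/local/zk/bin/zkServer.sh start instead: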

zkServer.sh start

Stop:

zkServer.sh stop

Check with jps:

Configuration succeeded!
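The jps output itself is not preserved here. A standalone ZooKeeper server appears in jps as QuorumPeerMain, whereas the instance managed by HBase appears as HQuorumPeer, so a scripted check might look like this sketch. has_quorum_peer is a hypothetical helper that reads jps output from standard input.

```shell
#!/usr/bin/env bash
# Sketch: succeed if the jps listing on stdin contains a QuorumPeerMain process.
# has_quorum_peer is a hypothetical helper, not part of ZooKeeper.
has_quorum_peer() {
    awk '$2 == "QuorumPeerMain" { found = 1 } END { exit !found }'
}

# Usage:
#   jps | has_quorum_peer && echo "ZooKeeper is running on this node"
```

zkServer.sh status can be run on each node as well; it reports whether the node is currently a leader or a follower.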

4. Main shell operations

- Connect to the service:

- If the client appears to hang after printing WatchedEvent state:SyncConnected type:None path:null, press Enter:

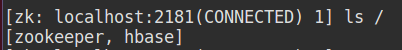
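One thing to watch when connecting: the zoo.cfg shown earlier sets clientPort=2183, while the sessions in this post connect to localhost:2181, so the port given to zkCli.sh should be the one your own server actually listens on. A small sketch for reading it from the config file follows; zk_client_port is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: print the clientPort value from a zoo.cfg file.
# zk_client_port is a hypothetical helper, not part of ZooKeeper.
zk_client_port() {
    # Accepts both "clientPort=2183" and "clientPort = 2183".
    sed -n 's/^[[:space:]]*clientPort[[:space:]]*=[[:space:]]*\([0-9][0-9]*\).*/\1/p' "$1"
}

# Usage:
#   zkCli.sh -server "localhost:$(zk_client_port /usr/local/zk/conf/zoo.cfg)"
```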

- List what ZooKeeper currently contains:

- Create a znode together with its data:

[zk: localhost:2181(CONNECTED) 1] create /zk myData

Created /zk

[zk: localhost:2181(CONNECTED) 2] ls /

[zk, zookeeper, hbase]

- View the contents of /zk:

[zk: localhost:2181(CONNECTED) 3] get /zk

myData

cZxid = 0x40000004d

ctime = Sun Nov 18 19:44:34 CST 2018

mZxid = 0x40000004d

mtime = Sun Nov 18 19:44:34 CST 2018

pZxid = 0x40000004d

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 6

numChildren = 0

- Set (change) the contents of /zk:

[zk: localhost:2181(CONNECTED) 5] set /zk hadoopData

cZxid = 0x40000004d

ctime = Sun Nov 18 19:44:34 CST 2018

mZxid = 0x40000004e

mtime = Sun Nov 18 19:46:55 CST 2018

pZxid = 0x40000004d

cversion = 0

dataVersion = 1

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 10

numChildren = 0

[zk: localhost:2181(CONNECTED) 6] get /zk

hadoopData

cZxid = 0x40000004d

ctime = Sun Nov 18 19:44:34 CST 2018

mZxid = 0x40000004e

mtime = Sun Nov 18 19:46:55 CST 2018

pZxid = 0x40000004d

cversion = 0

dataVersion = 1

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 10

numChildren = 0

- Delete /zk:

[zk: localhost:2181(CONNECTED) 7] delete /zk

[zk: localhost:2181(CONNECTED) 8] ls /

[zookeeper, hbase]
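Two fields in the output above are easy to verify by hand. dataLength is the size of the znode's data in bytes, and dataVersion goes from 0 to 1 after the single set. The sketch below only recomputes the lengths; data_length is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: byte length of a znode payload, as reported in dataLength.
# data_length is a hypothetical helper, not part of ZooKeeper.
data_length() {
    printf '%s' "$1" | wc -c | tr -d '[:space:]'
}

data_length myData; echo       # 6, as shown after create
data_length hadoopData; echo   # 10, as shown after set
```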

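5. How the nodes relate to each other

A single node can connect to the ZK service, so how do the nodes relate to one another?

An example shows it:

- Connect to the service from both master and slave4 (the port must match the clientPort your server uses):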

zkCli.sh -server localhost:2181

- Create a new znode on the master node:

master:

[zk: localhost:2181(CONNECTED) 15] create /zk master

Created /zk

[zk: localhost:2181(CONNECTED) 16] get /zk

master

cZxid = 0x400000054

ctime = Sun Nov 18 20:25:38 CST 2018

mZxid = 0x400000054

mtime = Sun Nov 18 20:25:38 CST 2018

pZxid = 0x400000054

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 6

numChildren = 0

slave4:

[zk: localhost:2181(CONNECTED) 5] get /zk

master

cZxid = 0x400000054

ctime = Sun Nov 18 20:25:38 CST 2018

mZxid = 0x400000054

mtime = Sun Nov 18 20:25:38 CST 2018

pZxid = 0x400000054

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 6

numChildren = 0

As the output shows, znodes stored in the ZK service are visible from every node, and any node can also modify or delete them.
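Both sessions report the same cZxid, 0x400000054, which indicates that they are looking at the result of the same transaction. A zxid is a 64-bit number whose high 32 bits are the leader epoch and whose low 32 bits are a counter within that epoch. The sketch below splits the value shown above; zxid_parts is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: split a zxid into its epoch (high 32 bits) and counter (low 32 bits).
# zxid_parts is a hypothetical helper, not part of ZooKeeper.
zxid_parts() {
    local zxid="$1"
    echo "epoch=$(( zxid >> 32 )) counter=$(( zxid & 0xffffffff ))"
}

zxid_parts 0x400000054   # epoch=4 counter=84
```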