版权声明:转载请声明出处,谢谢! https://blog.csdn.net/qq_31468321/article/details/83351660

写在前面

前一段时间使用python+PhantomJS爬取了一些股票信息,今天来总结一下之前写的爬虫。

整个爬虫分为如下几个部分,

- 爬取所有股票列表页的信息

- 爬取所有股票的详细信息

- 将爬取到的数据写入cvs文件中,每一种股票为一个CSV文件

爬取所有股票列表页的信息

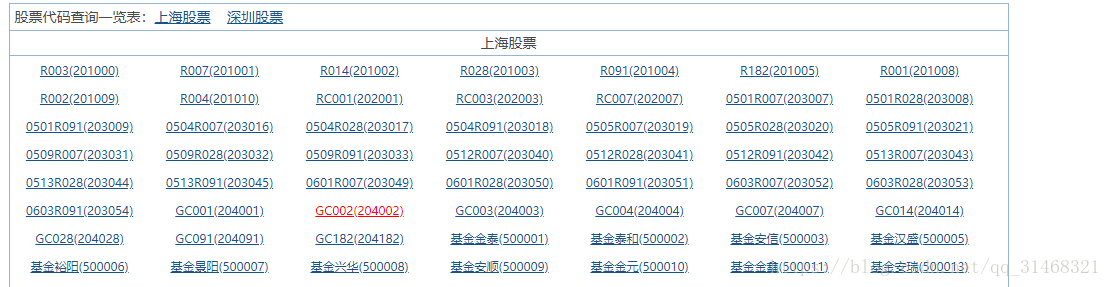

先来看一下网页

如上,我们准备先获取所有的股票名称和股票代码,然后构造成新的URL来爬取详细的信息。

- 先查看使用的包

from selenium import webdriver

from lxml import etree

import time

import csv

- 打开浏览器

#打开浏览器

def open_web(url):

web_info = webdriver.PhantomJS()

web_info.get(url)

html = web_info.page_source

time.sleep(3)

return html

- 获取所有股票代码和股票名称

获取到所有信息,并将信息写入字典

def get_all_stockcode(html):

info = {}

data = etree.HTML(html)

lis = data.xpath("//div[@class='quotebody']/div/ul/li")

for li in lis:

key,value = li.xpath(".//text()")[0].split("(")

value = value.replace(")"," ".strip())

info[key] = value

return info

获取了所有的股票名称和股票代码之后,开始构造详细数据网页的URL,开始爬取详细的信息,如下为详细数据页面。

4. 获取详细页面

#获取股票数据并保存

def get_stock_data(stock_num):

find_url = "http://www.aigaogao.com/tools/history.html?s="

#构造URL

socket_url = find_url+stock_num

one_stock_html = open_web(socket_url)

return one_stock_html

- 获取详细页面的信息

使用enumerate方法,该方法返回一个索引和一个内容信息,使用该接口获取表格中的数据。

def get_stock_infos(infos_html):

detaile_info = {}

title = []

deta_data = etree.HTML(infos_html)

infos_div = deta_data.xpath("//div[@id='ctl16_contentdiv']/table/tbody/tr")

for index,info in enumerate(infos_div):

one_info = {}

if index == 0:

title_list = info.xpath(".//td/text()")

for t in title_list:

title.append(t)

#print(index,title)

else:

print('*'*40)

tds_text = info.xpath(".//td/text()")

if "End" in tds_text[0]:

break

tds_a_text = info.xpath(".//td/a/text()")[0]

tds_span_text = info.xpath(".//td/span/text()")

time = tds_a_text

one_info[title[0]] =time

opening = tds_text[0]

one_info[title[1]] = opening

highest = tds_text[1]

one_info[title[2]] = highest

lowest = tds_text[2]

one_info[title[3]] = lowest

closing = tds_text[3]

one_info[title[4]] = closing

volume = tds_text[4]

one_info[title[5]] = volume

AMO = tds_text[5]

one_info[title[6]] = AMO

up_and_down = tds_text[6]

one_info[title[7]] = up_and_down

percent_up_and_down = tds_span_text[0].strip(" ")

one_info[title[8]] = percent_up_and_down

if len(tds_text) == 9:

drawn = tds_text[7]

SZ = tds_text[8]

else:

SZ = tds_text[-1]

drawn = 0

one_info[title[9]] = drawn

percent_P_V = tds_span_text[1].strip(" ")

one_info[title[10]] = percent_P_V

one_info[title[11]] = SZ

percent_SZ = tds_span_text[2].strip(" ")

one_info[title[12]] = percent_SZ

print(one_info)

detaile_info[index] = one_info

return detaile_info

- 写入CVS文件的接口

#打开CSV文件

def save_csv(name,detaile_info):

#打开文件

with open(name,'w+',newline="",encoding='GB2312') as fp:

if detaile_info:

#获取数据中的详细信息

headers = list(detaile_info[1].keys())

#写入头部信息

write = csv.DictWriter(fp,fieldnames=headers)

write.writeheader()

#写入详细信息

for index,info_x in enumerate(detaile_info):

if index != 0:

write.writerow(detaile_info[index])

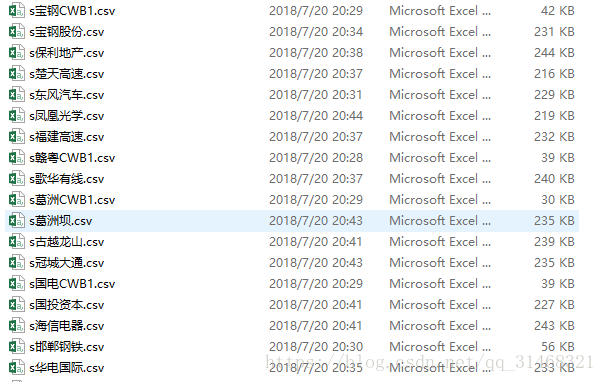

- 开始爬取

base_url = "http://quote.eastmoney.com/stocklist.html#sz"

html = open_web(base_url)

info = get_all_stockcode(html)

for socket_name,socket_code in info.items():

print(socket_name,socket_code)

html = get_stock_data(socket_code)

detaile_info = get_stock_infos(html)

if detaile_info:

if "*" in socket_name:

socket_name = socket_name.strip("*")

csv_name = "s"+socket_name+'.csv'

save_csv(csv_name,detaile_info)