五、爬虫流程

六、代码结构:

爬虫调度器(入口)--url管理器--url下载器--解析器--输出器

spider_main.py(入口)

from baike_spider import url_manager # url管理器

from baike_spider import html_downloader # url下载器

from baike_spider import html_parser # html解析器

from baike_spider import html_outputer # htmloutputer

class SpiderMain(object):

def __init__(self):

# 初始化各个对象

self.urls = url_manager.UrlManager()

self.downloader = html_downloader.HtmlDownloader()

self.parser = html_parser.HtmlParser()

self.outputer = html_outputer.HtmlOutputer()

# 爬虫调度程序

def craw(self, root_url):

count = 1

# 将入口url加入url管理器,add_new_url添加一个

self.urls.add_new_url(root_url)

# 启动爬虫循环,当url 管理器有url时

while self.urls.has_new_url():

try:

# 获取一个待爬取url

new_url = self.urls.get_new_url()

# 打印当前爬取的url,

print("craw %d : %s" %(count, new_url))

# 启动下载器下载页面并保存

html_cont = self.downloader.download(new_url)

# 下载好页面后,解析器解析,得到新的url列表和新的数据,

new_urls, new_data = self.parser.paser(new_url, html_cont)

# 将新的url添加进url管理器,add_new_urls添加多个

self.urls.add_new_urls(new_urls)

# 收集数据

self.outputer.collect_data(new_data)

# 爬取1000个页面

if count == 1000:

break

count = count + 1

except:

print('craw failed')

# 输出收集好的数据

self.outputer.output_html()

# 爬虫总调度程序入口

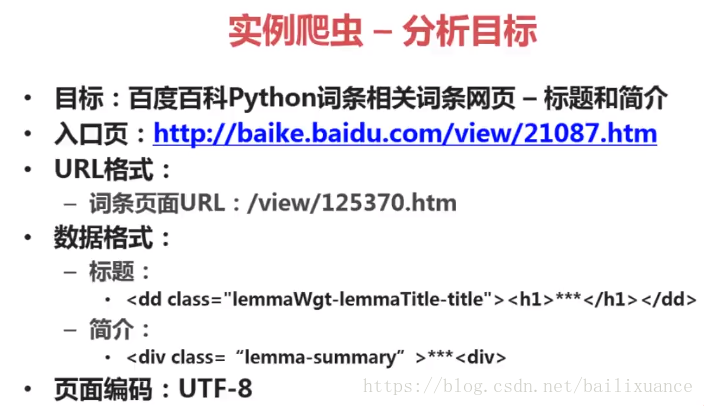

if __name__ == '__main__':

root_url = "https://baike.baidu.com/view/21087.htm"

obj_spider = SpiderMain()

obj_spider.craw(root_url)

url_manager.py(管理器)

class UrlManager(object):

# 待爬取和已爬取的url

def __init__(self):

self.new_urls = set()

self.old_urls = set()

# 向管理器添加一个url,

def add_new_url(self, url):

if url is None:

return

if url not in self.new_urls and url not in self.old_urls:

self.new_urls.add(url)

# 向管理器批量添加url

def add_new_urls(self, urls):

if urls is None or len(urls) == 0:

return

for url in urls:

self.add_new_url(url)

# 判断管理器是否有新的url

def has_new_url(self):

return len(self.new_urls) != 0

# 从管理器中获取一个url

def get_new_url(self):

new_url = self.new_urls.pop()

self.old_urls.add(new_url)

return new_url

html_downloader.py(下载器)

import urllib.request

class HtmlDownloader(object):

def download(self, url):

if url is None:

return None

response = urllib.request.urlopen(url)

if response.getcode() != 200:

return None

return response.read()

html_parser.py(解析器)

from bs4 import BeautifulSoup

import re

import urllib.parse

class HtmlParser(object):

# 获取新的url,即获取超链接

def _get_new_urls(self, page_url, soup):

new_urls = set()

# /view/123.htm

links = soup.find_all('a', href=re.compile(r'/item/'))

for link in links:

new_url = link['href']

# 拼接成一个新的url

# urllib.parse.urljoin将两个url拼接成一个url

new_full_url = urllib.parse.urljoin(page_url, new_url)

new_urls.add(new_full_url)

return new_urls

def _get_new_data(self, page_url, soup):

res_data = {}

# url

res_data['url'] = page_url

# 得到标题的标签

# <dd class="lemmaWgt-lemmaTitle-title">

# <h1>Python</h1>

title_node = soup.find('dd', class_="lemmaWgt-lemmaTitle-title").find('h1')

res_data['title'] = title_node.get_text()

# 获取简介

# lemma-summary

# <div class="lemma-summary" label-module="lemmaSummary">

# <div class="para" label-module="para">Python 是一门有条理的和强大的面向对象的程序设计语言,类似于Perl, Ruby, Scheme, Java.</div>

# </div>

# <div class="para" label-module="para">Python 是一门有条理的和强大的面向对象的程序设计语言,类似于Perl, Ruby, Scheme, Java.</div>

summary_node = soup.find('div', class_="lemma-summary")

res_data['summary'] = summary_node.get_text()

return res_data

def paser(self, page_url, html_cont):

if page_url is None or html_cont is None:

return

soup = BeautifulSoup(html_cont, 'html.parser', from_encoding='utf-8')

new_urls = self._get_new_urls(page_url, soup)

new_data = self._get_new_data(page_url, soup)

return new_urls, new_data

html_outputer.py(输出器)

class HtmlOutputer(object):

def __init__(self):

self.datas = []

# 收集数据

def collect_data(self, data):

if data is None:

return

self.datas.append(data)

# 将收集的数据写入html中,

def output_html(self):

# python默认输出asci

fout = open('output.html', 'w', encoding="utf-8")

fout.write("<html>")

fout.write("<head><meta http-equiv=\"content-type\" content=\"text/html;charset=utf-8\"></head>")

fout.write("<body>")

# 表格

fout.write("<table>")

for data in self.datas:

# 每行

fout.write("<tr>")

# 每个单元格

fout.write("<td>%s</td>" % data['url'])

fout.write("<td>%s</td>" % data['title'])

fout.write("<td>%s</td>" % data['summary'])

fout.write("</tr>")

fout.write("</table>")

fout.write("</body>")

fout.write("</html>")

fout.close()

参考:http://www.imooc.com/learn/563

源码(附注释):https://download.csdn.net/download/bailixuance/10712607