Master的准备切换分为两种

①、一种是基于文件系统的,spark提供目录保存spark Application和worker的注册信息,并将他们的恢复状态写入该目录,当spark的master节点宕掉的时候,重启master,就能获取application和worker的注册信息。需要手动进行切换

### 配置:conf/spark-env.sh

export SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=FILESYSTEM -Dspark.deploy.recoveryDirectory=/nfs/spark/recovery"②、一种是基于zookeeper的,用于生产模式。其基本原理是通过zookeeper来选举一个Master,其他的Master处于Standby状态。将Standalone集群连接到同一个ZooKeeper实例并启动多个Master,利用zookeeper提供的选举和状态保存功能,可以使一个Master被选举,而其他Master处于Standby状态。如果现任Master死去,另一个Master会通过选举产生,并恢复到旧的Master状态,然后恢复调度。整个恢复过程可能要1-2分钟。

注意:

- 这个过程只会影响新Application的调度,对在故障期间已经运行的application不会受到影响

- 因为涉及到多个Master,需要在SparkContext指向一个Master列表,

spark://host1:port1,host2:port2,host3:port3,应用程序会轮询列表 - 不能将Master定义在

conf/spark-env.sh里了,而是直接在Application中定义。涉及的参数是export SPARK_MASTER_IP=bigdata001,这项不配置或者为空。否则,无法启动多个master

### 配置:conf/spark-env.sh

export SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER -Dspark.deploy.zookeeper.url=bigdata001:2181,bigdata002:2181,bigdata003:2181 -Dspark.deploy.zookeeper.dir=/spark"流程:(注意completeRecovery方法)

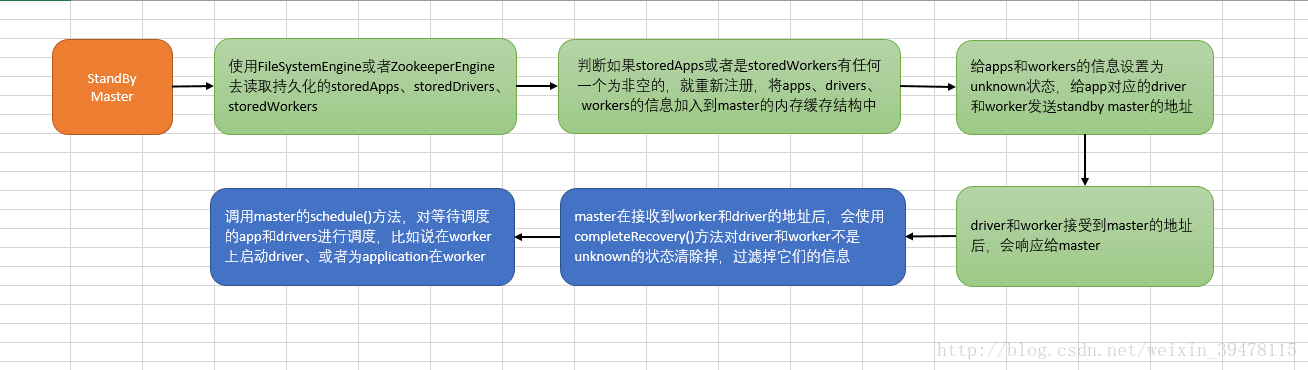

- 在active Master宕掉之后,内部持久化(FileSystemPersistenceEngine和ZookeeperPersistenceEngine)引擎首先会读取持久化的storedApps、storedDrivers、storedWorkers

- 如果storedApps、storedWorkers有任何一个是有内容的,那么就将持久化的Application、Worker信息重新注册,将apps、drivers、workers的信息加入到master的内存缓存结构中

- 将Application和Worker的状态都修改为UNKNOWN,然后向Application所对应的Driver和Worker发送StandBy Master的地址

- 如果Driver和Wroker是正常运转的情况下,接收到Master发送过来的地址后,就会相应到新的Master,在Master陆续接收到Driver和Worker发送过来的消息后,会使用completeRecovery()方法对没有发送响应消息的Driver和Worker进行处理,过滤掉他们的信息。

- 调用Master的schedule()方法,对正在调度的Driver和Application进行调度。在worker上启动driver,或者是为Applicaiton在worker上启动executor

源码分析:

第一步:开始恢复master

源码:org/apache/spark/deploy/master/Master.scala

/**

* 开始恢复master

*/

private def beginRecovery(storedApps: Seq[ApplicationInfo], storedDrivers: Seq[DriverInfo],

storedWorkers: Seq[WorkerInfo]) {

for (app <- storedApps) {

logInfo("Trying to recover app: " + app.id)

try {

// 注册application

registerApplication(app)

app.state = ApplicationState.UNKNOWN

app.driver.send(MasterChanged(self, masterWebUiUrl))

} catch {

case e: Exception => logInfo("App " + app.id + " had exception on reconnect")

}

}

for (driver <- storedDrivers) {

// Here we just read in the list of drivers. Any drivers associated with now-lost workers

// will be re-launched when we detect that the worker is missing.

drivers += driver

}

for (worker <- storedWorkers) {

logInfo("Trying to recover worker: " + worker.id)

try {

// 注册worker

registerWorker(worker)

worker.state = WorkerState.UNKNOWN

worker.endpoint.send(MasterChanged(self, masterWebUiUrl))

} catch {

case e: Exception => logInfo("Worker " + worker.id + " had exception on reconnect")

}

}

}第二步:点击第一步中registerApplication

源码:org/apache/spark/deploy/master/Master.scala

private def registerApplication(app: ApplicationInfo): Unit = {

// 拿到driver的地址

val appAddress = app.driver.address

// 如果driver的地址存在的情况下,就直接返回,就相当于对driver进行重复注册

if (addressToApp.contains(appAddress)) {

logInfo("Attempted to re-register application at same address: " + appAddress)

return

}

applicationMetricsSystem.registerSource(app.appSource)

//将Application的信息加入到内存缓存中

apps += app

idToApp(app.id) = app

endpointToApp(app.driver) = app

addressToApp(appAddress) = app

//将Application的信息加入到等待调度的队列中,调度的算法为FIFO

waitingApps += app

}第三步:点击第一步中registerWorker

源码:org/apache/spark/deploy/master/Master.scala

private def registerWorker(worker: WorkerInfo): Boolean = {

// There may be one or more refs to dead workers on this same node (w/ different ID's),

// remove them.

workers.filter { w =>

(w.host == worker.host && w.port == worker.port) && (w.state == WorkerState.DEAD)

}.foreach { w =>

workers -= w

}第四步:完成master的主备切换,也就是完成master的主备切换

源码:org/apache/spark/deploy/master/Master.scala

/**

* 完成master的主备切换,也就是完成master的主备切换

*/

private def completeRecovery() {

// Ensure "only-once" recovery semantics using a short synchronization period.

if (state != RecoveryState.RECOVERING) { return }

state = RecoveryState.COMPLETING_RECOVERY

// 将Applicaiton和Worker都过滤出来,目前状况还是UNKNOWN的

// 然后遍历,分别调用removeWorker和finishApplication方法,对可能已经出故障,或者已经死掉的Application和Worker进行清理

// 三点:

// 1、从内存缓存结构中移除

// 2、从相关组件的内存缓存中移除(比如说worker所在的driver也要移除)

// 3、从持久化存储中移除

// Kill off any workers and apps that didn't respond to us.

workers.filter(_.state == WorkerState.UNKNOWN).foreach(removeWorker)

apps.filter(_.state == ApplicationState.UNKNOWN).foreach(finishApplication)

// Reschedule drivers which were not claimed by any workers

drivers.filter(_.worker.isEmpty).foreach { d =>

logWarning(s"Driver ${d.id} was not found after master recovery")

// 重新启动driver,对于sparkstreaming程序而言

if (d.desc.supervise) {

logWarning(s"Re-launching ${d.id}")

relaunchDriver(d)

} else {

removeDriver(d.id, DriverState.ERROR, None)

logWarning(s"Did not re-launch ${d.id} because it was not supervised")

}

}

state = RecoveryState.ALIVE

schedule()

logInfo("Recovery complete - resuming operations!")

}第五步:点击第四步中的removeWorker

// 移除worker

private def removeWorker(worker: WorkerInfo) {

logInfo("Removing worker " + worker.id + " on " + worker.host + ":" + worker.port)

worker.setState(WorkerState.DEAD)

idToWorker -= worker.id

addressToWorker -= worker.endpoint.address

for (exec <- worker.executors.values) {

logInfo("Telling app of lost executor: " + exec.id)

// 向driver发送exeutor丢失了

exec.application.driver.send(ExecutorUpdated(

exec.id, ExecutorState.LOST, Some("worker lost"), None))

// 将worker上的所有executor给清楚掉

exec.application.removeExecutor(exec)

}

for (driver <- worker.drivers.values) {

// spark自动监视,driver所在的worker挂掉的时候,也会把这个driver移除掉,如果配置supervise这个属性的时候,driver也挂掉的时候master会重新启动driver

if (driver.desc.supervise) {

logInfo(s"Re-launching ${driver.id}")

relaunchDriver(driver)

} else {

logInfo(s"Not re-launching ${driver.id} because it was not supervised")

removeDriver(driver.id, DriverState.ERROR, None)

}

}

// 持久化引擎会移除worker

persistenceEngine.removeWorker(worker)

}

第六步:点击第四步中的finishApplication

private def finishApplication(app: ApplicationInfo) {

removeApplication(app, ApplicationState.FINISHED)

}

def removeApplication(app: ApplicationInfo, state: ApplicationState.Value) {

// 将数据从内存缓存结果中移除

if (apps.contains(app)) {

logInfo("Removing app " + app.id)

apps -= app

idToApp -= app.id

endpointToApp -= app.driver

addressToApp -= app.driver.address

if (completedApps.size >= RETAINED_APPLICATIONS) {

val toRemove = math.max(RETAINED_APPLICATIONS / 10, 1)

completedApps.take(toRemove).foreach( a => {

Option(appIdToUI.remove(a.id)).foreach { ui => webUi.detachSparkUI(ui) }

applicationMetricsSystem.removeSource(a.appSource)

})

completedApps.trimStart(toRemove)

}

completedApps += app // Remember it in our history

waitingApps -= app

// If application events are logged, use them to rebuild the UI

asyncRebuildSparkUI(app)

for (exec <- app.executors.values) {

// 杀掉app对应的executor

killExecutor(exec)

}

app.markFinished(state)

if (state != ApplicationState.FINISHED) {

app.driver.send(ApplicationRemoved(state.toString))

}

// 从持久化引擎中移除application

persistenceEngine.removeApplication(app)

schedule()

// Tell all workers that the application has finished, so they can clean up any app state.

workers.foreach { w =>

w.endpoint.send(ApplicationFinished(app.id))

}

}

}第七步:点击第四步中的relaunchDriver

private def relaunchDriver(driver: DriverInfo) {

driver.worker = None

// 将driver的状态设置为relaunching

driver.state = DriverState.RELAUNCHING

// 将driver加入到等待的队列当中

waitingDrivers += driver

schedule()

}第八步:点击第四步的removeDriver

/**

* 移除driver

*/

private def removeDriver(

driverId: String,

finalState: DriverState,

exception: Option[Exception]) {

drivers.find(d => d.id == driverId) match {

case Some(driver) =>

logInfo(s"Removing driver: $driverId")

drivers -= driver

if (completedDrivers.size >= RETAINED_DRIVERS) {

val toRemove = math.max(RETAINED_DRIVERS / 10, 1)

completedDrivers.trimStart(toRemove)

}

// 将driver加入到已经完成的driver中

completedDrivers += driver

// 将driver从持久化引擎中移除掉

persistenceEngine.removeDriver(driver)

// 将driver的状态设置为final

driver.state = finalState

driver.exception = exception

// 将driver所在的worker中移除掉driver

driver.worker.foreach(w => w.removeDriver(driver))

schedule()

case None =>

logWarning(s"Asked to remove unknown driver: $driverId")

}

}

}第九步:点击第四步中schedule(),资源调度算法

详情看更新博客