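Introduction

When you build a Kubernetes cluster on GPU ECS instances in Alibaba Cloud Container Service for AI training, you often need to know how each Pod is using its GPUs: memory usage per card, GPU utilization, GPU temperature, and other monitoring data. This article shows how to quickly build a GPU monitoring solution on Alibaba Cloud based on Prometheus + Grafana.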

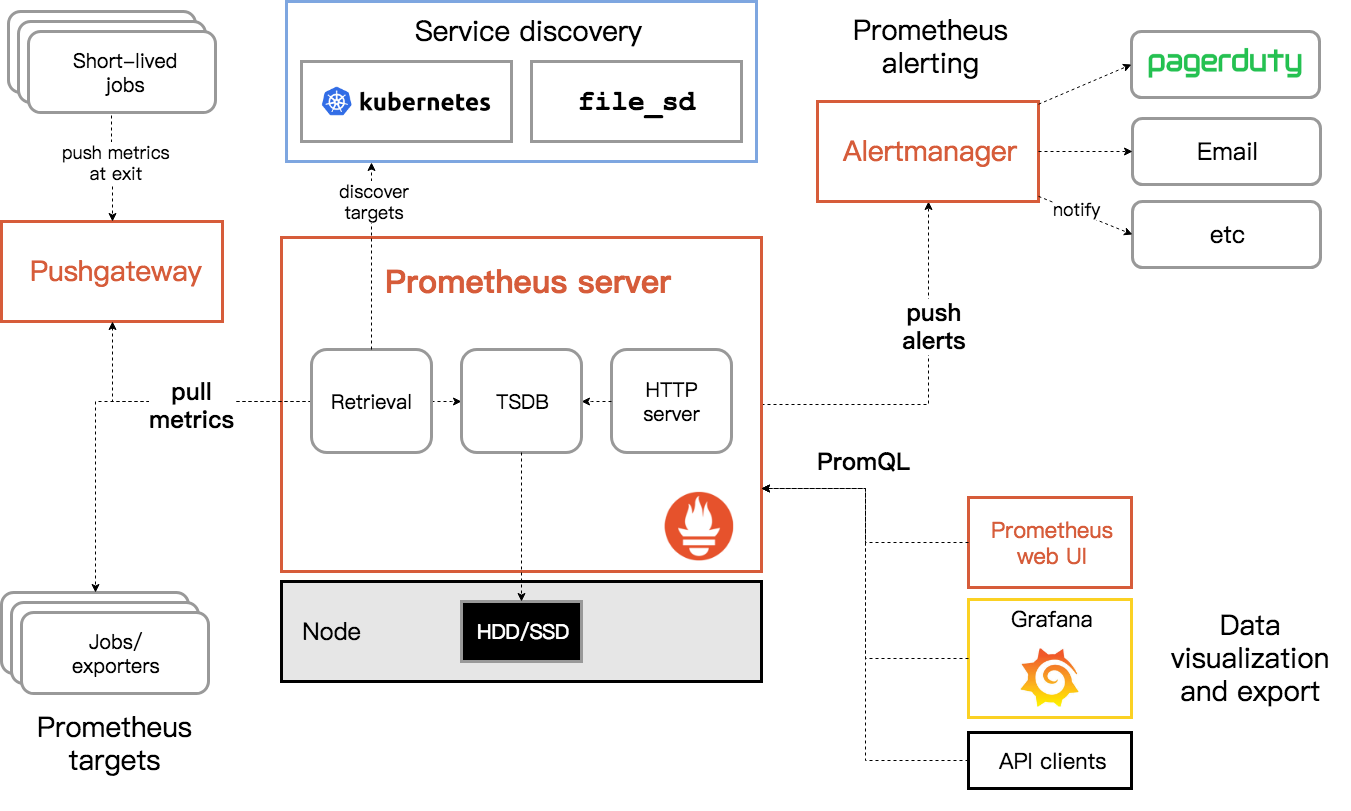

Prometheus

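Prometheus is an open-source service monitoring system and time series database. Development began in 2012, and since it was open-sourced on GitHub in 2015 it has attracted more than 9k stars. In 2016 Prometheus became the second member of the CNCF (Cloud Native Computing Foundation), after Kubernetes, and it graduated from the CNCF in August 2018.

It is a new-generation open-source solution, and many of its ideas coincide with Google's SRE practices.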

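Procedure

Prerequisite: you have already created a Kubernetes cluster with GPU ECS instances through Alibaba Cloud Container Service. For the detailed steps, see the article "尝鲜阿里云容器服务Kubernetes 1.9,拥抱GPU新姿势".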

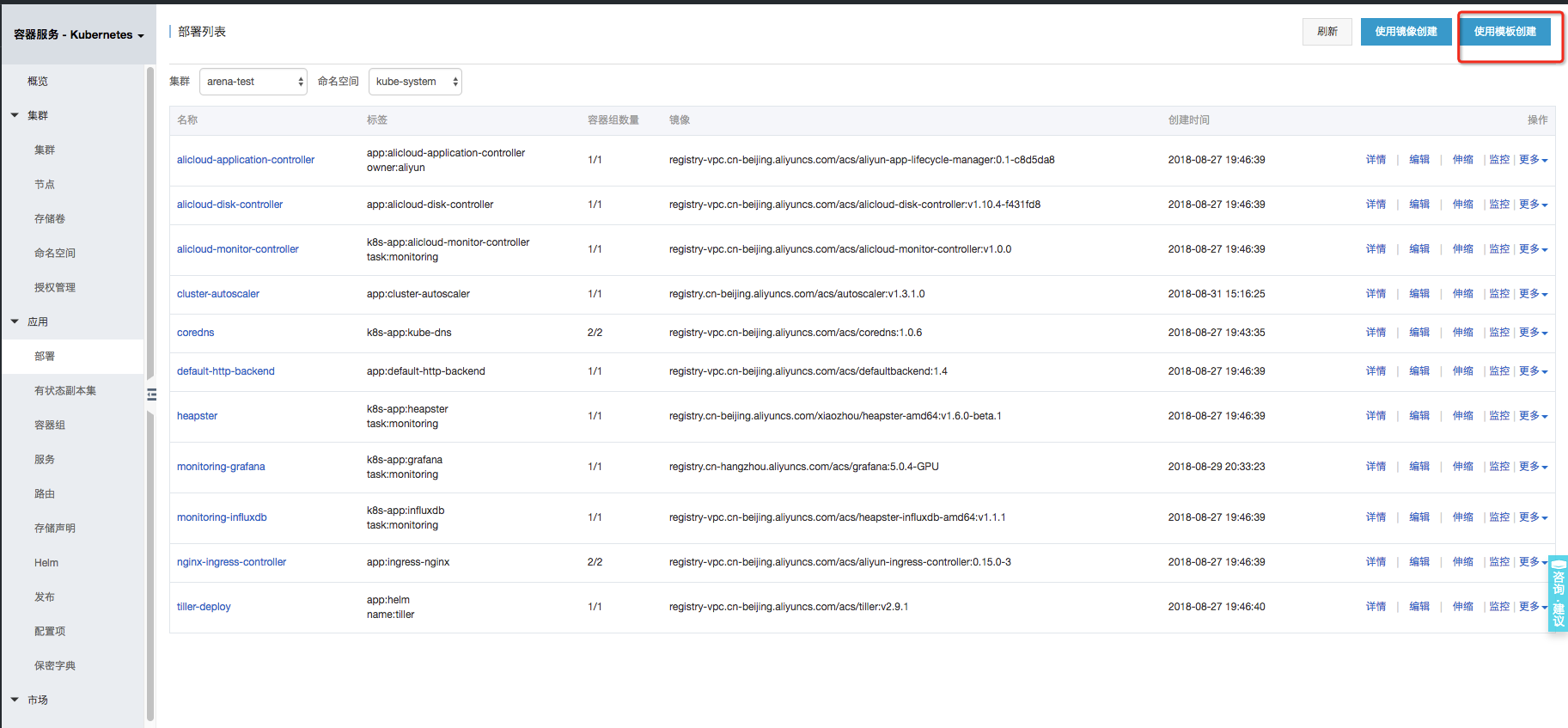

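Log in to the Container Service console, select [Container Service - Kubernetes], and click [Applications --> Deployments --> Create from Template]:

Select your GPU cluster and namespace (kube-system is a reasonable choice), then paste the YAML for Prometheus and the GPU exporter into the template box below.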

Deploy Prometheus

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: prometheus-env
    data:
      storage-retention: 360h
      storage-memory-chunks: '1048576'
    ---

    apiVersion: rbac.authorization.k8s.io/v1beta1
    kind: ClusterRole
    metadata:
      name: prometheus
    rules:
    - apiGroups: ["", "extensions", "apps"]
      resources:
      - nodes
      - nodes/proxy
      - services
      - endpoints
      - pods
      - deployments
      verbs: ["get", "list", "watch"]
    - nonResourceURLs: ["/metrics"]
      verbs: ["get"]
    ---

    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: prometheus
    ---

    apiVersion: rbac.authorization.k8s.io/v1beta1
    kind: ClusterRoleBinding
    metadata:
      name: prometheus
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: prometheus
    subjects:
    - kind: ServiceAccount
      name: prometheus
      namespace: kube-system # change this if you deploy into a different namespace
    ---

    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: prometheus-deployment
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: prometheus
      template:
        metadata:
          name: prometheus
          labels:
            app: prometheus
        spec:
          serviceAccountName: prometheus
          containers:
          - name: prometheus
            image: registry.cn-hangzhou.aliyuncs.com/acs/prometheus:1.1.1
            args:
            # $(VAR) references are expanded from the env entries below
            - '-storage.local.retention=$(STORAGE_RETENTION)'
            - '-storage.local.memory-chunks=$(STORAGE_MEMORY_CHUNKS)'
            - '-config.file=/etc/prometheus/prometheus.yml'
            ports:
            - name: web
              containerPort: 9090
            env:
            - name: STORAGE_RETENTION
              valueFrom:
                configMapKeyRef:
                  name: prometheus-env
                  key: storage-retention
            - name: STORAGE_MEMORY_CHUNKS
              valueFrom:
                configMapKeyRef:
                  name: prometheus-env
                  key: storage-memory-chunks
            volumeMounts:
            - name: config-volume
              mountPath: /etc/prometheus
            - name: prometheus-data
              mountPath: /prometheus
          volumes:
          - name: config-volume
            configMap:
              name: prometheus-configmap
          # emptyDir: collected data is lost when the Pod is deleted
          - name: prometheus-data
            emptyDir: {}
    ---

    apiVersion: v1
    kind: Service
    metadata:
      labels:
        name: prometheus-svc
        kubernetes.io/name: "Prometheus"
      name: prometheus-svc
    spec:
      type: LoadBalancer
      selector:
        app: prometheus
      ports:
      - name: prometheus
        protocol: TCP
        port: 9090
        targetPort: 9090
    ---

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: prometheus-configmap
    data:
      prometheus.yml: |-
        rule_files:
        - "/etc/prometheus-rules/*.rules"
        scrape_configs:
        - job_name: kubernetes-service-endpoints
          honor_labels: false
          kubernetes_sd_configs:
          - api_servers:
            - 'https://kubernetes.default.svc'
            in_cluster: true
            role: endpoint
          relabel_configs:
          # Scrape only Services annotated with prometheus.io/scrape: 'true'
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
            action: keep
            regex: true
          # Optional overrides taken from the prometheus.io/scheme, path and port annotations
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
            action: replace
            target_label: __scheme__
            regex: (https?)
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
            action: replace
            target_label: __metrics_path__
            regex: (.+)
          - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
            action: replace
            target_label: __address__
            regex: (.+)(?::\d+);(\d+)
            replacement: $1:$2
          # Copy Service labels, namespace and name onto the scraped series
          - action: labelmap
            regex: __meta_kubernetes_service_label_(.+)
          - source_labels: [__meta_kubernetes_service_namespace]
            action: replace
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: kubernetes_name

If you choose a namespace other than kube-system, change the namespace of the ServiceAccount subject in the ClusterRoleBinding in the YAML:

    apiVersion: rbac.authorization.k8s.io/v1beta1
    kind: ClusterRoleBinding
    metadata:
      name: prometheus
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: prometheus
    subjects:
    - kind: ServiceAccount
      name: prometheus
      namespace: kube-system # change this if you deploy into a different namespace

Deploy the Prometheus GPU exporter

    apiVersion: apps/v1
    kind: DaemonSet
    metadata:
      name: node-gpu-exporter
    spec:
      selector:
        matchLabels:
          app: node-gpu-exporter
      template:
        metadata:
          labels:
            app: node-gpu-exporter
        spec:
          affinity:
            nodeAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
                nodeSelectorTerms:
                - matchExpressions:
                  - key: aliyun.accelerator/nvidia_count
                    operator: Exists
          hostPID: true
          containers:
          - name: node-gpu-exporter
            image: registry.cn-hangzhou.aliyuncs.com/acs/gpu-prometheus-exporter:0.1-f48bc3c
            imagePullPolicy: Always
            env:
            - name: MY_NODE_NAME
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
            - name: MY_POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: MY_NODE_IP
              valueFrom:
                fieldRef:
                  fieldPath: status.hostIP
            - name: EXCLUDE_PODS
              value: $(MY_POD_NAME),nvidia-device-plugin-$(MY_NODE_NAME),nvidia-device-plugin-ctr
            - name: CADVISOR_URL
              value: http://$(MY_NODE_IP):10255
            ports:
            - containerPort: 9445
              hostPort: 9445
            resources:
              requests:
                memory: 30Mi
                cpu: 100m
              limits:
                memory: 50Mi
                cpu: 200m
    ---

    apiVersion: v1
    kind: Service
    metadata:
      annotations:
        prometheus.io/scrape: 'true'
      name: node-gpu-exporter
      labels:
        app: node-gpu-exporter
        k8s-app: node-gpu-exporter
    spec:
      type: ClusterIP
      clusterIP: None
      ports:
      - name: http-metrics
        port: 9445
        protocol: TCP
      selector:
        app: node-gpu-exporter
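
The node-gpu-exporter Service carries the prometheus.io/scrape annotation, which is what the relabel rules of the kubernetes-service-endpoints job key on. The Python sketch below imitates two of those rules (the `keep` on the scrape annotation and the `replace` that rewrites `__address__`) to show how a target is selected and how its address is derived. It is an illustration of the relabeling semantics, not Prometheus code; the helper names and the endpoint addresses are made up for the example, and it assumes the documented behaviour that source label values are joined with ";" and that the regex must match the whole joined string.

```python
import re


def _joined(labels, source_labels, separator=";"):
    # Source label values are concatenated with the separator;
    # a label that is absent counts as an empty string.
    return separator.join(labels.get(name, "") for name in source_labels)


def keep(labels, source_labels, regex):
    """`keep` action: True if the target survives, False if it is dropped."""
    return re.fullmatch(regex, _joined(labels, source_labels)) is not None


def replace(labels, source_labels, target_label, regex="(.*)", replacement="$1"):
    """`replace` action: return new labels; unchanged when the regex does not match."""
    match = re.fullmatch(regex, _joined(labels, source_labels))
    if match is None:
        return dict(labels)
    # The config writes capture groups as $1, $2; Python expects \g<1>, \g<2>.
    template = re.sub(r"\$(\d+)", r"\\g<\1>", replacement)
    result = dict(labels)
    result[target_label] = match.expand(template)
    return result


SCRAPE = "__meta_kubernetes_service_annotation_prometheus_io_scrape"
PORT = "__meta_kubernetes_service_annotation_prometheus_io_port"

# Hypothetical endpoints (addresses invented for the example).
exporter = {"__address__": "10.0.0.12:9445", SCRAPE: "true"}
unannotated = {"__address__": "10.0.0.13:8080"}
with_port = dict(exporter, **{PORT: "9100"})

# The __address__ rule exactly as written in prometheus.yml.
address_rule = dict(
    source_labels=["__address__", PORT],
    target_label="__address__",
    regex=r"(.+)(?::\d+);(\d+)",
    replacement="$1:$2",
)

print(keep(exporter, [SCRAPE], "true"))     # annotated Service: scraped
print(keep(unannotated, [SCRAPE], "true"))  # no annotation: dropped
print(replace(exporter, **address_rule)["__address__"])   # no port annotation: address unchanged
print(replace(with_port, **address_rule)["__address__"])  # port annotation overrides the port
```

Because the exporter Service sets only prometheus.io/scrape, the address rule does not match and Prometheus scrapes the endpoint address as discovered, on port 9445.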

Deploy Grafana

    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: monitoring-grafana
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            task: monitoring
            k8s-app: grafana
        spec:
          containers:
          - name: grafana
            image: registry.cn-hangzhou.aliyuncs.com/acs/grafana:5.0.4-gpu-monitoring
            ports:
            - containerPort: 3000
              protocol: TCP
          volumes:
          - name: grafana-storage
            emptyDir: {}
    ---

apiVersion: v1

kind: Service

metadata:

name: monitoring-grafana

spec:

ports:

- port: 80

targetPort: 3000

type: LoadBalancer

selector:

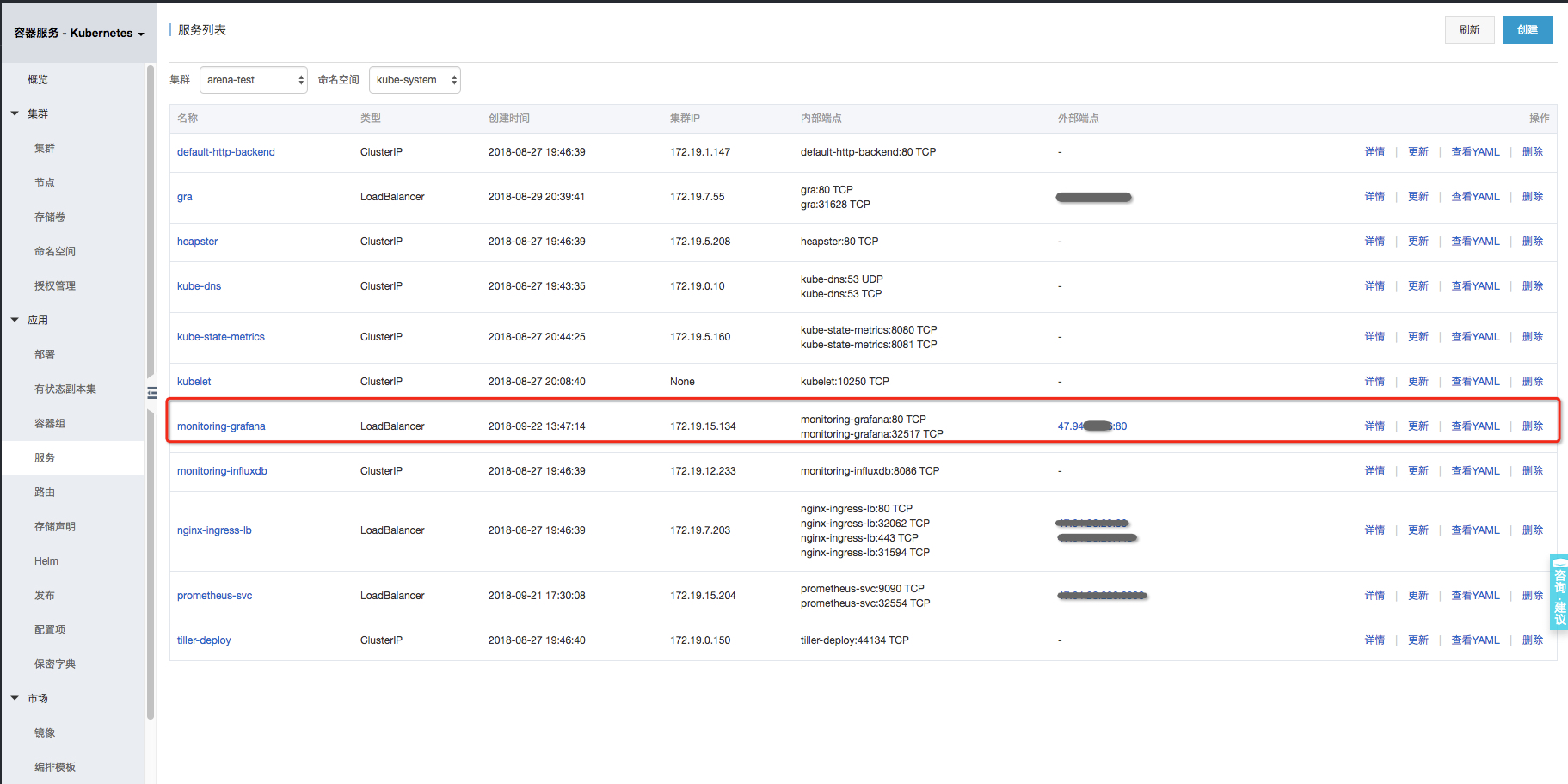

k8s-app: grafana- 进入【应用-->服务】页面,选择对应集群及kube-system命名空间,点击monitoring-grafana对应的外部端点,浏览器自动跳转到Grafana的login页面,初始用户名及密码均为

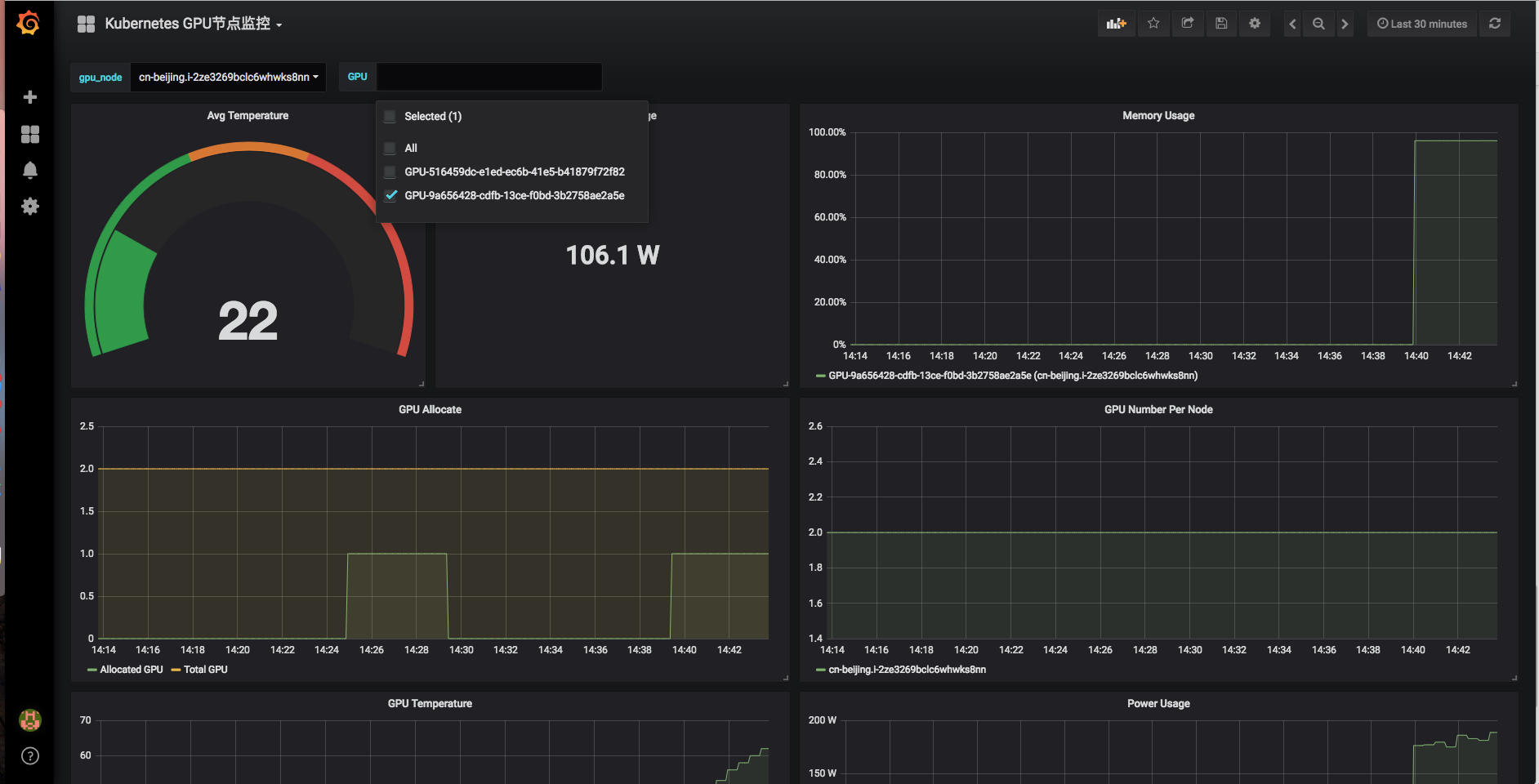

admin,你可以在登录成功后重置密码、添加用户等操作。在Dashboard中可以看到GPU应用监控和GPU节点监控信息。

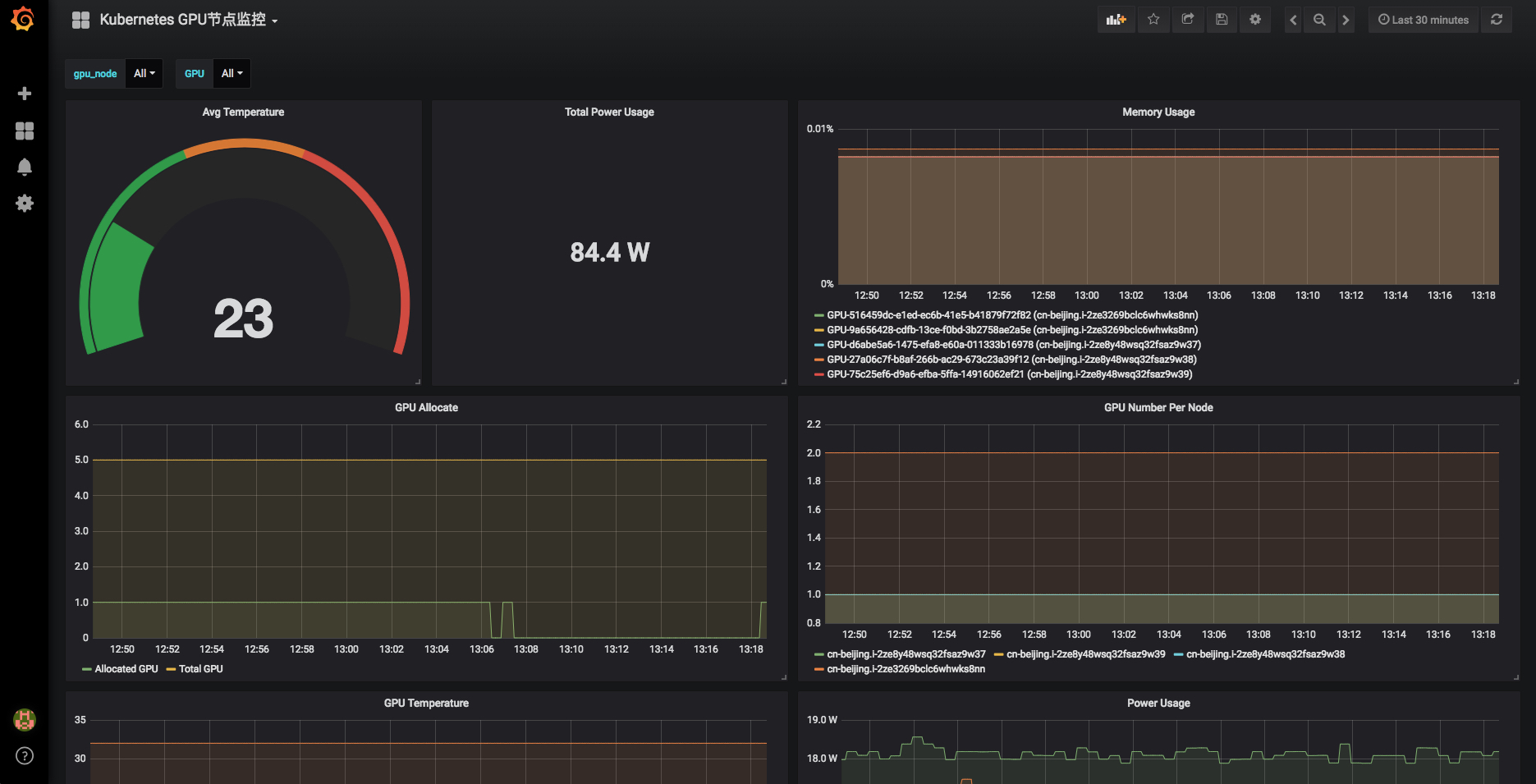

Node GPU monitoring

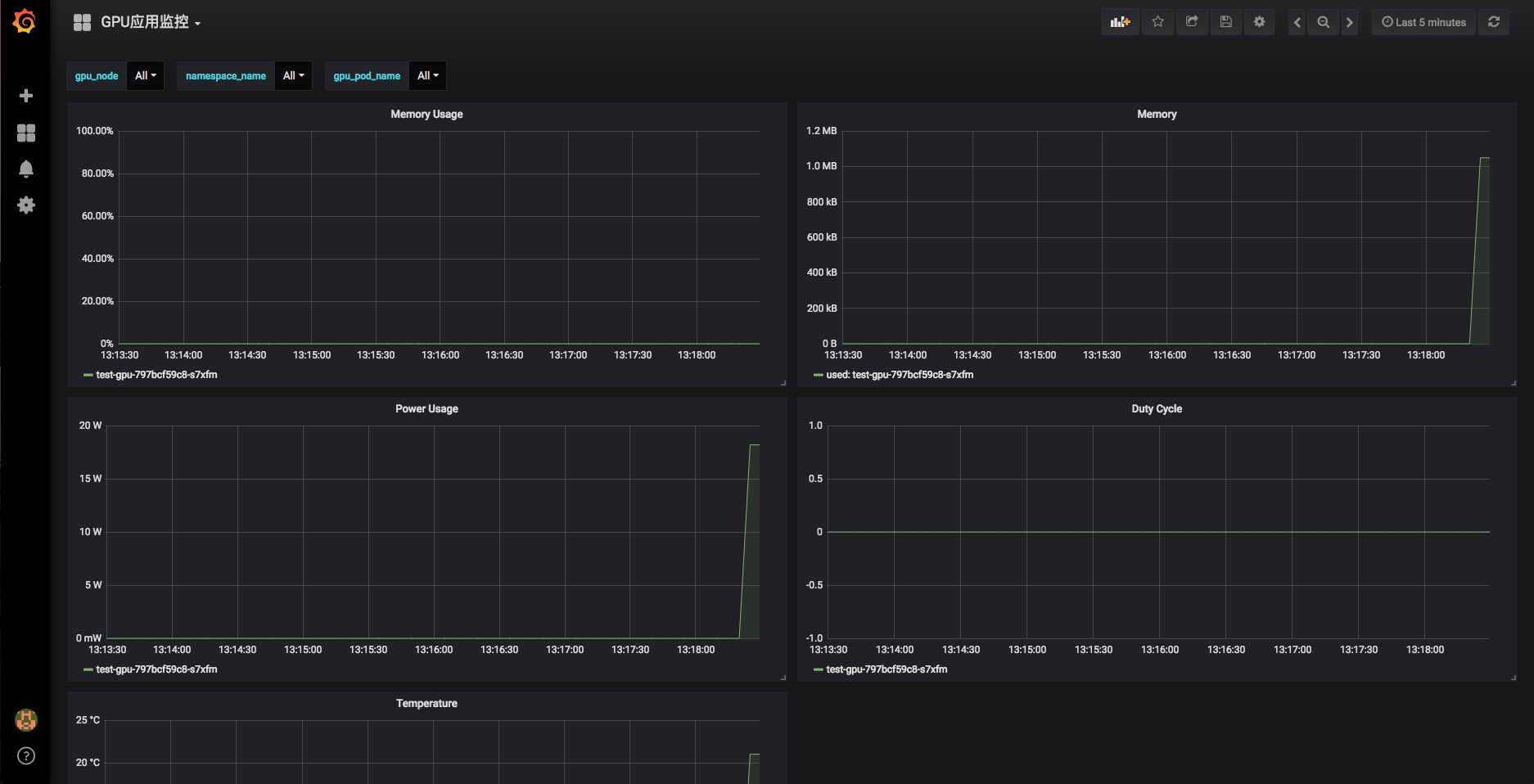

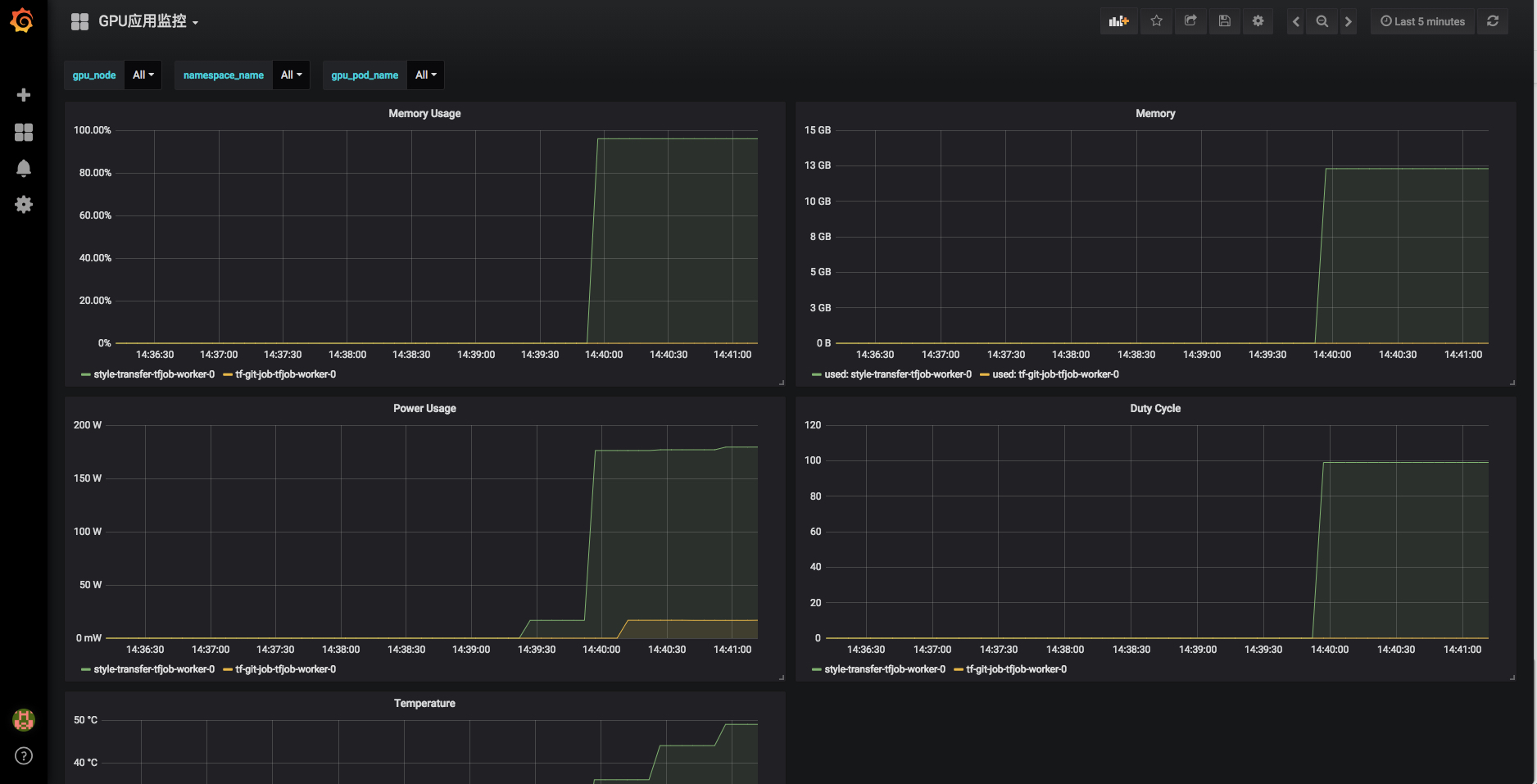

Pod GPU monitoring

Deploy an application

If you already use Arena (see the article "Arena - 打开KubeFlow的正确姿势"), you can submit a training job directly with arena.

    arena submit tf --name=style-transfer \
        --gpus=1 \
        --workers=1 \
        --workerImage=registry.cn-hangzhou.aliyuncs.com/tensorflow-samples/neural-style:gpu \
        --workingDir=/neural-style \
        --ps=1 \
        --psImage=registry.cn-hangzhou.aliyuncs.com/tensorflow-samples/style-transfer:ps \
        "python neural_style.py --styles /neural-style/examples/1-style.jpg --iterations 1000000"

    NAME: style-transfer
    LAST DEPLOYED: Thu Sep 20 14:34:55 2018
    NAMESPACE: default
    STATUS: DEPLOYED

    RESOURCES:
    ==> v1alpha2/TFJob
    NAME                  AGE
    style-transfer-tfjob  0s

After the job is submitted, the Pod's GPU metrics appear on the monitoring page: you can see the Pods deployed through Arena and the GPU resources each Pod consumes.

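The load on each GPU card and node is also visible at the node level: on the GPU node monitoring page you can select the node and GPU card you are interested in.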
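
The figures shown in the Grafana dashboards come from Prometheus, so they can also be read programmatically through the Prometheus HTTP API (`/api/v1/query`). The following Python sketch builds an instant query and parses the response. The metric name `nvidia_gpu_duty_cycle`, the label names in the sample response, and the base URL are placeholders chosen for illustration; check the exporter's /metrics output on port 9445 for the names it really exposes, and use the external endpoint of prometheus-svc as the base URL.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen


def build_query_url(base_url, promql):
    """Return the instant-query URL for one PromQL expression."""
    return f"{base_url.rstrip('/')}/api/v1/query?{urlencode({'query': promql})}"


def parse_instant_vector(payload):
    """Turn an instant-vector response into a list of (labels, float value) pairs."""
    if payload.get("status") != "success":
        raise ValueError(f"query failed: {payload.get('error')}")
    data = payload["data"]
    if data["resultType"] != "vector":
        raise ValueError(f"expected a vector, got {data['resultType']}")
    # Each sample is {"metric": {...labels...}, "value": [timestamp, "value as string"]}.
    return [(sample["metric"], float(sample["value"][1])) for sample in data["result"]]


def query(base_url, promql, timeout=10):
    """Run an instant query against a live Prometheus server."""
    with urlopen(build_query_url(base_url, promql), timeout=timeout) as resp:
        return parse_instant_vector(json.load(resp))


# Offline demonstration with a hand-written response in the API's format.
# The metric and label names are assumptions, not taken from the exporter.
sample_response = {
    "status": "success",
    "data": {
        "resultType": "vector",
        "result": [
            {
                "metric": {"__name__": "nvidia_gpu_duty_cycle", "pod_name": "style-transfer-tfjob-worker-0"},
                "value": [1537425295.0, "87"],
            }
        ],
    },
}

for labels, value in parse_instant_vector(sample_response):
    print(labels.get("pod_name"), value)
```

Against a running cluster, `query("http://<prometheus-svc external endpoint>:9090", "<metric name>")` would return the same structure for every GPU the exporter reports.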