版权声明:本文为博主原创文章,未经博主允许不得转载。 https://blog.csdn.net/shaoyou223/article/details/79934180

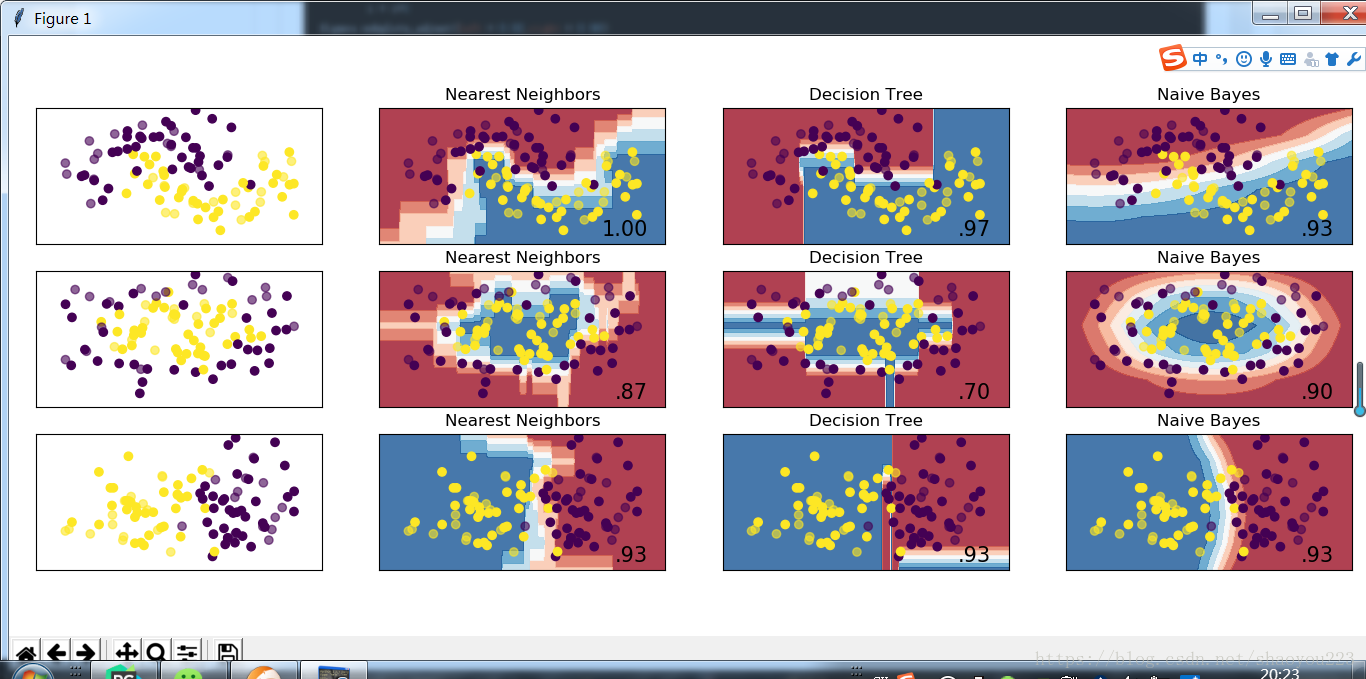

用三种分类方法,分别是k最近邻、决策树和朴素贝叶斯。画出数据点和决策边界,对比其区别。结果在最后的图中

import numpy as np from numpy import * import matplotlib.pyplot as plt from sklearn.naive_bayes import GaussianNB from sklearn import metrics from sklearn.preprocessing import StandardScaler #from sklearn.cross_validation import train_test_split from sklearn import datasets from matplotlib.colors import ListedColormap from sklearn.datasets import make_moons,make_circles,make_classification from sklearn.neighbors import KNeighborsClassifier from sklearn.tree import DecisionTreeClassifier h = 0.02 names = ['Nearest Neighbors','Decision Tree','Naive Bayes'] classifiers = [KNeighborsClassifier(3),DecisionTreeClassifier(max_depth=5),GaussianNB()] #生成三种形态的数据集 X,y = make_classification(n_features=2,n_informative=2,n_redundant=0,random_state=1,n_clusters_per_class=1) rng = np.random.RandomState(2) X += 2 * rng.uniform(size=X.shape) linearly_separable = (X,y) datasets = [make_moons(noise=0.3,random_state=0),make_circles(noise=0.2,factor=0.5,random_state=1),linearly_separable] figure = plt.figure(figsize= (18,6)) i = 1 for ds in datasets: #处理数据,数据标准化后分为测试集和训练集 测试集占30% X,y = ds X = StandardScaler().fit_transform(X) X_train = X[:int(X.shape[0]*0.7)] y_train = y[:int(X.shape[0]*0.7)] X_test = X[int(X.shape[0]*0.7):] y_test = y[int(X.shape[0]*0.7):] x_min,x_max = X[:,0].min()-0.5,X[:,0].max()+0.5 y_min, y_max = X[:, 1].min() - 0.5, X[:, 1].max() + 0.5 xx,yy = np.meshgrid(np.arange(x_min,x_max,h),np.arange(y_min,y_max)) #画出原始数据点 cm = plt.cm.RdBu cm_bright = ListedColormap(['# FF0000','# 0000FF']) ax = plt.subplot(len(datasets),len(classifiers)+1,i) #填充训练集中的点 ax.scatter(X_train[:,0],X_train[:,1],c = y_train) #填充测试集中的点 ax.scatter(X_test[:,0],X_test[:,1],c = y_test,alpha=0.6) #设置x轴和y轴的范围 ax.set_xlim(xx.min(),xx.max()) ax.set_ylim(yy.min(), yy.max()) #设置x轴和y轴的刻度 ax.set_xticks(()) ax.set_yticks(()) i += 1 #画出每个模型的决策点和决策边界 for name,clf in zip(names,classifiers): ax = plt.subplot(len(datasets),len(classifiers)+1,i) score = clf.fit(X_train,y_train).score(X_test,y_test) #画出决策边界,为此我们需要为网格中的每一个点预测一个颜色(类别) Z = clf.predict_proba(np.c_[xx.ravel(),yy.ravel()])[:,1] #把结果放进颜色图中 Z = Z.reshape(xx.shape) ax.contourf(xx,yy,Z,cmap = cm,alpha = 0.8) #填充训练集中的点 ax.scatter(X_train[:,0],X_train[:,1],c = y_train) #填充测试集中的点 ax.scatter(X_test[:,0],X_test[:,1],c = y_test,alpha = 0.6) ax.set_xlim(xx.min(), xx.max()) ax.set_ylim(yy.min(), yy.max()) # 设置x轴和y轴的刻度 ax.set_xticks(()) ax.set_yticks(()) ax.set_title(name) #在图的右下角标记模型分数 ax.text(xx.max()- 0.3,yy.min()+0.3,('%.2f'%score).lstrip('0'),size = 15,horizontalalignment = 'right') i = i+1 figure.subplots_adjust(left = 0.02,right = 0.98) plt.show()