How do you measure cloud disk performance? Storage device performance is generally described with the following metrics:

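IOPS: the number of read/write operations completed per second (a count). The type of drive underlying the storage device largely determines the IOPS it can reach.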

Throughput: the amount of data read or written per second, in MB/s.

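Latency: the time from issuing an I/O request to receiving its completion acknowledgment, measured in units of time (fio reports it in microseconds or milliseconds).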
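IOPS and throughput are linked through the block size: throughput = IOPS × block size. The minimal Python sketch below converts between the two; the function names are illustrative (not part of fio), and it assumes 1024-based units (KiB, MiB).

```python
def throughput_mib_s(iops: float, block_size_kib: float) -> float:
    """Throughput in MiB/s = IOPS x block size (KiB) / 1024."""
    return iops * block_size_kib / 1024


def iops_for_throughput(mib_s: float, block_size_kib: float) -> float:
    """Inverse relation: I/O operations per second needed for a given MiB/s."""
    return mib_s * 1024 / block_size_kib


if __name__ == "__main__":
    # Same IOPS, very different throughput: the block size decides.
    print(f"2000 IOPS x 4 KiB   = {throughput_mib_s(2000, 4):.2f} MiB/s")
    print(f"2000 IOPS x 512 KiB = {throughput_mib_s(2000, 512):.2f} MiB/s")
```

This is also why an IOPS figure says little unless the block size it was measured at is stated alongside it.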

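FIO is an excellent tool for benchmarking disks, used for stress-testing and validating hardware. The libaio I/O engine is recommended for testing; install fio and libaio yourself as shown below.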

Important notes:

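1. Do not run fio tests on the system disk, to avoid damaging important system files.

2. Run fio tests on an idle disk that holds no important data, and recreate the filesystem after testing. Do not test on disks that hold business data: damage to the underlying filesystem metadata can corrupt that data.

3. When testing disk performance, test the raw disk directly (e.g., vdb); when testing filesystem performance, point fio at a specific file (e.g., /data/file).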
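Before running a write test, it can help to confirm that no filesystem on the target disk is mounted. The sketch below is one hypothetical way to do that on Linux: it scans text in the /proc/mounts format and returns the mount points that live on the same disk as the target. It assumes vdX/sdX-style device names (it simply strips a trailing partition number), so NVMe names, LVM volumes, and swap are not covered; the helper names and the /dev/vdb1 target are illustrative.

```python
import re
from pathlib import Path


def _base_disk(dev: str) -> str:
    """Strip a trailing partition number: /dev/vdb1 -> /dev/vdb.

    Assumption: vdX/sdX-style names; /dev/nvme0n1p1 is NOT handled correctly.
    """
    return re.sub(r"\d+$", "", dev)


def mounted_on_same_disk(target: str, mounts_text: str) -> list[str]:
    """Return mount points (from /proc/mounts-style text) on the target's disk."""
    disk = _base_disk(target)
    hits = []
    for line in mounts_text.splitlines():
        fields = line.split()
        # Skip pseudo filesystems such as proc, sysfs, tmpfs.
        if len(fields) < 2 or not fields[0].startswith("/dev/"):
            continue
        if _base_disk(fields[0]) == disk:
            hits.append(fields[1])
    return hits


if __name__ == "__main__":
    target = "/dev/vdb1"  # hypothetical device under test
    proc = Path("/proc/mounts")
    if proc.exists():
        busy = mounted_on_same_disk(target, proc.read_text())
        print(f"{target}: mounted at {busy}" if busy else f"{target}: nothing mounted on this disk")
```

An empty result only means nothing is mounted from that disk right now; it does not prove the disk holds no data.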

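Install libaio and fio (the commands below build fio 2.1.7 from source on Ubuntu/Debian; most distributions also ship a packaged fio, e.g. apt install -y fio)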

apt install -y libaio-dev

wget http://brick.kernel.dk/snaps/fio-2.1.7.tar.gz

tar -xzf fio-2.1.7.tar.gz

cd fio-2.1.7

./configure

make

make install

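The test commands for different scenarios are essentially the same; only three parameters differ: the read/write mode (rw), iodepth, and block size (bs). The example below tests sequential read performance with a block size of 4k and an iodepth of 1.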

4k sequential read test

The command is:

fio --bs=4k --ioengine=libaio --iodepth=1 --direct=1 --rw=read --time_based --runtime=600 --refill_buffers --norandommap --randrepeat=0 --group_reporting --name=fio-read --size=50G --filename=/dev/vdb1
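Because only the read/write mode, iodepth, and block size change between scenarios, the command can be generated from those three values. The Python sketch below builds the argument list; the helper name fio_command and its defaults (device /dev/vdb1, 600 s, 50G) are illustrative assumptions chosen to mirror the command above.

```python
import shlex


def fio_command(rw: str, iodepth: int, bs: str,
                filename: str = "/dev/vdb1", runtime: int = 600) -> list[str]:
    """Build the fio invocation above; only rw, iodepth and bs vary."""
    return [
        "fio",
        f"--bs={bs}",
        "--ioengine=libaio",
        f"--iodepth={iodepth}",
        "--direct=1",
        f"--rw={rw}",
        "--time_based",
        f"--runtime={runtime}",
        "--refill_buffers",
        "--norandommap",
        "--randrepeat=0",
        "--group_reporting",
        f"--name=fio-{rw}",
        "--size=50G",
        f"--filename={filename}",
    ]


if __name__ == "__main__":
    # Print a few variants as shell-ready strings instead of running them.
    for rw, depth, bs in [("read", 1, "4k"), ("randread", 32, "4k"), ("read", 32, "128k")]:
        print(shlex.join(fio_command(rw, depth, bs)))
```

fio_command("read", 1, "4k") reproduces the command above exactly; passing a generated list to subprocess.run(..., check=True) would actually start the test, so only do that against a disk you are allowed to overwrite.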

The best iodepth differs from workload to workload and depends on how sensitive your particular application is to IOPS and latency.

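Parameter descriptions:

bs: block size of each I/O request (4k here).

ioengine: the I/O engine; libaio is Linux native asynchronous I/O.

iodepth: the number of I/O requests each job keeps in flight (queue depth).

direct: direct=1 uses non-buffered I/O (O_DIRECT), bypassing the page cache.

rw: the I/O pattern, e.g. read (sequential read), write (sequential write), randread (random read), randwrite (random write).

time_based: keep running for the full runtime, looping over the workload if the specified size is completed early.

runtime: test duration in seconds (600 here).

refill_buffers: refill the I/O buffers on every submit.

norandommap: for random I/O, do not track which blocks have already been accessed.

randrepeat: randrepeat=0 does not seed the random generator predictably, so the random pattern is not repeated across runs.

group_reporting: report aggregated results for all jobs in a group instead of per job.

name: the job name.

size: the total amount of I/O for each job (50G here).

filename: the device or file to test.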

Common use cases:

bs=4k, iodepth=1: random read test, reflects the disk's latency;

bs=128k, iodepth=32: reflects peak throughput;

bs=4k, iodepth=32: reflects peak IOPS.
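Why these combinations isolate different limits can be illustrated with a toy model: with a fixed per-I/O latency, Little's law caps IOPS at iodepth / latency, and a cloud disk additionally enforces IOPS and bandwidth limits. The sketch below takes the smallest of those three ceilings. The disk figures (0.5 ms, 20000 IOPS, 260 MiB/s) are hypothetical, and the model assumes latency stays constant as the queue grows, which real disks do not guarantee.

```python
def predicted_iops(bs_kib: float, iodepth: int, latency_ms: float,
                   iops_cap: float, bw_cap_mib: float) -> float:
    """Toy model: achieved IOPS is the smallest of three ceilings.

    - queue ceiling (Little's law): iodepth / latency
    - provisioned IOPS cap
    - provisioned bandwidth cap, expressed in I/Os per second
    """
    queue_limit = iodepth / (latency_ms / 1000)
    bw_limit = bw_cap_mib * 1024 / bs_kib
    return min(queue_limit, iops_cap, bw_limit)


if __name__ == "__main__":
    # Hypothetical disk: 0.5 ms per I/O, capped at 20000 IOPS and 260 MiB/s.
    disk = dict(latency_ms=0.5, iops_cap=20000, bw_cap_mib=260)
    for bs, depth in [(4, 1), (128, 32), (4, 32)]:
        iops = predicted_iops(bs, depth, **disk)
        print(f"bs={bs}k iodepth={depth}: {iops:.0f} IOPS, {iops * bs / 1024:.1f} MiB/s")
```

With these numbers, 4k/iodepth=1 is latency-bound (1 / 0.5 ms = 2000 IOPS), 128k/iodepth=32 stops at the bandwidth cap, and 4k/iodepth=32 stops at the IOPS cap.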

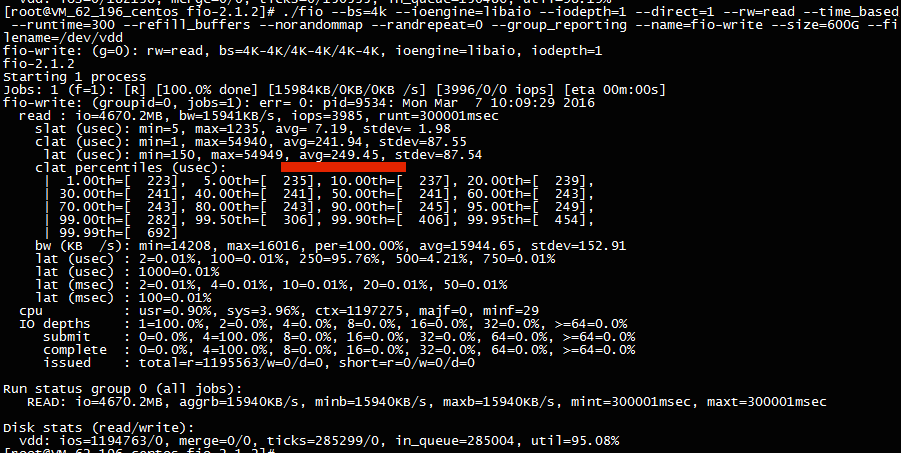

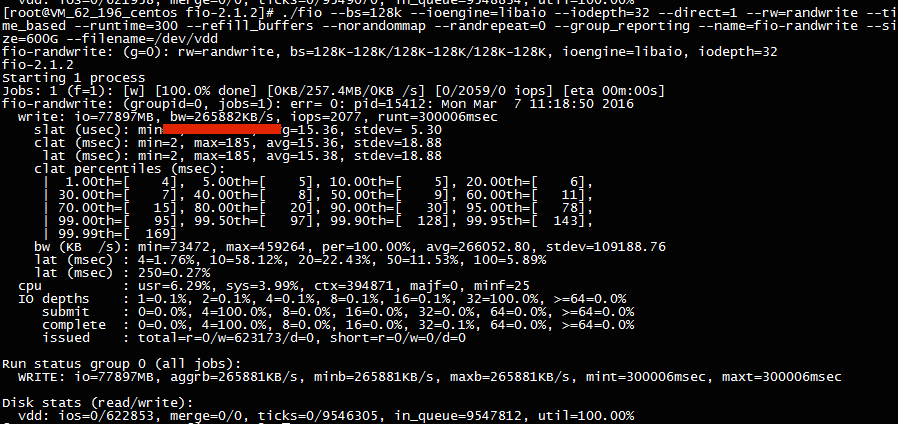

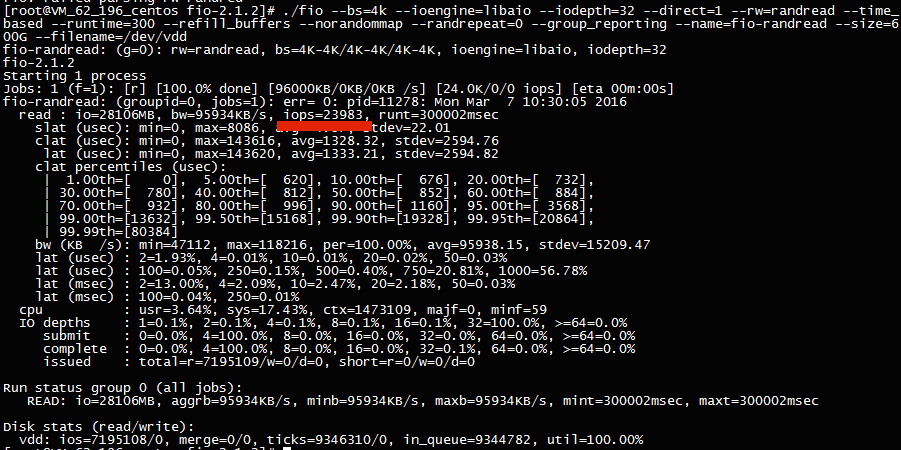

Screenshot of performance test results for an SSD cloud disk:


———————————————————————————

Another, simpler test method

4k random read/write IOPS test and 512k sequential read/write throughput test

This method uses a job file; fio.conf here is a script written by hand:

root@VM-0-12-ubuntu:/home/ubuntu# cat fio.conf

[global]

ioengine=libaio

iodepth=128

time_based

direct=1

thread=1

group_reporting

randrepeat=0

norandommap

numjobs=32

runtime=120

# 4K random read, IOPS

[randread-4k]

rw=randread

bs=4k

# Note: /dev/vdb1 is a partition on the target test disk (vdb); replace it with your own device

filename=/dev/vdb1

rwmixread=100

stonewall

# 4K random write, IOPS

[randwrite-4k]

rw=randwrite

bs=4k

filename=/dev/vdb1

stonewall

# 512K sequential read, throughput

[read-512k]

rw=read

bs=512k

filename=/dev/vdb1

stonewall

# 512K sequential write, throughput

[write-512k]

rw=write

bs=512k

filename=/dev/vdb1

stonewall
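The job file can also be generated from a script, which makes it easier to swap the device or change the list of tests. The sketch below uses Python's configparser (allow_no_value=True lets it write bare flags such as time_based) and reproduces the job file above without the timeout and rwmixread lines; the device path is a placeholder to replace with your own.

```python
import configparser
import io

# [global] options copied from fio.conf above; None marks a bare flag.
GLOBAL_OPTS = {
    "ioengine": "libaio",
    "iodepth": "128",
    "time_based": None,
    "direct": "1",
    "thread": "1",
    "group_reporting": None,
    "randrepeat": "0",
    "norandommap": None,
    "numjobs": "32",
    "runtime": "120",
}

# (job name, rw mode, block size) for the four tests, run one after another.
JOBS = [
    ("randread-4k", "randread", "4k"),
    ("randwrite-4k", "randwrite", "4k"),
    ("read-512k", "read", "512k"),
    ("write-512k", "write", "512k"),
]


def build_job_file(device: str = "/dev/vdb1") -> str:
    """Render an fio job file equivalent to fio.conf for the given device."""
    cfg = configparser.ConfigParser(allow_no_value=True)
    cfg["global"] = GLOBAL_OPTS
    for name, rw, bs in JOBS:
        # stonewall: wait for the previous job to finish before starting this one
        cfg[name] = {"rw": rw, "bs": bs, "filename": device, "stonewall": None}
    buf = io.StringIO()
    cfg.write(buf, space_around_delimiters=False)  # key=value, like the original
    return buf.getvalue()


if __name__ == "__main__":
    print(build_job_file(), end="")
```

Writing the result to fio.conf and running fio fio.conf should behave like the hand-written file.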

Run the command:

root@VM-0-12-ubuntu:/home/ubuntu# fio fio.conf

The results are as follows:

randread-4k: (g=0): rw=randread, bs=4K-4K/4K-4K/4K-4K, ioengine=libaio, iodepth=128

...

randwrite-4k: (g=1): rw=randwrite, bs=4K-4K/4K-4K/4K-4K, ioengine=libaio, iodepth=128

...

read-512k: (g=2): rw=read, bs=512K-512K/512K-512K/512K-512K, ioengine=libaio, iodepth=128

...

write-512k: (g=3): rw=write, bs=512K-512K/512K-512K/512K-512K, ioengine=libaio, iodepth=128

...

fio-2.1.7

Starting 128 threads

randread-4k: (groupid=0, jobs=32): err= 0: pid=24060: Tue Apr 17 12:34:20 2018

read : io=960768KB, bw=8002.9KB/s, iops=2000, runt=120053msec

slat (usec): min=1, max=875635, avg=15984.07, stdev=68311.64

clat (msec): min=5, max=5043, avg=2019.68, stdev=397.04

lat (msec): min=40, max=5111, avg=2035.66, stdev=398.82

clat percentiles (msec):

| 1.00th=[ 1045], 5.00th=[ 1270], 10.00th=[ 1434], 20.00th=[ 1860],

| 30.00th=[ 1942], 40.00th=[ 1975], 50.00th=[ 2008], 60.00th=[ 2040],

| 70.00th=[ 2089], 80.00th=[ 2180], 90.00th=[ 2638], 95.00th=[ 2769],

| 99.00th=[ 2933], 99.50th=[ 2966], 99.90th=[ 3261], 99.95th=[ 4015],

| 99.99th=[ 5014]

bw (KB /s): min= 0, max= 894, per=3.14%, avg=251.18, stdev=128.48

lat (msec) : 10=0.01%, 20=0.01%, 50=0.02%, 100=0.01%, 250=0.01%

lat (msec) : 500=0.16%, 750=0.32%, 1000=0.32%, 2000=45.22%, >=2000=53.95%

cpu : usr=0.02%, sys=0.09%, ctx=209159, majf=0, minf=3128

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.2%, 32=0.4%, >=64=99.2%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.1%

issued : total=r=240192/w=0/d=0, short=r=0/w=0/d=0

latency : target=0, window=0, percentile=100.00%, depth=128

randwrite-4k: (groupid=1, jobs=32): err= 0: pid=24182: Tue Apr 17 12:34:20 2018

write: io=960800KB, bw=8002.2KB/s, iops=2000, runt=120068msec

slat (usec): min=2, max=884998, avg=15984.48, stdev=66543.07

clat (msec): min=4, max=7981, avg=2017.39, stdev=385.47

lat (msec): min=27, max=7981, avg=2033.37, stdev=387.03

clat percentiles (msec):

| 1.00th=[ 1057], 5.00th=[ 1319], 10.00th=[ 1467], 20.00th=[ 1860],

| 30.00th=[ 1926], 40.00th=[ 1975], 50.00th=[ 2008], 60.00th=[ 2057],

| 70.00th=[ 2114], 80.00th=[ 2180], 90.00th=[ 2606], 95.00th=[ 2704],

| 99.00th=[ 2868], 99.50th=[ 2933], 99.90th=[ 3228], 99.95th=[ 5473],

| 99.99th=[ 7111]

bw (KB /s): min= 0, max= 878, per=3.14%, avg=251.08, stdev=154.28

lat (msec) : 10=0.01%, 50=0.02%, 100=0.03%, 250=0.01%, 500=0.01%

lat (msec) : 750=0.42%, 1000=0.37%, 2000=46.44%, >=2000=52.72%

cpu : usr=0.02%, sys=0.10%, ctx=170362, majf=0, minf=32

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.2%, 32=0.4%, >=64=99.2%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.1%

issued : total=r=0/w=240200/d=0, short=r=0/w=0/d=0

latency : target=0, window=0, percentile=100.00%, depth=128

read-512k: (groupid=2, jobs=32): err= 0: pid=24304: Tue Apr 17 12:34:20 2018

read : io=15682MB, bw=133566KB/s, iops=260, runt=120224msec

slat (usec): min=31, max=2782.5K, avg=122435.74, stdev=251753.79

clat (msec): min=35, max=24997, avg=14629.26, stdev=3582.09

lat (msec): min=140, max=25077, avg=14751.70, stdev=3588.71

clat percentiles (msec):

| 1.00th=[ 1844], 5.00th=[ 6128], 10.00th=[10945], 20.00th=[13042],

| 30.00th=[14091], 40.00th=[14877], 50.00th=[15008], 60.00th=[15926],

| 70.00th=[16057], 80.00th=[16712], 90.00th=[16712], 95.00th=[16712],

| 99.00th=[16712], 99.50th=[16712], 99.90th=[16712], 99.95th=[16712],

| 99.99th=[16712]

bw (KB /s): min= 24, max=14278, per=3.11%, avg=4154.73, stdev=1645.11

lat (msec) : 50=0.01%, 100=0.01%, 250=0.09%, 1000=0.50%, 2000=0.82%

lat (msec) : >=2000=98.58%

cpu : usr=0.00%, sys=0.08%, ctx=27953, majf=0, minf=16899

IO depths : 1=0.1%, 2=0.2%, 4=0.4%, 8=0.8%, 16=1.6%, 32=3.3%, >=64=93.6%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=99.9%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.1%

issued : total=r=31363/w=0/d=0, short=r=0/w=0/d=0

latency : target=0, window=0, percentile=100.00%, depth=128

write-512k: (groupid=3, jobs=32): err= 0: pid=24426: Tue Apr 17 12:34:20 2018

write: io=16117MB, bw=137117KB/s, iops=267, runt=120359msec

slat (usec): min=39, max=3744.3K, avg=118989.73, stdev=211850.72

clat (msec): min=44, max=23277, avg=14163.52, stdev=3673.67

lat (msec): min=44, max=23978, avg=14282.51, stdev=3684.25

clat percentiles (msec):

| 1.00th=[ 1074], 5.00th=[ 5276], 10.00th=[10028], 20.00th=[12256],

| 30.00th=[13435], 40.00th=[14222], 50.00th=[15008], 60.00th=[15664],

| 70.00th=[16057], 80.00th=[16712], 90.00th=[16712], 95.00th=[16712],

| 99.00th=[16712], 99.50th=[16712], 99.90th=[16712], 99.95th=[16712],

| 99.99th=[16712]

bw (KB /s): min= 22, max=42965, per=3.21%, avg=4395.78, stdev=2482.81

lat (msec) : 50=0.02%, 250=0.19%, 500=0.20%, 750=0.06%, 1000=0.44%

lat (msec) : 2000=0.88%, >=2000=98.20%

cpu : usr=0.04%, sys=0.06%, ctx=29046, majf=0, minf=32

IO depths : 1=0.1%, 2=0.2%, 4=0.4%, 8=0.8%, 16=1.6%, 32=3.2%, >=64=93.7%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=99.9%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.1%

issued : total=r=0/w=32233/d=0, short=r=0/w=0/d=0

latency : target=0, window=0, percentile=100.00%, depth=128

Run status group 0 (all jobs):

READ: io=960768KB, aggrb=8002KB/s, minb=8002KB/s, maxb=8002KB/s, mint=120053msec, maxt=120053msec

Run status group 1 (all jobs):

WRITE: io=960800KB, aggrb=8002KB/s, minb=8002KB/s, maxb=8002KB/s, mint=120068msec, maxt=120068msec

Run status group 2 (all jobs):

READ: io=15682MB, aggrb=133566KB/s, minb=133566KB/s, maxb=133566KB/s, mint=120224msec, maxt=120224msec

Run status group 3 (all jobs):

WRITE: io=16117MB, aggrb=137117KB/s, minb=137117KB/s, maxb=137117KB/s, mint=120359msec, maxt=120359msec

Disk stats (read/write):

vdb: ios=271670/273456, merge=0/1084, ticks=30026240/30626672, in_queue=62613216, util=100.00%
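The figures in this output can be cross-checked with two relations: bandwidth ≈ IOPS × block size, and, by Little's law, average latency ≈ outstanding I/Os / IOPS, where outstanding I/Os = numjobs × iodepth = 32 × 128 = 4096. The sketch below parses the per-job summary lines copied from the output above (the regex assumes the fio 2.x "bw=...KB/s, iops=..." format; newer fio versions print differently) and compares both. The check suggests that the roughly 2-second average latency of the 4k jobs comes from queueing 4096 requests against a disk completing about 2000 per second, not from slow individual I/Os; the identical 2000 IOPS for reads and writes is also consistent with a provisioned IOPS limit. The 512k jobs deviate by about 7%, plausibly because the queue was not always full (the IO depths line shows >=64 for about 93.6% of the time).

```python
import re

# Per-job summary lines copied from the fio 2.1.7 output above
# (order: randread-4k, randwrite-4k, read-512k, write-512k).
FIO_LINES = """\
read : io=960768KB, bw=8002.9KB/s, iops=2000, runt=120053msec
write: io=960800KB, bw=8002.2KB/s, iops=2000, runt=120068msec
read : io=15682MB, bw=133566KB/s, iops=260, runt=120224msec
write: io=16117MB, bw=137117KB/s, iops=267, runt=120359msec
"""
AVG_LAT_MS = [2035.66, 2033.37, 14751.70, 14282.51]  # "lat (msec) ... avg=" values
BS_KIB = [4, 4, 512, 512]                             # bs of each job
OUTSTANDING = 32 * 128                                # numjobs x iodepth

PATTERN = re.compile(r"bw=([\d.]+)KB/s, iops=(\d+)")


def check() -> list[tuple[float, float, float, float]]:
    """Return (reported bw, IOPS x bs, Little's-law latency ms, reported latency ms)."""
    rows = []
    for line, bs, lat_ms in zip(FIO_LINES.splitlines(), BS_KIB, AVG_LAT_MS):
        m = PATTERN.search(line)
        if m is None:
            raise ValueError(f"unexpected line: {line!r}")
        bw_kib, iops = float(m.group(1)), int(m.group(2))
        rows.append((bw_kib, iops * bs, OUTSTANDING / iops * 1000, lat_ms))
    return rows


if __name__ == "__main__":
    for bw, est_bw, est_lat, lat in check():
        print(f"bw {bw:.0f} vs IOPS*bs {est_bw:.0f} KB/s | "
              f"latency {lat:.0f} vs Little's law {est_lat:.0f} ms")
```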