Copyright notice: This is an original article by the blogger and may not be reposted without the blogger's permission. https://blog.csdn.net/qq_40795214/article/details/81989719

Here is a look at a pitfall I ran into over the past few days while playing with web crawlers.

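I'm a beginner, so I have been reading a book and working through other people's examples at the same time. I planned to follow someone else's example to get a first small result, but instead I sank into a swamp of 403 errors and needed a whole afternoon to pull myself out. I am using the Scrapy framework, and the full output was: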

[root@Uu tutorial]# scrapy crawl dmoz -o torrents.jl

2018-08-23 22:49:26 [scrapy.utils.log] INFO: Scrapy 1.5.1 started (bot: tutorial)

2018-08-23 22:49:26 [scrapy.utils.log] INFO: Versions: lxml 3.2.1.0, libxml2 2.9.1, cssselect 1.0.3, parsel 1.5.0, w3lib 1.19.0, Twisted 18.7.0, Python 2.7.5 (default, Jul 13 2018, 13:06:57) - [GCC 4.8.5 20150623 (Red Hat 4.8.5-28)], pyOpenSSL 18.0.0 (OpenSSL 1.1.0i 14 Aug 2018), cryptography 2.3.1, Platform Linux-3.10.0-693.el7.x86_64-x86_64-with-centos-7.4.1708-Core

2018-08-23 22:49:26 [scrapy.crawler] INFO: Overridden settings: {'NEWSPIDER_MODULE': 'tutorial.spiders', 'FEED_URI': 'torrents.jl', 'CONCURRENT_REQUESTS': 1, 'SPIDER_MODULES': ['tutorial.spiders'], 'BOT_NAME': 'tutorial', 'COOKIES_ENABLED': False, 'USER_AGENT': 'Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; AcooBrowser; .NET CLR 1.1.4322; .NET CLR 2.0.50727)', 'FEED_FORMAT': 'jl', 'DOWNLOAD_DELAY': 5}

2018-08-23 22:49:26 [scrapy.middleware] INFO: Enabled extensions:

['scrapy.extensions.feedexport.FeedExporter',

'scrapy.extensions.memusage.MemoryUsage',

'scrapy.extensions.logstats.LogStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.corestats.CoreStats']

2018-08-23 22:49:26 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'scrapy.downloadermiddlewares.retry.RetryMiddleware',

'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',

'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',

'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',

'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware',

'scrapy.downloadermiddlewares.stats.DownloaderStats']

2018-08-23 22:49:26 [scrapy.middleware] INFO: Enabled spider middlewares:

['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware',

'scrapy.spidermiddlewares.offsite.OffsiteMiddleware',

'scrapy.spidermiddlewares.referer.RefererMiddleware',

'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware',

'scrapy.spidermiddlewares.depth.DepthMiddleware']

2018-08-23 22:49:26 [scrapy.middleware] INFO: Enabled item pipelines:

[]

2018-08-23 22:49:26 [scrapy.core.engine] INFO: Spider opened

2018-08-23 22:49:26 [scrapy.extensions.logstats] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)

2018-08-23 22:49:26 [scrapy.extensions.telnet] DEBUG: Telnet console listening on 127.0.0.1:6023

2018-08-23 22:49:27 [scrapy.core.engine] DEBUG: Crawled (403) <GET http://www.dmoz.org/Computers/Programming/Languages/Python/Books/> (referer: None)

2018-08-23 22:49:27 [scrapy.spidermiddlewares.httperror] INFO: Ignoring response <403 http://www.dmoz.org/Computers/Programming/Languages/Python/Books/>: HTTP status code is not handled or not allowed

2018-08-23 22:49:33 [scrapy.core.engine] DEBUG: Crawled (403) <GET http://www.dmoz.org/Computers/Programming/Languages/Python/Resources/> (referer: None)

2018-08-23 22:49:33 [scrapy.spidermiddlewares.httperror] INFO: Ignoring response <403 http://www.dmoz.org/Computers/Programming/Languages/Python/Resources/>: HTTP status code is not handled or not allowed

2018-08-23 22:49:33 [scrapy.core.engine] INFO: Closing spider (finished)

2018-08-23 22:49:33 [scrapy.statscollectors] INFO: Dumping Scrapy stats:

{'downloader/request_bytes': 662,

'downloader/request_count': 2,

'downloader/request_method_count/GET': 2,

'downloader/response_bytes': 1402,

'downloader/response_count': 2,

'downloader/response_status_count/403': 2,

'finish_reason': 'finished',

'finish_time': datetime.datetime(2018, 8, 23, 14, 49, 33, 717814),

'httperror/response_ignored_count': 2,

'httperror/response_ignored_status_count/403': 2,

'log_count/DEBUG': 3,

'log_count/INFO': 9,

'memusage/max': 42958848,

'memusage/startup': 42958848,

'response_received_count': 2,

'scheduler/dequeued': 2,

'scheduler/dequeued/memory': 2,

'scheduler/enqueued': 2,

'scheduler/enqueued/memory': 2,

'start_time': datetime.datetime(2018, 8, 23, 14, 49, 26, 612313)}

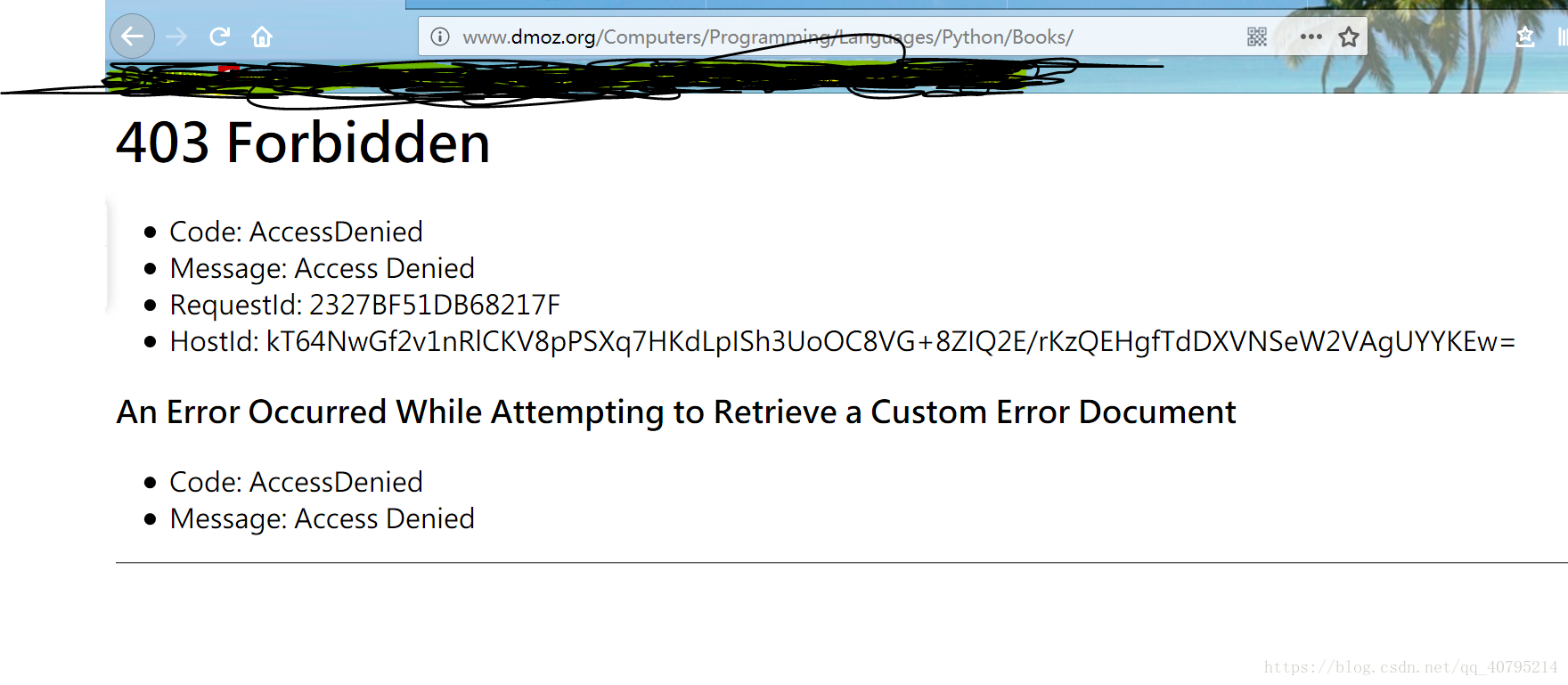

2018-08-23 22:49:33 [scrapy.core.engine] INFO: Spider closed (finished)开始以为是settings.py的问题,改来改去,发现都不是,又改了item,还不是,最后把要爬取的url输入浏览器,发现地址失效,如下所示:

So the fix is simply to find a different address to crawl; the original address is no longer available.
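The lesson is to check the start URL before touching any configuration. Below is a minimal sketch of that check using only the Python 3 standard library, so it is separate from Scrapy (the log above was produced on Python 2.7, where the module names differ). The function names `fetch_status` and `diagnose`, the `Mozilla/5.0` user agent, and the wording of the hints are my own assumptions for illustration, not part of the original project.

```python
import urllib.error
import urllib.request


def fetch_status(url, user_agent="Mozilla/5.0", timeout=10):
    """Return the HTTP status code for url, or None if no response arrives."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # 4xx and 5xx responses are raised as HTTPError; the code is on the exception.
        return error.code
    except (urllib.error.URLError, OSError):
        # DNS failure, refused connection, timeout and similar network errors.
        return None


def diagnose(status):
    """Turn a status code into a short, hypothetical hint about where to look."""
    if status is None:
        return "unreachable: check the host name and your network"
    if 200 <= status < 300:
        return "ok"
    if status in (403, 404, 410):
        return "rejected or gone: open the URL in a browser before touching settings.py"
    return "unexpected status {}: inspect the response manually".format(status)
```

Calling `diagnose(fetch_status(url))` on each start URL of the spider takes a few seconds and would probably have pointed me at the dead address long before I started rewriting settings.py. A 403 can also mean the site is rejecting the crawler specifically, so opening the URL in a browser, as I eventually did, is still the deciding check.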