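This series walks through the whole Camera flow, from the app layer down to the HAL. This first article covers how CameraService starts up. Later articles cover the open, preview and takepicture flows.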

Before Android 7.0, CameraService was registered in the mediaserver process. Here is the Android 6.0 code:

//path: frameworks\av\media\mediaserver\main_mediaserver.cpp

int main()

{

sp<ProcessState> proc(ProcessState::self());

sp<IServiceManager> sm = defaultServiceManager();

ALOGI("ServiceManager: %p", sm.get());

AudioFlinger::instantiate();

MediaPlayerService::instantiate();

ResourceManagerService::instantiate();

// Initialize the camera service

CameraService::instantiate();

AudioPolicyService::instantiate();

SoundTriggerHwService::instantiate();

RadioService::instantiate();

registerExtensions();

ProcessState::self()->startThreadPool();

IPCThreadState::self()->joinThreadPool();

}

Now look at main_mediaserver.cpp in Android 7.0. The CameraService::instantiate() call is gone, which means that from Android 7.0 on, CameraService is no longer registered there:

// CameraService::instantiate() is gone, and so are a few other services; here we only care about CameraService

int main(int argc __unused, char **argv __unused)

{

signal(SIGPIPE, SIG_IGN);

sp<ProcessState> proc(ProcessState::self());

sp<IServiceManager> sm(defaultServiceManager());

ALOGI("ServiceManager: %p", sm.get());

InitializeIcuOrDie();

MediaPlayerService::instantiate();

ResourceManagerService::instantiate();

registerExtensions();

ProcessState::self()->startThreadPool();

IPCThreadState::self()->joinThreadPool();

}

The framework-layer Camera code (frameworks\av\camera) now contains a cameraserver folder. The main_cameraserver.cpp file in that folder is where CameraService::instantiate() now lives:

int main(int argc __unused, char** argv __unused)

{

signal(SIGPIPE, SIG_IGN);

sp<ProcessState> proc(ProcessState::self());

sp<IServiceManager> sm = defaultServiceManager();

ALOGI("ServiceManager: %p", sm.get());

// Register the CameraService service

CameraService::instantiate();

ProcessState::self()->startThreadPool();

IPCThreadState::self()->joinThreadPool();

}

This suggests that cameraserver runs as a separate process. Let's look at the other file in the cameraserver folder, cameraserver.rc:

# init starts a service named cameraserver from /system/bin/cameraserver, so it really is a separate process

service cameraserver /system/bin/cameraserver

# class: all services in the same class (here, main) are started and stopped together

class main

# user and groups the process runs as

user cameraserver

group audio camera input drmrpc

ioprio rt 4

writepid /dev/cpuset/camera-daemon/tasks /dev/stune/top-app/tasks

Android.mk installs cameraserver.rc to its target location:

LOCAL_PATH:= $(call my-dir)

include $(CLEAR_VARS)

# source files

LOCAL_SRC_FILES:= \

main_cameraserver.cpp

LOCAL_SHARED_LIBRARIES := \

libcameraservice \

libcutils \

libutils \

libbinder \

libcamera_client

# module name

LOCAL_MODULE:= cameraserver

LOCAL_32_BIT_ONLY := true

LOCAL_CFLAGS += -Wall -Wextra -Werror -Wno-unused-parameter

# LOCAL_INIT_RC installs cameraserver.rc into /system/etc/init/; init parses and runs the scripts in that directory

LOCAL_INIT_RC := cameraserver.rc

include $(BUILD_EXECUTABLE)

So when does cameraserver.rc get executed? To answer that, we need to understand how Android boots. Booting has two major parts: Linux kernel startup and Android framework startup.

1. Linux kernel startup:

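- Bootloader

At power-on the bootloader runs first and loads the kernel. The kernel then creates the kthreadd kernel process, which is the parent of all kernel threads.

- Loading the Linux kernel

The kernel initializes drivers, mounts the root file system, and so on. Finally it starts the first user-space process, init, which is the ancestor of all user-space processes. The Android framework startup phase begins here.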

2. Android framework startup

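After init starts, it loads the init.rc script (system\core\rootdir\init.rc). When init executes the mount_all command to mount partitions, it also loads every rc script under /{system,vendor,odm}/etc/init, and this is what starts the cameraserver process. init also starts other processes, including zygote (the first Java-layer process and the parent of all Java processes), servicemanager, mediaserver (the multimedia service process) and surfaceflinger (the display compositing process). zygote then forks the system_server process. system_server starts every service in the Android framework, such as ActivityManagerService and WindowManagerService. We won't go into that here.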
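To make the rc syntax above more concrete, here is a minimal sketch of how a `service` section such as cameraserver.rc might be read into a struct. It is a simplified, hypothetical parser: `ServiceDef` and `parseServices` are made-up names, and this is not init's real parser. The real one handles many more options, section types and quoting rules.

```cpp
#include <cassert>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical, heavily simplified model of one init "service" section.
struct ServiceDef {
    std::string name;
    std::string path;
    std::string klass;                // value of the "class" option
    std::string user;                 // value of the "user" option
    std::vector<std::string> groups;  // values of the "group" option
};

// Reads rc text and returns every service section found. Blank lines and
// '#' comments are skipped; options this sketch does not know are ignored.
std::vector<ServiceDef> parseServices(const std::string& rc) {
    std::vector<ServiceDef> services;
    bool inService = false;  // are we inside a "service" section?
    std::istringstream lines(rc);
    std::string line;
    while (std::getline(lines, line)) {
        std::istringstream words(line);
        std::string keyword;
        if (!(words >> keyword) || keyword[0] == '#') continue;
        if (keyword == "service") {
            ServiceDef def;
            words >> def.name >> def.path;
            services.push_back(def);
            inService = true;
        } else if (keyword == "on" || keyword == "import") {
            inService = false;  // a non-service section starts
        } else if (inService) {
            ServiceDef& cur = services.back();
            if (keyword == "class") {
                words >> cur.klass;
            } else if (keyword == "user") {
                words >> cur.user;
            } else if (keyword == "group") {
                std::string g;
                while (words >> g) cur.groups.push_back(g);
            }
        }
    }
    return services;
}
```

Once the class is recorded, init's `class_start main` command can start every service whose class is main. That is how cameraserver comes up together with the other main-class services.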

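Why did CameraService run inside mediaserver before Android 7.0, and why was it split out into its own cameraserver process starting with Android 7.0? The reasons are security and robustness. mediaserver hosted many other services, such as AudioFlinger and MediaPlayerService. If any one of them crashed, the whole mediaserver process restarted, and a camera in use went down with it, which made for a poor user experience. As a separate process, cameraserver is isolated from such crashes, and it can be given only the permissions it needs.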

Now that we know how the cameraserver process gets launched, let's look at what it does at startup. Its entry point is the main function in frameworks\av\camera\cameraserver\main_cameraserver.cpp:

int main(int argc __unused, char** argv __unused)

{

signal(SIGPIPE, SIG_IGN);

// Get the ProcessState, which talks to the Binder driver

sp<ProcessState> proc(ProcessState::self());

// Get the ServiceManager, used to register this service

sp<IServiceManager> sm = defaultServiceManager();

ALOGI("ServiceManager: %p", sm.get());

CameraService::instantiate();

// Start the Binder thread pool and join it

ProcessState::self()->startThreadPool();

IPCThreadState::self()->joinThreadPool();

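}

Our focus is CameraService::instantiate(). The other lines belong to the Binder mechanism, and I assume you are already familiar with it. If you are not, read up on Binder first, because the rest of this article will be hard to follow without it.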

CameraService inherits from BinderService and BnCameraService. CameraService::instantiate() is a method inherited from the parent class BinderService:

class CameraService :

public BinderService<CameraService>,

public ::android::hardware::BnCameraService,

public IBinder::DeathRecipient,

public camera_module_callbacks_t

{

......

}

// Class template

template<typename SERVICE>

class BinderService

{

public:

static status_t publish(bool allowIsolated = false) {

sp<IServiceManager> sm(defaultServiceManager());

return sm->addService(

String16(SERVICE::getServiceName()),

new SERVICE(), allowIsolated);

}

static void publishAndJoinThreadPool(bool allowIsolated = false) {

publish(allowIsolated);

joinThreadPool();

}

static void instantiate() { publish(); }

......

}

BinderService is a class template. When it is invoked as CameraService::instantiate(), SERVICE is CameraService. After the template parameter is substituted, CameraService::instantiate() is equivalent to:

static void instantiate() { publish(); }

static status_t publish() {

sp<IServiceManager> sm(defaultServiceManager());

// getServiceName() returns the string "media.camera"

return sm->addService(

String16(CameraService::getServiceName()), new CameraService(), false);

}

defaultServiceManager() returns a BpServiceManager. Here is its addService function:

virtual status_t addService(const String16& name, const sp<IBinder>& service,

bool allowIsolated)

{

Parcel data, reply;

// Write the interface name, here "android.os.IServiceManager"

data.writeInterfaceToken(IServiceManager::getInterfaceDescriptor());

// Write the service name

data.writeString16(name);

// Write the service instance

data.writeStrongBinder(service);

data.writeInt32(allowIsolated ? 1 : 0);

// remote() is actually BpBinder(0)

status_t err = remote()->transact(ADD_SERVICE_TRANSACTION, data, &reply);

return err == NO_ERROR ? reply.readExceptionCode() : err;

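}

Note that the second parameter of addService, service, is an sp<IBinder>. When an sp is constructed and takes the first strong reference to an object, it calls that object's onFirstRef() function. If this is unfamiliar, see the article "Android系统的智能指针(轻量级指针、强指针和弱指针)的实现原理分析", which explains how Android's light, strong and weak smart pointers are implemented.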
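Two mechanisms have been described so far. BinderService::publish() creates `new SERVICE()`, and the first strong reference to that object triggers onFirstRef(). Both can be sketched without Binder. Everything below is hypothetical: `RefBaseSketch`, `SpSketch`, `BinderServiceSketch`, `FakeCameraService` and `serviceRegistry` are made-up names. A std::map stands in for ServiceManager, and a plain counter stands in for RefBase's reference counting. In Android that counting is atomic and also tracks weak references.

```cpp
#include <cassert>
#include <map>
#include <string>

// Hypothetical stand-in for android::RefBase: onFirstRef() runs exactly once,
// when the strong count goes from 0 to 1.
class RefBaseSketch {
public:
    virtual ~RefBaseSketch() = default;
    void incStrong() {
        if (strong_++ == 0) onFirstRef();
    }
    void decStrong() {
        if (--strong_ == 0) delete this;
    }
    int strongCount() const { return strong_; }

protected:
    virtual void onFirstRef() {}

private:
    int strong_ = 0;
};

// Hypothetical minimal strong pointer in the spirit of android::sp<T>.
template <typename T>
class SpSketch {
public:
    SpSketch(T* p = nullptr) : p_(p) { if (p_) p_->incStrong(); }
    SpSketch(const SpSketch& o) : p_(o.p_) { if (p_) p_->incStrong(); }
    SpSketch& operator=(const SpSketch& o) {
        if (o.p_) o.p_->incStrong();  // take the new reference first (self-assignment safe)
        if (p_) p_->decStrong();
        p_ = o.p_;
        return *this;
    }
    ~SpSketch() { if (p_) p_->decStrong(); }
    T* get() const { return p_; }

private:
    T* p_;
};

// A std::map plays the role of ServiceManager's name -> service table.
std::map<std::string, SpSketch<RefBaseSketch>>& serviceRegistry() {
    static std::map<std::string, SpSketch<RefBaseSketch>> registry;
    return registry;
}

// CRTP helper shaped like BinderService<SERVICE>.
template <typename SERVICE>
class BinderServiceSketch {
public:
    static void publish() {
        // Wrapping the new object in a strong pointer takes its first strong
        // reference, so SERVICE::onFirstRef() runs right here.
        SpSketch<RefBaseSketch> service(new SERVICE());
        serviceRegistry()[SERVICE::getServiceName()] = service;
    }
    static void instantiate() { publish(); }
};

class FakeCameraService : public BinderServiceSketch<FakeCameraService>,
                          public RefBaseSketch {
public:
    static const char* getServiceName() { return "media.camera"; }
    static int firstRefCalls;

protected:
    // The real CameraService::onFirstRef() loads the camera HAL at this point.
    void onFirstRef() override { ++firstRefCalls; }
};
int FakeCameraService::firstRefCalls = 0;
```

After `FakeCameraService::instantiate()`, onFirstRef() has run once. The registry then holds the only remaining strong reference, because the temporary pointer inside publish() released its own.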

Here service is the CameraService object, so let's look at its onFirstRef() function:

// The original code is long; only the important parts are kept here

void CameraService::onFirstRef()

{

BnCameraService::onFirstRef();

// camera_module_t and CAMERA_HARDWARE_MODULE_ID are defined in hardware\libhardware\include\hardware\camera_common.h

camera_module_t *rawModule;

// Note that &rawModule is cast to const hw_module_t **; why this cast is valid is explained below

int err = hw_get_module(CAMERA_HARDWARE_MODULE_ID,

(const hw_module_t **)&rawModule);

// Create the CameraModule object and initialize it

mModule = new CameraModule(rawModule);

err = mModule->init();

// Get the number of cameras

mNumberOfCameras = mModule->getNumberOfCameras();

mNumberOfNormalCameras = mNumberOfCameras;

int latestStrangeCameraId = INT_MAX;

for (int i = 0; i < mNumberOfCameras; i++) {

String8 cameraId = String8::format("%d", i);

// Get each camera's info and initialize its status

}

// CameraService inherits camera_module_callbacks_t (defined in camera_common.h), so the callbacks registered here mainly listen for camera_device_status_change and torch_mode_status_change

if (mModule->getModuleApiVersion() >= CAMERA_MODULE_API_VERSION_2_1) {

mModule->setCallbacks(this);

}

// Contact the CameraServiceProxy service, i.e. the "media.camera.proxy" service, which SystemServer registers with ServiceManager

CameraService::pingCameraServiceProxy();

}

camera_module_t (hardware\libhardware\include\hardware\camera_common.h) is declared as follows:

typedef struct camera_module {

// Note: common must be the first member of camera_module, so that a pointer to a camera_module_t can be cast to a pointer to hw_module_t

hw_module_t common;

int (*get_number_of_cameras)(void);

......

} camera_module_t;

hw_get_module() is defined in hardware\libhardware\hardware.c:

int hw_get_module(const char *id, const struct hw_module_t **module)

{

return hw_get_module_by_class(id, NULL, module);

}

int hw_get_module_by_class(const char *class_id, const char *inst,

const struct hw_module_t **module)

{

......

// First look up the library through the ro.hardware.<name> property (name is class_id, or class_id.inst); if it is found, jump straight to found

snprintf(prop_name, sizeof(prop_name), "ro.hardware.%s", name);

if (property_get(prop_name, prop, NULL) > 0) {

if (hw_module_exists(path, sizeof(path), name, prop) == 0) {

goto found;

}

}

// Otherwise try each variant property in turn to find the module's shared library

for (i=0 ; i<HAL_VARIANT_KEYS_COUNT; i++) {

if (property_get(variant_keys[i], prop, NULL) == 0) {

continue;

}

if (hw_module_exists(path, sizeof(path), name, prop) == 0) {

goto found;

}

}

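// Nothing found: fall back to the "default" variant

if (hw_module_exists(path, sizeof(path), name, "default") == 0) {

goto found;

}

return -ENOENT;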

found:

// Load the shared library at that path and store the address of the module struct in module

return load(class_id, path, module);

}

static int load(const char *id,

const char *path,

const struct hw_module_t **pHmi)

{

int status = -EINVAL;

void *handle = NULL;

struct hw_module_t *hmi = NULL;

// Open the .so file

handle = dlopen(path, RTLD_NOW);

// Look up the address of the hal_module_info struct (the "HMI" symbol)

const char *sym = HAL_MODULE_INFO_SYM_AS_STR;

hmi = (struct hw_module_t *)dlsym(handle, sym);

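......

// The omitted part checks that hmi->id matches id, stores handle in hmi->dso, assigns *pHmi = hmi and returns status

}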

//hardware.h

/**

* Name of the hal_module_info

*/

#define HAL_MODULE_INFO_SYM HMI

/**

* Name of the hal_module_info as a string

*/

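#define HAL_MODULE_INFO_SYM_AS_STR "HMI"

So dlsym() looks up the symbol named "HMI". HAL_MODULE_INFO_SYM is a macro that expands to HMI, so a HAL that defines a variable named HAL_MODULE_INFO_SYM actually exports it under the symbol HMI. In the end, dlsym() returns the address of that HAL_MODULE_INFO_SYM variable.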
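hw_get_module_by_class() and hw_module_exists() together decide which camera HAL library gets loaded. The search they perform can be modeled as below. This is a sketch under stated assumptions:

- System properties and the file system are simulated with a map and a set.
- The variant keys and the final `default` fallback follow AOSP 7.0's hardware.c as I read it.
- The library directories are passed in rather than hard-coded. On a 32-bit 7.0 build they would be something like /odm/lib/hw, /vendor/lib/hw and /system/lib/hw; check your own tree.

`moduleExists`, `getProp` and `findModulePath` are hypothetical names.

```cpp
#include <cassert>
#include <map>
#include <set>
#include <string>
#include <vector>

// Simulated system properties (stand-in for property_get) and a simulated
// file system (stand-in for access(path, R_OK)). Both are hypothetical.
using Props = std::map<std::string, std::string>;
using Files = std::set<std::string>;

// Shaped like hw_module_exists(): try "<dir>/<name>.<variant>.so" in each
// library directory, in the order given.
static bool moduleExists(const std::vector<std::string>& dirs, const Files& files,
                         const std::string& name, const std::string& variant,
                         std::string* out) {
    for (const std::string& dir : dirs) {
        std::string path = dir + "/" + name + "." + variant + ".so";
        if (files.count(path) != 0) {
            *out = path;
            return true;
        }
    }
    return false;
}

// Returns the property value, or "" when it is unset (like property_get() == 0).
static std::string getProp(const Props& props, const std::string& key) {
    auto it = props.find(key);
    return it == props.end() ? std::string() : it->second;
}

// Search order of hw_get_module_by_class(): ro.hardware.<name>, then each
// variant key, then the "default" variant. Returns "" when nothing matches
// (the real function returns -ENOENT).
std::string findModulePath(const std::string& name, const Props& props,
                           const std::vector<std::string>& dirs, const Files& files) {
    std::string path;
    std::string variant = getProp(props, "ro.hardware." + name);
    if (!variant.empty() && moduleExists(dirs, files, name, variant, &path)) return path;

    // Variant keys as in AOSP 7.0 hardware.c (verify against your tree).
    const char* const kVariantKeys[] = {"ro.hardware", "ro.product.board",
                                        "ro.board.platform", "ro.arch"};
    for (const char* key : kVariantKeys) {
        variant = getProp(props, key);
        if (variant.empty()) continue;
        if (moduleExists(dirs, files, name, variant, &path)) return path;
    }
    if (moduleExists(dirs, files, name, "default", &path)) return path;
    return std::string();
}
```

Take ro.board.platform=msm8996 as an example value. With no camera.qcom.so present, the first match is camera.msm8996.so in the vendor directory. With no properties set at all, the search falls back to camera.default.so.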

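Searching the Android 7.0 source shows that, for the Qualcomm camera HAL, HAL_MODULE_INFO_SYM is defined in hardware/qcom/camera/QCamera2/QCamera2Hal.cpp. If your 7.0 source tree does not include the HAL code, you can browse it online at http://androidxref.com/: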

static hw_module_t camera_common = {

.tag = HARDWARE_MODULE_TAG,

.module_api_version = CAMERA_MODULE_API_VERSION_2_4,

.hal_api_version = HARDWARE_HAL_API_VERSION,

.id = CAMERA_HARDWARE_MODULE_ID,

.name = "QCamera Module",

.author = "Qualcomm Innovation Center Inc",

.methods = &qcamera::QCamera2Factory::mModuleMethods,

.dso = NULL,

.reserved = {0}

};

camera_module_t HAL_MODULE_INFO_SYM = {

.common = camera_common,

.get_number_of_cameras = qcamera::QCamera2Factory::get_number_of_cameras,

.get_camera_info = qcamera::QCamera2Factory::get_camera_info,

.set_callbacks = qcamera::QCamera2Factory::set_callbacks,

.get_vendor_tag_ops = qcamera::QCamera3VendorTags::get_vendor_tag_ops,

.open_legacy = qcamera::QCamera2Factory::open_legacy,

.set_torch_mode = qcamera::QCamera2Factory::set_torch_mode,

.init = NULL,

.reserved = {0}

};

So rawModule ends up pointing to HAL_MODULE_INFO_SYM, and this is how CameraService gets connected to the camera HAL.
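The cast from camera_module_t to hw_module_t (and back) is safe because of a language rule: a pointer to a standard-layout struct can be reinterpreted as a pointer to its first member, and vice versa. A loader that only knows hw_module_t and a camera client that knows the full camera_module_t can therefore share one address. The sketch below uses hypothetical cut-down structs (`HwModuleSketch`, `CameraModuleSketch`, `HMI_SKETCH`) in place of the real headers, and it takes the address directly instead of calling dlsym().

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>

// Hypothetical, cut-down versions of hw_module_t and camera_module_t.
struct HwModuleSketch {
    const char* id;
    const char* name;
};

struct CameraModuleSketch {
    HwModuleSketch common;  // must stay the first member
    int (*get_number_of_cameras)(void);
};

// The casts below rely on "common" sitting at offset 0.
static_assert(offsetof(CameraModuleSketch, common) == 0, "common must be first");

static int fakeGetNumberOfCameras(void) { return 2; }  // hypothetical HAL function

// Plays the role of HAL_MODULE_INFO_SYM exported by a HAL library.
CameraModuleSketch HMI_SKETCH = {
    {"camera", "Sketch Camera Module"},
    fakeGetNumberOfCameras,
};

// What the generic loader sees (compare dlsym() plus the cast in load()).
const HwModuleSketch* loadGeneric() {
    return reinterpret_cast<const HwModuleSketch*>(&HMI_SKETCH);
}

// What a camera client does: check the id, then treat the same address
// as the full camera module.
int cameraCountFrom(const HwModuleSketch* hw) {
    if (std::strcmp(hw->id, "camera") != 0) return -1;
    const CameraModuleSketch* cam = reinterpret_cast<const CameraModuleSketch*>(hw);
    return cam->get_number_of_cameras();
}
```

The same address serves as both the generic module header and the full camera module. That is why moving `common` away from the first position would break every HAL loaded this way.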

Back in CameraService::onFirstRef():

// Create the CameraModule object and initialize it

mModule = new CameraModule(rawModule);

err = mModule->init();

// Get the number of cameras

mNumberOfCameras = mModule->getNumberOfCameras();

mNumberOfNormalCameras = mNumberOfCameras;

The CameraModule functions involved:

CameraModule::CameraModule(camera_module_t *module) {

// Initialize mModule

mModule = module;

}

int CameraModule::init() {

int res = OK;

// mModule->init refers to the init field of HAL_MODULE_INFO_SYM, which is NULL here

if (getModuleApiVersion() >= CAMERA_MODULE_API_VERSION_2_4 &&

mModule->init != NULL) {

ATRACE_BEGIN("camera_module->init");

res = mModule->init();

ATRACE_END();

}

// This calls getNumberOfCameras()

mCameraInfoMap.setCapacity(getNumberOfCameras());

return res;

}

int CameraModule::getNumberOfCameras() {

int numCameras;

// Call the get_number_of_cameras() function of HAL_MODULE_INFO_SYM

numCameras = mModule->get_number_of_cameras();

return numCameras;

}

// Now the HAL code

int QCamera2Factory::get_number_of_cameras()

{

int numCameras = 0;

// If gQCamera2Factory is null, create the factory object

if (!gQCamera2Factory) {

gQCamera2Factory = new QCamera2Factory();

if (!gQCamera2Factory) {

LOGE("Failed to allocate Camera2Factory object");

return 0;

}

}

if(gQCameraMuxer)

numCameras = gQCameraMuxer->get_number_of_cameras();

else

// Get the number of cameras

numCameras = gQCamera2Factory->getNumberOfCameras();

return numCameras;

}

QCamera2Factory::QCamera2Factory()

{

mHalDescriptors = NULL;

mCallbacks = NULL;

// get_num_of_cameras() is in mm_camera_interface.c; from there the call goes down into the Linux kernel, which we won't follow here

mNumOfCameras = get_num_of_cameras();

mNumOfCameras_expose = get_num_of_cameras_to_expose();

......

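}

To sum up, onFirstRef() does four things. It finds and loads the HAL module's shared library by ID. It creates and initializes a CameraModule object. It gets the number of cameras. Finally, it registers a callback interface with the HAL.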
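The wrapper pattern summarized above works like this. A CameraModule object stores the raw function table and skips the optional init entry when it is NULL. It forwards get_number_of_cameras() to a HAL factory that is created on first use. The sketch below models this. All names are hypothetical, and `kApiVersion24` is a placeholder for CAMERA_MODULE_API_VERSION_2_4, not its real encoding.

```cpp
#include <cassert>

// Hypothetical cut-down function table standing in for camera_module_t.
struct CameraHalOps {
    unsigned api_version;               // stand-in for common.module_api_version
    int (*init)(void);                  // optional entry: may be NULL
    int (*get_number_of_cameras)(void);
};

// Placeholder for CAMERA_MODULE_API_VERSION_2_4 (not its real encoding).
constexpr unsigned kApiVersion24 = 0x0204;

// Sketch of the CameraModule wrapper: keep the raw table, guard the
// optional entry, forward the rest.
class CameraModuleWrapper {
public:
    explicit CameraModuleWrapper(const CameraHalOps* ops) : ops_(ops) {}

    int init() {
        int res = 0;
        if (ops_->api_version >= kApiVersion24 && ops_->init != nullptr) {
            res = ops_->init();
        }
        cachedCount_ = getNumberOfCameras();  // cf. mCameraInfoMap.setCapacity(...)
        return res;
    }

    int getNumberOfCameras() const { return ops_->get_number_of_cameras(); }
    int cachedCount() const { return cachedCount_; }

private:
    const CameraHalOps* ops_;
    int cachedCount_ = 0;
};

// Hypothetical HAL side: a factory created on first use, like gQCamera2Factory.
struct FactorySketch {
    int numCameras = 2;  // the real factory asks the kernel driver instead
};
static FactorySketch* gFactory = nullptr;  // intentionally never freed in this sketch
static int gFactoryCreations = 0;

static int halGetNumberOfCameras(void) {
    if (gFactory == nullptr) {
        gFactory = new FactorySketch();
        ++gFactoryCreations;
    }
    return gFactory->numCameras;
}

// As in QCamera2Hal.cpp, the init entry is left NULL.
const CameraHalOps kHalOps = {kApiVersion24, nullptr, halGetNumberOfCameras};
```

Calling init() and then getNumberOfCameras() creates the factory only once. This mirrors how the first call into the HAL sets up gQCamera2Factory.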

Back in addService, let's look at what it does:

virtual status_t addService(const String16& name, const sp<IBinder>& service,

bool allowIsolated)

{

Parcel data, reply;

// Write the interface name, here "android.os.IServiceManager"

data.writeInterfaceToken(IServiceManager::getInterfaceDescriptor());

// Write the service name

data.writeString16(name);

// Write the service instance

data.writeStrongBinder(service);

data.writeInt32(allowIsolated ? 1 : 0);

// remote() is actually BpBinder(0): handle 0 in the Binder driver, i.e. the proxy for ServiceManager

status_t err = remote()->transact(ADD_SERVICE_TRANSACTION, data, &reply);

return err == NO_ERROR ? reply.readExceptionCode() : err;

}

status_t BpBinder::transact(

uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags)

{

// Once a binder has died, it will never come back to life.

if (mAlive) {

// Send the add-service request to the Binder driver through the IPCThreadState object; note that mHandle is 0

status_t status = IPCThreadState::self()->transact(

mHandle, code, data, reply, flags);

if (status == DEAD_OBJECT) mAlive = 0;

return status;

}

return DEAD_OBJECT;

}

status_t IPCThreadState::transact(int32_t handle,

uint32_t code, const Parcel& data,

Parcel* reply, uint32_t flags)

{

status_t err = data.errorCheck();

if (err == NO_ERROR) {

// Pack the data into a binder_transaction_data struct and write it to mOut

err = writeTransactionData(BC_TRANSACTION, flags, handle, code, data, NULL);

}

if ((flags & TF_ONE_WAY) == 0) {

if (reply) {

err = waitForResponse(reply);

} else {

Parcel fakeReply;

err = waitForResponse(&fakeReply);

}

}

return err;

}

status_t IPCThreadState::writeTransactionData(int32_t cmd, uint32_t binderFlags,

int32_t handle, uint32_t code, const Parcel& data, status_t* statusBuffer)

{

binder_transaction_data tr;

// Fill in the transaction data

tr.target.ptr = 0; /* Don't pass uninitialized stack data to a remote process */

tr.target.handle = handle;

tr.code = code;

tr.flags = binderFlags;

tr.cookie = 0;

tr.sender_pid = 0;

tr.sender_euid = 0;

const status_t err = data.errorCheck();

if (err == NO_ERROR) {

tr.data_size = data.ipcDataSize();

tr.data.ptr.buffer = data.ipcData();

tr.offsets_size = data.ipcObjectsCount()*sizeof(binder_size_t);

tr.data.ptr.offsets = data.ipcObjects();

}

......

// Write it to mOut

mOut.writeInt32(cmd);

mOut.write(&tr, sizeof(tr));

return NO_ERROR;

}

At this point the data has only been packaged; nothing has been sent yet. Next comes waitForResponse:

status_t IPCThreadState::waitForResponse(Parcel *reply, status_t *acquireResult)

{

uint32_t cmd;

int32_t err;

while (1) {

// Talk to the Binder driver; this is the core step, explained below

if ((err=talkWithDriver()) < NO_ERROR) break;

// Read the command returned by the Binder driver

cmd = (uint32_t)mIn.readInt32();

IF_LOG_COMMANDS() {

alog << "Processing waitForResponse Command: "

<< getReturnString(cmd) << endl;

}

switch (cmd) {

case BR_TRANSACTION_COMPLETE:

if (!reply && !acquireResult) goto finish;

break;

case BR_DEAD_REPLY:

err = DEAD_OBJECT;

goto finish;

case BR_FAILED_REPLY:

err = FAILED_TRANSACTION;

goto finish;

case BR_ACQUIRE_RESULT:

{

ALOG_ASSERT(acquireResult != NULL, "Unexpected brACQUIRE_RESULT");

const int32_t result = mIn.readInt32();

if (!acquireResult) continue;

*acquireResult = result ? NO_ERROR : INVALID_OPERATION;

}

goto finish;

case BR_REPLY:

{

binder_transaction_data tr;

err = mIn.read(&tr, sizeof(tr));

ALOG_ASSERT(err == NO_ERROR, "Not enough command data for brREPLY");

if (err != NO_ERROR) goto finish;

if (reply) {

if ((tr.flags & TF_STATUS_CODE) == 0) {

reply->ipcSetDataReference(

reinterpret_cast<const uint8_t*>(tr.data.ptr.buffer),

tr.data_size,

reinterpret_cast<const binder_size_t*>(tr.data.ptr.offsets),

tr.offsets_size/sizeof(binder_size_t),

freeBuffer, this);

} else {

err = *reinterpret_cast<const status_t*>(tr.data.ptr.buffer);

freeBuffer(NULL,

reinterpret_cast<const uint8_t*>(tr.data.ptr.buffer),

tr.data_size,

reinterpret_cast<const binder_size_t*>(tr.data.ptr.offsets),

tr.offsets_size/sizeof(binder_size_t), this);

}

} else {

freeBuffer(NULL,

reinterpret_cast<const uint8_t*>(tr.data.ptr.buffer),

tr.data_size,

reinterpret_cast<const binder_size_t*>(tr.data.ptr.offsets),

tr.offsets_size/sizeof(binder_size_t), this);

continue;

}

}

goto finish;

default:

err = executeCommand(cmd);

if (err != NO_ERROR) goto finish;

break;

}

}

finish:

if (err != NO_ERROR) {

if (acquireResult) *acquireResult = err;

if (reply) reply->setError(err);

mLastError = err;

}

return err;

}

// When called without an argument, doReceive defaults to true

status_t IPCThreadState::talkWithDriver(bool doReceive)

{

// mProcess is the ProcessState object this IPCThreadState was created with; its mDriverFD is the file descriptor of the Binder driver

if (mProcess->mDriverFD <= 0) {

return -EBADF;

}

// Wrap the mOut data in a binder_write_read struct

binder_write_read bwr;

// Is the read buffer empty?

const bool needRead = mIn.dataPosition() >= mIn.dataSize();

// We don't want to write anything if we are still reading

// from data left in the input buffer and the caller

// has requested to read the next data.

const size_t outAvail = (!doReceive || needRead) ? mOut.dataSize() : 0;

bwr.write_size = outAvail;

bwr.write_buffer = (uintptr_t)mOut.data();

// This is what we'll read.

if (doReceive && needRead) {

bwr.read_size = mIn.dataCapacity();

bwr.read_buffer = (uintptr_t)mIn.data();

} else {

bwr.read_size = 0;

bwr.read_buffer = 0;

}

bwr.write_consumed = 0;

bwr.read_consumed = 0;

status_t err;

do {

#if defined(__ANDROID__)

// This is where commands and data are written to (and read back from) the Binder driver

if (ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr) >= 0)

err = NO_ERROR;

else

err = -errno;

#else

err = INVALID_OPERATION;

#endif

} while (err == -EINTR);

......

return err;

}

From here we enter the Linux kernel, whose code is not part of AOSP. You can read it in either of these online Linux kernel browsers: https://lxr.missinglinkelectronics.com/linux or http://elixir.free-electrons.com/
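Before reading the kernel code, it helps to see the shape of the buffer that talkWithDriver() hands to the driver. writeTransactionData() appended a 32-bit command, followed by its payload, to mOut. The driver's binder_thread_write() (shown below) walks the buffer in the same order: it reads a command with get_user(), then copies the payload with copy_from_user(). The sketch models that framing in plain user space. `kCmdTransaction` and `TxnSketch` are hypothetical stand-ins for the BC_* codes and binder_transaction_data.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical command code and payload; the real protocol uses the BC_*
// codes and struct binder_transaction_data from the binder UAPI header.
constexpr uint32_t kCmdTransaction = 1;

struct TxnSketch {
    uint32_t handle;    // 0 addresses the context manager (servicemanager)
    uint32_t code;      // transaction code, e.g. the add-service code
    uint64_t dataSize;  // size of the Parcel payload
};

// User-space side, like writeTransactionData(): append the command, then
// its payload, to the outgoing buffer (mOut).
void writeCommand(std::vector<uint8_t>& out, uint32_t cmd, const TxnSketch& tr) {
    const uint8_t* c = reinterpret_cast<const uint8_t*>(&cmd);
    out.insert(out.end(), c, c + sizeof(cmd));
    const uint8_t* t = reinterpret_cast<const uint8_t*>(&tr);
    out.insert(out.end(), t, t + sizeof(tr));
}

// Driver side, like the binder_thread_write() loop: read a 32-bit command,
// advance past it, then copy the payload that command expects. Stops at an
// unknown or truncated command; *consumed plays the role of write_consumed.
std::vector<TxnSketch> parseCommands(const std::vector<uint8_t>& buf, std::size_t* consumed) {
    std::vector<TxnSketch> txns;
    std::size_t pos = 0;
    while (pos + sizeof(uint32_t) <= buf.size()) {
        uint32_t cmd;
        std::memcpy(&cmd, buf.data() + pos, sizeof(cmd));    // cf. get_user()
        std::size_t payload = pos + sizeof(cmd);
        if (cmd != kCmdTransaction || payload + sizeof(TxnSketch) > buf.size()) break;
        TxnSketch tr;
        std::memcpy(&tr, buf.data() + payload, sizeof(tr));  // cf. copy_from_user()
        txns.push_back(tr);
        pos = payload + sizeof(tr);
    }
    *consumed = pos;
    return txns;
}
```

The real driver returns an error for an unknown command instead of just stopping. The framing, though, is the same: each command word tells the reader how many payload bytes follow.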

// Paths

linux/drivers/android/binder.c

linux/include/uapi/linux/android/binder.h

First, binder_init() creates the /dev/binder node:

static int __init binder_init(void)

{

......

while ((device_name = strsep(&device_names, ","))) {

// Initialize the Binder device

ret = init_binder_device(device_name);

}

return ret;

}

static int __init init_binder_device(const char *name)

{

int ret;

struct binder_device *binder_device;

binder_device = kzalloc(sizeof(*binder_device), GFP_KERNEL);

if (!binder_device)

return -ENOMEM;

// File operations table

binder_device->miscdev.fops = &binder_fops;

binder_device->miscdev.minor = MISC_DYNAMIC_MINOR;

// Device name, e.g. "binder", so that user space can operate on the device through the /dev/binder node

binder_device->miscdev.name = name;

binder_device->context.binder_context_mgr_uid = INVALID_UID;

binder_device->context.name = name;

// Register the misc device with the kernel

ret = misc_register(&binder_device->miscdev);

if (ret < 0) {

kfree(binder_device);

return ret;

}

hlist_add_head(&binder_device->hlist, &binder_devices);

return ret;

}

// This specifies the file operation functions

static const struct file_operations binder_fops = {

.owner = THIS_MODULE,

.poll = binder_poll,

// Since Linux kernel 2.6.36 the ioctl function pointer has been removed and replaced by unlocked_ioctl

.unlocked_ioctl = binder_ioctl,

.compat_ioctl = binder_ioctl,

.mmap = binder_mmap,

.open = binder_open,

.flush = binder_flush,

.release = binder_release,

};

Back in IPCThreadState::talkWithDriver:

if (ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr) >= 0)

err = NO_ERROR;

else

err = -errno;

// ioctl() corresponds to the binder_ioctl() function in the kernel

static long binder_ioctl(struct file *filp, unsigned int cmd, unsigned long arg)

{

int ret;

struct binder_proc *proc = filp->private_data;

struct binder_thread *thread;

......

// Look up the current thread's binder_thread in binder_proc. If the thread is already registered with proc, it is returned directly; otherwise a new binder_thread is created and added to proc

thread = binder_get_thread(proc);

switch (cmd) {

case BINDER_WRITE_READ:

ret = binder_ioctl_write_read(filp, cmd, arg, thread);

if (ret)

goto err;

break;

}

......

}

static int binder_ioctl_write_read(struct file *filp,

unsigned int cmd, unsigned long arg,

struct binder_thread *thread)

{

int ret = 0;

struct binder_proc *proc = filp->private_data;

unsigned int size = _IOC_SIZE(cmd);

void __user *ubuf = (void __user *)arg;

struct binder_write_read bwr;

if (size != sizeof(struct binder_write_read)) {

ret = -EINVAL;

goto out;

}

// Copy the binder_write_read struct from user space into the kernel-space bwr

if (copy_from_user(&bwr, ubuf, sizeof(bwr))) {

ret = -EFAULT;

goto out;

}

if (bwr.write_size > 0) {

// If the write buffer contains data (write_size > 0), perform the Binder write

ret = binder_thread_write(proc, thread,

bwr.write_buffer,

bwr.write_size,

&bwr.write_consumed);

trace_binder_write_done(ret);

// If the write fails, copy bwr back to user space and return

if (ret < 0) {

bwr.read_consumed = 0;

if (copy_to_user(ubuf, &bwr, sizeof(bwr)))

ret = -EFAULT;

goto out;

}

}

if (bwr.read_size > 0) {

// If the caller supplied a read buffer (read_size > 0), perform the Binder read

ret = binder_thread_read(proc, thread, bwr.read_buffer,

bwr.read_size,

&bwr.read_consumed,

filp->f_flags & O_NONBLOCK);

trace_binder_read_done(ret);

if (!list_empty(&proc->todo))

wake_up_interruptible(&proc->wait);

// If the read fails, copy bwr back to user space and return

if (ret < 0) {

if (copy_to_user(ubuf, &bwr, sizeof(bwr)))

ret = -EFAULT;

goto out;

}

}

// Copy bwr back to user space and return

if (copy_to_user(ubuf, &bwr, sizeof(bwr))) {

ret = -EFAULT;

goto out;

}

out:

return ret;

}

Now the write path, binder_thread_write():

static int binder_thread_write(struct binder_proc *proc,

struct binder_thread *thread,

binder_uintptr_t binder_buffer, size_t size,

binder_size_t *consumed)

{

uint32_t cmd;

struct binder_context *context = proc->context;

void __user *buffer = (void __user *)(uintptr_t)binder_buffer;

void __user *ptr = buffer + *consumed;

void __user *end = buffer + size;

while (ptr < end && thread->return_error == BR_OK) {

if (get_user(cmd, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

trace_binder_command(cmd);

if (_IOC_NR(cmd) < ARRAY_SIZE(binder_stats.bc)) {

binder_stats.bc[_IOC_NR(cmd)]++;

proc->stats.bc[_IOC_NR(cmd)]++;

thread->stats.bc[_IOC_NR(cmd)]++;

}

switch (cmd) {

......

// The command we sent earlier is BC_TRANSACTION

case BC_TRANSACTION:

case BC_REPLY: {

struct binder_transaction_data tr;

// Copy the user-space data into the kernel-space tr

if (copy_from_user(&tr, ptr, sizeof(tr)))

return -EFAULT;

ptr += sizeof(tr);

binder_transaction(proc, thread, &tr,

cmd == BC_REPLY, 0);

break;

}

}

return 0;

}

static void binder_transaction(struct binder_proc *proc,

struct binder_thread *thread,

struct binder_transaction_data *tr, int reply,

binder_size_t extra_buffers_size)

{

......

if (reply) {

......

} else {

// The tr->target.handle we passed is 0, which means the ServiceManager process

if (tr->target.handle) {

......

} else {

// Use ServiceManager's binder_node in the Binder driver (the context manager node) directly as the target

target_node = context->binder_context_mgr_node;

}

// Get the ServiceManager process

target_proc = target_node->proc;

}

......

if (target_thread) {

e->to_thread = target_thread->pid;

// Use the todo list and wait queue of the target (ServiceManager) thread

target_list = &target_thread->todo;

target_wait = &target_thread->wait;

}

// Allocate the two work structs: the transaction t and the completion tcomplete

t = kzalloc(sizeof(*t), GFP_KERNEL);

binder_stats_created(BINDER_STAT_TRANSACTION);

tcomplete = kzalloc(sizeof(*tcomplete), GFP_KERNEL);

binder_stats_created(BINDER_STAT_TRANSACTION_COMPLETE);

......

// Allocate a buffer in target_proc

t->buffer = binder_alloc_buf(target_proc, tr->data_size,

tr->offsets_size, extra_buffers_size,

!reply && (t->flags & TF_ONE_WAY));

......

// Add a BINDER_WORK_TRANSACTION work item to target_list, i.e. ServiceManager's todo list

t->work.type = BINDER_WORK_TRANSACTION;

list_add_tail(&t->work.entry, target_list);

// Add a BINDER_WORK_TRANSACTION_COMPLETE work item to the current thread's todo list

tcomplete->type = BINDER_WORK_TRANSACTION_COMPLETE;

list_add_tail(&tcomplete->entry, &thread->todo);

// If ServiceManager is waiting, wake it up

if (target_wait) {

if (reply || !(t->flags & TF_ONE_WAY))

wake_up_interruptible_sync(target_wait);

else

wake_up_interruptible(target_wait);

}

return;

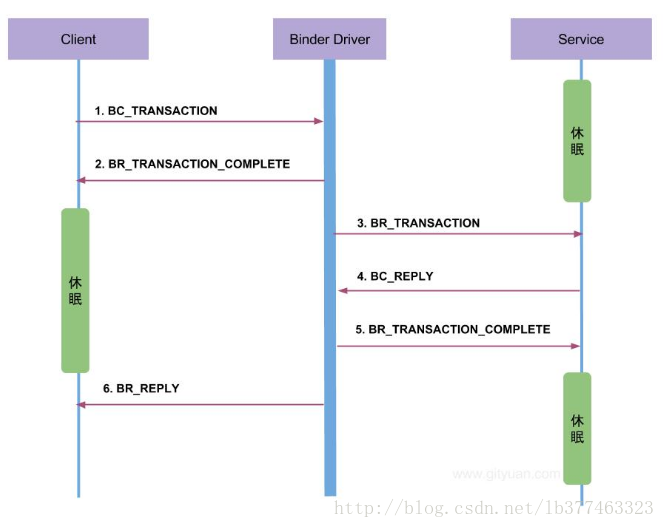

} 对比这张Binder架构图,Client就是cameraserver进程,Service就是servicemanager进程,整个注册服务的过程就是cameraserver进程发送BC_TRANSACTION命令到Binder驱动中,然后Binder驱动发送BR_TRANSACTION命令到servermanager进程中注册,这里会生成一个handle,用来标识”media.camera”服务,然后将此服务添加到svclist全局链表中。然后通过BC_REPLY命令将返回结果发送到Binder驱动中,Binder驱动再通过BR_REPLY命令将结果发送给cameraserver进程。
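The round trip just described can be condensed into a small simulation. It is only a model: `DriverSketch`, `ServiceManagerSketch` and `addServiceRoundTrip` are hypothetical. It does three things:

- It records the command sequence.
- The "driver" assigns a handle when the service object first crosses into servicemanager. Handle 0 is reserved for servicemanager itself.
- It stores the name-to-handle mapping the way svclist does.

Threads, buffers and the BR_TRANSACTION_COMPLETE work item queued for the client are left out.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

// Hypothetical model of the add-service round trip; only the names mirror
// the text (BC_/BR_ commands, svclist).
struct DriverSketch {
    std::vector<std::string> trace;          // commands, in the order they flow
    std::map<const void*, unsigned> smRefs;  // binder object -> handle in servicemanager
    unsigned nextHandle = 1;                 // handle 0 is reserved for servicemanager

    // When a binder object first crosses into servicemanager, the driver
    // creates a reference for it there and hands out a new handle.
    unsigned refForNode(const void* node) {
        auto it = smRefs.find(node);
        if (it != smRefs.end()) return it->second;
        smRefs[node] = nextHandle;
        return nextHandle++;
    }
};

struct ServiceManagerSketch {
    std::map<std::string, unsigned> svclist;  // service name -> handle
    int addService(const std::string& name, unsigned handle) {
        svclist[name] = handle;
        return 0;  // success status sent back in the reply
    }
};

// cameraserver -> driver -> servicemanager -> driver -> cameraserver
int addServiceRoundTrip(DriverSketch& drv, ServiceManagerSketch& sm,
                        const std::string& name, const void* serviceObject) {
    drv.trace.push_back("BC_TRANSACTION");  // client writes to target handle 0
    unsigned handle = drv.refForNode(serviceObject);
    drv.trace.push_back("BR_TRANSACTION");  // servicemanager is woken up
    int status = sm.addService(name, handle);
    drv.trace.push_back("BC_REPLY");        // servicemanager sends the result
    drv.trace.push_back("BR_REPLY");        // client's waitForResponse() receives it
    return status;
}
```

A later lookup of "media.camera" would return the stored handle. The driver then maps that handle back to the same CameraService object for whichever process asks.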

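At this point CameraService's service name and instance are registered with ServiceManager. Other processes can now obtain the CameraService service through ServiceManager, across process boundaries. In addition, CameraService has been connected to the camera HAL through onFirstRef().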

Author: lb377463323

Source: http://blog.csdn.net/lb377463323

Original article: http://blog.csdn.net/lb377463323/article/details/78796915

Please credit the source when reposting!