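This installment is a little unusual: it covers two Linux subsystems at once. The reason is that, unlike the DM9000 used previously, the DM9621 attaches over USB.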

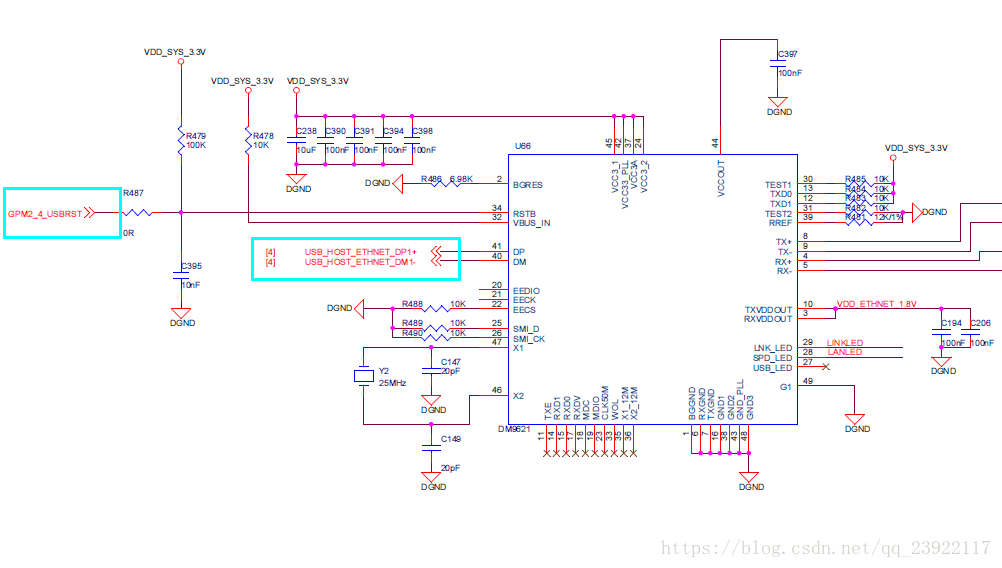

Let's first look at how the DM9621 is wired on the tiny4412. The DM9621 schematic is shown below:

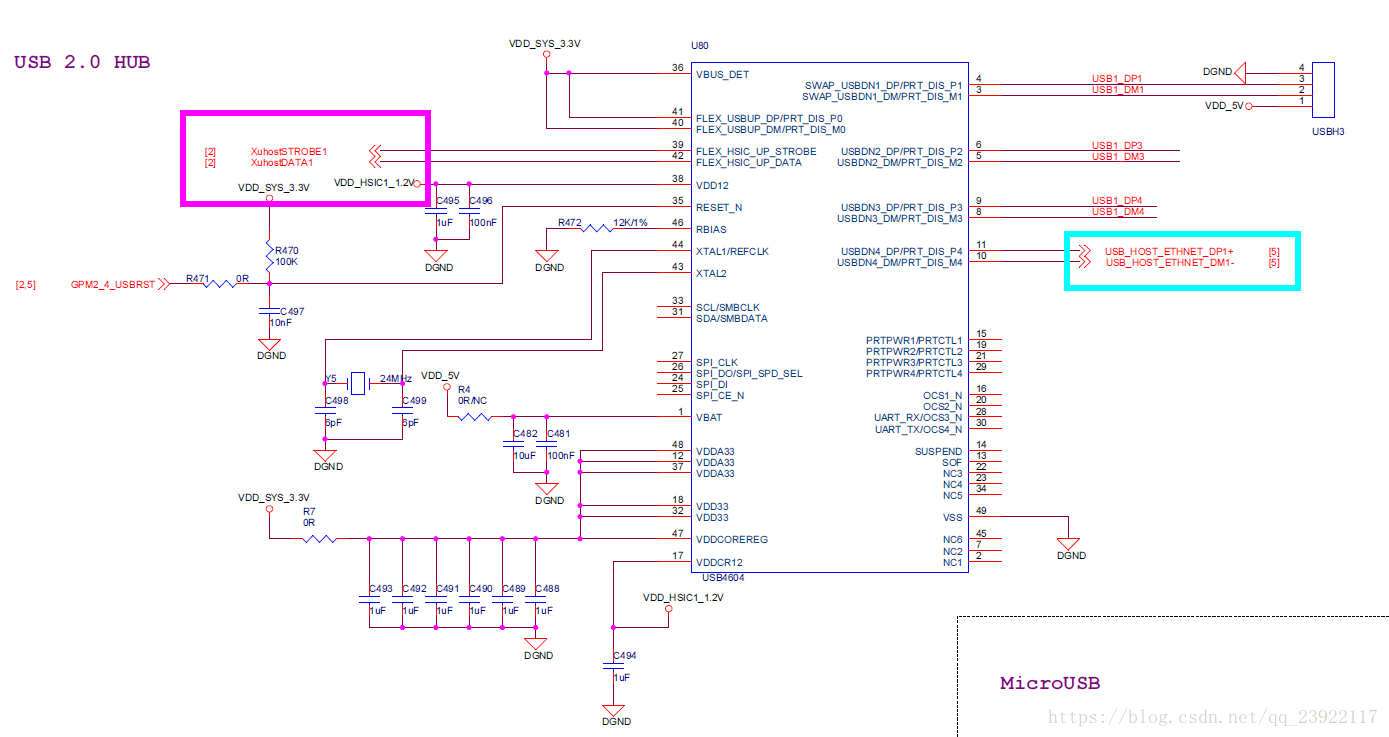

As the cyan-highlighted part of the figure above shows, the DM9621 hangs off an external USB hub, a USB4604. The hub is wired as follows:

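From the circuit above, the USB NIC connects at the cyan-highlighted port; two more ports are brought out as expansion USB connectors, and another goes to a pin header. The pins in the magenta box connect the USB hub to the SoC. That link is not regular USB but HSIC, which signals differently: USB uses differential signalling (as the CAN bus does), whereas HSIC transfers data over a STROBE line and a DATA line. Because FriendlyARM chose this interface, the signal leaving the SoC has to pass through a part that bridges HSIC to USB, such as this hub, since the two kinds of signal are incompatible. The cable connection topology, however, is compatible.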

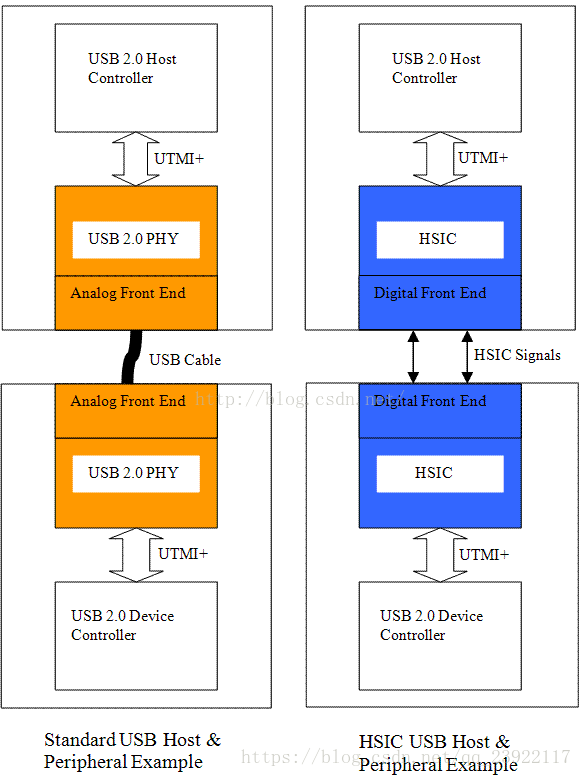

Some background on HSIC:

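HSIC is a two-wire, source-synchronous serial interface. It uses a 240 MHz clock at double data rate to deliver the 480 Mbps high-speed data rate, and it is fully compatible with hosts that use the traditional USB cable-connected topology. HSIC does not support full-speed or low-speed USB signalling.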

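- 480 Mbps high-speed data rate

- Source-synchronous serial interface

- No power consumed when not transferring

- Maximum trace length of 10 cm

- No hot-plug support

- Signals driven at 1.2 V standard LVCMOS levels

- Designed for low-power applications

- No high-speed chirp protocol; HSIC operates only at high speed

- HSIC can replace I2C

- I2C is not fast enough and also needs dedicated drivers

- HSIC lets existing USB software be reused

- The PHY can be reused or adapted from existing PHY technology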

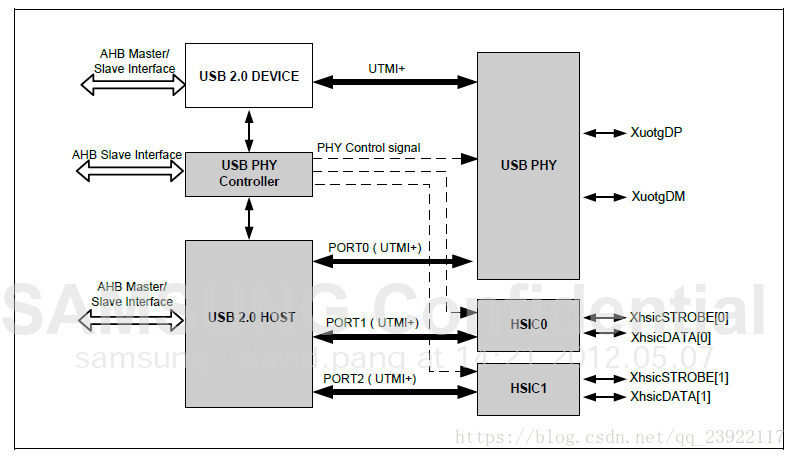

Next, let's look at the architecture of the USB controller on the Exynos4412 SoC:

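As the architecture above shows, the SoC's USB controller provides one USB port and two HSIC ports; the tiny4412 uses the second HSIC port for the external USB hub. The block diagram looks tidy, but a great many registers are involved, so the adapter-layer driver is normally supplied by the SoC vendor, and this case is no exception. The driver we develop here sits at the controller layer, which is specific to a particular device, while the adapter layer is generic and implemented according to the USB protocol. Because this installment already covers a lot of ground and runs long, the Exynos USB controller architecture is not examined in detail.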

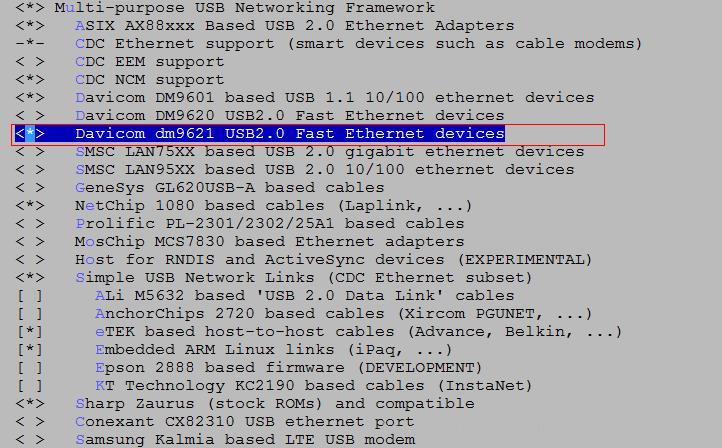

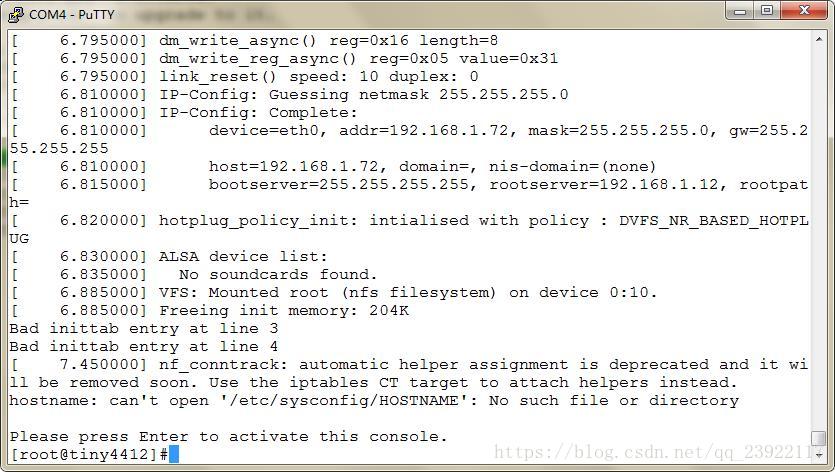

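Because the DM9621 is a NIC with a USB interface, the driver first registers a USB driver and then, on top of it, registers a network driver. So we give a rough overview of the USB subsystem, then of the network subsystem, and after that write a driver to test with.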

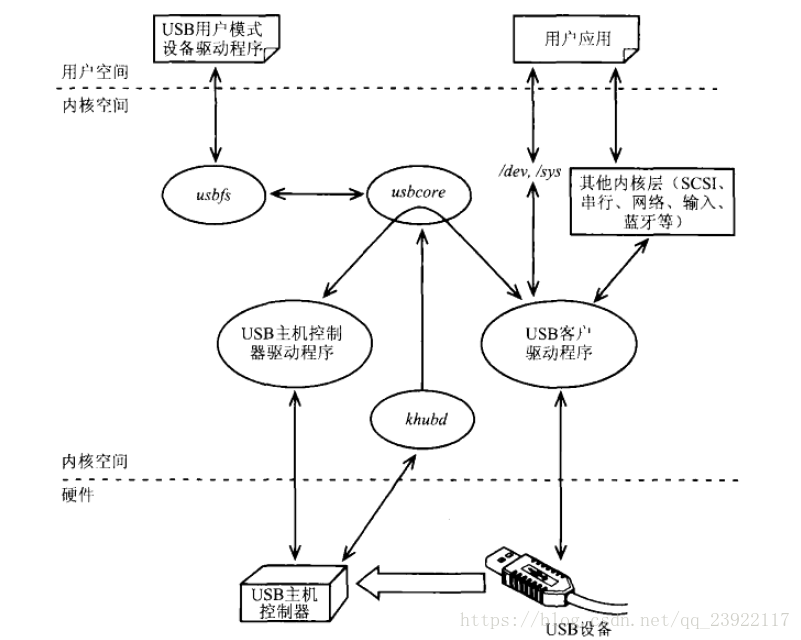

The USB subsystem:

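The figure above shows the framework of the USB subsystem. What we need to develop is the USB client driver layer. The code that operates the USB controller's registers is already supplied by the vendor and belongs to the USB host controller driver layer. The USB core layer matches these two layers to each other. Let's walk through that process and the components in the figure: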

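1). Overall framework: it consists of USB client drivers, the USB core, and USB host controller drivers. USB devices come in many kinds, such as USB Bluetooth adapters, USB NICs, and USB storage devices, and each kind needs its own driver to provide its function; these are the USB client drivers. If the host has a single USB controller, one driver is enough for it, so the variation lies mainly in the client drivers: plugging in a different device requires matching a different client driver. That job belongs to the USB core. Client drivers and host controller drivers both register with the USB core, which then matches a device driver to each device according to the device's information;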

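2). USB core: the USB core consists of infrastructure code, including structure and function definitions, shared by the HCDs and the client drivers. It also indirectly makes client drivers independent of the specific host controller.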

3). HCDs that drive the different host controllers.

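4). A hub driver for the root hub (and for physical hubs), plus a kernel helper thread, khubd. khubd monitors every port of the hubs it manages. Detecting port status changes and configuring hot-plugged devices is time-consuming, and the helper-thread infrastructure the kernel provides handles these tasks well. The thread normally sleeps; when the hub driver detects a status change on a USB port, the thread is woken immediately.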

5). Device drivers for USB client devices.

6). The USB filesystem, usbfs, which lets you drive USB devices from user space.

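End-to-end operation requires the USB subsystem to cooperate with many other kernel layers. For example, using a USB mass-storage device requires the USB subsystem to work with the SCSI drivers, and driving a USB Bluetooth keyboard requires the USB subsystem, the Bluetooth layer, the input subsystem, and the tty layer. In this installment, the USB subsystem and the network subsystem together drive the DM9621.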
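To make the matching step in item 1) concrete, here is a minimal user-space sketch in C. It is not kernel code: `struct toy_usb_id`, `toy_id_table`, and `toy_match()` are hypothetical names invented for illustration, and the real USB core can match on more fields than the vendor and product ID. The two IDs and the driver name are taken from the `products` table and `usb_driver` structure in the driver listing further down.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical, simplified stand-in for the kernel's struct usb_device_id. */
struct toy_usb_id {
    uint16_t vendor;
    uint16_t product;
    const char *driver_name;   /* client driver that claims this device */
};

/* An entry with a NULL name terminates the table, mirroring the empty
 * last entry of the products[] table in the driver. */
static const struct toy_usb_id toy_id_table[] = {
    { 0x0a46, 0x9620, "dm9620" },
    { 0x0a46, 0x9621, "dm9620" },
    { 0, 0, NULL },
};

/* Return the name of the driver whose entry matches the device's
 * vendor/product pair, or NULL when no registered entry matches. */
static const char *toy_match(const struct toy_usb_id *table,
                             uint16_t vendor, uint16_t product)
{
    assert(table != NULL);
    for (; table->driver_name != NULL; table++) {
        if (table->vendor == vendor && table->product == product)
            return table->driver_name;
    }
    return NULL;
}
```

Calling `toy_match(toy_id_table, 0x0a46, 0x9621)` returns the driver name for the DM9621, while an ID pair that is not in the table yields `NULL`, which corresponds to a device for which no client driver has registered.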

With the framework of the USB subsystem in mind, here are some characteristics of USB:

(1) Bus speed:

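USB currently has four common bus speeds: low speed at 1.5 Mbps and full speed at 12 Mbps (USB 1.x), high speed at 480 Mbps (USB 2.0), and SuperSpeed at 5 Gbps (USB 3.0). USB 2.0 is a half-duplex, two-wire bus, so data can flow in only one direction at a time. USB 3.0 adds dual-simplex signalling over four more wires of differential signal lines, so data can flow in both directions concurrently, which is a key reason for the large speed increase in the newer specification.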

(2) Host controllers:

UHCI (Universal Host Controller Interface): this standard was proposed by Intel, and x86-based PCs are likely to use it.

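OHCI (Open Host Controller Interface): this standard was proposed by Compaq, Microsoft, and other companies. OHCI-compatible controller hardware is more intelligent than UHCI hardware, so an OHCI-based HCD (Host Controller Driver) is simpler than a UHCI-based one.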

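EHCI (Enhanced Host Controller Interface): this host controller supports high-speed USB 2.0 devices. To support full-speed and low-speed devices, it is usually paired with a UHCI or OHCI companion controller. (The Exynos SoC pairs EHCI with OHCI.)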

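USB OTG controllers: these are increasingly popular in embedded microcontrollers. With an OTG controller, each end of a link can act as a DRD (Dual-Role Device). Once the connection has been initialised with HNP (Host Negotiation Protocol), such a device can switch between host mode and device mode as its function requires. (On the Exynos SoC, host mode uses DMA mode and device mode uses Slave mode.)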

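The host controller embeds a piece of hardware called the root hub. The root hub is a logical hub shared by several USB ports. These ports can connect to internal or external hubs to provide more USB ports, and cascading hubs this way forms a tree.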

(3) Transfer modes:

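• Control transfers: carry control, status, and configuration information between the peripheral and the host;

• Bulk transfers: carry large amounts of data without strict latency requirements, e.g. a USB flash drive;

• Interrupt transfers: carry small amounts of latency-sensitive data that need a prompt response, e.g. keyboards and mice;

• Isochronous transfers: carry real-time data at a rate known in advance; packets may be lost, e.g. a USB sound card.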
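In a device's descriptors, the transfer mode of an endpoint is stored in the low two bits of the endpoint descriptor's `bmAttributes` field; the `usbnet_get_endpoints()` code quoted later switches on exactly this field to find the bulk and interrupt endpoints. The following user-space sketch decodes it. The names `enum xfer_type`, `xfer_type_of()`, and `xfer_type_name()` are made up for this example; the numeric codes are the ones the USB 2.0 specification defines.

```c
#include <stdint.h>

/* Transfer-type codes held in bits 1..0 of bmAttributes. */
enum xfer_type {
    XFER_CONTROL     = 0,
    XFER_ISOCHRONOUS = 1,
    XFER_BULK        = 2,
    XFER_INTERRUPT   = 3,
};

/* Extract the transfer type; the upper bits carry other attributes
 * (used by isochronous endpoints) and are masked off here. */
static enum xfer_type xfer_type_of(uint8_t bm_attributes)
{
    return (enum xfer_type)(bm_attributes & 0x03);
}

/* Human-readable name for a transfer type. */
static const char *xfer_type_name(enum xfer_type t)
{
    switch (t) {
    case XFER_CONTROL:     return "control";
    case XFER_ISOCHRONOUS: return "isochronous";
    case XFER_BULK:        return "bulk";
    case XFER_INTERRUPT:   return "interrupt";
    }
    return "unknown";
}
```

A NIC such as the one in this article would typically expose two bulk endpoints for packet data and one interrupt endpoint for status, so `xfer_type_of()` would report `XFER_BULK` and `XFER_INTERRUPT` for them.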

(4) Addressing:

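Each addressable unit of a USB device is called an endpoint, and the address assigned to it is the endpoint address. Every endpoint has an associated transfer mode; an endpoint whose transfer mode is bulk, for instance, is called a bulk endpoint. Endpoint 0 is dedicated to configuring the device: the control pipe is attached to it and carries out device enumeration.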

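An endpoint can send data upstream to the host (an IN transfer) or receive data downstream from the host (an OUT transfer). IN and OUT endpoints have separate address spaces, so a bulk IN endpoint and a bulk OUT endpoint can share the same endpoint number. Devices themselves are addressed with 7 bits, which gives 2^7 = 128 addresses. Address 0 is reserved as the default address a device answers to before enumeration assigns it one, so a single USB bus can hold at most 127 devices (addresses 1 to 127).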
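The two kinds of address are easy to mix up, so here is a small user-space sketch of both. In an endpoint descriptor, `bEndpointAddress` holds the endpoint number in bits 3..0 and the direction in bit 7 (1 means IN). The helper names `decode_ep_addr()` and `is_assignable_device_address()` and the `struct ep_addr` type are hypothetical, introduced only for this illustration.

```c
#include <stdbool.h>
#include <stdint.h>

struct ep_addr {
    uint8_t number;  /* endpoint number, 0..15 */
    bool    is_in;   /* true: device-to-host (IN); false: host-to-device (OUT) */
};

/* Split a bEndpointAddress byte into endpoint number and direction. */
static struct ep_addr decode_ep_addr(uint8_t b_endpoint_address)
{
    struct ep_addr ep;

    ep.number = b_endpoint_address & 0x0f;
    ep.is_in  = (b_endpoint_address & 0x80) != 0;
    return ep;
}

/* Device addresses are 7 bits wide. Address 0 is the default address used
 * during enumeration, so only 1..127 can be assigned to a device. */
static bool is_assignable_device_address(unsigned int addr)
{
    return addr >= 1 && addr <= 127;
}
```

With this encoding, `0x81` and `0x01` are two different endpoints that share the number 1: the first is IN, the second is OUT.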

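There is much more to the code structure of the USB subsystem, but we stop here to keep the length manageable; the comments in the driver code also help with understanding. Next, the network subsystem.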

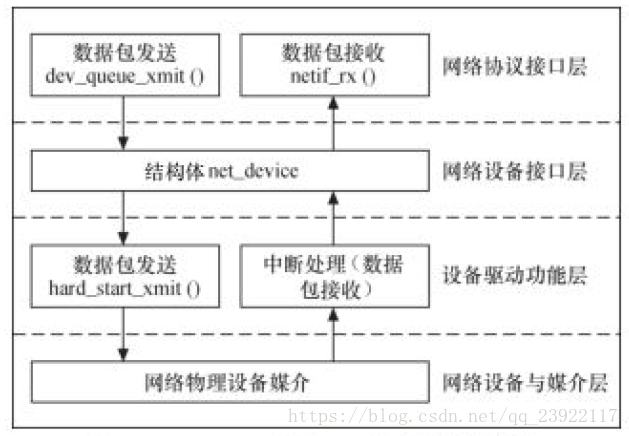

The figure above shows the architecture of network drivers. It has four parts, described briefly below:

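1). The network protocol interface layer gives the network-layer protocols a uniform interface for sending and receiving packets. Whether the upper protocol is ARP or IP, it sends data through dev_queue_xmit() and receives data through netif_rx(). This layer makes the upper protocols independent of the specific device.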

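2). The network device interface layer gives the protocol interface layer a uniform structure, net_device, that describes the properties and operations of a specific network device. This structure is the container for the functions of the device driver function layer. In effect, the network device interface layer lays out, at a high level, the structure of the device driver function layer that operates the hardware.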

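3). The functions of the device driver function layer are the concrete members of the net_device structure of the network device interface layer. They make the network hardware carry out the corresponding actions: hard_start_xmit() starts a transmission (on kernels 2.6.31 and later this is the ndo_start_xmit member of net_device_ops, which is what the driver listing below fills in), and an interrupt from the network device triggers reception.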

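4). The network device and media layer is the physical entity that actually sends and receives packets. It comprises the network adapter and the transmission medium; the adapter is physically driven by the functions of the device driver function layer. On Linux, both the network device and the medium can be virtual.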
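The relationship between layers 1) to 3) is essentially a table of function pointers. The user-space sketch below models it under heavy simplification: every name in it (`toy_netdev`, `toy_netdev_ops`, `toy_queue_xmit()`, `toy_usbnic_start_xmit()`) is hypothetical and stands in for, but is not, the kernel's `net_device`, `net_device_ops`, `dev_queue_xmit()`, and a driver's transmit routine.

```c
#include <assert.h>
#include <stddef.h>

struct toy_netdev;

/* Device interface layer: the uniform set of operations a device offers. */
struct toy_netdev_ops {
    int (*start_xmit)(struct toy_netdev *dev, const void *frame, size_t len);
};

/* Device interface layer: the uniform description of one device. */
struct toy_netdev {
    const char *name;
    const struct toy_netdev_ops *ops;
    unsigned long tx_packets;
    unsigned long tx_bytes;
};

/* Device driver function layer: one concrete driver's transmit routine.
 * A real driver would hand the frame to the hardware at this point. */
static int toy_usbnic_start_xmit(struct toy_netdev *dev,
                                 const void *frame, size_t len)
{
    (void)frame;
    dev->tx_packets++;
    dev->tx_bytes += len;
    return 0;
}

static const struct toy_netdev_ops toy_usbnic_ops = {
    .start_xmit = toy_usbnic_start_xmit,
};

/* Protocol interface layer: upper protocols call this single entry point
 * and never need to know which driver sits behind dev->ops. */
static int toy_queue_xmit(struct toy_netdev *dev,
                          const void *frame, size_t len)
{
    assert(dev != NULL && dev->ops != NULL && dev->ops->start_xmit != NULL);
    return dev->ops->start_xmit(dev, frame, len);
}
```

Swapping in a different NIC means pointing `ops` at a different table; the caller of `toy_queue_xmit()` stays unchanged, which is the device independence that layer 1) is meant to provide.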

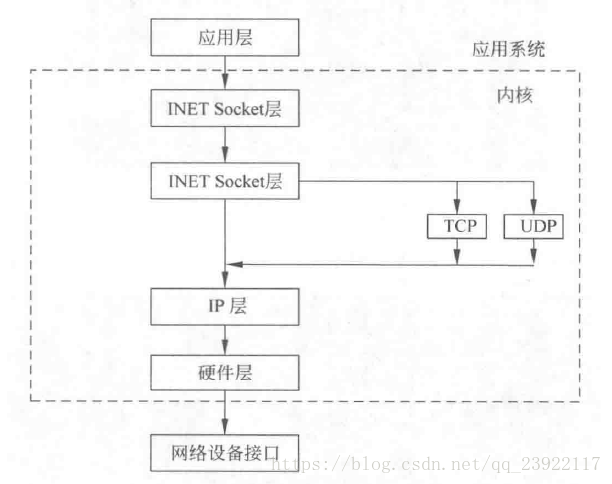

Below is a diagram of the Linux network protocol stack:

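That is enough for now; consult other references for the details. Below we paste the driver code directly. What we need to write is a USB client driver, and this driver belongs to the network subsystem.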

#include <linux/init.h>

#include <linux/module.h>

#include <linux/slab.h>

#include <linux/sched.h>

#include <linux/stddef.h>

#include <linux/netdevice.h>

#include <linux/etherdevice.h>

#include <linux/ethtool.h>

#include <linux/mii.h>

#include <linux/usb.h>

#include <linux/usb/usbnet.h>

#include <linux/crc32.h>

#include <linux/ctype.h>

#include <linux/skbuff.h>

#include <linux/version.h>

#include <linux/delay.h>

/* control requests */

#define DM_READ_REGS 0x00

#define DM_WRITE_REGS 0x01

#define DM_READ_MEMS 0x02

#define DM_WRITE_REG 0x03

#define DM_WRITE_MEMS 0x05

#define DM_WRITE_MEM 0x07

/* registers */

#define DM_NET_CTRL 0x00

#define DM_RX_CTRL 0x05

#define DM_FLOW_CTRL 0x0a

#define DM_SHARED_CTRL 0x0b

#define DM_SHARED_ADDR 0x0c

#define DM_SHARED_DATA 0x0d /* low + high */

#define DM_EE_PHY_L 0x0d

#define DM_EE_PHY_H 0x0e

#define DM_WAKEUP_CTRL 0x0f

#define DM_PHY_ADDR 0x10 /* 6 bytes */

#define DM_MCAST_ADDR 0x16 /* 8 bytes */

#define DM_GPR_CTRL 0x1e

#define DM_GPR_DATA 0x1f

#define DM_PID 0x2a

#define DM_XPHY_CTRL 0x2e

#define DM_TX_CRC_CTRL 0x31

#define DM_RX_CRC_CTRL 0x32

#define DM_SMIREG 0x91

#define USB_CTRL 0xf4

#define PHY_SPEC_CFG 20

#define DM_TXRX_M 0x5C

#define MD96XX_EEPROM_MAGIC 0x9620

#define DM_MAX_MCAST 64

#define DM_MCAST_SIZE 8

#define DM_EEPROM_LEN 256

#define DM_TX_OVERHEAD 2 /* 2 byte header */

#define DM_RX_OVERHEAD_9601 7 /* 3 byte header + 4 byte crc tail */

#define DM_RX_OVERHEAD 8 /* 4 byte header + 4 byte crc tail */

#define DM_TIMEOUT 1000

#define DM_MODE9621 0x80

#define DM_TX_CS_EN 0 /* Transmit Check Sum Control */

#define DM9621_PHY_ID 1 /* Stone add For kernel read phy register */

struct dm96xx_priv {

int flag_fail_count;

u8 mode_9621;

};

// Set the Ethernet address from the "ethmac=" kernel boot parameter

static unsigned char param_addr[6];

static int __init dm9621_set_mac(char *str) {

unsigned char addr[6];

unsigned int val;

int idx = 0;

char *p = str, *end;

while (*p && idx < 6) {

val = simple_strtoul(p, &end, 16);

if (end <= p) {

break;

} else {

addr[idx++] = val;

p = end;

if (*p == ':'|| *p == '-') {

p++;

} else {

break;

}

}

}

if (idx == 6) {

printk("Setup ethernet address to %pM\n", addr);

memcpy(param_addr, addr, 6);

}

return 1;

}

__setup("ethmac=", dm9621_set_mac);

static int dm_read(struct usbnet *dev, u8 reg, u16 length, void *data)

{

// usb_control_msg V.S. usb_submit_urb

// USB_CTRL_SET_TIMEOUT V.S. USB_CTRL_GET_TIMEOUT

return usb_control_msg(dev->udev,

usb_rcvctrlpipe(dev->udev, 0),

DM_READ_REGS,

USB_DIR_IN | USB_TYPE_VENDOR | USB_RECIP_DEVICE,

0, reg, data, length, USB_CTRL_SET_TIMEOUT);

}

static int dm_read_reg(struct usbnet *dev, u8 reg, u8 *value)

{

u16 v[2];

int ret;

ret = dm_read(dev, reg, 2, v);

*value = (u8)(v[0] & 0xff);

return ret;

}

static int dm_write(struct usbnet *dev, u8 reg, u16 length, void *data)

{

return usb_control_msg(dev->udev,

usb_sndctrlpipe(dev->udev, 0),

DM_WRITE_REGS,

USB_DIR_OUT | USB_TYPE_VENDOR |USB_RECIP_DEVICE,

0, reg, data, length, USB_CTRL_SET_TIMEOUT);

}

static int dm_write_reg(struct usbnet *dev, u8 reg, u8 value)

{

return usb_control_msg(dev->udev,

usb_sndctrlpipe(dev->udev, 0),

DM_WRITE_REG,

USB_DIR_OUT | USB_TYPE_VENDOR |USB_RECIP_DEVICE,

value, reg, NULL, 0, USB_CTRL_SET_TIMEOUT);

}

static void dm_write_async_callback(struct urb *urb)

{

struct usb_ctrlrequest *req = (struct usb_ctrlrequest *)urb->context;

int status = urb->status;

if (status < 0)

printk(KERN_DEBUG "dm_write_async_callback() failed with %d\n", status);

kfree(req);

usb_free_urb(urb);

}

static void dm_write_async_helper(struct usbnet *dev, u8 reg, u8 value,

u16 length, void *data)

{

struct usb_ctrlrequest *req;

struct urb *urb;

int status;

urb = usb_alloc_urb(0, GFP_ATOMIC);

if (!urb) {

printk("Error allocating URB in dm_write_async_helper!\n");

return;

}

req = kmalloc(sizeof(struct usb_ctrlrequest), GFP_ATOMIC);

if (!req) {

printk("Failed to allocate memory for control request\n");

usb_free_urb(urb);

return;

}

req->bRequestType = USB_DIR_OUT | USB_TYPE_VENDOR | USB_RECIP_DEVICE;

req->bRequest = length ? DM_WRITE_REGS : DM_WRITE_REG;

req->wValue = cpu_to_le16(value);

req->wIndex = cpu_to_le16(reg);

req->wLength = cpu_to_le16(length);

usb_fill_control_urb(urb, dev->udev,

usb_sndctrlpipe(dev->udev, 0),

(void *)req, data, length,

dm_write_async_callback, req);

status = usb_submit_urb(urb, GFP_ATOMIC);

if (status < 0) {

netdev_err(dev->net, "Error submitting the control message: status=%d\n",

status);

kfree(req);

usb_free_urb(urb);

}

}

static void dm_write_reg_async(struct usbnet *dev, u8 reg, u8 value)

{

printk("dm_write_reg_async() reg=0x%02x value=0x%02x\n", reg, value);

dm_write_async_helper(dev, reg, value, 0, NULL);

}

static void dm_write_async(struct usbnet *dev, u8 reg, u16 length, void *data)

{

printk("dm_write_async() reg=0x%02x length=%d\n", reg, length);

dm_write_async_helper(dev, reg, 0, length, data);

}

static int dm_write_shared_word(struct usbnet *dev, int phy, u8 reg, __le16 value)

{

int ret, i;

mutex_lock(&dev->phy_mutex);

ret = dm_write(dev, DM_SHARED_DATA, 2, &value);

if (ret < 0)

goto out;

dm_write_reg(dev, DM_SHARED_ADDR, phy ? (reg | 0x40) : reg);

if (!phy)

dm_write_reg(dev, DM_SHARED_CTRL, 0x10);

dm_write_reg(dev, DM_SHARED_CTRL, phy ? 0x0a : 0x12);

dm_write_reg(dev, DM_SHARED_CTRL, 0x10);

for (i = 0; i < DM_TIMEOUT; i++) {

u8 tmp;

udelay(1);

ret = dm_read_reg(dev, DM_SHARED_CTRL, &tmp);

if (ret < 0)

goto out;

/* ready */

if ((tmp & 1) == 0)

break;

}

if (i == DM_TIMEOUT) {

printk("%s write timed out!\n", phy ? "phy" : "eeprom");

ret = -EIO;

goto out;

}

dm_write_reg(dev, DM_SHARED_CTRL, 0x0);

out:

mutex_unlock(&dev->phy_mutex);

return ret;

}

static int dm_read_shared_word(struct usbnet *dev, int phy, u8 reg, __le16 *value)

{

u16 v[2];

int ret, i;

mutex_lock(&dev->phy_mutex);

dm_write_reg(dev, DM_SHARED_ADDR, phy ? (reg | 0x40) : reg);

dm_write_reg(dev, DM_SHARED_CTRL, phy ? 0xc : 0x4);

for (i = 0; i < DM_TIMEOUT; i++) {

u8 tmp;

udelay(1);

ret = dm_read_reg(dev, DM_SHARED_CTRL, &tmp);

if (ret < 0)

goto out;

/* ready */

if ((tmp & 1) == 0)

break;

}

if (i == DM_TIMEOUT) {

printk("%s read timed out!\n", phy ? "phy" : "eeprom");

ret = -EIO;

goto out;

}

dm_write_reg(dev, DM_SHARED_CTRL, 0x0);

ret = dm_read(dev, DM_SHARED_DATA, 2, v);

*value = v[0];

out:

mutex_unlock(&dev->phy_mutex);

return ret;

}

static int dm9621_mdio_read(struct net_device *netdev, int phy_id, int loc)

{

struct usbnet *dev = netdev_priv(netdev);

__le16 res;

dm_read_shared_word(dev, phy_id, loc, &res);

return le16_to_cpu(res);

}

static void dm9621_mdio_write(struct net_device *netdev, int phy_id, int loc, int val)

{

struct usbnet *dev = netdev_priv(netdev);

__le16 res = cpu_to_le16(val);

int mdio_val;

dm_write_shared_word(dev, phy_id, loc, res);

mdelay(1);

mdio_val = dm9621_mdio_read(netdev, phy_id, loc);

}

/***************************************************************************************************/

static void dm9621_get_drvinfo(struct net_device *net, struct ethtool_drvinfo *info)

{

/* Inherit standard device info */

usbnet_get_drvinfo(net, info);

info->eedump_len = DM_EEPROM_LEN;

}

static u32 dm9621_get_link(struct net_device *net)

{

struct usbnet *dev = netdev_priv(net);

return mii_link_ok(&dev->mii);

}

static int dm_write_eeprom_word(struct usbnet *dev, int phy, u8 offset, u8 value)

{

int ret, i;

u8 reg,dloc,tmp_H,tmp_L;

__le16 eeword;

//devwarn(dev, "offset = 0x%x value = 0x%x ", offset, value);

/* hank: from offset, determine the eeprom word register location, reg */

reg = (offset >> 1) & 0xff;

/* hank: high/low byte by odd/even of offset */

dloc = (offset & 0x01) ? DM_EE_PHY_H : DM_EE_PHY_L;

/* retrieve high and low byte from the corresponding reg*/

ret = dm_read_shared_word(dev, 0, reg, &eeword);

//devwarn(dev, "reg = 0x%x dloc = 0x%x eeword = 0x%4x", reg, dloc, eeword);

tmp_H = (eeword & 0xff);

tmp_L = (eeword >> 8);

printk("tmp_L = 0x%2x tmp_H = 0x%2x eeword = 0x%4x\n", tmp_L, tmp_H, eeword);

/* determine new high and low byte */

if (offset & 0x01) {

tmp_L = value;

} else {

tmp_H = value;

}

//printk("updated new: tmp_L =0x%2x tmp_H =0x%2x\n", tmp_L,tmp_H);

mutex_lock(&dev->phy_mutex);

/* hank: write low byte data first to eeprom reg */

// dm_write(dev, (offset & 0x01)? DM_EE_PHY_H:DM_EE_PHY_L, 1, &value);

dm_write(dev,DM_EE_PHY_L, 1, &tmp_H);

/* high byte will be zero */

//(offset & 0x01)? (value = eeword << 8):(value = eeword >> 8);

/* write the unmodified 8 bits back to its original high/low byte reg */

dm_write(dev,DM_EE_PHY_H, 1, &tmp_L);

if (ret < 0)

goto out;

/* hank : write word location to reg 0x0c */

ret = dm_write_reg(dev, DM_SHARED_ADDR, reg);

if (!phy)

dm_write_reg(dev, DM_SHARED_CTRL, 0x10);

dm_write_reg(dev, DM_SHARED_CTRL, 0x12);

dm_write_reg(dev, DM_SHARED_CTRL, 0x10);

for (i = 0; i < DM_TIMEOUT; i++) {

u8 tmp;

udelay(1);

ret = dm_read_reg(dev, DM_SHARED_CTRL, &tmp);

if (ret < 0)

goto out;

/* ready */

if ((tmp & 1) == 0)

break;

}

if (i == DM_TIMEOUT) {

printk("%s write timed out!\n", phy ? "phy" : "eeprom");

ret = -EIO;

goto out;

}

//dm_write_reg(dev, DM_SHARED_CTRL, 0x0);

out:

mutex_unlock(&dev->phy_mutex);

return ret;

}

static int dm_read_eeprom_word(struct usbnet *dev, u8 offset, void *value)

{

return dm_read_shared_word(dev, 0, offset, value);

}

static int dm9621_get_eeprom_len(struct net_device *dev)

{

return DM_EEPROM_LEN;

}

static int dm9621_get_eeprom(struct net_device *net, struct ethtool_eeprom *eeprom, u8 * data)

{

struct usbnet *dev = netdev_priv(net);

__le16 *ebuf = (__le16 *) data;

int i;

/* access is 16bit */

if ((eeprom->offset % 2) || (eeprom->len % 2))

return -EINVAL;

for (i = 0; i < eeprom->len / 2; i++) {

if (dm_read_eeprom_word(dev, eeprom->offset / 2 + i, &ebuf[i]) < 0)

return -EINVAL;

}

return 0;

}

static int dm9621_set_eeprom(struct net_device *net,struct ethtool_eeprom *eeprom, u8 *data)

{

struct usbnet *dev = netdev_priv(net);

printk("EEPROM: magic = 0x%x offset =0x%x data = 0x%x\n",

eeprom->magic, eeprom->offset,*data);

if (eeprom->magic != MD96XX_EEPROM_MAGIC) {

printk("EEPROM: magic value mismatch, magic = 0x%x\n", eeprom->magic);

return -EINVAL;

}

if (dm_write_eeprom_word(dev, 0, eeprom->offset, *data) < 0)

return -EINVAL;

return 0;

}

#define DM_LINKEN (1<<5)

#define DM_MAGICEN (1<<3)

#define DM_LINKST (1<<2)

#define DM_MAGICST (1<<0)

static void dm9621_get_wol(struct net_device *net, struct ethtool_wolinfo *wolinfo)

{

struct usbnet *dev = netdev_priv(net);

u8 opt;

if (dm_read_reg(dev, DM_WAKEUP_CTRL, &opt) < 0) {

wolinfo->supported = 0;

wolinfo->wolopts = 0;

return;

}

wolinfo->supported = WAKE_PHY | WAKE_MAGIC;

wolinfo->wolopts = 0;

if (opt & DM_LINKEN)

wolinfo->wolopts |= WAKE_PHY;

if (opt & DM_MAGICEN)

wolinfo->wolopts |= WAKE_MAGIC;

}

static int dm9621_set_wol(struct net_device *net, struct ethtool_wolinfo *wolinfo)

{

struct usbnet *dev = netdev_priv(net);

u8 opt = 0;

if (wolinfo->wolopts & WAKE_PHY)

opt |= DM_LINKEN;

if (wolinfo->wolopts & WAKE_MAGIC)

opt |= DM_MAGICEN;

dm_write_reg(dev, DM_NET_CTRL, 0x48); // enable WAKEEN

//dm_write_reg(dev, 0x92, 0x3f); //keep clock on Hank Jun 30

return dm_write_reg(dev, DM_WAKEUP_CTRL, opt);

}

static struct ethtool_ops dm9621_ethtool_ops = {

.get_drvinfo = dm9621_get_drvinfo,

.get_link = dm9621_get_link,

.get_msglevel = usbnet_get_msglevel,

.set_msglevel = usbnet_set_msglevel,

.get_eeprom_len = dm9621_get_eeprom_len,

.get_eeprom = dm9621_get_eeprom,

.set_eeprom = dm9621_set_eeprom,

.get_settings = usbnet_get_settings,

.set_settings = usbnet_set_settings,

.nway_reset = usbnet_nway_reset,

.get_wol = dm9621_get_wol,

.set_wol = dm9621_set_wol,

};

/***************************************************************************************************/

static int dm9621_ioctl(struct net_device *net, struct ifreq *rq, int cmd)

{

struct usbnet *dev = netdev_priv(net);

return generic_mii_ioctl(&dev->mii, if_mii(rq), cmd, NULL);

}

static void dm9621_set_multicast(struct net_device *net)

{

struct usbnet *dev = netdev_priv(net);

/* We use the 20 byte dev->data for our 8 byte filter buffer

* to avoid allocating memory that is tricky to free later */

u8 *hashes = (u8 *) & dev->data;

u8 rx_ctl = 0x31;

memset(hashes, 0x00, DM_MCAST_SIZE);

hashes[DM_MCAST_SIZE - 1] |= 0x80; /* broadcast address */

if (net->flags & IFF_PROMISC) {

rx_ctl |= 0x02;

#if LINUX_VERSION_CODE > KERNEL_VERSION(2,6,33)

} else if (net->flags & IFF_ALLMULTI || netdev_mc_count(net) > DM_MAX_MCAST) {

rx_ctl |= 0x8;

} else if (!netdev_mc_empty(net)) {

struct netdev_hw_addr *ha;

netdev_for_each_mc_addr(ha, net) {

u32 crc = crc32_le(~0, ha->addr, ETH_ALEN) & 0x3f;

hashes[crc>>3] |= 1 << (crc & 0x7);

}

#else //LINUX_VERSION_CODE <= KERNEL_VERSION(2,6,33)

} else if (net->flags & IFF_ALLMULTI || net->mc_count > DM_MAX_MCAST) {

rx_ctl |= 0x08;

} else if (net->mc_count) {

struct dev_mc_list *mc_list = net->mc_list;

int i;

for (i = 0; i < net->mc_count; i++, mc_list = mc_list->next) {

u32 crc = crc32_le(~0, mc_list->dmi_addr, ETH_ALEN) & 0x3f;

hashes[crc>>3] |= 1 << (crc & 0x7);

}

#endif

}

dm_write_async(dev, DM_MCAST_ADDR, DM_MCAST_SIZE, hashes);

dm_write_reg_async(dev, DM_RX_CTRL, rx_ctl);

}

static void __dm9621_set_mac_address(struct usbnet *dev)

{

dm_write_async(dev, DM_PHY_ADDR, ETH_ALEN, dev->net->dev_addr);

}

static int dm9621_set_mac_address(struct net_device *net, void *p)

{

struct sockaddr *addr = p;

struct usbnet *dev = netdev_priv(net);

printk("dm962x: Set mac addr %pM\n", addr->sa_data);

if (!is_valid_ether_addr(addr->sa_data)) {

printk("not setting invalid mac address %pM\n", addr->sa_data);

return -EINVAL;

}

memcpy(net->dev_addr, addr->sa_data, net->addr_len);

__dm9621_set_mac_address(dev);

return 0;

}

#if LINUX_VERSION_CODE >= KERNEL_VERSION(2,6,31)

static const struct net_device_ops vm_netdev_ops= { // the usbnet_* handlers are implemented in usbnet.c

.ndo_open = usbnet_open,

.ndo_stop = usbnet_stop,

.ndo_start_xmit = usbnet_start_xmit,

.ndo_tx_timeout = usbnet_tx_timeout,

.ndo_change_mtu = usbnet_change_mtu,

.ndo_validate_addr = eth_validate_addr,

.ndo_do_ioctl = dm9621_ioctl,

#if LINUX_VERSION_CODE >= KERNEL_VERSION(3,2,0)

.ndo_set_rx_mode = dm9621_set_multicast,

#else

.ndo_set_multicast_list = dm9621_set_multicast,

#endif

.ndo_set_mac_address = dm9621_set_mac_address,

};

#endif

/***************************************************************************************************/

int

dm9621_bind(struct usbnet *dev, struct usb_interface *intf)

{

const unsigned char *mac_src;

struct dm96xx_priv *priv;

u16 pid[2];

u8 temp, val;

int mdio_val, i, ret;

// Get the USB endpoint information from the interface

ret = usbnet_get_endpoints(dev, intf);

if(ret)

goto out;

// Fill in the usbnet structure

#if LINUX_VERSION_CODE >= KERNEL_VERSION(2,6,31)

dev->net->netdev_ops = &vm_netdev_ops;

dev->net->ethtool_ops = &dm9621_ethtool_ops;

#else

dev->net->do_ioctl = dm9621_ioctl;

dev->net->ethtool_ops = &dm9621_ethtool_ops;

dev->net->set_multicast = dm9621_set_multicast;

#endif

dev->net->hard_header_len += DM_TX_OVERHEAD;

dev->hard_mtu = dev->net->mtu + dev->net->hard_header_len;

dev->rx_urb_size = dev->hard_mtu + ETH_HLEN + DM_RX_OVERHEAD + 1;

dev->mii.dev = dev->net;

dev->mii.mdio_read = dm9621_mdio_read;

dev->mii.mdio_write = dm9621_mdio_write;

dev->mii.phy_id_mask = 0x1f;

dev->mii.reg_num_mask = 0x1f;

dev->mii.phy_id = DM9621_PHY_ID;

// JJ1

if((ret = dm_read_reg(dev, 0x29, &val)) >= 0)

printk("dm962x: dm_read_reg() 0x29 0x%02x\n", val);

else

printk("dm962x: dm_read_reg() 0x29 failed %d\n", ret);

if((ret = dm_read_reg(dev, 0x28, &val)) >= 0)

printk("dm962x: dm_read_reg() 0x28 0x%02x\n", val);

else

printk("dm962x: dm_read_reg() 0x28 failed %d\n", ret);

if((ret = dm_read_reg(dev, 0x2b, &val)) >= 0)

printk("dm962x: dm_read_reg() 0x2b 0x%02x\n", val);

else

printk("dm962x: dm_read_reg() 0x2b failed %d\n", ret);

if((ret = dm_read_reg(dev, 0x2a, &val)) >= 0)

printk("dm962x: dm_read_reg() 0x2a 0x%02x\n", val);

else

printk("dm962x: dm_read_reg() 0x2a failed %d\n", ret);

// JJ3

if((ret = dm_read_reg(dev, 0xf2, &val)) >= 0)

printk("dm962x: dm_read_reg() 0xf2 0x%02x\n", val);

else

printk("dm962x: dm_read_reg() 0xf2 failed %d\n", ret);

// reset

dm_write_reg(dev, DM_NET_CTRL, 1);

udelay(20);

// Stone add Enable "MAC layer" Flow Control, TX Pause Packet Enable

dm_write_reg(dev, DM_FLOW_CTRL, 0x29);

// Stone add Enable "PHY layer" Flow Control support (phy register 0x04 bit 10)

temp = dm9621_mdio_read(dev->net, dev->mii.phy_id, 0x04);

dm9621_mdio_write(dev->net, dev->mii.phy_id, 0x04, temp | 0x400);

// Add V1.1, Enable auto link while plug in RJ45

dm_write_reg(dev, USB_CTRL, 0x20);

// try to using param addr at first

if(is_valid_ether_addr(param_addr)){

mac_src = "param data";

memcpy(dev->net->dev_addr, param_addr, 6);

__dm9621_set_mac_address(dev);

}

else{

// try reading the node address from the attached EEPROM

mac_src = "eeprom";

for(i = 0; i < 6; i += 2)

dm_read_eeprom_word(dev, i / 2, dev->net->dev_addr + i);

if(is_valid_ether_addr(dev->net->dev_addr)){ // check the address just read from the EEPROM

__dm9621_set_mac_address(dev);

}

else{

// try reading from mac

mac_src = "chip";

if(dm_read(dev, DM_PHY_ADDR, ETH_ALEN, dev->net->dev_addr) < 0){

printk(KERN_ERR "Error reading MAC address\n");

ret = -ENODEV;

goto out;

}

}

}

if(!is_valid_ether_addr(dev->net->dev_addr)){

printk("dm962x: Invalid ethernet MAC address. Please set using ifconfig\n");

} else {

printk("dm962x: ethernet MAC address %pM (%s)\n", dev->net->dev_addr, mac_src);

}

// read SMI mode register

priv = dev->driver_priv = kmalloc(sizeof(struct dm96xx_priv), GFP_ATOMIC);

if (!priv) {

printk("Failed to allocate memory for dm96xx_priv\n");

ret = -ENOMEM;

goto out;

}

// work-around for 9621 mode

#if 0

printk("dm962x: Fixme: work around for 9621 mode\n");

printk("dm962x: Add tx_fixup() debug...\n");

#endif

dm_write_reg(dev, DM_MCAST_ADDR, 0); // clear data bus to 0s

dm_read_reg(dev, DM_MCAST_ADDR, &temp); // clear data bus to 0s

priv->flag_fail_count = 0;

priv->mode_9621 = 0; /* defined default in case the SMI register read below fails */

// Must clear data bus before we can read the 'MODE9621' bit

ret = dm_read_reg(dev, DM_SMIREG, &temp);

if (ret < 0) {

printk(KERN_ERR "dm962x: Error read SMI register\n");

}

else priv->mode_9621 = temp & DM_MODE9621;

printk(KERN_WARNING "dm962x: 9621 Mode = %d\n", priv->mode_9621);

// Need to check the Chipset version (register 0x5c is 0x02?)

dm_read_reg(dev, DM_TXRX_M, &temp);

if (temp == 0x02) {

dm_read_reg(dev, 0x3f, &temp);

temp |= 0x80;

dm_write_reg(dev, 0x3f, temp);

}

//Stone add for check Product ID == 0x1269

ret = dm_read(dev, DM_PID, 2, pid);

if (pid[0] == 0x1269)

dm_write_reg(dev, DM_SMIREG, 0xa0);

if (pid[0] == 0x0269)

dm_write_reg(dev, DM_SMIREG, 0xa0);

/* power up phy */

dm_write_reg(dev, DM_GPR_CTRL, 1);

dm_write_reg(dev, DM_GPR_DATA, 0);

/* Init tx/rx checksum */

#if DM_TX_CS_EN

dm_write_reg(dev, DM_TX_CRC_CTRL, 7);

#endif

dm_write_reg(dev, DM_RX_CRC_CTRL, 2);

/* receive broadcast packets */

dm9621_set_multicast(dev->net);

dm9621_mdio_write(dev->net, dev->mii.phy_id, MII_BMCR, BMCR_RESET);

/* Hank add, workaround for a compatibility issue (10M power control) */

dm9621_mdio_write(dev->net, dev->mii.phy_id, PHY_SPEC_CFG, 0x800);

mdio_val = dm9621_mdio_read(dev->net, dev->mii.phy_id, PHY_SPEC_CFG);

dm9621_mdio_write(dev->net, dev->mii.phy_id, MII_ADVERTISE,

ADVERTISE_ALL | ADVERTISE_CSMA | ADVERTISE_PAUSE_CAP);

mii_nway_restart(&dev->mii);

out:

return ret;

}

/* cleanup device ... can sleep, but can't fail */

void

dm9621_unbind(struct usbnet *dev, struct usb_interface *intf)

{

struct dm96xx_priv* priv= dev->driver_priv;

printk("dm9621_unbind():\n");

printk("flag_fail_count %lu\n", (long unsigned int)priv->flag_fail_count);

kfree(dev->driver_priv); // displayed dev->.. above, then can free dev

#if LINUX_VERSION_CODE >= KERNEL_VERSION(2,6,31)

printk("rx_length_errors %lu\n",dev->net->stats.rx_length_errors);

printk("rx_over_errors %lu\n",dev->net->stats.rx_over_errors );

printk("rx_crc_errors %lu\n",dev->net->stats.rx_crc_errors );

printk("rx_frame_errors %lu\n",dev->net->stats.rx_frame_errors );

printk("rx_fifo_errors %lu\n",dev->net->stats.rx_fifo_errors );

printk("rx_missed_errors %lu\n",dev->net->stats.rx_missed_errors);

#else

printk("rx_length_errors %lu\n",dev->stats.rx_length_errors);

printk("rx_over_errors %lu\n",dev->stats.rx_over_errors );

printk("rx_crc_errors %lu\n",dev->stats.rx_crc_errors );

printk("rx_frame_errors %lu\n",dev->stats.rx_frame_errors );

printk("rx_fifo_errors %lu\n",dev->stats.rx_fifo_errors );

printk("rx_missed_errors %lu\n",dev->stats.rx_missed_errors);

#endif

}

/* reset device ... can sleep */

int

dm9621_link_reset(struct usbnet *dev)

{

struct ethtool_cmd ecmd;

mii_check_media(&dev->mii, 1, 1);

mii_ethtool_gset(&dev->mii, &ecmd);

/* hank add */

dm9621_mdio_write(dev->net, dev->mii.phy_id, PHY_SPEC_CFG, 0x800);

printk("link_reset() speed: %d duplex: %d\n", ecmd.speed, ecmd.duplex);

return 0;

}

/* for status polling */

void

dm9621_status(struct usbnet *dev, struct urb *urb)

{

int link;

u8 *buf;

/* format:

b0: net status

b1: tx status 1

b2: tx status 2

b3: rx status

b4: rx overflow

b5: rx count

b6: tx count

b7: gpr

*/

if (urb->actual_length < 8)

return;

buf = urb->transfer_buffer;

link = !!(buf[0] & 0x40);

if (netif_carrier_ok(dev->net) != link) {

if (link) {

netif_carrier_on(dev->net);

usbnet_defer_kevent (dev, EVENT_LINK_RESET);

} else

netif_carrier_off(dev->net);

printk("Link Status is: %d\n", link);

}

}

/* fixup rx packet (strip framing) */

int

dm9621_rx_fixup(struct usbnet *dev, struct sk_buff *skb)

{

u8 status;

int len;

struct dm96xx_priv *priv = (struct dm96xx_priv *)dev->driver_priv;

/* 9601 format:

b0: rx status

b1: packet length (incl crc) low

b2: packet length (incl crc) high

b3..n-4: packet data

bn-3..bn: ethernet crc

*/

/* 9621 format:

one additional byte compared with 9601:

rx_flag in the first pos

*/

if (unlikely(skb->len < DM_RX_OVERHEAD_9601)) { // 20090623

printk("unexpected tiny rx frame\n");

return 0;

}

if (priv->mode_9621) {

/* mode 9621 */

if (unlikely(skb->len < DM_RX_OVERHEAD)) { // 20090623

printk("unexpected tiny rx frame\n");

return 0;

}

status = skb->data[1];

len = (skb->data[2] | (skb->data[3] << 8)) - 4;

if (unlikely(status & 0xbf)) {

#if LINUX_VERSION_CODE >= KERNEL_VERSION(2,6,31)

if (status & 0x01) dev->net->stats.rx_fifo_errors++;

if (status & 0x02) dev->net->stats.rx_crc_errors++;

if (status & 0x04) dev->net->stats.rx_frame_errors++;

if (status & 0x20) dev->net->stats.rx_missed_errors++;

if (status & 0x90) dev->net->stats.rx_length_errors++;

#else

if (status & 0x01) dev->stats.rx_fifo_errors++;

if (status & 0x02) dev->stats.rx_crc_errors++;

if (status & 0x04) dev->stats.rx_frame_errors++;

if (status & 0x20) dev->stats.rx_missed_errors++;

if (status & 0x90) dev->stats.rx_length_errors++;

#endif

return 0;

}

skb_pull(skb, 4);

skb_trim(skb, len);

}

else { /* mode 9601 (original driver code) */

status = skb->data[0];

len = (skb->data[1] | (skb->data[2] << 8)) - 4;

if (unlikely(status & 0xbf)) {

#if LINUX_VERSION_CODE >= KERNEL_VERSION(2,6,31)

if (status & 0x01) dev->net->stats.rx_fifo_errors++;

if (status & 0x02) dev->net->stats.rx_crc_errors++;

if (status & 0x04) dev->net->stats.rx_frame_errors++;

if (status & 0x20) dev->net->stats.rx_missed_errors++;

if (status & 0x90) dev->net->stats.rx_length_errors++;

#else

if (status & 0x01) dev->stats.rx_fifo_errors++;

if (status & 0x02) dev->stats.rx_crc_errors++;

if (status & 0x04) dev->stats.rx_frame_errors++;

if (status & 0x20) dev->stats.rx_missed_errors++;

if (status & 0x90) dev->stats.rx_length_errors++;

#endif

return 0;

}

skb_pull(skb, 3);

skb_trim(skb, len);

}

return 1;

}

/* fixup tx packet (add framing) */

struct sk_buff *

dm9621_tx_fixup(struct usbnet *dev, struct sk_buff *skb, gfp_t flags)

{

int len;

/* format:

b0: packet length low

b1: packet length high

b2..n: packet data

*/

len = skb->len;

if (skb_headroom(skb) < DM_TX_OVERHEAD) {

struct sk_buff *skb2;

skb2 = skb_copy_expand(skb, DM_TX_OVERHEAD, 0, flags);

dev_kfree_skb_any(skb);

skb = skb2;

if (!skb)

return NULL;

}

__skb_push(skb, DM_TX_OVERHEAD);

/* usbnet adds padding if length is a multiple of packet size if so, adjust length value in header */

if ((skb->len % dev->maxpacket) == 0)

len++;

skb->data[0] = len;

skb->data[1] = len >> 8;

/* hank, recalculate checksum of TCP */

return skb;

}

static const struct driver_info dm9621_info = {

.description= "Davicom DM9620 USB Ethernet",

.flags = FLAG_ETHER,

.bind = dm9621_bind,

.rx_fixup = dm9621_rx_fixup,

.tx_fixup = dm9621_tx_fixup,

.status = dm9621_status,

.link_reset = dm9621_link_reset,

.reset = dm9621_link_reset,

.unbind = dm9621_unbind,

};

const struct usb_device_id products[] = {

{

USB_DEVICE(0x07aa, 0x9601), /* Corega FEther USB-TXC */

.driver_info = (unsigned long)&dm9621_info,

}, {

USB_DEVICE(0x0a46, 0x9601), /* Davicom USB-100 */

.driver_info = (unsigned long)&dm9621_info,

}, {

USB_DEVICE(0x0a46, 0x6688), /* ZT6688 USB NIC */

.driver_info = (unsigned long)&dm9621_info,

}, {

USB_DEVICE(0x0a46, 0x0268), /* ShanTou ST268 USB NIC */

.driver_info = (unsigned long)&dm9621_info,

}, {

USB_DEVICE(0x0a46, 0x8515), /* ADMtek ADM8515 USB NIC */

.driver_info = (unsigned long)&dm9621_info,

}, {

USB_DEVICE(0x0a47, 0x9601), /* Hirose USB-100 */

.driver_info = (unsigned long)&dm9621_info,

}, {

USB_DEVICE(0x0a46, 0x9620), /* Davicom 9620 */

.driver_info = (unsigned long)&dm9621_info,

}, {

USB_DEVICE(0x0a46, 0x9621), /* Davicom 9621 */

.driver_info = (unsigned long)&dm9621_info,

}, {

USB_DEVICE(0x0a46, 0x9622), /* Davicom 9622 */

.driver_info = (unsigned long)&dm9621_info,

}, {

USB_DEVICE(0x0fe6, 0x8101), /* Davicom 9601 USB to Fast Ethernet Adapter */

.driver_info = (unsigned long)&dm9621_info,

}, {

USB_DEVICE(0x0a46, 0x1269), /* Davicom 9621A CDC */

.driver_info = (unsigned long)&dm9621_info,

}, {

USB_DEVICE(0x0a46, 0x0269), /* Davicom 9620A CDC */

.driver_info = (unsigned long)&dm9621_info,

},

{}, // END: the empty last entry marks the end of the table

};

MODULE_DEVICE_TABLE(usb, products);

struct usb_driver dm9621_driver = {

.name = "dm9620",

.id_table = products,

.probe = usbnet_probe, // called when a device matches this driver

.disconnect = usbnet_disconnect,// hot-unplug support

.suspend = usbnet_suspend, // the USB specification requires devices to support suspend

.resume = usbnet_resume,

};

/***************************************************************************************************/

static void __exit

dm9621_drv_exit(void)

{

usb_deregister(&dm9621_driver);

}

static int __init

dm9621_drv_init(void)

{

// Register the USB driver with the USB core

return usb_register(&dm9621_driver);

}

module_init(dm9621_drv_init);

module_exit(dm9621_drv_exit);

MODULE_LICENSE("GPL v2");
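The device-specific part of this driver is mostly the framing handled in dm9621_tx_fixup() and dm9621_rx_fixup(). The user-space sketch below restates the two layouts on plain byte buffers so they can be tried without hardware. It is an illustration under assumptions, not the driver's code: `toy_tx_header()` and `toy_rx_parse()` are hypothetical names, only the 9621-mode receive layout (4-byte header) is modelled, the extra length adjustment for frames that are a multiple of the USB packet size is left out, and the bounds check against the buffer size is an addition that the listing above does not perform.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define TOY_TX_OVERHEAD 2  /* 2-byte length header, like DM_TX_OVERHEAD */
#define TOY_RX_OVERHEAD 8  /* 4-byte header + 4-byte CRC, like DM_RX_OVERHEAD */

/* Fill the 2-byte little-endian length header that precedes a frame on
 * transmit. `out` must have room for TOY_TX_OVERHEAD bytes. */
static void toy_tx_header(uint8_t *out, uint16_t payload_len)
{
    assert(out != NULL);
    out[0] = (uint8_t)(payload_len & 0xff);
    out[1] = (uint8_t)(payload_len >> 8);
}

/* Parse a 9621-mode receive buffer laid out as
 *   [rx flag][rx status][len low][len high][data ...][4-byte CRC]
 * On success, point *payload at the data and return its length without
 * the CRC. Return -1 for a short buffer, an error status, or a length
 * field that does not fit in the buffer. */
static int toy_rx_parse(const uint8_t *buf, size_t buf_len,
                        const uint8_t **payload)
{
    uint8_t status;
    int len;

    assert(buf != NULL && payload != NULL);
    if (buf_len < TOY_RX_OVERHEAD)
        return -1;

    status = buf[1];
    len = (buf[2] | (buf[3] << 8)) - 4;   /* length field includes the CRC */

    if (status & 0xbf)                    /* same error mask as the driver */
        return -1;
    if (len < 0 || (size_t)len + TOY_RX_OVERHEAD > buf_len)
        return -1;

    *payload = buf + 4;
    return len;
}
```

In the driver the same steps are expressed with skb operations: `__skb_push()` makes room for the transmit header, and `skb_pull()` followed by `skb_trim()` strips the receive header and the CRC.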

The code above depends on usbnet.c. That file is already complete and needs no changes from us; it can be used as is. The original code follows:

// #define DEBUG // error path messages, extra info

// #define VERBOSE // more; success messages

#include <linux/module.h>

#include <linux/init.h>

#include <linux/netdevice.h>

#include <linux/etherdevice.h>

#include <linux/ctype.h>

#include <linux/ethtool.h>

#include <linux/workqueue.h>

#include <linux/mii.h>

#include <linux/usb.h>

#include <linux/usb/usbnet.h>

#include <linux/slab.h>

#include <linux/kernel.h>

#include <linux/pm_runtime.h>

#define DRIVER_VERSION "22-Aug-2005"

/*-------------------------------------------------------------------------*/

#define RX_MAX_QUEUE_MEMORY (60 * 1518)

#define RX_QLEN(dev) (((dev)->udev->speed == USB_SPEED_HIGH) ? \

(RX_MAX_QUEUE_MEMORY/(dev)->rx_urb_size) : 4)

#define TX_QLEN(dev) (((dev)->udev->speed == USB_SPEED_HIGH) ? \

(RX_MAX_QUEUE_MEMORY/(dev)->hard_mtu) : 4)

// reawaken network queue this soon after stopping; else watchdog barks

#define TX_TIMEOUT_JIFFIES (5*HZ)

// throttle rx/tx briefly after some faults, so khubd might disconnect()

// us (it polls at HZ/4 usually) before we report too many false errors.

#define THROTTLE_JIFFIES (HZ/8)

// between wakeups

#define UNLINK_TIMEOUT_MS 3

/*-------------------------------------------------------------------------*/

// randomly generated ethernet address

static u8 node_id [ETH_ALEN];

static const char driver_name [] = "usbnet";

/* use ethtool to change the level for any given device */

static int msg_level = -1;

module_param (msg_level, int, 0);

MODULE_PARM_DESC (msg_level, "Override default message level");

/*-------------------------------------------------------------------------*/

/* handles CDC Ethernet and many other network "bulk data" interfaces */

int usbnet_get_endpoints(struct usbnet *dev, struct usb_interface *intf)

{

int tmp;

struct usb_host_interface *alt = NULL;

struct usb_host_endpoint *in = NULL, *out = NULL;

struct usb_host_endpoint *status = NULL;

for (tmp = 0; tmp < intf->num_altsetting; tmp++) {

unsigned ep;

in = out = status = NULL;

alt = intf->altsetting + tmp;

/* take the first altsetting with in-bulk + out-bulk;

* remember any status endpoint, just in case;

* ignore other endpoints and altsettings.

*/

for (ep = 0; ep < alt->desc.bNumEndpoints; ep++) {

struct usb_host_endpoint *e;

int intr = 0;

e = alt->endpoint + ep;

switch (e->desc.bmAttributes) {

case USB_ENDPOINT_XFER_INT:

if (!usb_endpoint_dir_in(&e->desc))

continue;

intr = 1;

/* FALLTHROUGH */

case USB_ENDPOINT_XFER_BULK:

break;

default:

continue;

}

if (usb_endpoint_dir_in(&e->desc)) {

if (!intr && !in)

in = e;

else if (intr && !status)

status = e;

} else {

if (!out)

out = e;

}

}

if (in && out)

break;

}

if (!alt || !in || !out)

return -EINVAL;

if (alt->desc.bAlternateSetting != 0 ||

!(dev->driver_info->flags & FLAG_NO_SETINT)) {

tmp = usb_set_interface (dev->udev, alt->desc.bInterfaceNumber,

alt->desc.bAlternateSetting);

if (tmp < 0)

return tmp;

}

dev->in = usb_rcvbulkpipe (dev->udev,

in->desc.bEndpointAddress & USB_ENDPOINT_NUMBER_MASK);

dev->out = usb_sndbulkpipe (dev->udev,

out->desc.bEndpointAddress & USB_ENDPOINT_NUMBER_MASK);

dev->status = status;

return 0;

}

EXPORT_SYMBOL_GPL(usbnet_get_endpoints);

int usbnet_get_ethernet_addr(struct usbnet *dev, int iMACAddress)

{

int tmp, i;

unsigned char buf [13];

tmp = usb_string(dev->udev, iMACAddress, buf, sizeof buf);

if (tmp != 12) {

dev_dbg(&dev->udev->dev,

"bad MAC string %d fetch, %d\n", iMACAddress, tmp);

if (tmp >= 0)

tmp = -EINVAL;

return tmp;

}

for (i = tmp = 0; i < 6; i++, tmp += 2)

dev->net->dev_addr [i] =

(hex_to_bin(buf[tmp]) << 4) + hex_to_bin(buf[tmp + 1]);

return 0;

}

EXPORT_SYMBOL_GPL(usbnet_get_ethernet_addr);

static void intr_complete (struct urb *urb);

static int init_status (struct usbnet *dev, struct usb_interface *intf)

{

char *buf = NULL;

unsigned pipe = 0;

unsigned maxp;

unsigned period;

if (!dev->driver_info->status)

return 0;

pipe = usb_rcvintpipe (dev->udev,

dev->status->desc.bEndpointAddress

& USB_ENDPOINT_NUMBER_MASK);

maxp = usb_maxpacket (dev->udev, pipe, 0);

/* avoid 1 msec chatter: min 8 msec poll rate */

period = max ((int) dev->status->desc.bInterval,

(dev->udev->speed == USB_SPEED_HIGH) ? 7 : 3);

buf = kmalloc (maxp, GFP_KERNEL);

if (buf) {

dev->interrupt = usb_alloc_urb (0, GFP_KERNEL);

if (!dev->interrupt) {

kfree (buf);

return -ENOMEM;

} else {

usb_fill_int_urb(dev->interrupt, dev->udev, pipe,

buf, maxp, intr_complete, dev, period);

dev->interrupt->transfer_flags |= URB_FREE_BUFFER;

dev_dbg(&intf->dev,

"status ep%din, %d bytes period %d\n",

usb_pipeendpoint(pipe), maxp, period);

}

}

return 0;

}

/* Passes this packet up the stack, updating its accounting.

* Some link protocols batch packets, so their rx_fixup paths

* can return clones as well as just modify the original skb.

*/

void usbnet_skb_return (struct usbnet *dev, struct sk_buff *skb)

{

int status;

if (test_bit(EVENT_RX_PAUSED, &dev->flags)) {

skb_queue_tail(&dev->rxq_pause, skb);

return;

}

skb->protocol = eth_type_trans (skb, dev->net);

dev->net->stats.rx_packets++;

dev->net->stats.rx_bytes += skb->len;

netif_dbg(dev, rx_status, dev->net, "< rx, len %zu, type 0x%x\n",

skb->len + sizeof (struct ethhdr), skb->protocol);

memset (skb->cb, 0, sizeof (struct skb_data));

if (skb_defer_rx_timestamp(skb))

return;

status = netif_rx (skb);

if (status != NET_RX_SUCCESS)

netif_dbg(dev, rx_err, dev->net,

"netif_rx status %d\n", status);

}

EXPORT_SYMBOL_GPL(usbnet_skb_return);

/*-------------------------------------------------------------------------

*

* Network Device Driver (peer link to "Host Device", from USB host)

*

*-------------------------------------------------------------------------*/

int usbnet_change_mtu (struct net_device *net, int new_mtu)

{

struct usbnet *dev = netdev_priv(net);

int ll_mtu = new_mtu + net->hard_header_len;

int old_hard_mtu = dev->hard_mtu;

int old_rx_urb_size = dev->rx_urb_size;

if (new_mtu <= 0)

return -EINVAL;

// no second zero-length packet read wanted after mtu-sized packets

if ((ll_mtu % dev->maxpacket) == 0)

return -EDOM;

net->mtu = new_mtu;

dev->hard_mtu = net->mtu + net->hard_header_len;

if (dev->rx_urb_size == old_hard_mtu) {

dev->rx_urb_size = dev->hard_mtu;

if (dev->rx_urb_size > old_rx_urb_size)

usbnet_unlink_rx_urbs(dev);

}

return 0;

}

EXPORT_SYMBOL_GPL(usbnet_change_mtu);

/* The caller must hold list->lock */

static void __usbnet_queue_skb(struct sk_buff_head *list,

struct sk_buff *newsk, enum skb_state state)

{

struct skb_data *entry = (struct skb_data *) newsk->cb;

__skb_queue_tail(list, newsk);

entry->state = state;

}

/*-------------------------------------------------------------------------*/

/* some LK 2.4 HCDs oopsed if we freed or resubmitted urbs from

* completion callbacks. 2.5 should have fixed those bugs...

*/

static enum skb_state defer_bh(struct usbnet *dev, struct sk_buff *skb,

struct sk_buff_head *list, enum skb_state state)

{

unsigned long flags;

enum skb_state old_state;

struct skb_data *entry = (struct skb_data *) skb->cb;

spin_lock_irqsave(&list->lock, flags);

old_state = entry->state;

entry->state = state;

__skb_unlink(skb, list);

spin_unlock(&list->lock);

spin_lock(&dev->done.lock);

__skb_queue_tail(&dev->done, skb);

if (dev->done.qlen == 1)

tasklet_schedule(&dev->bh);

spin_unlock_irqrestore(&dev->done.lock, flags);

return old_state;

}

/* some work can't be done in tasklets, so we use keventd

*

* NOTE: annoying asymmetry: if it's active, schedule_work() fails,

* but tasklet_schedule() doesn't. hope the failure is rare.

*/

void usbnet_defer_kevent (struct usbnet *dev, int work)

{

set_bit (work, &dev->flags);

if (!schedule_work (&dev->kevent))

netdev_err(dev->net, "kevent %d may have been dropped\n", work);

else

netdev_dbg(dev->net, "kevent %d scheduled\n", work);

}

EXPORT_SYMBOL_GPL(usbnet_defer_kevent);

/*-------------------------------------------------------------------------*/

static void rx_complete (struct urb *urb);

static int rx_submit (struct usbnet *dev, struct urb *urb, gfp_t flags)

{

struct sk_buff *skb;

struct skb_data *entry;

int retval = 0;

unsigned long lockflags;

size_t size = dev->rx_urb_size;

skb = __netdev_alloc_skb_ip_align(dev->net, size, flags);

if (!skb) {

netif_dbg(dev, rx_err, dev->net, "no rx skb\n");

usbnet_defer_kevent (dev, EVENT_RX_MEMORY);

usb_free_urb (urb);

return -ENOMEM;

}

entry = (struct skb_data *) skb->cb;

entry->urb = urb;

entry->dev = dev;

entry->length = 0;

usb_fill_bulk_urb (urb, dev->udev, dev->in,

skb->data, size, rx_complete, skb);

spin_lock_irqsave (&dev->rxq.lock, lockflags);

if (netif_running (dev->net) &&

netif_device_present (dev->net) &&

!test_bit (EVENT_RX_HALT, &dev->flags) &&

!test_bit (EVENT_DEV_ASLEEP, &dev->flags)) {

switch (retval = usb_submit_urb (urb, GFP_ATOMIC)) {

case -EPIPE:

usbnet_defer_kevent (dev, EVENT_RX_HALT);

break;

case -ENOMEM:

usbnet_defer_kevent (dev, EVENT_RX_MEMORY);

break;

case -ENODEV:

netif_dbg(dev, ifdown, dev->net, "device gone\n");

netif_device_detach (dev->net);

break;

case -EHOSTUNREACH:

retval = -ENOLINK;

break;

default:

netif_dbg(dev, rx_err, dev->net,

"rx submit, %d\n", retval);

tasklet_schedule (&dev->bh);

break;

case 0:

__usbnet_queue_skb(&dev->rxq, skb, rx_start);

}

} else {

netif_dbg(dev, ifdown, dev->net, "rx: stopped\n");

retval = -ENOLINK;

}

spin_unlock_irqrestore (&dev->rxq.lock, lockflags);

if (retval) {

dev_kfree_skb_any (skb);

usb_free_urb (urb);

}

return retval;

}

/*-------------------------------------------------------------------------*/

static inline void rx_process (struct usbnet *dev, struct sk_buff *skb)

{

if (dev->driver_info->rx_fixup &&

!dev->driver_info->rx_fixup (dev, skb)) {

/* With RX_ASSEMBLE, rx_fixup() must update counters */

if (!(dev->driver_info->flags & FLAG_RX_ASSEMBLE))

dev->net->stats.rx_errors++;

goto done;

}

// else network stack removes extra byte if we forced a short packet

if (skb->len) {

/* all data was already cloned from skb inside the driver */

if (dev->driver_info->flags & FLAG_MULTI_PACKET)

dev_kfree_skb_any(skb);

else

usbnet_skb_return(dev, skb);

return;

}

netif_dbg(dev, rx_err, dev->net, "drop\n");

dev->net->stats.rx_errors++;

done:

skb_queue_tail(&dev->done, skb);

}

/*-------------------------------------------------------------------------*/

static void rx_complete (struct urb *urb)

{

struct sk_buff *skb = (struct sk_buff *) urb->context;

struct skb_data *entry = (struct skb_data *) skb->cb;

struct usbnet *dev = entry->dev;

int urb_status = urb->status;

enum skb_state state;

skb_put (skb, urb->actual_length);

state = rx_done;

entry->urb = NULL;

switch (urb_status) {

/* success */

case 0:

if (skb->len < dev->net->hard_header_len) {

state = rx_cleanup;

dev->net->stats.rx_errors++;

dev->net->stats.rx_length_errors++;

netif_dbg(dev, rx_err, dev->net,

"rx length %d\n", skb->len);

}

break;

/* stalls need manual reset. this is rare ... except that

* when going through USB 2.0 TTs, unplug appears this way.

* we avoid the highspeed version of the ETIMEDOUT/EILSEQ

* storm, recovering as needed.

*/

case -EPIPE:

dev->net->stats.rx_errors++;

usbnet_defer_kevent (dev, EVENT_RX_HALT);

// FALLTHROUGH

/* software-driven interface shutdown */

case -ECONNRESET: /* async unlink */

case -ESHUTDOWN: /* hardware gone */

netif_dbg(dev, ifdown, dev->net,

"rx shutdown, code %d\n", urb_status);

goto block;

/* we get controller i/o faults during khubd disconnect() delays.

* throttle down resubmits, to avoid log floods; just temporarily,

* so we still recover when the fault isn't a khubd delay.

*/

case -EPROTO:

case -ETIME:

case -EILSEQ:

dev->net->stats.rx_errors++;

if (!timer_pending (&dev->delay)) {

mod_timer (&dev->delay, jiffies + THROTTLE_JIFFIES);

netif_dbg(dev, link, dev->net,

"rx throttle %d\n", urb_status);

}

block:

state = rx_cleanup;

entry->urb = urb;

urb = NULL;

break;

/* data overrun ... flush fifo? */

case -EOVERFLOW:

dev->net->stats.rx_over_errors++;

// FALLTHROUGH

default:

state = rx_cleanup;

dev->net->stats.rx_errors++;

netif_dbg(dev, rx_err, dev->net, "rx status %d\n", urb_status);

break;

}

state = defer_bh(dev, skb, &dev->rxq, state);

if (urb) {

if (netif_running (dev->net) &&

!test_bit (EVENT_RX_HALT, &dev->flags) &&

state != unlink_start) {

rx_submit (dev, urb, GFP_ATOMIC);

usb_mark_last_busy(dev->udev);

return;

}

usb_free_urb (urb);

}

netif_dbg(dev, rx_err, dev->net, "no read resubmitted\n");

}

static void intr_complete (struct urb *urb)

{

struct usbnet *dev = urb->context;

int status = urb->status;

switch (status) {

/* success */

case 0:

dev->driver_info->status(dev, urb);

break;

/* software-driven interface shutdown */

case -ENOENT: /* urb killed */

case -ESHUTDOWN: /* hardware gone */

netif_dbg(dev, ifdown, dev->net,

"intr shutdown, code %d\n", status);

return;

/* NOTE: not throttling like RX/TX, since this endpoint

* already polls infrequently

*/

default:

netdev_dbg(dev->net, "intr status %d\n", status);

break;

}

if (!netif_running (dev->net))

return;

memset(urb->transfer_buffer, 0, urb->transfer_buffer_length);

status = usb_submit_urb (urb, GFP_ATOMIC);

if (status != 0)

netif_err(dev, timer, dev->net,

"intr resubmit --> %d\n", status);

}

/*-------------------------------------------------------------------------*/

void usbnet_pause_rx(struct usbnet *dev)

{

set_bit(EVENT_RX_PAUSED, &dev->flags);

netif_dbg(dev, rx_status, dev->net, "paused rx queue enabled\n");

}

EXPORT_SYMBOL_GPL(usbnet_pause_rx);

void usbnet_resume_rx(struct usbnet *dev)

{

struct sk_buff *skb;

int num = 0;

clear_bit(EVENT_RX_PAUSED, &dev->flags);

while ((skb = skb_dequeue(&dev->rxq_pause)) != NULL) {

usbnet_skb_return(dev, skb);

num++;

}

tasklet_schedule(&dev->bh);

netif_dbg(dev, rx_status, dev->net,

"paused rx queue disabled, %d skbs requeued\n", num);

}

EXPORT_SYMBOL_GPL(usbnet_resume_rx);

void usbnet_purge_paused_rxq(struct usbnet *dev)

{

skb_queue_purge(&dev->rxq_pause);

}

EXPORT_SYMBOL_GPL(usbnet_purge_paused_rxq);

/*-------------------------------------------------------------------------*/

// unlink pending rx/tx; completion handlers do all other cleanup

static int unlink_urbs (struct usbnet *dev, struct sk_buff_head *q)

{

unsigned long flags;

struct sk_buff *skb;

int count = 0;

spin_lock_irqsave (&q->lock, flags);

while (!skb_queue_empty(q)) {

struct skb_data *entry;

struct urb *urb;

int retval;

skb_queue_walk(q, skb) {

entry = (struct skb_data *) skb->cb;

if (entry->state != unlink_start)

goto found;

}

break;

found:

entry->state = unlink_start;

urb = entry->urb;

/*

* Get reference count of the URB to avoid it to be

* freed during usb_unlink_urb, which may trigger

* use-after-free problem inside usb_unlink_urb since

* usb_unlink_urb is always racing with .complete

* handler(include defer_bh).

*/

usb_get_urb(urb);

spin_unlock_irqrestore(&q->lock, flags);

// during some PM-driven resume scenarios,

// these (async) unlinks complete immediately

retval = usb_unlink_urb (urb);

if (retval != -EINPROGRESS && retval != 0)

netdev_dbg(dev->net, "unlink urb err, %d\n", retval);

else

count++;

usb_put_urb(urb);

spin_lock_irqsave(&q->lock, flags);

}

spin_unlock_irqrestore (&q->lock, flags);

return count;

}

// Flush all pending rx urbs

// minidrivers may need to do this when the MTU changes

void usbnet_unlink_rx_urbs(struct usbnet *dev)

{

if (netif_running(dev->net)) {

(void) unlink_urbs (dev, &dev->rxq);

tasklet_schedule(&dev->bh);

}

}

EXPORT_SYMBOL_GPL(usbnet_unlink_rx_urbs);

/*-------------------------------------------------------------------------*/

// precondition: never called in_interrupt

static void usbnet_terminate_urbs(struct usbnet *dev)

{

DECLARE_WAIT_QUEUE_HEAD_ONSTACK(unlink_wakeup);

DECLARE_WAITQUEUE(wait, current);

int temp;

/* ensure there are no more active urbs */

add_wait_queue(&unlink_wakeup, &wait);

set_current_state(TASK_UNINTERRUPTIBLE);

dev->wait = &unlink_wakeup;

temp = unlink_urbs(dev, &dev->txq) +

unlink_urbs(dev, &dev->rxq);

/* maybe wait for deletions to finish. */

while (!skb_queue_empty(&dev->rxq)

&& !skb_queue_empty(&dev->txq)

&& !skb_queue_empty(&dev->done)) {

schedule_timeout(msecs_to_jiffies(UNLINK_TIMEOUT_MS));

set_current_state(TASK_UNINTERRUPTIBLE);

netif_dbg(dev, ifdown, dev->net,

"waited for %d urb completions\n", temp);

}

set_current_state(TASK_RUNNING);

dev->wait = NULL;

remove_wait_queue(&unlink_wakeup, &wait);

}

int usbnet_stop(struct net_device *net)

{

struct usbnet *dev = netdev_priv(net);

struct driver_info *info = dev->driver_info;

int retval;

clear_bit(EVENT_DEV_OPEN, &dev->flags);

netif_stop_queue (net);

netif_info(dev, ifdown, dev->net,

"stop stats: rx/tx %lu/%lu, errs %lu/%lu\n",

net->stats.rx_packets, net->stats.tx_packets,

net->stats.rx_errors, net->stats.tx_errors);

/* allow minidriver to stop correctly (wireless devices to turn off

* radio etc) */

if (info->stop) {

retval = info->stop(dev);

if (retval < 0)

netif_info(dev, ifdown, dev->net,

"stop fail (%d) usbnet usb-%s-%s, %s\n",

retval,

dev->udev->bus->bus_name, dev->udev->devpath,

info->description);

}

if (!(info->flags & FLAG_AVOID_UNLINK_URBS))

usbnet_terminate_urbs(dev);

usb_kill_urb(dev->interrupt);

usbnet_purge_paused_rxq(dev);

/* deferred work (task, timer, softirq) must also stop.

* can't flush_scheduled_work() until we drop rtnl (later),

* else workers could deadlock; so make workers a NOP.

*/

dev->flags = 0;

del_timer_sync (&dev->delay);

tasklet_kill (&dev->bh);

if (info->manage_power)

info->manage_power(dev, 0);

else

usb_autopm_put_interface(dev->intf);

return 0;

}

EXPORT_SYMBOL_GPL(usbnet_stop);

/***************************************************************************************************/

// posts reads, and enables write queuing

// precondition: never called in_interrupt

int usbnet_open (struct net_device *net)

{

struct usbnet *dev = netdev_priv(net);

int retval;

struct driver_info *info = dev->driver_info;

if ((retval = usb_autopm_get_interface(dev->intf)) < 0) {

netif_info(dev, ifup, dev->net,

"resumption fail (%d) usbnet usb-%s-%s, %s\n",

retval,

dev->udev->bus->bus_name,

dev->udev->devpath,

info->description);

goto done_nopm;

}

// put into "known safe" state

if (info->reset && (retval = info->reset (dev)) < 0) {

netif_info(dev, ifup, dev->net,

"open reset fail (%d) usbnet usb-%s-%s, %s\n",

retval,

dev->udev->bus->bus_name,

dev->udev->devpath,

info->description);

goto done;

}

// insist peer be connected

if (info->check_connect && (retval = info->check_connect (dev)) < 0) {

netif_dbg(dev, ifup, dev->net, "can't open; %d\n", retval);

goto done;

}

/* start any status interrupt transfer */

if (dev->interrupt) {

retval = usb_submit_urb (dev->interrupt, GFP_KERNEL);

if (retval < 0) {

netif_err(dev, ifup, dev->net,

"intr submit %d\n", retval);

goto done;

}

}

set_bit(EVENT_DEV_OPEN, &dev->flags);

netif_start_queue (net);

netif_info(dev, ifup, dev->net,

"open: enable queueing (rx %d, tx %d) mtu %d %s framing\n",

(int)RX_QLEN(dev), (int)TX_QLEN(dev),

dev->net->mtu,

(dev->driver_info->flags & FLAG_FRAMING_NC) ? "NetChip" :

(dev->driver_info->flags & FLAG_FRAMING_GL) ? "GeneSys" :

(dev->driver_info->flags & FLAG_FRAMING_Z) ? "Zaurus" :

(dev->driver_info->flags & FLAG_FRAMING_RN) ? "RNDIS" :

(dev->driver_info->flags & FLAG_FRAMING_AX) ? "ASIX" :

"simple");

// delay posting reads until we're fully open

tasklet_schedule (&dev->bh);

if (info->manage_power) {

retval = info->manage_power(dev, 1);

if (retval < 0)

goto done_manage_power_error;

usb_autopm_put_interface(dev->intf);

}

return retval;

done_manage_power_error:

clear_bit(EVENT_DEV_OPEN, &dev->flags);

done:

usb_autopm_put_interface(dev->intf);

done_nopm:

return retval;

}

EXPORT_SYMBOL_GPL(usbnet_open);

/*-------------------------------------------------------------------------*/

/* ethtool methods; minidrivers may need to add some more, but

* they'll probably want to use this base set.

*/

int usbnet_get_settings (struct net_device *net, struct ethtool_cmd *cmd)

{

struct usbnet *dev = netdev_priv(net);

if (!dev->mii.mdio_read)

return -EOPNOTSUPP;

return mii_ethtool_gset(&dev->mii, cmd);

}

EXPORT_SYMBOL_GPL(usbnet_get_settings);

int usbnet_set_settings (struct net_device *net, struct ethtool_cmd *cmd)

{

struct usbnet *dev = netdev_priv(net);

int retval;

if (!dev->mii.mdio_write)

return -EOPNOTSUPP;

retval = mii_ethtool_sset(&dev->mii, cmd);

/* link speed/duplex might have changed */

if (dev->driver_info->link_reset)

dev->driver_info->link_reset(dev);

return retval;

}

EXPORT_SYMBOL_GPL(usbnet_set_settings);

u32 usbnet_get_link (struct net_device *net)

{

struct usbnet *dev = netdev_priv(net);

/* If a check_connect is defined, return its result */

if (dev->driver_info->check_connect)

return dev->driver_info->check_connect (dev) == 0;

/* if the device has mii operations, use those */

if (dev->mii.mdio_read)

return mii_link_ok(&dev->mii);

/* Otherwise, dtrt for drivers calling netif_carrier_{on,off} */

return ethtool_op_get_link(net);

}

EXPORT_SYMBOL_GPL(usbnet_get_link);

int usbnet_nway_reset(struct net_device *net)

{

struct usbnet *dev = netdev_priv(net);

if (!dev->mii.mdio_write)

return -EOPNOTSUPP;

return mii_nway_restart(&dev->mii);

}

EXPORT_SYMBOL_GPL(usbnet_nway_reset);

void usbnet_get_drvinfo (struct net_device *net, struct ethtool_drvinfo *info)

{

struct usbnet *dev = netdev_priv(net);

strlcpy (info->driver, dev->driver_name, sizeof info->driver);

strlcpy (info->version, DRIVER_VERSION, sizeof info->version);

strlcpy (info->fw_version, dev->driver_info->description,

sizeof info->fw_version);

usb_make_path (dev->udev, info->bus_info, sizeof info->bus_info);

}

EXPORT_SYMBOL_GPL(usbnet_get_drvinfo);

u32 usbnet_get_msglevel (struct net_device *net)

{

struct usbnet *dev = netdev_priv(net);

return dev->msg_enable;

}

EXPORT_SYMBOL_GPL(usbnet_get_msglevel);

void usbnet_set_msglevel (struct net_device *net, u32 level)

{

struct usbnet *dev = netdev_priv(net);

dev->msg_enable = level;

}

EXPORT_SYMBOL_GPL(usbnet_set_msglevel);

/* drivers may override default ethtool_ops in their bind() routine */

static const struct ethtool_ops usbnet_ethtool_ops = {

.get_settings = usbnet_get_settings,

.set_settings = usbnet_set_settings,

.get_link = usbnet_get_link,

.nway_reset = usbnet_nway_reset,

.get_drvinfo = usbnet_get_drvinfo,

.get_msglevel = usbnet_get_msglevel,

.set_msglevel = usbnet_set_msglevel,

.get_ts_info = ethtool_op_get_ts_info,

};

/*-------------------------------------------------------------------------*/

/* work that cannot be done in interrupt context uses keventd.

*

* NOTE: with 2.5 we could do more of this using completion callbacks,

* especially now that control transfers can be queued.

*/

static void

kevent (struct work_struct *work)

{

struct usbnet *dev =

container_of(work, struct usbnet, kevent);

int status;

/* usb_clear_halt() needs a thread context */

if (test_bit (EVENT_TX_HALT, &dev->flags)) {

unlink_urbs (dev, &dev->txq);

status = usb_autopm_get_interface(dev->intf);

if (status < 0)

goto fail_pipe;

status = usb_clear_halt (dev->udev, dev->out);

usb_autopm_put_interface(dev->intf);

if (status < 0 &&

status != -EPIPE &&

status != -ESHUTDOWN) {

if (netif_msg_tx_err (dev))

fail_pipe:

netdev_err(dev->net, "can't clear tx halt, status %d\n",

status);

} else {

clear_bit (EVENT_TX_HALT, &dev->flags);

if (status != -ESHUTDOWN)

netif_wake_queue (dev->net);

}

}

if (test_bit (EVENT_RX_HALT, &dev->flags)) {

unlink_urbs (dev, &dev->rxq);

status = usb_autopm_get_interface(dev->intf);

if (status < 0)

goto fail_halt;

status = usb_clear_halt (dev->udev, dev->in);

usb_autopm_put_interface(dev->intf);

if (status < 0 &&

status != -EPIPE &&

status != -ESHUTDOWN) {

if (netif_msg_rx_err (dev))

fail_halt:

netdev_err(dev->net, "can't clear rx halt, status %d\n",

status);

} else {

clear_bit (EVENT_RX_HALT, &dev->flags);

tasklet_schedule (&dev->bh);

}

}

/* tasklet could resubmit itself forever if memory is tight */

if (test_bit (EVENT_RX_MEMORY, &dev->flags)) {

struct urb *urb = NULL;

int resched = 1;

if (netif_running (dev->net))

urb = usb_alloc_urb (0, GFP_KERNEL);

else

clear_bit (EVENT_RX_MEMORY, &dev->flags);

if (urb != NULL) {

clear_bit (EVENT_RX_MEMORY, &dev->flags);

status = usb_autopm_get_interface(dev->intf);

if (status < 0) {

usb_free_urb(urb);

goto fail_lowmem;

}

if (rx_submit (dev, urb, GFP_KERNEL) == -ENOLINK)

resched = 0;

usb_autopm_put_interface(dev->intf);

fail_lowmem:

if (resched)

tasklet_schedule (&dev->bh);

}

}

if (test_bit (EVENT_LINK_RESET, &dev->flags)) {

struct driver_info *info = dev->driver_info;

int retval = 0;

clear_bit (EVENT_LINK_RESET, &dev->flags);

status = usb_autopm_get_interface(dev->intf);

if (status < 0)

goto skip_reset;

if(info->link_reset && (retval = info->link_reset(dev)) < 0) {

usb_autopm_put_interface(dev->intf);

skip_reset:

netdev_info(dev->net, "link reset failed (%d) usbnet usb-%s-%s, %s\n",

retval,

dev->udev->bus->bus_name,

dev->udev->devpath,

info->description);

} else {

usb_autopm_put_interface(dev->intf);

}

}

if (dev->flags)

netdev_dbg(dev->net, "kevent done, flags = 0x%lx\n", dev->flags);

}

/*-------------------------------------------------------------------------*/

static void tx_complete (struct urb *urb)

{

struct sk_buff *skb = (struct sk_buff *) urb->context;

struct skb_data *entry = (struct skb_data *) skb->cb;

struct usbnet *dev = entry->dev;

if (urb->status == 0) {

if (!(dev->driver_info->flags & FLAG_MULTI_PACKET))

dev->net->stats.tx_packets++;

dev->net->stats.tx_bytes += entry->length;

} else {

dev->net->stats.tx_errors++;

switch (urb->status) {

case -EPIPE:

usbnet_defer_kevent (dev, EVENT_TX_HALT);

break;

/* software-driven interface shutdown */

case -ECONNRESET: // async unlink

case -ESHUTDOWN: // hardware gone

break;

// like rx, tx gets controller i/o faults during khubd delays

// and so it uses the same throttling mechanism.

case -EPROTO:

case -ETIME:

case -EILSEQ:

usb_mark_last_busy(dev->udev);

if (!timer_pending (&dev->delay)) {

mod_timer (&dev->delay,

jiffies + THROTTLE_JIFFIES);

netif_dbg(dev, link, dev->net,

"tx throttle %d\n", urb->status);

}

netif_stop_queue (dev->net);

break;

default:

netif_dbg(dev, tx_err, dev->net,

"tx err %d\n", entry->urb->status);

break;

}

}

usb_autopm_put_interface_async(dev->intf);

(void) defer_bh(dev, skb, &dev->txq, tx_done);

}

/*-------------------------------------------------------------------------*/

void usbnet_tx_timeout (struct net_device *net)

{

struct usbnet *dev = netdev_priv(net);

unlink_urbs (dev, &dev->txq);

tasklet_schedule (&dev->bh);

// FIXME: device recovery -- reset?

}

EXPORT_SYMBOL_GPL(usbnet_tx_timeout);

/*-------------------------------------------------------------------------*/

netdev_tx_t usbnet_start_xmit (struct sk_buff *skb,

struct net_device *net)

{

struct usbnet *dev = netdev_priv(net);

int length;

struct urb *urb = NULL;

struct skb_data *entry;

struct driver_info *info = dev->driver_info;

unsigned long flags;

int retval;

if (skb)

skb_tx_timestamp(skb);

// some devices want funky USB-level framing, for

// win32 driver (usually) and/or hardware quirks

if (info->tx_fixup) {

skb = info->tx_fixup (dev, skb, GFP_ATOMIC);

if (!skb) {

if (netif_msg_tx_err(dev)) {

netif_dbg(dev, tx_err, dev->net, "can't tx_fixup skb\n");

goto drop;

} else {

/* cdc_ncm collected packet; waits for more */

goto not_drop;

}

}

}

length = skb->len;

if (!(urb = usb_alloc_urb (0, GFP_ATOMIC))) {

netif_dbg(dev, tx_err, dev->net, "no urb\n");

goto drop;

}

entry = (struct skb_data *) skb->cb;

entry->urb = urb;

entry->dev = dev;

entry->length = length;

usb_fill_bulk_urb (urb, dev->udev, dev->out,

skb->data, skb->len, tx_complete, skb);

/* don't assume the hardware handles USB_ZERO_PACKET

* NOTE: strictly conforming cdc-ether devices should expect

* the ZLP here, but ignore the one-byte packet.

* NOTE2: CDC NCM specification is different from CDC ECM when

* handling ZLP/short packets, so cdc_ncm driver will make short

* packet itself if needed.

*/

if (length % dev->maxpacket == 0) {

if (!(info->flags & FLAG_SEND_ZLP)) {

if (!(info->flags & FLAG_MULTI_PACKET)) {

urb->transfer_buffer_length++;

if (skb_tailroom(skb)) {

skb->data[skb->len] = 0;

__skb_put(skb, 1);

}

}

} else

urb->transfer_flags |= URB_ZERO_PACKET;

}

spin_lock_irqsave(&dev->txq.lock, flags);

retval = usb_autopm_get_interface_async(dev->intf);

if (retval < 0) {

spin_unlock_irqrestore(&dev->txq.lock, flags);

goto drop;

}

#ifdef CONFIG_PM

/* if this triggers the device is still a sleep */

if (test_bit(EVENT_DEV_ASLEEP, &dev->flags)) {

/* transmission will be done in resume */

usb_anchor_urb(urb, &dev->deferred);

/* no use to process more packets */

netif_stop_queue(net);

spin_unlock_irqrestore(&dev->txq.lock, flags);

netdev_dbg(dev->net, "Delaying transmission for resumption\n");

goto deferred;

}

#endif

switch ((retval = usb_submit_urb (urb, GFP_ATOMIC))) {

case -EPIPE:

netif_stop_queue (net);

usbnet_defer_kevent (dev, EVENT_TX_HALT);

usb_autopm_put_interface_async(dev->intf);

break;

default:

usb_autopm_put_interface_async(dev->intf);

netif_dbg(dev, tx_err, dev->net,

"tx: submit urb err %d\n", retval);

break;

case 0:

net->trans_start = jiffies;

__usbnet_queue_skb(&dev->txq, skb, tx_start);

if (dev->txq.qlen >= TX_QLEN (dev))

netif_stop_queue (net);

}

spin_unlock_irqrestore (&dev->txq.lock, flags);

if (retval) {

netif_dbg(dev, tx_err, dev->net, "drop, code %d\n", retval);

drop:

dev->net->stats.tx_dropped++;

not_drop:

if (skb)

dev_kfree_skb_any (skb);

usb_free_urb (urb);

} else

netif_dbg(dev, tx_queued, dev->net,

"> tx, len %d, type 0x%x\n", length, skb->protocol);

#ifdef CONFIG_PM

deferred:

#endif

return NETDEV_TX_OK;

}

EXPORT_SYMBOL_GPL(usbnet_start_xmit);

static void rx_alloc_submit(struct usbnet *dev, gfp_t flags)

{

struct urb *urb;

int i;

/* don't refill the queue all at once */

for (i = 0; i < 10 && dev->rxq.qlen < RX_QLEN(dev); i++) {

urb = usb_alloc_urb(0, flags);

if (urb != NULL) {

if (rx_submit(dev, urb, flags) == -ENOLINK)

return;

}

}

}

/*-------------------------------------------------------------------------*/

// tasklet (work deferred from completions, in_irq) or timer

static void usbnet_bh (unsigned long param)

{

struct usbnet *dev = (struct usbnet *) param;

struct sk_buff *skb;

struct skb_data *entry;

while ((skb = skb_dequeue (&dev->done))) {

entry = (struct skb_data *) skb->cb;

switch (entry->state) {

case rx_done:

entry->state = rx_cleanup;

rx_process (dev, skb);

continue;

case tx_done:

case rx_cleanup:

usb_free_urb (entry->urb);

dev_kfree_skb (skb);

continue;

default:

netdev_dbg(dev->net, "bogus skb state %d\n", entry->state);

}

}

// waiting for all pending urbs to complete?

if (dev->wait) {

if ((dev->txq.qlen + dev->rxq.qlen + dev->done.qlen) == 0) {

wake_up (dev->wait);

}

// or are we maybe short a few urbs?

} else if (netif_running (dev->net) &&

netif_device_present (dev->net) &&

!timer_pending (&dev->delay) &&

!test_bit (EVENT_RX_HALT, &dev->flags)) {

int temp = dev->rxq.qlen;

if (temp < RX_QLEN(dev)) {

rx_alloc_submit(dev, GFP_ATOMIC);

if (temp != dev->rxq.qlen)

netif_dbg(dev, link, dev->net,

"rxqlen %d --> %d\n",

temp, dev->rxq.qlen);

if (dev->rxq.qlen < RX_QLEN(dev))

tasklet_schedule (&dev->bh);

}

if (dev->txq.qlen < TX_QLEN (dev))

netif_wake_queue (dev->net);

}

}

/*-------------------------------------------------------------------------

*

* USB Device Driver support

*

*-------------------------------------------------------------------------*/

// precondition: never called in_interrupt

void usbnet_disconnect (struct usb_interface *intf)
{
	struct usbnet *dev;
	struct usb_device *xdev;
	struct net_device *net;

	dev = usb_get_intfdata(intf);
	usb_set_intfdata(intf, NULL);
	if (!dev)
		return;

	xdev = interface_to_usbdev (intf);

	netif_info(dev, probe, dev->net, "unregister '%s' usb-%s-%s, %s\n",
		   intf->dev.driver->name,
		   xdev->bus->bus_name, xdev->devpath,
		   dev->driver_info->description);

	net = dev->net;
	unregister_netdev (net);

	cancel_work_sync(&dev->kevent);

	if (dev->driver_info->unbind)
		dev->driver_info->unbind (dev, intf);

	usb_kill_urb(dev->interrupt);
	usb_free_urb(dev->interrupt);

	free_netdev(net);
	usb_put_dev (xdev);
}
EXPORT_SYMBOL_GPL(usbnet_disconnect);

static const struct net_device_ops usbnet_netdev_ops = {
	.ndo_open = usbnet_open,
	.ndo_stop = usbnet_stop,
	.ndo_start_xmit = usbnet_start_xmit,
	.ndo_tx_timeout = usbnet_tx_timeout,
	.ndo_change_mtu = usbnet_change_mtu,
	.ndo_set_mac_address = eth_mac_addr,
	.ndo_validate_addr = eth_validate_addr,
};

/*-------------------------------------------------------------------------*/

// precondition: never called in_interrupt

static struct device_type wlan_type = {
	.name = "wlan",
};

static struct device_type wwan_type = {
	.name = "wwan",
};

int
usbnet_probe (struct usb_interface *udev, const struct usb_device_id *prod)
{
	struct usbnet *dev;
	struct net_device *net;
	struct usb_host_interface *interface;
	struct driver_info *info;
	struct usb_device *xdev;
	int status;
	const char *name;
	struct usb_driver *driver = to_usb_driver(udev->dev.driver);

	/* usbnet already took usb runtime pm, so have to enable the feature
	 * for usb interface, otherwise usb_autopm_get_interface may return
	 * failure if USB_SUSPEND(RUNTIME_PM) is enabled.
	 */
	if (!driver->supports_autosuspend) {
		driver->supports_autosuspend = 1;
		pm_runtime_enable(&udev->dev);
	}

	name = udev->dev.driver->name;
	info = (struct driver_info *) prod->driver_info;
	if (!info) {
		dev_dbg (&udev->dev, "blacklisted by %s\n", name);
		return -ENODEV;
	}
	xdev = interface_to_usbdev (udev);
	interface = udev->cur_altsetting;

	usb_get_dev (xdev);

	status = -ENOMEM;

	// set up our own records
	net = alloc_etherdev(sizeof(*dev));
	if (!net)
		goto out;

	/* netdev_printk() needs this so do it as early as possible */
	SET_NETDEV_DEV(net, &udev->dev);

	dev = netdev_priv(net);
	dev->udev = xdev;
	dev->intf = udev;
	dev->driver_info = info;
	dev->driver_name = name;
	dev->msg_enable = netif_msg_init (msg_level, NETIF_MSG_DRV
				| NETIF_MSG_PROBE | NETIF_MSG_LINK);
	skb_queue_head_init (&dev->rxq);
	skb_queue_head_init (&dev->txq);
	skb_queue_head_init (&dev->done);
	skb_queue_head_init(&dev->rxq_pause);
	dev->bh.func = usbnet_bh;
	dev->bh.data = (unsigned long) dev;
	INIT_WORK (&dev->kevent, kevent);
	init_usb_anchor(&dev->deferred);
	dev->delay.function = usbnet_bh;
	dev->delay.data = (unsigned long) dev;
	init_timer (&dev->delay);
	mutex_init (&dev->phy_mutex);

	dev->net = net;
	strcpy (net->name, "usb%d");
	memcpy (net->dev_addr, node_id, sizeof node_id);

	/* rx and tx sides can use different message sizes;
	 * bind() should set rx_urb_size in that case.
	 */
	dev->hard_mtu = net->mtu + net->hard_header_len;
#if 0
	// dma_supported() is deeply broken on almost all architectures
	// possible with some EHCI controllers
	if (dma_supported (&udev->dev, DMA_BIT_MASK(64)))
		net->features |= NETIF_F_HIGHDMA;
#endif

	net->netdev_ops = &usbnet_netdev_ops;
	net->watchdog_timeo = TX_TIMEOUT_JIFFIES;
	net->ethtool_ops = &usbnet_ethtool_ops;

	// allow device-specific bind/init procedures
	// NOTE net->name still not usable ...
	if (info->bind) {
		status = info->bind (dev, udev);
		if (status < 0)
			goto out1;

		// heuristic: "usb%d" for links we know are two-host,
		// else "eth%d" when there's reasonable doubt. userspace
		// can rename the link if it knows better.
		if ((dev->driver_info->flags & FLAG_ETHER) != 0 &&
		    ((dev->driver_info->flags & FLAG_POINTTOPOINT) == 0 ||
		     (net->dev_addr [0] & 0x02) == 0))
			strcpy (net->name, "eth%d");
		/* WLAN devices should always be named "wlan%d" */
		if ((dev->driver_info->flags & FLAG_WLAN) != 0)
			strcpy(net->name, "wlan%d");
		/* WWAN devices should always be named "wwan%d" */
		if ((dev->driver_info->flags & FLAG_WWAN) != 0)
			strcpy(net->name, "wwan%d");

		/* maybe the remote can't receive an Ethernet MTU */
		if (net->mtu > (dev->hard_mtu - net->hard_header_len))
			net->mtu = dev->hard_mtu - net->hard_header_len;
	} else if (!info->in || !info->out)
		status = usbnet_get_endpoints (dev, udev);
	else {
		dev->in = usb_rcvbulkpipe (xdev, info->in);
		dev->out = usb_sndbulkpipe (xdev, info->out);
		if (!(info->flags & FLAG_NO_SETINT))
			status = usb_set_interface (xdev,
				interface->desc.bInterfaceNumber,
				interface->desc.bAlternateSetting);
		else
			status = 0;
	}
	if (status >= 0 && dev->status)
		status = init_status (dev, udev);
	if (status < 0)
		goto out3;

	if (!dev->rx_urb_size)
		dev->rx_urb_size = dev->hard_mtu;
	dev->maxpacket = usb_maxpacket (dev->udev, dev->out, 1);

if ((dev->driver_info->flags & FLAG_WLAN) != 0)