0. References

https://doc.scrapy.org/en/latest/topics/item-pipeline.html?highlight=mongo#write-items-to-mongodb

1. Main features

(1) Automatically reconnects to the MySQL server after a connection timeout

(2) Collects items in item_list and commits them to the database in bulk once a threshold is reached

(3) Parses the exception to find and remove the offending rows, then resubmits item_list
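Feature (3) relies on the text of the MySQL error message. As a minimal sketch of that idea, assuming items are plain dicts, the helper below (the name `locate_bad_items` is hypothetical and not part of the pipeline) applies the same two regular expressions used later in this post to decide which items an error points at:

```python
import re

# Patterns mirror the ones used in the pipeline code of this post.
ROW_RE = re.compile(r"at\s+row\s+(\d+)$")
COLUMN_RE = re.compile(r"Column\s'(.+)'")


def locate_bad_items(message, items):
    """Return the items that a MySQL error message points at.

    Hypothetical helper for illustration; `items` is a list of dicts.
    """
    m_row = ROW_RE.search(message)
    if m_row:
        index = int(m_row.group(1)) - 1  # MySQL row numbers are 1-based
        return [items[index]] if 0 <= index < len(items) else []
    m_column = COLUMN_RE.search(message)
    if m_column:
        column = m_column.group(1)
        # A "cannot be null" error names the column, not the row,
        # so every item with a missing value in that column is suspect.
        return [item for item in items if item.get(column) is None]
    return []


items = [
    {"position_id": 103, "crawl_time": "2018-07-18 12:35:52"},
    {"position_id": None, "crawl_time": "2018-07-18 12:35:52"},
    {"position_id": 104, "crawl_time": "2018-02-31 17:51:47"},
]
by_column = locate_bad_items("Column 'position_id' cannot be null", items)
by_row = locate_bad_items(
    "Incorrect datetime value: '2018-02-31 17:51:47' "
    "for column 'crawl_time' at row 3",
    items,
)
```

An error message that matches neither pattern yields an empty list, which is the case where the batch cannot be repaired automatically.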

2. Implementation

Save as /site-packages/my_pipelines.py

    from socket import gethostname
    import time
    import re
    from html import escape

    import pymysql
    pymysql.install_as_MySQLdb()
    from pymysql.err import OperationalError, InterfaceError, DataError, IntegrityError


    class MyMySQLPipeline(object):

        hostname = gethostname()

        def __init__(self, settings):
            self.mysql_host = settings.get('MYSQL_HOST', '127.0.0.1')
            self.mysql_port = settings.get('MYSQL_PORT', 3306)
            self.mysql_user = settings.get('MYSQL_USER', 'username')
            self.mysql_passwd = settings.get('MYSQL_PASSWD', 'password')
            self.mysql_reconnect_wait = settings.get('MYSQL_RECONNECT_WAIT', 60)
            self.mysql_db = settings.get('MYSQL_DB')
            self.mysql_charset = settings.get('MYSQL_CHARSET', 'utf8')  # or utf8mb4
            self.mysql_item_list_limit = settings.get('MYSQL_ITEM_LIST_LIMIT', 30)
            self.item_list = []

        @classmethod
        def from_crawler(cls, crawler):
            return cls(settings=crawler.settings)

        def open_spider(self, spider):
            try:
                self.conn = pymysql.connect(
                    host=self.mysql_host,
                    port=self.mysql_port,
                    user=self.mysql_user,
                    passwd=self.mysql_passwd,
                    db=self.mysql_db,
                    charset=self.mysql_charset,
                )
            except Exception as err:
                # Wait, then retry until the server is reachable again.
                spider.logger.warning('MySQL: FAIL to connect {} {}'.format(err.__class__, err))
                time.sleep(self.mysql_reconnect_wait)
                self.open_spider(spider)
            else:
                spider.logger.info('MySQL: connected')
                self.curs = self.conn.cursor(pymysql.cursors.DictCursor)
                spider.curs = self.curs

        def close_spider(self, spider):
            # Flush whatever is still buffered before closing the connection.
            self.insert_item_list(spider)
            self.conn.close()
            spider.logger.info('MySQL: closed')

        def process_item(self, item, spider):
            self.item_list.append(item)
            if len(self.item_list) >= self.mysql_item_list_limit:
                self.insert_item_list(spider)
            return item

        def sql(self):
            raise NotImplementedError('Subclass of MyMySQLPipeline must implement the sql() method')

        def insert_item_list(self, spider):
            spider.logger.info('insert_item_list: {}'.format(len(self.item_list)))
            try:
                self.sql()
            except (OperationalError, InterfaceError) as err:
                # <class 'pymysql.err.OperationalError'>
                # (2013, 'Lost connection to MySQL server during query ([Errno 110] Connection timed out)')
                spider.logger.info('MySQL: exception {} err {}'.format(err.__class__, err))
                self.open_spider(spider)
                self.insert_item_list(spider)
            except Exception as err:
                if len(err.args) == 2 and isinstance(err.args[1], str):
                    # <class 'pymysql.err.DataError'>
                    # (1264, "Out of range value for column 'position_id' at row 2")
                    # <class 'pymysql.err.InternalError'>
                    # (1292, "Incorrect date value: '1977-06-31' for column 'release_day' at row 26")
                    m_row = re.search(r'at\s+row\s+(\d+)$', err.args[1])
                    # <class 'pymysql.err.IntegrityError'>
                    # (1048, "Column 'name' cannot be null") films 43894
                    m_column = re.search(r"Column\s'(.+)'", err.args[1])
                    if m_row:
                        # The message names the failing row: drop it and resubmit.
                        row = m_row.group(1)
                        item = self.item_list.pop(int(row) - 1)
                        spider.logger.warning('MySQL: {} {} exception from item {}'.format(err.__class__, err, item))
                        self.insert_item_list(spider)
                    elif m_column:
                        # The message names only the column: drop every item
                        # whose value for that column is None, then resubmit.
                        column = m_column.group(1)
                        bad_items = [item for item in self.item_list if item.get(column) is None]
                        for item in bad_items:
                            self.item_list.remove(item)
                            spider.logger.warning('MySQL: {} {} exception from item {}'.format(err.__class__, err, item))
                        if bad_items:
                            self.insert_item_list(spider)
                        else:
                            # Nothing was removed, so retrying would fail forever.
                            spider.logger.error('MySQL: {} {} unhandled exception from item_list: \n{}'.format(
                                err.__class__, err, self.item_list))
                    else:
                        spider.logger.error('MySQL: {} {} unhandled exception from item_list: \n{}'.format(
                            err.__class__, err, self.item_list))
                else:
                    spider.logger.error('MySQL: {} {} unhandled exception from item_list: \n{}'.format(
                        err.__class__, err, self.item_list))
            finally:
                self.item_list.clear()
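open_spider above retries by calling itself after each failed attempt. As a rough alternative sketch, the same wait-and-retry behaviour can be written as a loop so that a long outage does not grow the call stack. Everything here is an assumption for illustration: `connect_with_retry`, `max_attempts`, and the injectable `sleep` are hypothetical names, and `connect` stands for any zero-argument callable such as a lambda wrapping `pymysql.connect`.

```python
import time


def connect_with_retry(connect, wait_seconds=60, max_attempts=None, sleep=time.sleep):
    """Call `connect()` until it succeeds, sleeping between attempts.

    Hypothetical helper: `connect` is any zero-argument callable that
    returns a connection or raises on failure.
    """
    attempt = 0
    while True:
        attempt += 1
        try:
            return connect()
        except Exception:
            # Give up only if a limit was set and has been reached.
            if max_attempts is not None and attempt >= max_attempts:
                raise
            sleep(wait_seconds)


# Simulated server that refuses the first two connection attempts.
calls = {"n": 0}


def flaky_connect():
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("simulated timeout")
    return "connection"


waits = []  # record the requested waits instead of actually sleeping
conn = connect_with_retry(flaky_connect, wait_seconds=60, sleep=waits.append)
```

Passing `sleep` as a parameter is only there so the example runs instantly; in a pipeline the default `time.sleep` would be used, with the wait taken from MYSQL_RECONNECT_WAIT.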

3. Usage

Scrapy project: project_name

MySQL database: database_name, table: table_name

(1) Add the following code to the project's pipelines.py:

    from my_pipelines import MyMySQLPipeline


    class MySQLPipeline(MyMySQLPipeline):

        def sql(self):
            self.curs.executemany("""
                INSERT INTO table_name (
                    position_id, crawl_time)
                VALUES (
                    %(position_id)s, %(crawl_time)s)
                ON DUPLICATE KEY UPDATE
                    crawl_time=values(crawl_time)
            """, self.item_list)
            self.conn.commit()

(2) Add the following code to the project's settings.py:

    # Configure item pipelines
    # See https://doc.scrapy.org/en/latest/topics/item-pipeline.html
    ITEM_PIPELINES = {
        # 'project_name.pipelines.ProxyPipeline': 300,
        'project_name.pipelines.MySQLPipeline': 301,
    }

    MYSQL_HOST = '127.0.0.1'
    MYSQL_PORT = 3306
    MYSQL_USER = 'username'
    MYSQL_PASSWD = 'password'
    MYSQL_RECONNECT_WAIT = 60
    MYSQL_DB = 'database_name'
    MYSQL_CHARSET = 'utf8'  # or utf8mb4
    MYSQL_ITEM_LIST_LIMIT = 3  # e.g. 100

4. Results

Rows that raise exceptions are removed automatically and item_list is resubmitted:

    2018-07-18 12:35:52 [scrapy.core.scraper] DEBUG: Scraped from <200 http://httpbin.org/>
    {'position_id': 103, 'crawl_time': '2018-07-18 12:35:52'}
    2018-07-18 12:35:52 [scrapy.core.scraper] DEBUG: Scraped from <200 http://httpbin.org/>
    {'position_id': None, 'crawl_time': '2018-07-18 12:35:52'}
    2018-07-18 12:35:52 [scrapy.core.scraper] DEBUG: Scraped from <200 http://httpbin.org/>
    {'position_id': 104, 'crawl_time': '2018-02-31 17:51:47'}
    2018-07-18 12:35:55 [scrapy.core.engine] DEBUG: Crawled (200) <GET http://httpbin.org/> (referer: http://httpbin.org/)
    2018-07-18 12:35:55 [test] INFO: insert_item_list: 3
    2018-07-18 12:35:55 [test] WARNING: MySQL: <class 'pymysql.err.IntegrityError'> (1048, "Column 'position_id' cannot be null") exception from item {'position_id': None, 'crawl_time': '2018-07-18 12:35:52'}
    2018-07-18 12:35:55 [test] INFO: insert_item_list: 2
    2018-07-18 12:35:55 [test] WARNING: MySQL: <class 'pymysql.err.InternalError'> (1292, "Incorrect datetime value: '2018-02-31 17:51:47' for column 'crawl_time' at row 1") exception from item {'position_id': 104, 'crawl_time': '2018-02-31 17:51:47'}
    2018-07-18 12:35:55 [test] INFO: insert_item_list: 1
    2018-07-18 12:35:55 [scrapy.core.scraper] DEBUG: Scraped from <200 http://httpbin.org/>

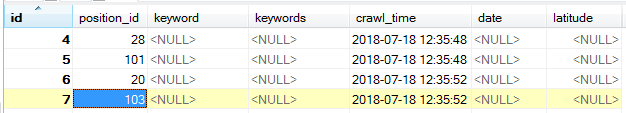
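The shrinking batch sizes in the log (3, then 2, then 1) can be reproduced without a database. The sketch below is only a simulation loosely modeled on that run: `FakeMySQLError`, `fake_executemany`, and `insert_with_cleanup` are hypothetical stand-ins, and the row number in the fake error is simply the item's position in the batch, whereas the row a real server reports depends on how the driver sends the statement.

```python
import re


class FakeMySQLError(Exception):
    """Stand-in for a pymysql error carrying (code, message) in args."""


def fake_executemany(items):
    """Hypothetical stand-in for the INSERT; fails on the first bad row."""
    for row, item in enumerate(items, start=1):
        if item["position_id"] is None:
            raise FakeMySQLError(1048, "Column 'position_id' cannot be null")
        if item["crawl_time"].startswith("2018-02-31"):
            raise FakeMySQLError(
                1292,
                "Incorrect datetime value: '{}' for column 'crawl_time' "
                "at row {}".format(item["crawl_time"], row),
            )
    return list(items)


def insert_with_cleanup(items):
    """Retry the batch, dropping the rows each error message points at."""
    items = list(items)
    batch_sizes = []  # size of the batch at every attempt
    while items:
        batch_sizes.append(len(items))
        try:
            return fake_executemany(items), batch_sizes
        except FakeMySQLError as err:
            message = err.args[1]
            m_row = re.search(r"at\s+row\s+(\d+)$", message)
            m_column = re.search(r"Column\s'(.+)'", message)
            if m_row:
                items.pop(int(m_row.group(1)) - 1)
            elif m_column:
                column = m_column.group(1)
                items = [i for i in items if i[column] is not None]
            else:
                raise  # unknown error shape: do not retry blindly
    return [], batch_sizes


items = [
    {"position_id": 103, "crawl_time": "2018-07-18 12:35:52"},
    {"position_id": None, "crawl_time": "2018-07-18 12:35:52"},
    {"position_id": 104, "crawl_time": "2018-02-31 17:51:47"},
]
inserted, sizes = insert_with_cleanup(items)
```

Here `sizes` records one entry per attempt and `inserted` holds the single item that survives both removals.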

Committed result: