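1. Graphs

1.2 The GraphX framework

2. Terminology

2.1 Vertices and edges

2.2 Directed and undirected graphs

2.3 Cyclic and acyclic graphs

2.4 Degree, out-edges, in-edges, out-degree, and in-degree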

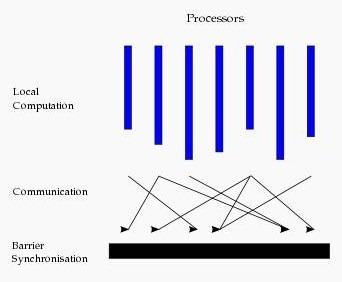

2.5 Supersteps

The three views and their operations

/**

* Author: king

* Datetime: Created In 2018/4/28 13:43

* Desc: as follows.

*/

package zy.rttx.spark.graphx.learning

import org.apache.log4j.{Level, Logger}

import org.apache.spark._

import org.apache.spark.graphx.{VertexId, _}

// To make some of the examples work we will also need RDD

import org.apache.spark.rdd.RDD

object GetingStart {

def main(args: Array[String]): Unit = {

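// Suppress verbose Spark logging

Logger.getLogger("org.apache.spark").setLevel(Level.WARN)

// Set up the runtime environment

val conf = new SparkConf().setAppName("getingStart").setMaster("local[2]")

val sc = new SparkContext(conf)

// Define the vertices and edges: vertices are an Array of (id, attribute) tuples, edges an Array of Edge objects

// Vertex attribute type VD: (String, String)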

val vertexArray= Array(

(3L,("rxin","student")),

(7L,("jgonzal","postdoc")),

(5L,("franklin","prof")),

(2L,("istoica","prof")))

// Edge attribute type ED: String

val edgeArray = Array(

Edge(3L, 7L, "collab"),

Edge(5L, 3L, "advisor"),

Edge(2L, 5L, "colleague"),

Edge(5L, 7L, "pi"))

// Build vertexRDD and edgeRDD

val vertexRDD: RDD[(VertexId, (String, String))] = sc.parallelize(vertexArray)

val edgeRDD: RDD[Edge[String]] = sc.parallelize(edgeArray)

/*// Create an RDD for the vertices

val users: RDD[(VertexId, (String, String))] =

sc.parallelize(Array((3L, ("rxin", "student")), (7L, ("jgonzal", "postdoc")),

(5L, ("franklin", "prof")), (2L, ("istoica", "prof"))))

// Create an RDD for edges

val relationships: RDD[Edge[String]] =

sc.parallelize(Array(Edge(3L, 7L, "collab"), Edge(5L, 3L, "advisor"),

Edge(2L, 5L, "colleague"), Edge(5L, 7L, "pi")))*/

// Define a default user in case there are relationship with missing user

val defaultUser = ("John Doe", "Missing")

/*// Build the initial Graph

val graph = Graph(users, relationships, defaultUser)*/

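// Build the graph Graph[VD, ED]; defaultUser is assigned to any vertex that appears only in an edge

val graph: Graph[(String, String), String] = Graph(vertexRDD, edgeRDD, defaultUser)

// Count all users who are postdocs; graph.vertices is the vertex view of the graph, a VertexRDD[(String, String)]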

println("all postdocs have:"+graph.vertices.filter { case (id, (name, pos)) => pos == "postdoc" }.count)

// Count all the edges where src > dst

println(graph.edges.filter(e => e.srcId > e.dstId).count)

// The same count, written with pattern matching on Edge

println(graph.edges.filter { case Edge(src, dst, prop) => src > dst }.count)

// The opposite case: count the edges where src < dst

println(graph.edges.filter { case Edge(src, dst, prop) => src < dst }.count)

// Use the triplets view to create an RDD of facts.

val facts: RDD[String] =

graph.triplets.map(triplet =>

triplet.srcAttr._1 + " is the " + triplet.attr + " of " + triplet.dstAttr._1)

facts.collect.foreach(println(_))

}

}

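The program above is a simple example of building a property graph. What are the main ways to build a graph? Let us first look at the definition of the Graph object: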

object Graph {

def apply[VD, ED](

vertices: RDD[(VertexId, VD)],

edges: RDD[Edge[ED]],

defaultVertexAttr: VD = null)

: Graph[VD, ED]

def fromEdges[VD, ED](

edges: RDD[Edge[ED]],

defaultValue: VD): Graph[VD, ED]

def fromEdgeTuples[VD](

rawEdges: RDD[(VertexId, VertexId)],

defaultValue: VD,

uniqueEdges: Option[PartitionStrategy] = None): Graph[VD, Int]

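}

(1) Graph.apply: writing Graph(...) invokes the apply method of the Graph companion object, so the example above is in fact already using apply. It plays the role that a constructor plays in Java or C++. It takes three parameters: vertices: RDD[(VertexId, VD)], edges: RDD[Edge[ED]], and defaultVertexAttr: VD = null. The first two are required and the last is optional. The vertex and edge RDDs may come from different data sources, and they are built like any other RDD in Spark.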
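To make the role of the optional third parameter concrete, here is a minimal sketch, assuming spark-core and spark-graphx are on the classpath. The object name ApplyDefaultSketch, the vertex names, and the placeholder attribute "unknown" are made up for illustration and do not come from the original example. Vertex 9L appears only in the edge list, so it should pick up the default attribute.

```scala
import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.graphx.{Edge, Graph, VertexId}
import org.apache.spark.rdd.RDD

object ApplyDefaultSketch {
  // Builds a small graph whose edge list mentions vertex 9L,
  // which has no entry in the vertex RDD (illustrative data).
  def build(sc: SparkContext): Graph[String, String] = {
    val vertices: RDD[(VertexId, String)] =
      sc.parallelize(Seq((1L, "alice"), (2L, "bob")))
    val edges: RDD[Edge[String]] =
      sc.parallelize(Seq(Edge(1L, 2L, "knows"), Edge(2L, 9L, "knows")))
    // Graph(...) is shorthand for Graph.apply(...);
    // the third argument is the attribute given to vertex 9L.
    Graph(vertices, edges, "unknown")
  }

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setAppName("applyDefault").setMaster("local[2]")
    val sc = new SparkContext(conf)
    try {
      build(sc).vertices.collect().sortBy(_._1).foreach(println)
    } finally {
      sc.stop()
    }
  }
}
```

If the third argument is omitted, vertices that exist only in the edge list get a null attribute, which is easy to trip over later, so passing an explicit default is usually the safer choice.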

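Here is another example, which reads files into RDDs and then builds a graph from them:

(1) Read the files, build the vertex and edge RDDs, then build the property graph from those RDDs

// Read the input files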

val articles: RDD[String] = sc.textFile("E:/data/graphx/graphx-wiki-vertices.txt")

val links: RDD[String] = sc.textFile("E:/data/graphx/graphx-wiki-edges.txt")

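// Parse the vertex and edge RDDs

val vertices = articles.map { line =>

val fields = line.split('\t')

(fields(0).toLong, fields(1))

} // Note: the first column is the vertex ID and must be a Long; the second column is the vertex attribute, which may be of any type, including collections such as Map.

val edges = links.map { line =>

val fields = line.split('\t')

Edge(fields(0).toLong, fields(1).toLong, 1L) // The source and destination IDs must be Long; the last argument is the edge attribute, which may be of any type

}

// Build the graph

val graph = Graph(vertices, edges, "").persist() // Graph(...) calls the apply method

(2) Graph.fromEdges: this is the simplest of the three methods. It builds the graph from an edge RDD alone: every vertex ID that appears in an edge, whether as the source (src) or the destination (dst), automatically becomes a vertex, and its attribute is set to a default value.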

Graph.fromEdges allows creating a graph from only an RDD of edges, automatically creating any vertices mentioned by edges and assigning them the default value.

For example:

// Read the input file

val records: RDD[String] = sc.textFile("/microblogPCU/microblogPCU/follower_followee")

// Sample Weibo record: 000000261066,郜振博585,3044070630,redashuaicheng,1929305865,1994,229,3472,male,first

// The third column is the follower ID (3044070630); the fifth column is the user ID (1929305865)

val followers = records.map { line =>

val fields = line.split(",")

Edge(fields(2).toLong, fields(4).toLong, 1L)

}

val graph = Graph.fromEdges(followers, 1L)

(3) Graph.fromEdgeTuples

Graph.fromEdgeTuples allows creating a graph from only an RDD of edge tuples, assigning the edges the value 1, and automatically creating any vertices mentioned by edges and assigning them the default value. It also supports deduplicating the edges; to deduplicate, pass Some of a PartitionStrategy as the uniqueEdges parameter (for example, uniqueEdges = Some(PartitionStrategy.RandomVertexCut)). A partition strategy is necessary to colocate identical edges on the same partition so they can be deduplicated.
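The deduplication behaviour is easier to see on a tiny input. The following is a minimal sketch, assuming spark-core and spark-graphx are on the classpath; the object name EdgeTuplesSketch and the sample pairs are illustrative only. The pair (1, 2) is repeated on purpose. As far as the GraphX scaladoc describes it, identical edges are combined when uniqueEdges is set and the merged edge attribute becomes the sum of the per-edge value 1, that is, the number of duplicates.

```scala
import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.graphx.{Graph, PartitionStrategy, VertexId}
import org.apache.spark.rdd.RDD

object EdgeTuplesSketch {
  // Illustrative raw edges; the pair (1, 2) is deliberately repeated.
  def rawEdges(sc: SparkContext): RDD[(VertexId, VertexId)] =
    sc.parallelize(Seq((1L, 2L), (1L, 2L), (2L, 3L)))

  // Default behaviour: duplicate edges are kept as separate edges.
  def withDuplicates(sc: SparkContext): Graph[Int, Int] =
    Graph.fromEdgeTuples(rawEdges(sc), 0)

  // With a partition strategy: identical edges are colocated and merged.
  def deduplicated(sc: SparkContext): Graph[Int, Int] =
    Graph.fromEdgeTuples(rawEdges(sc), 0,
      uniqueEdges = Some(PartitionStrategy.RandomVertexCut))

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setAppName("edgeTuples").setMaster("local[2]")
    val sc = new SparkContext(conf)
    try {
      println(s"edges kept as-is: ${withDuplicates(sc).numEdges}")
      deduplicated(sc).edges
        .map(e => (e.srcId, e.dstId, e.attr))
        .collect()
        .foreach(println)
    } finally {
      sc.stop()
    }
  }
}
```

The second argument (0 here) is only the default vertex attribute; it does not affect the edge attributes.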

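Besides these three methods, a graph can also be built with GraphLoader, for example with GraphLoader.edgeListFile:

(4) Build the basic structure with GraphLoader.edgeListFile, then join the attributes

(a) First build the basic structure of the graph:

GraphLoader.edgeListFile builds the basic structure of the graph (all vertices and edges) from an edge-list file; the vertex and edge attributes all default to 1.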

object GraphLoader {

def edgeListFile(

sc: SparkContext,

path: String,

canonicalOrientation: Boolean = false,

minEdgePartitions: Int = 1)

: Graph[Int, Int]

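}

It is used as follows:

val graph = GraphLoader.edgeListFile(sc, "/data/graphx/followers.txt")

// The file looks like this:

// 2 1

// 4 1

// 1 2   (each line holds the source vertex ID followed by the destination vertex ID)

(b) Then read the attribute file into an RDD and join it with the structural graph from (a) to obtain the complete property graph.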
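Step (b) can be sketched roughly as follows, assuming spark-core and spark-graphx are on the classpath. To keep the sketch self-contained, the structure-only graph is built in memory with Graph.fromEdgeTuples as a stand-in for GraphLoader.edgeListFile, and the attribute "file" is an in-memory RDD of (id, name) pairs. The object name, the names, and the fallback value "unknown" are all hypothetical.

```scala
import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.graphx.{Graph, VertexId}
import org.apache.spark.rdd.RDD

object JoinAttributesSketch {
  def build(sc: SparkContext): Graph[String, Int] = {
    // Stand-in for GraphLoader.edgeListFile: structure only, all attributes 1.
    val pairs: RDD[(VertexId, VertexId)] =
      sc.parallelize(Seq((2L, 1L), (4L, 1L), (1L, 2L)))
    val structure: Graph[Int, Int] = Graph.fromEdgeTuples(pairs, 1)

    // Stand-in for the attribute file; vertex 4 is deliberately missing.
    val names: RDD[(VertexId, String)] =
      sc.parallelize(Seq((1L, "alice"), (2L, "bob")))

    // outerJoinVertices keeps every vertex and lets us handle missing attributes.
    structure.outerJoinVertices(names) { (id, oldAttr, nameOpt) =>
      nameOpt.getOrElse("unknown")
    }
  }

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setAppName("joinAttributes").setMaster("local[2]")
    val sc = new SparkContext(conf)
    try {
      build(sc).vertices.collect().sortBy(_._1).foreach(println)
    } finally {
      sc.stop()
    }
  }
}
```

outerJoinVertices is used here rather than joinVertices because it can change the vertex attribute type (Int to String in this sketch) and exposes missing entries as None.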

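In Scala, case clauses provide pattern matching that is both concise and powerful.

// Assume the vertex attribute is (String, Int), i.e. (name, age), and each edge carries an Int weight

val graph: Graph[(String, Int), Int] = Graph(vertexRDD, edgeRDD)

With case, the IDs and attributes of vertices and edges are easy to access: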

graph.vertices.map{

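case (id, (name, age)) => // destructure the vertex with case

(age, name) // apply any transformation you need here

}

graph.edges.map{

case Edge(srcid, dstid, weight) => // destructure the edge with case

(dstid, weight * 0.01) // apply any transformation you need here

}

The same values can also be accessed by tuple position or by field name: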

graph.vertices.map{

v => (v._1, v._2._1, v._2._2) // v._1, v._2._1, and v._2._2 are the id, name, and age respectively

}

graph.edges.map {

e=>(e.attr,e.srcId,e.dstId)

}

graph.triplets.map{

triplet=>(triplet.srcAttr._1,triplet.dstAttr._2,triplet.srcId,triplet.dstId)

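}

The same patterns work with the transformation operators mapVertices, mapEdges, and mapTriplets:

graph.mapVertices{

case (id, (name, age)) => // destructure the vertex with case

(age, name) // apply any transformation you need here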

}

graph.mapEdges(e=>(e.attr,e.srcId,e.dstId))

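graph.mapTriplets(triplet => triplet.srcAttr._1)

3.1 Storage model

3.1.1 Graph storage models

3.1.2 GraphX storage model

3.2 Computation model

3.2.1 Graph computation models

3.2.2 GraphX computation model

3.2.2.1 Graph caching

3.2.2.2 Neighborhood aggregation

3.2.2.3 The evolved Pregel model

3.2.2.4 Graph algorithm toolkit

A complete list of graph functions in Spark GraphX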

/** Summary of the functionality in the property graph */

class Graph[VD, ED] {

// Information about the Graph (basic statistics) ===================================================

val numEdges: Long

val numVertices: Long

val inDegrees: VertexRDD[Int]

val outDegrees: VertexRDD[Int]

val degrees: VertexRDD[Int]

// Views of the graph as collections (the three views) =============================================

val vertices: VertexRDD[VD]

val edges: EdgeRDD[ED]

val triplets: RDD[EdgeTriplet[VD, ED]]

// Functions for caching graphs ==================================================================

def persist(newLevel: StorageLevel = StorageLevel.MEMORY_ONLY): Graph[VD, ED]

def cache(): Graph[VD, ED]

def unpersistVertices(blocking: Boolean = true): Graph[VD, ED]

// Change the partitioning heuristic ============================================================

def partitionBy(partitionStrategy: PartitionStrategy): Graph[VD, ED]

// Transform vertex and edge attributes (basic transformations) ====================================

def mapVertices[VD2](map: (VertexId, VD) => VD2): Graph[VD2, ED]

def mapEdges[ED2](map: Edge[ED] => ED2): Graph[VD, ED2]

def mapEdges[ED2](map: (PartitionID, Iterator[Edge[ED]]) => Iterator[ED2]): Graph[VD, ED2]

def mapTriplets[ED2](map: EdgeTriplet[VD, ED] => ED2): Graph[VD, ED2]

def mapTriplets[ED2](map: (PartitionID, Iterator[EdgeTriplet[VD, ED]]) => Iterator[ED2])

: Graph[VD, ED2]

// Modify the graph structure (only four basic operators are provided; subgraph extraction is one of the more important) ===

def reverse: Graph[VD, ED]

def subgraph(

epred: EdgeTriplet[VD,ED] => Boolean = (x => true),

vpred: (VertexId, VD) => Boolean = ((v, d) => true))

: Graph[VD, ED]

def mask[VD2, ED2](other: Graph[VD2, ED2]): Graph[VD, ED]

def groupEdges(merge: (ED, ED) => ED): Graph[VD, ED]

// Join RDDs with the graph (two join operators that cover the various ways of merging external data into a graph) ===

def joinVertices[U](table: RDD[(VertexId, U)])(mapFunc: (VertexId, VD, U) => VD): Graph[VD, ED]

def outerJoinVertices[U, VD2](other: RDD[(VertexId, U)])

(mapFunc: (VertexId, VD, Option[U]) => VD2)

: Graph[VD2, ED]

// Aggregate information about adjacent triplets ===================================================

// collectNeighborIds and collectNeighbors are comparatively inefficient; prefer aggregateMessages, which is one of the most important operators in GraphX.

def collectNeighborIds(edgeDirection: EdgeDirection): VertexRDD[Array[VertexId]]

def collectNeighbors(edgeDirection: EdgeDirection): VertexRDD[Array[(VertexId, VD)]]

def aggregateMessages[Msg: ClassTag](

sendMsg: EdgeContext[VD, ED, Msg] => Unit,

mergeMsg: (Msg, Msg) => Msg,

tripletFields: TripletFields = TripletFields.All)

: VertexRDD[Msg]

// Iterative graph-parallel computation ==========================================================

def pregel[A](initialMsg: A, maxIterations: Int, activeDirection: EdgeDirection)(

vprog: (VertexId, VD, A) => VD,

sendMsg: EdgeTriplet[VD, ED] => Iterator[(VertexId, A)],

mergeMsg: (A, A) => A)

: Graph[VD, ED]

// Basic graph algorithms (currently four APIs in three categories) ================================

def pageRank(tol: Double, resetProb: Double = 0.15): Graph[Double, Double]

def connectedComponents(): Graph[VertexId, ED]

def triangleCount(): Graph[Int, ED]

def stronglyConnectedComponents(numIter: Int): Graph[VertexId, ED]

}

Structural Operators

As of Spark 2.0, GraphX provides only four basic structural operators; more are expected to be added in future releases.
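The four operators (reverse, subgraph, mask, and groupEdges) can be tried out on a toy graph. This is a minimal sketch, assuming spark-core and spark-graphx are on the classpath; the object name StructuralOpsSketch, the helper edgeSet, and the data are illustrative. The edge 1 -> 2 appears twice so that groupEdges has something to merge. groupEdges only merges parallel edges that sit in the same partition, which is why the sketch calls partitionBy first.

```scala
import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.graphx.{Edge, Graph, PartitionStrategy}

object StructuralOpsSketch {
  // Toy graph (illustrative data): vertex attribute = age, edge attribute = weight.
  def toyGraph(sc: SparkContext): Graph[Int, Int] = {
    val vertices = sc.parallelize(Seq((1L, 10), (2L, 20), (3L, 30)))
    val edges = sc.parallelize(
      Seq(Edge(1L, 2L, 1), Edge(1L, 2L, 4), Edge(2L, 3L, 2)))
    Graph(vertices, edges)
  }

  // Hypothetical helper: collect the edges into a plain Set for inspection.
  def edgeSet(g: Graph[Int, Int]): Set[(Long, Long, Int)] =
    g.edges.map(e => (e.srcId, e.dstId, e.attr)).collect().toSet

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setAppName("structuralOps").setMaster("local[2]")
    val sc = new SparkContext(conf)
    try {
      val g = toyGraph(sc)
      // reverse: every edge points the other way.
      println(edgeSet(g.reverse))
      // subgraph: keep vertices with age >= 20 and the edges between them.
      val sub = g.subgraph(vpred = (id, age) => age >= 20)
      println(edgeSet(sub))
      // mask: restrict g to the vertices and edges that also exist in sub.
      println(edgeSet(g.mask(sub)))
      // groupEdges: merge parallel edges by summing their weights.
      println(edgeSet(
        g.partitionBy(PartitionStrategy.RandomVertexCut).groupEdges(_ + _)))
    } finally {
      sc.stop()
    }
  }
}
```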

class Graph[VD, ED] {

def reverse: Graph[VD, ED]

def subgraph(epred: EdgeTriplet[VD,ED] => Boolean,

vpred: (VertexId, VD) => Boolean): Graph[VD, ED]

def mask[VD2, ED2](other: Graph[VD2, ED2]): Graph[VD, ED]

def groupEdges(merge: (ED, ED) => ED): Graph[VD,ED]

}

import org.apache.log4j.{Level, Logger}

import org.apache.spark.{SparkContext, SparkConf}

import org.apache.spark.graphx._

import org.apache.spark.rdd.RDD

object Test {

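def main(args: Array[String]): Unit = {

// Suppress verbose Spark logging

Logger.getLogger("org.apache.spark").setLevel(Level.WARN)

// Set up the runtime environment

val conf = new SparkConf().setAppName("GraphXTest").setMaster("local[*]")

val sc = new SparkContext(conf)

// Define the vertices and edges: vertices are an Array of (id, attribute) tuples, edges an Array of Edge objects

// Vertex attribute type VD: (String, Int)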

val vertexArray = Array(

(1L, ("Alice", 28)),

(2L, ("Bob", 27)),

(3L, ("Charlie", 65)),

(4L, ("David", 42)),

(5L, ("Ed", 55)),

(6L, ("Fran", 50))

)

// Edge attribute type ED: Int

val edgeArray = Array(

Edge(2L, 1L, 7),

Edge(2L, 4L, 2),

Edge(3L, 2L, 4),

Edge(3L, 6L, 3),

Edge(4L, 1L, 1),

Edge(5L, 2L, 2),

Edge(5L, 3L, 8),

Edge(5L, 6L, 3)

)

// Build vertexRDD and edgeRDD

val vertexRDD: RDD[(VertexId, (String, Int))] = sc.parallelize(vertexArray)

val edgeRDD: RDD[Edge[Int]] = sc.parallelize(edgeArray)

// Build the graph Graph[VD, ED]

val graph: Graph[(String, Int), Int] = Graph(vertexRDD, edgeRDD)

println("**********************************************************")

println("Property operations")

println("**********************************************************")

println("Vertices with age greater than 30:")

graph.vertices.filter { case (id, (name, age)) => age > 30 }.collect.foreach {

case (id, (name, age)) => println(s"$name is $age")

}

graph.triplets.map(t => s"triplet:${t.srcId},${t.srcAttr},${t.dstId},${t.dstAttr},${t.attr}").collect.foreach(println)

// Edge operation: find the edges whose attribute is greater than 5

println("Edges with attribute greater than 5:")

graph.edges.filter(e => e.attr > 5).collect.foreach(e => println(s"${e.srcId} to ${e.dstId} att ${e.attr}"))

println

// Triplet operation; a triplet is ((srcId, srcAttr), (dstId, dstAttr), attr)

println("Triplets whose edge attribute is greater than 5:")

for (triplet <- graph.triplets.filter(t => t.attr > 5).collect) {

println(s"${triplet.srcAttr._1} likes ${triplet.dstAttr._1}")

}

println

// Degree operations

println("Maximum out-degree, in-degree, and degree in the graph:")

def max(a: (VertexId, Int), b: (VertexId, Int)): (VertexId, Int) = {

if (a._2 > b._2) a else b

}

println("max of outDegrees:" + graph.outDegrees.reduce(max) + ", max of inDegrees:" + graph.inDegrees.reduce(max) + ", max of Degrees:" +

graph.degrees.reduce(max))

println

println("**********************************************************")

println("Transformation operations")

println("**********************************************************")

println("Vertex transformation, age + 10:")

graph.mapVertices { case (id, (name, age)) => (name, age + 10) // return only the new attribute; the vertex ID is kept by mapVertices

}.vertices.collect.foreach(v => println(s"${v._2._1} is ${v._2._2}"))

println

println("Edge transformation, edge attribute * 2:")

graph.mapEdges(e => e.attr * 2).edges.collect.foreach(e => println(s"${e.srcId} to ${e.dstId} att ${e.attr}"))

println

println("**********************************************************")

println("Structural operations")

println("**********************************************************")

println("Subgraph of vertices with age >= 30:")

val subGraph = graph.subgraph(vpred = (id, vd) => vd._2 >= 30)

println("All vertices of the subgraph:")

subGraph.vertices.collect.foreach(v => println(s"${v._2._1} is ${v._2._2}"))

println

println("All edges of the subgraph:")

subGraph.edges.collect.foreach(e => println(s"${e.srcId} to ${e.dstId} att ${e.attr}"))

println

println("**********************************************************")

println("Join operations")

println("**********************************************************")

val inDegrees: VertexRDD[Int] = graph.inDegrees

case class User(name: String, age: Int, inDeg: Int, outDeg: Int)

// Create a new graph whose vertex attribute type VD is User, converting the attributes of graph

val initialUserGraph: Graph[User, Int] = graph.mapVertices { case (id, (name, age))

=> User(name, age, 0, 0)

}

// Join initialUserGraph with its inDegrees and outDegrees (both RDDs) to fill in the inDeg and outDeg fields

val userGraph = initialUserGraph.outerJoinVertices(initialUserGraph.inDegrees) {

case (id, u, inDegOpt) => User(u.name, u.age, inDegOpt.getOrElse(0), u.outDeg)

}.outerJoinVertices(initialUserGraph.outDegrees) {

case (id, u, outDegOpt) => User(u.name, u.age, u.inDeg, outDegOpt.getOrElse(0))

}

println("Vertex attributes after the join:")

userGraph.vertices.collect.foreach(v => println(s"${v._2.name} inDeg: ${v._2.inDeg} outDeg: ${v._2.outDeg}"))

println

println("People whose in-degree equals their out-degree:")

userGraph.vertices.filter {

case (id, u) => u.inDeg == u.outDeg

}.collect.foreach {

case (id, property) => println(property.name)

}

println

println("**********************************************************")

println("Aggregation operations")

println("**********************************************************")

println("Oldest follower of each user:")

val oldestFollower: VertexRDD[(String, Int)] = userGraph.aggregateMessages[(String, Int)](

// Send the source vertex's attributes to the destination vertex (the map step)

et => et.sendToDst((et.srcAttr.name, et.srcAttr.age)),

// Keep the oldest follower (the reduce step)

(a, b) => if (a._2 > b._2) a else b

)

userGraph.vertices.leftJoin(oldestFollower) { (id, user, optOldestFollower) =>

optOldestFollower match {

case None => s"${user.name} does not have any followers."

case Some((name, age)) => s"${name} is the oldest follower of ${user.name}."

}

}.collect.foreach { case (id, str) => println(str) }

println

println("**********************************************************")

println("Pregel operations")

println("**********************************************************")

println("Find the farthest source for each vertex, calling the Pregel object directly:")

val g = Pregel(graph.mapVertices((vid, vd) => (0, vid)), (0, Long.MinValue), activeDirection = EdgeDirection.Out)(

(id: VertexId, vd: (Int, Long), a: (Int, Long)) => math.max(vd._1, a._1) match {

case vd._1 => vd

case a._1 => a

},

(et: EdgeTriplet[(Int, Long), Int]) => Iterator((et.dstId, (et.srcAttr._1 + 1, et.srcAttr._2))), // count steps only, matching the printed message and g2 below

(a: (Int, Long), b: (Int, Long)) => math.max(a._1, b._1) match {

case a._1 => a

case b._1 => b

}

)

g.vertices.collect.foreach(m => println(s"From source vertex ${m._2._2} to vertex ${m._1}: at most ${m._2._1} steps"))

// Method-call style: the same computation via graph.pregel

val g2 = graph.mapVertices((vid, vd) => (0, vid)).pregel((0, Long.MinValue), activeDirection = EdgeDirection.Out)(

(id: VertexId, vd: (Int, Long), a: (Int, Long)) => math.max(vd._1, a._1) match {

case vd._1 => vd

case a._1 => a

},

(et: EdgeTriplet[(Int, Long), Int]) => Iterator((et.dstId, (et.srcAttr._1 + 1, et.srcAttr._2))),

(a: (Int, Long), b: (Int, Long)) => math.max(a._1, b._1) match {

case a._1 => a

case b._1 => b

}

)

// g2.vertices.collect.foreach(m => println(s"From source vertex ${m._2._2} to vertex ${m._1}: at most ${m._2._1} steps"))

sc.stop()

}

}
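The Pregel calls in the program above always send a message along every active edge. A more common pattern is to send a message only when it improves the destination's value, so that the computation goes quiet once nothing changes. The following is a minimal sketch of that pattern, single-source shortest paths, assuming spark-core and spark-graphx are on the classpath. It reuses the edge list of the Test program with the edge attribute read as a distance; the object name PregelSsspSketch and the choice of vertex 5 as the source are illustrative assumptions, not part of the original program.

```scala
import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.graphx.{Edge, Graph, VertexId}

object PregelSsspSketch {
  def shortestPaths(sc: SparkContext, sourceId: VertexId): Map[VertexId, Double] = {
    // Same edges as the Test program; the Int attribute is treated as a distance.
    val edges = sc.parallelize(Seq(
      Edge(2L, 1L, 7), Edge(2L, 4L, 2), Edge(3L, 2L, 4), Edge(3L, 6L, 3),
      Edge(4L, 1L, 1), Edge(5L, 2L, 2), Edge(5L, 3L, 8), Edge(5L, 6L, 3)))
    val graph: Graph[Int, Int] = Graph.fromEdges(edges, 0)

    // Distance 0 at the source, infinity everywhere else.
    val initial = graph.mapVertices((id, _) =>
      if (id == sourceId) 0.0 else Double.PositiveInfinity)

    val sssp = initial.pregel(Double.PositiveInfinity)(
      // vprog: keep the smaller of the current and the received distance.
      (id, dist, newDist) => math.min(dist, newDist),
      // sendMsg: only notify the destination if the path through src is shorter.
      triplet => {
        val candidate = triplet.srcAttr + triplet.attr
        if (candidate < triplet.dstAttr) Iterator((triplet.dstId, candidate))
        else Iterator.empty
      },
      // mergeMsg: of several incoming candidates, keep the shortest.
      (a, b) => math.min(a, b))

    sssp.vertices.collect().toMap
  }

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setAppName("pregelSssp").setMaster("local[2]")
    val sc = new SparkContext(conf)
    try {
      shortestPaths(sc, 5L).toSeq.sortBy(_._1).foreach(println)
    } finally {
      sc.stop()
    }
  }
}
```

Each round of vprog, sendMsg, and mergeMsg corresponds to one superstep; the loop ends when no vertex receives a message.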