Unity Tools: A Brief Guide to Microsoft Azure Continuous Speech Recognition (ASR)

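I. Overview

The Unity Tools series collects modules I have put together that may be useful in game development. Each module can be used on its own.

This post briefly covers continuous speech recognition (speech to text) with Microsoft Azure in Unity. If you know a better approach, feel free to leave a comment.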

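Sign up on the official site:

Azure for Students - Free Account Credit | Microsoft Azure

Official technical documentation:

Official speech-to-text (ASR) documentation:

Speech to text quickstart - Speech service - Azure AI services | Microsoft Learn

Azure Speech SDK package for Unity:

Install the Speech SDK - Azure Cognitive Services | Microsoft Learn

Direct SDK download link: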

https://aka.ms/csspeech/unitypackage

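II. How It Works

1. Apply on the official site to obtain the SPEECH_KEY and SPEECH_REGION used for speech recognition.

2. Speech recognition uses the microphone, so mobile builds must request microphone permission.

3. Start speech recognition and subscribe to the recognizer's events to receive the recognition results.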
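Step 3 relies on two kinds of results: interim hypotheses raised while the user is still speaking, and a final result once a phrase is complete. The sketch below shows one way to combine them for display. `TranscriptBuffer` and its method names are hypothetical helpers introduced here for illustration; they are not part of the Speech SDK or of the scripts in this post.

```csharp
using System;
using System.Text;

/// <summary>
/// Hypothetical helper (not part of the Speech SDK): keeps finalized text
/// and the latest interim hypothesis separately.
/// </summary>
public class TranscriptBuffer
{
    private readonly StringBuilder m_Final = new StringBuilder();
    private string m_Interim = string.Empty;

    /// <summary>
    /// Call from a "recognizing" callback: each interim result replaces the previous one.
    /// </summary>
    public void OnInterim(string text)
    {
        m_Interim = text ?? string.Empty;
    }

    /// <summary>
    /// Call from a "recognized" callback: append the final text and clear the interim text.
    /// </summary>
    public void OnFinal(string text)
    {
        if (!string.IsNullOrEmpty(text))
        {
            m_Final.Append(text);
        }
        m_Interim = string.Empty;
    }

    /// <summary>
    /// Text to show in the UI: everything finalized so far plus the current interim text.
    /// </summary>
    public string DisplayText => m_Final.ToString() + m_Interim;
}
```

Under these assumptions, a "recognizing" callback would call `OnInterim`, a "recognized" callback would call `OnFinal`, and the UI would show `DisplayText`, so partial text appears while the user is still speaking.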

III. Notes

1. If you notice stuttering, check how work is divided between the main thread and worker threads. Starting recognition off the main thread and switching back to it for UI updates may reduce the stutter.

2. On desktop (for example Windows) the app can run without requesting microphone permission, but on mobile (for example Android) the permission must be requested. Otherwise recognition fails to start and may throw "Exception with an error code: 0x15". The required AndroidManifest.xml entry and the stack trace seen without it:

<uses-permission android:name="android.permission.RECORD_AUDIO"/>

System.ApplicationException: Exception with an error code: 0x15
  at Microsoft.CognitiveServices.Speech.Internal.SpxExceptionThrower.ThrowIfFail (System.IntPtr hr) [0x00000] in <00000000000000000000000000000000>:0
  at Microsoft.CognitiveServices.Speech.Recognizer.StartContinuousRecognition () [0x00000] in <00000000000000000000000000000000>:0
  at Microsoft.CognitiveServices.Speech.Recognizer.DoAsyncRecognitionAction (System.Action recoImplAction) [0x00000] in <00000000000000000000000000000000>:0
  at System.Threading.Tasks.Task.Execute () [0x00000] in <00000000000000000000000000000000>:0
  at System.Threading.ExecutionContext.RunInternal (System.Threading.ExecutionContext executionContext, System.Threading.ContextCallback callback, System.Object state, System.Boolean preserveSyncCtx) [0x00000] in <00000000000000000000000000000000>:0
  at System.Threading.Tasks.Task.ExecuteWithThreadLocal (System.Threading.Tasks.Task& currentTaskSlot) [0x00000] in
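Note 1 above mentions switching between the main thread and worker threads. The scripts in this post do that through a `Loom` helper whose implementation is not listed here. As a rough idea of what such a helper does, here is a minimal sketch of a main-thread dispatcher. The class name `MainThreadDispatcher` and its methods are assumptions made for illustration; this is not the actual `Loom` code.

```csharp
using System;
using System.Collections.Concurrent;

/// <summary>
/// Hypothetical stand-in for a Loom-style helper: worker threads enqueue
/// actions, and the main thread runs them later.
/// </summary>
public class MainThreadDispatcher
{
    // Thread-safe queue, so Enqueue can be called from any thread without locks.
    private readonly ConcurrentQueue<Action> m_Pending = new ConcurrentQueue<Action>();

    /// <summary>
    /// Safe to call from any thread, for example from a recognition callback.
    /// </summary>
    public void Enqueue(Action action)
    {
        if (action == null) throw new ArgumentNullException(nameof(action));
        m_Pending.Enqueue(action);
    }

    /// <summary>
    /// Call on the main thread only. Runs every queued action and
    /// returns how many were executed.
    /// </summary>
    public int Drain()
    {
        int executed = 0;
        while (m_Pending.TryDequeue(out Action action))
        {
            action();
            executed++;
        }
        return executed;
    }
}
```

In a Unity project, `Drain()` would typically be called once per frame from a MonoBehaviour's `Update()`, so that code touching UI objects such as a `Text` component runs on the main thread.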

四、实现步骤

1、下载好SDK 导入

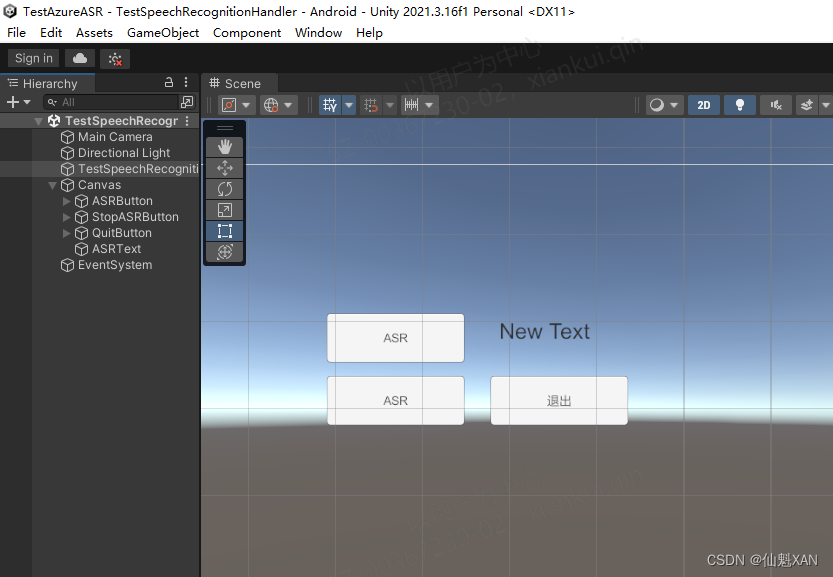

2、简单的搭建场景

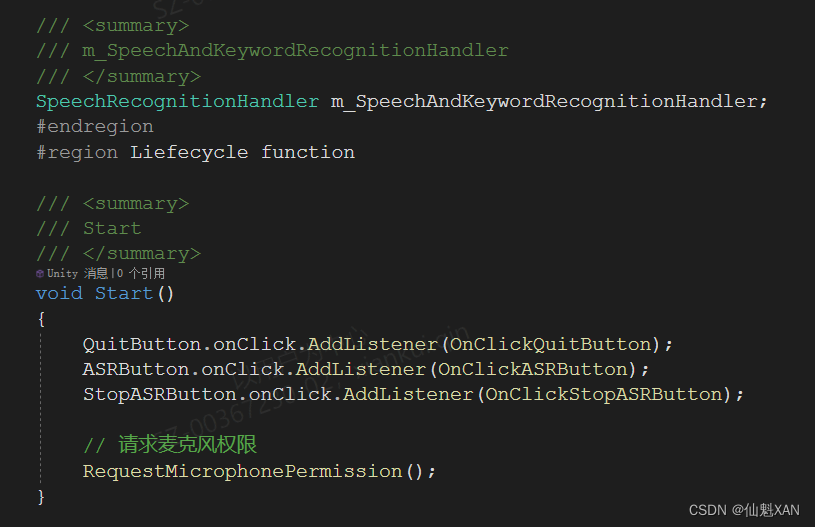

3、编写对应脚本,测试语音识别功能

4、把测试脚本添加到场景中,并赋值

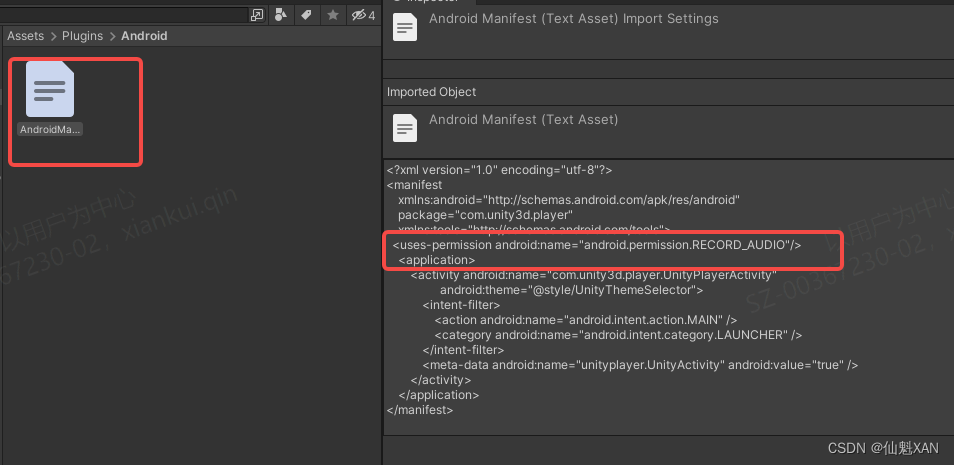

5、如果移动端,例如 Android 端,勾选如下,添加麦克风权限

<uses-permission android:name="android.permission.RECORD_AUDIO"/>

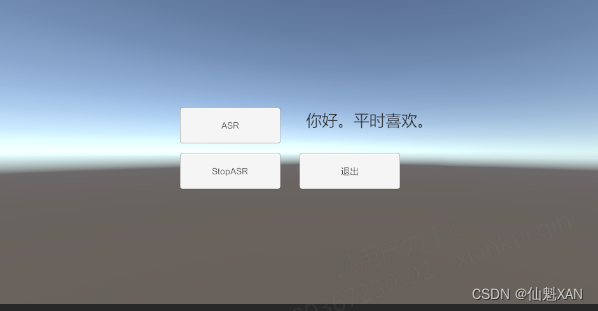

5、运行,点击对应按钮,开始识别,Console 中可以看到识别结果

V. Key Scripts

1. TestSpeechRecognitionHandler

using UnityEngine;
using UnityEngine.Android;
using UnityEngine.UI;

public class TestSpeechRecognitionHandler : MonoBehaviour
{
    #region Data

    /// <summary>
    /// Buttons and text
    /// </summary>
    public Button QuitButton;
    public Button ASRButton;
    public Button StopASRButton;
    public Text ASRText;

    /// <summary>
    /// m_SpeechAndKeywordRecognitionHandler
    /// </summary>
    SpeechRecognitionHandler m_SpeechAndKeywordRecognitionHandler;

    #endregion

    #region Lifecycle function

    /// <summary>
    /// Start
    /// </summary>
    void Start()
    {
        QuitButton.onClick.AddListener(OnClickQuitButton);
        ASRButton.onClick.AddListener(OnClickASRButton);
        StopASRButton.onClick.AddListener(OnClickStopASRButton);

        // Request microphone permission
        RequestMicrophonePermission();
    }

    /// <summary>
    /// Application quit
    /// </summary>
    async void OnApplicationQuit() {
        // Guard against quitting before the handler has been created
        if (m_SpeechAndKeywordRecognitionHandler != null)
        {
            await m_SpeechAndKeywordRecognitionHandler.StopContinuousRecognizer();
        }
    }

    #endregion

    #region Private function

    /// <summary>
    /// RequestMicrophonePermission
    /// </summary>
    void RequestMicrophonePermission()
    {
        // Check whether the current platform is Android
        if (Application.platform == RuntimePlatform.Android)
        {
            // Check whether microphone permission has already been granted
            if (!Permission.HasUserAuthorizedPermission(Permission.Microphone))
            {
                // Not granted yet: ask the user for permission
                Permission.RequestUserPermission(Permission.Microphone);
            }
        }
        else
        {
            // Other platforms: run any platform-specific logic here
            Debug.LogWarning("Microphone permission is not needed on this platform.");
        }

        SpeechInitialized();
    }

    /// <summary>
    /// SpeechInitialized
    /// </summary>
    private void SpeechInitialized() {
        ASRText.text = "";
        m_SpeechAndKeywordRecognitionHandler = new SpeechRecognitionHandler();
        m_SpeechAndKeywordRecognitionHandler.onRecognizingAction = (str) => { Debug.Log("onRecognizingAction: " + str); };
        // UI objects must be updated on the main thread
        m_SpeechAndKeywordRecognitionHandler.onRecognizedSpeechAction = (str) => { Loom.QueueOnMainThread(() => ASRText.text += str); Debug.Log("onRecognizedSpeechAction: " + str); };
        m_SpeechAndKeywordRecognitionHandler.onErrorAction = (str) => { Debug.Log("onErrorAction: " + str); };
        m_SpeechAndKeywordRecognitionHandler.Initialized();
    }

    /// <summary>
    /// OnClickQuitButton
    /// </summary>
    private void OnClickQuitButton() {
#if UNITY_EDITOR
        UnityEditor.EditorApplication.isPlaying = false;
#else
        Application.Quit();
#endif
    }

    /// <summary>
    /// OnClickASRButton
    /// </summary>
    private void OnClickASRButton() {
        m_SpeechAndKeywordRecognitionHandler.StartContinuousRecognizer();
    }

    /// <summary>
    /// OnClickStopASRButton
    /// </summary>
    private async void OnClickStopASRButton()
    {
        await m_SpeechAndKeywordRecognitionHandler.StopContinuousRecognizer();
    }

    #endregion
}

2. SpeechRecognitionHandler

using UnityEngine;
using Microsoft.CognitiveServices.Speech;
using Microsoft.CognitiveServices.Speech.Audio;
using System;
using Task = System.Threading.Tasks.Task;

/// <summary>
/// Continuous speech recognition (speech to text)
/// </summary>
public class SpeechRecognitionHandler
{
    #region Data

    /// <summary>
    /// Log tag
    /// </summary>
    const string TAG = "[SpeechRecognitionHandler] ";

    /// <summary>
    /// Recognition configuration
    /// </summary>
    private SpeechConfig m_SpeechConfig;

    /// <summary>
    /// Audio configuration
    /// </summary>
    private AudioConfig m_AudioConfig;

    /// <summary>
    /// Speech recognizer
    /// </summary>
    private SpeechRecognizer m_SpeechRecognizer;

    /// <summary>
    /// ASR configuration
    /// </summary>
    private ASRConfig m_ASRConfig;

    /// <summary>
    /// Recognition events
    /// </summary>
    public Action<string> onRecognizingAction;
    public Action<string> onRecognizedSpeechAction;
    public Action<string> onErrorAction;
    public Action<string> onSessionStoppedAction;

    #endregion

    #region Public function

    /// <summary>
    /// Initialize
    /// </summary>
    /// <returns></returns>
    public async void Initialized()
    {
        m_ASRConfig = new ASRConfig();
        Debug.Log(TAG + "m_ASRConfig.AZURE_SPEECH_RECOGNITION_LANGUAGE " + m_ASRConfig.AZURE_SPEECH_RECOGNITION_LANGUAGE);
        Debug.Log(TAG + "m_ASRConfig.AZURE_SPEECH_REGION " + m_ASRConfig.AZURE_SPEECH_REGION);
        m_SpeechConfig = SpeechConfig.FromSubscription(m_ASRConfig.AZURE_SPEECH_KEY, m_ASRConfig.AZURE_SPEECH_REGION);
        m_SpeechConfig.SpeechRecognitionLanguage = m_ASRConfig.AZURE_SPEECH_RECOGNITION_LANGUAGE;
        m_AudioConfig = AudioConfig.FromDefaultMicrophoneInput();
        Debug.Log(TAG + " Initialized 2 ====");

        // Optional delay; adjust or remove as needed
        await Task.Delay(100);
    }

    #endregion

    #region Private function

    /// <summary>
    /// Subscribe to the recognizer's callback events
    /// </summary>
    private void SetRecognizeCallback()
    {
        Debug.Log(TAG + " SetRecognizeCallback == ");
        if (m_SpeechRecognizer != null)
        {
            m_SpeechRecognizer.Recognizing += OnRecognizing;
            m_SpeechRecognizer.Recognized += OnRecognized;
            m_SpeechRecognizer.Canceled += OnCanceled;
            m_SpeechRecognizer.SessionStopped += OnSessionStopped;
            Debug.Log(TAG + " SetRecognizeCallback OK ");
        }
    }

    #endregion

    #region Callback

    /// <summary>
    /// Recognition in progress (interim result)
    /// </summary>
    /// <param name="s"></param>
    /// <param name="e"></param>
    private void OnRecognizing(object s, SpeechRecognitionEventArgs e)
    {
        Debug.Log(TAG + "RecognizingSpeech:" + e.Result.Text + " :[e.Result.Reason]:" + e.Result.Reason);
        if (e.Result.Reason == ResultReason.RecognizingSpeech)
        {
            // Parentheses are required: without them the whole concatenated string is compared to null
            Debug.Log(TAG + " Trigger onRecognizingAction is null :" + (onRecognizingAction == null));
            onRecognizingAction?.Invoke(e.Result.Text);
        }
    }

    /// <summary>
    /// Recognition finished (final result)
    /// </summary>
    /// <param name="s"></param>
    /// <param name="e"></param>
    private void OnRecognized(object s, SpeechRecognitionEventArgs e)
    {
        Debug.Log(TAG + "RecognizedSpeech:" + e.Result.Text + " :[e.Result.Reason]:" + e.Result.Reason);
        if (e.Result.Reason == ResultReason.RecognizedSpeech)
        {
            bool tmp = onRecognizedSpeechAction == null;
            Debug.Log(TAG + " Trigger onRecognizedSpeechAction is null :" + tmp);
            onRecognizedSpeechAction?.Invoke(e.Result.Text);
        }
    }

    /// <summary>
    /// Recognition canceled
    /// </summary>
    /// <param name="s"></param>
    /// <param name="e"></param>
    private void OnCanceled(object s, SpeechRecognitionCanceledEventArgs e)
    {
        Debug.LogFormat(TAG + "Canceled: Reason={0}", e.Reason);
        if (e.Reason == CancellationReason.Error)
        {
            onErrorAction?.Invoke(e.ErrorDetails);
        }
    }

    /// <summary>
    /// Session stopped
    /// </summary>
    /// <param name="s"></param>
    /// <param name="e"></param>
    private void OnSessionStopped(object s, SessionEventArgs e)
    {
        Debug.Log(TAG + "Session stopped event.");
        onSessionStoppedAction?.Invoke("Session stopped event.");
    }

    #endregion

    #region Continuous speech-to-text recognition

    /// <summary>
    /// Start continuous speech-to-text recognition
    /// </summary>
    public void StartContinuousRecognizer()
    {
        Debug.LogWarning(TAG + "StartContinuousRecognizer");
        try
        {
            // Run on a worker thread (adjust as needed)
            Loom.RunAsync(async () => {
                try
                {
                    // Dispose any previous recognizer before creating a new one
                    if (m_SpeechRecognizer != null)
                    {
                        m_SpeechRecognizer.Dispose();
                        m_SpeechRecognizer = null;
                    }

                    if (m_SpeechRecognizer == null)
                    {
                        m_SpeechRecognizer = new SpeechRecognizer(m_SpeechConfig, m_AudioConfig);
                        SetRecognizeCallback();
                    }

                    await m_SpeechRecognizer.StartContinuousRecognitionAsync().ConfigureAwait(false);

                    Loom.QueueOnMainThread(() => {
                        Debug.LogWarning(TAG + "StartContinuousRecognizer QueueOnMainThread ok");
                    });
                    Debug.LogWarning(TAG + "StartContinuousRecognizer RunAsync ok");
                }
                catch (Exception e)
                {
                    Loom.QueueOnMainThread(() =>
                    {
                        Debug.LogError(TAG + " StartContinuousRecognizer 0 " + e);
                    });
                }
            });
        }
        catch (Exception e)
        {
            Debug.LogError(TAG + " StartContinuousRecognizer 1 " + e);
        }
    }

    /// <summary>
    /// Stop continuous speech-to-text recognition
    /// </summary>
    public async Task StopContinuousRecognizer()
    {
        try
        {
            if (m_SpeechRecognizer != null)
            {
                await m_SpeechRecognizer.StopContinuousRecognitionAsync().ConfigureAwait(false);
                //m_SpeechRecognizer.Dispose();
                //m_SpeechRecognizer = null;
                Debug.LogWarning(TAG + " StopContinuousRecognizer");
            }
        }
        catch (Exception e)
        {
            Debug.LogError(TAG + " StopContinuousRecognizer Exception : " + e);
        }
    }

    #endregion
}

3. ASRConfig

public class ASRConfig
{
    #region Azure ASR

    /// <summary>
    /// AZURE_SPEECH_KEY
    /// </summary>
    public virtual string AZURE_SPEECH_KEY { get; } = @"You_Key";

    /// <summary>
    /// AZURE_SPEECH_REGION
    /// </summary>
    public virtual string AZURE_SPEECH_REGION { get; } = @"eastasia";

    /// <summary>
    /// AZURE_SPEECH_RECOGNITION_LANGUAGE
    /// </summary>
    public virtual string AZURE_SPEECH_RECOGNITION_LANGUAGE { get; } = @"zh-CN";

    #endregion
}
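Because the `ASRConfig` properties are `virtual`, a subclass can supply the key and region from somewhere other than source code. The sketch below reads them from the `SPEECH_KEY` and `SPEECH_REGION` environment variables and falls back to the defaults when they are not set. `EnvASRConfig` is a hypothetical class added for illustration. The copy of `ASRConfig` is repeated only so that the snippet compiles on its own; in the project, drop that copy. Environment variables are mainly practical in the Editor or desktop builds, so treat this as one possible approach rather than a recommendation for mobile.

```csharp
using System;

// Repeated from the listing above so this snippet is self-contained.
public class ASRConfig
{
    public virtual string AZURE_SPEECH_KEY { get; } = @"You_Key";
    public virtual string AZURE_SPEECH_REGION { get; } = @"eastasia";
    public virtual string AZURE_SPEECH_RECOGNITION_LANGUAGE { get; } = @"zh-CN";
}

/// <summary>
/// Hypothetical subclass: prefers environment variables over hard-coded values.
/// </summary>
public class EnvASRConfig : ASRConfig
{
    public override string AZURE_SPEECH_KEY =>
        FromEnv("SPEECH_KEY", base.AZURE_SPEECH_KEY);

    public override string AZURE_SPEECH_REGION =>
        FromEnv("SPEECH_REGION", base.AZURE_SPEECH_REGION);

    // Returns the environment variable's value, or the fallback when it is missing or empty.
    private static string FromEnv(string name, string fallback)
    {
        string value = Environment.GetEnvironmentVariable(name);
        return string.IsNullOrEmpty(value) ? fallback : value;
    }
}
```

To try it, `Initialized()` in `SpeechRecognitionHandler` would create `new EnvASRConfig()` instead of `new ASRConfig()`; the rest of the handler stays unchanged because it only reads the three properties.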