Code:

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

# Load the dataset from the MNISt_data folder under the current directory
mnist = input_data.read_data_sets("MNISt_data", one_hot=True)

Output:

Extracting MNISt_data/train-images-idx3-ubyte.gz
Extracting MNISt_data/train-labels-idx1-ubyte.gz
Extracting MNISt_data/t10k-images-idx3-ubyte.gz
Extracting MNISt_data/t10k-labels-idx1-ubyte.gz
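Because the data is loaded with one_hot=True, each label arrives as a length-10 vector with a single 1.0 at the index of the digit, which is why the label placeholder later has shape [None, 10]. The following is only a minimal pure-Python sketch of that encoding; the helper name one_hot is hypothetical and is not part of TensorFlow or of the original code.

```python
def one_hot(label, num_classes=10):
    """Return a one-hot list for an integer class label (hypothetical helper)."""
    if not 0 <= label < num_classes:
        raise ValueError("label must be in [0, num_classes)")
    vec = [0.0] * num_classes
    vec[label] = 1.0  # mark the position of the true digit
    return vec


if __name__ == "__main__":
    # The digit 3 becomes a vector whose only non-zero entry is at index 3
    print(one_hot(3))
```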

Code:

# Size of each batch; a batch is fed to the network as one matrix
batch_size = 100

# Number of batches in one epoch
n_batch = mnist.train.num_examples // batch_size

# Name scopes group related nodes in the TensorBoard graph
with tf.name_scope('input'):
    # Two placeholders: flattened images (28 x 28 = 784) and one-hot labels
    x = tf.placeholder(tf.float32, [None, 784], name='x_input')
    y = tf.placeholder(tf.float32, [None, 10], name='y_input')

with tf.name_scope('layer'):
    # A simple neural network: 784 inputs, no hidden layer, 10 output neurons
    with tf.name_scope('weights'):
        W = tf.Variable(tf.zeros([784, 10]), name='W')
    with tf.name_scope('biases'):
        b = tf.Variable(tf.zeros([1, 10]), name='b')
    with tf.name_scope('wx_plus_b'):
        wx_plus_b = tf.matmul(x, W) + b
    with tf.name_scope('softmax'):
        prediction = tf.nn.softmax(wx_plus_b)

with tf.name_scope('loss'):
    # Quadratic cost function
    loss = tf.reduce_mean(tf.square(y - prediction))
    # Cross-entropy alternative. softmax_cross_entropy_with_logits applies
    # softmax internally, so it takes the raw wx_plus_b rather than prediction.
    # loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=wx_plus_b))

with tf.name_scope('train'):
    # Gradient descent with a learning rate of 0.2
    train_step = tf.train.GradientDescentOptimizer(0.2).minimize(loss)

# Initialize the variables
init = tf.global_variables_initializer()

with tf.name_scope('accuracy'):
    with tf.name_scope('correct_prediction'):
        # tf.argmax(..., 1) returns the index of the largest value in each row.
        # tf.equal yields True where the label index matches the predicted
        # index and False otherwise; the result is a boolean tensor.
        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(prediction, 1))
    with tf.name_scope('accuracy'):
        # Cast the booleans to floats and average them to get the accuracy
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

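with tf.Session() as sess:
    sess.run(init)
    # Write the graph to the logs folder under the current directory
    writer = tf.summary.FileWriter('logs/', sess.graph)
    # Train for 1 epoch in total
    for epoch in range(1):
        # Each epoch consists of n_batch batches
        for batch in range(n_batch):
            # Fetch one batch
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)
            sess.run(train_step, feed_dict={x: batch_xs, y: batch_ys})
        # Accuracy on the test set after each epoch
        acc = sess.run(accuracy, feed_dict={x: mnist.test.images, y: mnist.test.labels})
        print("Iter " + str(epoch) + ", Testing Accuracy " + str(acc))
    # Make sure the graph is flushed to disk before the session ends
    writer.close()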
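To make the softmax, loss, and accuracy nodes more concrete, here is a rough pure-Python sketch of the same arithmetic for individual samples. It does not use TensorFlow, and the function names (softmax, quadratic_cost, cross_entropy, accuracy) are illustrative assumptions rather than anything from the code above. Since W and b start at zero, the first prediction is uniform, which is the case the demo evaluates.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def quadratic_cost(y, p):
    """Mean squared difference between a one-hot label and a prediction."""
    return sum((a - b) ** 2 for a, b in zip(y, p)) / len(y)


def cross_entropy(y, p):
    """Cross-entropy between a one-hot label and a probability vector."""
    return -sum(a * math.log(b) for a, b in zip(y, p) if a > 0)


def argmax(values):
    """Index of the largest value in a list."""
    return max(range(len(values)), key=values.__getitem__)


def accuracy(labels, predictions):
    """Fraction of samples whose predicted index matches the label index."""
    hits = [argmax(y) == argmax(p) for y, p in zip(labels, predictions)]
    return sum(hits) / len(hits)


if __name__ == "__main__":
    label = [0.0] * 10
    label[3] = 1.0
    # All-zero scores, as produced by zero-initialized W and b
    pred = softmax([0.0] * 10)
    print("prediction:", pred)
    print("quadratic cost:", quadratic_cost(label, pred))
    print("cross-entropy:", cross_entropy(label, pred))
```

The real graph averages these quantities over a whole batch at once; the sketch only shows the per-sample calculation.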

On the command line (make sure you switch to the current directory, the one containing the logs folder, first):

tensorboard --logdir=logs

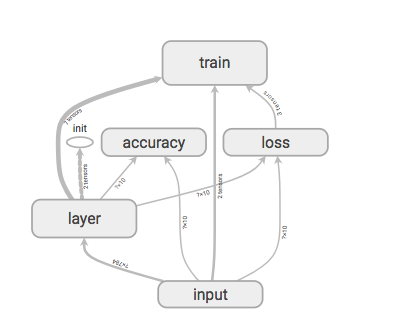

Result: