1、安装系统

安装 Ubuntu 22.04 LTS 系统到服务器并换源

2、配置网络连接以及hosts

确保机器可以访问互联网,并向/etc/hosts文件添加以下内容:

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

使hosts配置生效:

sudo netplan apply

3、设置root密码、开启ssh服务、允许远程登录root

设置root密码:

sudo passwd root

sudo su

开启ssh服务:

apt install ssh

systemctl start ssh

systemctl enable ssh

systemctl is-enabled ssh

允许远程登录root:

apt install vim

vim /etc/ssh/sshd_config

修改其中PermitRootLogin属性为yes:

PermitRootLogin yes

重启:

reboot

4、查看ip、检查是否可以远程登录

apt install net-tools

ifconfig

5、更新包

apt update && apt upgrade

6、安装 docker

安装docker:

sudo apt-get update

sudo apt-get install docker.io -y

sudo systemctl enable --now docker

配置docker使用systemd:

在/etc/docker/daemon.json中添加如下内容:

{

"exec-opts":["native.cgroupdriver=systemd"]

}

7、关闭 swap

sudo swapoff -a

注释/etc/fstab文件中以/swap开头的那行

ps: 22.04 LTS 默认加载了 br_netfilter 模块

8、安装 cri-dockerd

检查github.com/Mirantis/cri-dockerd的最新发行版本:

cd ~

wget https://github.com/Mirantis/cri-dockerd/releases/download/v0.3.4/cri-dockerd_0.3.4.3-0.ubuntu-jammy_amd64.deb

dpkg -i cri-dockerd_0.3.4.3-0.ubuntu-jammy_amd64.deb

ps:连接不上可以找github加速站,如:

wget http://ghproxy.com/github.com/Mirantis/cri-dockerd/releases/download/v0.3.4/cri-dockerd_0.3.4.3-0.ubuntu-jammy_amd64.deb

启动并配置开机启动:

sudo systemctl enable --now cri-docker.service

sudo systemctl enable --now cri-docker.socket

9、安装 kubeadm、kubelet、kubectl

安装所需包:

sudo apt-get update

sudo apt-get install -y apt-transport-https ca-certificates curl

配置密钥:

curl -fsSL https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | sudo gpg --dearmor -o /etc/apt/keyrings/kubernetes-archive-keyring.gpg

配置源:

echo "deb [signed-by=/etc/apt/keyrings/kubernetes-archive-keyring.gpg] http://mirrors.aliyun.com/kubernetes/apt kubernetes-xenial main" | sudo tee /etc/apt/sources.list.d/kubernetes.list

*ps:如果安装kubeadm、kubelet、kubectl很慢的话可以换别的源试试,比如清华源:

echo "deb [signed-by=/etc/apt/keyrings/kubernetes-archive-keyring.gpg] https://mirrors.tuna.tsinghua.edu.cn/kubernetes/apt kubernetes-xenial main" | sudo tee /etc/apt/sources.list.d/kubernetes.list

安装kubeadm、kubelet、kubectl:

sudo apt-get update

sudo apt-get install -y kubelet kubeadm kubectl

sudo apt-mark hold kubelet kubeadm kubectl

10、Master节点 kubeadm 初始化前准备工作(重要)

测试 docker image 拉取,由于网络问题测试会失败:

sudo kubeadm config images pull --cri-socket unix:///run/cri-dockerd.sock

查看所需images:

sudo kubeadm config images list

(示例)需要以下镜像:

registry.k8s.io/kube-apiserver:v1.27.4

registry.k8s.io/kube-controller-manager:v1.27.4

registry.k8s.io/kube-scheduler:v1.27.4

registry.k8s.io/kube-proxy:v1.27.4

registry.k8s.io/pause:3.9

registry.k8s.io/etcd:3.5.7-0

registry.k8s.io/coredns/coredns:v1.10.1

手动从国内镜像站 pull 下来:

sudo docker pull registry.aliyuncs.com/google_containers/kube-apiserver:v1.27.4

sudo docker pull registry.aliyuncs.com/google_containers/kube-controller-manager:v1.27.4

sudo docker pull registry.aliyuncs.com/google_containers/kube-scheduler:v1.27.4

sudo docker pull registry.aliyuncs.com/google_containers/kube-proxy:v1.27.4

sudo docker pull registry.aliyuncs.com/google_containers/pause:3.9

sudo docker pull registry.aliyuncs.com/google_containers/etcd:3.5.7-0

# coredns的url需要改变一下,具体随机应变吧

sudo docker pull registry.aliyuncs.com/google_containers/coredns:v1.10.1

查看docker images列表确认无误:

sudo docker images

给 pull 下来的镜像打上 kubeadm 需要的 tag:

sudo docker tag registry.aliyuncs.com/google_containers/kube-apiserver:v1.27.4 registry.k8s.io/kube-apiserver:v1.27.4

sudo docker tag registry.aliyuncs.com/google_containers/kube-controller-manager:v1.27.4 registry.k8s.io/kube-controller-manager:v1.27.4

sudo docker tag registry.aliyuncs.com/google_containers/kube-scheduler:v1.27.4 registry.k8s.io/kube-scheduler:v1.27.4

sudo docker tag registry.aliyuncs.com/google_containers/kube-proxy:v1.27.4 registry.k8s.io/kube-proxy:v1.27.4

sudo docker tag registry.aliyuncs.com/google_containers/pause:3.9 registry.k8s.io/pause:3.9

sudo docker tag registry.aliyuncs.com/google_containers/etcd:3.5.7-0 registry.k8s.io/etcd:3.5.7-0

sudo docker tag registry.aliyuncs.com/google_containers/coredns:v1.10.1 registry.k8s.io/coredns/coredns:v1.10.1

导出kubeadm默认配置文件:

cd ~

sudo kubeadm config print init-defaults > kubeadm-init-config.yaml

内容如下:

apiVersion: kubeadm.k8s.io/v1beta3

bootstrapTokens:

- groups:

- system:bootstrappers:kubeadm:default-node-token

token: abcdef.0123456789abcdef

ttl: 24h0m0s

usages:

- signing

- authentication

kind: InitConfiguration

localAPIEndpoint:

advertiseAddress: 1.2.3.4

bindPort: 6443

nodeRegistration:

criSocket: unix:///var/run/containerd/containerd.sock

imagePullPolicy: IfNotPresent

name: node

taints: null

---

apiServer:

timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta3

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controllerManager: {

}

dns: {

}

etcd:

local:

dataDir: /var/lib/etcd

imageRepository: registry.k8s.io

kind: ClusterConfiguration

kubernetesVersion: 1.27.0

networking:

dnsDomain: cluster.local

serviceSubnet: 10.96.0.0/12

scheduler: {

}

需要修改的属性:advertiseAddress criSocket name imageRepository

advertiseAddress: 为master的ip

criSocket: unix:///run/cri-dockerd.sock

name: xa-C92-00 #这里使用host name

imageRepository: registry.aliyuncs.com/google_containers

需要增加的属性:

podSubnet: 10.244.0.0/16

修改后:

apiVersion: kubeadm.k8s.io/v1beta3

bootstrapTokens:

- groups:

- system:bootstrappers:kubeadm:default-node-token

token: abcdef.0123456789abcdef

ttl: 24h0m0s

usages:

- signing

- authentication

kind: InitConfiguration

localAPIEndpoint:

advertiseAddress: 192.168.31.9

bindPort: 6443

nodeRegistration:

criSocket: unix:///run/cri-dockerd.sock

imagePullPolicy: IfNotPresent

name: C92-00-Master

taints: null

---

apiServer:

timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta3

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controllerManager: {

}

dns: {

}

etcd:

local:

dataDir: /var/lib/etcd

imageRepository: registry.aliyuncs.com/google_containers

kind: ClusterConfiguration

kubernetesVersion: 1.27.0

networking:

dnsDomain: cluster.local

serviceSubnet: 10.96.0.0/12

podSubnet: 10.244.0.0/16

scheduler: {

}

11、Master节点 kubeadm 初始化及排错

尝试初始化

# 替换为配置文件具体路径

sudo kubeadm init --config /root/kubeadm-init-config.yaml

报错1:timed out waiting for condition

[init] Using Kubernetes version: v1.27.0

[preflight] Running pre-flight checks

[WARNING Hostname]: hostname "node" could not be reached

[WARNING Hostname]: hostname "node": lookup node on 127.0.0.53:53: server misbehaving

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

W0723 02:07:59.688150 9181 checks.go:835] detected that the sandbox image "registry.k8s.io/pause:3.6" of the container runtime is inconsistent with that used by kubeadm. It is recommended that using "registry.aliyuncs.com/google_containers/pause:3.9" as the CRI sandbox image.

······

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[kubelet-check] Initial timeout of 40s passed.

Unfortunately, an error has occurred:

timed out waiting for the condition

This error is likely caused by:

- The kubelet is not running

- The kubelet is unhealthy due to a misconfiguration of the node in some way (required cgroups disabled)

If you are on a systemd-powered system, you can try to troubleshoot the error with the following commands:

- 'systemctl status kubelet'

- 'journalctl -xeu kubelet'

Additionally, a control plane component may have crashed or exited when started by the container runtime.

To troubleshoot, list all containers using your preferred container runtimes CLI.

Here is one example how you may list all running Kubernetes containers by using crictl:

- 'crictl --runtime-endpoint unix:///run/cri-dockerd.sock ps -a | grep kube | grep -v pause'

Once you have found the failing container, you can inspect its logs with:

- 'crictl --runtime-endpoint unix:///run/cri-dockerd.sock logs CONTAINERID'

error execution phase wait-control-plane: couldn't initialize a Kubernetes cluster

To see the stack trace of this error execute with --v=5 or higher

大概率是pull所需镜像超时导致的,把需要的镜像手动pull下来打tag即可

排错1:

首先查看日志:

sudo journalctl -xeu kubelet

关键内容如下:

7月 23 02:15:33 xa-C92-00 kubelet[9329]: E0723 02:15:33.493930 9329 kuberuntime_sandbox.go:72] "Failed to create sandbox for pod" err="rpc error: code = Unknown desc = failed pulling image \"registry.k8s.io/pause:3.6\": Error response from daemon: Head \"https://europe-west2-docker.pkg.dev/v2/k8s-artifacts-prod/images/pause/manifests/3.6\": dial tcp 142.250.157.82:443: i/o timeout" pod="kube-system/kube-apiserver-node"

7月 23 02:15:33 xa-C92-00 kubelet[9329]: E0723 02:15:33.493995 9329 kuberuntime_manager.go:1122] "CreatePodSandbox for pod failed" err="rpc error: code = Unknown desc = failed pulling image \"registry.k8s.io/pause:3.6\": Error response from daemon: Head \"https://europe-west2-docker.pkg.dev/v2/k8s-artifacts-prod/images/pause/manifests/3.6\": dial tcp 142.250.157.82:443: i/o timeout" pod="kube-system/kube-apiserver-node"

7月 23 02:15:33 xa-C92-00 kubelet[9329]: E0723 02:15:33.494130 9329 pod_workers.go:1294] "Error syncing pod, skipping" err="failed to \"CreatePodSandbox\" for \"kube-apiserver-node_kube-system(14e1efb20b920f675a7fa970063ebe82)\" with CreatePodSandboxError: \"Failed to create sandbox for pod \\\"kube-apiserver-node_kube-system(14e1efb20b920f675a7fa970063ebe82)\\\": rpc error: code = Unknown desc = failed pulling image \\\"registry.k8s.io/pause:3.6\\\": Error response from daemon: Head \\\"https://europe-west2-docker.pkg.dev/v2/k8s-artifacts-prod/images/pause/manifests/3.6\\\": dial tcp 142.250.157.82:443: i/o timeout\"" pod="kube-system/kube-apiserver-node" podUID=14e1efb20b920f675a7fa970063ebe82

7月 23 02:15:34 xa-C92-00 kubelet[9329]: W0723 02:15:34.624010 9329 reflector.go:533] vendor/k8s.io/client-go/informers/factory.go:150: failed to list *v1.RuntimeClass: Get "https://192.168.31.9:6443/apis/node.k8s.io/v1/runtimeclasses?limit=500&resourceVersion=0": dial tcp 192.168.31.9:6443: connect: connection refused

7月 23 02:15:34 xa-C92-00 kubelet[9329]: E0723 02:15:34.624195 9329 reflector.go:148] vendor/k8s.io/client-go/informers/factory.go:150: Failed to watch *v1.RuntimeClass: failed to list *v1.RuntimeClass: Get "https://192.168.31.9:6443/apis/node.k8s.io/v1/runtimeclasses?limit=500&resourceVersion=0": dial tcp 192.168.31.9:6443: connect: connection refused

7月 23 02:15:35 xa-C92-00 kubelet[9329]: W0723 02:15:35.027372 9329 reflector.go:533] vendor/k8s.io/client-go/informers/factory.go:150: failed to list *v1.CSIDriver: Get "https://192.168.31.9:6443/apis/storage.k8s.io/v1/csidrivers?limit=500&resourceVersion=0": dial tcp 192.168.31.9:6443: connect: connection refused

可以定位到问题是failed pulling image \"registry.k8s.io/pause:3.6\",所以重新手动下载该镜像并tag:

sudo docker pull registry.aliyuncs.com/google_containers/pause:3.6

sudo docker tag registry.aliyuncs.com/google_containers/pause:3.6 registry.k8s.io/pause:3.6

重置kubeadm:

sudo kubeadm reset -f --cri-socket unix:///run/cri-dockerd.sock

ps:如此会残留CNI配置、iptables配置、IPVS配置、etcd状态,如果reset后还有问题的话可以手动清理(参考):

# 清理iptables

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

# 清理CNI配置

rm -rf /etc/cni/*

# 清理iptables配置

rm -rf /var/lib/etcd/*

# 清理其他杂项

rm -rf /etc/ceph \

/etc/cni \

/opt/cni \

/run/secrets/kubernetes.io \

/run/calico \

/run/flannel \

/var/lib/calico \

/var/lib/cni \

/var/lib/kubelet \

/var/log/containers \

/var/log/kube-audit \

/var/log/pods \

/var/run/calico \

/usr/libexec/kubernetes

继续重试kubeadm 初始化

# 替换为配置文件具体路径

sudo kubeadm init --config /root/kubeadm-init-config.yaml

如果看到以下内容就成功了:

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.31.9:6443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:d35887853c98044495f91251a878376333870dd7b622161d18b172ebb2284e8d

最后根据上述提示执行命令(实例为root用户):

# 临时生效 如需永久生效可以修改/etc/environment文件添加环境变量

export KUBECONFIG=/etc/kubernetes/admin.conf

12、检查 Master 节点上 Pod 运行状况

查看所有pod:

kubectl get pod -A

可以看到coredns组件一直处于pending状态:

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-7bdc4cb885-5jqgk 0/1 Pending 0 12m

kube-system coredns-7bdc4cb885-n6smf 0/1 Pending 0 12m

kube-system etcd-node 1/1 Running 0 12m

kube-system kube-apiserver-node 1/1 Running 0 12m

kube-system kube-controller-manager-node 1/1 Running 0 12m

kube-system kube-proxy-nbn2r 1/1 Running 0 12m

kube-system kube-scheduler-node 1/1 Running 0 12m

先查看 kubelet 日志:

sudo journalctl -u kubelet -f

kubelet 日志内容如下:

7月 23 02:59:33 xa-C92-00 kubelet[10755]: E0723 02:59:33.780224 10755 kubelet.go:2760] "Container runtime network not ready" networkReady="NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is not ready: cni config uninitialized"

再查看该 Pod 日志:

# 替换具体pod name; 记得指定namespace;

sudo kubectl logs coredns-7bdc4cb885-5jqgk --namespace=kube-system

Pod 日志内容如下:

E0723 02:57:34.887767 11558 memcache.go:265] couldn't get current server API group list: Get "http://localhost:8080/api?timeout=32s": dial tcp 127.0.0.1:8080: connect: connection refused

根据network plugin is not ready: cni config uninitialized可以知道是尚未安装网络插件的问题(参照步骤14安装Calico后会解决)

13、Node 节点加入集群

参照上面步骤为 Node 节点安装 kubelet kubeadm kubectl

之后 Master 节点执行以下命令以查看 Node 节点加入集群的命令:

sudo kubeadm token create --print-join-command

在 Node 节点执行上面显示的命令(记得指定cri-socket):

sudo kubeadm join 192.168.31.9:6443 --token pm4bmp.5qu7nqswvav4kd6s --discovery-token-ca-cert-hash sha256:d35887853c98044495f91251a878376333870dd7b622161d18b172ebb2284e8d --cri-socket unix:///run/cri-dockerd.sock

看到以下内容表示 Node 节点成功加入集群:

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

之后在 Master 节点上查看加入集群的节点:

kubectl get nodes

ps:如果报couldn't get current server API group list: Get "http://localhost:8080/api?timeout=32s": dial tcp 127.0.0.1:8080: connect: connection refused的话,大概率是终端断连导致之前export的环境变量失效了,可以在/etc/environment文件中加入KUBECONFIG="/etc/kubernetes/admin.conf"以使环境变量永久生效

14、Master 节点安装 Calico 网络插件(踩坑比较多建议看完再动手)

现在跟随Calico官网安装教程的指引,为集群安装Calico网络插件:

kubectl create -f https://raw.githubusercontent.com/projectcalico/calico/v3.26.1/manifests/tigera-operator.yaml

报错2:

The connection to the server raw.githubusercontent.com was refused - did you specify the right host or port?

由于网络问题域名解析出错

排错2:

我们直接手动下载tigera-operator.yaml后ftp到 Master 节点的/root目录下,重试:

kubectl create -f /root/tigera-operator.yaml

继续,将custom-resources.yaml由https://raw.githubusercontent.com/projectcalico/calico/v3.26.1/manifests/custom-resources.yaml手动下载,内容如下:

# This section includes base Calico installation configuration.

# For more information, see: https://projectcalico.docs.tigera.io/master/reference/installation/api#operator.tigera.io/v1.Installation

apiVersion: operator.tigera.io/v1

kind: Installation

metadata:

name: default

spec:

# Configures Calico networking.

calicoNetwork:

# Note: The ipPools section cannot be modified post-install.

ipPools:

- blockSize: 26

cidr: 192.168.0.0/16

encapsulation: VXLANCrossSubnet

natOutgoing: Enabled

nodeSelector: all()

---

# This section configures the Calico API server.

# For more information, see: https://projectcalico.docs.tigera.io/master/reference/installation/api#operator.tigera.io/v1.APIServer

apiVersion: operator.tigera.io/v1

kind: APIServer

metadata:

name: default

spec: {

}

其中应修改cidr: 192.168.0.0/16属性为kubeadm配置文件中podSubnet: 10.244.0.0/16属性指定的ip段(10.244.0.0/16)

之后继续部署pod:

kubectl create -f /root/custom-resources.yaml

查看所有 Pod:

kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

calico-system calico-kube-controllers-67cb4686c7-dllhw 0/1 Pending 0 8m38s

calico-system calico-node-24zzc 0/1 Init:ImagePullBackOff 0 8m39s

calico-system calico-node-2s6gl 0/1 Init:0/2 0 8m39s

calico-system calico-typha-5d9d547f8-f4w48 0/1 ContainerCreating 0 8m40s

calico-system csi-node-driver-f8r58 0/2 ContainerCreating 0 8m38s

calico-system csi-node-driver-szt4w 0/2 ContainerCreating 0 8m39s

kube-system coredns-7bdc4cb885-mtpq4 0/1 Pending 0 64m

kube-system coredns-7bdc4cb885-pxwbc 0/1 Pending 0 64m

kube-system etcd-c92-00-master 1/1 Running 0 64m

kube-system kube-apiserver-c92-00-master 1/1 Running 0 64m

kube-system kube-controller-manager-c92-00-master 1/1 Running 0 64m

kube-system kube-proxy-6nzkm 1/1 Running 0 64m

kube-system kube-proxy-thdjg 1/1 Running 0 61m

kube-system kube-scheduler-c92-00-master 1/1 Running 0 64m

tigera-operator tigera-operator-5f4668786-pv8cw 1/1 Running 0 34m

报错3:Init:ImagePullBackOff

查看报错:

kubectl describe pod calico-node-24zzc --namespace calico-system

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 7m32s default-scheduler Successfully assigned calico-system/calico-node-24zzc to c92-00-master

Normal BackOff 3m52s kubelet Back-off pulling image "docker.io/calico/pod2daemon-flexvol:v3.26.1"

Warning Failed 3m52s kubelet Error: ImagePullBackOff

Normal Pulling 3m39s (x2 over 7m27s) kubelet Pulling image "docker.io/calico/pod2daemon-flexvol:v3.26.1"

Warning Failed 6s (x2 over 3m53s) kubelet Failed to pull image "docker.io/calico/pod2daemon-flexvol:v3.26.1": rpc error: code = Canceled desc = context canceled

Warning Failed 6s (x2 over 3m53s) kubelet Error: ErrImagePull

可以看到是由于网络问题 pull 不下来 image docker.io/calico/pod2daemon-flexvol:v3.26.1

之后同上查看其他calico-system命名空间下的Pods,其中calico-typha-5d9d547f8-f4w48提示无法拉取docker.io/calico/typha:v3.26.1

排错3:

可以看到calico版本为V3.26.1,前往https://raw.githubusercontent.com/projectcalico/calico/v3.26.1/manifests/calico.yaml查看所有image:属性后的内容;查看tigera-operator.yaml文件image:属性后的内容;

综上可以得知需要以下image:

docker.io/calico/cni:v3.26.1

docker.io/calico/node:v3.26.1

docker.io/calico/kube-controllers:v3.26.1

docker.io/calico/pod2daemon-flexvol:v3.26.1

docker.io/calico/typha:v3.26.1

docker.io/calico/apiserver:v3.26.1

quay.io/tigera/operator:v1.30.4

修改docker配置文件/etc/docker/daemon.json配置docker镜像站:

{

"exec-opts": ["native.cgroupdriver=systemd"],

"registry-mirrors": ["https://ynye4lmg.mirror.aliyuncs.com","https://hub-mirror.c.163.com","https://mirror.baidubce.com"]

}

systemctl restart docker

分别在 Master 和 Node 节点上手动pull镜像:

docker pull docker.io/calico/cni:v3.26.1

docker pull docker.io/calico/node:v3.26.1

docker pull docker.io/calico/kube-controllers:v3.26.1

docker pull docker.io/calico/pod2daemon-flexvol:v3.26.1

docker pull docker.io/calico/typha:v3.26.1

docker pull docker.io/calico/apiserver:v3.26.1

docker pull quay.io/tigera/operator:v1.30.4

回退部署:

kubectl delete -f customer-resources.yaml --grace-period=0

kubectl delete -f tigera-operator.yaml --grace-period=0

重试部署:

kubectl create -f /root/tigera-operator.yaml

kubectl create -f /root/custom-resources.yaml

ps:如果回退部署有问题,可以重置一下K8S,再用kubeadm重新初始化

sudo kubeadm reset -f --cri-socket unix:///run/cri-dockerd.sock

# 记得根据之前重置k8s的内容清理残余项

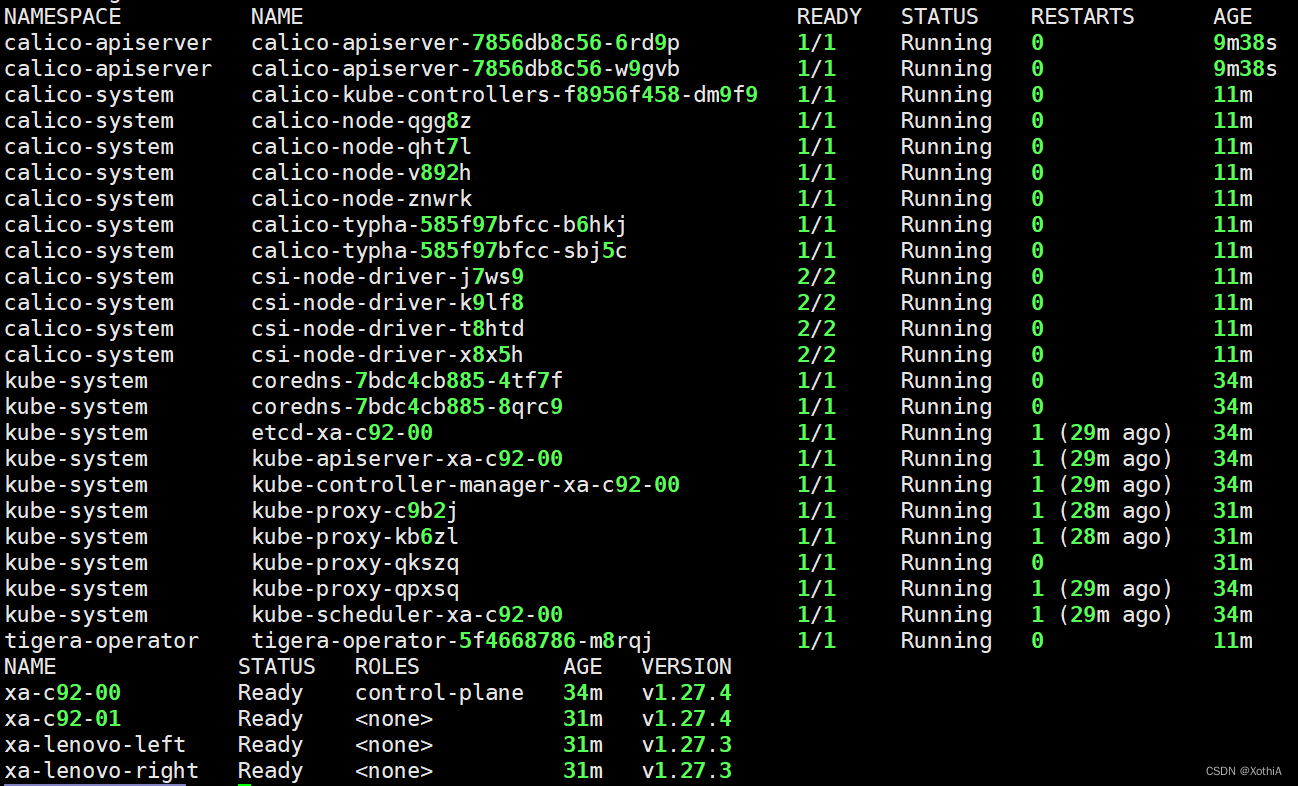

最后查看一下所有 pod 和 node:

kubectl get pods -A

kubectl get nodes

可以看到全部正常运行了