linux安装JDK及hadoop运行环境搭建

物联网

2023-09-13 18:05:39

阅读次数: 0

1.linux中安装jdk

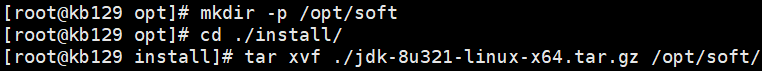

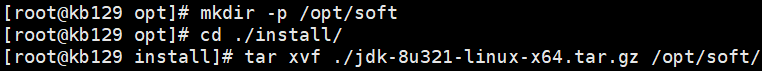

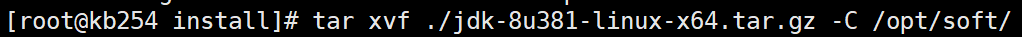

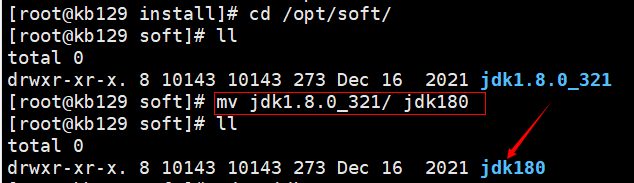

| (1)下载JDK至opt/install目录下,opt下创建目录soft,并解压至当前目录 tar xvf ./jdk-8u321-linux-x64.tar.gz -C /opt/soft/

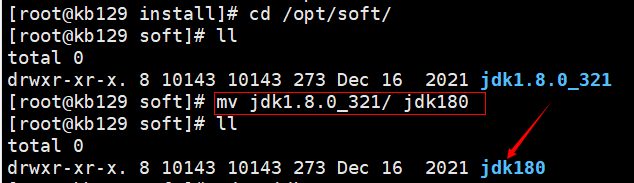

(2)改名

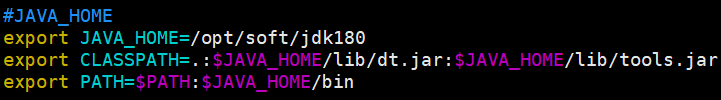

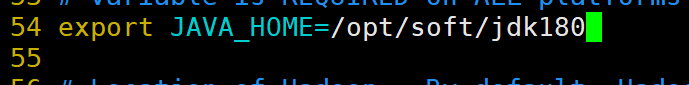

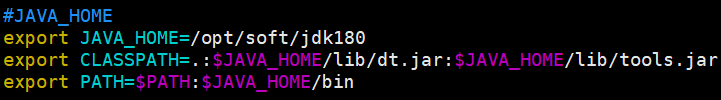

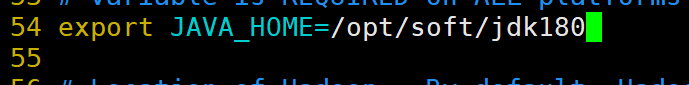

(3)配置环境变量:vim /etc/profile #JAVA_HOME export JAVA_HOME=/opt/soft/jdk180 export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar export PATH=$PATH:$JAVA_HOME/bin

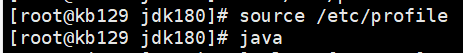

(4)更新资源并测试是否安装成功 source /opt/profile java

|

2.hadoop运行环境搭建

| 2.1 安装jDK:参上 |

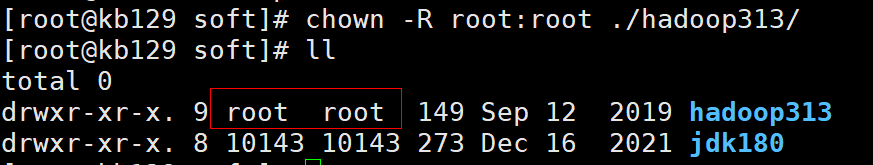

| 2.2 下载安装Hadoop 解压至soft目录下,改名为hadoop313

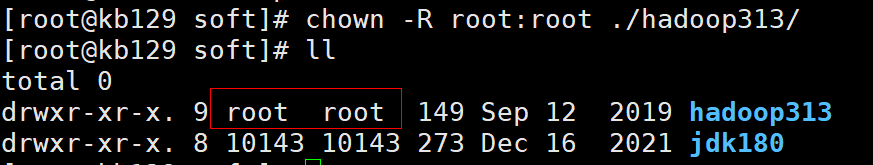

更改所属用户为root

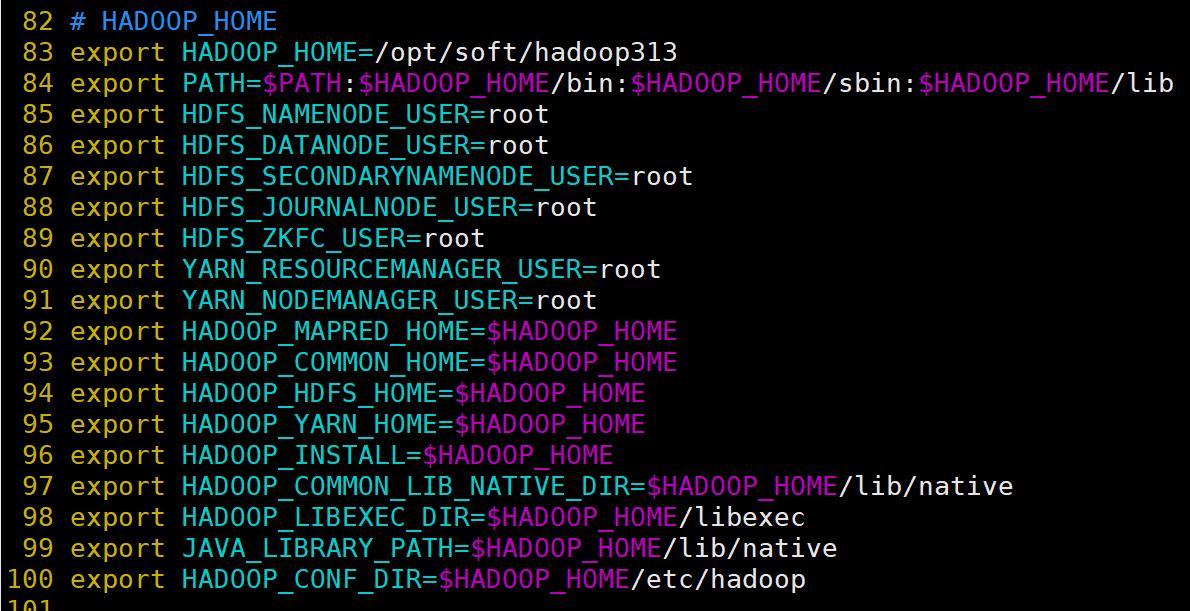

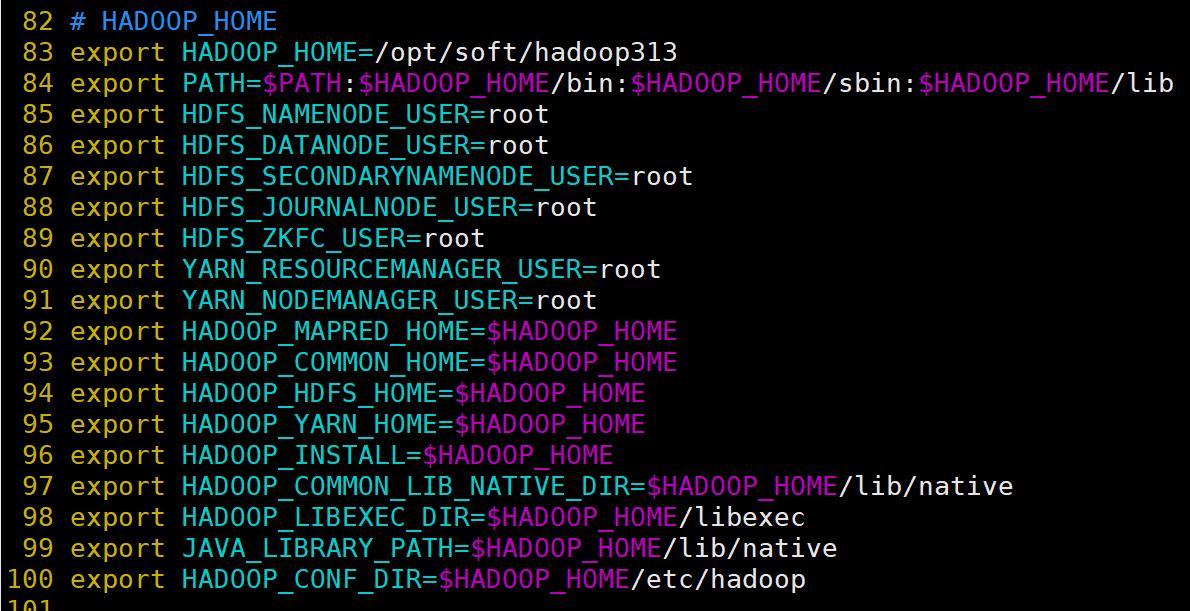

配置环境变量:vim /etc/profilre;配置完成后source /etc/profile # HADOOP_HOME

export HADOOP_HOME=/opt/soft/hadoop313

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$HADOOP_HOME/lib

export HDFS_NAMENODE_USER=root

export HDFS_DATANODE_USER=root

export HDFS_SECONDARYNAMENODE_USER=root

export HDFS_JOURNALNODE_USER=root

export HDFS_ZKFC_USER=root

export YARN_RESOURCEMANAGER_USER=root

export YARN_NODEMANAGER_USER=root

export HADOOP_MAPRED_HOME=$HADOOP_HOME

export HADOOP_COMMON_HOME=$HADOOP_HOME

export HADOOP_HDFS_HOME=$HADOOP_HOME

export HADOOP_YARN_HOME=$HADOOP_HOME

export HADOOP_INSTALL=$HADOOP_HOME

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HADOOP_LIBEXEC_DIR=$HADOOP_HOME/libexec

export JAVA_LIBRARY_PATH=$HADOOP_HOME/lib/native

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

创建数据目录data

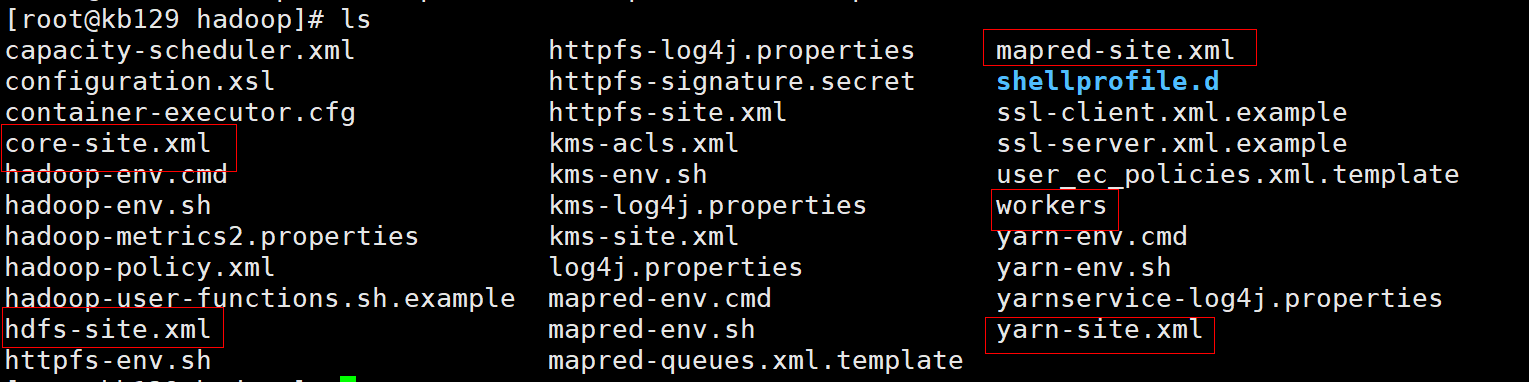

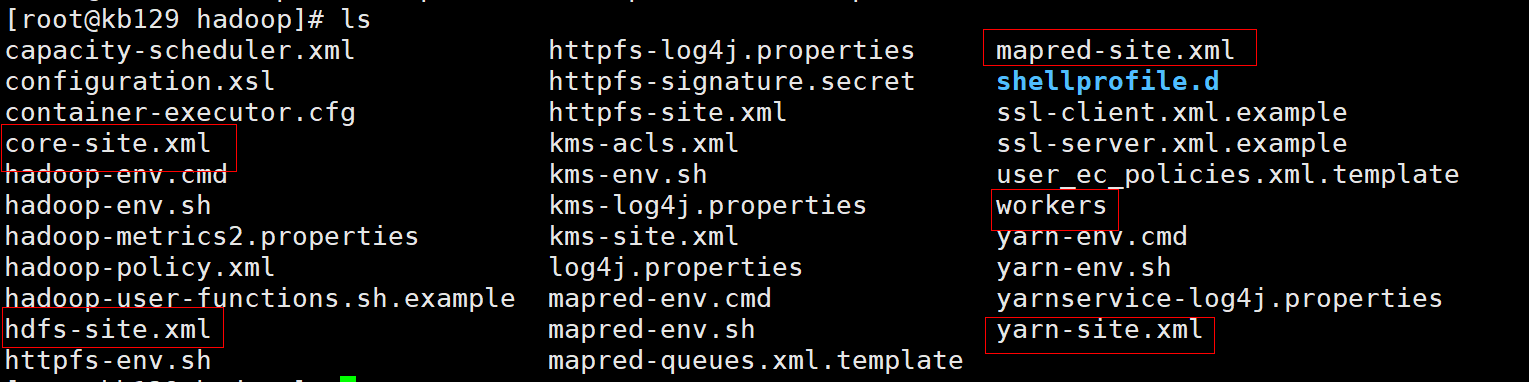

切换至hadoop目录,查看目录下文件,准备进行配置 cd /opt/soft/hadoop313/etc/hadoop

|

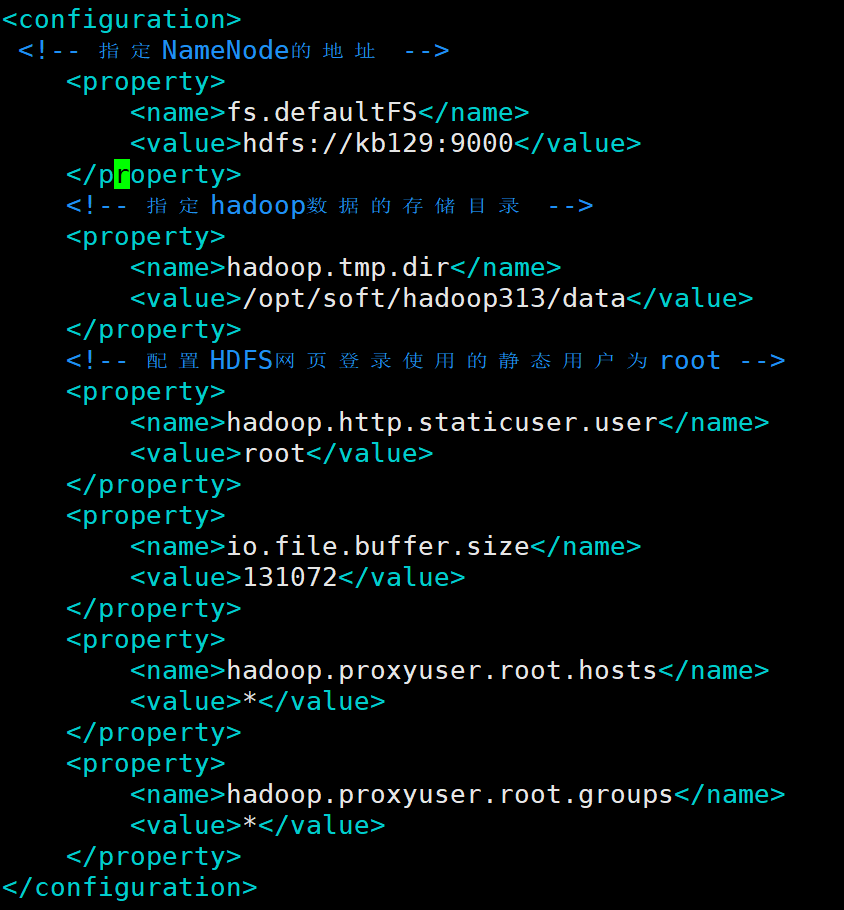

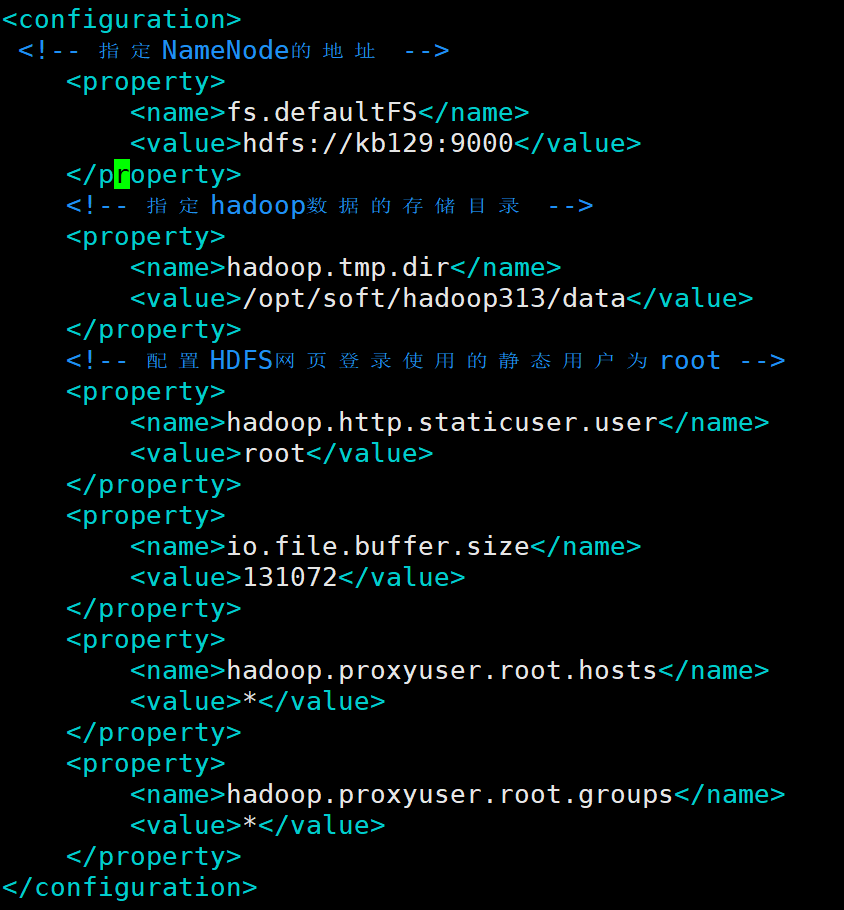

| 2.3 配置单机Hadoop (1)配置core-site.xml <configuration>

<!-- 指定NameNode的地址 -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://kb129:9000</value>

</property>

<!-- 指定hadoop数据的存储目录 -->

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/soft/hadoop313/data</value>

</property>

<!-- 配置HDFS网页登录使用的静态用户为root -->

<property>

<name>hadoop.http.staticuser.user</name>

<value>root</value>

</property>

<property>

<name>io.file.buffer.size</name>

<value>131072</value>

</property>

<property>

<name>hadoop.proxyuser.root.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.root.groups</name>

<value>*</value>

</property>

</configuration>

(2)配置hdfs-site.xml 1)编辑hadoop-enc.sh

2)开始配置hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>/opt/soft/hadoop313/data/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/opt/soft/hadoop313/data/dfs/data</value>

</property>

<property>

<name>dfs.permissions.enabled</name>

<value>false</value>

</property>

</configuration>

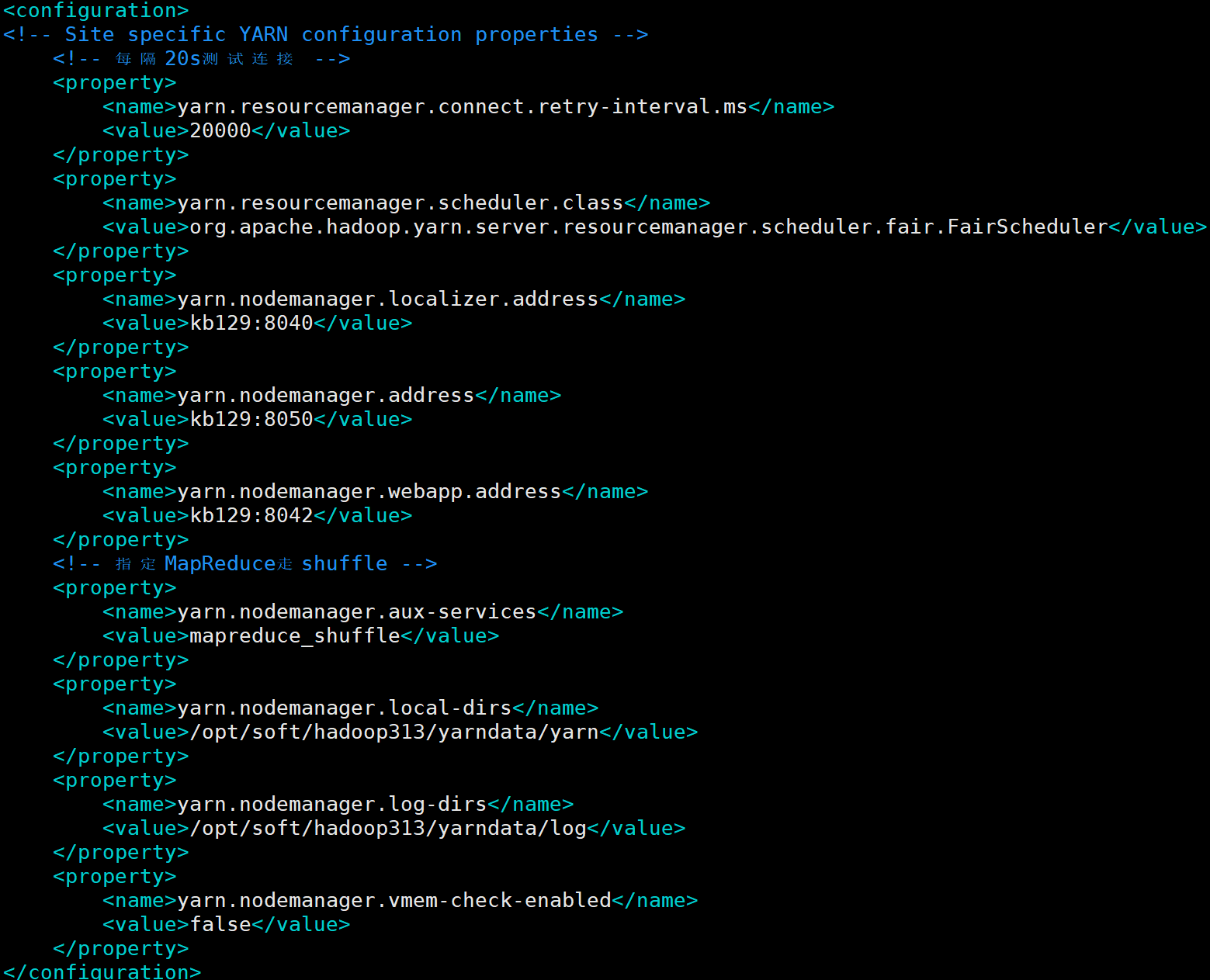

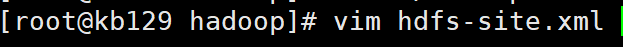

(3)配置yarn-site.xml <configuration>

<!-- Site specific YARN configuration properties -->

<!-- 每隔20s测试连接 -->

<property>

<name>yarn.resourcemanager.connect.retry-interval.ms</name>

<value>20000</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler</value>

</property>

<property>

<name>yarn.nodemanager.localizer.address</name>

<value>kb129:8040</value>

</property>

<property>

<name>yarn.nodemanager.address</name>

<value>kb129:8050</value>

</property>

<property>

<name>yarn.nodemanager.webapp.address</name>

<value>kb129:8042</value>

</property>

<!-- 指定MapReduce走shuffle -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.local-dirs</name>

<value>/opt/soft/hadoop313/yarndata/yarn</value>

</property>

<property>

<name>yarn.nodemanager.log-dirs</name>

<value>/opt/soft/hadoop313/yarndata/log</value>

</property>

<property>

<name>yarn.nodemanager.vmem-check-enabled</name>

<value>false</value>

</property>

</configuration>

(4)配置workers更改workers内容为kb129(主机名)

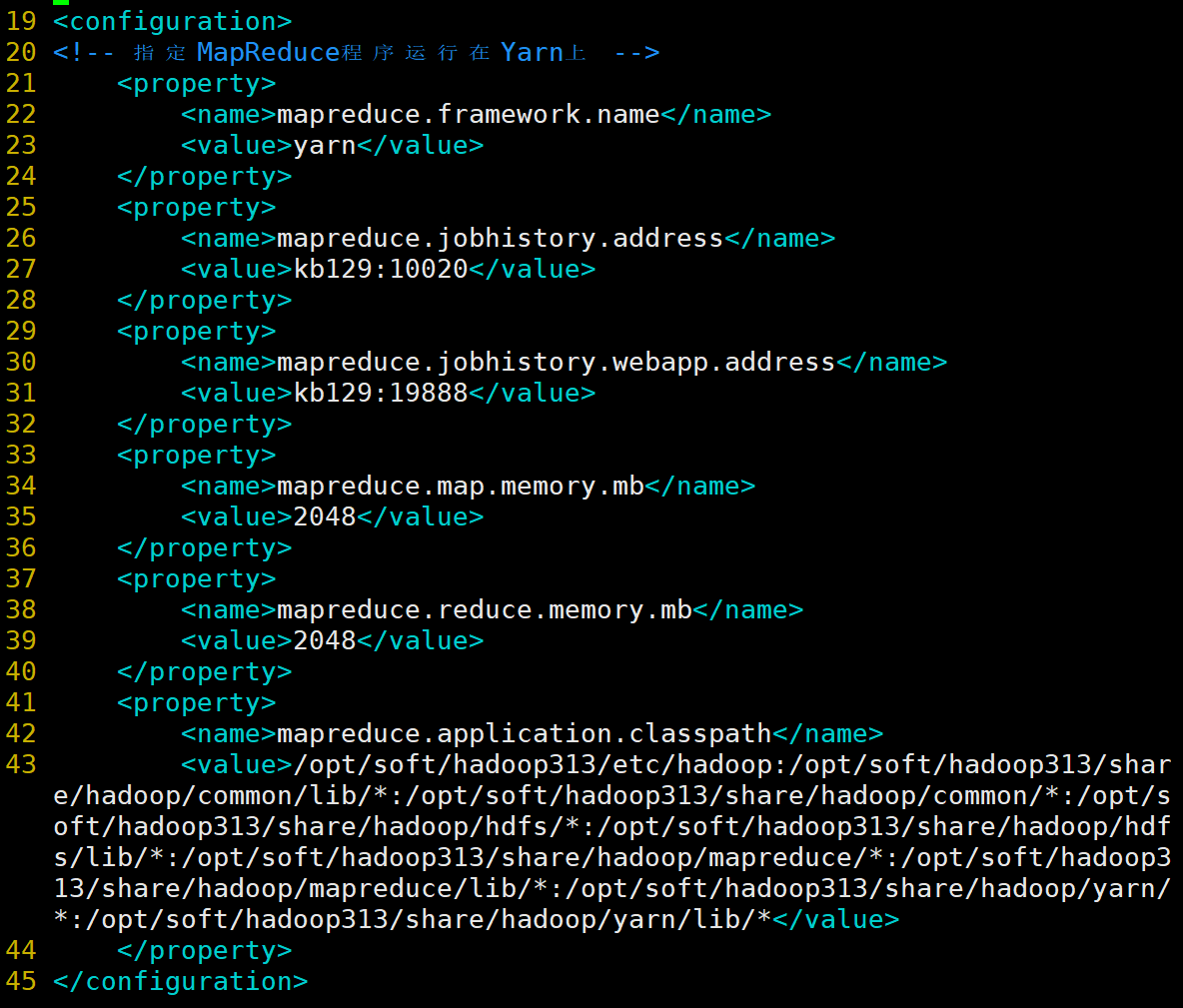

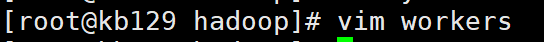

(5)配置mapred-site.xml <configuration>

<!-- 指定MapReduce程序运行在Yarn上 -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>kb129:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>kb129:19888</value>

</property>

<property>

<name>mapreduce.map.memory.mb</name>

<value>2048</value>

</property>

<property>

<name>mapreduce.reduce.memory.mb</name>

<value>2048</value>

</property>

<property>

<name>mapreduce.application.classpath</name>

<value>/opt/soft/hadoop313/etc/hadoop:/opt/soft/hadoop313/share/hadoop/common/lib/*:/opt/soft/hadoop313/share/hadoop/common/*:/opt/soft/hadoop313/share/hadoop/hdfs/*:/opt/soft/hadoop313/share/hadoop/hdfs/lib/*:/opt/soft/hadoop313/share/hadoop/mapreduce/*:/opt/soft/hadoop313/share/hadoop/mapreduce/lib/*:/opt/soft/hadoop313/share/hadoop/yarn/*:/opt/soft/hadoop313/share/hadoop/yarn/lib/*</value>

</property>

</configuration>

|

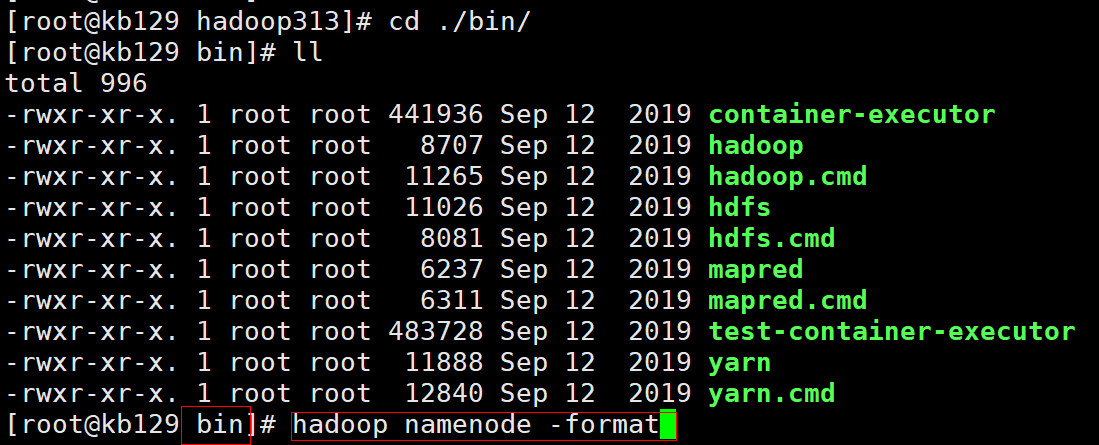

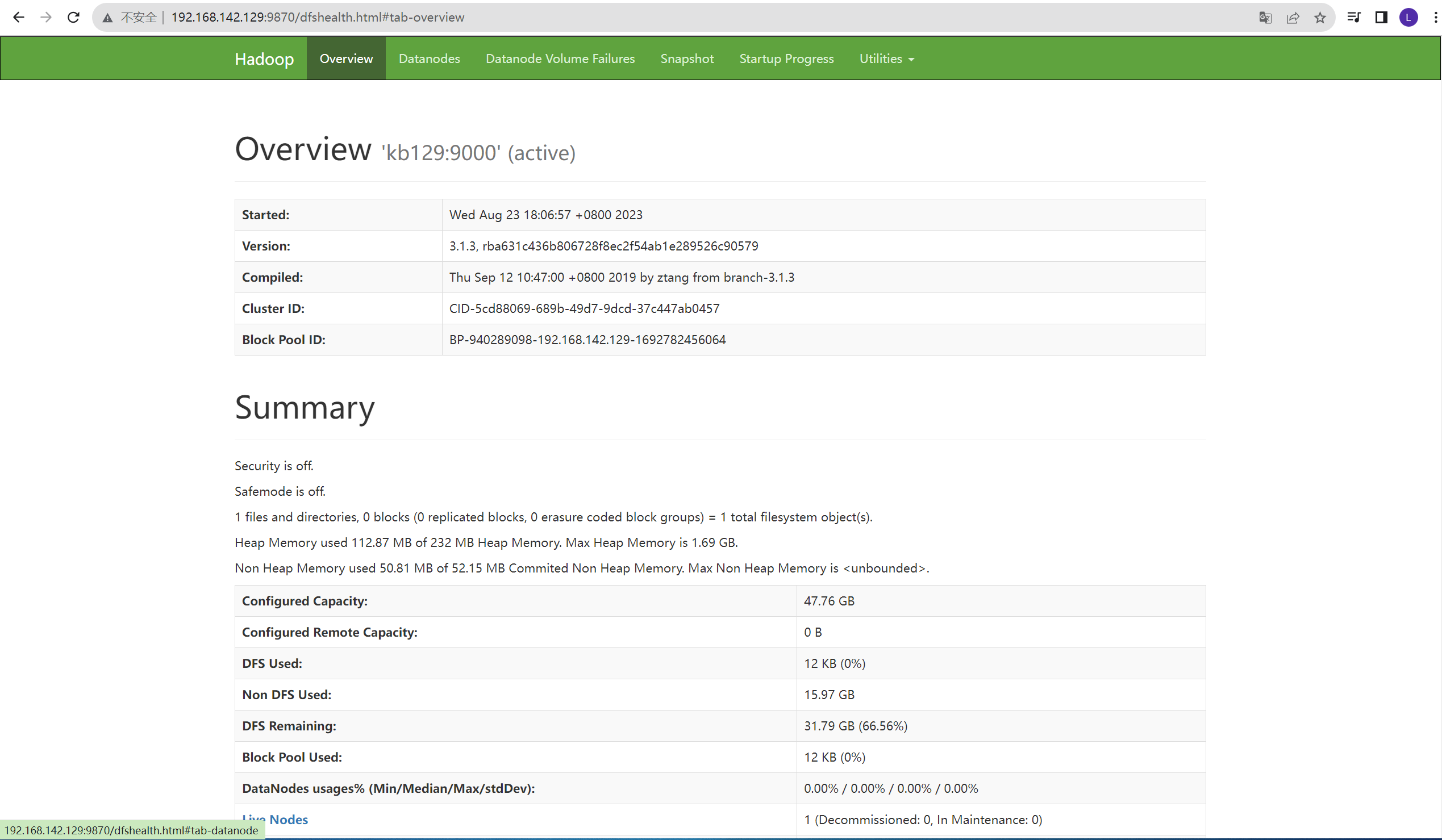

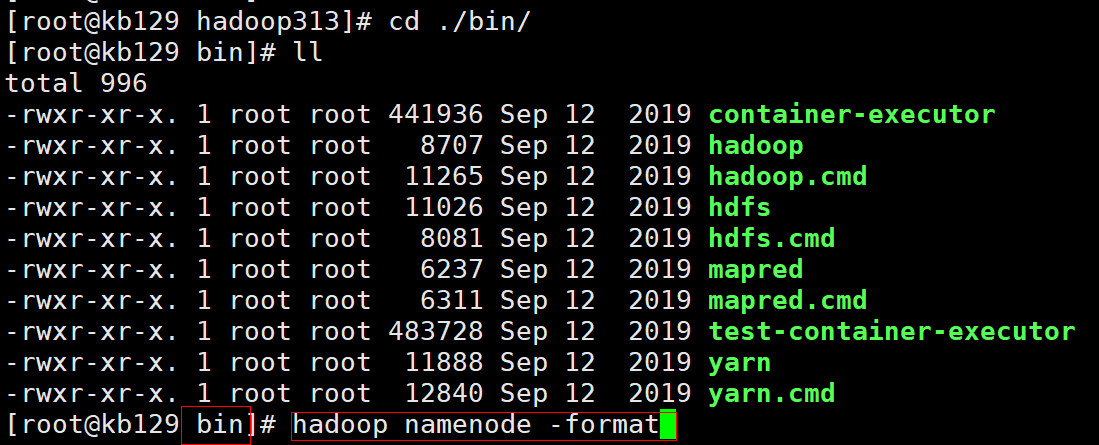

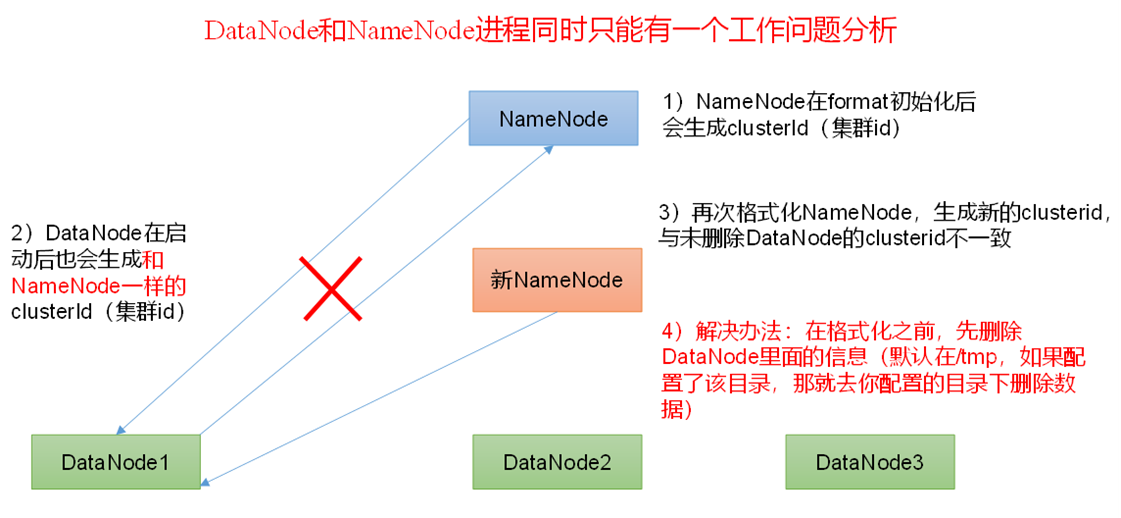

| 2.4 启动测试hadoop (1)bin目录下初始化集群hadoop namenode -format

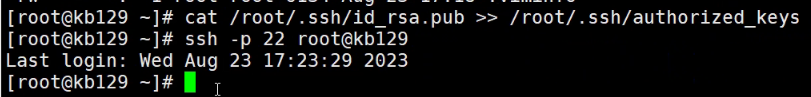

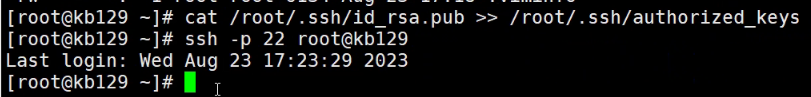

(2)设置免密登录 回到根目录下配置kb129免密登录:ssh-keygen -t rsa -P "" 将本地主机的公钥文件(~/.ssh/id_rsa.pub)拷贝到远程主机 kb128 的 root 用户的 .ssh/authorized_keys 文件中,通过 SSH 连接到远程主机时可以使用公钥进行身份验证:cat /root/.ssh/id_rsa.pub >> /root/.ssh/authorized_keys

将本地主机的公钥添加到远程主机的授权密钥列表中,以便实现通过 SSH 公钥身份验证来连接远程主机:ssh-copy-id -i ~/.ssh/id_rsa.pub -p22 root@kb128 (3)启动/关闭、查看 [root@kb129 hadoop]# start-all.sh [root@kb129 hadoop]# stop-all.sh [root@kb129 hadoop]# jps 15089 NodeManager 16241 Jps 14616 DataNode 13801 ResourceManager 14476 NameNode 16110 SecondaryNameNode

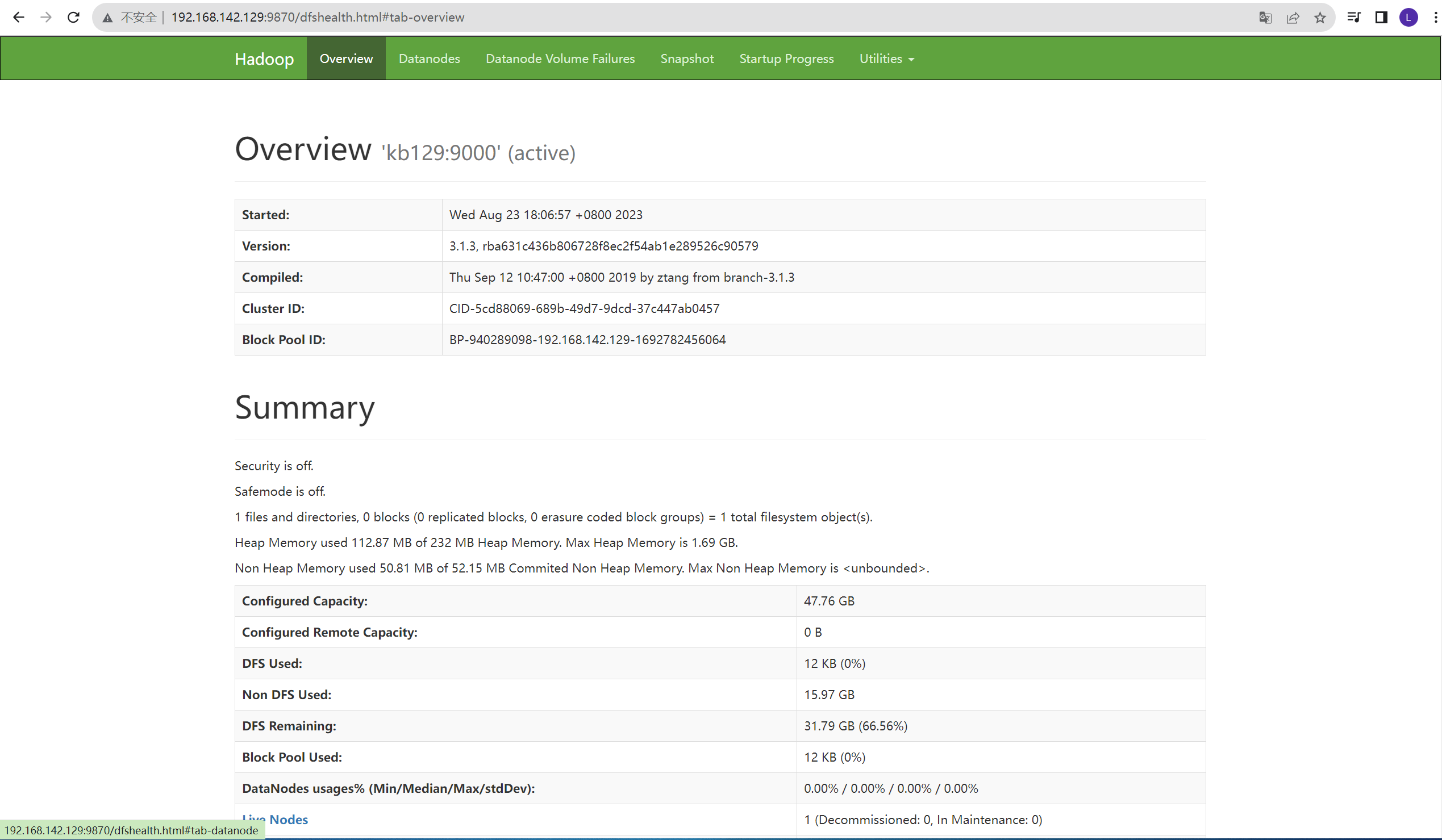

(4)网页测试:浏览器中输入网址:http://192.168.142.129:9870/

|

转载自blog.csdn.net/weixin_63713552/article/details/132476030