[toc]

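1. Installation requirements

Before you begin, the machines for the Kubernetes cluster must meet the following requirements:

- One or more machines running CentOS 7.x (x86_64)

- Hardware: at least 2 GB of RAM, at least 2 CPUs, and at least 30 GB of disk

- Full network connectivity between all machines in the cluster

- Internet access, which is needed to pull images

- Swap disabled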
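The hardware and swap requirements can be checked on each machine before going further. The following is a minimal sketch, assuming a Linux host that provides /proc/meminfo and nproc; the helper name meets_minimum and the 1.7 GB memory threshold (MemTotal is usually a little below the nominal RAM size) are illustrative choices, not part of the original guide.

```shell
#!/bin/sh
# Preflight sketch: report CPU count, RAM, and swap against the stated minimums.

# meets_minimum VALUE MINIMUM: succeeds when VALUE >= MINIMUM (hypothetical helper).
meets_minimum() {
  [ "$1" -ge "$2" ]
}

cpus=$(nproc 2>/dev/null)
mem_kb=$(awk '/^MemTotal:/ {print $2}' /proc/meminfo 2>/dev/null)
swap_kb=$(awk '/^SwapTotal:/ {print $2}' /proc/meminfo 2>/dev/null)

# At least 2 CPUs.
if meets_minimum "${cpus:-0}" 2; then
  echo "CPU: ok (${cpus})"
else
  echo "CPU: too few (${cpus:-unknown})"
fi

# Roughly 2 GB of RAM; 1700000 kB is an assumed tolerance, not an official figure.
if meets_minimum "${mem_kb:-0}" 1700000; then
  echo "RAM: ok (${mem_kb} kB)"
else
  echo "RAM: too small (${mem_kb:-unknown} kB)"
fi

# Swap must be disabled, so SwapTotal should be 0.
if [ "${swap_kb:-1}" -eq 0 ]; then
  echo "swap: off"
else
  echo "swap: still enabled (${swap_kb:-unknown} kB)"
fi
```

The script only reports; it changes nothing on the machine.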

2. Prepare the environment

| Role | IP |
|---|---|
| k8s-master | 192.168.50.114 |
| k8s-node1 | 192.168.50.115 |
| k8s-node2 | 192.168.50.116 |
| k8s-node3 | 192.168.50.120 |

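# Disable the firewall:

systemctl stop firewalld

systemctl disable firewalld

# Disable SELinux:

sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config # permanent (takes effect after a reboot)

setenforce 0 # temporary

# Disable swap:

# Temporarily

swapoff -a

# Permanently (comments out every fstab line that mentions swap)

sed -ri 's/.*swap.*/#&/' /etc/fstab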
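Running the sed command a second time prefixes another # to lines that are already commented out. A variant that can be re-run safely might look like the sketch below; comment_out_swap is a hypothetical helper name, and the pattern assumes swap entries carry the word swap as a whitespace-separated field, as in a typical /etc/fstab.

```shell
#!/bin/sh
# comment_out_swap FILE: comment out active swap entries in an fstab-style file.
# Lines that already start with # are skipped, so the function is idempotent.
# Relies on GNU sed (-i and -E), as found on CentOS 7.
comment_out_swap() {
  sed -i -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$1"
}
```

On a node it would be called as comment_out_swap /etc/fstab, ideally after taking a backup copy of the file.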

# Set the hostname:

hostnamectl set-hostname <hostname>

# Add hosts entries on the master:

cat >> /etc/hosts << EOF

192.168.50.114 k8s-master

192.168.50.115 k8s-node1

192.168.50.116 k8s-node2

192.168.50.120 k8s-node3

EOF
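Because cat >> appends unconditionally, running the block twice leaves duplicate entries in /etc/hosts. A small guard avoids that; the sketch below uses a hypothetical helper, add_host_entry, and treats a name as present when it appears as a whole whitespace-delimited word.

```shell
#!/bin/sh
# add_host_entry FILE IP NAME: append "IP NAME" unless NAME is already mapped in FILE.
add_host_entry() {
  file=$1
  ip=$2
  name=$3
  # Match NAME only as a complete field (preceded by whitespace, followed by whitespace or end of line).
  if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$file"; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}
```

For example, add_host_entry /etc/hosts 192.168.50.114 k8s-master can be repeated safely for each of the four machines.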

# Pass bridged IPv4 traffic to the iptables chains:

# The net.bridge.* keys only exist once the br_netfilter module is loaded

modprobe br_netfilter

# Note: net.ipv4.tcp_tw_recycle was removed in Linux 4.12; delete that line once the kernel has been upgraded, otherwise sysctl --system reports an unknown key

cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables=1

net.bridge.bridge-nf-call-iptables=1

net.ipv4.ip_forward=1

net.ipv4.tcp_tw_recycle=0

vm.swappiness=0

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_instances=8192

fs.inotify.max_user_watches=1048576

fs.file-max=52706963

fs.nr_open=52706963

net.ipv6.conf.all.disable_ipv6=1

net.netfilter.nf_conntrack_max=2310720

EOF

sysctl --system
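When a sysctl file is assembled from several sources, the same key can end up listed more than once with different spacing, and the last occurrence silently wins. The sketch below reports such keys; duplicate_sysctl_keys is a hypothetical helper, and it assumes the usual key = value syntax with # or ; comments.

```shell
#!/bin/sh
# duplicate_sysctl_keys FILE: print every key that is assigned more than once.
duplicate_sysctl_keys() {
  awk -F= '
    /^[[:space:]]*(#|;|$)/ { next }      # skip comments and blank lines
    {
      key = $1
      gsub(/[[:space:]]/, "", key)       # normalize spacing around the key
      if (++seen[key] == 2) print key    # report each duplicated key once
    }
  ' "$1"
}
```

Running duplicate_sysctl_keys /etc/sysctl.d/k8s.conf should print nothing for a clean file.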

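# Synchronize the time:

yum install ntpdate -y

ntpdate time.windows.com

# Set the time zone

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

# Set the locale (run as root; sudo would not apply to the >> redirection anyway)

echo 'LANG="en_US.UTF-8"' >> /etc/profile; source /etc/profile

# Upgrade the kernel (assumes the kernel-lt RPM has already been downloaded to the current directory)

cp /etc/default/grub /etc/default/grub-bak

rpm -ivh kernel-lt-5.4.186-1.el7.elrepo.x86_64.rpm

# Boot the first menu entry (the newly installed kernel) by default and regenerate the GRUB configuration

grub2-set-default 0

grub2-mkconfig -o /boot/grub2/grub.cfg

grub2-editenv list

yum makecache

# List the boot menu entries with their indexes

awk -F\' '$1=="menuentry " {print i++ " : " $2}' /etc/grub2.cfg

reboot

# After the reboot, confirm the running kernel version

uname -r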

# Install the IPVS modules

yum install ipset ipvsadm -y

modprobe br_netfilter

#### Kernel 3.10 (use this block only if the kernel was not upgraded) ####

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

#### Kernel 5.4 (use this block after the kernel upgrade) ####

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack

EOF

# On newer kernels (4.19 and later), nf_conntrack_ipv4 has been replaced by nf_conntrack

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack
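Instead of choosing between the two ipvs.modules variants by hand, the conntrack module name can be derived from the running kernel. The sketch below assumes the merge of nf_conntrack_ipv4 into nf_conntrack happened in kernel 4.19; conntrack_module_for is a hypothetical helper name.

```shell
#!/bin/sh
# conntrack_module_for RELEASE: print the conntrack module name for a kernel
# release string such as the output of `uname -r`.
conntrack_module_for() {
  release=$1
  major=${release%%.*}          # "5" from "5.4.186-1.el7.elrepo.x86_64"
  rest=${release#*.}
  minor=${rest%%.*}             # "4" from "4.186-1.el7.elrepo.x86_64"
  if [ "$major" -gt 4 ] || { [ "$major" -eq 4 ] && [ "$minor" -ge 19 ]; }; then
    echo nf_conntrack
  else
    echo nf_conntrack_ipv4
  fi
}

echo "conntrack module for this kernel: $(conntrack_module_for "$(uname -r)")"
```

The result can then be used in place of the hard-coded name, for example modprobe -- "$(conntrack_module_for "$(uname -r)")".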

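3. Install Docker, kubeadm, and kubelet (all nodes)

With Kubernetes 1.23, kubelet can still talk to Docker through the built-in dockershim (removed in 1.24), and kubeadm uses Docker when it is installed, so install Docker first.

3.1 Install Docker

# Remove any existing Docker packages

sudo yum -y remove docker \
  docker-client \
  docker-client-latest \
  docker-common \
  docker-latest \
  docker-latest-logrotate \
  docker-logrotate \
  docker-selinux \
  docker-engine-selinux \
  docker-ce-cli \
  docker-engine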

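# Check whether any Docker packages remain

rpm -qa|grep docker

# If any do, remove them with yum -y remove XXX, for example:

#yum remove docker-ce-cli

# Install Docker (pinned to 20.10.10 here; the commented-out line installs the latest version instead)

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

#yum -y install docker-ce

yum -y install docker-ce-20.10.10-3.el7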

systemctl daemon-reload

systemctl start docker

systemctl enable docker

docker ps

# Check the current Docker data directory

docker info |grep "Docker Root Dir"

docker --version

# Configure the Harbor registry address

# vim /lib/systemd/system/docker.service

# Change the ExecStart line to:

ExecStart=/usr/bin/dockerd -H fd:// --insecure-registry https://docker.gayj.cn:444 --containerd=/run/containerd/containerd.sock

# Configure a registry mirror and the data directory:

mkdir -p /data/docker

cat >/etc/docker/daemon.json <<EOF

{

"data-root": "/data/docker" ,

"exec-opts": ["native.cgroupdriver=systemd"],

"registry-mirrors": ["https://almtd3fa.mirror.aliyuncs.com"]

}

EOF

# Remove the previous data directory (this deletes all existing images and containers)

rm -rvf /var/lib/docker

systemctl daemon-reload

systemctl restart docker

docker ps

# Confirm that the data directory has changed

docker info |grep "Docker Root Dir"

docker --version

3.2 Add the Alibaba Cloud YUM repository

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

3.3 Install kubeadm, kubelet, and kubectl

Because new versions are released frequently, pin the version explicitly:

yum install -y kubelet-1.23.0 kubeadm-1.23.0 kubectl-1.23.0

systemctl enable kubelet

[Changing the data directory]

- https://blog.csdn.net/qq_39826987/article/details/126473129?spm=1001.2014.3001.5502

4. Deploy the Kubernetes master

https://kubernetes.io/zh/docs/reference/setup-tools/kubeadm/kubeadm-init/#config-file

https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/create-cluster-kubeadm/#initializing-your-control-plane-node

Run on 192.168.50.114 (the master).

kubeadm init \
  --apiserver-advertise-address=192.168.50.114 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.23.0 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16

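# --ignore-preflight-errors=all would ignore preflight errors; it is deliberately left out here.

- --apiserver-advertise-address: the address the API server advertises to the rest of the cluster

- --image-repository: the default image registry, k8s.gcr.io, is not reachable from mainland China, so the Alibaba Cloud mirror is used instead

- --kubernetes-version: the Kubernetes version; must match the packages installed above

- --service-cidr: the cluster-internal virtual network used for Service IPs, the stable entry points to Pods

- --pod-network-cidr: the Pod network; must match the CIDR in the CNI manifest deployed below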
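The service and Pod CIDRs must not overlap each other (or the node network). The check can be done with plain shell arithmetic, as in the sketch below; the helper names ip_to_int, cidr_bounds, and cidrs_overlap are hypothetical, and the code assumes well-formed IPv4 CIDRs without leading zeros in the octets and a shell with 64-bit arithmetic.

```shell
#!/bin/sh
# ip_to_int A.B.C.D: print the address as a 32-bit integer.
# The body runs in a subshell so the IFS change stays local.
ip_to_int() (
  IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# cidr_bounds NET/PREFIX: print the first and last address of the range as integers.
cidr_bounds() (
  addr=$(ip_to_int "${1%/*}")
  prefix=${1#*/}
  mask=$(( (4294967295 << (32 - prefix)) & 4294967295 ))
  first=$(( addr & mask ))
  echo "$first $(( first + 4294967295 - mask ))"
)

# cidrs_overlap A B: succeeds when the two ranges share at least one address.
cidrs_overlap() (
  set -- $(cidr_bounds "$1") $(cidr_bounds "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
)

if cidrs_overlap 10.96.0.0/12 10.244.0.0/16; then
  echo "service and pod CIDRs overlap"
else
  echo "service and pod CIDRs are disjoint"
fi
```

10.96.0.0/12 covers 10.96.0.0 through 10.111.255.255, so the two ranges used in this guide do not collide.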

Alternatively, bootstrap from a configuration file:

$ vi kubeadm.conf

apiVersion: kubeadm.k8s.io/v1beta2

kind: ClusterConfiguration

kubernetesVersion: v1.23.0

imageRepository: registry.aliyuncs.com/google_containers

networking:

  podSubnet: 10.244.0.0/16

  serviceSubnet: 10.96.0.0/12

$ kubeadm init --config kubeadm.conf --ignore-preflight-errors=all

Copy the kubeconfig file that kubectl uses to authenticate to the cluster into its default location:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

localhost.localdomain NotReady control-plane,master 20s v1.20.0

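5. Join the Kubernetes nodes

Run on 192.168.50.115/116/120 (the worker nodes).

To add a node to the cluster, run the kubeadm join command printed by kubeadm init (the token and hash below are examples; use the values from your own output):

kubeadm join 192.168.50.114:6443 --token 7gqt13.kncw9hg5085iwclx \
  --discovery-token-ca-cert-hash sha256:66fbfcf18649a5841474c2dc4b9ff90c02fc05de0798ed690e1754437be35a01

The default token is valid for 24 hours. Once it expires it can no longer be used and a new one must be created. The following command creates a token and prints the complete join command: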

kubeadm token create --print-join-command

https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm-join/
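If only the CA certificate is at hand, the value for --discovery-token-ca-cert-hash can be recomputed from it. The pipeline below follows the one given in the kubeadm documentation, wrapped in a hypothetical helper, ca_cert_hash; it assumes an RSA CA key (the kubeadm default) and that openssl is installed.

```shell
#!/bin/sh
# ca_cert_hash CERT: print the SHA-256 hash of the certificate's public key,
# i.e. the hex string that follows "sha256:" in the join command.
ca_cert_hash() {
  openssl x509 -pubkey -noout -in "$1" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}
```

On the master this would be called as ca_cert_hash /etc/kubernetes/pki/ca.crt.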

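6. Deploy the container network (CNI)

1) Official documentation

https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/create-cluster-kubeadm/#pod-network

Note: deploy only one CNI plugin; Calico is recommended.

Calico is a pure layer-3 data center networking solution that supports a wide range of platforms, including Kubernetes and OpenStack.

On each compute node, Calico uses the Linux kernel to implement an efficient virtual router (vRouter) that forwards traffic, and each vRouter uses BGP to advertise the routes of the workloads running on it to the rest of the Calico network.

In addition, the Calico project implements Kubernetes network policy, providing ACL functionality.

[Official documentation]

- https://docs.projectcalico.org/getting-started/kubernetes/quickstart

[Git repository]

- https://github.com/projectcalico/calico/tree/v3.24.0

[Deployment guide]

- https://docs.tigera.io/archive/v3.14/getting-started/kubernetes/quickstart

[Notes] https://blog.csdn.net/ma_jiang/article/details/124962352

2) Download and modify the manifest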

# --no-check-certificate

wget -c https://docs.projectcalico.org/v3.20/manifests/calico.yaml --no-check-certificate

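After downloading, edit the Pod network defined in the manifest (CALICO_IPV4POOL_CIDR) so that it matches the --pod-network-cidr passed to kubeadm init. Apply the manifest only after all edits are done.

# Uncomment the entry and change it to:

- name: CALICO_IPV4POOL_CIDR

  value: "10.244.0.0/16"

# Further down in the same env list, locate these lines:

key: calico_backend

# Cluster type to identify the deployment type

- name: CLUSTER_TYPE

  value: "k8s,bgp"

# Add the following below them:

- name: IP_AUTODETECTION_METHOD

  value: "interface=ens192"

# ens192 is the name of the local network interface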
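The CIDR edit can also be scripted. The sketch below assumes that the downloaded manifest carries the entry commented out as "# - name: CALICO_IPV4POOL_CIDR" followed by "#   value: "192.168.0.0/16"", which should be verified against the file actually downloaded; set_calico_pool_cidr is a hypothetical helper name.

```shell
#!/bin/sh
# set_calico_pool_cidr FILE CIDR: uncomment CALICO_IPV4POOL_CIDR and set its value.
# Only acts on the commented-out form, so an already edited file is left as it is.
# Relies on GNU sed (-i, -E, and the n command inside a block).
set_calico_pool_cidr() {
  sed -i -E "/# - name: CALICO_IPV4POOL_CIDR/{
    s|# ||
    n
    s|# ||
    s|value: \"[^\"]*\"|value: \"$2\"|
  }" "$1"
}
```

For this guide the call would be set_calico_pool_cidr calico.yaml 10.244.0.0/16, followed by a quick grep -A1 CALICO_IPV4POOL_CIDR calico.yaml to confirm the result.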

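3) Switch the mode to BGP

# Set CALICO_IPV4POOL_IPIP to Never. This only works on a first deployment: if Calico has already been deployed in IPIP mode, changing the value has no effect unless Calico is deleted, the manifest is edited, and Calico is redeployed.

# Switching from IPIP to plain BGP removes the encapsulation overhead and improves throughput, but Pod traffic then only works between nodes that reach each other directly at layer 2 (or across a network that routes the Pod CIDR); nodes in different subnets otherwise cannot communicate.

# Default in the manifest:

- name: CALICO_IPV4POOL_IPIP

  value: "Always"

# Change it to:

- name: CALICO_IPV4POOL_IPIP

  value: "Never"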

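[Warning] Warning: policy/v1beta1 PodDisruptionBudget is deprecated in v1.21+, unavailable in v1.25+; use policy/v1 PodDisruptionBudget

[Cause] The manifest uses a deprecated API version and needs a small change.

Change policy/v1beta1 to policy/v1 for the PodDisruptionBudget. Any remaining rbac.authorization.k8s.io/v1beta1 entries should likewise become rbac.authorization.k8s.io/v1.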

4) Pull the images

# quay.io is a public image registry

docker pull quay.io/calico/cni:v3.20.6

docker tag quay.io/calico/cni:v3.20.6 calico/cni:v3.20.6

docker pull quay.io/calico/kube-controllers:v3.20.6

docker tag quay.io/calico/kube-controllers:v3.20.6 calico/kube-controllers:v3.20.6

docker pull quay.io/calico/node:v3.20.6

docker tag quay.io/calico/node:v3.20.6 calico/node:v3.20.6

docker pull quay.io/calico/pod2daemon-flexvol:v3.20.6

docker tag quay.io/calico/pod2daemon-flexvol:v3.20.6 calico/pod2daemon-flexvol:v3.20.6

docker pull quay.io/calico/typha:v3.20.6

docker tag quay.io/calico/typha:v3.20.6 calico/typha:v3.20.6
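Because the same tag is repeated across ten commands, a mismatch is easy to introduce. Generating the commands from a single tag avoids that; the sketch below only prints the commands so they can be reviewed first, and calico_mirror_commands is a hypothetical helper name.

```shell
#!/bin/sh
# calico_mirror_commands TAG: print the docker pull/tag commands for one Calico release.
calico_mirror_commands() {
  tag=$1
  for image in cni kube-controllers node pod2daemon-flexvol typha; do
    echo "docker pull quay.io/calico/${image}:${tag}"
    echo "docker tag quay.io/calico/${image}:${tag} calico/${image}:${tag}"
  done
}

calico_mirror_commands v3.20.6
```

Once the output looks right, it can be executed with calico_mirror_commands v3.20.6 | sh on every node.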

# Apply the manifest

kubectl apply -f calico.yaml

kubectl get pods -n kube-system

5) Switch kube-proxy to IPVS mode

# Change the proxy mode to IPVS

[root@k8s-master ~]# kubectl edit -n kube-system cm kube-proxy

Find the line mode: ""

and change it to mode: "ipvs"

Save and exit with :wq
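The same change can be made without an interactive editor by filtering the ConfigMap through sed. The sketch below assumes the ConfigMap still contains the default empty value, mode: ""; set_proxy_mode_ipvs is a hypothetical helper name.

```shell
#!/bin/sh
# set_proxy_mode_ipvs: read a kube-proxy ConfigMap (YAML) on stdin and write it
# to stdout with an empty `mode: ""` replaced by `mode: "ipvs"`.
# Keys that merely end in "mode", such as detectLocalMode, are not touched.
set_proxy_mode_ipvs() {
  sed -E 's/^([[:space:]]*mode:)[[:space:]]*""[[:space:]]*$/\1 "ipvs"/'
}

# Intended use on the master (not run here):
#   kubectl -n kube-system get configmap kube-proxy -o yaml \
#     | set_proxy_mode_ipvs \
#     | kubectl apply -f -
```

The kube-proxy Pods still have to be recreated afterwards for the new mode to take effect.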

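# List the kube-proxy Pods in the kube-system namespace and delete them. Kubernetes recreates them automatically, and the new Pods use the IPVS mode configured above.

kubectl get pod -n kube-system |grep kube-proxy |awk '{system("kubectl delete pod "$1" -n kube-system")}'

# Flush the firewall rules

iptables -t filter -F; iptables -t filter -X; iptables -t nat -F; iptables -t nat -X

# Check the logs to confirm that IPVS is in use; in the commands below, replace the Pod name with one from your own cluster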

[root@k8s-master ~]# kubectl get pod -n kube-system | grep kube-proxy

kube-proxy-2bvgz 1/1 Running 0 34s

kube-proxy-hkctn 1/1 Running 0 35s

kube-proxy-pjf5j 1/1 Running 0 38s

kube-proxy-t5qbc 1/1 Running 0 36s

[root@k8s-master ~]# kubectl logs -n kube-system kube-proxy-pjf5j

I0303 04:32:34.613408 1 node.go:163] Successfully retrieved node IP: 192.168.50.116

I0303 04:32:34.613505 1 server_others.go:138] "Detected node IP" address="192.168.50.116"

I0303 04:32:34.656137 1 server_others.go:269] "Using ipvs Proxier"

I0303 04:32:34.656170 1 server_others.go:271] "Creating dualStackProxier for ipvs"

# Use ipvsadm to confirm that IPVS rules are in place

[root@k8s-master ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.17.0.1:32684 rr

-> 10.244.36.65:80 Masq 1 0 0

TCP 192.168.50.114:32684 rr

-> 10.244.36.65:80 Masq 1 0 0

TCP 10.96.0.1:443 rr

-> 192.168.50.114:6443 Masq 1 0 0

TCP 10.96.0.10:53 rr

-> 10.244.36.64:53 Masq 1 0 0

-> 10.244.169.128:53 Masq 1 0 0

TCP 10.96.0.10:9153 rr

-> 10.244.36.64:9153 Masq 1 0 0

-> 10.244.169.128:9153 Masq 1 0 0

TCP 10.105.5.170:80 rr

-> 10.244.36.65:80 Masq 1 0 0

UDP 10.96.0.10:53 rr

-> 10.244.36.64:53 Masq 1 0 0

-> 10.244.169.128:53 Masq 1 0 0

7. Test the Kubernetes cluster

- Verify that Pods run

- Verify Pod network connectivity

- Verify DNS resolution

Create a Pod in the cluster and verify that it runs normally:

kubectl create deployment nginx --image=nginx

kubectl expose deployment nginx --port=80 --type=NodePort

kubectl get pod,svc

Access it at http://NodeIP:Port, where Port is the node port shown in the PORT(S) column of the Service.
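The node port appears in the PORT(S) column of kubectl get svc in the form 80:31234/TCP. A small sketch for pulling it out of that value follows; node_port_of is a hypothetical helper, and 31234 is only a sample value.

```shell
#!/bin/sh
# node_port_of PORTS: extract the node port from a PORT(S) value such as "80:31234/TCP".
node_port_of() {
  port=${1#*:}         # drop the service port and the colon ("80:")
  echo "${port%%/*}"   # drop the protocol suffix ("/TCP")
}

node_port_of "80:31234/TCP"

# On the cluster, kubectl can also print the port directly (not run here):
#   kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'
```

With the port known, curl -I http://192.168.50.115:<port> against any node address should return the nginx response headers.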

8. Deploy the Dashboard

wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.3/aio/deploy/recommended.yaml

By default the Dashboard is reachable only from inside the cluster. Change the Service type to NodePort to expose it externally:

$ vi recommended.yaml

...

kind: Service

apiVersion: v1

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: kubernetes-dashboard

  namespace: kubernetes-dashboard

spec:

  ports:

    - port: 443

      targetPort: 8443

      nodePort: 30000

  selector:

    k8s-app: kubernetes-dashboard

  type: NodePort

...

$ kubectl apply -f recommended.yaml

$ kubectl get pods -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

dashboard-metrics-scraper-6b4884c9d5-gl8nr 1/1 Running 0 13m

kubernetes-dashboard-7f99b75bf4-89cds 1/1 Running 0 13m

Access it at: https://NodeIP:30000

Create a service account and bind it to the built-in cluster-admin cluster role:

# Create the service account

kubectl create serviceaccount dashboard-admin -n kube-system

# Grant it permissions

kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

# Work around errors on the web page (note: this grants cluster-admin to unauthenticated requests, so use it only in an isolated test environment)

kubectl create clusterrolebinding system:anonymous --clusterrole=cluster-admin --user=system:anonymous

# Access: https://<node IP>:30000/

https://192.168.50.115:30000/

# Get the service account token

kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')

Log in to the Dashboard with the token from the output.

9. Fix Chrome being unable to log in to the Dashboard

# List the secrets

kubectl get secrets -n kubernetes-dashboard

# Delete the default secret; a new one is created below from a self-signed certificate

kubectl delete secret kubernetes-dashboard-certs -n kubernetes-dashboard

# Work in /opt/dashboard, the directory the secret is created from further down

mkdir -p /opt/dashboard && cd /opt/dashboard

# Create the CA

openssl genrsa -out ca.key 2048

openssl req -new -x509 -key ca.key -out ca.crt -days 3650 -subj "/C=CN/ST=HB/L=WH/O=DM/OU=YPT/CN=CA"

openssl x509 -in ca.crt -noout -text

# Issue the Dashboard certificate

openssl genrsa -out dashboard.key 2048

openssl req -new -key dashboard.key -out dashboard.csr -subj "/O=white/CN=dashboard"

openssl x509 -req -in dashboard.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out dashboard.crt -days 3650

# Create the new secret

kubectl create secret generic kubernetes-dashboard-certs --from-file=dashboard.crt=/opt/dashboard/dashboard.crt --from-file=dashboard.key=/opt/dashboard/dashboard.key -n kubernetes-dashboard

# Alternative (commented out): build the secret from the API server certificate instead

#kubectl create secret generic kubernetes-dashboard-certs \
#--from-file=/etc/kubernetes/pki/apiserver.key \
#--from-file=/etc/kubernetes/pki/apiserver.crt \
#-n kubernetes-dashboard

# Edit recommended.yaml (vim recommended.yaml) and add the certificate paths to the container args

args:

  - --auto-generate-certificates

  - --tls-key-file=dashboard.key

  - --tls-cert-file=dashboard.crt

  # - --tls-key-file=apiserver.key

  # - --tls-cert-file=apiserver.crt

# Re-apply the manifest

kubectl apply -f recommended.yaml

# Delete the Pods so that they are recreated with the new certificate

kubectl get pod -n kubernetes-dashboard | grep -v NAME | awk '{print "kubectl delete po " $1 " -n kubernetes-dashboard"}' | sh

Access: https://192.168.50.116:30000/#/login

# Get the service account token

kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')

10. Install metrics-server

# Pull the image

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/metrics-server:v0.5.0

# Retag it under the name the manifest expects

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/metrics-server:v0.5.0 k8s.gcr.io/metrics-server/metrics-server:v0.5.0

# Remove the original tag

docker rmi -f registry.cn-hangzhou.aliyuncs.com/google_containers/metrics-server:v0.5.0

# Create a working directory, save the manifest below to a file in it, and apply that file with kubectl apply -f

mkdir -p /opt/k8s/metrics-server

cd /opt/k8s/metrics-server

apiVersion: v1

kind: ServiceAccount

metadata:

  labels:

    k8s-app: metrics-server

  name: metrics-server

  namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

  labels:

    k8s-app: metrics-server

    rbac.authorization.k8s.io/aggregate-to-admin: "true"

    rbac.authorization.k8s.io/aggregate-to-edit: "true"

    rbac.authorization.k8s.io/aggregate-to-view: "true"

  name: system:aggregated-metrics-reader

rules:

- apiGroups:

  - metrics.k8s.io

  resources:

  - pods

  - nodes

  verbs:

  - get

  - list

  - watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

  labels:

    k8s-app: metrics-server

  name: system:metrics-server

rules:

- apiGroups:

  - ""

  resources:

  - pods

  - nodes

  - nodes/stats

  - namespaces

  - configmaps

  verbs:

  - get

  - list

  - watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

  labels:

    k8s-app: metrics-server

  name: metrics-server-auth-reader

  namespace: kube-system

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: Role

  name: extension-apiserver-authentication-reader

subjects:

- kind: ServiceAccount

  name: metrics-server

  namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  labels:

    k8s-app: metrics-server

  name: metrics-server:system:auth-delegator

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: system:auth-delegator

subjects:

- kind: ServiceAccount

  name: metrics-server

  namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  labels:

    k8s-app: metrics-server

  name: system:metrics-server

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: system:metrics-server

subjects:

- kind: ServiceAccount

  name: metrics-server

  namespace: kube-system

---

apiVersion: v1

kind: Service

metadata:

  labels:

    k8s-app: metrics-server

  name: metrics-server

  namespace: kube-system

spec:

  ports:

  - name: https

    port: 443

    protocol: TCP

    targetPort: https

  selector:

    k8s-app: metrics-server

---

apiVersion: apps/v1

kind: Deployment

metadata:

  labels:

    k8s-app: metrics-server

  name: metrics-server

  namespace: kube-system

spec:

  selector:

    matchLabels:

      k8s-app: metrics-server

  strategy:

    rollingUpdate:

      maxUnavailable: 0

  template:

    metadata:

      labels:

        k8s-app: metrics-server

    spec:

      containers:

      - args:

        - --cert-dir=/tmp

        - --secure-port=443

        - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname

        - --kubelet-use-node-status-port

        - --metric-resolution=15s

        - --kubelet-insecure-tls

        image: k8s.gcr.io/metrics-server/metrics-server:v0.5.0

        imagePullPolicy: IfNotPresent

        livenessProbe:

          failureThreshold: 3

          httpGet:

            path: /livez

            port: https

            scheme: HTTPS

          periodSeconds: 10

        name: metrics-server

        ports:

        - containerPort: 443

          name: https

          protocol: TCP

        readinessProbe:

          failureThreshold: 3

          httpGet:

            path: /readyz

            port: https

            scheme: HTTPS

          initialDelaySeconds: 20

          periodSeconds: 10

        resources:

          requests:

            cpu: 100m

            memory: 200Mi

        securityContext:

          readOnlyRootFilesystem: true

          runAsNonRoot: true

          runAsUser: 1000

        volumeMounts:

        - mountPath: /tmp

          name: tmp-dir

      nodeSelector:

        kubernetes.io/os: linux

      priorityClassName: system-cluster-critical

      serviceAccountName: metrics-server

      volumes:

      - emptyDir: {}

        name: tmp-dir

---

apiVersion: apiregistration.k8s.io/v1

kind: APIService

metadata:

  labels:

    k8s-app: metrics-server

  name: v1beta1.metrics.k8s.io

spec:

  group: metrics.k8s.io

  groupPriorityMinimum: 100

  insecureSkipTLSVerify: true

  service:

    name: metrics-server

    namespace: kube-system

  version: v1beta1

  versionPriority: 100

11. Switch the container runtime to containerd

https://kubernetes.io/zh/docs/setup/production-environment/container-runtimes/#containerd

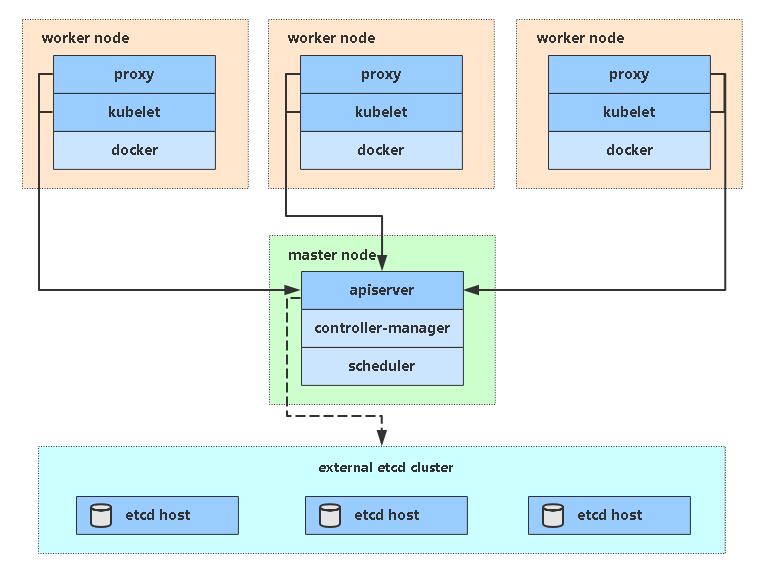

1) Configure prerequisites

cat <<EOF | sudo tee /etc/modules-load.d/containerd.conf

overlay

br_netfilter

EOF

sudo modprobe overlay

sudo modprobe br_netfilter

# Check that the modules are loaded

lsmod |grep overlay

lsmod |grep br_netfilter

# Set the required sysctl parameters; they persist across reboots.

cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

# Apply the settings

sudo sysctl --system

2) Install containerd

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager \
  --add-repo \
  https://download.docker.com/linux/centos/docker-ce.repo

yum update -y && sudo yum install -y containerd.io

mkdir -p /etc/containerd

containerd config default | sudo tee /etc/containerd/config.toml

systemctl restart containerd

3) Edit the configuration file

$ vi /etc/containerd/config.toml

[plugins."io.containerd.grpc.v1.cri"]

sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.2"

...

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]

SystemdCgroup = true

...

[plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]

endpoint = ["https://b9pmyelo.mirror.aliyuncs.com"]

systemctl restart containerd

systemctl enable containerd

systemctl stop docker

4) Configure kubelet to use containerd

vi /etc/sysconfig/kubelet

KUBELET_EXTRA_ARGS=--container-runtime=remote --container-runtime-endpoint=unix:///run/containerd/containerd.sock --cgroup-driver=systemd

systemctl restart kubelet

5) Verify

[root@k8s-master ~]# kubectl get node -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-master Ready control-plane,master 24h v1.20.0 192.168.4.114 <none> CentOS Linux 7 (Core) 3.10.0-1127.19.1.el7.x86_64 docker://20.10.8

k8s-node1 Ready <none> 24h v1.20.0 192.168.4.115 <none> CentOS Linux 7 (Core) 3.10.0-1127.19.1.el7.x86_64 docker://20.10.8

k8s-node2 Ready <none> 24h v1.20.0 192.168.4.116 <none> CentOS Linux 7 (Core) 3.10.0-1127.19.1.el7.x86_64 docker://20.10.8

k8s-node3 Ready <none> 24h v1.22.1 192.168.4.118 <none> CentOS Linux 7 (Core) 3.10.0-1127.19.1.el7.x86_64 containerd://1.4.9

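12. Managing containers with crictl

crictl is a command-line interface for CRI-compatible container runtimes. You can use it to inspect and debug container runtimes and applications on a Kubernetes node. crictl and its source code are hosted in the cri-tools repository.

[Video] https://asciinema.org/a/179047

[Usage guide] https://kubernetes.io/zh/docs/tasks/debug-application-cluster/crictl/

13. Common commands

1) Common commands

# Check the status of the control-plane components:

kubectl get cs

# Check node status:

kubectl get node

# Show the URLs of the API server and cluster services:

kubectl cluster-info

# Dump detailed cluster information:

kubectl cluster-info dump

# Show the details of a resource:

kubectl describe <resource> <name>

# List the API resource types available in the cluster

kubectl api-resources

2) Example session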

[root@k8s-master ~]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Unhealthy Get "http://127.0.0.1:10251/healthz": dial tcp 127.0.0.1:10251: connect: connection refused

controller-manager Unhealthy Get "http://127.0.0.1:10252/healthz": dial tcp 127.0.0.1:10252: connect: connection refused

etcd-0 Healthy {"health":"true"}

# Edit both manifests and comment out the port flag

$ vim /etc/kubernetes/manifests/kube-scheduler.yaml

$ vim /etc/kubernetes/manifests/kube-controller-manager.yaml

# - --port=0

# Restart kubelet

systemctl restart kubelet

# Check the control-plane components again

[root@k8s-master ~]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true"}