1. Prepare the data

1. Create the table structure

CREATE TABLE users (
    id INT PRIMARY KEY AUTO_INCREMENT,
    username VARCHAR(20) NOT NULL,
    password VARCHAR(32) NOT NULL,
    email VARCHAR(50) NOT NULL,
    phone VARCHAR(20) NOT NULL,
    qq_id VARCHAR(20),
    wechat_id VARCHAR(20),
    nick_name VARCHAR(20),
    signature VARCHAR(50),
    create_time DATETIME NOT NULL,
    last_login DATETIME,
    update_time DATETIME NOT NULL
);

2. Prepare 100,000 rows

INSERT INTO users (username, password, email, phone, qq_id, wechat_id, nick_name, signature, create_time, last_login, update_time)
SELECT
    CONCAT('user', LPAD(id, 6, '0')) AS username, -- username: user000001 ~ user100000
    MD5('[email protected]') AS password, -- every row uses the same password, [email protected]
    CONCAT(FLOOR(RAND() * 9000000000) + 1000000000) AS email, -- random 10-digit number stored as the email
    CONCAT('138', LPAD(FLOOR(RAND() * 100000000), 8, '0')) AS phone, -- random 11-digit phone number starting with 138
    LPAD((FLOOR(UNIX_TIMESTAMP() * 1000) << 12) + (FLOOR(RAND() * 4096)), 16, '0') AS qq_id, -- random 16-digit snowflake-style ID (QQ)
    LPAD((FLOOR(UNIX_TIMESTAMP() * 1000) << 12) + (FLOOR(RAND() * 4096)), 16, '0') AS wechat_id, -- random 16-digit snowflake-style ID (WeChat)
    CONCAT('nick', id) AS nick_name, -- nickname: nick1 ~ nick100000
    CONCAT('signature', id) AS signature, -- signature: signature1 ~ signature100000
    NOW() - INTERVAL FLOOR(RAND() * 10000) DAY AS create_time, -- random creation time
    NOW() - INTERVAL FLOOR(RAND() * 365) DAY AS last_login, -- random last-login time
    NOW() - INTERVAL FLOOR(RAND() * 10000) DAY AS update_time -- random update time
FROM
    (SELECT @rownum := @rownum + 1 AS id FROM (SELECT 1 UNION ALL SELECT 2 UNION ALL SELECT 3 UNION ALL SELECT 4) t1, (SELECT 1 UNION ALL SELECT 2 UNION ALL SELECT 3 UNION ALL SELECT 4) t2, (SELECT 1 UNION ALL SELECT 2 UNION ALL SELECT 3 UNION ALL SELECT 4) t3, (SELECT @rownum := 0) t4) nums
WHERE
    id <= 100000; -- intended to generate 100,000 fake rows

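The statement ran successfully but inserted only 64 rows. The maximum packet size (max_allowed_packet) was 4 KB, so my first idea was to raise it to 256 MB (268435456 bytes). The SET succeeded, but the query result did not change: SET GLOBAL only applies to connections opened afterwards, and a permanent change has to go into the configuration file. Note also that the derived table nums cross-joins three 4-row subqueries, so it can produce at most 4 × 4 × 4 = 64 rows no matter how large the packet limit is.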

SHOW VARIABLES LIKE 'max_allowed_packet';

SET GLOBAL max_allowed_packet=268435456;
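When sizing a cross-joined row source like the one in the INSERT ... SELECT above, the row count is simply the product of the subquery sizes. The sketch below works through that arithmetic; the class and method names are hypothetical and only serve as an illustration.

```java
// Hypothetical helper for illustration; not part of the original post's code.
class CrossJoinRows {

    // Rows produced by cross-joining `copies` subqueries of `rowsEach` rows each.
    static long rows(int rowsEach, int copies) {
        long result = 1;
        for (int i = 0; i < copies; i++) {
            result *= rowsEach;
        }
        return result;
    }

    // Smallest number of cross-joined subqueries that yields at least `target` rows.
    static int copiesNeeded(int rowsEach, long target) {
        if (rowsEach < 2) {
            throw new IllegalArgumentException("rowsEach must be at least 2");
        }
        int copies = 0;
        long rows = 1;
        while (rows < target) {
            rows *= rowsEach;
            copies++;
        }
        return copies;
    }

    public static void main(String[] args) {
        System.out.println("three 4-row subqueries: " + rows(4, 3) + " rows");
        System.out.println("4-row subqueries needed for 100000 rows: " + copiesNeeded(4, 100_000));
        System.out.println("10-row subqueries needed for 100000 rows: " + copiesNeeded(10, 100_000));
    }
}
```

In other words, reaching 100,000 rows this way would take nine 4-row subqueries, or five 10-row ones.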


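I fell back on a crude, brute-force approach: a stored procedure that inserts the rows one at a time in a loop. (Too slow, not recommended.)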

DELIMITER //
CREATE PROCEDURE insert_users(IN count INT)
BEGIN
    DECLARE i INT DEFAULT 1;
    DECLARE cur_time TIMESTAMP DEFAULT NOW();
    -- Insert one row per loop iteration
    WHILE i <= count DO
        INSERT INTO users (username, password, email, phone, qq_id, wechat_id, nick_name, signature, create_time, last_login, update_time)
        VALUES (
            CONCAT('user', LPAD(i, 7, '0')),
            MD5('[email protected]'),
            CONCAT(FLOOR(RAND() * 9000000000) + 1000000000),
            CONCAT('138', LPAD(FLOOR(RAND() * 100000000), 8, '0')),
            LPAD((FLOOR(UNIX_TIMESTAMP() * 1000) << 12) + (FLOOR(RAND() * 4096)), 16, '0'),
            LPAD((FLOOR(UNIX_TIMESTAMP() * 1000) << 12) + (FLOOR(RAND() * 4096)), 16, '0'),
            CONCAT('nick', i),
            CONCAT('signature', i),
            cur_time - INTERVAL FLOOR(RAND() * 10000) DAY,
            cur_time - INTERVAL FLOOR(RAND() * 365) DAY,
            cur_time - INTERVAL FLOOR(RAND() * 10000) DAY
        );
        SET i = i + 1;
    END WHILE;
END//
DELIMITER ;

Run it:

CALL insert_users(1000000);

Then came a very long wait: 13950.374 s in total, close to 4 hours.
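To put that timing in perspective, the per-row cost can be worked out directly. The sketch below is only arithmetic on the numbers reported in this post (13950.374 s for the stored procedure, and the 111527 ms recorded for the multithreaded run further down); the class name is hypothetical.

```java
// Hypothetical helper for illustration; it only does arithmetic on the reported timings.
class InsertThroughput {

    // Average rows inserted per second for a run of `rows` rows that took `seconds` seconds.
    static double rowsPerSecond(long rows, double seconds) {
        return rows / seconds;
    }

    public static void main(String[] args) {
        long rows = 1_000_000L;
        double procedureSeconds = 13950.374;      // stored-procedure run
        double threadedSeconds = 111527 / 1000.0; // multithreaded run, reported in milliseconds

        System.out.printf("stored procedure: %.1f rows/s, %.2f ms per row%n",
                rowsPerSecond(rows, procedureSeconds), procedureSeconds * 1000 / rows);
        System.out.printf("multithreaded batches: %.1f rows/s%n",
                rowsPerSecond(rows, threadedSeconds));
        System.out.printf("speedup: %.1fx%n", procedureSeconds / threadedSeconds);
    }
}
```

The stored procedure averages roughly 72 rows per second, about 14 ms per row, which is why a million rows takes hours.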

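Optimization: multithreaded batch inserts

200 threads, 1,000 rows per insert, just under 2 minutes in total. The shared row counter is updated with optimistic locking (CAS).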
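The batchInsert service method called in the test class is not shown in this post. One common way to implement a batch insert is a single multi-row INSERT ... VALUES statement with one placeholder group per row. The sketch below builds such a statement as a string; it is an assumption about what a batch insert could look like, not the author's implementation, and the class and method names are hypothetical.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Hypothetical helper for illustration; not the service code used in this post.
class BatchInsertSql {

    // Builds "INSERT INTO t (c1, c2) VALUES (?, ?), (?, ?), ..." with one "(?, ...)" group per row.
    static String build(String table, List<String> columns, int rowCount) {
        if (columns.isEmpty() || rowCount < 1) {
            throw new IllegalArgumentException("need at least one column and one row");
        }
        String group = "(" + String.join(", ", Collections.nCopies(columns.size(), "?")) + ")";
        return "INSERT INTO " + table
                + " (" + String.join(", ", columns) + ") VALUES "
                + String.join(", ", Collections.nCopies(rowCount, group));
    }

    public static void main(String[] args) {
        // Two rows, two columns, to keep the printed statement short.
        System.out.println(build("users", Arrays.asList("username", "phone"), 2));
    }
}
```

Sending 1,000 rows in one statement means one round trip and one statement parse instead of 1,000, which is where most of the gain over row-by-row inserts comes from.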

import java.math.BigInteger;
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.time.LocalDateTime;
import java.util.ArrayList;
import java.util.List;
import java.util.Random;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

import org.junit.Test;
import org.junit.runner.RunWith;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.boot.test.context.SpringBootTest;
import org.springframework.test.context.junit4.SpringJUnit4ClassRunner;

// Users1 and Users1Service are the project's own entity and service classes (imports omitted).

/**
 * @author LJL
 * @version 1.0
 * @title TestUser1
 * @date 2023/6/11 0:46
 * @description Inserts one million rows into the users table with a thread pool and batch inserts.
 */
@SpringBootTest
@RunWith(SpringJUnit4ClassRunner.class)
public class TestUser1 {

    @Autowired
    Users1Service users1Service;

    // Number of rows built so far, shared by all worker threads.
    static final AtomicInteger total = new AtomicInteger(0);

    @Test
    public void save() throws InterruptedException {
        long start = System.currentTimeMillis();
        /*
         * Run the insert tasks on a pool of 200 threads.
         * Each task inserts 1,000 rows.
         * Elapsed: 111527 ms
         */
        ExecutorService executorService = Executors.newFixedThreadPool(200);
        for (int i = 0; i < 1000; i++) {
            executorService.submit(new Task());
        }
        // Stop accepting new tasks and wait until all submitted tasks have finished.
        executorService.shutdown();
        if (!executorService.awaitTermination(1, TimeUnit.HOURS)) {
            System.out.println("Timed out waiting for the insert tasks");
        }
        System.out.println("total: " + total.get());
        System.out.println("Done, elapsed ms: " + (System.currentTimeMillis() - start));
    }

    public class Task implements Runnable {

        @Override
        public void run() {
            System.out.println("Thread " + Thread.currentThread().getId() + " started its task");
            List<Users1> users1List = createUsers1();
            int row = users1Service.batchInsert(users1List);
            System.out.println("Thread " + Thread.currentThread().getId() + " inserted " + row + " rows");
        }

        // Builds one batch of 1,000 users.
        private List<Users1> createUsers1() {
            List<Users1> users1List = new ArrayList<>();
            for (int i = 1; i <= 1000; i++) {
                // Count the row with a single atomic increment.
                total.incrementAndGet();
                users1List.add(builder());
            }
            return users1List;
        }
    }

    // Builds one user with random field values.
    private Users1 builder() {
        Users1 users1 = new Users1();
        String password = "[email protected]";
        String pwdMD5 = md5(password);
        String userName = "user" + generateRandomNumber(11);
        String email = generateRandomNumber(11);
        String phone = generateRandomNumber(11);
        String qqId = generateRandomNumber(11);
        String wxId = generateRandomNumber(11);
        String nickName = "nick" + generateRandomNumber(11);
        LocalDateTime createTime = LocalDateTime.now();
        LocalDateTime lastLogin = LocalDateTime.now();
        LocalDateTime updateTime = LocalDateTime.now();
        users1.setEmail(email);
        users1.setPassword(pwdMD5);
        users1.setNickName(nickName);
        users1.setPhone(phone);
        users1.setUsername(userName);
        users1.setWechatId(wxId);
        users1.setQqId(qqId);
        users1.setCreateTime(createTime);
        users1.setUpdateTime(updateTime);
        users1.setLastLogin(lastLogin);
        return users1;
    }

    /**
     * Returns the MD5 hash of a string.
     * @param s the input string
     * @return the hash as 32 lowercase hex characters, or null if MD5 is unavailable
     */
    public static String md5(String s) {
        try {
            // Create an MD5 digest and feed it the input bytes.
            MessageDigest md = MessageDigest.getInstance("MD5");
            md.update(s.getBytes(StandardCharsets.UTF_8));
            // BigInteger(signum, bytes): signum 1 means positive, 0 zero, -1 negative.
            // %032x pads with leading zeros so the result is always 32 hex characters.
            return String.format("%032x", new BigInteger(1, md.digest()));
        } catch (NoSuchAlgorithmException e) {
            e.printStackTrace();
        }
        return null;
    }

    /**
     * @param length number of digits to generate
     * @return a string of exactly {@code length} random digits
     */
    public static String generateRandomNumber(int length) {
        Random random = new Random();
        StringBuilder sb = new StringBuilder(length);
        for (int i = 0; i < length; i++) {
            int num = random.nextInt(10); // a digit between 0 and 9
            sb.append(num);
        }
        return sb.toString();
    }
}
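The shared counter in the test class relies on compare-and-set (CAS), the optimistic-locking primitive behind AtomicInteger. For reference, here is a minimal sketch of the usual CAS retry loop: read the value once, try to swap in value + 1, and retry until no other thread has interfered. The class and method names are hypothetical; AtomicInteger.incrementAndGet() gives the same result in a single call.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical demo class for illustration; not part of the original test.
class CasCounterSketch {

    // Optimistic increment: the expected value is read once and reused for the swap.
    static int casIncrement(AtomicInteger counter) {
        while (true) {
            int current = counter.get();
            int next = current + 1;
            if (counter.compareAndSet(current, next)) {
                return next;
            }
            // Another thread changed the value in between; loop and try again.
        }
    }

    // Runs `threads` workers that each increment a shared counter `perThread` times.
    static int countConcurrently(int threads, int perThread) {
        AtomicInteger counter = new AtomicInteger(0);
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        for (int t = 0; t < threads; t++) {
            pool.submit(() -> {
                for (int i = 0; i < perThread; i++) {
                    casIncrement(counter);
                }
            });
        }
        pool.shutdown();
        try {
            if (!pool.awaitTermination(1, TimeUnit.MINUTES)) {
                throw new IllegalStateException("workers did not finish in time");
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for workers", e);
        }
        return counter.get();
    }

    public static void main(String[] args) {
        System.out.println("counted: " + countConcurrently(8, 100_000));
    }
}
```

Two details matter here: the expected value passed to compareAndSet must be the same value that next was computed from, and the loop must not give up after a fixed number of attempts, otherwise increments are silently lost under contention.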


Data volume: one million rows; file size: 188416; query time: 4.1198 s

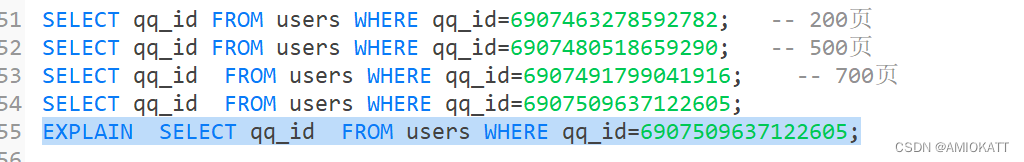

Lookup by primary key (EXPLAIN type: const, extremely fast)

Lookup of a single row by qq_id (type: ALL, quite slow)


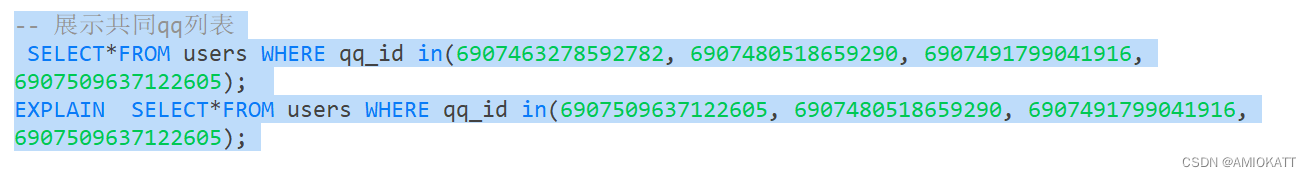

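Batch lookup by qq_id (type: ALL): qq_id has no index yet, so MySQL scans the whole table

Add a regular (non-unique) index

Single-row lookup (now uses the index)

Batch lookup

Switch to a unique index

Because the data was inserted without a unique index in place, duplicate values were generated, so I used a composite index instead

Single-row lookup

Batch lookup

I'll stop testing here, since there is not much more to see, and go straight to the summary.

Summary:

1. Use an index whenever possible, so that queries avoid full table scans and unnecessary lookups back to the table.

2. Build composite indexes on frequently queried columns, and put the most frequently queried columns on the left.

3. Batch whenever possible: batched statements are efficient, row-by-row execution is slow.

4. With B+ tree indexes, auto-increment keys are generally more efficient than UUIDs or snowflake IDs.

5. Keep the number of indexes moderate; every extra index adds maintenance cost.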
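Point 2 follows from the leftmost-prefix rule: a composite index can only be used starting from its first column. The sketch below is a simplified model of that rule for equality conditions only (the real optimizer handles more cases); the index column order and all names in it are hypothetical.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Hypothetical helper for illustration; a simplified model, not how MySQL is implemented.
class LeftmostPrefix {

    // Number of leading index columns usable when the query has equality conditions on `queried`.
    static int usablePrefixLength(List<String> indexColumns, Set<String> queried) {
        int usable = 0;
        for (String column : indexColumns) {
            if (!queried.contains(column)) {
                break; // the first missing column ends the usable prefix
            }
            usable++;
        }
        return usable;
    }

    public static void main(String[] args) {
        // Assumed composite index (qq_id, wechat_id, phone); the column order is an assumption.
        List<String> index = Arrays.asList("qq_id", "wechat_id", "phone");
        System.out.println(usablePrefixLength(index, new HashSet<>(Arrays.asList("qq_id", "wechat_id"))));
        System.out.println(usablePrefixLength(index, new HashSet<>(Arrays.asList("wechat_id", "phone"))));
        System.out.println(usablePrefixLength(index, new HashSet<>(Arrays.asList("qq_id", "phone"))));
    }
}
```

A query that filters only on wechat_id and phone gets no usable prefix from this index, which is why the columns queried most often belong on the left.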