随着以太坊的数据越来越多,同步也越来越慢,使用full sync mode同步的话恐怕得一两个礼拜也不见得能同步完。以太坊有fast sync mode,找了些文章还不是很明白具体内容,所以尝试着看懂写下来,如有错误之处欢迎指正。

关于fast sync mode的算法,是在这篇文章中讲述的,看完了也没看明白为什么同步的数据会少,速度会快,所以看看源代码的实现吧

https://github.com/ethereum/go-ethereum/pull/1889

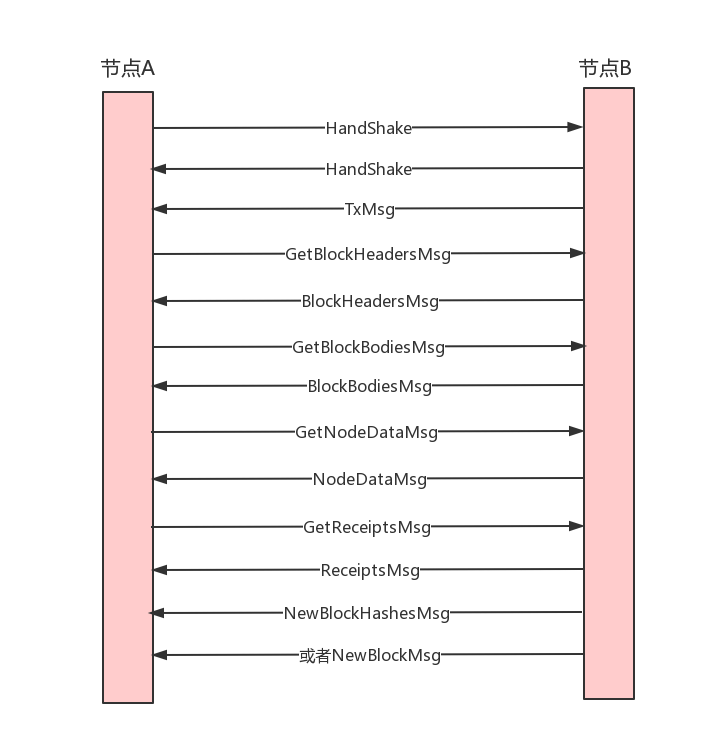

图示

先大致讲下同步流程,以新加入网络的节点A和挖矿节点B为例:

1 两个节点先hadeshake同步下各自的genesis、td、head等信息

2 连接成功后,节点B发送TxMsg消息把自己的txpool中的tx同步给节点A

然后各自循环监听对方的消息

3 节点A此时使用fast sync同步数据,依次发送GetBlockHeadersMsg、GetBlockBodiesMsg、GetReceiptsMsg、GetNodeDataMsg获取block header、block body、receipt和state数据

4 节点B对应的返回BlockHeadersMsg、BlockBodiesMsg、ReceiptsMsg、NodeDataMsg

5 节点A收到数据把header和body组成block存入自己的leveldb数据库,一并存入receipts和state数据

6 节点B挖出block后会向A同步区块,发送NewBlockMsg或者NewBlockHashesMsg(取决于节点A位于节点B的节点列表位置),如果是NewBlockMsg,那么节点A直接验证完存入本地;如果是NewBlockHashesMsg节点A会交给fetcher去获取header和body,然后再组织成block存入本地

基本概念

先简要介绍一下一些基本概念

header:区块的头

body:区块内的所有交易

block:区块,仅包含区块头和body

receipt:合约执行后的结果,log就放在这里。这个默认是不广播的。fast sync的过程中会有节点去获取。正常运行的节点(sync到最新后)收到block后,会执行block内的所有交易,就可以得到这个receipt了

statedb:世界状态,储存所有的账号状态,使用了MPT树存储。跟receipt一样,也不属于block的一部分,广播的时候也不广播。只在fast sync下,会从其他节点获取。正常运行的节点收到block执行所有的交易的时候也可以生成。

这几个数据都是存在节点的leveldb数据库中,正常情况下广播的块是区块,包含header和body(所有的交易)

开始

先从同步代码看起来吧,前面初始化的地方先不讲了,直接切入正题:如果运行geth不设置同步模式,默认是fast mode

func NewProtocolManager(config *params.ChainConfig, mode downloader.SyncMode, networkId uint64, mux *event.TypeMux, txpool txPool, engine consensus.Engine, blockchain *core.BlockChain, chaindb ethdb.Database) (*ProtocolManager, error) {

// Figure out whether to allow fast sync or not

// 如果是fast sync mode,并且当前blocknum大于0,切换为full sync模式

// 那么如果sync了一段时间断掉之后再重新sync是fast mode还是full mode呢,答案还是fast mode

// 为什么呢? 后面看到代码的时候再讲一下吧

if mode == downloader.FastSync && blockchain.CurrentBlock().NumberU64() > 0 {

log.Warn("Blockchain not empty, fast sync disabled")

mode = downloader.FullSync

}

if mode == downloader.FastSync {

manager.fastSync = uint32(1)

}

// Initiate a sub-protocol for every implemented version we can handle

manager.SubProtocols = make([]p2p.Protocol, 0, len(ProtocolVersions))

for i, version := range ProtocolVersions {

// Skip protocol version if incompatible with the mode of operation

if mode == downloader.FastSync && version < eth63 {

continue

}

// Compatible; initialise the sub-protocol

version := version // Closure for the run

manager.SubProtocols = append(manager.SubProtocols, p2p.Protocol{

Name: ProtocolName,

Version: version,

Length: ProtocolLengths[i],

Run: func(p *p2p.Peer, rw p2p.MsgReadWriter) error {

peer := manager.newPeer(int(version), p, rw)

select {

case manager.newPeerCh <- peer:

manager.wg.Add(1)

defer manager.wg.Done()

return manager.handle(peer)

case <-manager.quitSync:

return p2p.DiscQuitting

}

},

NodeInfo: func() interface{} {

return manager.NodeInfo()

},

PeerInfo: func(id discover.NodeID) interface{} {

if p := manager.peers.Peer(fmt.Sprintf("%x", id[:8])); p != nil {

return p.Info()

}

return nil

},

})

}

// Construct the different synchronisation mechanisms

// 初始化downloader

manager.downloader = downloader.New(mode, chaindb, manager.eventMux, blockchain, nil, manager.removePeer)

// validator: 验证区块头

validator := func(header *types.Header) error {

return engine.VerifyHeader(blockchain, header, true)

}

heighter := func() uint64 {

return blockchain.CurrentBlock().NumberU64()

}

// 将区块插入blockchain

inserter := func(blocks types.Blocks) (int, error) {

// If fast sync is running, deny importing weird blocks

if atomic.LoadUint32(&manager.fastSync) == 1 {

log.Warn("Discarded bad propagated block", "number", blocks[0].Number(), "hash", blocks[0].Hash())

return 0, nil

}

atomic.StoreUint32(&manager.acceptTxs, 1) // Mark initial sync done on any fetcher import

return manager.blockchain.InsertChain(blocks)

}

// 初始化fetcher,把downloader、validator作为fetcher的参数

manager.fetcher = fetcher.New(blockchain.GetBlockByHash, validator, manager.BroadcastBlock, heighter, inserter, manager.removePeer)

return manager, nil

}

这里有新的节点连接成功后,会回调这个Run函数,然后把peer写入到manager.newPeerCh这个channel。

之前初始化的时候有个goroutine一直在监听这个channel的数据

eth/sync.go:

func (pm *ProtocolManager) syncer() {

...

for {

select {

case <-pm.newPeerCh:

// Make sure we have peers to select from, then sync

if pm.peers.Len() < minDesiredPeerCount {

break

}

go pm.synchronise(pm.peers.BestPeer())

case <-forceSync.C:

// Force a sync even if not enough peers are present

go pm.synchronise(pm.peers.BestPeer())

case <-pm.noMorePeers:

return

}

}

}

syncer()是初始化的时候的一个goroutine,一直监听是否有新节点加入

开始同步

监听到了有新节点连接,minDesiredPeerCount是5,意思是有5个连接了再开始sync,BestPeer是td最高的peer,就是从最长链的节点开始同步。

如果已经开始同步了又有新节点加入还会再同步吗,答案是no,后面看代码的时候讲一下

func (pm *ProtocolManager) synchronise(peer *peer) {

// Run the sync cycle, and disable fast sync if we've went past the pivot block

if err := pm.downloader.Synchronise(peer.id, pHead, pTd, mode); err != nil {

return

}

if atomic.LoadUint32(&pm.fastSync) == 1 {

log.Info("Fast sync complete, auto disabling")

atomic.StoreUint32(&pm.fastSync, 0)

}

atomic.StoreUint32(&pm.acceptTxs, 1) // Mark initial sync done

if head := pm.blockchain.CurrentBlock(); head.NumberU64() > 0 {

// We've completed a sync cycle, notify all peers of new state. This path is

// essential in star-topology networks where a gateway node needs to notify

// all its out-of-date peers of the availability of a new block. This failure

// scenario will most often crop up in private and hackathon networks with

// degenerate connectivity, but it should be healthy for the mainnet too to

// more reliably update peers or the local TD state.

go pm.BroadcastBlock(head, false)

}

}

再调用到eth/downloader/downloader.go

func (d *Downloader) synchronise(id string, hash common.Hash, td *big.Int, mode SyncMode) error {

// 这里保证每次只有一个sync实例运行,这里保证了同时只有一个sync在进行

// Make sure only one goroutine is ever allowed past this point at once

if !atomic.CompareAndSwapInt32(&d.synchronising, 0, 1) {

return errBusy

}

defer atomic.StoreInt32(&d.synchronising, 0)

...

// Retrieve the origin peer and initiate the downloading process

p := d.peers.Peer(id)

if p == nil {

return errUnknownPeer

}

return d.syncWithPeer(p, hash, td)

}

func (d *Downloader) syncWithPeer(p *peerConnection, hash common.Hash, td *big.Int) (err error) {

// Look up the sync boundaries: the common ancestor and the target block

latest, err := d.fetchHeight(p)

if err != nil {

return err

}

height := latest.Number.Uint64()

origin, err := d.findAncestor(p, height)

if err != nil {

return err

}

d.syncStatsLock.Lock()

if d.syncStatsChainHeight <= origin || d.syncStatsChainOrigin > origin {

d.syncStatsChainOrigin = origin

}

d.syncStatsChainHeight = height

d.syncStatsLock.Unlock()

// Ensure our origin point is below any fast sync pivot point

pivot := uint64(0)

if d.mode == FastSync {

if height <= uint64(fsMinFullBlocks) {

// 如果对端节点的height小于64,则共同祖先更新为0

origin = 0

} else {

// 否则更新pivot为对端节点height-64

pivot = height - uint64(fsMinFullBlocks)

if pivot <= origin {

// 如果pivot小于共同祖先,则更新共同祖先为pivot的前一个

origin = pivot - 1

}

}

}

d.committed = 1

if d.mode == FastSync && pivot != 0 {

d.committed = 0

}

// Initiate the sync using a concurrent header and content retrieval algorithm

// 更新queue的值从共同祖先+1开始,这个好理解,就是从共同祖先开始sync区块

d.queue.Prepare(origin+1, d.mode)

if d.syncInitHook != nil {

d.syncInitHook(origin, height)

}

fetchers := []func() error{

func() error { return d.fetchHeaders(p, origin+1, pivot) }, // Headers are always retrieved

func() error { return d.fetchBodies(origin + 1) }, // Bodies are retrieved during normal and fast sync

func() error { return d.fetchReceipts(origin + 1) }, // Receipts are retrieved during fast sync

func() error { return d.processHeaders(origin+1, pivot, td) },

}

if d.mode == FastSync {

fetchers = append(fetchers, func() error { return d.processFastSyncContent(latest) })

} else if d.mode == FullSync {

fetchers = append(fetchers, d.processFullSyncContent)

}

return d.spawnSync(fetchers)

}

1 fetchHeight(p):从节点p根据head hash(建立节点handshake的时候节点p传过来的最新的head hash)获取header,发送GetBlockHeadersMsg消息,节点p收到消息后从自己本地拿到header然后发送BlockHeaderMsg消息,当前节点一直等待知道收到header的消息。

2 findAncestor(p):从节点p寻找共同的祖先header。也是发送GetBlockHeadersMsg消息,带的参数是算出来的从某个高度拿某些数量的headers。拿到headers后从最新的header往前for循环,看本地的lightchain是否有这个header,有的话就找到了共同祖先。如果未找到,再从创世快开始二分法查找是否能找到共同的祖先,然后返回这个共同的祖先origin(是header)

3 拿到最新origin后,更新queue从共同祖先开始sync,调用spawnSync开始同步

func (d *Downloader) spawnSync(fetchers []func() error) error {

errc := make(chan error, len(fetchers))

d.cancelWg.Add(len(fetchers))

for _, fn := range fetchers {

fn := fn

go func() { defer d.cancelWg.Done(); errc <- fn() }()

}

// Wait for the first error, then terminate the others.

var err error

for i := 0; i < len(fetchers); i++ {

if i == len(fetchers)-1 {

// Close the queue when all fetchers have exited.

// This will cause the block processor to end when

// it has processed the queue.

d.queue.Close()

}

if err = <-errc; err != nil {

break

}

}

d.queue.Close()

d.Cancel()

return err

}

这个函数的主要功能是:

1 循环执行fetchers,fetchers是上面传过来的一组函数,fetch header、fetch body、 fetch receipt、process header等

2 然后等待读取errc channel内容,等待sync完成。注意这里errc是一个缓冲channel,个数为fetchers的长度,就是会等待fetchers中的每个函数执行完成返回。所以这里实现了pending的效果,就是一直要等到sync完成才会结束sync

3 如果fast sync的话,fetchers的最后一个函数是processFastSyncContent();full sync模式下最后一个函数是processFullSyncContent()

同步State

state是世界状态,保存着所有账户的余额等信息

func (d *Downloader) processFastSyncContent(latest *types.Header) error {

// Start syncing state of the reported head block. This should get us most of

// the state of the pivot block.

stateSync := d.syncState(latest.Root)

defer stateSync.Cancel()

go func() {

if err := stateSync.Wait(); err != nil && err != errCancelStateFetch {

d.queue.Close() // wake up WaitResults

}

}()

// Figure out the ideal pivot block. Note, that this goalpost may move if the

// sync takes long enough for the chain head to move significantly.

pivot := uint64(0)

if height := latest.Number.Uint64(); height > uint64(fsMinFullBlocks) {

pivot = height - uint64(fsMinFullBlocks)

}

// To cater for moving pivot points, track the pivot block and subsequently

// accumulated download results separately.

var (

oldPivot *fetchResult // Locked in pivot block, might change eventually

oldTail []*fetchResult // Downloaded content after the pivot

)

for {

// Wait for the next batch of downloaded data to be available, and if the pivot

// block became stale, move the goalpost

results := d.queue.Results(oldPivot == nil) // Block if we're not monitoring pivot staleness

if len(results) == 0 {

// If pivot sync is done, stop

if oldPivot == nil {

return stateSync.Cancel()

}

// If sync failed, stop

select {

case <-d.cancelCh:

return stateSync.Cancel()

default:

}

}

if d.chainInsertHook != nil {

d.chainInsertHook(results)

}

if oldPivot != nil {

results = append(append([]*fetchResult{oldPivot}, oldTail...), results...)

}

// Split around the pivot block and process the two sides via fast/full sync

if atomic.LoadInt32(&d.committed) == 0 {

latest = results[len(results)-1].Header

if height := latest.Number.Uint64(); height > pivot+2*uint64(fsMinFullBlocks) {

log.Warn("Pivot became stale, moving", "old", pivot, "new", height-uint64(fsMinFullBlocks))

pivot = height - uint64(fsMinFullBlocks)

}

}

P, beforeP, afterP := splitAroundPivot(pivot, results)

if err := d.commitFastSyncData(beforeP, stateSync); err != nil {

return err

}

if P != nil {

// If new pivot block found, cancel old state retrieval and restart

if oldPivot != P {

stateSync.Cancel()

stateSync = d.syncState(P.Header.Root)

defer stateSync.Cancel()

go func() {

if err := stateSync.Wait(); err != nil && err != errCancelStateFetch {

d.queue.Close() // wake up WaitResults

}

}()

oldPivot = P

}

// Wait for completion, occasionally checking for pivot staleness

select {

case <-stateSync.done:

if stateSync.err != nil {

return stateSync.err

}

if err := d.commitPivotBlock(P); err != nil {

return err

}

oldPivot = nil

case <-time.After(time.Second):

oldTail = afterP

continue

}

}

// Fast sync done, pivot commit done, full import

if err := d.importBlockResults(afterP); err != nil {

return err

}

}

}

1 参数latest是刚才从对端节点获取到的最新的header,

2 syncState函数创建了stateSync结构,然后写到channel stateSyncStart

3 初始化创建downloader的时候创建的goroutine statefetcher()监听到此channel有数据就开始进行state sync

func (d *Downloader) stateFetcher() {

for {

select {

case s := <-d.stateSyncStart:

for next := s; next != nil; {

next = d.runStateSync(next)

}

case <-d.stateCh:

// Ignore state responses while no sync is running.

case <-d.quitCh:

return

}

}

}

eth/downloader/statesync.go:

runStateSync()创建了goroutine s.run(),内部调用loop函数:

func (s *stateSync) loop() (err error) {

// Listen for new peer events to assign tasks to them

newPeer := make(chan *peerConnection, 1024)

peerSub := s.d.peers.SubscribeNewPeers(newPeer)

defer peerSub.Unsubscribe()

defer func() {

cerr := s.commit(true)

if err == nil {

err = cerr

}

}()

// Keep assigning new tasks until the sync completes or aborts

for s.sched.Pending() > 0 {

if err = s.commit(false); err != nil {

return err

}

s.assignTasks()

// Tasks assigned, wait for something to happen

select {

case <-newPeer:

// New peer arrived, try to assign it download tasks

case <-s.cancel:

return errCancelStateFetch

case <-s.d.cancelCh:

return errCancelStateFetch

case req := <-s.deliver:

// Response, disconnect or timeout triggered, drop the peer if stalling

log.Trace("Received node data response", "peer", req.peer.id, "count", len(req.response), "dropped", req.dropped, "timeout", !req.dropped && req.timedOut())

if len(req.items) <= 2 && !req.dropped && req.timedOut() {

// 2 items are the minimum requested, if even that times out, we've no use of

// this peer at the moment.

log.Warn("Stalling state sync, dropping peer", "peer", req.peer.id)

s.d.dropPeer(req.peer.id)

}

// Process all the received blobs and check for stale delivery

if err = s.process(req); err != nil {

log.Warn("Node data write error", "err", err)

return err

}

req.peer.SetNodeDataIdle(len(req.response))

}

}

return nil

}

这段代码以及子函数assignTasks的作用:

1 从所有空闲的peer节点开始获取state状态,发送GetNodeDataMsg数据。

2 对端节点收到此消息后,返回NodeDataMsg包括一定数量的state数据

3 本节点收到NodeDataMsg后,把数据写到channel stateCh

4 runStateSync()函数监听此channel的数据,写入finished结构内(也是stateReq类型),然后再写入channel s.deliver中

5 刚才发送GetNodeDataMsg的函数loop()内在监听channel s.deliver,拿到数据后调用process函数

(由于代码太多,暂不贴上来,可以自行到代码中根据函数名搜索即可)

6 procress()函数处理收到的state数据,写入本地(先写入memory,后续Commit的时候一起写入leveldb数据库)

7 然后设置peer为idle后续继续请求state数据

同步Headers

上面fetchers中调用fetchHeaders同步header

func (d *Downloader) fetchHeaders(p *peerConnection, from uint64, pivot uint64) error {

// 默认skeleton为true,表示先获取骨架(间隔的headers),然后再从其他节点填充骨架间的headers

skeleton := true

getHeaders := func(from uint64) {

request = time.Now()

ttl = d.requestTTL()

timeout.Reset(ttl)

if skeleton {

// 看参数MaxHeaderFetch-1(跳跃的距离),获取的header是跳跃的

go p.peer.RequestHeadersByNumber(from+uint64(MaxHeaderFetch)-1, MaxSkeletonSize, MaxHeaderFetch-1, false)

} else {

// 获取骨架完成或者失败,顺序获取headers

go p.peer.RequestHeadersByNumber(from, MaxHeaderFetch, 0, false)

}

}

// Start pulling the header chain skeleton until all is done

getHeaders(from)

for {

select {

case <-d.cancelCh:

return errCancelHeaderFetch

// 从对端节点获取header后会写到channel headerCh

case packet := <-d.headerCh:

// If the skeleton's finished, pull any remaining head headers directly from the origin

// 如果获取skeleton完成,设置skeleton为false,顺序获取

if packet.Items() == 0 && skeleton {

skeleton = false

getHeaders(from)

continue

}

// If no more headers are inbound, notify the content fetchers and return

...

headers := packet.(*headerPack).headers

// If we received a skeleton batch, resolve internals concurrently

// 如果获取了一些header的骨架,开始填充

if skeleton {

filled, proced, err := d.fillHeaderSkeleton(from, headers)

if err != nil {

p.log.Debug("Skeleton chain invalid", "err", err)

return errInvalidChain

}

headers = filled[proced:]

from += uint64(proced)

}

// Insert all the new headers and fetch the next batch

if len(headers) > 0 {

p.log.Trace("Scheduling new headers", "count", len(headers), "from", from)

select {

case d.headerProcCh <- headers:

case <-d.cancelCh:

return errCancelHeaderFetch

}

from += uint64(len(headers))

}

getHeaders(from)

}

}

}

1 先从当前peer获取一组有间隔的headers,称谓骨架

2 然后找其他节点填充headers骨架使连续

3 填充完毕后,headers后写入channel headerProcCh(下面的处理headers中处理),同时把from赋值为新的from,然后进行下一批headers的获取

处理Headers

func (d *Downloader) processHeaders(origin uint64, pivot uint64, td *big.Int) error {

for {

select {

case <-d.cancelCh:

return errCancelHeaderProcessing

case headers := <-d.headerProcCh:

// Otherwise split the chunk of headers into batches and process them

gotHeaders = true

for len(headers) > 0 {

// Terminate if something failed in between processing chunks

select {

case <-d.cancelCh:

return errCancelHeaderProcessing

default:

}

// Select the next chunk of headers to import

limit := maxHeadersProcess

if limit > len(headers) {

limit = len(headers)

}

chunk := headers[:limit]

// In case of header only syncing, validate the chunk immediately

if d.mode == FastSync || d.mode == LightSync {

// Collect the yet unknown headers to mark them as uncertain

unknown := make([]*types.Header, 0, len(headers))

for _, header := range chunk {

if !d.lightchain.HasHeader(header.Hash(), header.Number.Uint64()) {

unknown = append(unknown, header)

}

}

// If we're importing pure headers, verify based on their recentness

frequency := fsHeaderCheckFrequency

if chunk[len(chunk)-1].Number.Uint64()+uint64(fsHeaderForceVerify) > pivot {

frequency = 1

}

if n, err := d.lightchain.InsertHeaderChain(chunk, frequency); err != nil {

// If some headers were inserted, add them too to the rollback list

if n > 0 {

rollback = append(rollback, chunk[:n]...)

}

log.Debug("Invalid header encountered", "number", chunk[n].Number, "hash", chunk[n].Hash(), "err", err)

return errInvalidChain

}

// All verifications passed, store newly found uncertain headers

rollback = append(rollback, unknown...)

if len(rollback) > fsHeaderSafetyNet {

rollback = append(rollback[:0], rollback[len(rollback)-fsHeaderSafetyNet:]...)

}

}

// Unless we're doing light chains, schedule the headers for associated content retrieval

if d.mode == FullSync || d.mode == FastSync {

// If we've reached the allowed number of pending headers, stall a bit

for d.queue.PendingBlocks() >= maxQueuedHeaders || d.queue.PendingReceipts() >= maxQueuedHeaders {

select {

case <-d.cancelCh:

return errCancelHeaderProcessing

case <-time.After(time.Second):

}

}

// Otherwise insert the headers for content retrieval

inserts := d.queue.Schedule(chunk, origin)

if len(inserts) != len(chunk) {

log.Debug("Stale headers")

return errBadPeer

}

}

headers = headers[limit:]

origin += uint64(limit)

}

}

}

}

1 收到headers后,如果是fast或者light sync,每1K个header处理,调用lightchain.InsertHeaderChain()写入header到leveldb数据库

2 然后如果当前是fast或者full sync模式后,d.queue.Schedule(chunk, origin)赋值blockTaskPool/blockTaskQueue和receiptTaskPool/receiptTaskQueue(only fast 模式下),供后续同步body和同步receipt使用

同步Body

上面fetchers中调用fetchBodies同步body

func (d *Downloader) fetchBodies(from uint64) error {

log.Debug("Downloading block bodies", "origin", from)

var (

deliver = func(packet dataPack) (int, error) {

pack := packet.(*bodyPack)

return d.queue.DeliverBodies(pack.peerId, pack.transactions, pack.uncles)

}

expire = func() map[string]int { return d.queue.ExpireBodies(d.requestTTL()) }

fetch = func(p *peerConnection, req *fetchRequest) error { return p.FetchBodies(req) }

capacity = func(p *peerConnection) int { return p.BlockCapacity(d.requestRTT()) }

setIdle = func(p *peerConnection, accepted int) { p.SetBodiesIdle(accepted) }

)

err := d.fetchParts(errCancelBodyFetch, d.bodyCh, deliver, d.bodyWakeCh, expire,

d.queue.PendingBlocks, d.queue.InFlightBlocks, d.queue.ShouldThrottleBlocks, d.queue.ReserveBodies,

d.bodyFetchHook, fetch, d.queue.CancelBodies, capacity, d.peers.BodyIdlePeers, setIdle, "bodies")

log.Debug("Block body download terminated", "err", err)

return err

}fetchParts的处理逻辑:

1 从pool中取出要同步的body

2 调用fetch,也就是调用这里的FetchBodies从节点获取body,发送GetBlockBodiesMsg消息

3 对端节点处理完成后发回消息BlockBodiesMsg,写入channel bodyCh

4 收到channel bodyCh的数据后,调用deliver函数

所以接着看下deliver函数中d.queue.DeliverReceipts的代码

func (q *queue) DeliverBodies(id string, txLists [][]*types.Transaction, uncleLists [][]*types.Header) (int, error) {

q.lock.Lock()

defer q.lock.Unlock()

reconstruct := func(header *types.Header, index int, result *fetchResult) error {

if types.DeriveSha(types.Transactions(txLists[index])) != header.TxHash || types.CalcUncleHash(uncleLists[index]) != header.UncleHash {

return errInvalidBody

}

result.Transactions = txLists[index]

result.Uncles = uncleLists[index]

return nil

}

return q.deliver(id, q.blockTaskPool, q.blockTaskQueue, q.blockPendPool, q.blockDonePool, bodyReqTimer, len(txLists), reconstruct)

}

1 这里用到了处理header时中赋值的blockTaskPool和blockTaskQueue

2 q.deliver中用到了这里定义的reconstruct函数,把body存入resultCache中的Transactions和Uncles

3 下面处理Content的时候会用到这个Transactions和Uncles

同步Receipt

上面fetchers中调用fetchReceipts同步receipts

func (d *Downloader) fetchReceipts(from uint64) error {

log.Debug("Downloading transaction receipts", "origin", from)

var (

deliver = func(packet dataPack) (int, error) {

pack := packet.(*receiptPack)

return d.queue.DeliverReceipts(pack.peerId, pack.receipts)

}

expire = func() map[string]int { return d.queue.ExpireReceipts(d.requestTTL()) }

fetch = func(p *peerConnection, req *fetchRequest) error { return p.FetchReceipts(req) }

capacity = func(p *peerConnection) int { return p.ReceiptCapacity(d.requestRTT()) }

setIdle = func(p *peerConnection, accepted int) { p.SetReceiptsIdle(accepted) }

)

err := d.fetchParts(errCancelReceiptFetch, d.receiptCh, deliver, d.receiptWakeCh, expire,

d.queue.PendingReceipts, d.queue.InFlightReceipts, d.queue.ShouldThrottleReceipts, d.queue.ReserveReceipts,

d.receiptFetchHook, fetch, d.queue.CancelReceipts, capacity, d.peers.ReceiptIdlePeers, setIdle, "receipts")

log.Debug("Transaction receipt download terminated", "err", err)

return err

}

代码跟fetchBodies中的类似:

1 从pool中取出要同步的receipts

2 调用fetch,也就是调用这里的FetchReceipts从节点获取receipts,发送GetReceiptsMsg消息

3 对端节点处理完成后发回消息ReceiptsMsg,写入channel receiptCh

4 收到channel receiptCh的数据后,调用deliver函数

看下deliver函数中的d.queue.DeliverReceipts函数func (q *queue) DeliverReceipts(id string, receiptList [][]*types.Receipt) (int, error) {

q.lock.Lock()

defer q.lock.Unlock()

reconstruct := func(header *types.Header, index int, result *fetchResult) error {

if types.DeriveSha(types.Receipts(receiptList[index])) != header.ReceiptHash {

return errInvalidReceipt

}

result.Receipts = receiptList[index]

return nil

}

return q.deliver(id, q.receiptTaskPool, q.receiptTaskQueue, q.receiptPendPool, q.receiptDonePool, receiptReqTimer, len(receiptList), reconstruct)

}

1 这里用到了处理header中的receiptTaskPool和receiptTaskQueue

2 q.deliver中用到了这里定义的reconstruct函数,把receipts存入resultCache中的Receipts

3 后续处理Content的时候拿到Receipts然后写入本地leveldb

处理Content

这里的作用是把block和receipts写入本地的leveldb

fast sync模式下调用的函数是processFastSyncContent

full sync模式下调用的函数是processFullSyncContent

先看下full模式下processFullSyncContent的代码:

func (d *Downloader) processFullSyncContent() error {

for {

results := d.queue.Results(true)

if len(results) == 0 {

return nil

}

if d.chainInsertHook != nil {

d.chainInsertHook(results)

}

if err := d.importBlockResults(results); err != nil {

return err

}

}

}

1 d.queue.Results()函数从上面的resultCache中拿到保存的内容

2 improtBlockResults()中根据results的header,body组织成block,然后d.blockchain.InsertChain(blocks)写block到本地的leveldb

再看下fast sync的processFastSyncContent函数:func (d *Downloader) processFastSyncContent(latest *types.Header) error {

// Start syncing state of the reported head block. This should get us most of

// the state of the pivot block.

stateSync := d.syncState(latest.Root)

defer stateSync.Cancel()

go func() {

if err := stateSync.Wait(); err != nil && err != errCancelStateFetch {

d.queue.Close() // wake up WaitResults

}

}()

// Figure out the ideal pivot block. Note, that this goalpost may move if the

// sync takes long enough for the chain head to move significantly.

pivot := uint64(0)

if height := latest.Number.Uint64(); height > uint64(fsMinFullBlocks) {

pivot = height - uint64(fsMinFullBlocks)

}

// To cater for moving pivot points, track the pivot block and subsequently

// accumulated download results separately.

var (

oldPivot *fetchResult // Locked in pivot block, might change eventually

oldTail []*fetchResult // Downloaded content after the pivot

)

for {

// Wait for the next batch of downloaded data to be available, and if the pivot

// block became stale, move the goalpost

results := d.queue.Results(oldPivot == nil) // Block if we're not monitoring pivot staleness

if len(results) == 0 {

// If pivot sync is done, stop

if oldPivot == nil {

return stateSync.Cancel()

}

// If sync failed, stop

select {

case <-d.cancelCh:

return stateSync.Cancel()

default:

}

}

if d.chainInsertHook != nil {

d.chainInsertHook(results)

}

if oldPivot != nil {

results = append(append([]*fetchResult{oldPivot}, oldTail...), results...)

}

// Split around the pivot block and process the two sides via fast/full sync

if atomic.LoadInt32(&d.committed) == 0 {

latest = results[len(results)-1].Header

if height := latest.Number.Uint64(); height > pivot+2*uint64(fsMinFullBlocks) {

log.Warn("Pivot became stale, moving", "old", pivot, "new", height-uint64(fsMinFullBlocks))

pivot = height - uint64(fsMinFullBlocks)

}

}

P, beforeP, afterP := splitAroundPivot(pivot, results)

if err := d.commitFastSyncData(beforeP, stateSync); err != nil {

return err

}

if P != nil {

// If new pivot block found, cancel old state retrieval and restart

if oldPivot != P {

stateSync.Cancel()

stateSync = d.syncState(P.Header.Root)

defer stateSync.Cancel()

go func() {

if err := stateSync.Wait(); err != nil && err != errCancelStateFetch {

d.queue.Close() // wake up WaitResults

}

}()

oldPivot = P

}

// Wait for completion, occasionally checking for pivot staleness

select {

case <-stateSync.done:

if stateSync.err != nil {

return stateSync.err

}

if err := d.commitPivotBlock(P); err != nil {

return err

}

oldPivot = nil

case <-time.After(time.Second):

oldTail = afterP

continue

}

}

// Fast sync done, pivot commit done, full import

if err := d.importBlockResults(afterP); err != nil {

return err

}

}

}

其中commitFastSyncData就是组织headers、receipts和body到block然后写入本地leveldb中,调用函数:

d.blockchain.InsertReceiptChain(blocks, receipts),连同receipt一起写入本地

同步结束

再看下上面讲的开始sync的地方:

func (pm *ProtocolManager) synchronise(peer *peer) {

// Run the sync cycle, and disable fast sync if we've went past the pivot block

if err := pm.downloader.Synchronise(peer.id, pHead, pTd, mode); err != nil {

return

}

if atomic.LoadUint32(&pm.fastSync) == 1 {

log.Info("Fast sync complete, auto disabling")

atomic.StoreUint32(&pm.fastSync, 0)

}

atomic.StoreUint32(&pm.acceptTxs, 1) // Mark initial sync done

if head := pm.blockchain.CurrentBlock(); head.NumberU64() > 0 {

// We've completed a sync cycle, notify all peers of new state. This path is

// essential in star-topology networks where a gateway node needs to notify

// all its out-of-date peers of the availability of a new block. This failure

// scenario will most often crop up in private and hackathon networks with

// degenerate connectivity, but it should be healthy for the mainnet too to

// more reliably update peers or the local TD state.

go pm.BroadcastBlock(head, false)

}

}

1 pm.downloader.Synchronise()结束的时候也就sync完成了

2 pm.fastSync置为0,退出fast sync模式了

3 拿出最新的head,广播自己的最新block,开始到正常工作模式。下面再写一篇文章讲解下同步完成之后的工作

总结

1 节点同步的时候默认是fast同步模式

2 代码中用到了大量的go和channel的读写,实现异步通信,这也是go语言的优势所在,很方便

3 fast 同步过程中从td最高的节点获取header、body、receipt和state然后写入本地的leveldb数据库中

4 同步到最新之后退出fast sync模式

不过看完了也没明白为什么fast sync模式要快,同步的数据要少,应该是有些地方漏掉了,再仔细看看