系列

1.【实用工具系列之爬虫】python实现爬取代理IP(防 ‘反爬虫’)

2.【实用工具系列之爬虫】python爬取资讯数据

前言

在大数据架构中,数据收集与数据存储占据了极为重要的地位,可以说是大数据的核心基础。而爬虫技术在这两大核心技术层次中占有了很大的比例。

本文实现一种简单快速的爬虫方法,其中用了代理ip,代理ip的获取可以参考我的这篇文章 【实用工具系列之爬虫】python实现爬取代理IP(防 ‘反爬虫’)。

代理IP

代理IP网站:xicidaili

具体方法详见 【实用工具系列之爬虫】python实现爬取代理IP(防 ‘反爬虫’) 。

输出的代理ip数据保存到 ‘proxy_ip.pkl’

爬取数据代码

本文以爬取小量财经数据为例子。

-

网站

-

地址:http://xxx

-

爬取内容

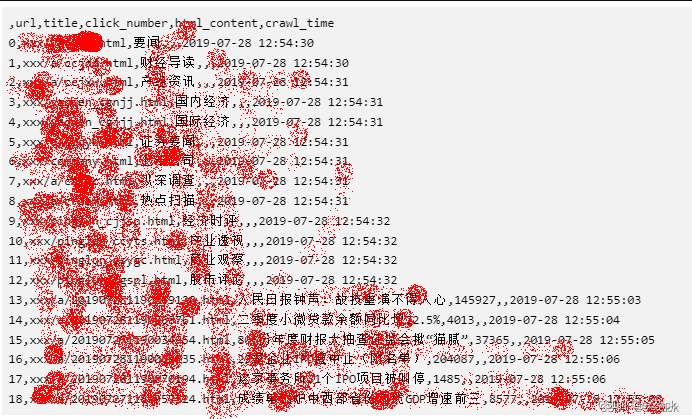

url,title,click_number,html_content -

保存数据为csv,格式如下:

url,title,click_number,html_content,crawl_time

szZack的文章

-

-

实战

- 步骤:

1、爬取首页,提取url作为第一层

2、爬取第一层的url,作为第二层

3、爬取第二层的url,作为第三层

4、结束

- 步骤:

-

环境

- pandas

- python3

- Ubuntu16.04

- requests

- 代码

crawl_finance_news.py

- 1、导入依赖包

import crawl_proxy_ip

import pandas as pd

import re, time, sys, os, random

import telnetlib

import requests

- 2.全局变量

global url_set

url_set = {}

- 3.爬取核心代码

def crawl_finance_news(start_url):

#提取数据格式:url,title,click_number,html_content,crawl_time

proxy_ip_list = crawl_proxy_ip.load_proxy_ip('proxy_ip.pkl')

#爬取首页

start_html = crawl_web_data(start_url, proxy_ip_list)

#open('tmp.txt', 'w').write(start_html)

global url_set

url_set[start_url] = 0

#提取第一层web

web_content_list = extract_web_content(start_html, proxy_ip_list)

#提取第二层web

length = len(web_content_list)

for i in range(length):

if len(web_content_list[i][2]) == 0:

html = crawl_web_data(web_content_list[i][0], proxy_ip_list)

web_content_list += extract_web_content(html, proxy_ip_list)

if len(web_content_list) > 1000:#仅仅是测试

break

#提取第3层web

length = len(web_content_list)

for i in range(length):

if len(web_content_list[i][2]) == 0:

html = crawl_web_data(web_content_list[i][0], proxy_ip_list)

web_content_list += extract_web_content(html, proxy_ip_list)

if len(web_content_list) > 1000:#仅仅是测试

break

#保存数据

columns = ['url', 'title', 'click_number', 'html_content', 'crawl_time']

df = pd.DataFrame(columns = columns, data = web_content_list)

df.to_csv('finance_data.csv', encoding='utf-8')

print('data_len:', len(web_content_list))

def crawl_web_data(url, proxy_ip_list):

proxy_ip_dict = random.choice(proxy_ip_list)

if len(proxy_ip_list) == 0:

return ''

proxy_ip_dict = proxy_ip_list[0]

try:

html = download_by_proxy(url, proxy_ip_dict)

print(url, 'ok')

except Exception as e:

#print('50 e', e)

#删除无效的ip

index = proxy_ip_list.index(proxy_ip_dict)

proxy_ip_list.pop(index)

print('proxy_ip_list', len(proxy_ip_list))

return crawl_web_data(url, proxy_ip_list)

return html

def download_by_proxy(url, proxies):

headers = {'User-Agent':'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/55.0.2883.103 Safari/537.36', 'Connection':'close'}

response = requests.get(url=url, proxies=proxies, headers=headers, timeout=10)

response.encoding = 'utf-8'

html = response.text

return html

def extract_web_content(html, proxy_ip_list):

#提取数据格式:url,title,click_number,html_content, crawl_time

web_content_list = []

html_content = html

html = html.replace(' target ="_blank"', '')

html = html.replace(' ', '')

html = html.replace('\r', '')

html = html.replace('\n', '')

html = html.replace('\t', '')

html = html.replace('"target="_blank', '')

#<h3><a href="xxx/a/123.html" >证监会:拟对证券违法行为提高刑期上限</a></h3>

res = re.search('href="(http[^"><]*finance[^"><]*)">([^<]*)<', html)#finance 必须是金融资讯

while res is not None:

url, title = res.groups()

#print('url, title', url, title)

global url_set

if url in url_set:#防止重复

html = html.replace('href="%s">%s<' %(url, title), '')

res = re.search('href="(http[^"><]*finance[^"><]*)">([^<]*)<', html)

continue

else:

url_set[url] = 0

click_number = get_click_number(url, proxy_ip_list)

#print('click_number', click_number)

now_time = time.strftime("%Y-%m-%d %H:%M:%S", time.localtime())

if len(click_number) == 0:#仅保留正文

html_content = ''

web_content_list.append([url, title, click_number, html_content, now_time])

if len(web_content_list) > 200:#test 每页最多爬取200条

break

html = html.replace('href="%s">%s<' %(url, title), '')

res = re.search('href="(http[^"><]*finance[^"><]*)">([^<]*)<', html)

return web_content_list

[szZack的文章](https://blog.csdn.net/zengNLP?type=blog)

def get_click_number(url, proxy_ip_list):

html = crawl_web_data(url, proxy_ip_list)

#<span class="num ml5">4297</span>

res = re.search('<span class="num ml5">(\d{1,})</span>', html)

if res is not None:

return res.groups()[0]

return ''

- 4.测试

if __name__ == '__main__':

#xx网:xxx/

#用法:python crawl_finance_news.py 'xxx/'

if len(sys.argv) == 2:

crawl_finance_news(sys.argv[1])

-

5.代码说明

1、先爬取代理ip:python crawl_proxy_ip.py

2、爬取财经新闻:python crawl_finance_news.py ‘xxx/’

3、这里仅仅是测试,爬取1000条就结束

4、数据保存到:finance_data.csv

szZack的文章 -

6.爬取内容示意