1. Background

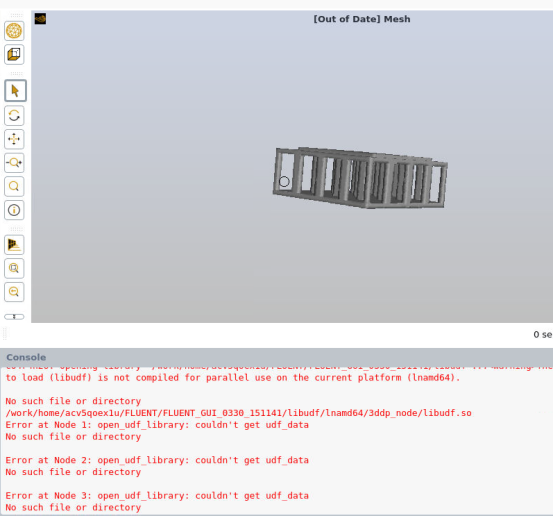

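Loading libudf on a Linux server failed with an error.

The error log is below. It was too long, so I cut out the middle part; the omitted lines are just the other compute nodes reporting the same error. I did not count them, but the cloud job I requested had 128 compute nodes, and my guess is that every node except node 0 failed.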

Error at Node 1: open_udf_library: couldn't get udf_data

No such file or directory

Error at Node 2: open_udf_library: couldn't get udf_data

No such file or directory

Error at Node 3: open_udf_library: couldn't get udf_data

No such file or directory

Error at Node 25: open_udf_library: couldn't get udf_data

No such file or directory

Error at Node 72: open_udf_library: couldn't get udf_data

No such file or directory

Error at Node 26: open_udf_library: couldn't get udf_data

No such file or directory

Error at Node 48: open_udf_library: couldn't get udf_data

No such file or directory

Error at Node 97: open_udf_library: couldn't get udf_data

No such file or directory

Error at Node 51: open_udf_library: couldn't get udf_data

No such file or directory

Error at Node 100: open_udf_library: couldn't get udf_data

No such file or directory

Error at Node 54: open_udf_library: couldn't get udf_data

No such file or directory

Error at Node 101: open_udf_library: couldn't get udf_data

No such file or directory

Error at Node 127: open_udf_library: couldn't get udf_data

No such file or directory
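Since I never counted the failing nodes by hand, here is a minimal sketch of how that could be done, assuming the log has been saved as text and every failure line follows the `Error at Node N: open_udf_library` pattern shown above. The function name `failed_nodes` is hypothetical and is not part of Fluent.

```python
import re

# Matches lines such as:
#   Error at Node 25: open_udf_library: couldn't get udf_data
NODE_ERROR = re.compile(r"Error at Node (\d+): open_udf_library")


def failed_nodes(log_text: str) -> list[int]:
    """Return the sorted, de-duplicated node ids that reported the UDF error."""
    return sorted({int(m.group(1)) for m in NODE_ERROR.finditer(log_text)})


if __name__ == "__main__":
    # A short excerpt in the same format as the log above.
    excerpt = (
        "Error at Node 1: open_udf_library: couldn't get udf_data\n"
        "No such file or directory\n"
        "Error at Node 25: open_udf_library: couldn't get udf_data\n"
        "No such file or directory\n"
    )
    nodes = failed_nodes(excerpt)
    print(f"{len(nodes)} nodes failed: {nodes}")
```

Running this over the full, untruncated log would show whether node 0 really is the only node that loaded the library.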

2. Workaround (not the proper fix)

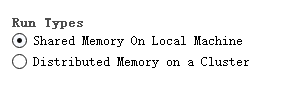

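Use the shared-memory setting in the Fluent launcher.

I had originally selected Distributed Memory on a Cluster, and that is when the error appeared.

After switching to Shared Memory On Local Machine, the error magically disappeared.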

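My guess is that in this mode the nodes communicate through shared memory, so libudf lives on node 0 and the other nodes can reach the UDF directly through node 0's data.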

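I call this approach improper because an engineer told me that shared-memory mode uses only a single host. One host has only 64 cores, while my job requested 128 cores, so CPU utilization drops to 50%.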

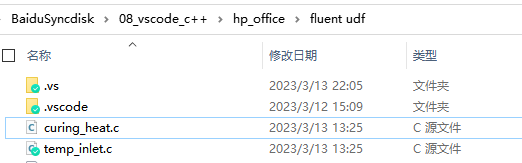

3. The proper fix

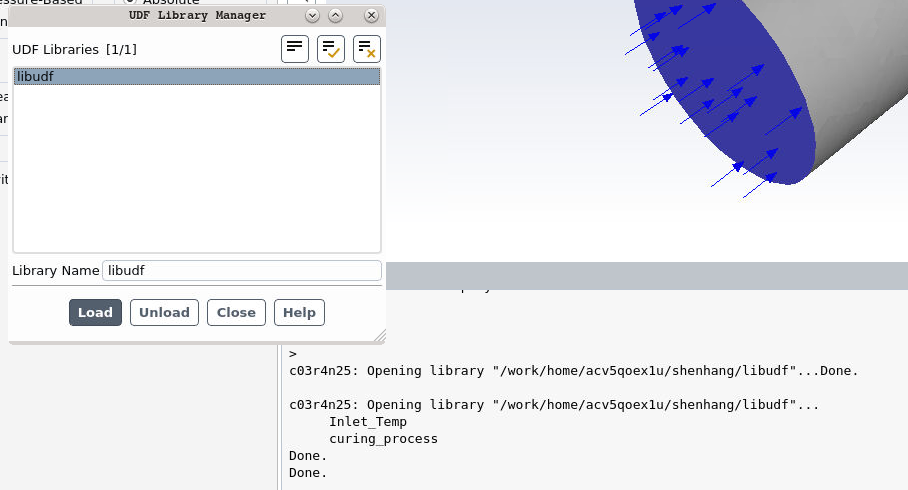

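Remove the spaces from the names of all the .c files, then recompile and load the libudf library again.

This time everything worked without any problems.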
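If there are many source files, the renaming can be scripted. The following is a minimal sketch, assuming all UDF sources sit in one directory; the function name `strip_spaces_from_c_files` and the `dry_run` parameter are hypothetical helpers for illustration, not part of Fluent.

```python
from pathlib import Path


def strip_spaces_from_c_files(directory: str, dry_run: bool = True) -> list[tuple[str, str]]:
    """Remove spaces from the names of *.c files directly inside `directory`.

    Returns (old_name, new_name) pairs. With dry_run=True nothing is renamed,
    so the planned changes can be reviewed first.
    """
    renamed = []
    for src in sorted(Path(directory).glob("*.c")):
        new_name = src.name.replace(" ", "")
        if new_name == src.name:
            continue  # name has no spaces, leave the file alone
        dst = src.with_name(new_name)
        if dst.exists():
            # Never silently overwrite another source file.
            raise FileExistsError(f"refusing to overwrite {dst}")
        if not dry_run:
            src.rename(dst)
        renamed.append((src.name, new_name))
    return renamed


if __name__ == "__main__":
    # Preview the renames in the current directory without touching anything.
    for old, new in strip_spaces_from_c_files(".", dry_run=True):
        print(f"{old!r} -> {new!r}")
```

Call it with `dry_run=False` once the preview looks right. The library still has to be recompiled and reloaded afterwards, as described above.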