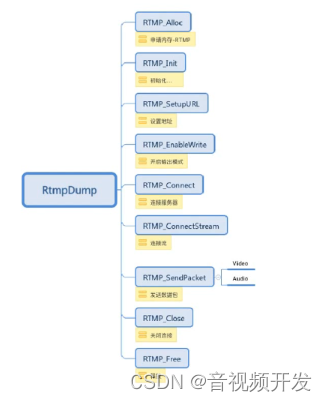

一.RTMP使用流程

rtmp协议的api调用顺序如下:

二.初始化RTMP,连接服务器

有两种构建rtmp服务器的方式我们使用的b站的服务器,要使用b站的服务器,你得认证一下,审核还需要大概1天得时间,除此之外,我们还可以自己构建rtmp服务器,你可以花几十块钱买个阿里云之类的云服务器,预装一个Linux系统,rtmp服务器一般是安装在linux上,他需要配合ngix等代理框架来实现

下面我们把rtmp服务器初始化,此处为了后面对视频的sps等数据保存,我们定义了一个结构体来保存RTMP和sps、pps等数据

//用于保存sps pps 结构体

typedef struct {

RTMP *rtmp;

int8_t *sps;//sps数组

int8_t *pps;//pps数组

int16_t sps_len;//sps数组长度

int16_t pps_len;//pps数组长度

} Live;初始化rtmp服务器,然后对rtmp进行配置,然后链接我们java层传入的rtmp地址,如果我们的rtmp服务器配置正常且开启之后RTMP_ConnectStream函数将会返回成功

extern "C"

JNIEXPORT jboolean JNICALL

Java_com_example_rtmpdemo_mediacodec_ScreenLive_connect(JNIEnv *env, jobject thiz, jstring url_) {

//链接rtmp服务器

int ret = 0;

const char *url = env->GetStringUTFChars(url_, 0);

//不断重试,链接服务器

do {

//初始化live数据

live = (Live *) malloc(sizeof(Live));

//清空live数据

memset(live, 0, sizeof(Live));

//初始化RTMP,申请内存

live->rtmp = RTMP_Alloc();

RTMP_Init(live->rtmp);

//设置超时时间

live->rtmp->Link.timeout = 10;

LOGI("connect %s", url);

//设置地址

if (!(ret = RTMP_SetupURL(live->rtmp, (char *) url))) break;

//设置输出模式

RTMP_EnableWrite(live->rtmp);

LOGI("connect Connect");

//连接

if (!(ret = RTMP_Connect(live->rtmp, 0))) break;

LOGI("connect ConnectStream");

//连接流

if (!(ret = RTMP_ConnectStream(live->rtmp, 0))) break;

LOGI("connect 成功");

} while (0);

//连接失败,释放内存

if (!ret && live) {

free(live);

live = nullptr;

}

env->ReleaseStringUTFChars(url_, url);

return ret;

}私信我,领取最新最全C++音视频学习提升资料,内容包括(C/C++,Linux,FFmpeg ,webRTC ,rtmp ,hls ,rtsp ,ffplay ,srs)

三.发送数据到rtmp服务器

java层我们通过Camera2来获取视频数据,AudioRecord来进行录音,再通过MediaCodec实现编码,cameara2的api调用比较繁琐,可以去我的github项目中查看具体的使用方法,总之就是把采集到视频数剧转换成nv12,使用MediaCodec来将yuv数据编码成h264码流,然后把h264数据通过jni方法传递到我们的Native层,现在我们来看一下Native中收到了数据后的操作:

//发送数据到rtmp服务器端

extern "C"

JNIEXPORT jboolean JNICALL

Java_com_example_rtmpdemo2_mediacodec_ScreenLive_sendData(JNIEnv *env, jobject thiz,

jbyteArray data_, jint len,

jlong tms, jint type) {

// 确保不会取出空的 RTMP 数据包

if (!data_) {

return 0;

}

int ret;

int8_t *data = env->GetByteArrayElements(data_, NULL);

if (type == 1) {//视频

LOGI("开始发送视频 %d", len);

ret = sendVideo(data, len, tms);

} else {//音频

LOGI("开始发送音频 %d", len);

ret = sendAudio(data, len, tms, type);

}

env->ReleaseByteArrayElements(data_, data, 0);

return ret;

}收到视频数据后,需要判断是否是sps、pps数据,如果是,把他们缓存到结构体中,后续的I帧进入时我们再把sps、pps添加到头部,因为直播中任意时刻进入的用户想播放必须先解析sps/pps这些配置数据才行

/**

*发送帧数据到rtmp服务器

*把pps和sps缓存下来,放在每一个I帧前面,

*/

int sendVideo(int8_t *buf, int len, long tms) {

int ret = 0;

//当前时sps,缓存sps、pps 到全局变量

if (buf[4] == 0x67) {

//判断live是否缓存过,liv缓存过不再进行缓存

if (live && (!live->sps || !live->pps)) {

saveSPSPPS(buf, len, live);

}

return ret;

}

//I帧,关键帧,非关键帧直接推送帧数据

if (buf[4] == 0x65) {

LOGI("找到关键帧,先发送sps数据 %d", len);

//先推送sps、pps

RTMPPacket *SPpacket = createSPSPPSPacket(live);

sendPacket(SPpacket);

}

//推送帧数据

RTMPPacket *packet = createVideoPacket(buf, len, tms, live);

ret = sendPacket(packet);

return ret;

}RTMP协议发送的是RTMPPacket数据包,其中sps和pps的数据包为:

/**

* 创建sps和pps的RTMPPacket

*/

RTMPPacket *createSPSPPSPacket(Live *live) {

//sps、pps的packet

int body_size = 16 + live->sps_len + live->pps_len;

LOGI("创建sps Packet ,body_size:%d", body_size);

RTMPPacket *packet = (RTMPPacket *) malloc(sizeof(RTMPPacket));

//初始化packet数据,申请数组

RTMPPacket_Alloc(packet, body_size);

int i = 0;

//固定协议字节标识

packet->m_body[i++] = 0x17;

packet->m_body[i++] = 0x00;

packet->m_body[i++] = 0x00;

packet->m_body[i++] = 0x00;

packet->m_body[i++] = 0x00;

packet->m_body[i++] = 0x01;

//sps配置信息

packet->m_body[i++] = live->sps[1];

packet->m_body[i++] = live->sps[2];

packet->m_body[i++] = live->sps[3];

//固定

packet->m_body[i++] = 0xFF;

packet->m_body[i++] = 0xE1;

//两个字节存储sps长度

packet->m_body[i++] = (live->sps_len >> 8) & 0xFF;

packet->m_body[i++] = (live->sps_len) & 0xFF;

//sps内容写入

memcpy(&packet->m_body[i], live->sps, live->sps_len);

i += live->sps_len;

//pps开始写入

packet->m_body[i++] = 0x01;

//pps长度

packet->m_body[i++] = (live->pps_len >> 8) & 0xFF;

packet->m_body[i++] = (live->pps_len) & 0xFF;

memcpy(&packet->m_body[i], live->pps, live->pps_len);

//设置视频类型

packet->m_packetType = RTMP_PACKET_TYPE_VIDEO;

packet->m_nBodySize = body_size;

//通道值,音视频不能相同

packet->m_nChannel = 0x04;

packet->m_nTimeStamp = 0;

packet->m_hasAbsTimestamp = 0;

packet->m_headerType = RTMP_PACKET_SIZE_LARGE;

packet->m_nInfoField2 = live->rtmp->m_stream_id;

return packet;

}普通帧数据的构建方式,注意就是I帧和非I帧,RTMPPacket的起始字节是不同的

/**

* 创建帧内数据的RTMPPacket,I帧和非关键帧都在此合成RTMPPacket

*/

RTMPPacket *createVideoPacket(int8_t *buf, int len, long tms, Live *live) {

buf += 4;

len -= 4;

RTMPPacket *packet = (RTMPPacket *) malloc(sizeof(RTMPPacket));

int body_size = len + 9;

LOGI("创建帧数据 Packet ,body_size:%d", body_size);

//初始化内部body数组

RTMPPacket_Alloc(packet, body_size);

packet->m_body[0] = 0x27;//非关键帧

if (buf[0] == 0x65) {//关键帧

packet->m_body[0] = 0x17;

}

//固定

packet->m_body[1] = 0x01;

packet->m_body[2] = 0x00;

packet->m_body[3] = 0x00;

packet->m_body[4] = 0x00;

//帧长度,4个字节存储

packet->m_body[5] = (len >> 24) & 0xFF;

packet->m_body[6] = (len >> 16) & 0xFF;

packet->m_body[7] = (len >> 8) & 0xFF;

packet->m_body[8] = (len) & 0xFF;

//copy帧数据

memcpy(&packet->m_body[9], buf, len);

//设置视频类型

packet->m_packetType = RTMP_PACKET_TYPE_VIDEO;

packet->m_nBodySize = body_size;

//通道值,音视频不能相同

packet->m_nChannel = 0x04;

packet->m_nTimeStamp = tms;

packet->m_hasAbsTimestamp = 0;

packet->m_headerType = RTMP_PACKET_SIZE_LARGE;

packet->m_nInfoField2 = live->rtmp->m_stream_id;

return packet;

}发送代码,这样我们就把视频数据发送出去了

//将rtmp包发送出去

int sendPacket(RTMPPacket *packet) {

int r = RTMP_SendPacket(live->rtmp, packet, 0);

RTMPPacket_Free(packet);

free(packet);

LOGI("发送packet: %d ", r);

return r;

}四.发送音频数据到服务器

音频使用AudioRecord来进行录音,同样使用MediaCodec来将pcm原始音频数据编码成AAC格式的数据推送给服务器,具体的代码前期也有写过,具体查看一下demo代码,需要注意的点是rtmp协议在发送音频数据之前要先发一个固定音频格式头:0x12, 0x08

java层在编码开始前先发送音频头:

//发送音频头

RTMPPacket rtmpPacket = new RTMPPacket();

byte[] audioHeadInfo = {0x12, 0x08};

rtmpPacket.setBuffer(audioHeadInfo);

rtmpPacket.setType(AUDIO_HEAD_TYPE);

screenLive.addPacket(rtmpPacket);native中根据java层传递的type进行判断,音频头的RTMPPacket数据第二个字节为为0x00,普通的音频为0x01

//创建audio包

RTMPPacket *createAudioPacket(int8_t *buf, int len, long tms, int type, Live *live) {

int body_size = len + 2;

RTMPPacket *packet = (RTMPPacket *) malloc(sizeof(RTMPPacket));

RTMPPacket_Alloc(packet, body_size);

packet->m_body[0] = 0xAF;

if (type == 2) {//音频头

packet->m_body[1] = 0x00;

} else {//正常数据

packet->m_body[1] = 0x01;

}

memcpy(&packet->m_body[2], buf, len);

//设置音频类型

packet->m_packetType = RTMP_PACKET_TYPE_AUDIO;

packet->m_nBodySize = body_size;

//通道值,音视频不能相同

packet->m_nChannel = 0x05;

packet->m_nTimeStamp = tms;

packet->m_hasAbsTimestamp = 0;

packet->m_headerType = RTMP_PACKET_SIZE_LARGE;

packet->m_nInfoField2 = live->rtmp->m_stream_id;

return packet;

}