Reference:

https://zhuanlan.zhihu.com/p/60286685

1. Create a folder, for example a_docker.

2. Inside a_docker, create three files: requirements.txt, Dockerfile and server.py.

3. requirements.txt:

```text
bert4keras==0.9.9
Flask==1.1.1
```

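4. Dockerfile (the file must be named `Dockerfile`, not `Dockfile`, for `docker build .` to pick it up):

```dockerfile
# Only the last FROM defines the final image, so the unused `FROM ubuntu:16.04` stage was dropped
FROM python:3.6.5

# python-pip/python-dev are Debian's Python 2 packages; the image's own Python 3.6 already ships pip
RUN apt-get update -y && \
    apt-get install -y python-pip python-dev

# We copy just the requirements.txt first to leverage Docker cache
COPY ./requirements.txt /app/requirements.txt

WORKDIR /app

# -y is needed: without it apt-get may stop at its confirmation prompt and abort the non-interactive build
RUN apt-get install -y vim

RUN pip install -r requirements.txt

COPY . /app

CMD python /app/server.py
```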

5. server.py:

```python
from flask import Flask

app = Flask(__name__)


@app.route('/')
def hello_world():
    return 'Hello World!'


if __name__ == '__main__':
    # Listen on all interfaces so the port published with -p is reachable from the host
    app.run("0.0.0.0", 6600, threaded=True)
```

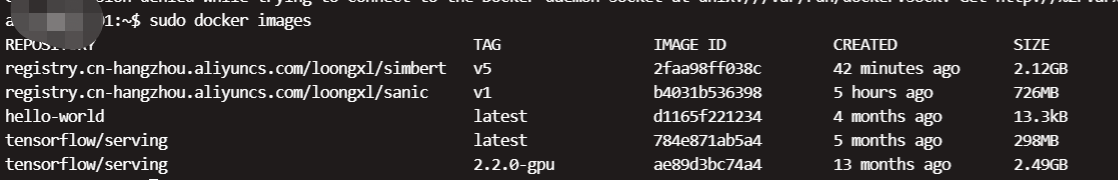

6. Open a command prompt (cmd) in the a_docker folder and build the image:

```shell
docker build -t simbert:v1 .
```

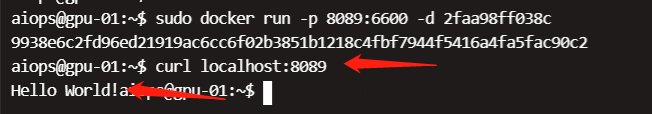

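7. Once the build finishes, run the image. `-p 8089:6600` maps port 8089 on the host to port 6600, where server.py listens inside the container:

```shell
docker run -p 8089:6600 -d <image id of the built image>
```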
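If steps 6 and 7 end up in a script, one option is to assemble the same `docker build` and `docker run` argument lists in Python and pass them to `subprocess`. This is a sketch: `build_cmd`, `run_cmd` and `execute` are hypothetical helper names; the flags, tag and ports are the ones used above.

```python
import subprocess
from typing import List


def build_cmd(tag: str = "simbert:v1", context: str = ".") -> List[str]:
    """Argument list for `docker build -t <tag> <context>` (step 6)."""
    return ["docker", "build", "-t", tag, context]


def run_cmd(image: str, host_port: int = 8089, container_port: int = 6600) -> List[str]:
    """Argument list for `docker run -p <host>:<container> -d <image>` (step 7)."""
    return ["docker", "run", "-p", f"{host_port}:{container_port}", "-d", image]


def execute(cmd: List[str]) -> str:
    """Run a command and return its trimmed stdout (capture_output needs Python 3.7+)."""
    result = subprocess.run(cmd, check=True, capture_output=True, text=True)
    return result.stdout.strip()


if __name__ == "__main__":
    # Only print the commands; call execute(...) on them to actually run Docker
    print(" ".join(build_cmd()))
    print(" ".join(run_cmd("simbert:v1")))
```

Passing an argument list rather than a shell string sidesteps quoting differences between cmd and bash. `docker run -d` prints the new container id, which `execute` returns and which step 8 needs for `docker exec`.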

8. Enter the container and install TensorFlow:

```shell
docker exec -it <container id> /bin/bash
pip install tensorflow==1.14 -i https://pypi.douban.com/simple
```

9. Exit the container, then commit the modified container as an updated image:

```shell
docker commit -m="rm bertcheckpoint" <container id> simbert:v3
```
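Since `docker commit` freezes whatever state the container is in, it may be worth confirming inside the container that the pinned packages really are installed before committing. A minimal sketch with hypothetical helper names; it uses `1.14.0` because that is the version string pip records for `tensorflow==1.14`, and it falls back to `pkg_resources` on the Python 3.6 in the image.

```python
from typing import Dict, Optional

try:  # Python 3.8+
    from importlib.metadata import version, PackageNotFoundError
except ImportError:  # Python 3.6/3.7, e.g. inside python:3.6.5: fall back to setuptools
    from pkg_resources import get_distribution, DistributionNotFound as PackageNotFoundError

    def version(name: str) -> str:
        return get_distribution(name).version


def installed_version(name: str) -> Optional[str]:
    """Installed version of a distribution, or None if it is not installed."""
    try:
        return version(name)
    except PackageNotFoundError:
        return None


def mismatches(pins: Dict[str, str]) -> Dict[str, Optional[str]]:
    """For each pin that is not satisfied exactly, report what is installed (None = missing)."""
    result = {}
    for name, wanted in pins.items():
        found = installed_version(name)
        if found != wanted:
            result[name] = found
    return result


if __name__ == "__main__":
    pins = {"tensorflow": "1.14.0", "bert4keras": "0.9.9", "Flask": "1.1.1"}
    print(mismatches(pins) or "all pinned versions present")
```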

10. Create a repository in Alibaba Cloud Container Registry:

https://cr.console.aliyun.com/cn-hangzhou/instance/repositories

11. Push the image to Alibaba Cloud. The general form of the commands is:

```shell
$ docker login --username=108373****@qq.com registry.cn-hangzhou.aliyuncs.com
$ docker tag [ImageId] registry.cn-hangzhou.aliyuncs.com/loongxl/simbert:[image version]
$ docker push registry.cn-hangzhou.aliyuncs.com/loongxl/simbert:[image version]
```

For example, pushing image 2faa9***c as version v5:

```shell
docker login --username=108373****@qq.com registry.cn-hangzhou.aliyuncs.com
docker tag 2faa9***c registry.cn-hangzhou.aliyuncs.com/loongxl/simbert:v5
docker push registry.cn-hangzhou.aliyuncs.com/loongxl/simbert:v5
```
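The tag and push commands differ only in the version suffix, so a small helper can build them from the namespace (`loongxl`), repository (`simbert`) and version. A sketch: `remote_ref` and `push_cmds` are hypothetical names, and `docker login` is deliberately left as a manual step so the password never passes through a script.

```python
from typing import List

REGISTRY = "registry.cn-hangzhou.aliyuncs.com"


def remote_ref(namespace: str, repo: str, tag: str, registry: str = REGISTRY) -> str:
    """Full image reference: <registry>/<namespace>/<repo>:<tag>."""
    return f"{registry}/{namespace}/{repo}:{tag}"


def push_cmds(image_id: str, namespace: str, repo: str, tag: str) -> List[List[str]]:
    """`docker tag` followed by `docker push`, as in step 11 (log in first)."""
    ref = remote_ref(namespace, repo, tag)
    return [
        ["docker", "tag", image_id, ref],
        ["docker", "push", ref],
    ]
```

The same `remote_ref(...)` string is what `docker pull` expects in step 12.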

12. Pull the image:

```shell
docker pull registry.cn-hangzhou.aliyuncs.com/loongxl/simbert:v5
```

13. Run the image and test it:

```shell
docker run -p 8089:6600 -d <image id>
curl localhost:8089
```
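Right after `docker run -d` the server may not be listening yet (and once server.py loads a BERT model, startup takes longer), so a one-shot `curl` can fail spuriously. A stdlib-only polling sketch; `wait_for_http` is a hypothetical helper, and the URL, timeout and interval are illustrative.

```python
import time
import urllib.request


def wait_for_http(url: str, timeout: float = 60.0, interval: float = 1.0) -> str:
    """Poll `url` until it answers with HTTP 200 and return the body; raise TimeoutError otherwise."""
    deadline = time.monotonic() + timeout
    last_error = None
    while time.monotonic() < deadline:
        try:
            with urllib.request.urlopen(url, timeout=interval) as resp:
                if resp.status == 200:
                    return resp.read().decode("utf-8")
        except OSError as exc:
            # URLError/HTTPError, refused connections and socket timeouts are all OSError subclasses
            last_error = exc
        time.sleep(interval)
    raise TimeoutError(f"{url} not ready after {timeout}s: {last_error}")
```

For the container above this would be `wait_for_http("http://localhost:8089/")`, which should return `Hello World!` once the Flask app is up.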

File mounting

Reference: https://zhuanlan.zhihu.com/p/87593614

Linux:

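The container-side target (`:/loong`) must not be `/app`, because the mount would cover the contents of `/app` (server.py and the rest of the image's files). Inside the container, `/loong` shows the contents of the mounted host directory, here `/tmp/loong`.

```shell
sudo docker run -p 8089:6600 -v /tmp/loong:/loong -d 2faa98ff038c
```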
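The rule above (never mount onto `/app`) is easy to encode in a launch script. A sketch with a hypothetical `volume_arg` helper; it treats `/app` and every parent of it (including `/`) as off-limits, since a bind mount hides whatever the image already had at the target path.

```python
import posixpath


def _shadows(target: str, protected: str) -> bool:
    """True if a mount at `target` would hide `protected` (same path or one of its parents)."""
    if target == "/":
        return True
    return protected == target or protected.startswith(target + "/")


def volume_arg(host_dir: str, container_dir: str, protected: str = "/app") -> str:
    """Value for `docker run -v`, rejecting container paths that would hide the app code."""
    target = posixpath.normpath(container_dir)
    if not posixpath.isabs(target):
        raise ValueError(f"container path must be absolute: {container_dir!r}")
    if _shadows(target, protected):
        raise ValueError(f"mounting on {target} would hide {protected}")
    return f"{host_dir}:{target}"
```

For the Linux command above, `volume_arg("/tmp/loong", "/loong")` yields `/tmp/loong:/loong`.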

Windows:

`/D` stands for drive D:.

```shell
docker run -p 8089:6600 -v /D/t***rt_docker/chinese_simbert_L-6_H-384_A-12:/loong -d 2faa98ff038c
```
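When the host path is typed by hand, converting a normal Windows path into the `/D/...` form used in the command above is mechanical. A sketch, assuming that `/<drive letter>/...` convention; `to_docker_host_path` is a hypothetical name, and UNC paths (`\\server\share`) are rejected rather than guessed at.

```python
import ntpath


def to_docker_host_path(win_path: str) -> str:
    r"""Convert e.g. D:\data\model to /D/data/model for the host side of `-v`."""
    drive, rest = ntpath.splitdrive(win_path)
    if not drive.endswith(":"):
        raise ValueError(f"expected a drive-letter path like D:\\..., got {win_path!r}")
    # Normalize separators and drop empty segments from doubled or trailing slashes
    parts = [p for p in rest.replace("\\", "/").split("/") if p]
    return "/" + "/".join([drive[0].upper()] + parts)
```

For a hypothetical folder `D:\models\chinese_simbert_L-6_H-384_A-12`, this gives `/D/models/chinese_simbert_L-6_H-384_A-12`.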

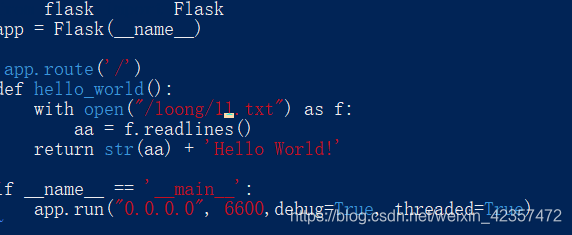

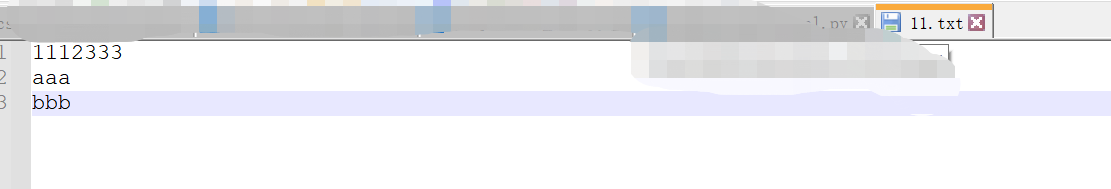

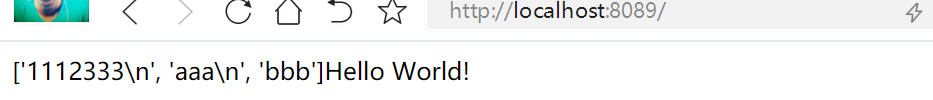

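Modify server.py to load the host file 11.txt (`/loong/11.txt` is the path inside the container; it maps to 11.txt in the mounted host directory).

Edits to 11.txt on the host take effect immediately: refreshing the web page shows the new content.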
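The modified server.py is only shown in the screenshots; the idea behind the live update is that the file is re-read on every request rather than once at startup. The notes use Flask; to keep this sketch dependency-free it swaps in the standard library's `http.server` (plain `HTTPServer`, which also exists on the image's Python 3.6). `make_handler`, `serve` and `MOUNTED_FILE` are hypothetical names.

```python
import http.server
from pathlib import Path

MOUNTED_FILE = Path("/loong/11.txt")  # container-side path of the host's 11.txt


def make_handler(path: Path):
    """Handler class that re-reads `path` on every GET, so host-side edits appear on refresh."""

    class Handler(http.server.BaseHTTPRequestHandler):
        def do_GET(self):
            try:
                body = path.read_text(encoding="utf-8").encode("utf-8")
                status = 200
            except FileNotFoundError:
                body = f"{path} not found (is the volume mounted?)".encode("utf-8")
                status = 404
            self.send_response(status)
            self.send_header("Content-Type", "text/plain; charset=utf-8")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)

    return Handler


def serve(path: Path = MOUNTED_FILE, port: int = 6600) -> None:
    """Listen on all interfaces, like app.run("0.0.0.0", 6600) in server.py (blocks forever)."""
    http.server.HTTPServer(("0.0.0.0", port), make_handler(path)).serve_forever()
```

Inside the container, calling `serve()` and browsing to `localhost:8089` on the host would show the current content of 11.txt on each refresh.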

Updating server.py to deploy the model in a new image

Make the change inside the original container, in server.py:

```python
import os

os.environ['TF_KERAS'] = '1'  # must be set before bert4keras is imported

import numpy as np
import tensorflow as tf
from bert4keras.backend import keras
from bert4keras.models import build_transformer_model
from bert4keras.tokenizers import Tokenizer
from bert4keras.snippets import sequence_padding
from flask import Flask, request, jsonify

maxlen = 32

# Keep the default session and graph so Flask's worker threads predict in the same ones
sess = keras.backend.get_session()
graph = tf.get_default_graph()

# BERT config: absolute paths under the /loong mount
# (WORKDIR is /app, so a relative 'loong/...' would resolve to /app/loong and miss the mount)
config_path = '/loong/bert_config.json'
checkpoint_path = '/loong/bert_model.ckpt'
dict_path = '/loong/vocab.txt'

# Build the tokenizer
tokenizer = Tokenizer(dict_path, do_lower_case=True)

# Build the model and load the checkpoint
bert = build_transformer_model(
    config_path,
    checkpoint_path,
    with_pool='linear',
    application='unilm',
    return_keras_model=False,
)
# Sentence encoder: the first (pooled) output of the model
encoder = keras.models.Model(bert.model.inputs, bert.model.outputs[0])

app = Flask(__name__)


@app.route('/', methods=['GET', 'POST'])
def build_plot():
    query = request.args.get('query')
    if not query:
        return jsonify({"error": "missing 'query' parameter"}), 400
    token_ids = tokenizer.encode(query, maxlen=maxlen)[0]
    # The encoder expects a batch, shape (1, seq_len)
    a_token_ids = sequence_padding([token_ids])
    # Run prediction in the default session and graph captured above
    with graph.as_default():
        with sess.as_default():
            a_vecs = encoder.predict([a_token_ids, np.zeros_like(a_token_ids)],
                                     verbose=True)
    # L2-normalize each row
    a_vecs = a_vecs / (a_vecs**2).sum(axis=1, keepdims=True)**0.5
    # NumPy arrays are not JSON serializable, so convert to nested lists
    return jsonify({"hah": a_vecs.tolist()})


if __name__ == '__main__':
    app.run("0.0.0.0", 6600, debug=True, threaded=True)
```
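Because the endpoint L2-normalizes its vectors, comparing two sentences comes down to a dot product. A minimal client sketch, assuming the endpoint returns the vector as a JSON list under the key `"hah"` (the key used in server.py), either nested as a batch of one or flat; `query_url`, `fetch_vector` and `cosine` are hypothetical names.

```python
import json
import urllib.parse
import urllib.request
from typing import List, Sequence


def query_url(base_url: str, query: str) -> str:
    """GET URL for the / endpoint; urlencode percent-encodes Chinese text as UTF-8."""
    return base_url.rstrip("/") + "/?" + urllib.parse.urlencode({"query": query})


def fetch_vector(base_url: str, query: str) -> List[float]:
    """Fetch one sentence vector from a body shaped like {"hah": [[...]]} or {"hah": [...]}."""
    with urllib.request.urlopen(query_url(base_url, query)) as resp:
        vec = json.loads(resp.read().decode("utf-8"))["hah"]
    return vec[0] if vec and isinstance(vec[0], list) else vec


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; for already L2-normalized vectors this equals the plain dot product."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sum(x * x for x in a) ** 0.5
    norm_b = sum(y * y for y in b) ** 0.5
    return dot / (norm_a * norm_b)
```

Against the container started below on port 8099, usage would look like `cosine(fetch_vector("http://localhost:8099", "今天天气怎么样"), fetch_vector("http://localhost:8099", "今天天气如何"))`; values closer to 1 mean more similar sentences.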

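Commit the updated container as a new image and run it with the model directory mounted at /loong:

```shell
docker commit -m="rm bertcheckpoint" 019728c1e196 simbert:v10
docker run -p 8099:6600 -v /D/t***-12_test:/loong -d 950f56fe6556
```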