Background

These are my notes on installing and deploying Hadoop 2.5.0 on CentOS 7.

Steps

1. Create a cdh folder and extract the Hadoop archive into it

#mkdir cdh

#tar -zxvf hadoop-2.5.0-cdh5.3.6.tar.gz -C cdh

2. Change into the etc/hadoop directory under the extracted Hadoop directory and edit seven files: hadoop-env.sh, mapred-env.sh, mapred-site.xml.template, hdfs-site.xml, yarn-site.xml, core-site.xml and slaves.
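Before editing, it can help to confirm that all seven files are actually present in etc/hadoop. The snippet below is only a sketch: missing_conf_files is a helper name I made up for illustration, and it just checks for the file names listed above.

```shell
#!/bin/sh
# The seven files this walkthrough edits (names taken from the list above).
CONF_FILES="hadoop-env.sh mapred-env.sh mapred-site.xml.template hdfs-site.xml yarn-site.xml core-site.xml slaves"

# Hypothetical helper: print every expected file that is missing
# from the configuration directory given as $1.
missing_conf_files() {
    for f in $CONF_FILES; do
        [ -f "$1/$f" ] || echo "$f"
    done
}

# Usage, from the extracted Hadoop directory:
#   missing_conf_files etc/hadoop
```

No output means every file was found.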

In the two env files, only the JAVA_HOME setting near the top of the file needs to change (on my CentOS machine Java 1.8 is installed in /home/szc/jdk8_64, so JAVA_HOME is set to /home/szc/jdk8_64)

export JAVA_HOME=/home/szc/jdk8_64

Edit mapred-site.xml.template as follows, then rename it to mapred-site.xml

<configuration>
    <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
    </property>
    <property>
        <name>mapreduce.jobhistory.address</name>
        <value>192.168.57.141:10020</value>
    </property>
    <property>
        <name>mapreduce.jobhistory.webapp.address</name>
        <value>192.168.57.141:19888</value>
    </property>
</configuration>

hdfs-site.xml

<configuration>
    <property>
        <name>dfs.replication</name>
        <value>1</value>
    </property>
    <property>
        <name>dfs.namenode.secondary.http-address</name>
        <value>192.168.57.141:50091</value>
    </property>
</configuration>

yarn-site.xml

<configuration>
    <!-- Site specific YARN configuration properties -->
    <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
    </property>
    <property>
        <name>yarn.resourcemanager.hostname</name>
        <value>192.168.57.141</value>
    </property>
    <property>
        <name>yarn.log-aggregation-enable</name>
        <value>true</value>
    </property>
    <property>
        <name>yarn.log-aggregation.retain-seconds</name>
        <value>604800</value>
    </property>
</configuration>

core-site.xml. Note: replace szc in the two hadoop.proxyuser.szc.* property names (and in the hadoop.tmp.dir path) with your own user name, and create the directory that hadoop.tmp.dir points to yourself

<configuration>
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://192.168.57.141:8020</value>
    </property>
    <property>
        <name>hadoop.tmp.dir</name>
        <value>/home/szc/cdh/hadoop-2.5.0-cdh5.3.6/data/tmp</value>
    </property>
    <property>
        <name>hadoop.proxyuser.szc.hosts</name>
        <value>*</value>
    </property>
    <property>
        <name>hadoop.proxyuser.szc.groups</name>
        <value>*</value>
    </property>
    <property>
        <name>hadoop.proxyuser.root.hosts</name>
        <value>*</value>
    </property>
    <property>
        <name>hadoop.proxyuser.root.groups</name>
        <value>*</value>
    </property>
</configuration>

slaves

192.168.57.141

Every IP address above is the CentOS machine's own IP

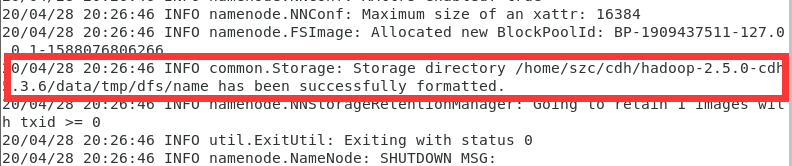
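Since the directory behind hadoop.tmp.dir has to be created by hand, here is a small sketch that reads the value out of core-site.xml and creates it. The helper names get_prop and ensure_tmp_dir are made up for illustration, and the awk parsing assumes each name and value element sits on its own line, as in the files above; it is not a general XML parser.

```shell
#!/bin/sh
# Hypothetical helpers, not part of Hadoop.

# Print the <value> of property $2 from the Hadoop site file $1.
# Assumes <name>...</name> and <value>...</value> are each on one line.
get_prop() {
    awk -v key="$2" '
        /<name>/  { n = $0; gsub(/.*<name>|<\/name>.*/, "", n); found = (n == key) }
        /<value>/ && found { v = $0; gsub(/.*<value>|<\/value>.*/, "", v); print v; exit }
    ' "$1"
}

# Create the directory that hadoop.tmp.dir in the file $1 points to.
ensure_tmp_dir() {
    dir=$(get_prop "$1" hadoop.tmp.dir)
    [ -n "$dir" ] || { echo "hadoop.tmp.dir not set in $1" >&2; return 1; }
    mkdir -p "$dir"
}

# Usage, from the etc/hadoop directory, as the user that will run Hadoop:
#   ensure_tmp_dir core-site.xml
```

Reading the path from the file rather than typing it a second time avoids the two getting out of sync after szc is replaced with another user name.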

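3. Format HDFS

Change into the bin directory under the extracted Hadoop directory, then run the command below (use ./hdfs if the bin directory is not on your PATH)

#hdfs namenode -format

A screenshot taken after it completes is shown below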

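4. Start the processes

Change into the sbin directory under the extracted Hadoop directory, run start-dfs.sh and start-yarn.sh to start HDFS and YARN, then run the following command to start the history server

./mr-jobhistory-daemon.sh start historyserver

5. View the cluster UI from a browser on Windows

First open port 50070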

[root@localhost sbin]# firewall-cmd --add-port=50070/tcp --permanent

success

[root@localhost sbin]# firewall-cmd --reload

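success

Then enter the CentOS IP followed by :50070 in the Windows browser; after you press Enter, the following page is displayed

At this point, the Hadoop deployment is complete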
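As a quick sanity check that everything from step 4 is really up, the jps tool that ships with the JDK lists running Java processes. The sketch below compares its output against the daemons I would expect on this single node; missing_daemons is a made-up helper name, and the expected list assumes all services run on this one machine as configured above.

```shell
#!/bin/sh
# Daemons expected after start-dfs.sh, start-yarn.sh and the history server
# have been started on a single node (an assumption based on the setup above).
EXPECTED="NameNode DataNode SecondaryNameNode ResourceManager NodeManager JobHistoryServer"

# Hypothetical helper: reads `jps` output on stdin ("<pid> <ClassName>" per
# line) and prints every expected daemon that does not appear in it.
missing_daemons() {
    running=$(awk '{ print $2 }')
    for d in $EXPECTED; do
        # -x matches whole lines, so NameNode does not match SecondaryNameNode
        printf '%s\n' "$running" | grep -qx "$d" || echo "$d"
    done
}

# Usage:
#   jps | missing_daemons
```

No output means all six daemons were found; any name that is printed is worth looking up in the logs directory under the Hadoop install.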

Conclusion

That's all, thanks