
1. System initialization

Save the following script as init.sh. It adds host entries, installs common tools, disables firewalld, SELinux, swap and postfix, tunes kernel parameters and journald, and loads the IPVS modules.

#!/bin/bash

init_hosts(){
cat >> /etc/hosts << EOF
192.168.231.3 master
192.168.231.4 node1
EOF
echo -e "\033[32m [host resolution] ==> OK \033[0m"
}

init_base(){
yum install -y conntrack ntpdate ntp ipvsadm ipset jq iptables curl sysstat libseccomp wget vim git net-tools dos2unix lsof tcpdump lrzsz telnet bash-completion.noarch conntrack-tools
echo -e "\033[32m [common tools installed] ==> OK \033[0m"
}

init_security() {
# Disable firewalld
systemctl stop firewalld
systemctl disable firewalld &>/dev/null
# Switch to iptables-services and flush all rules
yum install iptables-services -y
systemctl start iptables
systemctl enable iptables
iptables -F
service iptables save
# Disable SELinux now and after reboot
setenforce 0
sed -i '/^SELINUX=/ s/enforcing/disabled/' /etc/selinux/config
# Turn off swap for the current boot
swapoff -a
# To turn swap off permanently, the swap line in /etc/fstab must be commented out; this sed does that
sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
echo "vm.swappiness = 0" >> /etc/sysctl.conf
sysctl -p
echo -e "\033[32m [security settings] ==> OK \033[0m"
}

# Kernel parameters for Kubernetes
cat > /etc/sysctl.d/kubernetes.conf << EOF
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward=1
vm.swappiness=0
vm.overcommit_memory=1
vm.panic_on_oom=0
fs.inotify.max_user_watches=102400
fs.inotify.max_user_instances=1024
fs.file-max=262144
fs.nr_open=52706963
net.ipv6.conf.all.disable_ipv6=1
net.netfilter.nf_conntrack_max=300000
EOF
modprobe br_netfilter
sysctl -p /etc/sysctl.d/kubernetes.conf
echo -e "\033[32m [system tuning] ==> OK \033[0m"

init_stop_postfix(){
systemctl stop postfix.service
systemctl disable postfix.service
echo -e "\033[32m [mail service disabled] ==> OK \033[0m"
}

init_hosts
init_base
init_security
init_stop_postfix

# Persistent, size-limited journald logging
mkdir -p /var/log/journal
mkdir -p /etc/systemd/journald.conf.d
cat > /etc/systemd/journald.conf.d/99-prophet.conf << EOF
[Journal]
# Persist logs to disk
Storage=persistent
# Compress archived logs
Compress=yes
SyncIntervalSec=5m
RateLimitInterval=30s
RateLimitBurst=1000
# Use at most 10G in total
SystemMaxUse=10G
# Each log file at most 200M
SystemMaxFileSize=200M
# Keep logs for 2 weeks
MaxRetentionSec=2week
# Do not forward logs to syslog
ForwardToSyslog=no
EOF
systemctl restart systemd-journald
echo -e "\033[32m [journal tuning] ==> OK \033[0m"

# Load the IPVS modules now (temporary, until reboot)
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
# nf_conntrack_ipv4 exists on the CentOS 7 3.10 kernel; from kernel 4.19 on it is merged into nf_conntrack
modprobe -- nf_conntrack_ipv4
# Load them on every boot (permanent)
cat > /etc/sysconfig/modules/ipvs.modules <<EOF
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF
chmod 755 /etc/sysconfig/modules/ipvs.modules
bash /etc/sysconfig/modules/ipvs.modules
echo -e "\033[32m [ipvs loaded] ==> OK \033[0m"

Make the script executable and run it on every node:

chmod +x init.sh
./init.sh
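kubeadm's preflight checks fail while swap is still on, and the IPVS modules loaded by the script should show up in /proc/modules. The helpers below are a hypothetical sketch, not part of the original init.sh: they read /proc/swaps and /proc/modules (both paths can be overridden) and leave nf_conntrack_ipv4 out because its name depends on the kernel version.

```bash
# Hypothetical preflight helpers (not part of the original init.sh).

# Print the number of active swap devices.
# /proc/swaps has one header line plus one line per active device.
swap_active_count() {
  local swaps_file=${1:-/proc/swaps}
  awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }' "$swaps_file"
}

# Print each required IPVS module that is NOT currently loaded, one per line.
missing_ipvs_modules() {
  local modules_file=${1:-/proc/modules}
  local m
  for m in ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh; do
    # The first field of /proc/modules is the module name
    awk -v mod="$m" '$1 == mod { found = 1 } END { exit (found ? 0 : 1) }' "$modules_file" \
      || printf '%s\n' "$m"
  done
}

# Usage on a node after ./init.sh:
#   [ "$(swap_active_count)" -eq 0 ] || echo "swap is still on"
#   missing=$(missing_ipvs_modules); [ -z "$missing" ] || echo "not loaded: $missing"
```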

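Set the hostname, running the matching command on each machine:

hostnamectl set-hostname master
hostnamectl set-hostname node1
hostnamectl set-hostname node2

init_hosts only adds master and node1 to /etc/hosts; if you use node2, add its address there as well.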
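Each machine's new hostname should resolve through the entries init_hosts wrote to /etc/hosts. A minimal check, written as a hypothetical helper named hosts_has_entry, assuming the usual "IP name [aliases]" layout of /etc/hosts:

```bash
# Hypothetical helper: succeed if NAME appears as a hostname or alias
# on a non-comment line of the hosts file.
hosts_has_entry() {
  local name=$1 hosts_file=${2:-/etc/hosts}
  awk -v n="$name" '
    /^[ \t]*#/ { next }                                  # skip comment lines
    { for (i = 2; i <= NF; i++) if ($i == n) found = 1 } # fields after the IP are names
    END { exit (found ? 0 : 1) }' "$hosts_file"
}

# Usage on each node:
#   hosts_has_entry "$(hostname)" || echo "add $(hostname) to /etc/hosts"
```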

2. Install Docker

yum install yum-utils -y
yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
yum -y install docker-ce-19.03.9-3.el7
mkdir -p /etc/systemd/system/docker.service.d
# /etc/docker may not exist yet right after installation
mkdir -p /etc/docker

cat > /etc/docker/daemon.json << EOF
{
  "insecure-registries": ["harbor.witcomm.bhjf"],
  "registry-mirrors": [
    "https://kfwkfulq.mirror.aliyuncs.com",
    "https://2lqq34jg.mirror.aliyuncs.com",
    "https://pee6w651.mirror.aliyuncs.com",
    "https://registry.docker-cn.com",
    "http://hub-mirror.c.163.com"
  ],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  }
}
EOF

systemctl daemon-reload
systemctl restart docker
systemctl enable docker
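kubelet and Docker have to use the same cgroup driver, and kubeadm recommends systemd. That is why daemon.json sets native.cgroupdriver=systemd. The helper below (the name configured_cgroup_driver is hypothetical) reads the driver from daemon.json and falls back to Docker's default, cgroupfs, when none is set. Compare its result with what the running daemon reports.

```bash
# Hypothetical helper: print the cgroup driver configured in daemon.json.
configured_cgroup_driver() {
  local daemon_json=${1:-/etc/docker/daemon.json} driver
  # Extract <driver> from "native.cgroupdriver=<driver>" inside exec-opts, if present
  driver=$(sed -n 's/.*native\.cgroupdriver=\([a-z]*\).*/\1/p' "$daemon_json" 2>/dev/null | head -n 1) || true
  # dockerd falls back to cgroupfs when exec-opts does not choose a driver
  printf '%s\n' "${driver:-cgroupfs}"
}

# Usage after restarting Docker; both lines should print "systemd":
#   configured_cgroup_driver
#   docker info --format '{{.CgroupDriver}}'
```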

3. Add the kubeadm package repository

cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

yum makecache fast
yum install kubeadm-1.18.3 kubectl-1.18.3 kubelet-1.18.3 -y
systemctl enable kubelet.service

For version 1.17.2, use this repository definition and these packages instead:

cat > /etc/yum.repos.d/kubernetes.repo <<EOF
[kubernetes]
name=Kubernetes repo
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
enabled=1
EOF

yum install -y kubelet-1.17.2 kubeadm-1.17.2 kubectl-1.17.2
systemctl enable kubelet && systemctl start kubelet
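Both install commands pin kubeadm, kubelet and kubectl to the same version. If you script the install, a small helper can keep the three pins in sync from a single version string. The helper, named k8s_packages here, is hypothetical.

```bash
# Hypothetical helper: print version-pinned package names, one per line,
# so kubeadm, kubelet and kubectl always come from the same release.
k8s_packages() {
  local version=$1 pkg
  if ! [[ $version =~ ^[0-9]+\.[0-9]+\.[0-9]+$ ]]; then
    echo "usage: k8s_packages X.Y.Z" >&2
    return 1
  fi
  for pkg in kubeadm kubelet kubectl; do
    printf '%s-%s\n' "$pkg" "$version"
  done
}

# Usage:
#   yum install -y $(k8s_packages 1.18.3)
#   systemctl enable kubelet
```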

4. Initialize the master node

Run this on the master only, replacing 192.168.99.34 with the master's own IP. For v1.18.3:

kubeadm init \
  --apiserver-advertise-address=192.168.99.34 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.18.3 \
  --service-cidr=10.2.0.0/16 \
  --pod-network-cidr=10.244.0.0/16

For v1.17.2:

kubeadm init \
  --apiserver-advertise-address=192.168.99.34 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.17.2 \
  --pod-network-cidr=10.244.0.0/16

Once kubeadm init succeeds, set up kubectl for the current user:

mkdir -p $HOME/.kube
cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
chown $(id -u):$(id -g) $HOME/.kube/config
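The same v1.18.3 settings can also live in a kubeadm configuration file instead of command-line flags. This sketch assumes the kubeadm.k8s.io/v1beta2 config API, which kubeadm 1.15 through 1.21 accept. write_kubeadm_config is a hypothetical helper, and it always sets the 10.2.0.0/16 service subnet from the v1.18.3 command. podSubnet has to stay 10.244.0.0/16 to match the Network value in flannel's net-conf.json.

```bash
# Hypothetical helper: write a kubeadm config equivalent to the v1.18.3 flags.
# Arguments: output file, advertise address, Kubernetes version.
write_kubeadm_config() {
  local out=$1 advertise=${2:-192.168.99.34} version=${3:-v1.18.3}
  cat > "$out" <<EOF
apiVersion: kubeadm.k8s.io/v1beta2
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: ${advertise}
  bindPort: 6443
---
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: ${version}
imageRepository: registry.aliyuncs.com/google_containers
networking:
  serviceSubnet: 10.2.0.0/16
  podSubnet: 10.244.0.0/16
EOF
}

# Usage on the master:
#   write_kubeadm_config kubeadm-config.yaml
#   kubeadm config images pull --config kubeadm-config.yaml
#   kubeadm init --config kubeadm-config.yaml
```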

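5. Join the worker nodes

The kubeadm join command printed at the end of kubeadm init is what the worker nodes use to join the cluster. Run it on each worker. The token it contains is valid for 24 hours by default.

kubeadm join 192.168.99.34:6443 --token gp024k.zemwtue9qnn9ghps \
  --discovery-token-ca-cert-hash sha256:43457cba87e58a5d14c1181643091d1dfc256e23a4fb7b5a006643fea1ea9471

Verify on the master. The nodes report NotReady until a pod network is configured. Once the network is in place, STATUS changes from NotReady to Ready.

kubectl get node
NAME           STATUS     ROLES    AGE     VERSION
k8smaster180   NotReady   master   6m30s   v1.18.3
k8snode181     NotReady   <none>   2m42s   v1.18.3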
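If the token has expired by the time a node joins, `kubeadm token create --print-join-command` on the master prints a fresh join command. The CA hash can also be recomputed by hand. The sketch below wraps the openssl pipeline from the kubeadm documentation in a hypothetical helper, ca_cert_hash, and assumes the CA has an RSA key, which is what kubeadm generates by default.

```bash
# Hypothetical helper: recompute the --discovery-token-ca-cert-hash value
# (SHA-256 of the CA's DER-encoded public key) from the cluster CA certificate.
ca_cert_hash() {
  local ca_crt=${1:-/etc/kubernetes/pki/ca.crt}
  openssl x509 -pubkey -noout -in "$ca_crt" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Usage on the master once the original token has expired:
#   kubeadm token create --print-join-command   # new token plus the full join command
#   echo "sha256:$(ca_cert_hash)"                # hash only
```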

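6. Configure the flannel network

The following flannel configuration works with Kubernetes v1.7+. The pods can take a few minutes to start, mainly because the image downloads slowly. You can also point the images at a different registry.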

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

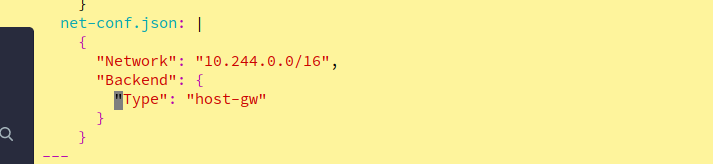

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
  - configMap
  - secret
  - emptyDir
  - hostPath
  allowedHostPaths:
  - pathPrefix: "/etc/cni/net.d"
  - pathPrefix: "/etc/kube-flannel"
  - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN', 'NET_RAW']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups: ['extensions']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames: ['psp.flannel.unprivileged']
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.13.1-rc1
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.13.1-rc1
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg

kubectl apply -f kube-flannel.yml

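Important:

flannel supports three backend modes.

vxlan: overlay mode. Pod traffic is encapsulated in VXLAN (UDP) packets, so it also works when the nodes sit on different, routed subnets.

host-gw: pod traffic is routed directly through each host's routing table with no encapsulation. All nodes must be on the same layer-2 network.

vxlan with DirectRouting ("Backend": {"Type": "vxlan", "DirectRouting": true}): adaptive mode. It uses direct routes, as host-gw does, between nodes on the same subnet and falls back to VXLAN for nodes on other subnets.

To change the mode, edit the Backend section of net-conf.json in kube-flannel.yml (vim kube-flannel.yml).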
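Switching modes only means changing the Backend object in net-conf.json. Below is a sketch of doing that without an editor. It assumes the unmodified upstream manifest, in which the backend line reads "Type": "vxlan". set_flannel_backend and its mode names are hypothetical.

```bash
# Hypothetical helper: rewrite the Backend line of net-conf.json in kube-flannel.yml.
# Modes: vxlan | host-gw | directrouting. A .bak copy of the manifest is kept.
set_flannel_backend() {
  local manifest=$1 mode=$2 backend
  case "$mode" in
    vxlan)         backend='"Type": "vxlan"' ;;
    host-gw)       backend='"Type": "host-gw"' ;;
    directrouting) backend='"Type": "vxlan", "DirectRouting": true' ;;
    *) echo "unknown mode: $mode" >&2; return 1 ;;
  esac
  # Refuse to run on a manifest that no longer has the upstream vxlan line
  grep -q '"Type": "vxlan"' "$manifest" || { echo "backend line not found" >&2; return 1; }
  sed -i.bak "s/\"Type\": \"vxlan\"/${backend}/" "$manifest"
}

# Usage:
#   set_flannel_backend kube-flannel.yml host-gw && kubectl apply -f kube-flannel.yml
```

flanneld reads net-conf.json when it starts. On a cluster that is already running, the flannel pods will likely need to be recreated before a backend change takes effect.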

Verify that flannel is running:

ps -ef|grep flannel|grep -v grep
root 20102 20085 0 17:33 ? 00:00:00 /opt/bin/flanneld --ip-masq --kube-subnet-mgr

Install kubectl command completion on the master node:

yum install -y bash-completion
source /usr/share/bash-completion/bash_completion
source <(kubectl completion bash)
echo "source <(kubectl completion bash)" >> ~/.bashrc

7. Certificate upload (upload-certs) error

kubeadm init phase upload-certs --experimental-upload-certs
unknown flag: --experimental-upload-certs
To see the stack trace of this error execute with --v=5 or higher

Cause: the flag was renamed in newer kubeadm versions.

Fix: run kubeadm init phase upload-certs --upload-certs instead.
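Scripts that must work with both older and newer kubeadm releases can choose the flag by version. This is a sketch that assumes the rename took effect in kubeadm v1.15. upload_certs_flag is a hypothetical name.

```bash
# Hypothetical helper: print the upload-certs flag for a kubeadm version.
# Assumption: --experimental-upload-certs became --upload-certs in v1.15.
upload_certs_flag() {
  local version=${1#v}   # accept "v1.18.3" or "1.18.3"
  local oldest
  # sort -V orders version strings; the smaller one comes first
  oldest=$(printf '%s\n%s\n' "$version" 1.15.0 | sort -V | head -n 1)
  if [ "$oldest" = "$version" ] && [ "$version" != 1.15.0 ]; then
    printf '%s\n' --experimental-upload-certs
  else
    printf '%s\n' --upload-certs
  fi
}

# Usage on the master:
#   kubeadm init phase upload-certs $(upload_certs_flag "$(kubeadm version -o short)")
```

Additional control-plane nodes use the certificate key printed by this command with kubeadm join --control-plane --certificate-key. kubeadm deletes the uploaded certificates after two hours.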

8. Deploy a test workload to verify the cluster

vim nginx-ds.yaml

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: my-nginx
spec:
  selector:
    matchLabels:
      name: my-nginx
  template:
    metadata:
      labels:
        name: my-nginx
    spec:
      containers:
      - name: my-nginx
        image: nginx

kubectl apply -f nginx-ds.yaml
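my-nginx is a DaemonSet, so the cluster passes this test when every node the DaemonSet targets runs one ready pod. The sketch below checks that and assumes kubectl is already configured on the master. ds_ready is a hypothetical helper that reads status.desiredNumberScheduled and status.numberReady.

```bash
# Hypothetical helper: succeed when a DaemonSet has a ready pod on every node it targets.
ds_ready() {
  local name=${1:-my-nginx} ns=${2:-default} counts desired ready
  counts=$(kubectl -n "$ns" get daemonset "$name" \
    -o jsonpath='{.status.desiredNumberScheduled} {.status.numberReady}') || return 1
  read -r desired ready <<< "$counts"
  echo "desired=${desired:-0} ready=${ready:-0}"
  [ -n "$desired" ] && [ "$desired" -gt 0 ] && [ "$desired" = "$ready" ]
}

# Usage: poll for up to about two minutes
#   for i in $(seq 24); do ds_ready my-nginx && break; sleep 5; done
```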

9. Deploy the Kubernetes Dashboard

Download the manifest for either v2.0.0-beta6 or v2.0.3:

wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-beta6/aio/deploy/recommended.yaml
wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.3/aio/deploy/recommended.yaml

vim recommended.yaml

# Optional: change the image to a mirror reachable from your network, then adjust anything else as needed
# Add a NodePort to the kubernetes-dashboard Service
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort        # add this line
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30000   # add this line
  selector:
    k8s-app: kubernetes-dashboard

kubectl apply -f recommended.yaml
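nodePort 30000 is accepted only because it falls inside the API server's default --service-node-port-range of 30000-32767. A hypothetical helper checks a chosen port against that range. Override the bounds if your cluster uses a custom range.

```bash
# Hypothetical helper: succeed if PORT lies within the NodePort range (default 30000-32767).
valid_nodeport() {
  local port=$1 min=${2:-30000} max=${3:-32767}
  [[ $port =~ ^[0-9]+$ ]] && [ "$port" -ge "$min" ] && [ "$port" -le "$max" ]
}

# Usage after kubectl apply, checking the port the Service actually received:
#   p=$(kubectl -n kubernetes-dashboard get svc kubernetes-dashboard -o jsonpath='{.spec.ports[0].nodePort}')
#   valid_nodeport "$p" && echo "Dashboard on port $p"
```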

Then open https://172.16.1.180:30000/ directly. With a NodePort, any node's IP works.

Create an admin service account, bind it to cluster-admin, and use its token to log in to the Dashboard:

kubectl create serviceaccount dashboard-admin -n kube-system
kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

Get the token:

kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')
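`kubectl describe` prints the token together with the rest of the secret. These Kubernetes versions predate 1.24 and still create a token secret for each service account automatically, so the token can be extracted on its own. This sketch assumes the secret name starts with dashboard-admin-token-. dashboard_token is a hypothetical helper.

```bash
# Hypothetical helper: print only the decoded token of a service account's token secret.
dashboard_token() {
  local ns=${1:-kube-system} sa=${2:-dashboard-admin} secret
  # "-o name" prints entries like secret/dashboard-admin-token-abcde
  secret=$(kubectl -n "$ns" get secret -o name | grep "^secret/${sa}-token-" | head -n 1)
  [ -n "$secret" ] || { echo "no token secret for $sa in $ns" >&2; return 1; }
  # The token is stored base64-encoded under .data.token
  kubectl -n "$ns" get "$secret" -o jsonpath='{.data.token}' | base64 -d
  echo
}

# Usage on the master; paste the output into the Dashboard login page:
#   dashboard_token
```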

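10. Accessing kubernetes-dashboard in Google Chrome

To make the Dashboard usable in Chrome, replace its certificate with a newly generated one. First create the private key:

[root@elk-master ~]# openssl genrsa -out dashboard.key 2048
Generating RSA private key, 2048 bit long modulus
....................................................................................................................................................+++
..............................................................+++
e is 65537 (0x10001)

Create the certificate signing request:

[root@elk-master ~]# openssl req -new -out dashboard.csr -key dashboard.key -subj '/CN=*192.168.210.70'

Generate the crt certificate. Put -days on this step: openssl req only honors -days together with -x509, and openssl x509 otherwise defaults to 30 days.

[root@elk-master ~]# openssl x509 -req -in dashboard.csr -signkey dashboard.key -out dashboard.crt -days 36000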
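Chrome ignores the certificate's CN and matches the address only against subjectAltName, so a CN-only certificate is reported as invalid for the IP. The sketch below adds an IP subjectAltName. Chrome's "not trusted" warning for any self-signed certificate remains. make_dashboard_cert is a hypothetical helper. It passes the extension through -extfile, which also works with the OpenSSL 1.0.2 shipped on CentOS 7.

```bash
# Hypothetical helper: self-signed Dashboard certificate with an IP subjectAltName.
# Arguments: IP address, output directory, validity in days.
make_dashboard_cert() {
  local ip=$1 dir=${2:-.} days=${3:-3650} ext rc
  ext=$(mktemp) || return 1
  # Extensions in the unnamed default section of the -extfile are applied by openssl x509
  printf 'subjectAltName = IP:%s\n' "$ip" > "$ext"
  openssl genrsa -out "$dir/dashboard.key" 2048 2>/dev/null &&
  openssl req -new -key "$dir/dashboard.key" -out "$dir/dashboard.csr" -subj "/CN=$ip" &&
  openssl x509 -req -in "$dir/dashboard.csr" -signkey "$dir/dashboard.key" \
    -out "$dir/dashboard.crt" -days "$days" -extfile "$ext" 2>/dev/null
  rc=$?
  rm -f "$ext"
  return "$rc"
}

# Usage, then continue by recreating the kubernetes-dashboard-certs secret:
#   make_dashboard_cert 192.168.210.70
```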

Next, load the certificate into Kubernetes.

Delete the existing kubernetes-dashboard-certs secret:

[root@elk-master ~]# kubectl delete secret kubernetes-dashboard-certs -n kubernetes-dashboard
secret "kubernetes-dashboard-certs" deleted

Create the secret again from the new certificate:

kubectl create secret generic kubernetes-dashboard-certs --from-file=dashboard.key --from-file=dashboard.crt -n kubernetes-dashboard

Then restart the Dashboard pod so it picks up the new certificate.
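The steps above only say to restart the pod. One way to do that is shown below. It assumes the Deployment name and namespace from recommended.yaml, and kubectl 1.15 or later for rollout restart. restart_dashboard is a hypothetical wrapper.

```bash
# Hypothetical wrapper: restart the Dashboard Deployment and wait for the new pod.
restart_dashboard() {
  local ns=${1:-kubernetes-dashboard}
  kubectl -n "$ns" rollout restart deployment kubernetes-dashboard &&
    kubectl -n "$ns" rollout status deployment kubernetes-dashboard --timeout=120s
}

# Equivalent by hand: delete the pod and let the Deployment recreate it
#   kubectl -n kubernetes-dashboard delete pod -l k8s-app=kubernetes-dashboard
```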