Set up an rtmpd server in one minute: https://blog.csdn.net/freeabc/article/details/102880984

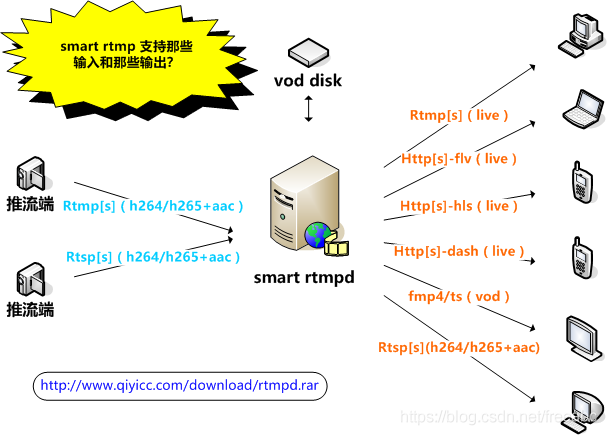

Software download: http://www.qiyicc.com/download/rtmpd.rar

GitHub: https://github.com/superconvert/smart_rtmpd

-----------------------------------------------------------------------------------------------------------------------------------------

How WebRTC generates the Offer SDP

1. How the Offer SDP is assembled

./pc/media_session.cc

    std::unique_ptr<SessionDescription> MediaSessionDescriptionFactory::CreateOffer(
        const MediaSessionOptions& session_options,
        const SessionDescription* current_description) const {
      // Must have options for each existing section.
      if (current_description) {
        RTC_DCHECK_LE(current_description->contents().size(),
                      session_options.media_description_options.size());
      }

      IceCredentialsIterator ice_credentials(
          session_options.pooled_ice_credentials);

      std::vector<const ContentInfo*> current_active_contents;
      if (current_description) {
        current_active_contents =
            GetActiveContents(*current_description, session_options);
      }

      StreamParamsVec current_streams =
          GetCurrentStreamParams(current_active_contents);

      AudioCodecs offer_audio_codecs;
      VideoCodecs offer_video_codecs;
      RtpDataCodecs offer_rtp_data_codecs;
      GetCodecsForOffer(current_active_contents, &offer_audio_codecs,
                        &offer_video_codecs, &offer_rtp_data_codecs);

      if (!session_options.vad_enabled) {
        // If application doesn't want CN codecs in offer.
        StripCNCodecs(&offer_audio_codecs);
      }
      FilterDataCodecs(&offer_rtp_data_codecs,
                       session_options.data_channel_type == DCT_SCTP);

      RtpHeaderExtensions audio_rtp_extensions;
      RtpHeaderExtensions video_rtp_extensions;
      GetRtpHdrExtsToOffer(current_active_contents,
                           session_options.offer_extmap_allow_mixed,
                           &audio_rtp_extensions, &video_rtp_extensions);

      auto offer = std::make_unique<SessionDescription>();

      // Iterate through the media description options, matching with existing media
      // descriptions in |current_description|.
      size_t msection_index = 0;
      for (const MediaDescriptionOptions& media_description_options :
           session_options.media_description_options) {
        const ContentInfo* current_content = nullptr;
        if (current_description &&
            msection_index < current_description->contents().size()) {
          current_content = &current_description->contents()[msection_index];
          // Media type must match unless this media section is being recycled.
          RTC_DCHECK(current_content->name != media_description_options.mid ||
                     IsMediaContentOfType(current_content,
                                          media_description_options.type));
        }
        switch (media_description_options.type) {
          case MEDIA_TYPE_AUDIO:
            if (!AddAudioContentForOffer(
                    media_description_options, session_options, current_content,
                    current_description, audio_rtp_extensions, offer_audio_codecs,
                    &current_streams, offer.get(), &ice_credentials)) {
              return nullptr;
            }
            break;
          case MEDIA_TYPE_VIDEO:
            if (!AddVideoContentForOffer(
                    media_description_options, session_options, current_content,
                    current_description, video_rtp_extensions, offer_video_codecs,
                    &current_streams, offer.get(), &ice_credentials)) {
              return nullptr;
            }
            break;
          case MEDIA_TYPE_DATA:
            if (!AddDataContentForOffer(media_description_options, session_options,
                                        current_content, current_description,
                                        offer_rtp_data_codecs, &current_streams,
                                        offer.get(), &ice_credentials)) {
              return nullptr;
            }
            break;
          default:
            RTC_NOTREACHED();
        }
        ++msection_index;
      }

      // Bundle the contents together, if we've been asked to do so, and update any
      // parameters that need to be tweaked for BUNDLE.
      if (session_options.bundle_enabled) {
        ContentGroup offer_bundle(GROUP_TYPE_BUNDLE);
        for (const ContentInfo& content : offer->contents()) {
          if (content.rejected) {
            continue;
          }
          // TODO(deadbeef): There are conditions that make bundling two media
          // descriptions together illegal. For example, they use the same payload
          // type to represent different codecs, or same IDs for different header
          // extensions. We need to detect this and not try to bundle those media
          // descriptions together.
          offer_bundle.AddContentName(content.name);
        }
        if (!offer_bundle.content_names().empty()) {
          offer->AddGroup(offer_bundle);
          if (!UpdateTransportInfoForBundle(offer_bundle, offer.get())) {
            RTC_LOG(LS_ERROR)
                << "CreateOffer failed to UpdateTransportInfoForBundle.";
            return nullptr;
          }
          if (!UpdateCryptoParamsForBundle(offer_bundle, offer.get())) {
            RTC_LOG(LS_ERROR)
                << "CreateOffer failed to UpdateCryptoParamsForBundle.";
            return nullptr;
          }
        }
      }

      // The following determines how to signal MSIDs to ensure compatibility with
      // older endpoints (in particular, older Plan B endpoints).
      if (is_unified_plan_) {
        // Be conservative and signal using both a=msid and a=ssrc lines. Unified
        // Plan answerers will look at a=msid and Plan B answerers will look at the
        // a=ssrc MSID line.
        offer->set_msid_signaling(cricket::kMsidSignalingMediaSection |
                                  cricket::kMsidSignalingSsrcAttribute);
      } else {
        // Plan B always signals MSID using a=ssrc lines.
        offer->set_msid_signaling(cricket::kMsidSignalingSsrcAttribute);
      }

      offer->set_extmap_allow_mixed(session_options.offer_extmap_allow_mixed);

      return offer;
    }

Analysis of the GetCodecsForOffer function

./pc/media_session.cc

    void MediaSessionDescriptionFactory::GetCodecsForOffer(
        const std::vector<const ContentInfo*>& current_active_contents,
        AudioCodecs* audio_codecs,
        VideoCodecs* video_codecs,
        RtpDataCodecs* rtp_data_codecs) const {
      // Debug log added for this analysis; it is not part of upstream WebRTC.
      RTC_LOG(LS_INFO) << "Caiwenfeng --- GetCodecsForOffer:" << current_active_contents.size();

      // First - get all codecs from the current description if the media type
      // is used. Add them to |used_pltypes| so the payload type is not reused if a
      // new media type is added.
      UsedPayloadTypes used_pltypes;
      MergeCodecsFromDescription(current_active_contents, audio_codecs,
                                 video_codecs, rtp_data_codecs, &used_pltypes);

      // Add our codecs that are not in the current description.
      MergeCodecs<AudioCodec>(all_audio_codecs_, audio_codecs, &used_pltypes);
      MergeCodecs<VideoCodec>(video_codecs_, video_codecs, &used_pltypes);
      MergeCodecs<DataCodec>(rtp_data_codecs_, rtp_data_codecs, &used_pltypes);
    }

The function above uses all_audio_codecs_ and video_codecs_, among others. These members are initialized in the constructor shown below.
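To make the merge step concrete, here is a minimal sketch of the idea behind MergeCodecs and used_pltypes. It is not the WebRTC implementation: SketchCodec and MergeCodecsSketch are hypothetical names, codecs are matched by name only, and the top-down search for a free payload type in 96..127 is an assumption made for illustration.

```cpp
#include <set>
#include <string>
#include <vector>

// Hypothetical, simplified stand-in for a codec entry. The real codec classes
// also carry clock rate, fmtp parameters and feedback parameters.
struct SketchCodec {
  int id;            // RTP payload type
  std::string name;  // codec name, e.g. "VP8"
};

// Sketch of the merge idea: keep everything already in |offered| (taken from
// the current description), append reference codecs that are missing, and
// pick a different payload type when the preferred one is already in use.
void MergeCodecsSketch(const std::vector<SketchCodec>& reference,
                       std::vector<SketchCodec>* offered,
                       std::set<int>* used_pltypes) {
  const int kFirstDynamic = 96;   // dynamic payload type range (assumed)
  const int kLastDynamic = 127;
  for (const SketchCodec& ref : reference) {
    bool already_offered = false;
    for (const SketchCodec& existing : *offered) {
      if (existing.name == ref.name) {  // name-only match: a simplification
        already_offered = true;
        break;
      }
    }
    if (already_offered) continue;

    SketchCodec added = ref;
    if (used_pltypes->count(added.id) > 0) {
      // Preferred id is taken: search for a free one (search order assumed).
      int candidate = kLastDynamic;
      while (candidate >= kFirstDynamic && used_pltypes->count(candidate) > 0) {
        --candidate;
      }
      if (candidate < kFirstDynamic) continue;  // no free payload type left
      added.id = candidate;
    }
    used_pltypes->insert(added.id);
    offered->push_back(added);
  }
}
```

For example, if the current description already uses payload type 100 for VP8 and the local list is VP8 (96), VP9 (100), H264 (98), the sketch keeps VP8 at 100, moves VP9 to 127 and appends H264 at 98.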

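While it is being initialized, the session description factory calls various channel_manager interfaces to collect the basic information it needs to assemble the SDP.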

./pc/media_session.cc

    MediaSessionDescriptionFactory::MediaSessionDescriptionFactory(
        ChannelManager* channel_manager,
        const TransportDescriptionFactory* transport_desc_factory,
        rtc::UniqueRandomIdGenerator* ssrc_generator)
        : MediaSessionDescriptionFactory(transport_desc_factory, ssrc_generator) {
      channel_manager->GetSupportedAudioSendCodecs(&audio_send_codecs_);
      channel_manager->GetSupportedAudioReceiveCodecs(&audio_recv_codecs_);
      channel_manager->GetSupportedAudioRtpHeaderExtensions(&audio_rtp_extensions_);
      channel_manager->GetSupportedVideoCodecs(&video_codecs_);
      channel_manager->GetSupportedVideoRtpHeaderExtensions(&video_rtp_extensions_);
      channel_manager->GetSupportedDataCodecs(&rtp_data_codecs_);
      ComputeAudioCodecsIntersectionAndUnion();
    }

The constructor above calls channel_manager->GetSupportedVideoCodecs, which retrieves the list of supported video codecs.

./pc/channel_manager.cc

    void ChannelManager::GetSupportedVideoCodecs(
        std::vector<VideoCodec>* codecs) const {
      if (!media_engine_) {
        return;
      }
      codecs->clear();

      std::vector<VideoCodec> video_codecs = media_engine_->video().codecs();
      for (const auto& video_codec : video_codecs) {
        if (!enable_rtx_ &&
            absl::EqualsIgnoreCase(kRtxCodecName, video_codec.name)) {
          continue;
        }
        codecs->push_back(video_codec);
      }
    }

./media/engine/webrtc_video_engine.cc

    std::vector<VideoCodec> WebRtcVideoEngine::codecs() const {
      return AssignPayloadTypesAndDefaultCodecs(encoder_factory_.get());
    }

    std::vector<VideoCodec> AssignPayloadTypesAndDefaultCodecs(
        const webrtc::VideoEncoderFactory* encoder_factory) {
      return encoder_factory ? AssignPayloadTypesAndDefaultCodecs(
                                   encoder_factory->GetSupportedFormats())
                             : std::vector<VideoCodec>();
    }

    std::vector<VideoCodec> AssignPayloadTypesAndDefaultCodecs(
        std::vector<webrtc::SdpVideoFormat> input_formats) {
      if (input_formats.empty())
        return std::vector<VideoCodec>();
      static const int kFirstDynamicPayloadType = 96;
      static const int kLastDynamicPayloadType = 127;
      int payload_type = kFirstDynamicPayloadType;

      input_formats.push_back(webrtc::SdpVideoFormat(kRedCodecName));
      input_formats.push_back(webrtc::SdpVideoFormat(kUlpfecCodecName));

      if (IsFlexfecAdvertisedFieldTrialEnabled()) {
        webrtc::SdpVideoFormat flexfec_format(kFlexfecCodecName);
        // This value is currently arbitrarily set to 10 seconds. (The unit
        // is microseconds.) This parameter MUST be present in the SDP, but
        // we never use the actual value anywhere in our code however.
        // TODO(brandtr): Consider honouring this value in the sender and receiver.
        flexfec_format.parameters = {{kFlexfecFmtpRepairWindow, "10000000"}};
        input_formats.push_back(flexfec_format);
      }

      std::vector<VideoCodec> output_codecs;
      for (const webrtc::SdpVideoFormat& format : input_formats) {
        VideoCodec codec(format);
        codec.id = payload_type;
        AddDefaultFeedbackParams(&codec);
        output_codecs.push_back(codec);

        // Increment payload type.
        ++payload_type;
        if (payload_type > kLastDynamicPayloadType) {
          RTC_LOG(LS_ERROR) << "Out of dynamic payload types, skipping the rest.";
          break;
        }

        // Add associated RTX codec for non-FEC codecs.
        if (!absl::EqualsIgnoreCase(codec.name, kUlpfecCodecName) &&
            !absl::EqualsIgnoreCase(codec.name, kFlexfecCodecName)) {
          output_codecs.push_back(
              VideoCodec::CreateRtxCodec(payload_type, codec.id));

          // Increment payload type.
          ++payload_type;
          if (payload_type > kLastDynamicPayloadType) {
            RTC_LOG(LS_ERROR) << "Out of dynamic payload types, skipping the rest.";
            break;
          }
        }
      }
      return output_codecs;
    }

./sdk/android/src/jni/video_encoder_factory_wrapper.cc

    std::vector<SdpVideoFormat> VideoEncoderFactoryWrapper::GetSupportedFormats()
        const {
      return supported_formats_;
    }

The supported_formats_ member returned by the function above is populated in the constructor. The flow is as follows:

./sdk/android/src/jni/video_encoder_factory_wrapper.cc

    VideoEncoderFactoryWrapper::VideoEncoderFactoryWrapper(
        JNIEnv* jni,
        const JavaRef<jobject>& encoder_factory)
        : encoder_factory_(jni, encoder_factory) {
      const ScopedJavaLocalRef<jobjectArray> j_supported_codecs =
          Java_VideoEncoderFactory_getSupportedCodecs(jni, encoder_factory);
      supported_formats_ = JavaToNativeVector<SdpVideoFormat>(
          jni, j_supported_codecs, &VideoCodecInfoToSdpVideoFormat);
      const ScopedJavaLocalRef<jobjectArray> j_implementations =
          Java_VideoEncoderFactory_getImplementations(jni, encoder_factory);
      implementations_ = JavaToNativeVector<SdpVideoFormat>(
          jni, j_implementations, &VideoCodecInfoToSdpVideoFormat);
    }

    static base::android::ScopedJavaLocalRef<jobjectArray>
    Java_VideoEncoderFactory_getSupportedCodecs(
        JNIEnv* env, const base::android::JavaRef<jobject>& obj) {
      jclass clazz = org_webrtc_VideoEncoderFactory_clazz(env);
      CHECK_CLAZZ(env, obj.obj(),
                  org_webrtc_VideoEncoderFactory_clazz(env), NULL);

      jni_generator::JniJavaCallContextChecked call_context;
      call_context.Init<base::android::MethodID::TYPE_INSTANCE>(
          env,
          clazz,
          "getSupportedCodecs",
          "()[Lorg/webrtc/VideoCodecInfo;",
          &g_org_webrtc_VideoEncoderFactory_getSupportedCodecs);

      jobjectArray ret =
          static_cast<jobjectArray>(env->CallObjectMethod(obj.obj(),
              call_context.base.method_id));
      return base::android::ScopedJavaLocalRef<jobjectArray>(env, ret);
    }

The function above calls into the Java layer:

DefaultVideoEncoderFactory.java

    // Method of DefaultVideoEncoderFactory.
    public VideoCodecInfo[] getSupportedCodecs() {
      LinkedHashSet<VideoCodecInfo> supportedCodecInfos = new LinkedHashSet<VideoCodecInfo>();

      supportedCodecInfos.addAll(Arrays.asList(softwareVideoEncoderFactory.getSupportedCodecs()));
      supportedCodecInfos.addAll(Arrays.asList(hardwareVideoEncoderFactory.getSupportedCodecs()));

      return supportedCodecInfos.toArray(new VideoCodecInfo[supportedCodecInfos.size()]);
    }

On Android, this is where the video codec information comes from: the native layer fetches it through JNI and uses it to generate the video codec entries of the SDP.
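To tie the chain together, here is a minimal sketch of what happens to the formats returned by getSupportedCodecs once they reach AssignPayloadTypesAndDefaultCodecs. It mirrors the loop shown earlier but is not the WebRTC code: SketchVideoCodec and AssignPayloadTypesSketch are hypothetical names, the literal codec names "red", "ulpfec" and "rtx" are assumed values of the corresponding constants, and the FlexFEC field trial and feedback parameters are left out.

```cpp
#include <string>
#include <vector>

// Hypothetical, simplified stand-in for a video codec entry.
struct SketchVideoCodec {
  int id;             // RTP payload type
  std::string name;   // codec name
  int associated_id;  // for "rtx": payload type it retransmits, else -1
};

// Sketch of the payload type assignment: number the codecs upwards from 96,
// and follow every non-FEC codec with an RTX codec that points back at it.
std::vector<SketchVideoCodec> AssignPayloadTypesSketch(
    std::vector<std::string> format_names) {
  std::vector<SketchVideoCodec> output;
  if (format_names.empty()) return output;

  const int kFirstDynamicPayloadType = 96;
  const int kLastDynamicPayloadType = 127;

  // RED and ULPFEC are always appended after the encoder's own formats.
  format_names.push_back("red");     // assumed value of kRedCodecName
  format_names.push_back("ulpfec");  // assumed value of kUlpfecCodecName

  int payload_type = kFirstDynamicPayloadType;
  for (const std::string& name : format_names) {
    const int codec_id = payload_type;
    output.push_back(SketchVideoCodec{codec_id, name, -1});
    if (++payload_type > kLastDynamicPayloadType) break;  // out of types

    // FEC codecs get no RTX companion; everything else does.
    if (name != "ulpfec") {
      output.push_back(SketchVideoCodec{payload_type, "rtx", codec_id});
      if (++payload_type > kLastDynamicPayloadType) break;
    }
  }
  return output;
}
```

With the hypothetical input {"VP8", "VP9"}, the sketch yields VP8=96, rtx=97, VP9=98, rtx=99, red=100, rtx=101 and ulpfec=102, which is the shape of the payload type list that ends up in the video section of the offer.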