| Framework | Version |
|---|---|
| Hadoop | 3.1.3 |
| Hive | 3.1.2 |
| Tez | 0.10.1 |

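Tez is an execution engine for Hive that performs better than MapReduce (MR). Why does it outperform MR? See the figure below.

Suppose a Hive query running directly on MR compiles into four MR jobs that depend on one another.

In the figure above, green marks the ReduceTasks, and the cloud shapes mark write barriers, where intermediate results must be persisted to HDFS.

Tez can merge multiple dependent jobs into a single job, so HDFS is written only once and there are fewer intermediate nodes, which greatly improves query performance.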
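As a rough illustration of the write-count argument, here is a toy Python model (not how Tez actually plans or schedules work): jobs are nodes, edges are data dependencies, chained MR persists every job's output to HDFS, and a single Tez DAG only writes its final sink(s). The job names and the dependency shape are hypothetical.

```python
def hdfs_writes(jobs, deps, engine):
    """Count how many times job outputs are materialized to HDFS (toy model)."""
    if engine == "mr":
        # Chained MR: every job writes its output to HDFS for the next job to read.
        return len(jobs)
    if engine == "tez":
        # One Tez DAG: upstream vertices pass data downstream without going
        # through HDFS, so only sinks (vertices nothing depends on) write to HDFS.
        upstream = {up for up, _ in deps}
        return sum(1 for job in jobs if job not in upstream)
    raise ValueError(f"unknown engine: {engine}")


# Hypothetical plan: job1 and job2 feed job3, which feeds job4.
jobs = ["job1", "job2", "job3", "job4"]
deps = [("job1", "job3"), ("job2", "job3"), ("job3", "job4")]

mr_writes = hdfs_writes(jobs, deps, "mr")
tez_writes = hdfs_writes(jobs, deps, "tez")
print(f"MR: {mr_writes} HDFS writes, Tez: {tez_writes} HDFS write")
```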

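Part 1: Building Tez 0.10.1 (following the official procedure)

1. Download the Tez source package (src.tar.gz).

Official download link: [click here]

After downloading, upload it to your Linux machine and extract it.

The newer Tez 0.10.1 has to be downloaded from GitHub: https://github.com/apache/tez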

2. In pom.xml, change the value of the hadoop.version property to match the Hadoop version you are building against. In my case that is Apache Hadoop 3.1.3.

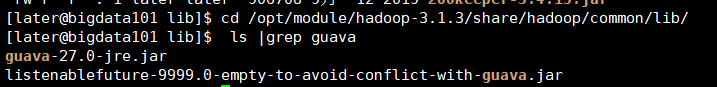

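3. Next is the Guava version. This dependency is just as critical, so change it up front and nip the problem in the bud. Hadoop 3.1.3 ships Guava 27.0-jre, while Tez defaults to 11.0.2, so you must change it; otherwise Tez will not work even after it is installed. You can check Hadoop's Guava version like this: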

```
[later@bigdata101 lib]# cd /opt/module/hadoop-3.1.3/share/hadoop/common/lib/
[later@bigdata101 lib]# ls |grep guava
guava-27.0-jre.jar
listenablefuture-9999.0-empty-to-avoid-conflict-with-guava.jar
```
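Both pom.xml edits (hadoop.version and the Guava version) can be scripted. Below is a minimal Python sketch, assuming the Tez pom declares the versions as hadoop.version and guava.version properties. It uses a regex so the rest of the file, comments included, stays untouched, and it reads the Guava version off JAR names like those in the listing above. Only the first occurrence of each property is replaced, so check whether any profile in your pom overrides it. The sample pom fragment is made up.

```python
import re


def set_property(pom_text, name, value):
    """Replace the text of the first <name>...</name> element with value."""
    tag = re.escape(name)
    pattern = re.compile(rf"(<{tag}>)[^<]*(</{tag}>)")
    # A function replacement avoids backreference surprises in the value.
    new_text, count = pattern.subn(lambda m: m.group(1) + value + m.group(2), pom_text, count=1)
    if count == 0:
        raise KeyError(f"property {name} not found")
    return new_text


def guava_version_from_jars(jar_names):
    """Pick the Guava version out of names like guava-27.0-jre.jar."""
    for jar in jar_names:
        match = re.fullmatch(r"guava-(.+)\.jar", jar)
        if match:
            return match.group(1)
    return None


# Made-up pom fragment, plus the JAR names from the Hadoop lib listing.
pom = """<properties>
    <hadoop.version>3.0.0</hadoop.version>
    <guava.version>11.0.2</guava.version>
</properties>"""
hadoop_lib = [
    "guava-27.0-jre.jar",
    "listenablefuture-9999.0-empty-to-avoid-conflict-with-guava.jar",
]

pom = set_property(pom, "hadoop.version", "3.1.3")
pom = set_property(pom, "guava.version", guava_version_from_jars(hadoop_lib))
print(pom)
```

On a real checkout you would read pom.xml into pom_text, apply both calls, and write the file back before running Maven.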

4. Also, the tez-ui module is slow and troublesome to build and of little use here, so we can simply skip it.
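One common way to skip tez-ui is to comment it out of the module list in the root pom.xml before building. Here is a small Python sketch of that edit, assuming the root pom lists it on its own line as <module>tez-ui</module>. I have not verified whether other modules in your checkout expect tez-ui to be built, so keep an eye on the Maven output. The pom excerpt is made up.

```python
import re


def comment_out_module(pom_text, module):
    """Wrap <module>NAME</module> in an XML comment so Maven's reactor skips it."""
    pattern = re.compile(
        rf"^([ \t]*)(<module>{re.escape(module)}</module>)[ \t]*$", re.MULTILINE
    )
    new_text, count = pattern.subn(lambda m: f"{m.group(1)}<!-- {m.group(2)} -->", pom_text)
    if count == 0:
        raise KeyError(f"module {module} not found")
    return new_text


# Made-up excerpt of a root pom's module list.
pom = """<modules>
    <module>tez-api</module>
    <module>tez-ui</module>
    <module>tez-dag</module>
</modules>
"""
patched = comment_out_module(pom, "tez-ui")
print(patched)
```

Only spaces and tabs are matched around the element, so blank lines and the other modules are left exactly as they were.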

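5. With that done, the build itself can begin. A build environment is required first, so install Maven and Git; for both, see my earlier article: https://blog.csdn.net/weixin_38586230/article/details/105725346

Install the build tools:

```bash
yum -y install autoconf automake libtool cmake ncurses-devel openssl-devel lzo-devel zlib-devel gcc gcc-c++
```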

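6. First install protobuf (the official instructions call for version 2.5.0). Download link: https://github.com/protocolbuffers/protobuf/tags

After downloading the source package, extract it and build protobuf 2.5.0:

```bash
./configure
make install
```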
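Before building Tez it is worth confirming that the protoc on your PATH really is 2.5.0. A small Python sketch, assuming protoc --version prints its usual "libprotoc X.Y.Z" line:

```python
import re
import shutil
import subprocess

REQUIRED_PROTOC = "2.5.0"


def parse_protoc_version(output):
    """Extract '2.5.0' from protoc's 'libprotoc 2.5.0' banner."""
    match = re.search(r"libprotoc\s+(\S+)", output)
    if match is None:
        raise ValueError(f"unexpected protoc output: {output!r}")
    return match.group(1)


def installed_protoc_version():
    """Return the version of the protoc found on PATH, or None if there is none."""
    if shutil.which("protoc") is None:
        return None
    result = subprocess.run(["protoc", "--version"], capture_output=True, text=True)
    return parse_protoc_version(result.stdout)


if __name__ == "__main__":
    version = installed_protoc_version()
    if version is None:
        print("protoc not found on PATH")
    elif version != REQUIRED_PROTOC:
        print(f"protoc {version} found, but {REQUIRED_PROTOC} is expected")
    else:
        print(f"protoc {version} OK")
```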

7. Build Tez:

```bash
mvn clean package -DskipTests=true -Dmaven.javadoc.skip=true
```

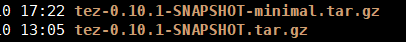

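8. After a successful build, the packages are under apache-tez-0.10.0-src/tez-dist/target/. The two we need are tez-0.10.1-SNAPSHOT-minimal.tar.gz and tez-0.10.1-SNAPSHOT.tar.gz.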
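If you script the deployment, the two tarballs can be told apart by the -minimal suffix. A Python sketch, assuming both land directly in tez-dist/target/ with names matching tez-*.tar.gz; the demo uses a temporary directory with empty placeholder files instead of a real build output.

```python
import tempfile
from pathlib import Path


def find_tez_tarballs(target_dir):
    """Return (full, minimal) tarball paths found in tez-dist/target/."""
    full = minimal = None
    for path in sorted(Path(target_dir).glob("tez-*.tar.gz")):
        if path.name.endswith("-minimal.tar.gz"):
            minimal = path  # extracted locally to /opt/module/tez
        else:
            full = path  # uploaded to HDFS and referenced by tez.lib.uris
    return full, minimal


# Demo with empty placeholder files.
with tempfile.TemporaryDirectory() as target:
    for name in ("tez-0.10.1-SNAPSHOT.tar.gz", "tez-0.10.1-SNAPSHOT-minimal.tar.gz"):
        (Path(target) / name).touch()
    full, minimal = find_tez_tarballs(target)
    print("upload to HDFS:", full.name)
    print("extract locally:", minimal.name)
```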

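Part 2: Configuring Tez for Hive

1. Copy the Tez packages to the cluster and extract the tarball. Note that the one to extract is the minimal package:

```bash
mkdir /opt/module/tez
tar -zxvf /opt/software/tez-0.10.1-SNAPSHOT-minimal.tar.gz -C /opt/module/tez
```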

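2. Upload the Tez dependencies to HDFS

Upload the package without "minimal" in its name. The matching Tez setting is the one below (the complete tez-site.xml follows in the next step):

```xml
<property>
  <name>tez.use.cluster.hadoop-libs</name>
  <value>true</value>
</property>
```

```bash
hadoop fs -mkdir /tez   # create /tez on the cluster first, then upload; mind the path
hadoop fs -put /opt/software/tez-0.10.1-SNAPSHOT.tar.gz /tez
```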

3. Create tez-site.xml under $HADOOP_HOME/etc/hadoop/ (do not put it under hive/conf/, where it does not take effect), and remember to sync tez-site.xml to the other machines in the cluster.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <!-- Check that your path and file name match mine -->
  <property>
    <name>tez.lib.uris</name>
    <value>${fs.defaultFS}/tez/tez-0.10.1-SNAPSHOT.tar.gz</value>
  </property>
  <property>
    <name>tez.use.cluster.hadoop-libs</name>
    <value>true</value>
  </property>
  <property>
    <name>tez.am.resource.memory.mb</name>
    <value>1024</value>
  </property>
  <property>
    <name>tez.am.resource.cpu.vcores</name>
    <value>1</value>
  </property>
  <property>
    <name>tez.container.max.java.heap.fraction</name>
    <value>0.4</value>
  </property>
  <property>
    <name>tez.task.resource.memory.mb</name>
    <value>1024</value>
  </property>
  <property>
    <name>tez.task.resource.cpu.vcores</name>
    <value>1</value>
  </property>
</configuration>
```
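Since the same tez-site.xml has to be synced to every node, generating it from one place can help keep the copies identical. A minimal Python sketch that renders the properties above into Hadoop's configuration format; the values are the ones used in this post, and the helper name render_site_xml is hypothetical.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape

# Values copied from the tez-site.xml above.
TEZ_SITE = {
    "tez.lib.uris": "${fs.defaultFS}/tez/tez-0.10.1-SNAPSHOT.tar.gz",
    "tez.use.cluster.hadoop-libs": "true",
    "tez.am.resource.memory.mb": "1024",
    "tez.am.resource.cpu.vcores": "1",
    "tez.container.max.java.heap.fraction": "0.4",
    "tez.task.resource.memory.mb": "1024",
    "tez.task.resource.cpu.vcores": "1",
}


def render_site_xml(props):
    """Render name/value pairs in Hadoop's *-site.xml layout."""
    lines = [
        '<?xml version="1.0" encoding="UTF-8"?>',
        '<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>',
        "<configuration>",
    ]
    for name, value in props.items():
        lines += [
            "  <property>",
            f"    <name>{escape(name)}</name>",
            f"    <value>{escape(str(value))}</value>",
            "  </property>",
        ]
    lines.append("</configuration>")
    return "\n".join(lines) + "\n"


xml_text = render_site_xml(TEZ_SITE)
# Parse the result back to make sure it is well-formed XML.
assert len(ET.fromstring(xml_text.encode("utf-8")).findall("property")) == len(TEZ_SITE)
print(xml_text)
```

The output can be written to $HADOOP_HOME/etc/hadoop/tez-site.xml and then copied to the other nodes.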

4. Modify the Hadoop environment

## Edit the Hadoop shell profile (shellprofile.d/tez.sh)

```bash
[later@bigdata101 software]$ vim $HADOOP_HOME/etc/hadoop/shellprofile.d/tez.sh
```

Add the Tez JAR information. If it still doesn't work in the end, putting this configuration in example.sh also works:

```bash
hadoop_add_profile tez
function _tez_hadoop_classpath
{
    hadoop_add_classpath "$HADOOP_HOME/etc/hadoop" after
    hadoop_add_classpath "/opt/module/tez/*" after
    hadoop_add_classpath "/opt/module/tez/lib/*" after
}
```

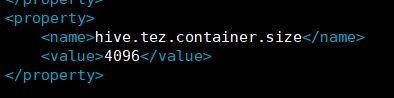

5. Change Hive's execution engine:

```bash
vim $HIVE_HOME/conf/hive-site.xml
```

Add the following:

```xml
<property>
  <name>hive.execution.engine</name>
  <value>tez</value>
</property>
<property>
  <name>hive.tez.container.size</name>
  <value>1024</value>
</property>
```
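Appending these properties to an existing hive-site.xml can leave an older hive.execution.engine entry (for example mr) in the file. A Python sketch that lists duplicated property names and the value that wins, assuming Hadoop's usual rule that a later definition of a non-final property overrides an earlier one; the "final" flag is ignored here, and the sample file is made up.

```python
import xml.etree.ElementTree as ET
from collections import Counter


def effective_properties(xml_text):
    """Return ({name: winning value}, [duplicated names]) for a *-site.xml."""
    root = ET.fromstring(xml_text)
    pairs = [
        ((prop.findtext("name") or "").strip(), (prop.findtext("value") or "").strip())
        for prop in root.iter("property")
    ]
    values = dict(pairs)  # later definitions overwrite earlier ones
    counts = Counter(name for name, _ in pairs)
    duplicates = sorted(name for name, n in counts.items() if n > 1)
    return values, duplicates


# Made-up hive-site.xml where an old engine setting was left in place.
hive_site = """<configuration>
  <property><name>hive.execution.engine</name><value>mr</value></property>
  <property><name>hive.execution.engine</name><value>tez</value></property>
  <property><name>hive.tez.container.size</name><value>1024</value></property>
</configuration>"""

values, duplicates = effective_properties(hive_site)
print(values["hive.execution.engine"], duplicates)
```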

6. Add the Tez paths to hive-env.sh:

```bash
export TEZ_HOME=/opt/module/tez    # your Tez extraction directory
export TEZ_JARS=""
for jar in `ls $TEZ_HOME |grep jar`; do
    export TEZ_JARS=$TEZ_JARS:$TEZ_HOME/$jar
done
for jar in `ls $TEZ_HOME/lib`; do
    export TEZ_JARS=$TEZ_JARS:$TEZ_HOME/lib/$jar
done
export HIVE_AUX_JARS_PATH=/opt/module/hadoop-3.1.3/share/hadoop/common/hadoop-lzo-0.4.21-SNAPSHOT.jar$TEZ_JARS
```

Check that your JAR paths match mine. If you need to build LZO, see my other post on compiling and installing LZO: https://blog.csdn.net/weixin_38586230/article/details/106035660
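To preview what HIVE_AUX_JARS_PATH ends up as, here is a Python re-statement of the two hive-env.sh loops: names in $TEZ_HOME containing "jar", then every entry of $TEZ_HOME/lib, each prefixed with ":". It is only a sketch; sorted() may order names differently from ls under some locales, and the JAR names in the demo are placeholders.

```python
import os
import tempfile
from pathlib import Path


def build_tez_jars(tez_home):
    """Mimic the hive-env.sh loops and return the ':'-prefixed TEZ_JARS string."""
    home = Path(tez_home)
    # Loop 1: `ls $TEZ_HOME | grep jar` keeps any name containing "jar".
    entries = [str(home / name) for name in sorted(os.listdir(home)) if "jar" in name]
    # Loop 2: every entry of $TEZ_HOME/lib, no filtering.
    entries += [str(home / "lib" / name) for name in sorted(os.listdir(home / "lib"))]
    return "".join(":" + entry for entry in entries)


LZO_JAR = "/opt/module/hadoop-3.1.3/share/hadoop/common/hadoop-lzo-0.4.21-SNAPSHOT.jar"

# Demo layout with placeholder files standing in for the extracted minimal tarball.
with tempfile.TemporaryDirectory() as tez_home:
    (Path(tez_home) / "lib").mkdir()
    for name in ("LICENSE", "tez-api-0.10.1-SNAPSHOT.jar", "tez-dag-0.10.1-SNAPSHOT.jar"):
        (Path(tez_home) / name).touch()
    (Path(tez_home) / "lib" / "commons-io-2.4.jar").touch()
    hive_aux_jars_path = LZO_JAR + build_tez_jars(tez_home)

for entry in hive_aux_jars_path.split(":"):
    print(entry)
```

Because TEZ_JARS starts with ":", appending it directly to the LZO JAR path yields a valid colon-separated list, which is why the original export needs no separator.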

7. Resolve the logging JAR conflict by deleting slf4j-log4j12-1.7.10.jar from tez/lib:

```bash
rm /opt/module/tez/lib/slf4j-log4j12-1.7.10.jar
```
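The conflict arises when more than one SLF4J binding ends up on the classpath, which SLF4J reports as "Class path contains multiple SLF4J bindings". A Python sketch that scans directories for the two binding JAR patterns assumed here (slf4j-log4j12 and log4j-slf4j-impl); the demo uses temporary directories and placeholder file names rather than a real install.

```python
import re
import tempfile
from pathlib import Path

# Assumed binding artifacts: Log4j 1.x (slf4j-log4j12) and Log4j 2 (log4j-slf4j-impl).
BINDING = re.compile(r"(slf4j-log4j12|log4j-slf4j-impl)-[\d.]+\.jar")


def find_slf4j_bindings(dirs):
    """List JARs in the given directories that look like SLF4J bindings."""
    hits = []
    for directory in dirs:
        if not Path(directory).is_dir():
            continue  # skip paths that don't exist on this machine
        for jar in sorted(Path(directory).glob("*.jar")):
            if BINDING.fullmatch(jar.name):
                hits.append(str(jar))
    return hits


# Demo: placeholder JARs mimicking tez/lib and Hadoop's common/lib.
with tempfile.TemporaryDirectory() as root:
    tez_lib = Path(root, "tez", "lib")
    hadoop_lib = Path(root, "hadoop", "common", "lib")
    for directory, jar in ((tez_lib, "slf4j-log4j12-1.7.10.jar"),
                           (hadoop_lib, "slf4j-log4j12-1.7.25.jar"),
                           (hadoop_lib, "slf4j-api-1.7.25.jar")):
        directory.mkdir(parents=True, exist_ok=True)
        (directory / jar).touch()
    demo_hits = [Path(p).name for p in find_slf4j_bindings([tez_lib, hadoop_lib])]

print(demo_hits)
```

On a real node you would pass /opt/module/tez/lib and /opt/module/hadoop-3.1.3/share/hadoop/common/lib; more than one hit means a duplicate binding is still present.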

8. At this point the Hive configuration is done. Let's first run the official example. Write any text file locally and upload it to HDFS; I wrote a word.txt:

```bash
hadoop fs -put word.txt /tez/
```

Run the wordcount test; mind your JAR path:

```bash
/opt/module/hadoop-3.1.3/bin/yarn jar /opt/module/tez/tez-examples-0.10.1-SNAPSHOT.jar orderedwordcount /tez/word.txt /tez/output/
```

Check the results in your /tez/output/ directory.
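To sanity-check /tez/output/, you can count the words of word.txt locally and compare with what hadoop fs -cat /tez/output/* prints. A Python sketch that splits on whitespace (my assumption about how the example tokenizes) and sorts by count, then word, for easy eyeballing; the sample text is made up.

```python
import tempfile
from collections import Counter
from pathlib import Path


def expected_counts(path):
    """Whitespace-split word counts, sorted by count and then by word."""
    counts = Counter(Path(path).read_text(encoding="utf-8").split())
    return sorted(counts.items(), key=lambda item: (item[1], item[0]))


# Made-up contents standing in for word.txt.
with tempfile.TemporaryDirectory() as workdir:
    word_txt = Path(workdir, "word.txt")
    word_txt.write_text("hello tez\nhello hive\n", encoding="utf-8")
    result = expected_counts(word_txt)

for word, count in result:
    print(word, count)
```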

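9. Now test whether Hive can use the Tez engine.

1) Start Hive

```bash
[later@bigdata101 hive]$ hive
```

2) Create a table

```sql
hive (default)> create table student(
id int,
name string);
```

3) Insert data into the table

```sql
hive (default)> insert into student values(1,"zhangsan");
```

If no error is reported, it worked.

Remember to set this here:

```sql
hive (default)> set mapreduce.framework.name=yarn;
```

Otherwise you will run into pitfall one.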

Pitfall one

```
For more detailed output, check the application tracking page: http://bigdata101:8088/cluster/app/application_1589003644173_0009 Then click on links to logs of each attempt.
. Failing the application.
at org.apache.tez.client.TezClientUtils.getAMProxy(TezClientUtils.java:947) ~[tez-api-0.10.1-SNAPSHOT.jar:0.10.1-SNAPSHOT]
at org.apache.tez.client.TezClient.getAMProxy(TezClient.java:1060) ~[tez-api-0.10.1-SNAPSHOT.jar:0.10.1-SNAPSHOT]
at org.apache.tez.client.TezClient.stop(TezClient.java:743) ~[tez-api-0.10.1-SNAPSHOT.jar:0.10.1-SNAPSHOT]
at org.apache.hadoop.hive.ql.exec.tez.TezSessionState.closeAndIgnoreExceptions(TezSessionState.java:480) ~[hive-exec-3.1.2.jar:3.1.2]
at org.apache.hadoop.hive.ql.exec.tez.TezSessionState.startSessionAndContainers(TezSessionState.java:473) ~[hive-exec-3.1.2.jar:3.1.2]
at org.apache.hadoop.hive.ql.exec.tez.TezSessionState.access$100(TezSessionState.java:101) ~[hive-exec-3.1.2.jar:3.1.2]
at org.apache.hadoop.hive.ql.exec.tez.TezSessionState$1.call(TezSessionState.java:376) ~[hive-exec-3.1.2.jar:3.1.2]
at org.apache.hadoop.hive.ql.exec.tez.TezSessionState$1.call(TezSessionState.java:371) ~[hive-exec-3.1.2.jar:3.1.2]
at java.util.concurrent.FutureTask.run(FutureTask.java:266) ~[?:1.8.0_211]
at java.lang.Thread.run(Thread.java:748) [?:1.8.0_211]
2020-05-09T06:10:41,600 INFO [Tez session start thread] client.TezClient: Could not connect to AM, killing session via YARN, sessionName=HIVE-f7d9bc1d-65aa-4450-a740-41245bc712d2, applicationId=application_1589003644173_0009
2020-05-09T06:10:41,605 DEBUG [IPC Parameter Sending Thread #0] ipc.Client: IPC Client (321772459) connection to bigdata101/192.168.1.96:8032 from later sending #123 org.apache.hadoop.yarn.api.ApplicationClientProtocolPB.forceKillApplication
2020-05-09T06:10:41,607 DEBUG [IPC Client (321772459) connection to bigdata101/192.168.1.96:8032 from later] ipc.Client: IPC Client (321772459) connection to bigdata101/192.168.1.96:8032 from later got value #123
```

The explanation I found while researching is as follows:

Pitfall two

```
Application application_1589119407952_0003 failed 2 times due to AM Container for appattempt_1589119407952_0003_000002 exited with exitCode: -103
Failing this attempt.Diagnostics: [2020-05-10 22:06:09.140]Container [pid=57149,containerID=container_e14_1589119407952_0003_02_000001] is running 759679488B beyond the 'VIRTUAL' memory limit. Current usage: 140.9 MB of 1 GB physical memory used; 2.8 GB of 2.1 GB virtual memory used. Killing container.
Dump of the process-tree for container_e14_1589119407952_0003_02_000001 :
|- PID PPID PGRPID SESSID CMD_NAME USER_MODE_TIME(MILLIS) SYSTEM_TIME(MILLIS) VMEM_USAGE(BYTES) RSSMEM_USAGE(PAGES) FULL_CMD_LINE
|- 57149 57147 57149 57149 (bash) 0 2 118079488 368 /bin/bash -c /opt/module/jdk1.8.0_211/bin/java -Djava.io.tmpdir=/opt/module/hadoop-3.1.3/tmp/nm-local-dir/usercache/later/appcache/application_1589119407952_0003/container_e14_1589119407952_0003_02_000001/tmp -server -Djava.net.preferIPv4Stack=true -Dhadoop.metrics.log.level=WARN -Xmx1024m -Dlog4j.configuratorClass=org.apache.tez.common.TezLog4jConfigurator -Dlog4j.configuration=tez-container-log4j.properties -Dyarn.app.container.log.dir=/opt/module/hadoop-3.1.3/logs/userlogs/application_1589119407952_0003/container_e14_1589119407952_0003_02_000001 -Dtez.root.logger=INFO,CLA -Dsun.nio.ch.bugLevel='' org.apache.tez.dag.app.DAGAppMaster --session 1>/opt/module/hadoop-3.1.3/logs/userlogs/application_1589119407952_0003/container_e14_1589119407952_0003_02_000001/stdout 2>/opt/module/hadoop-3.1.3/logs/userlogs/application_1589119407952_0003/container_e14_1589119407952_0003_02_000001/stderr
|- 57201 57149 57149 57149 (java) 871 158 2896457728 35706 /opt/module/jdk1.8.0_211/bin/java -Djava.io.tmpdir=/opt/module/hadoop-3.1.3/tmp/nm-local-dir/usercache/later/appcache/application_1589119407952_0003/container_e14_1589119407952_0003_02_000001/tmp -server -Djava.net.preferIPv4Stack=true -Dhadoop.metrics.log.level=WARN -Xmx1024m -Dlog4j.configuratorClass=org.apache.tez.common.TezLog4jConfigurator -Dlog4j.configuration=tez-container-log4j.properties -Dyarn.app.container.log.dir=/opt/module/hadoop-3.1.3/logs/userlogs/application_1589119407952_0003/container_e14_1589119407952_0003_02_000001 -Dtez.root.logger=INFO,CLA -Dsun.nio.ch.bugLevel= org.apache.tez.dag.app.DAGAppMaster --session
[2020-05-10 22:06:09.222]Container killed on request. Exit code is 143
[2020-05-10 22:06:09.229]Container exited with a non-zero exit code 143.
For more detailed output, check the application tracking page: http://bigdata101:8088/cluster/app/application_1589119407952_0003 Then click on links to logs of each attempt.
. Failing the application.
```

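This error is fairly explicit: YARN's NodeManager found that the Tez AM container was using more virtual memory than allowed (2.8 GB of 2.1 GB) and killed it. There are two ways to fix this.

Option 1: disable the virtual memory check

(1) Turn off the virtual memory check in yarn-site.xml:

```xml
<property>
  <name>yarn.nodemanager.vmem-check-enabled</name>
  <value>false</value>
</property>
```

(2) After the change, be sure to distribute the file to all nodes and restart the Hadoop cluster.

Option 2: increase the memory (hive-site.xml)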
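The numbers in the pitfall-two log can be reproduced. YARN's virtual-memory limit is the container's physical limit times yarn.nodemanager.vmem-pmem-ratio (default 2.1), and the usage is the summed VMEM_USAGE(BYTES) of the process tree. A Python sketch of that arithmetic, assuming the ratio is held as a 32-bit float, which is what makes the result match the log's 759679488 B exactly:

```python
import struct

GIB = 1024 ** 3


def as_float32(value):
    """Round a Python float to the nearest 32-bit float."""
    return struct.unpack("f", struct.pack("f", value))[0]


def vmem_check(pmem_limit_bytes, vmem_pmem_ratio, tree_vmem_bytes):
    """Return (used, limit, overrun) in bytes for YARN's virtual-memory check."""
    limit = int(pmem_limit_bytes * as_float32(vmem_pmem_ratio))
    used = sum(tree_vmem_bytes)
    return used, limit, used - limit


# 1 GB container, default ratio 2.1, VMEM_USAGE(BYTES) of bash + java from the dump.
used, limit, overrun = vmem_check(1 * GIB, 2.1, [118079488, 2896457728])
print(f"{used / GIB:.1f} GB of {limit / GIB:.1f} GB virtual memory used, {overrun} B beyond the limit")
# → 2.8 GB of 2.1 GB virtual memory used, 759679488 B beyond the limit
```

Because the limit scales with the container's physical memory, Option 2 works by raising that base, while Option 1 skips the check altogether.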

Pitfall three

A Tez job fails with:

```
java.lang.NoClassDefFoundError: org/apache/tez/dag/api/TezConfiguration
at java.lang.Class.getDeclaredMethods0(Native Method)
at java.lang.Class.privateGetDeclaredMethods(Class.java:2570)
at java.lang.Class.getMethod0(Class.java:2813)
at java.lang.Class.getMethod(Class.java:1663)
at org.apache.hadoop.util.ProgramDriver$ProgramDescription.<init>(ProgramDriver.java:59)
at org.apache.hadoop.util.ProgramDriver.addClass(ProgramDriver.java:103)
at org.apache.tez.examples.ExampleDriver.main(ExampleDriver.java:47)
at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)
at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
at java.lang.reflect.Method.invoke(Method.java:606)
at org.apache.hadoop.util.RunJar.run(RunJar.java:221)
at org.apache.hadoop.util.RunJar.main(RunJar.java:136)
Caused by: java.lang.ClassNotFoundException: org.apache.tez.dag.api.TezConfiguration
```

It looks like something configuration-related cannot be found, which suggests a problem with my Tez setup. So I moved tez-site.xml to $HADOOP_HOME/etc/hadoop/; it used to sit under hive/conf/, where it had no effect. Note that the missing class, org.apache.tez.dag.api.TezConfiguration, comes from the tez-api JAR, so it is also worth confirming that the Tez JARs are on the Hadoop classpath as set up in step 4.
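To check whether any JAR in a given directory actually provides TezConfiguration, you can look inside the JARs. A Python sketch using zipfile; the demo builds a fake tez-api JAR (with an empty placeholder class entry) and a broken JAR in a temporary directory.

```python
import tempfile
import zipfile
from pathlib import Path


def jars_providing(class_name, dirs):
    """Return the JARs in `dirs` that contain the given class."""
    entry = class_name.replace(".", "/") + ".class"
    hits = []
    for directory in dirs:
        if not Path(directory).is_dir():
            continue
        for jar in sorted(Path(directory).glob("*.jar")):
            try:
                with zipfile.ZipFile(jar) as archive:
                    if entry in archive.namelist():
                        hits.append(str(jar))
            except zipfile.BadZipFile:
                continue  # not a real JAR; skip it
    return hits


# Demo: a fake tez-api JAR with an empty TezConfiguration.class entry, plus a broken JAR.
with tempfile.TemporaryDirectory() as tez_home:
    with zipfile.ZipFile(Path(tez_home, "tez-api-0.10.1-SNAPSHOT.jar"), "w") as archive:
        archive.writestr("org/apache/tez/dag/api/TezConfiguration.class", b"")
    Path(tez_home, "broken.jar").touch()
    found = [Path(p).name for p in jars_providing("org.apache.tez.dag.api.TezConfiguration", [tez_home])]

print(found)
```

On a real node you would pass /opt/module/tez and /opt/module/tez/lib. If the class shows up there but the job still fails, the problem is the classpath wiring rather than missing files.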

With that, Hive on Tez is up and running.