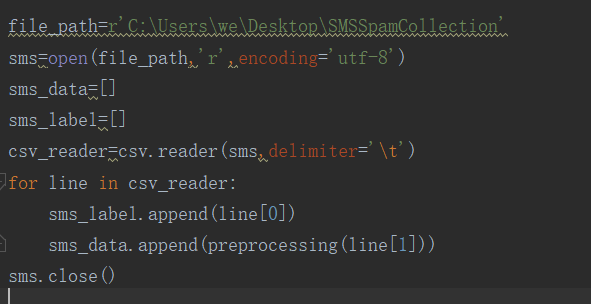

1. Read the email dataset file and extract each message together with its label.
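A minimal sketch of this step, assuming each row of the file is `label<TAB>message` (the layout the SMSSpamCollection file used later in this post appears to follow). The helper name `read_sms` and the two sample rows are made up for illustration.

```python
import csv
import io


def read_sms(lines):
    """Split tab-separated 'label<TAB>message' rows into two parallel lists."""
    labels, messages = [], []
    # QUOTE_NONE treats quotation marks inside a message as ordinary characters.
    reader = csv.reader(lines, delimiter="\t", quoting=csv.QUOTE_NONE)
    for row in reader:
        if len(row) < 2:  # skip blank or malformed rows
            continue
        labels.append(row[0])
        messages.append(row[1])
    return labels, messages


# Two made-up rows stand in for the real dataset file.
sample = io.StringIO('ham\tSee you at "noon" ok\nspam\tWin a FREE prize now\n')
labels, messages = read_sms(sample)
print(labels)
print(messages)
```

With the real dataset, an open file object can be passed instead of the `StringIO`, for example `open(path, encoding='utf-8', newline='')`.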

2. Email preprocessing

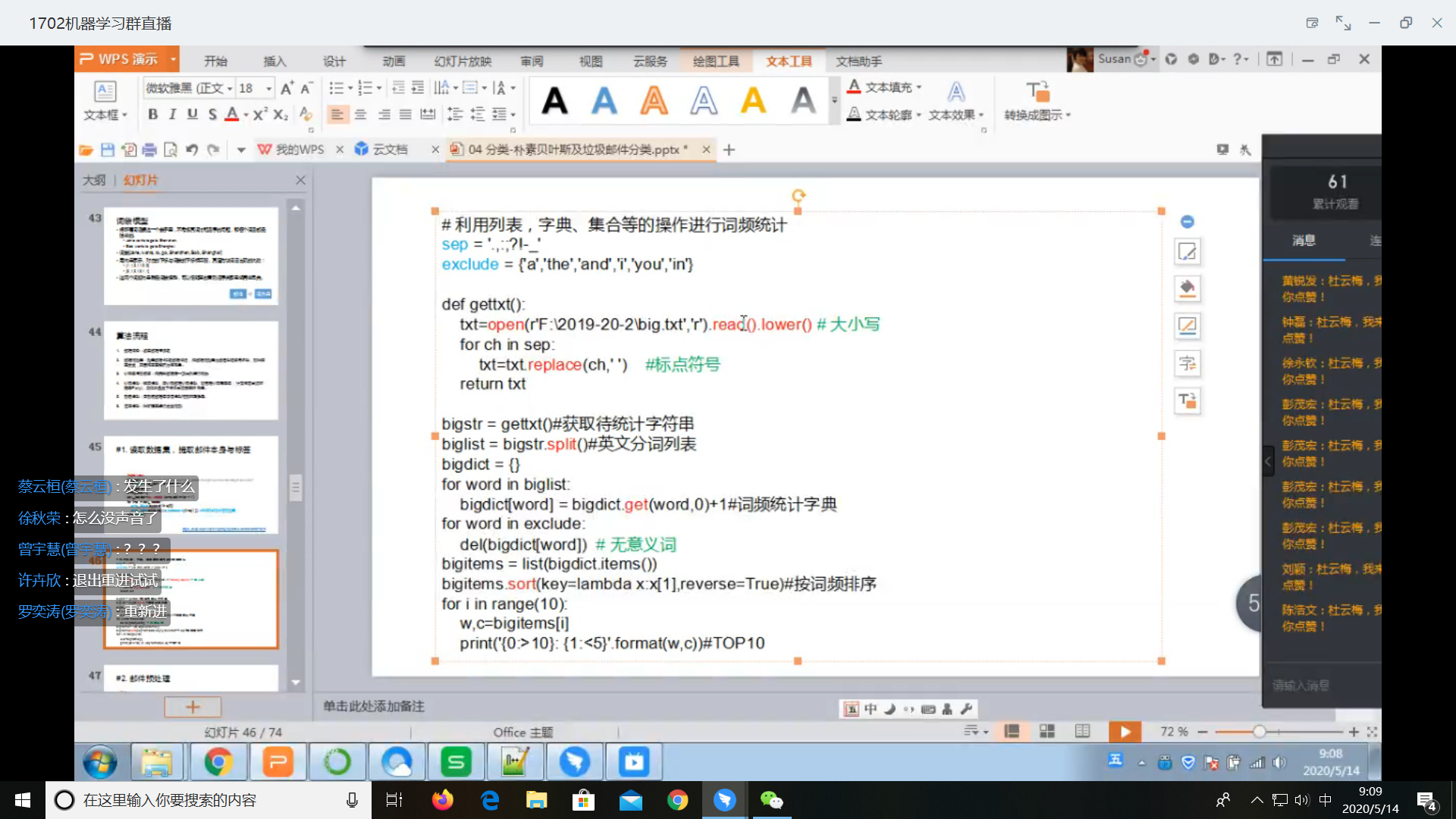

2.1 Traditional methods

2.1 Tokenization with the nltk library

nltk.sent_tokenize(text) # split the text into sentences

nltk.word_tokenize(sent) # split a sentence into words

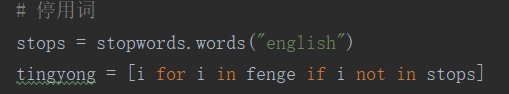

2.2 punkt and stop words

from nltk.corpus import stopwords

stops=stopwords.words('english')
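The stop list is usually applied as a filter over the tokens. The sketch below shows one way to do that; the helper name `remove_stopwords` and the tiny demo stop list are hypothetical, chosen so the example runs without downloading any NLTK data. It lowercases each token before the lookup because the NLTK English stop list is all lowercase, so a capitalized "The" would otherwise pass through.

```python
def remove_stopwords(tokens, stop_words):
    """Return the tokens that are not stop words, ignoring case."""
    stop_set = {w.lower() for w in stop_words}  # a set gives fast membership tests
    return [t for t in tokens if t.lower() not in stop_set]


# A made-up stop list keeps the example self-contained;
# in practice, pass stopwords.words('english') instead.
demo_stops = ["the", "is", "a", "in"]
tokens = ["The", "prize", "is", "in", "a", "box"]
print(remove_stopwords(tokens, demo_stops))  # → ['prize', 'box']
```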

2.3 POS tagging with NLTK

nltk.pos_tag(tokens)

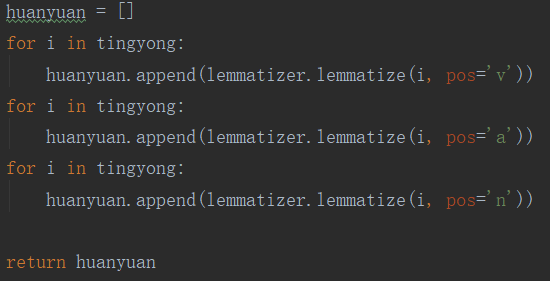

2.4 Lemmatisation (reducing words to their base form)

from nltk.stem import WordNetLemmatizer

lemmatizer = WordNetLemmatizer()

lemmatizer.lemmatize('leaves') # pos defaults to noun

lemmatizer.lemmatize('best',pos='a')

lemmatizer.lemmatize('made',pos='v')

In general, tokenize first, then POS-tag the tokens, and only then lemmatize each word according to its POS tag.
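To lemmatize by POS, the Penn Treebank tags that `nltk.pos_tag` returns have to be translated into the single-letter POS codes that `WordNetLemmatizer.lemmatize` accepts. The following is a sketch of one common mapping, not necessarily the author's; both helper names are hypothetical, and the lemmatizer is passed in as a callable so the mapping can be tried without any NLTK data.

```python
def penn_to_wordnet(tag):
    """Map a Penn Treebank tag to a WordNet POS letter ('a', 'v', 'r' or 'n')."""
    if tag.startswith("J"):
        return "a"  # adjectives: JJ, JJR, JJS
    if tag.startswith("V"):
        return "v"  # verbs: VB, VBD, VBG, ...
    if tag.startswith("R"):
        return "r"  # adverbs: RB, RBR, RBS
    return "n"      # everything else falls back to noun, the lemmatizer's default


def lemmatize_tagged(tagged_tokens, lemmatize):
    """Lemmatize (word, tag) pairs once each, using the mapped POS."""
    return [lemmatize(word, pos=penn_to_wordnet(tag)) for word, tag in tagged_tokens]


for tag in ["NNS", "VBD", "JJS", "RB"]:
    print(tag, "->", penn_to_wordnet(tag))
```

With NLTK and its data installed, this would be called as `lemmatize_tagged(nltk.pos_tag(tokens), WordNetLemmatizer().lemmatize)`.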

2.5 Writing the preprocessing function

def preprocessing(text):

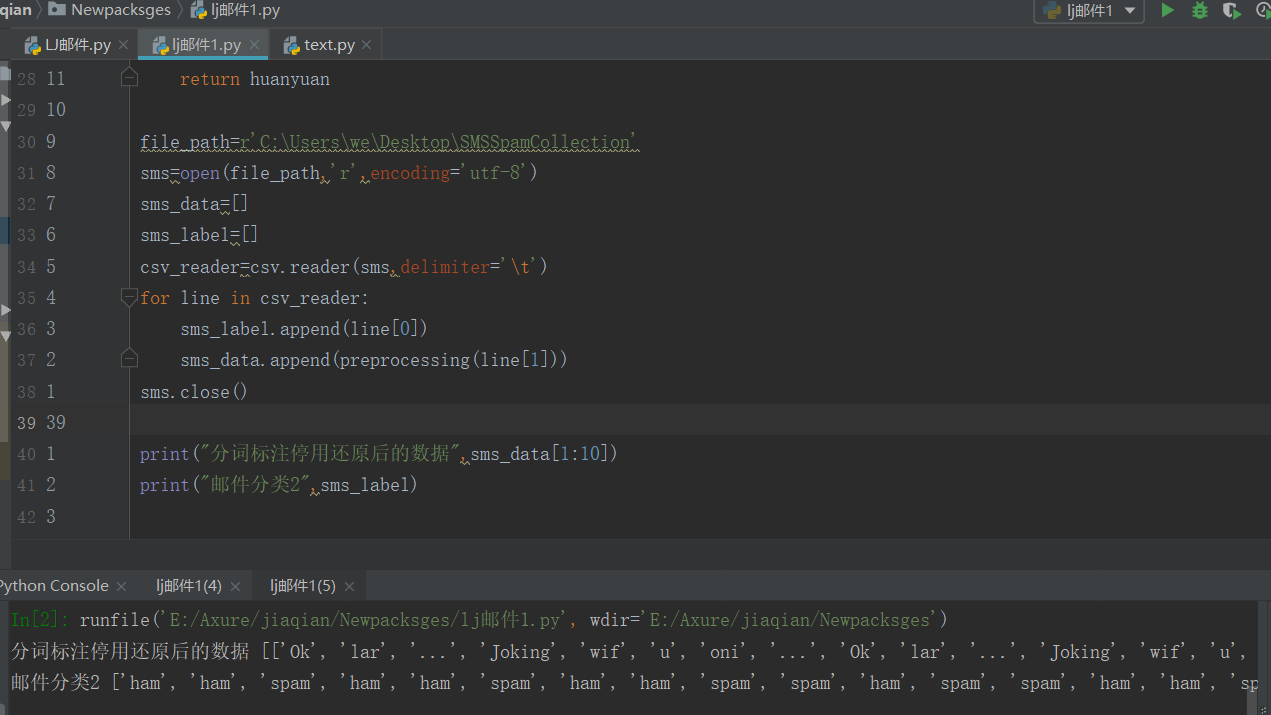

sms_data.append(preprocessing(line[1])) # preprocess every message

    import csv

    import nltk
    from nltk.corpus import stopwords
    from nltk.stem import WordNetLemmatizer


    def preprocessing(text):
        # Tokenization: split into sentences, then each sentence into words
        fenge = []
        for sent in nltk.sent_tokenize(text):
            for word in nltk.word_tokenize(sent):
                fenge.append(word)

        # Stop-word removal
        stops = stopwords.words("english")
        tingyong = [i for i in fenge if i not in stops]

        # POS tagging: a list of (word, tag) pairs
        tagged = nltk.pos_tag(tingyong)

        # Lemmatization: each word once, according to its POS tag
        lemmatizer = WordNetLemmatizer()
        huanyuan = []
        for word, tag in tagged:
            if tag.startswith('V'):
                pos = 'v'
            elif tag.startswith('J'):
                pos = 'a'
            else:
                pos = 'n'
            huanyuan.append(lemmatizer.lemmatize(word, pos=pos))
        return huanyuan


    file_path = r'C:\Users\we\Desktop\SMSSpamCollection'
    sms_data = []
    sms_label = []
    with open(file_path, 'r', encoding='utf-8') as sms:
        # QUOTE_NONE keeps quotation marks inside a message from merging rows
        csv_reader = csv.reader(sms, delimiter='\t', quoting=csv.QUOTE_NONE)
        for line in csv_reader:
            sms_label.append(line[0])
            sms_data.append(preprocessing(line[1]))

    print("Data after tokenization, stop-word removal, tagging and lemmatization:", sms_data[1:10])
    print("Email labels:", sms_label)

3. Training and test sets

4. Word vectors

5. Model