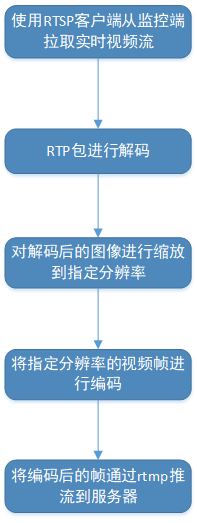

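Real-time surveillance video usually has a bitrate of 5 Mbps or more. Watching it live on, for example, a mobile phone puts a heavy load on bandwidth, so it is worth first lowering the resolution and then lowering the bitrate. All processing runs on the backend server. Roughly, the stream is decoded, scaled down, and re-encoded.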
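The encoder below uses YUV420P, which needs an even width and height, and keeping the camera's 16:9 shape avoids a stretched picture. Here is a minimal sketch of choosing such a target size. It assumes you want to fit the picture inside a maximum box. `fit_even` is a hypothetical helper, not an FFmpeg API:

```c
#include <assert.h>

/* Hypothetical helper: fit a src_w x src_h picture inside max_w x max_h
 * without changing its aspect ratio, then round both sides down to even
 * numbers because YUV420P needs even dimensions. */
static void fit_even(int src_w, int src_h, int max_w, int max_h,
                     int *out_w, int *out_h)
{
    assert(src_w > 0 && src_h > 0 && max_w > 0 && max_h > 0);
    /* compare src_w/src_h with max_w/max_h by integer cross-multiplication */
    if ((long long)src_w * max_h >= (long long)max_w * src_h) {
        *out_w = max_w;                                      /* width is the limiting side */
        *out_h = (int)((long long)src_h * max_w / src_w);
    } else {
        *out_h = max_h;                                      /* height is the limiting side */
        *out_w = (int)((long long)src_w * max_h / src_h);
    }
    *out_w &= ~1;  /* round down to an even number */
    *out_h &= ~1;
}
```

For a 2560*1440 camera and a 640x480 box, this gives 640x360. That is 1/16 of the source pixels (2560*1440 = 3,686,400 vs 640*360 = 230,400).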

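The Hikvision camera outputs 2560*1440. FFmpeg handles this pipeline well. There are many examples online, but none of them is complete. A better approach is to follow the examples that ship with the FFmpeg source; for ffmpeg-4.1 they are in \ffmpeg-4.1\doc\examples. Based on them, I wrapped decoding, scaling and encoding into one class. The code follows: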

/*

created:2019/04/02

*/

#ifndef RTP_RESAMPLE_TASK_H__

#define RTP_RESAMPLE_TASK_H__

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

extern "C" {

#include <libavcodec/avcodec.h>

#include <libavutil/avutil.h>

#include <libavutil/opt.h>

#include <libavutil/imgutils.h>

#include <libavutil/base64.h>

#include <libavutil/parseutils.h>

#include <libswscale/swscale.h>

}

#include "Task.h"

#include "TimeoutTask.h"

#include "SVector.h"

typedef void(*EncodedCallbackFunc)(void*, char *, int);

typedef struct RtpData

{

char *data;

int length;

}RtpData;

typedef struct DstVideoInfo

{

int width;

int height;

int samplerate;

}DstVideoInfo;

typedef struct SourceVideoInfo{

int payload_type;

char *codec_type;

int freq_hz;//90000

char *sps;

char *pps;

long profile_level_id;

char *media_header;

}SourceVideoInfo;

typedef struct

{

struct AVCodec *codec;// Codec

struct AVCodecContext *context;// Codec Context

AVCodecParserContext *parser;

struct AVFrame *frame;// Frame

AVPacket *pkt;

int frame_count;

int iWidth;

int iHeight;

int comsumedSize;

int skipped_frame;

// for decoding

void* accumulator;

int accumulator_pos;

int accumulator_size;

char *szSourceDecodedSPS;

int sps_len;

char *szSourceDecodedPPS;

int pps_len;

} X264_Decoder_Handler;

typedef struct

{

struct AVCodec *codec;// Codec

struct AVCodecContext *context;// Codec Context

int frame_count;

struct AVFrame *frame;// Frame

AVPacket *pkt;

Bool16 force_idr;

int quality; // [1-31]

int iWidth;

int iHeight;

int comsumedSize;

int got_picture;

char *encodedBuffer;

int encodedBufferSize;

int encodedSize;

char *rawBuffer;

int rawBufferSize;

struct SwsContext *sws_ctx;

char *sps;

int sps_len;

char *pps;

int pps_len;

FILE *fTest;

} X264_Encoder_Handler;

class RTPResampleTask {

public:

// output width, height, sample rate

RTPResampleTask(const DstVideoInfo *dstVideoInfo, const SourceVideoInfo *sourceVideoInfo, EncodedCallbackFunc func, void *funcArgs);

~RTPResampleTask();

int resample(const char *inputBuffer, int inputSize, char **outputBuffer, int *outputSize);

char *getResampleSPS(int *sps_len){

*sps_len = encoderHandler.sps_len;

return encoderHandler.sps;

};

char *getResamplePPS(int *pps_len){

*pps_len = encoderHandler.pps_len;

return encoderHandler.pps;

};

enum{

STATE_INIT = -1,

STATE_RUNNING = 0,

STATE_WAITING = 1,

STATE_START_DECODE = 2,

STATE_DONE = 3

};

private:

int initEncoder();

int initDecoder();

void deInitEncoder();

void deInitDecoder();

int decodePacket(const char *inputBuffer, int inputSize);

int encodePacket();

private:

UInt32 fState;

UInt32 kIdleTime;

EncodedCallbackFunc callback;

SVector<RtpData *> reEncodedWaitList;

SourceVideoInfo sourceVideoInfo;

DstVideoInfo dstVideoInfo;

X264_Decoder_Handler decoderHandler;

X264_Encoder_Handler encoderHandler;

void *funcArgs;

};

#endif//RTP_RESAMPLE_TASK_H__
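The constructor in the .cpp file below takes the base64 SPS/PPS from the SDP, decodes them, and hands them to the H.264 decoder as Annex B extradata. The target layout can be sketched on its own, assuming the SPS and PPS are already base64-decoded. `build_annexb_extradata` is hypothetical. When the result goes into `AVCodecContext.extradata`, FFmpeg still expects `AV_INPUT_BUFFER_PADDING_SIZE` zeroed bytes after it, which is how `initDecoder()` allocates it.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical helper: join raw SPS and PPS NAL units into one Annex B buffer:
 *   00 00 00 01 <SPS> 00 00 01 <PPS>
 * Returns a malloc'ed buffer (caller frees) and stores its size in *out_len. */
static uint8_t *build_annexb_extradata(const uint8_t *sps, size_t sps_len,
                                       const uint8_t *pps, size_t pps_len,
                                       size_t *out_len)
{
    static const uint8_t sc4[4] = {0x00, 0x00, 0x00, 0x01};
    static const uint8_t sc3[3] = {0x00, 0x00, 0x01};
    size_t len = sizeof(sc4) + sps_len + sizeof(sc3) + pps_len; /* sps_len + pps_len + 7 */
    uint8_t *buf;

    assert(sps != NULL && pps != NULL && out_len != NULL);
    buf = (uint8_t *)malloc(len);
    if (buf == NULL)
        return NULL;
    memcpy(buf, sc4, sizeof(sc4));
    memcpy(buf + sizeof(sc4), sps, sps_len);
    memcpy(buf + sizeof(sc4) + sps_len, sc3, sizeof(sc3));
    memcpy(buf + sizeof(sc4) + sps_len + sizeof(sc3), pps, pps_len);
    *out_len = len;
    return buf;
}
```

The two start codes add 4 + 3 = 7 bytes, so the total is `sps_len + pps_len + 7`.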

/*

created:2019/04/02

*/

#include "RTPResampleTask.h"

#define INBUF_SIZE 4096

#define H264_RTP_PAYLOAD_SIZE 1320

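RTPResampleTask::RTPResampleTask(const DstVideoInfo *dstVideoInfo, const SourceVideoInfo *sourceVideoInfo, EncodedCallbackFunc func , void *funcArgs){

// start from zeroed handler structs so every pointer stays NULL until it is allocated (the deInit* functions rely on this)

memset(&this->decoderHandler, 0, sizeof(this->decoderHandler));

memset(&this->encoderHandler, 0, sizeof(this->encoderHandler));

// keep the caller's settings and callback

this->dstVideoInfo = *dstVideoInfo;

this->sourceVideoInfo = *sourceVideoInfo;

this->callback = func;

uint8_t szDecodedSPS[128] = { 0 };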

this->decoderHandler.sps_len = av_base64_decode((uint8_t*)szDecodedSPS, (const char *)sourceVideoInfo->sps, sizeof(szDecodedSPS));//nSPSLen=24

this->decoderHandler.szSourceDecodedSPS = (char *)malloc(this->decoderHandler.sps_len);

if (this->decoderHandler.szSourceDecodedSPS != NULL){

memcpy(this->decoderHandler.szSourceDecodedSPS, szDecodedSPS, this->decoderHandler.sps_len);

}

uint8_t szDecodedPPS[128] = { 0 };

this->decoderHandler.pps_len = av_base64_decode(( uint8_t*)szDecodedPPS, (const char *)sourceVideoInfo->pps, sizeof(szDecodedPPS));//nPPSLen=4

this->decoderHandler.szSourceDecodedPPS = (char *)malloc(this->decoderHandler.pps_len);

if (this->decoderHandler.szSourceDecodedPPS != NULL){

memcpy(this->decoderHandler.szSourceDecodedPPS, szDecodedPPS, this->decoderHandler.pps_len);

}

this->funcArgs = funcArgs;

kIdleTime = 100;

fState = STATE_INIT;

initEncoder();

initDecoder();

}

RTPResampleTask::~RTPResampleTask(){

deInitEncoder();

deInitDecoder();

}

int RTPResampleTask::initEncoder(){

AVCodecID codec_id = AV_CODEC_ID_H264;

encoderHandler.rawBuffer= NULL;

encoderHandler.rawBufferSize = 0;

encoderHandler.encodedBuffer= NULL;

encoderHandler.encodedBufferSize = 0;

encoderHandler.sws_ctx = NULL;

encoderHandler.pkt = av_packet_alloc();

if (!encoderHandler.pkt) {

printf("encoderHandler.pkt == NULL");

return -1;

}

encoderHandler.codec = avcodec_find_encoder(codec_id); //AV_CODEC_ID_AAC;

if(encoderHandler.codec == NULL ) {

printf("encoderHandler.codec == NULL");

return -1;

}

// allocate the AVCodecContext; every encoder setting and call below goes through it

encoderHandler.context = avcodec_alloc_context3(encoderHandler.codec);

if(encoderHandler.context == NULL ) {

printf("encoderHandler.context == NULL");

return -1;

}

// set the AVCodecContext encoding parameters

struct AVCodecContext *c = encoderHandler.context;

c->codec_id = codec_id;

c->bit_rate = 500000; // 500 kbit/s

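/* resolution must be a multiple of two; 640x480 is 4:3, so a 16:9 source such as 2560x1440 is stretched by sws_scale (640x360 would keep the aspect ratio). dstVideoInfo is not used here. */

c->width = 640;

c->height = 480;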

/* frames per second */

c->time_base = (AVRational){1, 25};

c->framerate = (AVRational){25, 1};

c->gop_size = 25; // one keyframe per second at 25 fps, matching keyint=25 in the x264opts below

c->max_b_frames = 0;

//c->rtp_payload_size = H264_RTP_PAYLOAD_SIZE;

c->pix_fmt = AV_PIX_FMT_YUV420P;

c->codec_type = AVMEDIA_TYPE_VIDEO;

//c->flags|= CODEC_FLAG_GLOBAL_HEADER;

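// libx264 private AVOptions; a later av_opt_set() of the same option overrides an earlier one, so only one preset is set

av_opt_set(c->priv_data, "preset", "ultrafast", 0);

av_opt_set(c->priv_data, "tune","stillimage,fastdecode,zerolatency",0);

// capped CRF: crf=26, VBV max rate 728 kbit/s with a 364 kbit buffer, keyframe interval 25 frames

av_opt_set(c->priv_data, "x264opts","crf=26:vbv-maxrate=728:vbv-bufsize=364:keyint=25",0);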

encoderHandler.frame = av_frame_alloc();

if(encoderHandler.frame == NULL ) {

printf("encoderHandler.frame == NULL");

return -1;

}

encoderHandler.frame->format = c->pix_fmt;

encoderHandler.frame->width = c->width;

encoderHandler.frame->height = c->height;

// allocate refcounted planes for the scaled picture; av_frame_free() in deInitEncoder releases them

if (av_frame_get_buffer(encoderHandler.frame, 32) < 0) {

printf("av_frame_get_buffer failed");

return -1;

}

/* open it */

int ret = avcodec_open2(encoderHandler.context, encoderHandler.codec , NULL);

if (ret < 0) {

fprintf(stderr, "Could not open codec: %d\n", ret);

return -1;

}

encoderHandler.fTest = fopen("/tmp/test_out.h264", "wb");

if (!encoderHandler.fTest) {

fprintf(stderr, "Could not open /tmp/test_out.h264 \n");

return -1;

}

encoderHandler.sps = NULL;

encoderHandler.sps_len = 0;

encoderHandler.pps = NULL;

encoderHandler.pps_len = 0;

return 0;

}

void RTPResampleTask::deInitEncoder(){

if(encoderHandler.context){

avcodec_free_context(&encoderHandler.context);

encoderHandler.context = NULL;

}

if(encoderHandler.frame){

av_frame_free(&encoderHandler.frame);

encoderHandler.frame = NULL;

}

if (encoderHandler.pkt){

av_packet_free(&encoderHandler.pkt);

encoderHandler.pkt = NULL;

}

if (encoderHandler.sws_ctx){

sws_freeContext(encoderHandler.sws_ctx);

encoderHandler.sws_ctx = NULL;

}

if (encoderHandler.fTest){

fclose(encoderHandler.fTest);

}

if (encoderHandler.sps != NULL){

free(encoderHandler.sps);

}

if (encoderHandler.pps != NULL){

free(encoderHandler.pps);

}

}

int RTPResampleTask::initDecoder(){

AVCodecID codec_id = AV_CODEC_ID_H264;

decoderHandler.pkt = av_packet_alloc();

if (!decoderHandler.pkt) {

printf("decoderHandler.pkt == NULL");

return -1;

}

decoderHandler.codec = avcodec_find_decoder(codec_id); //AV_CODEC_ID_AAC;

if(decoderHandler.codec == NULL )

{

printf("decoderHandler.codec == NULL");

return -1;

}

decoderHandler.parser = av_parser_init(decoderHandler.codec->id);

if (!decoderHandler.parser) {

printf("decoderHandler.parser == NULL");

return -1;

}

// allocate the AVCodecContext; every decoder setting and call below goes through it

decoderHandler.context = avcodec_alloc_context3(decoderHandler.codec);

if (!decoderHandler.context) {

printf("decoderHandler.context == NULL");

return -1;

}

int sps_pps_len = decoderHandler.sps_len + decoderHandler.pps_len + 7; // 4-byte SPS start code + 3-byte PPS start code

unsigned char *szSPSPPS = (unsigned char *)av_mallocz(sps_pps_len + 1);

char spsHeader[4] = {0x00, 0x00,0x00, 0x01};

char ppsHeader[3] = {0x00, 0x00, 0x01};

memcpy(szSPSPPS, spsHeader, sizeof(spsHeader));

memcpy(szSPSPPS + sizeof(spsHeader), decoderHandler.szSourceDecodedSPS, decoderHandler.sps_len);

memcpy((void *)(szSPSPPS + sizeof(spsHeader) + decoderHandler.sps_len), ppsHeader, sizeof(ppsHeader));

memcpy((void *)(szSPSPPS + sizeof(spsHeader) + sizeof(ppsHeader) + decoderHandler.sps_len), decoderHandler.szSourceDecodedPPS, decoderHandler.pps_len);

decoderHandler.context->extradata_size = sps_pps_len;

decoderHandler.context->extradata = (uint8_t*)av_mallocz(decoderHandler.context->extradata_size + AV_INPUT_BUFFER_PADDING_SIZE);

memcpy(decoderHandler.context->extradata, szSPSPPS, sps_pps_len);

av_free(szSPSPPS); // the temporary buffer is no longer needed once copied into extradata

decoderHandler.context->time_base.num = 1;

decoderHandler.context->time_base.den = 25;

if (avcodec_open2(decoderHandler.context, decoderHandler.codec, NULL) < 0) {

printf("avcodec_open2 return failed");

return -1;

}

decoderHandler.frame = av_frame_alloc();

if (!decoderHandler.frame) {

printf("decoderHandler.frame is null.");

return -1;

}

printf("initDecoder, width:%d, height:%d", decoderHandler.context->width, decoderHandler.context->height);

return 0;

}

void RTPResampleTask::deInitDecoder(){

if(decoderHandler.frame){

av_frame_free(&decoderHandler.frame);

decoderHandler.frame = NULL;

}

if(decoderHandler.parser){

av_parser_close(decoderHandler.parser);

decoderHandler.parser = NULL;

}

if (decoderHandler.pkt){

av_packet_free(&decoderHandler.pkt);

}

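// extradata belongs to the codec context and is released by avcodec_free_context() below

free(decoderHandler.szSourceDecodedSPS);

decoderHandler.szSourceDecodedSPS = NULL;

free(decoderHandler.szSourceDecodedPPS);

decoderHandler.szSourceDecodedPPS = NULL;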

if(decoderHandler.context){

avcodec_free_context(&decoderHandler.context);

decoderHandler.context = NULL;

}

}

int RTPResampleTask::resample(const char *inputBuffer, int inputSize, char **outputBuffer, int *outputSize){

*outputBuffer = NULL;

*outputSize = 0;

int ret = decodePacket((const char *)inputBuffer, inputSize);

//printf("video resample decodePacket ret:%d outputSize:%d\n", ret, encoderHandler.rawBufferSize);

if (ret == 0){

//scale

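if (encoderHandler.sws_ctx == NULL){

encoderHandler.sws_ctx = sws_getContext(decoderHandler.context->width, decoderHandler.context->height, decoderHandler.context->pix_fmt,

encoderHandler.context->width, encoderHandler.context->height, AV_PIX_FMT_YUV420P,

SWS_BILINEAR, NULL, NULL, NULL);

if (encoderHandler.sws_ctx == NULL){

printf("video resample sws_getContext failed\n");

return -1;

}

}

sws_scale(encoderHandler.sws_ctx, decoderHandler.frame->data,

decoderHandler.frame->linesize, 0, decoderHandler.context->height, encoderHandler.frame->data, encoderHandler.frame->linesize);

// libx264 expects increasing timestamps: use the number of frames sent so far (time_base is 1/25 s)

encoderHandler.frame->pts = encoderHandler.context->frame_number;

ret = encodePacket();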

if (ret != 0){

printf("video resample ret:%d err\n", ret);

return -1;

}

if (encoderHandler.fTest) fwrite(encoderHandler.encodedBuffer, 1, encoderHandler.encodedSize, encoderHandler.fTest); // debug dump

*outputBuffer = encoderHandler.encodedBuffer;

*outputSize = encoderHandler.encodedSize;

int typeIndex = 3;//default 00 00 01

if (encoderHandler.encodedBuffer[3] == 0x01){

typeIndex = 4;

}

if (encoderHandler.encodedBuffer[typeIndex] == 0x67 && encoderHandler.sps == NULL){

printf("video resample has sps:%d \n", encoderHandler.encodedSize);

encoderHandler.sps_len = 0;

int i = 0;

unsigned short find_sps_flag = 0;

while (typeIndex + 3 < encoderHandler.encodedSize){ // stay inside the buffer while looking 3 bytes ahead

if ((encoderHandler.encodedBuffer[typeIndex] == 0x00 &&

encoderHandler.encodedBuffer[typeIndex + 1] == 0x00 &&

encoderHandler.encodedBuffer[typeIndex + 2] == 0x01 &&

encoderHandler.encodedBuffer[typeIndex + 3] == 0x68)

){

find_sps_flag = 1;

break;

}

typeIndex++;

}

if (!find_sps_flag){

return 0;

}

find_sps_flag = 0;

encoderHandler.sps_len = typeIndex;

printf("video resample encoderHandler.sps_len:%d \n", encoderHandler.sps_len);

encoderHandler.sps = (char *)malloc(encoderHandler.sps_len);

if (encoderHandler.sps != NULL){

memcpy((void *)encoderHandler.sps, (void *)encoderHandler.encodedBuffer, encoderHandler.sps_len);

}

encoderHandler.pps_len = 0;

typeIndex = encoderHandler.sps_len + 4;

while (typeIndex + 2 < encoderHandler.encodedSize){ // stay inside the buffer while looking 2 bytes ahead

if (

(encoderHandler.encodedBuffer[typeIndex] == 0x00 &&

encoderHandler.encodedBuffer[typeIndex +1] == 0x00 &&

encoderHandler.encodedBuffer[typeIndex +2] == 0x01)

){

find_sps_flag = 1;

break;

}

typeIndex++;

encoderHandler.pps_len++;

}

if (!find_sps_flag){

return 0;

}

encoderHandler.pps_len += 4;

printf("video resample encoderHandler.pps_len:%d \n", encoderHandler.pps_len);

encoderHandler.pps = (char *)malloc(encoderHandler.pps_len);

if (encoderHandler.pps != NULL){

memcpy((void *)encoderHandler.pps, &encoderHandler.encodedBuffer[encoderHandler.sps_len], encoderHandler.pps_len);

}

}

return 0;

}

return -1;

}

int RTPResampleTask::decodePacket(const char *inputBuffer, int inputSize){

int ret;

uint8_t *data = ( uint8_t *)inputBuffer;

int data_size = inputSize;

//while (data_size > 0)

{

encoderHandler.rawBufferSize = 0;

av_init_packet(decoderHandler.pkt);

decoderHandler.pkt->dts = AV_NOPTS_VALUE;

decoderHandler.pkt->size = (int)inputSize;

decoderHandler.pkt->data = (uint8_t *)inputBuffer;

if (decoderHandler.pkt->size){

ret = avcodec_send_packet(decoderHandler.context, decoderHandler.pkt);

if (ret < 0) {

fprintf(stderr, "decodePacket Error sending a packet for decoding\n");

return -1;

}

ret = avcodec_receive_frame(decoderHandler.context, decoderHandler.frame);

if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF){

return -1;

}

else if (ret < 0) {

fprintf(stderr, "decodePacket Error during decoding :%d\n", ret);

return -1;

}

int xsize = av_image_get_buffer_size(decoderHandler.context->pix_fmt, decoderHandler.context->width, decoderHandler.context->height, 1);

//fprintf(stderr, "decodePacket end decoding :%d, width:%d, height:%d\n", xsize, decoderHandler.context->width, decoderHandler.context->height);

encoderHandler.rawBufferSize = xsize;

//

}else{

return -1;

}

}

return 0;

}

int RTPResampleTask::encodePacket(){

#if 0

int size = avpicture_fill((AVPicture*)encoderHandler.frame, (uint8_t*)inputBuffer, AV_PIX_FMT_YUV420P, encoderHandler.context->width, encoderHandler.context->height);

if (size != inputSize){

/* guard */

printf("RTPResampleTask::encodePacket invalid size: %u<>%u", size, inputSize);

return 0;

}

#endif//encoderHandler.frame

int ret = avcodec_send_frame(encoderHandler.context, encoderHandler.frame);

if (ret < 0) {

printf("RTPResampleTask::encodePacket error sending a frame for encoding, ret:%d\n", ret);

return ret;

}

encoderHandler.encodedSize = 0;

while (ret >= 0)

{

ret = avcodec_receive_packet(encoderHandler.context, encoderHandler.pkt);

if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF){

if (encoderHandler.encodedSize > 0){

return 0;

}

return -1;

} else if (ret < 0) {

printf("RTPResampleTask::encodePacket error during encoding\n");

return ret;

}

if (encoderHandler.encodedBuffer == NULL){

encoderHandler.encodedBufferSize = (encoderHandler.context->width*encoderHandler.context->height * 3)/2;

encoderHandler.encodedBuffer = (char *)malloc(encoderHandler.encodedBufferSize);

}

if (encoderHandler.encodedBufferSize < (encoderHandler.pkt->size + encoderHandler.encodedSize)){

int newSize = encoderHandler.pkt->size + encoderHandler.encodedSize;

char *grown = (char *)realloc(encoderHandler.encodedBuffer, newSize);

if (grown == NULL){

av_packet_unref(encoderHandler.pkt);

return -1;

}

encoderHandler.encodedBuffer = grown;

encoderHandler.encodedBufferSize = newSize; // track the actual capacity of the reallocated buffer

}

if (encoderHandler.encodedBuffer){

memcpy((void *)(encoderHandler.encodedBuffer + encoderHandler.encodedSize), encoderHandler.pkt->data, encoderHandler.pkt->size);

}

encoderHandler.encodedSize += encoderHandler.pkt->size;

//printf("RTPResampleTask::encodePacket success ret:%d\n", encoderHandler.pkt->size);

//if (callback != NULL){

//callback(funcArgs, (char*)encoderHandler.pkt->data, encoderHandler.pkt->size);

//}

av_packet_unref(encoderHandler.pkt);

}

//printf("RTPResampleTask::encodePacket success ret:%d\n", encoderHandler.encodedBufferSize);

return 0;

}
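`resample()` pulls the SPS and PPS out of the encoder output by looking for fixed byte values (0x67, then 00 00 01 68). A more general approach walks the start codes and checks `nal_unit_type`, the low 5 bits of the NAL header byte: 7 is SPS and 8 is PPS. The sketch below assumes the buffer is Annex B. The helpers are hypothetical, and unlike the original they return the NAL unit without its start code.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: find the next start code (00 00 01, or 00 00 00 01)
 * at or after 'from'. Returns the offset of the NAL unit that follows it, or
 * -1. *sc_pos receives the offset where the start code begins. */
static long next_nal(const uint8_t *buf, size_t len, size_t from, size_t *sc_pos)
{
    size_t i;
    for (i = from; i + 3 <= len; i++) {
        if (buf[i] == 0 && buf[i + 1] == 0 && buf[i + 2] == 1) {
            /* a 4-byte start code is an extra zero byte before 00 00 01 */
            *sc_pos = (i > from && buf[i - 1] == 0) ? i - 1 : i;
            return (long)(i + 3);
        }
    }
    return -1;
}

/* Find the first NAL unit of the given type (7 = SPS, 8 = PPS) and return
 * its offset and length, start code excluded. Returns 0 on success, -1 if absent. */
static int find_nal(const uint8_t *buf, size_t len, int type,
                    size_t *off, size_t *nal_len)
{
    size_t sc = 0, next_sc = 0;
    long start = next_nal(buf, len, 0, &sc);

    assert(buf != NULL && off != NULL && nal_len != NULL);
    while (start >= 0 && (size_t)start < len) {
        long next = next_nal(buf, len, (size_t)start, &next_sc);
        size_t end = (next >= 0) ? next_sc : len;  /* NAL ends where the next start code begins */
        if ((buf[start] & 0x1f) == type) {
            *off = (size_t)start;
            *nal_len = end - (size_t)start;
            return 0;
        }
        start = next;
    }
    return -1;
}
```

Calling `find_nal(buf, len, 7, &off, &n)` on an encoded packet that carries an SPS leaves the SPS bytes at `buf + off`, `n` bytes long.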

C version:

#include <stdio.h>

#include <stdlib.h>

#include <fcntl.h>

#include <unistd.h>

#include <netinet/in.h>

#include <string.h>

#include <glib.h> // gboolean, guint32, TRUE/FALSE

#include "libavcodec/avcodec.h"

#include "libavutil/avutil.h"

#include "libavutil/opt.h"

#include "libavutil/imgutils.h"

#include "libavutil/base64.h"

#include "libavutil/parseutils.h"

#include "libswscale/swscale.h"

typedef void(*EncodedCallbackFunc)(void*, char *, int);

typedef struct _RtpData

{

char *data;

int length;

}RtpData;

typedef struct _DstVideoInfo

{

int width;

int height;

int samplerate;

}DstVideoInfo;

typedef struct _SourceVideoInfo{

int payload_type;

char *codec_type;

int freq_hz;//90000

char *sps;

char *pps;

long profile_level_id;

char *media_header;

}SourceVideoInfo;

typedef struct

{

struct AVCodec *codec;// Codec

struct AVCodecContext *context;// Codec Context

AVCodecParserContext *parser;

struct AVFrame *frame;// Frame

AVPacket *pkt;

int frame_count;

int iWidth;

int iHeight;

int comsumedSize;

int skipped_frame;

// for decoding

void* accumulator;

int accumulator_pos;

int accumulator_size;

char *szSourceDecodedSPS;

int sps_len;

char *szSourceDecodedPPS;

int pps_len;

} X264_Decoder_Handler;

typedef struct

{

struct AVCodec *codec;// Codec

struct AVCodecContext *context;// Codec Context

int frame_count;

struct AVFrame *frame;// Frame

AVPacket *pkt;

gboolean force_idr;

int quality; // [1-31]

int iWidth;

int iHeight;

int comsumedSize;

int got_picture;

char *encodedBuffer;

int encodedBufferSize;

int encodedSize;

char *rawBuffer;

int rawBufferSize;

struct SwsContext *sws_ctx;

char *sps;

int sps_len;

char *pps;

int pps_len;

FILE *fTest;

} X264_Encoder_Handler;

// output width, height, sample rate

typedef struct _RTPResampleTask {

guint32 fState;

EncodedCallbackFunc callback;

void *funcArgs;

SourceVideoInfo sourceVideoInfo;

DstVideoInfo dstVideoInfo;

X264_Decoder_Handler decoderHandler;

X264_Encoder_Handler encoderHandler;

}RTPResampleTask;

void initRTPResampleTask(RTPResampleTask *this, const DstVideoInfo *dstVideoInfo, const SourceVideoInfo *sourceVideoInfo, EncodedCallbackFunc func, void *funcArgs);

void deInitRTPResampleTask(RTPResampleTask *this);

int resample(RTPResampleTask *this, const char *inputBuffer, int inputSize, char **outputBuffer, int *outputSize);

char *getResampleSPS(RTPResampleTask *this, int *sps_len){

*sps_len = this->encoderHandler.sps_len;

return this->encoderHandler.sps;

}

char *getResamplePPS(RTPResampleTask *this, int *pps_len){

*pps_len = this->encoderHandler.pps_len;

return this->encoderHandler.pps;

}

#define RTP_VERSION_ 2

#define BUF_SIZE (1024 * 1024* 4)

#define AUDIO_BUF_SIZE (1024 * 2)

typedef struct

{

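// bit-field order below assumes a little-endian target whose compiler allocates bit-fields LSB first (e.g. GCC on x86)

unsigned short cc:4; /* CSRC count */

unsigned short x:1; /* header extension flag */

unsigned short p:1; /* padding flag */

unsigned short v:2; /* protocol version (always 2) */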

unsigned short pt:7; /* payload type */

unsigned short m:1; /* marker bit */

uint16_t seq; /* sequence number */

guint32 ts; /* timestamp */

guint32 ssrc; /* synchronization source */

} rtp_hdr_t;
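The `rtp_hdr_t` bit-field layout only matches the wire format on targets with LSB-first bit-field allocation. The H.264 RTP parser below also assumes the payload always starts at byte 12. A portable alternative reads the RFC 3550 fixed header byte by byte and also skips CSRCs, the header extension, and padding. This is a sketch; `rtp_info` and `parse_rtp` are hypothetical names.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical portable view of the RTP fixed header (RFC 3550). */
typedef struct {
    unsigned version, padding, extension, csrc_count, marker, payload_type;
    uint16_t seq;
    uint32_t timestamp, ssrc;
    size_t payload_offset;   /* where the payload starts */
    size_t payload_len;      /* payload bytes, padding removed */
} rtp_info;

/* Returns 0 on success, -1 if the packet is malformed. */
static int parse_rtp(const uint8_t *p, size_t n, rtp_info *r)
{
    size_t off = 12, pad = 0;

    assert(p != NULL && r != NULL);
    if (n < 12)
        return -1;
    r->version      = p[0] >> 6;
    r->padding      = (p[0] >> 5) & 1;
    r->extension    = (p[0] >> 4) & 1;
    r->csrc_count   = p[0] & 0x0f;
    r->marker       = p[1] >> 7;
    r->payload_type = p[1] & 0x7f;
    r->seq          = (uint16_t)((p[2] << 8) | p[3]);
    r->timestamp    = ((uint32_t)p[4] << 24) | ((uint32_t)p[5] << 16) | ((uint32_t)p[6] << 8) | p[7];
    r->ssrc         = ((uint32_t)p[8] << 24) | ((uint32_t)p[9] << 16) | ((uint32_t)p[10] << 8) | p[11];
    if (r->version != 2)
        return -1;
    off += 4u * r->csrc_count;                 /* skip the CSRC list */
    if (r->extension) {                        /* 4-byte extension header + length * 4 bytes */
        if (n < off + 4)
            return -1;
        off += 4 + 4u * (size_t)((p[off + 2] << 8) | p[off + 3]);
    }
    if (r->padding)
        pad = p[n - 1];                        /* last byte holds the padding count */
    if (off + pad > n)
        return -1;
    r->payload_offset = off;
    r->payload_len = n - off - pad;
    return 0;
}
```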

typedef struct CH264_RTP_UNPACK{

rtp_hdr_t m_RTP_Header ;

char m_H264PAYLOADTYPE ;

char *m_pBuf ;

gboolean m_bSPSFound ;

gboolean m_bWaitKeyFrame ;

char *m_pStart ;

char *m_pEnd ;

guint32 m_dwSize;

guint32 m_wSeq ;

guint32 m_ssrc ;

uint8_t* m_pSPS;

uint8_t* m_pPPS;

uint16_t m_iSPSLen;

uint16_t m_iPPSLen;

}CH264_RTP_UNPACK;

typedef struct CAAC_RTP_UNPACK {

rtp_hdr_t m_RTP_Header ;

char *m_pBuf;

int g_fd;

guint32 m_iSeq ;

uint8_t m_pSpecificInfo[4];

}CAAC_RTP_UNPACK;

void initH264 (CH264_RTP_UNPACK *self, unsigned char H264PAYLOADTYPE/* = 96 */);

void deInitH264(CH264_RTP_UNPACK *self);

//pBuf is the H264 RTP video packet, nSize is the RTP packet length in bytes, outSize receives the length of the output video frame in bytes.

//Returns a pointer to the video frame data. The input data may be modified.

char* Parse_RTP_Packet_H264 (CH264_RTP_UNPACK *self, char *pBuf, unsigned short nSize, int *outSize, guint32* timestamp);

void SetLostPacket(CH264_RTP_UNPACK *self);

void initAAC (CAAC_RTP_UNPACK *self, unsigned char H264PAYLOADTYPE /*= 96*/ );

void deInitAAC(CAAC_RTP_UNPACK *self);

char* Parse_RTP_Packet_AAC (CAAC_RTP_UNPACK *self, char *pBuf, unsigned short nSize, int *outSize, guint32* timestamp);

void addADTStoPacket(CAAC_RTP_UNPACK *self, uint8_t* adts, int packetLen);

void addADTStoPacket2(CAAC_RTP_UNPACK *self, uint8_t* adts_header, int frame_length);

//********************************* H264 ****/

const uint8_t start_sequence[] = { 0, 0, 0, 0x01 };

uint8_t adts[7] = {0};

// one_piece

unsigned char _sps[] = {0x67,0x64,0x00,0x1F,0xAC,0xD9,0x40,0xD4,0x3D,0xA1,0x00,0x01,0x00,0x00,0x03,0x00,0x34,0xD9,0x96,0x0F,0x18,0x31,0x96}; //23

unsigned char _pps[] = {0x68,0xEB,0xE3,0xCB,0x22,0xC0}; // 6

void initH264 (CH264_RTP_UNPACK *this, unsigned char H264PAYLOADTYPE/* = 96 */)

{

memset(this, 0, sizeof(*this)); // clear the whole CH264_RTP_UNPACK so m_pSPS/m_pPPS start as NULL

this->m_pBuf = (char *)malloc(BUF_SIZE);

if ( this->m_pBuf == NULL ){

return ;

}

memset(this->m_pBuf, 0, BUF_SIZE);

this->m_H264PAYLOADTYPE = H264PAYLOADTYPE ;

this->m_pEnd = this->m_pBuf + BUF_SIZE ;

this->m_pStart = this->m_pBuf ;

this->m_dwSize = 0 ;

this->m_iSPSLen = 0;

this->m_iPPSLen = 0;

}

void deInitH264(CH264_RTP_UNPACK *this)

{

free(this->m_pBuf);

if(this->m_pSPS) free(this->m_pSPS);

if(this->m_pPPS) free(this->m_pPPS);

}

char* Parse_RTP_Packet_H264 (CH264_RTP_UNPACK *this, char *pBuf, unsigned short nSize, int *outSize, guint32* timestamp)

{

if ( nSize <= 12 ) {

return NULL ;

}

char *head = (char*)&this->m_RTP_Header ;

head[0] = pBuf[0] ; // v:2 p:1 x:1 cc:4

head[1] = pBuf[1] ; // m:1 PT:7

// ntohs

this->m_RTP_Header.seq = ntohs(*((uint16_t*)(pBuf+2))); // 2Byte

this->m_RTP_Header.ts = ntohl(*((guint32*)(pBuf+4))); // 4Byte

// this->m_RTP_Header.ssrc = ntohl(*((guint32*)(pBuf+8))); // 4Byte

// printf(" this->m_RTP_Header.ts: %ld\n", this->m_RTP_Header.ts);

char *pPayload = pBuf + 12 ;

int16_t payloadSize = nSize - 12;

unsigned char padding = ((head[0] & 0x20) >> 5);

unsigned int paddinglength = 0;

if (padding & 0x1){

paddinglength = *(uint8_t*)(pBuf+(nSize -1));

//printf("Packet has padding Flg, seq:%d, padd:%d\n", this->m_RTP_Header.seq, paddinglength);

payloadSize -= paddinglength;

}

if (payloadSize <= 0){

return NULL;

}

int PayloadType = pPayload[0] & 0x1f ; // type : end 5

int NALType = PayloadType ;

// printf("NALType =%d, seq0=%d\n", NALType, this->m_RTP_Header.seq);

char spsHeader[4] = {0x00, 0x00, 0x00, 0x01};

char ppsHeader[3] = {0x00, 0x00, 0x01};

if ( (NALType == 0x07 || NALType == 0x08) && !this->m_bSPSFound ) // SPS PPS

{

this->m_wSeq = this->m_RTP_Header.seq ;

if ( NALType == 0x07 ){ // SPS

this->m_pSPS = (uint8_t*)malloc(payloadSize + sizeof(spsHeader));

memcpy(this->m_pSPS, spsHeader, sizeof(spsHeader));

memcpy(this->m_pSPS + sizeof(spsHeader), pPayload, payloadSize);

this->m_iSPSLen = payloadSize + sizeof(spsHeader);

printf("Get SPS(nal_type=0x07)\n");

memset(this->m_pStart, 0, BUF_SIZE);

this->m_pBuf = this->m_pStart;

memcpy(this->m_pBuf, this->m_pSPS, this->m_iSPSLen);

*outSize = this->m_iSPSLen;

*timestamp = this->m_RTP_Header.ts;

return this->m_pStart;

} else{

this->m_pPPS = (uint8_t*)malloc(payloadSize + sizeof(ppsHeader));

memcpy(this->m_pPPS, ppsHeader, sizeof(ppsHeader));

memcpy(this->m_pPPS + sizeof(ppsHeader), pPayload, payloadSize);

this->m_iPPSLen = payloadSize + sizeof(ppsHeader);

this->m_bSPSFound = TRUE ;

printf("Get PPS(nal_type=0x08)\n");

memset(this->m_pStart, 0, BUF_SIZE);

this->m_pBuf = this->m_pStart;

memcpy(this->m_pBuf, this->m_pPPS, this->m_iPPSLen);

*outSize = this->m_iPPSLen;

*timestamp = this->m_RTP_Header.ts;

return this->m_pStart;

}

}

if ( !this->m_bSPSFound ){

printf("this->m_bSPSFound=%d, NALType=%d\n", this->m_bSPSFound, NALType);

#if 1 // one_piece

this->m_iSPSLen = sizeof(_sps)/sizeof(_sps[0]);

this->m_pSPS = (uint8_t*)malloc(this->m_iSPSLen);

memcpy(this->m_pSPS, _sps, this->m_iSPSLen);

this->m_iPPSLen = sizeof(_pps)/sizeof(_pps[0]);

this->m_pPPS = (uint8_t*)malloc(this->m_iPPSLen);

memcpy(this->m_pPPS, _pps, this->m_iPPSLen);

this->m_bSPSFound = TRUE;

#endif

return NULL ;

}

///////////////////////////////////////////////////////////////

/*if (NALType >= 1 && NALType <= 23)

NALType = 1;

*/

if(NALType >= 28){ // FU_A

if ( payloadSize < 2 ){

return NULL ;

}

// ffmpeg rtpdec_h264.c

// fu_indicator fu_header [2 Byte]

uint8_t nal = 0;

uint8_t fu_indicator = pPayload[0];

uint8_t fu_header = pPayload[1];

uint8_t start_bit = fu_header >> 7; //= *(pPayload + 1) & 0x80;

uint8_t end_bit = fu_header & 0x40 ; //*(pPayload + 1) & 0x40;

NALType = fu_header & 0x1f; //*(pPayload+1) & 0x1f ;

// rebuild the original NAL header: F and NRI bits from the FU indicator, type from the FU header (as in ffmpeg rtpdec_h264.c)

nal = (fu_indicator & 0xe0) | NALType;

// printf("NALType =%d, end_bit=%d, seq=%d\n", NALType, end_bit, this->m_RTP_Header.seq);

// skip the fu_indicator and fu_header

pPayload += 2;

payloadSize -= 2;

if(start_bit){

//printf("start_bit =1, NALType =%d, seq=%d\n", NALType, this->m_RTP_Header.seq);

memset(this->m_pStart, 0, BUF_SIZE);

this->m_pBuf = this->m_pStart;

// every reassembled NAL unit starts with a 4-byte start code followed by its rebuilt NAL header byte

memcpy(this->m_pBuf, start_sequence, sizeof(start_sequence));

memcpy(this->m_pBuf+sizeof(start_sequence), &nal, 1);

this->m_dwSize = sizeof(start_sequence) + 1;

}

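if (this->m_dwSize + payloadSize > BUF_SIZE){

// the frame does not fit into the reassembly buffer: drop it

this->m_dwSize = 0;

return NULL;

}

memcpy(this->m_pBuf + this->m_dwSize, pPayload, payloadSize);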

// printf("NALType =%d, seq=%d, payloadSize=%d, this->m_dwSize=%d\n", NALType, this->m_RTP_Header.seq, payloadSize, this->m_dwSize);

this->m_dwSize += payloadSize ;

if(this->m_RTP_Header.m){

//printf("this->m_dwSize=%d, end_bit =%d, NALType =%d, seq=%d\n", this->m_dwSize, end_bit, NALType, this->m_RTP_Header.seq);

*outSize = this->m_dwSize;

*timestamp = this->m_RTP_Header.ts;

return this->m_pStart;

}

return NULL;

}else{

memset(this->m_pStart, 0, BUF_SIZE);

this->m_pBuf = this->m_pStart;

memcpy(this->m_pBuf, start_sequence, sizeof(start_sequence));

memcpy(this->m_pBuf + sizeof(start_sequence), pPayload, payloadSize);

*outSize = payloadSize + sizeof(start_sequence);

*timestamp = this->m_RTP_Header.ts;

return this->m_pStart;

}

}
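The FU-A branch above rebuilds each fragmented NAL unit and returns it when the RTP marker bit is set. The sketch below does the same reassembly for a single NAL unit, but keys on the FU header's S (start) and E (end) bits from RFC 6184 instead of the marker bit. `fua_state` and `fua_feed` are hypothetical. A receiver feeding FFmpeg without a parser would typically still group the NAL units of one frame (e.g. by the marker bit) before decoding.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical minimal FU-A (RFC 6184, NAL type 28) reassembler that writes
 * one Annex B NAL unit (00 00 00 01 + NAL header + data) into 'out'. */
typedef struct {
    uint8_t *out;   /* caller-provided buffer */
    size_t cap;     /* its capacity */
    size_t len;     /* bytes written so far; 0 = no NAL in progress */
} fua_state;

/* Feed one RTP payload. Returns 1 when a complete NAL unit is in st->out
 * (st->len bytes), 0 if more fragments are needed, -1 on error or loss. */
static int fua_feed(fua_state *st, const uint8_t *pl, size_t n)
{
    uint8_t ind, hdr;

    assert(st != NULL && pl != NULL);
    if (n < 2 || (pl[0] & 0x1f) != 28)
        return -1;                                     /* not an FU-A packet */
    ind = pl[0];
    hdr = pl[1];
    if (hdr & 0x80) {                                  /* S bit: first fragment */
        if (st->cap < 5)
            return -1;
        memcpy(st->out, "\x00\x00\x00\x01", 4);
        st->out[4] = (uint8_t)((ind & 0xe0) | (hdr & 0x1f)); /* F+NRI from indicator, type from header */
        st->len = 5;
    } else if (st->len == 0) {
        return -1;                                     /* fragment without its start: drop */
    }
    if (st->len + (n - 2) > st->cap) {
        st->len = 0;
        return -1;                                     /* would overflow the buffer */
    }
    memcpy(st->out + st->len, pl + 2, n - 2);
    st->len += n - 2;
    return (hdr & 0x40) ? 1 : 0;                       /* E bit: last fragment */
}
```

After consuming a completed NAL unit, reset `st.len = 0` so that a stray middle fragment is rejected rather than appended.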

void SetLostPacket(CH264_RTP_UNPACK *this)

{

this->m_bSPSFound = FALSE;

this->m_bWaitKeyFrame = TRUE ;

this->m_pStart = this->m_pBuf ;

this->m_dwSize = 0 ;

}

void initAAC(CAAC_RTP_UNPACK *this, unsigned char H264PAYLOADTYPE)

{

this->m_pBuf = (char *)malloc(AUDIO_BUF_SIZE);

if ( this->m_pBuf == NULL ){

return ;

}

memset(this->m_pBuf, 0, AUDIO_BUF_SIZE);

memset(this->m_pSpecificInfo, 0 , 4);

this->m_iSeq = 0;

this->g_fd = open("/usr/local/movies/one_p.aac", O_CREAT | O_WRONLY , 0666);

if(this->g_fd != -1)

{

printf("open a aac file ok\n");

}

}

void deInitAAC(CAAC_RTP_UNPACK *this)

{

if (this->m_pBuf != NULL) {

free(this->m_pBuf);

this->m_pBuf = NULL;

}

if(this->g_fd != -1){

close(this->g_fd);

}

}

char* Parse_RTP_Packet_AAC( CAAC_RTP_UNPACK *this, char *pBuf, unsigned short nSize, int *outSize, guint32* timestamp)

{

if ( nSize <= 12 ) {

return NULL ;

}

char* payloadbuf = pBuf;

int currentPos = 0;

//char *head = (char*)&this->m_RTP_Header ;

//head[0] = pBuf[0] ; // v:2 p:1 x:1 cc:4

//head[1] = pBuf[1] ; // m:1 PT:7

// ntohs

this->m_RTP_Header.seq = ntohs(*((uint16_t*)(pBuf+2))); // 2Byte

// this->m_RTP_Header.ts = ntohl(*((guint32*)(pBuf+4))); // 4Byte

*timestamp = ntohl(*((guint32*)(pBuf+4))); // 4Byte

// this->m_RTP_Header.ssrc = ntohl(*((guint32*)(pBuf+8))); // 4Byte

printf("this->m_iSeq=%u, this->m_RTP_Header.seq: %u, timestamp=%u\n", (unsigned)this->m_iSeq, (unsigned)this->m_RTP_Header.seq, (unsigned)*timestamp);

if(this->m_iSeq != 0 && this->m_iSeq == this->m_RTP_Header.seq)

return NULL;

this->m_iSeq = this->m_RTP_Header.seq;

uint16_t us_header_len = ntohs(*((uint16_t*)(pBuf+12)));

uint16_t us_header_plen = us_header_len >> 3;

uint16_t us_header = ntohs(*((uint16_t*)(pBuf+14)));

uint16_t payloadLen = us_header >> 3;

printf("us_header_len=%d, payloadLen=%d\n", us_header_plen, payloadLen);

// RFC 3640 AAC-hbr (sizeLength=13, indexLength=3): the AU-headers section (us_header_plen bytes)
// holds one 16-bit AU-header per access unit; its upper 13 bits are the AU size in bytes

uint16_t au_size = us_header_plen;

printf("Parse_RTP_Packet nSize=%d, au_size=%d\n", nSize, au_size);

if (nSize < 14 + au_size)

return NULL ;

currentPos = 14 + au_size; // the first access unit starts right after the AU-headers section

payloadbuf = pBuf + currentPos;

int required_size = 0;

int i = 0;

for (i = 0; i + 1 < au_size; i += 2) {

uint16_t sample_size = (uint16_t)((((uint8_t)pBuf[14 + i] << 8) | (uint8_t)pBuf[14 + i + 1]) >> 3);

printf("Parse_RTP_Packet sample_size=%d\n", sample_size);

char* sample = payloadbuf + required_size;

if (nSize < (required_size + currentPos + sample_size) || required_size + sample_size > AUDIO_BUF_SIZE) {

printf(" error \n");

return NULL;

}

memcpy(this->m_pBuf+ required_size , sample, sample_size);

required_size += sample_size;

}

*outSize = required_size;

//*outSize = (nSize - (12 + au_size));

return this->m_pBuf;

}
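`Parse_RTP_Packet_AAC` follows the RFC 3640 AAC-hbr layout. This sketch assumes `sizeLength=13` and `indexLength=3` in the camera's SDP `fmtp` line, so check that line first. Each AU-header is then 16 bits and the AU size is its upper 13 bits, so sizes above 255 need no special casing. The hypothetical `split_aac_hbr` splits one payload into its access units:

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical splitter for an RFC 3640 AAC-hbr payload (assumes
 * sizeLength=13, indexLength=3). 'pl' points just after the RTP header.
 * Fills offs[]/sizes[] with up to 'max' access units and returns their
 * count, or -1 for a malformed packet. */
static int split_aac_hbr(const uint8_t *pl, size_t n,
                         size_t *offs, size_t *sizes, int max)
{
    size_t hdr_bits, hdr_bytes, pos;
    int count = 0;

    assert(pl != NULL && offs != NULL && sizes != NULL);
    if (n < 2)
        return -1;
    hdr_bits = ((size_t)pl[0] << 8) | pl[1];          /* AU-headers-length, in bits */
    hdr_bytes = (hdr_bits + 7) / 8;
    pos = 2 + hdr_bytes;                              /* first AU follows the headers */
    if (pos > n)
        return -1;
    for (size_t h = 0; h + 16 <= hdr_bits; h += 16) { /* one 16-bit AU-header per AU */
        size_t b = 2 + h / 8;
        size_t au = (((size_t)pl[b] << 8) | pl[b + 1]) >> 3; /* upper 13 bits = AU size */
        if (count == max || pos + au > n)
            return -1;
        offs[count] = pos;
        sizes[count] = au;
        pos += au;
        count++;
    }
    return count;
}
```

Each `pl + offs[k]` / `sizes[k]` pair is one raw AAC frame that can then get an ADTS header.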

/**

* Add ADTS header at the beginning of each and every AAC packet.

* This is needed as MediaCodec encoder generates a packet of raw

* AAC data.

*

* Note the packetLen must count in the ADTS header itself !!! .

*Note: packetLen here is the raw AAC packet length + 7 (the 7-byte ADTS header).

**/

void addADTStoPacket(CAAC_RTP_UNPACK *self, uint8_t* adts, int packetLen) {

int profile = 2; //AAC LC,MediaCodecInfo.CodecProfileLevel.AACObjectLC;

int freqIdx = 4; //44.1 kHz: index of 44100 in avpriv_mpeg4audio_sample_rates (see the comment below, taken from the ffmpeg source)

int chanCfg = 2; //see channel_configuration below; 2 = stereo (two channels)

/*int avpriv_mpeg4audio_sample_rates[] = {

96000, 88200, 64000, 48000, 44100, 32000,

24000, 22050, 16000, 12000, 11025, 8000, 7350

};

channel_configuration: number of channels (chanCfg)

0: Defined in AOT Specifc Config

1: 1 channel: front-center

2: 2 channels: front-left, front-right

3: 3 channels: front-center, front-left, front-right

4: 4 channels: front-center, front-left, front-right, back-center

5: 5 channels: front-center, front-left, front-right, back-left, back-right

6: 6 channels: front-center, front-left, front-right, back-left, back-right, LFE-channel

7: 8 channels: front-center, front-left, front-right, side-left, side-right, back-left, back-right, LFE-channel

8-15: Reserved

*/

// fill in ADTS data

adts[0] = (uint8_t)0xFF;

adts[1] = (uint8_t)0xF1;

adts[2] = (uint8_t)(((profile-1)<<6) + (freqIdx<<2) +(chanCfg>>2));

adts[3] = (uint8_t)(((chanCfg&3)<<6) + (packetLen>>11));

adts[4] = (uint8_t)((packetLen&0x7FF) >> 3);

adts[5] = (uint8_t)(((packetLen&7)<<5) + 0x1F);

adts[6] = (uint8_t)0xFC;

}

void addADTStoPacket2(CAAC_RTP_UNPACK *self, uint8_t* adts_header, int frame_length) {

unsigned int profile = 1; // aac -lc ,[profile, the MPEG-4 Audio Object Type minus 1]

unsigned int num_data_block = 0; // number_of_raw_data_blocks_in_frame = (raw data blocks in this ADTS frame) - 1; one AAC frame here

unsigned int freqIdx = 4;

unsigned int chanCfg = 2;

// frame_length must already include the 7-byte header

//frame_length += 7;

/* We want the same metadata */

/* Generate ADTS header */

if(adts_header == NULL) return;

/* Sync point over a full byte */

adts_header[0] = 0xFF;

/* Sync point continued over first 4 bits + 4 bits

* ID:MPEG Version: 0 for MPEG-4, 1 for MPEG-2

* (ID, layer, protection) = (0, 00, 1)

*/

adts_header[1] = 0xF1;

/* Object type over first 2 bits */

adts_header[2] = profile << 6;//

/* rate index over next 4 bits */

adts_header[2] |= (freqIdx << 2);

/* channels over last 2 bits */

adts_header[2] |= (chanCfg & 0x4) >> 2;

/* channels continued over next 2 bits + 4 bits at zero */

adts_header[3] = (chanCfg & 0x3) << 6;

/* frame size over last 2 bits */

adts_header[3] |= (frame_length & 0x1800) >> 11;

/* frame size continued over full byte */

adts_header[4] = (frame_length & 0x1FF8) >> 3;

/* frame size continued first 3 bits */

adts_header[5] = (frame_length & 0x7) << 5;

/* buffer fullness (0x7FF for VBR) over 5 last bits*/

adts_header[5] |= 0x1F;

/* buffer fullness (0x7FF for VBR) continued over 6 first bits + 2 zeros

* number of raw data blocks */

adts_header[6] = 0xFC; // last 6 bits of buffer fullness (all ones) + 2 bits for the raw data block count, set below

adts_header[6] |= num_data_block & 0x03; //Set raw Data blocks.

}
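Both ADTS writers hard-code freqIdx = 4, which is only correct for 44.1 kHz audio. If the source AAC stream uses a different rate, the index can be looked up in the same table that is quoted in the comment in addADTStoPacket. `adts_freq_index` is a hypothetical helper, shown as a sketch:

```c
/* Sampling-frequency table quoted above (avpriv_mpeg4audio_sample_rates in FFmpeg). */
static const int kAdtsSampleRates[] = {
    96000, 88200, 64000, 48000, 44100, 32000,
    24000, 22050, 16000, 12000, 11025, 8000, 7350
};

/* Return the ADTS sampling_frequency_index for rate, or -1 if it is not in the table. */
int adts_freq_index(int rate)
{
    int n = (int)(sizeof kAdtsSampleRates / sizeof kAdtsSampleRates[0]);
    for (int i = 0; i < n; i++) {
        if (kAdtsSampleRates[i] == rate)
            return i;
    }
    return -1;
}
```

A return value of -1 means the rate is not one of the standard entries, so it cannot be written into the 4-bit field used above.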

//********************************* H264 END ****/

//********************************* RESAMPLE ****/

#define INBUF_SIZE 4096

#define H264_RTP_PAYLOAD_SIZE 1320

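/* #define TEST_SAVE_H264 */ /* uncomment to dump the re-encoded stream to /tmp/test_out.h264; the checks below use #ifdef, so even "#define TEST_SAVE_H264 0" would enable it */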

int initEncoder(RTPResampleTask *this);

int initDecoder(RTPResampleTask *this);

void deInitEncoder(RTPResampleTask *this);

void deInitDecoder(RTPResampleTask *this);

int decodePacket(RTPResampleTask *this, const char *inputBuffer, int inputSize);

int encodePacket(RTPResampleTask *this);

void initRTPResampleTask(RTPResampleTask *this, const DstVideoInfo *dstVideoInfo, const SourceVideoInfo *sourceVideoInfo, EncodedCallbackFunc func , void *funcArgs){

if (this == NULL || dstVideoInfo == NULL || sourceVideoInfo == NULL){

return;

}

uint8_t szDecodedSPS[128] = { 0 };

this->decoderHandler.sps_len = av_base64_decode((uint8_t*)szDecodedSPS, (const char *)sourceVideoInfo->sps, sizeof(szDecodedSPS));//nSPSLen=24

this->decoderHandler.szSourceDecodedSPS = (char *)malloc(this->decoderHandler.sps_len);

if (this->decoderHandler.szSourceDecodedSPS != NULL){

memcpy(this->decoderHandler.szSourceDecodedSPS, szDecodedSPS, this->decoderHandler.sps_len);

}

uint8_t szDecodedPPS[128] = { 0 };

this->decoderHandler.pps_len = av_base64_decode(( uint8_t*)szDecodedPPS, (const char *)sourceVideoInfo->pps, sizeof(szDecodedPPS));//nPPSLen=4

this->decoderHandler.szSourceDecodedPPS = (char *)malloc(this->decoderHandler.pps_len);

if (this->decoderHandler.szSourceDecodedPPS != NULL){

memcpy(this->decoderHandler.szSourceDecodedPPS, szDecodedPPS, this->decoderHandler.pps_len);

}

this->funcArgs = funcArgs;

this->callback = func;

this->dstVideoInfo.width = dstVideoInfo->width;

this->dstVideoInfo.height = dstVideoInfo->height;

this->dstVideoInfo.samplerate = dstVideoInfo->samplerate;

initEncoder(this);

initDecoder(this);

}
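The target width and height come from DstVideoInfo. The camera outputs 2560*1440 (16:9), and the encoder comment notes that the resolution must be a multiple of two. One way to choose the height is to keep the aspect ratio and round down to an even number. `scaled_height_even` is a hypothetical helper, and this rounding rule is an assumption rather than something the original code does:

```c
/* Output height that keeps the source aspect ratio for a given output width,
 * rounded down to an even value (YUV420P encoding such as libx264 needs even sizes).
 * The width passed in should itself be even. Returns -1 on invalid input. */
int scaled_height_even(int src_w, int src_h, int dst_w)
{
    if (src_w <= 0 || src_h <= 0 || dst_w <= 0)
        return -1;
    long long h = (long long)dst_w * src_h / src_w;  /* integer division truncates */
    return (int)(h & ~1LL);                           /* clear the low bit -> even */
}
```

For a 640-pixel-wide preview this gives 640*360. For 426 pixels the exact height of 239.625 is rounded down to 238.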

void deInitRTPResampleTask(RTPResampleTask *this){

deInitEncoder(this);

deInitDecoder(this);

}

int initEncoder(RTPResampleTask *this){

enum AVCodecID codec_id = AV_CODEC_ID_H264;

this->encoderHandler.rawBuffer= NULL;

this->encoderHandler.rawBufferSize = 0;

this->encoderHandler.encodedBuffer= NULL;

this->encoderHandler.encodedBufferSize = 0;

this->encoderHandler.sws_ctx = NULL;

this->encoderHandler.pkt = av_packet_alloc();

if (!this->encoderHandler.pkt) {

printf("this->encoderHandler.pkt == NULL");

return -1;

}

this->encoderHandler.codec = avcodec_find_encoder(codec_id); //AV_CODEC_ID_AAC;

if(this->encoderHandler.codec == NULL ) {

printf("this->encoderHandler.codec == NULL");

return -1;

}

//Allocate the AVCodecContext (not an AVFormatContext): every encoder setting and call goes through this context

this->encoderHandler.context = avcodec_alloc_context3(this->encoderHandler.codec);

if(this->encoderHandler.context == NULL ) {

printf("this->encoderHandler.context == NULL");

return -1;

}

//Set the AVCodecContext encoding parameters (avcodec_alloc_context3 has already applied the codec defaults)

struct AVCodecContext *c = this->encoderHandler.context;

c->codec_id = codec_id;

c->bit_rate = this->dstVideoInfo.samplerate * 1000;//samplerate holds the target bitrate in kbit/s, e.g. 500 -> 500 kbps

c->time_base.den = 25; //denominator

c->time_base.num = 1; //numerator

/* resolution must be a multiple of two */

c->width = this->dstVideoInfo.width;

c->height = this->dstVideoInfo.height;

/* frames per second */

c->time_base = (AVRational){1, 25};

c->framerate = (AVRational){25, 1};

c->gop_size = 1;//

c->max_b_frames = 0;

//c->rtp_payload_size = H264_RTP_PAYLOAD_SIZE;

c->pix_fmt = AV_PIX_FMT_YUV420P;

c->codec_type = AVMEDIA_TYPE_VIDEO;

//c->flags|= CODEC_FLAG_GLOBAL_HEADER;

//libx264 private options (AVOptions); "preset" is set once, because a second call would simply overwrite the first

av_opt_set(c->priv_data, "preset", "ultrafast", 0);

av_opt_set(c->priv_data, "tune","stillimage,fastdecode,zerolatency",0);

av_opt_set(c->priv_data, "x264opts","crf=26:vbv-maxrate=728:vbv-bufsize=364:keyint=25",0);

this->encoderHandler.frame = av_frame_alloc();

if(this->encoderHandler.frame == NULL ) {

printf("this->encoderHandler.frame == NULL");

return -1;

}

this->encoderHandler.frame->format = c->pix_fmt;

this->encoderHandler.frame->width = c->width;

this->encoderHandler.frame->height = c->height;

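//allocate reference-counted picture buffers for the target resolution (format/width/height set above); unlike the deprecated avpicture_alloc, av_frame_free releases them

if (av_frame_get_buffer(this->encoderHandler.frame, 32) < 0) {

printf("av_frame_get_buffer failed");

return -1;

}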

/* open it */

int ret = avcodec_open2(this->encoderHandler.context, this->encoderHandler.codec , NULL);

if (ret < 0) {

fprintf(stderr, "Could not open codec: %d\n", ret);

return -1;

}

#ifdef TEST_SAVE_H264

this->encoderHandler.fTest = fopen("/tmp/test_out.h264", "wb");

if (!this->encoderHandler.fTest) {

fprintf(stderr, "Could not open /tmp/test_out.h264 \n");

return -1;

}

#endif

this->encoderHandler.sps = NULL;

this->encoderHandler.sps_len = 0;

this->encoderHandler.pps = NULL;

this->encoderHandler.pps_len = 0;

return 0;

}
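initEncoder sets bit_rate = samplerate * 1000 (so samplerate is in kbit/s, 500 in the comment), a 1/25 time base, and x264opts vbv-maxrate=728 and vbv-bufsize=364. The arithmetic behind those numbers can be sketched with two hypothetical helpers. They only do the division and make no claim about how libx264 weighs these settings against crf:

```c
/* Average bit budget per frame for a target bitrate and frame rate. Returns -1 on bad input. */
long bits_per_frame(long bit_rate_bps, int fps)
{
    if (bit_rate_bps <= 0 || fps <= 0)
        return -1;
    return bit_rate_bps / fps;
}

/* Milliseconds of video the VBV buffer holds at the peak rate:
 * vbv-bufsize (kbit) / vbv-maxrate (kbit/s). Returns -1 on bad input. */
long vbv_buffer_ms(long bufsize_kbit, long maxrate_kbps)
{
    if (bufsize_kbit <= 0 || maxrate_kbps <= 0)
        return -1;
    return bufsize_kbit * 1000 / maxrate_kbps;
}
```

At 500 kbit/s and 25 fps each frame averages 20,000 bits (2,500 bytes), compared with the roughly 5 Mbit/s of the original camera stream. A 364 kbit buffer drained at 728 kbit/s holds about 500 ms, which keeps the latency of the preview stream low.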

void deInitEncoder(RTPResampleTask *this){

if(this->encoderHandler.context){

avcodec_free_context(&this->encoderHandler.context);

this->encoderHandler.context = NULL;

}

if(this->encoderHandler.frame){

av_frame_free(&this->encoderHandler.frame);

this->encoderHandler.frame = NULL;

}

if (this->encoderHandler.pkt){

av_packet_free(&this->encoderHandler.pkt);

this->encoderHandler.pkt = NULL;

}

if (this->encoderHandler.sws_ctx){

sws_freeContext(this->encoderHandler.sws_ctx);

this->encoderHandler.sws_ctx = NULL;

}

#ifdef TEST_SAVE_H264

if (this->encoderHandler.fTest){

fclose(this->encoderHandler.fTest);

}

#endif

if (this->encoderHandler.sps != NULL){

free(this->encoderHandler.sps);

}

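if (this->encoderHandler.pps != NULL){

free(this->encoderHandler.pps);

}

if (this->encoderHandler.encodedBuffer != NULL){

free(this->encoderHandler.encodedBuffer);

this->encoderHandler.encodedBuffer = NULL;

}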

}

int initDecoder(RTPResampleTask *this){

enum AVCodecID codec_id = AV_CODEC_ID_H264;

this->decoderHandler.pkt = av_packet_alloc();

if (!this->decoderHandler.pkt) {

printf("this->decoderHandler.pkt == NULL");

return -1;

}

this->decoderHandler.codec = avcodec_find_decoder(codec_id); //AV_CODEC_ID_AAC;

if(this->decoderHandler.codec == NULL )

{

printf("this->decoderHandler.codec == NULL");

return -1;

}

this->decoderHandler.parser = av_parser_init(this->decoderHandler.codec->id);

if (!this->decoderHandler.parser) {

printf("this->decoderHandler.parser == NULL");

return -1;

}

//Allocate the AVCodecContext (not an AVFormatContext): every decoder setting and call goes through this context

this->decoderHandler.context = avcodec_alloc_context3(this->decoderHandler.codec);

if (!this->decoderHandler.context) {

printf("this->decoderHandler.context == NULL");

return -1;

}

//Annex B extradata: 00 00 00 01 + SPS + 00 00 01 + PPS, i.e. sps_len + pps_len + 7 bytes

static const uint8_t spsHeader[4] = {0x00, 0x00, 0x00, 0x01};

static const uint8_t ppsHeader[3] = {0x00, 0x00, 0x01};

int sps_pps_len = (int)sizeof(spsHeader) + this->decoderHandler.sps_len + (int)sizeof(ppsHeader) + this->decoderHandler.pps_len;

//write straight into the zero-padded extradata buffer; avcodec_free_context() frees it later

uint8_t *extradata = (uint8_t *)av_mallocz(sps_pps_len + AV_INPUT_BUFFER_PADDING_SIZE);

if (extradata == NULL) {

printf("extradata alloc failed");

return -1;

}

memcpy(extradata, spsHeader, sizeof(spsHeader));

memcpy(extradata + sizeof(spsHeader), this->decoderHandler.szSourceDecodedSPS, this->decoderHandler.sps_len);

memcpy(extradata + sizeof(spsHeader) + this->decoderHandler.sps_len, ppsHeader, sizeof(ppsHeader));

memcpy(extradata + sizeof(spsHeader) + this->decoderHandler.sps_len + sizeof(ppsHeader), this->decoderHandler.szSourceDecodedPPS, this->decoderHandler.pps_len);

this->decoderHandler.context->extradata = extradata;

this->decoderHandler.context->extradata_size = sps_pps_len;

this->decoderHandler.context->time_base.num = 1;

this->decoderHandler.context->time_base.den = 25;

if (avcodec_open2(this->decoderHandler.context, this->decoderHandler.codec, NULL) < 0) {

printf("avcodec_open2 return failed");

return -1;

}

this->decoderHandler.frame = av_frame_alloc();

if (!this->decoderHandler.frame) {

printf("this->decoderHandler.frame is null.");

return -1;

}

printf("initDecoder, width:%d, height:%d", this->decoderHandler.context->width, this->decoderHandler.context->height);

return 0;

}
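initDecoder passes the SPS and PPS taken from the SDP to the H.264 decoder as Annex B extradata. Below is the same layout as a standalone sketch, assuming a 4-byte start code in front of both parameter sets. `build_annexb_extradata` is hypothetical and uses calloc only so that it does not depend on FFmpeg. A buffer assigned to AVCodecContext.extradata should be allocated with av_mallocz instead, because avcodec_free_context releases it with the av_free family. The `padding` argument corresponds to AV_INPUT_BUFFER_PADDING_SIZE zero bytes.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

/* Build 00 00 00 01 + SPS + 00 00 00 01 + PPS followed by 'padding' zero bytes.
 * Returns a heap buffer (caller frees) and stores the length without padding
 * in *out_len, or returns NULL on bad input or allocation failure. */
uint8_t *build_annexb_extradata(const uint8_t *sps, size_t sps_len,
                                const uint8_t *pps, size_t pps_len,
                                size_t padding, size_t *out_len)
{
    static const uint8_t start_code[4] = {0x00, 0x00, 0x00, 0x01};
    if (sps == NULL || pps == NULL || sps_len == 0 || pps_len == 0 || out_len == NULL)
        return NULL;
    size_t len = sizeof start_code + sps_len + sizeof start_code + pps_len;
    uint8_t *buf = calloc(len + padding, 1);   /* zeroed, so the padding is zero */
    if (buf == NULL)
        return NULL;
    uint8_t *p = buf;
    memcpy(p, start_code, sizeof start_code); p += sizeof start_code;
    memcpy(p, sps, sps_len);                   p += sps_len;
    memcpy(p, start_code, sizeof start_code); p += sizeof start_code;
    memcpy(p, pps, pps_len);
    *out_len = len;
    return buf;
}
```

With a 4-byte SPS and a 4-byte PPS the result is 16 bytes long. Counting the start codes explicitly avoids the off-by-one in a hand-written total such as `sps_len + pps_len + 6`.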

void deInitDecoder(RTPResampleTask *this){

if(this->decoderHandler.frame){

av_frame_free(&this->decoderHandler.frame);

this->decoderHandler.frame = NULL;

}

if(this->decoderHandler.parser){

av_parser_close(this->decoderHandler.parser);

this->decoderHandler.parser = NULL;

}

if (this->decoderHandler.pkt){

av_packet_free(&this->decoderHandler.pkt);

}

//context->extradata is freed by avcodec_free_context() below, so it is not freed here (doing both would be a double free)

if(this->decoderHandler.context){

avcodec_free_context(&this->decoderHandler.context);

this->decoderHandler.context = NULL;

}

}

int resample(RTPResampleTask *this, const char *inputBuffer, int inputSize, char **outputBuffer, int *outputSize){

*outputBuffer = NULL;

*outputSize = 0;

int ret = decodePacket(this, (const char *)inputBuffer, inputSize);

//printf("video resample decodePacket ret:%d outputSize:%d\n", ret, this->encoderHandler.rawBufferSize);

if (ret == 0 && this->encoderHandler.rawBufferSize > 0){

char *encodeOutputBuffer = NULL;

int encodeOutputSize = 0;

//scale

if (this->encoderHandler.sws_ctx == NULL){

this->encoderHandler.sws_ctx = sws_getContext(this->decoderHandler.context->width, this->decoderHandler.context->height, this->decoderHandler.context->pix_fmt,

this->encoderHandler.context->width, this->encoderHandler.context->height, AV_PIX_FMT_YUV420P,

SWS_BILINEAR, NULL, NULL, NULL);

}

sws_scale(this->encoderHandler.sws_ctx, this->decoderHandler.frame->data,

this->decoderHandler.frame->linesize, 0, this->decoderHandler.context->height, this->encoderHandler.frame->data, this->encoderHandler.frame->linesize);

ret = encodePacket(this);

if (ret != 0){

printf("video resample ret:%d err\n", ret);

return -1;

}

#ifdef TEST_SAVE_H264

fwrite(this->encoderHandler.encodedBuffer, 1, this->encoderHandler.encodedSize, this->encoderHandler.fTest);

#endif//#ifdef TEST_SAVE_H264

*outputBuffer = this->encoderHandler.encodedBuffer;

*outputSize = this->encoderHandler.encodedSize;

int typeIndex = 3;//default 00 00 01

if (this->encoderHandler.encodedBuffer[3] == 0x01){

typeIndex = 4;

}

if (this->encoderHandler.encodedBuffer[typeIndex] == 0x67 && this->encoderHandler.sps == NULL){

printf("video resample has sps:%d \n", this->encoderHandler.encodedSize);

this->encoderHandler.sps_len = 0;

int i = 0;

unsigned short find_sps_flag = 0;

while(i++ < this->encoderHandler.encodedSize && typeIndex + 3 < this->encoderHandler.encodedSize){ //never read past the end of the encoded data

if ((this->encoderHandler.encodedBuffer[typeIndex] == 0x00 &&

this->encoderHandler.encodedBuffer[typeIndex + 1] == 0x00 &&

this->encoderHandler.encodedBuffer[typeIndex + 2] == 0x01 &&

this->encoderHandler.encodedBuffer[typeIndex + 3] == 0x68)

){

find_sps_flag = 1;

break;

}

typeIndex++;

}

if (!find_sps_flag){

return 0;

}

find_sps_flag = 0;

this->encoderHandler.sps_len = typeIndex;

printf("video resample this->encoderHandler.sps_len:%d \n", this->encoderHandler.sps_len);

this->encoderHandler.sps = (char *)malloc(this->encoderHandler.sps_len);

if (this->encoderHandler.sps != NULL){

memcpy((void *)this->encoderHandler.sps, (void *)this->encoderHandler.encodedBuffer, this->encoderHandler.sps_len);

}

this->encoderHandler.pps_len = 0;

typeIndex = this->encoderHandler.sps_len + 4;

while(i++ < this->encoderHandler.encodedSize && typeIndex + 2 < this->encoderHandler.encodedSize){ //never read past the end of the encoded data

if (

(this->encoderHandler.encodedBuffer[typeIndex] == 0x00 &&

this->encoderHandler.encodedBuffer[typeIndex +1] == 0x00 &&

this->encoderHandler.encodedBuffer[typeIndex +2] == 0x01)

){

find_sps_flag = 1;

break;

}

typeIndex++;

this->encoderHandler.pps_len++;

}

if (!find_sps_flag){

return 0;

}

this->encoderHandler.pps_len += 4;

printf("video resample this->encoderHandler.pps_len:%d \n", this->encoderHandler.pps_len);

this->encoderHandler.pps = (char *)malloc(this->encoderHandler.pps_len);

if (this->encoderHandler.pps != NULL){

memcpy((void *)this->encoderHandler.pps, &this->encoderHandler.encodedBuffer[this->encoderHandler.sps_len], this->encoderHandler.pps_len);

}

}

return 0;

}

return -1;

}
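resample() locates the SPS (header byte 0x67) and PPS (0x68) by walking the encoder output with hand-maintained indices. A bounds-checked alternative is to split the Annex B buffer into NAL units first and then select units by nal_unit_type (header & 0x1F: 7 = SPS, 8 = PPS, 5 = IDR slice). `split_annexb` and `NalUnit` are hypothetical names. The sketch assumes that a single zero byte directly before a start code belongs to a 4-byte start code.

```c
#include <stddef.h>
#include <stdint.h>

/* Location of one NAL unit inside an Annex B buffer (hypothetical helper type). */
typedef struct {
    size_t offset;  /* first byte after the start code, i.e. the NAL header */
    size_t size;    /* NAL length without the start code */
    int type;       /* nal_unit_type = header & 0x1F */
} NalUnit;

/* Position of the next 00 00 01 at or after pos, or len if there is none. */
static size_t find_start_code(const uint8_t *buf, size_t len, size_t pos)
{
    while (pos + 3 <= len) {
        if (buf[pos] == 0x00 && buf[pos + 1] == 0x00 && buf[pos + 2] == 0x01)
            return pos;
        pos++;
    }
    return len;
}

/* Split buf into at most max NAL units; never reads past len. Returns the count. */
size_t split_annexb(const uint8_t *buf, size_t len, NalUnit *out, size_t max)
{
    size_t count = 0;
    size_t sc = find_start_code(buf, len, 0);
    while (sc < len && count < max) {
        size_t begin = sc + 3;                        /* NAL header */
        size_t next = find_start_code(buf, len, begin);
        size_t end = next;
        /* treat one zero byte before the next start code as part of 00 00 00 01 */
        if (next < len && end > begin && buf[end - 1] == 0x00)
            end--;
        if (end > begin) {                            /* skip empty units */
            out[count].offset = begin;
            out[count].size = end - begin;
            out[count].type = buf[begin] & 0x1F;
            count++;
        }
        sc = next;
    }
    return count;
}
```

After splitting, the SPS is the unit whose type is 7 and the PPS is the unit whose type is 8. Copying `size` bytes from `buf + offset` yields each parameter set without its start code, and prepending a start code restores the form the original code stores.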

int decodePacket(RTPResampleTask *this, const char *inputBuffer, int inputSize){

int ret;

uint8_t *data = ( uint8_t *)inputBuffer;

int data_size = inputSize;

//while (data_size > 0)

{

this->encoderHandler.rawBufferSize = 0;

av_init_packet(this->decoderHandler.pkt);

this->decoderHandler.pkt->dts = AV_NOPTS_VALUE;

this->decoderHandler.pkt->size = (int)inputSize;

this->decoderHandler.pkt->data = (uint8_t *)inputBuffer;

if (this->decoderHandler.pkt->size){

ret = avcodec_send_packet(this->decoderHandler.context, this->decoderHandler.pkt);

if (ret < 0) {

fprintf(stderr, "decodePacket Error sending a packet for decoding, ret:%d size:%d %02x %02x %02x %02x %02x \n", ret, inputSize, (unsigned char)inputBuffer[0], (unsigned char)inputBuffer[1], (unsigned char)inputBuffer[2], (unsigned char)inputBuffer[3], (unsigned char)inputBuffer[4]);

return 1;

}

ret = avcodec_receive_frame(this->decoderHandler.context, this->decoderHandler.frame);

if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF){

return 1;

}

else if (ret < 0) {

fprintf(stderr, "decodePacket Error during decoding :%d\n", ret);

return 1;

}

int xsize = av_image_get_buffer_size(this->decoderHandler.context->pix_fmt, this->decoderHandler.context->width, this->decoderHandler.context->height, 1);

//fprintf(stderr, "decodePacket end decoding :%d, width:%d, height:%d\n", xsize, this->decoderHandler.context->width, this->decoderHandler.context->height);

this->encoderHandler.rawBufferSize = xsize;

//

}else{

return -1;

}

}

return 0;

}
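decodePacket records the size of one decoded picture in rawBufferSize, and encodePacket initially reserves width*height*3/2 bytes for its output. Both figures follow from the YUV420P layout: a full-resolution Y plane plus U and V planes subsampled 2x2. The sketch below assumes the decoder outputs YUV420P (YUVJ420P has the same layout) with alignment 1, and rounds odd chroma dimensions up. `yuv420p_frame_size` is a hypothetical helper:

```c
#include <stddef.h>

/* Bytes in one tightly packed YUV420P picture; returns 0 on invalid input. */
size_t yuv420p_frame_size(int width, int height)
{
    if (width <= 0 || height <= 0)
        return 0;
    size_t cw = ((size_t)width + 1) / 2;   /* chroma width, rounded up */
    size_t ch = ((size_t)height + 1) / 2;  /* chroma height, rounded up */
    return (size_t)width * (size_t)height + 2 * cw * ch;
}
```

A decoded 2560*1440 frame occupies 5,529,600 bytes, while a 640*360 frame needs 345,600, one sixteenth of that. This is the saving the scaling step delivers before re-encoding.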

int encodePacket(RTPResampleTask *this){

#if 0

int size = avpicture_fill((AVPicture*)this->encoderHandler.frame, (uint8_t*)inputBuffer, AV_PIX_FMT_YUV420P, this->encoderHandler.context->width, this->encoderHandler.context->height);

if (size != inputSize){

/* guard */

printf("encodePacket invalid size: %u<>%u", size, inputSize);

return 0;

}

#endif//this->encoderHandler.frame

int ret = avcodec_send_frame(this->encoderHandler.context, this->encoderHandler.frame);

if (ret < 0) {

printf("encodePacket error sending a frame for encoding, ret:%d\n", ret);

return ret;

}

this->encoderHandler.encodedSize = 0;

while (ret >= 0)

{

ret = avcodec_receive_packet(this->encoderHandler.context, this->encoderHandler.pkt);

if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF){

if (this->encoderHandler.encodedSize > 0){

return 0;

}

return -1;

} else if (ret < 0) {

printf("encodePacket error during encoding\n");

return ret;

}

int needed = this->encoderHandler.encodedSize + this->encoderHandler.pkt->size;

if (this->encoderHandler.encodedBufferSize < needed){

//grow to at least the required size (the first allocation reserves one raw YUV420P frame); keep the old buffer if realloc fails

int newSize = (this->encoderHandler.context->width * this->encoderHandler.context->height * 3) / 2;

if (newSize < needed){

newSize = needed;

}

char *grown = (char *)realloc(this->encoderHandler.encodedBuffer, newSize);

if (grown == NULL){

av_packet_unref(this->encoderHandler.pkt);

return -1;

}

this->encoderHandler.encodedBuffer = grown;

this->encoderHandler.encodedBufferSize = newSize;

}

memcpy((void *)(this->encoderHandler.encodedBuffer + this->encoderHandler.encodedSize), this->encoderHandler.pkt->data, this->encoderHandler.pkt->size);

this->encoderHandler.encodedSize += this->encoderHandler.pkt->size;

//printf("encodePacket success ret:%d\n", this->encoderHandler.pkt->size);

//if (callback != NULL){

//callback(funcArgs, (char*)this->encoderHandler.pkt->data, this->encoderHandler.pkt->size);

//}

av_packet_unref(this->encoderHandler.pkt);

}

//printf("encodePacket success ret:%d\n", this->encoderHandler.encodedBufferSize);

return 0;

}
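encodePacket appends the payload of every AVPacket to encodedBuffer. The pattern behind it is a growable byte buffer in which the stored capacity always equals the allocated size, and a failed realloc leaves the old data intact. `ByteBuf` and `bytebuf_append` are hypothetical names; the doubling growth policy is an assumption chosen to keep repeated appends cheap:

```c
#include <stddef.h>
#include <stdlib.h>
#include <string.h>

/* Mirrors encodedBuffer / encodedBufferSize / encodedSize (hypothetical type). */
typedef struct {
    char *data;
    size_t capacity;  /* bytes allocated */
    size_t size;      /* bytes used */
} ByteBuf;

/* Append n bytes, at least doubling the capacity when it runs out.
 * Returns 0 on success, -1 on allocation failure (existing contents stay valid). */
int bytebuf_append(ByteBuf *b, const void *src, size_t n)
{
    if (n == 0)
        return 0;
    if (b->size + n > b->capacity) {
        size_t cap = b->capacity ? b->capacity : 64;
        while (cap < b->size + n)
            cap *= 2;
        char *grown = realloc(b->data, cap);
        if (grown == NULL)
            return -1;
        b->data = grown;
        b->capacity = cap;   /* record what was actually allocated */
    }
    memcpy(b->data + b->size, src, n);
    b->size += n;
    return 0;
}
```

The buffer starts as `ByteBuf b = {NULL, 0, 0};`. Each packet is appended, then `b.size` bytes are handed on, and `free(b.data)` is called once at shutdown, just as deInitEncoder would release encodedBuffer.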

//********************************* RESAMPLE END ****/