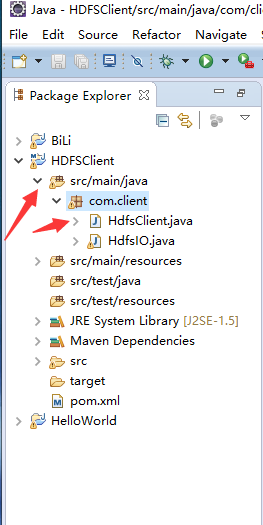

1. First, create the project in Eclipse.

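Note that the user name has to be set when obtaining the fs object; it is usually set to root. The way the fs object is obtained here is a shorthand (the standard way is described in another blog post, on the HDFS client environment setup and testing).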
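The address `hdfs://hadoop01:9000` that every method passes to `FileSystem.get` is an ordinary URI made of a scheme, a host, and a port. As a small illustration, the sketch below splits it with the JDK's `java.net.URI` only, so it runs without Hadoop on the classpath. The class name `HdfsUriParts` is hypothetical and not part of the Hadoop API.

```java
import java.net.URI;

// Hypothetical helper (not a Hadoop class): splits an HDFS address into the
// parts carried by the URI that is handed to FileSystem.get(...).
class HdfsUriParts {
    final String scheme; // "hdfs" for an HDFS address
    final String host;   // host name taken from the URI
    final int port;      // port taken from the URI

    HdfsUriParts(String address) {
        // URI.create throws an unchecked IllegalArgumentException on bad input
        URI uri = URI.create(address);
        this.scheme = uri.getScheme();
        this.host = uri.getHost();
        this.port = uri.getPort();
    }

    public static void main(String[] args) {
        HdfsUriParts parts = new HdfsUriParts("hdfs://hadoop01:9000");
        // prints "hdfs hadoop01 9000"
        System.out.println(parts.scheme + " " + parts.host + " " + parts.port);
    }
}
```

If the host name or port of the cluster differs, only the address string changes; the rest of the client code stays the same.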

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.BlockLocation;
import org.apache.hadoop.fs.FileStatus;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.LocatedFileStatus;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.fs.RemoteIterator;
import org.junit.Test;

public class HdfsClient {

    public static void main(String[] args) throws IOException, InterruptedException, URISyntaxException {
        // Get the fs object as user "root"
        Configuration conf = new Configuration();
        FileSystem fs = FileSystem.get(new URI("hdfs://hadoop01:9000"), conf, "root");

        // Create a directory path on HDFS
        fs.mkdirs(new Path("/5225/dashen"));

        // Release resources
        fs.close();
    }

    // Upload a file
    @Test
    public void testCopyFromLocalFile() throws IOException, InterruptedException, URISyntaxException {
        // Get the fs object as user "root"
        Configuration conf = new Configuration();
        FileSystem fs = FileSystem.get(new URI("hdfs://hadoop01:9000"), conf, "root");

        // Call the upload API: local source path first, HDFS destination second
        fs.copyFromLocalFile(new Path("f:/test.txt"), new Path("/banhua1.txt"));

        // Release resources
        fs.close();
    }

    // Download a file
    @Test
    public void testCopyToLocalFile() throws IOException, InterruptedException, URISyntaxException {
        // Get the fs object as user "root"
        Configuration conf = new Configuration();
        FileSystem fs = FileSystem.get(new URI("hdfs://hadoop01:9000"), conf, "root");

        // Perform the download: HDFS source path first, local destination second
        fs.copyToLocalFile(new Path("/banhua.txt"), new Path("f:/banhua.txt"));

        // Release resources
        fs.close();
    }

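    // Delete a file
    @Test
    public void testDelete() throws IOException, InterruptedException, URISyntaxException {
        // Get the fs object as user "root"
        Configuration conf = new Configuration();
        FileSystem fs = FileSystem.get(new URI("hdfs://hadoop01:9000"), conf, "root");

        // Delete the path; the second argument enables recursive deletion,
        // which only matters when the path is a directory
        fs.delete(new Path("/banhua.txt"), true);

        // Release resources
        fs.close();
    }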

    // Rename a file
    @Test
    public void testRename() throws IOException, InterruptedException, URISyntaxException {
        // Get the fs object as user "root"
        Configuration conf = new Configuration();
        FileSystem fs = FileSystem.get(new URI("hdfs://hadoop01:9000"), conf, "root");

        // Perform the rename: old path first, new path second
        fs.rename(new Path("/banhua1.txt"), new Path("/banhua10.txt"));

        // Release resources
        fs.close();
    }

    // View file details
    @Test
    public void testListFiles() throws IOException, InterruptedException, URISyntaxException {
        // Get the fs object as user "root"
        Configuration conf = new Configuration();
        FileSystem fs = FileSystem.get(new URI("hdfs://hadoop01:9000"), conf, "root");

        // List all files under the root directory; true means recurse into subdirectories
        RemoteIterator<LocatedFileStatus> listFiles = fs.listFiles(new Path("/"), true);
        while (listFiles.hasNext()) {
            LocatedFileStatus fileStatus = listFiles.next();

            // Print the file name, permission, length, and block information
            System.out.println(fileStatus.getPath().getName()); // file name
            System.out.println(fileStatus.getPermission()); // file permission
            System.out.println(fileStatus.getLen()); // file length

            // Print the hosts that store each block of the file
            BlockLocation[] blockLocations = fileStatus.getBlockLocations();
            for (BlockLocation blockLocation : blockLocations) {
                String[] hosts = blockLocation.getHosts();
                for (String host : hosts) {
                    System.out.println(host);
                }
            }
            System.out.println("-------------------------");
        }

        // Release resources
        fs.close();
    }

    // Check whether each entry is a file or a directory
    @Test
    public void testListStatus() throws IOException, InterruptedException, URISyntaxException {
        // Get the fs object as user "root"
        Configuration conf = new Configuration();
        FileSystem fs = FileSystem.get(new URI("hdfs://hadoop01:9000"), conf, "root");

        // List the direct children of the root directory and check each one
        FileStatus[] listStatus = fs.listStatus(new Path("/"));
        for (FileStatus fileStatus : listStatus) {
            if (fileStatus.isFile()) {
                // "f" marks a file
                System.out.println("f:" + fileStatus.getPath().getName());
            } else {
                // "d" marks a directory
                System.out.println("d:" + fileStatus.getPath().getName());
            }
        }

        // Release resources
        fs.close();
    }
}
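Every method above calls `fs.close()` as its last statement, so the call is skipped if the HDFS operation before it throws. Since `FileSystem` implements `java.io.Closeable`, one possible alternative is try-with-resources, for example `try (FileSystem fs = FileSystem.get(...)) { ... }`. The sketch below shows only the closing behaviour. `FakeFs` and `CloseDemo` are hypothetical stand-ins so that the example runs without Hadoop on the classpath; they are not Hadoop classes.

```java
import java.io.Closeable;
import java.io.IOException;

// Hypothetical stand-in for org.apache.hadoop.fs.FileSystem, used only so the
// sketch runs without Hadoop. It records whether close() was called.
class FakeFs implements Closeable {
    boolean closed = false;

    // Simulates an HDFS operation that fails
    void delete(String path) throws IOException {
        throw new IOException("simulated failure deleting " + path);
    }

    @Override
    public void close() {
        closed = true;
    }
}

class CloseDemo {
    // Runs a failing operation inside try-with-resources and reports
    // whether the resource was closed anyway.
    static boolean closedAfterFailure() {
        FakeFs handle = new FakeFs();
        try (FakeFs fs = handle) {
            fs.delete("/banhua.txt");
        } catch (IOException e) {
            // The operation failed; close() has already run at this point
        }
        return handle.closed;
    }

    public static void main(String[] args) {
        System.out.println(closedAfterFailure()); // prints "true"
    }
}
```

With a manual `close()` after the failing call, the same scenario would leave the resource open.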