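Scenario: the single MySQL table transaction has grown to 2 billion rows and takes up too much of the server's disk, so its data needs to be exported. Ordinary export methods are too slow, so here I use DataX instead. The transaction data is split by date, and each date range is imported into its own table (one table per month).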

1、需要安装的环境:

(1)jdk 1.8

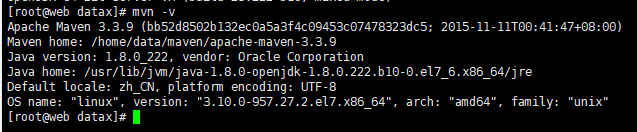

(2)maven 3.3.9

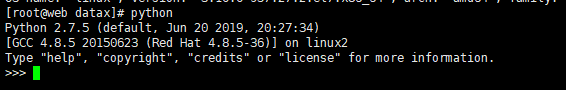

(3)python 2.6 +

Verify the environment:

```shell
java -version
mvn -v
python -V
```

2. Download datax.tar.gz: click the DataX download link on the project page to get the tar.gz package. Project page: https://github.com/alibaba/DataX

3. Upload datax.tar.gz to the installation directory on the server (mine is /home/data/datax) and extract it:

```shell
tar -zxvf datax.tar.gz
```


The directory layout is as follows:

bin is the launch directory, job holds the JSON job files to run, and log holds the log files.

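4. On the target server, create the backup database exchange_copy.

In it, create one table per month with the same columns as transaction, e.g. transaction_201910, transaction_201909, transaction_201908, ...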
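Creating one table per month by hand gets tedious over a long history, and each month's job also needs a matching date range. Below is a minimal sketch of hypothetical helpers (not part of DataX) that compute both. It assumes you have already created an empty table named transaction in the backup database with the source table's structure (for example, recreated from SHOW CREATE TABLE on the source), so that CREATE TABLE ... LIKE can copy it. It only prints SQL; run the output with your usual MySQL client.

```python
def month_ranges(start_ym, end_ym):
    """Return (suffix, lower, upper) for every month from start_ym to end_ym inclusive.

    start_ym and end_ym are 'YYYYMM' strings; lower and upper bound the
    half-open interval [lower, upper) as MySQL DATETIME literals.
    """
    year, month = int(start_ym[:4]), int(start_ym[4:])
    stop = (int(end_ym[:4]), int(end_ym[4:]))
    ranges = []
    while (year, month) <= stop:
        # Roll over to January of the next year after December.
        next_year, next_month = (year + 1, 1) if month == 12 else (year, month + 1)
        ranges.append((
            "%04d%02d" % (year, month),
            "%04d-%02d-01 00:00:00" % (year, month),
            "%04d-%02d-01 00:00:00" % (next_year, next_month),
        ))
        year, month = next_year, next_month
    return ranges


def create_table_sql(suffix, template="transaction"):
    """DDL for one monthly table, copied from a template table in the same database.

    Assumption: an empty table named `template` already exists in the backup
    database with the same structure as the source transaction table.
    """
    return "CREATE TABLE IF NOT EXISTS transaction_%s LIKE %s;" % (suffix, template)


if __name__ == "__main__":
    for suffix, lower, upper in month_ranges("201908", "201910"):
        print(create_table_sql(suffix))
        print("-- where: ctime >= '%s' and ctime < '%s'" % (lower, upper))
```

The half-open upper bound (`< first day of next month`) is a deliberate choice. If ctime were a DATETIME with fractional seconds, a row at 23:59:59.5 would fall outside `<= '... 23:59:59'`, but it still falls inside this range.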

5. Write the JSON job file transaction_201910.json. Mine looks like this:

```json
{
  "job": {
    "setting": {
      "speed": {
        "channel": 5
      },
      "errorLimit": {
        "record": 100000000,
        "percentage": 0.02
      }
    },
    "content": [{
      "reader": {
        "name": "mysqlreader",
        "parameter": {
          "username": "root",
          "password": "123456",
          "column": [
            "id", "from_uid", "from_type", "from_balance",
            "to_uid", "to_type", "to_balance", "amount",
            "meta", "scene", "ref_type", "ref_id",
            "op_uid", "op_ip", "ctime", "mtime", "fingerprint"
          ],
          "where": "ctime >= '2019-10-01 00:00:00' and ctime <= '2019-10-31 23:59:59'",
          "connection": [{
            "table": ["transaction"],
            "jdbcUrl": ["jdbc:mysql://192.168.0.118:3306/exchange"]
          }]
        }
      },
      "writer": {
        "name": "mysqlwriter",
        "parameter": {
          "writeMode": "insert",
          "username": "root",
          "password": "root",
          "column": [
            "id", "from_uid", "from_type", "from_balance",
            "to_uid", "to_type", "to_balance", "amount",
            "meta", "scene", "ref_type", "ref_id",
            "op_uid", "op_ip", "ctime", "mtime", "fingerprint"
          ],
          "preSql": ["truncate table transaction_201910"],
          "connection": [{
            "jdbcUrl": "jdbc:mysql://localhost:3306/exchange_transaction_copy",
            "table": ["transaction_201910"]
          }]
        }
      }
    }]
  }
}
```

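Notes:

where: selects the rows for one date range (here October 2019);

setting.speed: throughput control; channel is the number of concurrent channels. mysqlreader only splits a single table into parallel tasks when splitPk is set (for example "splitPk": "id"); without it the table is read as a single task;

errorLimit.record: the number of dirty records tolerated, 0 by default. I want dirty data skipped rather than failing the job, so I set it very high;

preSql: runs before the inserts. Here it empties the target table first, so re-running the job does not duplicate rows;

writer jdbcUrl: must point at the backup database created in step 4, so make sure the database name in it matches.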
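With one job file per month, only the where clause, the preSql and the target table name change between files. Below is a minimal sketch that generates the job file from a template instead of editing it by hand. The function names are hypothetical, and the structure simply mirrors the hand-written JSON above. One deliberate difference is that it uses a half-open date range (`ctime < first day of next month`) rather than `<= '... 23:59:59'`.

```python
import json
import os

# Same column order on both sides, copied from the job file above.
COLUMNS = ["id", "from_uid", "from_type", "from_balance", "to_uid", "to_type",
           "to_balance", "amount", "meta", "scene", "ref_type", "ref_id",
           "op_uid", "op_ip", "ctime", "mtime", "fingerprint"]


def next_month(ym):
    """'201910' -> '201911'; '201912' -> '202001'."""
    year, month = int(ym[:4]), int(ym[4:])
    if month == 12:
        return "%04d01" % (year + 1)
    return "%04d%02d" % (year, month + 1)


def build_job(ym, src_url, dst_url, src_auth, dst_auth):
    """Return a DataX job dict for month `ym` ('YYYYMM').

    src_auth and dst_auth are (username, password) tuples.
    """
    nxt = next_month(ym)
    table = "transaction_" + ym
    # Half-open range [first day of this month, first day of next month).
    where = "ctime >= '%s-%s-01 00:00:00' and ctime < '%s-%s-01 00:00:00'" % (
        ym[:4], ym[4:], nxt[:4], nxt[4:])
    reader = {"name": "mysqlreader", "parameter": {
        "username": src_auth[0], "password": src_auth[1],
        "column": COLUMNS, "where": where,
        # As in the original file: the reader's jdbcUrl is a list.
        "connection": [{"table": ["transaction"], "jdbcUrl": [src_url]}]}}
    writer = {"name": "mysqlwriter", "parameter": {
        "writeMode": "insert",
        "username": dst_auth[0], "password": dst_auth[1],
        "column": COLUMNS,
        "preSql": ["truncate table " + table],
        # As in the original file: the writer's jdbcUrl is a single string.
        "connection": [{"jdbcUrl": dst_url, "table": [table]}]}}
    return {"job": {
        "setting": {"speed": {"channel": 5},
                    "errorLimit": {"record": 100000000, "percentage": 0.02}},
        "content": [{"reader": reader, "writer": writer}]}}


def write_job_file(job_dir, ym, **job_args):
    """Write transaction_<ym>.json into job_dir and return its path."""
    path = os.path.join(job_dir, "transaction_%s.json" % ym)
    with open(path, "w") as f:
        json.dump(build_job(ym, **job_args), f, indent=2)
    return path
```

For example, `write_job_file("/home/data/datax/datax/job", "201909", src_url=..., dst_url=..., src_auth=("root", "..."), dst_auth=("root", "..."))` would produce transaction_201909.json next to the hand-written October file.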

6. Upload transaction_201910.json to the job directory. Mine is /home/data/datax/datax/job.

7. Go to DataX's bin directory and start the job:

```shell
cd /home/data/datax/datax/bin
python datax.py ../job/transaction_201910.json
```
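Once there is a job file for every month, the months can be run one after another with a small driver script. Below is a minimal sketch with hypothetical names. It assumes the install path from step 3 and assumes that datax.py exits with a non-zero status when a job fails. Jobs run sequentially rather than in parallel so they don't compete for the source server's I/O. Because each job's preSql truncates its target table, a failed month can simply be re-run.

```python
import subprocess

DATAX_BIN = "/home/data/datax/datax/bin"  # install path from step 3


def job_command(ym):
    """Command line for one monthly job, run from DataX's bin directory."""
    return ["python", "datax.py", "../job/transaction_%s.json" % ym]


def run_jobs(months, bin_dir=DATAX_BIN):
    """Run the monthly jobs in order and stop at the first failure.

    Assumption: datax.py exits with a non-zero status when a job fails.
    Returns the months that finished successfully.
    """
    done = []
    for ym in months:
        code = subprocess.call(job_command(ym), cwd=bin_dir)
        if code != 0:
            print("job for %s failed with exit code %d" % (ym, code))
            break
        done.append(ym)
    return done


if __name__ == "__main__":
    print(run_jobs(["201908", "201909", "201910"]))
```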

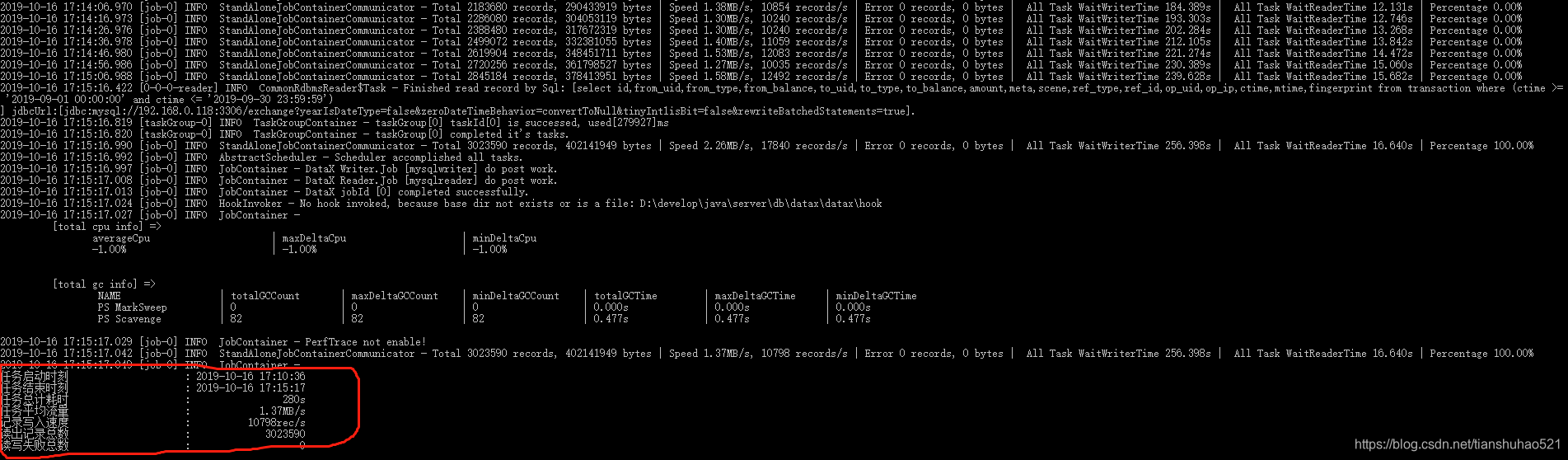

Output like the screenshot above indicates that the data migration succeeded.
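Before deleting anything from the source table, it is worth cross-checking row counts. Because errorLimit.record is set very high, dirty records are skipped instead of failing the job, so the target count can legitimately be lower than the source count. Below is a minimal sketch with hypothetical helpers that builds the two COUNT(*) queries and checks the arithmetic. It assumes you take the skipped-record count from the failed-record figure in the job's end-of-run summary. On a 2-billion-row table, the source query will likely be slow unless ctime is indexed.

```python
def count_queries(ym, where):
    """Return (source_sql, target_sql) for checking one month's copy.

    `where` should be copied verbatim from that month's job file so both
    queries cover exactly the same rows. Run source_sql on the source
    database and target_sql on the backup database.
    """
    source_sql = "SELECT COUNT(*) FROM transaction WHERE %s;" % where
    target_sql = "SELECT COUNT(*) FROM transaction_%s;" % ym
    return source_sql, target_sql


def counts_match(source_count, target_count, skipped_dirty=0):
    """True if every selected source row either reached the target table
    or was skipped as a dirty record."""
    return source_count == target_count + skipped_dirty
```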