Hadoop Deployment Modes: Fully-Distributed Mode

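Author: Yin Zhengjie (尹正杰)

Copyright notice: this is an original work. Reproduction is not permitted; violators will be held legally liable.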

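The virtual machines used in this post are linked clones of the pseudo-distributed environment (https://www.cnblogs.com/yinzhengjie/p/9058415.html), so a fully-distributed deployment only requires changes to the configuration files. The architecture is one NameNode and three DataNodes. An experienced operations engineer will spot at once that the single NameNode is a single point of failure. For a high-availability cluster deployment, see: https://www.cnblogs.com/yinzhengjie/p/9070017.html.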

If you are on a Mac, I recommend "Parallels Desktop"; on Windows, "VMware Workstation"; on Linux, "VirtualBox". My lab environment runs on Windows with VMware Workstation installed.

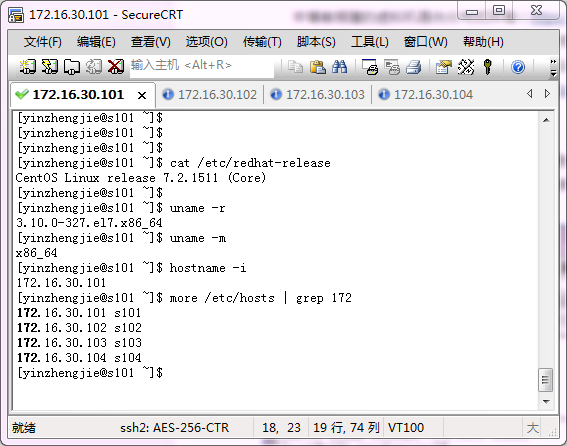

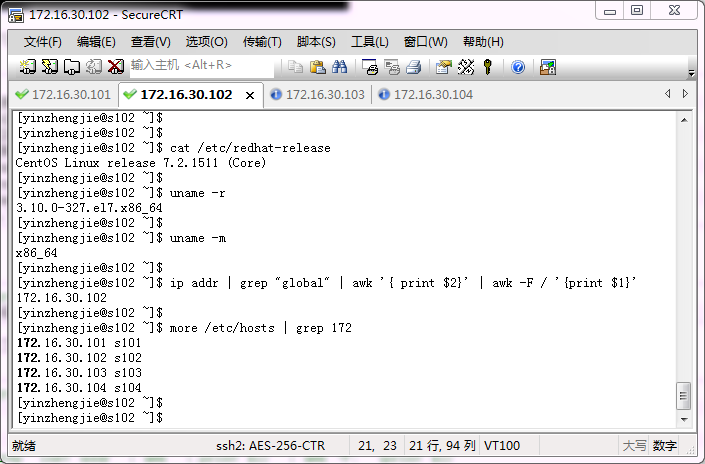

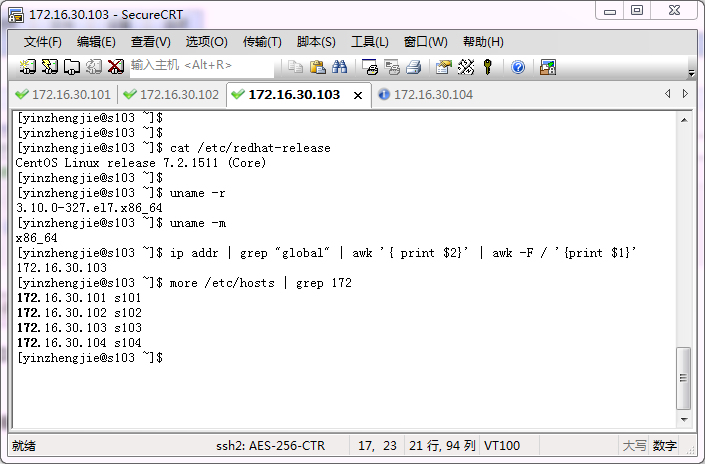

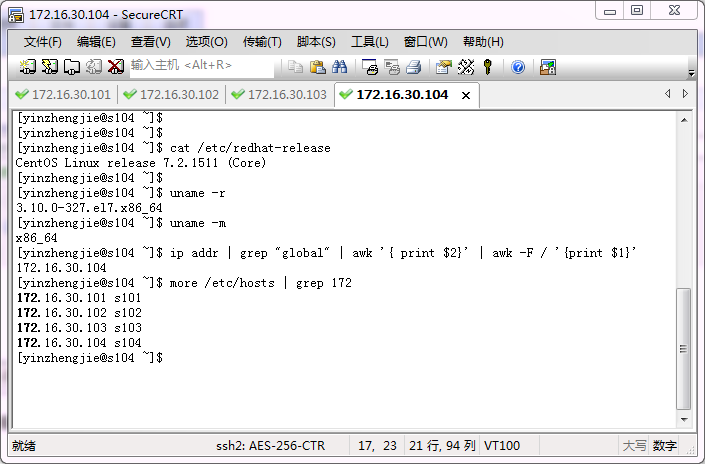

I. Preparing the Lab Environment

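You need four Linux servers, ideally with identical specifications. Because my virtual machines were cloned from the earlier pseudo-distributed deployment, their environments are identical, and every VM starts out configured for Hadoop pseudo-distributed mode.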

1>. NameNode server (172.16.30.101)

2>. DataNode server (172.16.30.102)

3>. DataNode server (172.16.30.103)

4>. DataNode server (172.16.30.104)
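The later steps address these machines by the host names s101 through s104, so every node has to resolve those names. Here is a minimal sketch that prints matching entries, assuming name resolution goes through /etc/hosts (this post does not show how it was set up); the helper name `gen_hosts` is hypothetical.

```shell
#!/bin/sh
# Sketch: print /etc/hosts entries for the four nodes.
# Assumption: names are resolved via /etc/hosts; gen_hosts is a hypothetical helper.
gen_hosts() {
    i=101
    while [ "$i" -le 104 ]; do
        # Host s<i> has the address 172.16.30.<i>, as in the server list above.
        printf '172.16.30.%s s%s\n' "$i" "$i"
        i=$((i + 1))
    done
}

gen_hosts
```

If the entries look right, root could append them on each node with something like `gen_hosts >> /etc/hosts`.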

II. Modifying the Hadoop Configuration Files

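The configuration files to edit live in the "full" directory that I copied earlier; its absolute path is "/soft/hadoop/etc/full". After editing the files in that directory, we simply point the "hadoop" symbolic link at it. When you need pseudo-distributed or local mode again, just repoint the symbolic link at the corresponding directory. This way the configuration files for all three modes coexist peacefully.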
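The symlink switch described above can be tried out safely in a scratch directory. This is only a sketch: it works in a temporary directory rather than /soft/hadoop/etc, and the directory names `local` and `pseudo` as well as the helper `switch_mode` are assumptions (only `full` appears in this post).

```shell
#!/bin/sh
# Sketch: keep one config directory per mode and repoint a "hadoop" symlink.
# Assumptions: runs in a throwaway temp directory instead of /soft/hadoop/etc;
# the names "local" and "pseudo" are hypothetical, only "full" comes from the post.
etc_dir="$(mktemp -d)"
mkdir "$etc_dir/local" "$etc_dir/pseudo" "$etc_dir/full"

switch_mode() {
    # $1 = mode directory name. -n replaces an existing link instead of
    # creating a new link inside the directory it currently points to.
    ln -sfn "$etc_dir/$1" "$etc_dir/hadoop"
}

switch_mode pseudo
switch_mode full
readlink "$etc_dir/hadoop"
```

The last command prints the directory the link currently points to, which should be the `full` directory after the second switch.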

1>. The core-site.xml configuration file

    [yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/core-site.xml
    <?xml version="1.0" encoding="UTF-8"?>
    <configuration>
        <property>
            <name>fs.defaultFS</name>
            <value>hdfs://s101:8020</value>
        </property>
        <property>
            <name>hadoop.tmp.dir</name>
            <value>/home/yinzhengjie/hadoop</value>
        </property>
    </configuration>

    <!--
    Purpose of core-site.xml:
        Defines system-level parameters such as the HDFS URL, the Hadoop
        temporary directory, and the configuration used in rack-aware
        clusters. Parameters defined here override the defaults in
        core-default.xml.

    fs.defaultFS:
        Declares the NameNode address, which in effect declares the HDFS
        file system.

    hadoop.tmp.dir:
        Declares the location of Hadoop's working directory.
    -->
    [yinzhengjie@s101 ~]$
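To double-check what a configuration file actually sets, a property can be pulled out with standard tools. The following is a rough sketch, not a real XML parser: `get_prop` is a hypothetical helper that assumes each `<value>` directly follows its `<name>`, as in the files in this post, and the demo runs on a temporary copy of the core-site.xml shown above.

```shell
#!/bin/sh
# Sketch: read one property value out of a Hadoop *-site.xml file.
# Assumptions: get_prop is a hypothetical helper; it expects <value> to follow
# <name> directly, as in the files in this post, and is not a general XML parser.
get_prop() {
    # $1 = config file, $2 = property name
    tr -d '\n' < "$1" |
        sed -n "s|.*<name>$2</name>[[:space:]]*<value>\([^<]*\)</value>.*|\1|p"
}

# Demo on a temporary copy of the core-site.xml shown above.
conf="$(mktemp)"
cat > "$conf" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://s101:8020</value>
    </property>
    <property>
        <name>hadoop.tmp.dir</name>
        <value>/home/yinzhengjie/hadoop</value>
    </property>
</configuration>
EOF

get_prop "$conf" fs.defaultFS
get_prop "$conf" hadoop.tmp.dir
```

On a node where Hadoop is installed, `hdfs getconf -confKey fs.defaultFS` should report the value Hadoop itself resolves, which is the more reliable check.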

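2>. The hdfs-site.xml configuration file

    [yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/hdfs-site.xml
    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
        <property>
            <name>dfs.replication</name>
            <value>3</value>
        </property>
    </configuration>

    <!--
    Purpose of hdfs-site.xml:
        HDFS-related settings such as the number of file replicas, the
        block size, and whether permission checking is enforced. Parameters
        defined here override the defaults in hdfs-default.xml.

    dfs.replication:
        For availability and redundancy, HDFS stores several replicas of
        each data block on different nodes; the default is 3. In a
        single-node pseudo-distributed environment one replica is enough,
        and this property is where that is set. It is a software-level
        backup.
    -->
    [yinzhengjie@s101 ~]$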

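3>. The mapred-site.xml configuration file

    [yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/mapred-site.xml
    <?xml version="1.0"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
        <property>
            <name>mapreduce.framework.name</name>
            <value>yarn</value>
        </property>
    </configuration>

    <!--
    Purpose of mapred-site.xml:
        MapReduce-related settings such as the default number of reduce
        tasks and the default upper and lower memory limits for tasks.
        Parameters defined here override the defaults in mapred-default.xml.

    mapreduce.framework.name:
        Selects the framework that runs MapReduce jobs. There are three
        options: local (run locally), classic (the first-generation Hadoop
        execution framework), and yarn (the second-generation execution
        framework). Here we use yarn, the newest framework in the current
        version.
    -->
    [yinzhengjie@s101 ~]$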

4>. The yarn-site.xml configuration file

    [yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/yarn-site.xml
    <?xml version="1.0"?>
    <configuration>
        <property>
            <name>yarn.resourcemanager.hostname</name>
            <value>s101</value>
        </property>
        <property>
            <name>yarn.nodemanager.aux-services</name>
            <value>mapreduce_shuffle</value>
        </property>
    </configuration>

    <!--
    Purpose of yarn-site.xml:
        Mainly used to configure scheduler-level parameters.

    yarn.resourcemanager.hostname:
        Specifies the host name of the ResourceManager.

    yarn.nodemanager.aux-services:
        Tells the NodeManager to run the MapReduce shuffle auxiliary service.
    -->
    [yinzhengjie@s101 ~]$

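5>. The slaves configuration file

    [yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/slaves
    # Purpose of this file: it records which DataNode hosts the NameNode host needs to reach, i.e. the target hosts that receive remote commands when services are started or stopped.
    s102
    s103
    s104
    [yinzhengjie@s101 ~]$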
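To illustrate how a host list like this can drive a loop, here is a small sketch that reads the host names while skipping comments and blank lines. Hadoop's own start and stop scripts do their own parsing; `list_slaves` is a hypothetical helper, and the demo uses a temporary file rather than the real slaves file.

```shell
#!/bin/sh
# Sketch: list the hosts named in a slaves file, skipping comments and blanks.
# Assumptions: list_slaves is a hypothetical helper; the demo uses a temp file.
list_slaves() {
    # Strip "#" comments, then drop empty lines.
    sed -e 's/#.*$//' -e '/^[[:space:]]*$/d' "$1"
}

slaves="$(mktemp)"
printf '%s\n' '# DataNode hosts' s102 s103 s104 > "$slaves"

for host in $(list_slaves "$slaves"); do
    echo "would contact $host"
done
```

Pointing `list_slaves` at /soft/hadoop/etc/hadoop/slaves would list the DataNodes configured above.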

III. Configuring Passwordless SSH Login from the NameNode to Each DataNode

1>. Generate a public/private key pair locally (before generating it, delete the keys left over from the pseudo-distributed deployment)

    [yinzhengjie@s101 ~]$ rm -rf ~/.ssh/*
    [yinzhengjie@s101 ~]$ ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa
    Generating public/private rsa key pair.
    Your identification has been saved in /home/yinzhengjie/.ssh/id_rsa.
    Your public key has been saved in /home/yinzhengjie/.ssh/id_rsa.pub.
    The key fingerprint is:
    a3:a4:ae:d8:f7:7f:a2:b6:d6:15:74:29:de:fb:14:08 yinzhengjie@s101
    The key's randomart image is:
    +--[ RSA 2048]----+
    | . |
    | E o |
    | o = . |
    | o o . |
    | . S . . . |
    | o . .. . . |
    | . .. . o |
    | o .. o o . . |
    |. oo.+++.o |
    +-----------------+
    [yinzhengjie@s101 ~]$

2>. Use ssh-copy-id to distribute the public key to the NameNode server itself (172.16.30.101)

    [yinzhengjie@s101 ~]$ ssh-copy-id yinzhengjie@s101
    The authenticity of host 's101 (172.16.30.101)' can't be established.
    ECDSA key fingerprint is fa:25:bc:03:7e:99:eb:12:1e:bc:a8:c9:ce:39:ba:7b.
    Are you sure you want to continue connecting (yes/no)? yes
    /usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
    /usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
    yinzhengjie@s101's password:

    Number of key(s) added: 1

    Now try logging into the machine, with: "ssh 'yinzhengjie@s101'"
    and check to make sure that only the key(s) you wanted were added.

    [yinzhengjie@s101 ~]$ ssh s101
    Last login: Fri May 25 18:35:40 2018 from 172.16.30.1
    [yinzhengjie@s101 ~]$ who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    yinzhengjie pts/1 2018-05-25 19:17 (s101)
    [yinzhengjie@s101 ~]$ exit
    logout
    Connection to s101 closed.
    [yinzhengjie@s101 ~]$ who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    [yinzhengjie@s101 ~]$

3>. Use ssh-copy-id to distribute the public key to the DataNode server (172.16.30.102)

    [yinzhengjie@s101 ~]$ ssh-copy-id yinzhengjie@s102
    /usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
    /usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
    yinzhengjie@s102's password:

    Number of key(s) added: 1

    Now try logging into the machine, with: "ssh 'yinzhengjie@s102'"
    and check to make sure that only the key(s) you wanted were added.

    [yinzhengjie@s101 ~]$ ssh s102
    Last login: Fri May 25 18:35:42 2018 from 172.16.30.1
    [yinzhengjie@s102 ~]$ who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    yinzhengjie pts/1 2018-05-25 19:19 (s101)
    [yinzhengjie@s102 ~]$ exit
    logout
    Connection to s102 closed.
    [yinzhengjie@s101 ~]$ who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    [yinzhengjie@s101 ~]$

4>. Use ssh-copy-id to distribute the public key to the DataNode server (172.16.30.103)

    [yinzhengjie@s101 ~]$ ssh-copy-id yinzhengjie@s103
    The authenticity of host 's103 (172.16.30.103)' can't be established.
    ECDSA key fingerprint is fa:25:bc:03:7e:99:eb:12:1e:bc:a8:c9:ce:39:ba:7b.
    Are you sure you want to continue connecting (yes/no)? yes
    /usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
    /usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
    yinzhengjie@s103's password:

    Number of key(s) added: 1

    Now try logging into the machine, with: "ssh 'yinzhengjie@s103'"
    and check to make sure that only the key(s) you wanted were added.

    [yinzhengjie@s101 ~]$ ssh s103
    Last login: Fri May 25 18:35:45 2018 from 172.16.30.1
    [yinzhengjie@s103 ~]$ who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    yinzhengjie pts/1 2018-05-25 19:19 (s101)
    [yinzhengjie@s103 ~]$ exit
    logout
    Connection to s103 closed.
    [yinzhengjie@s101 ~]$ who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    [yinzhengjie@s101 ~]$

5>. Use ssh-copy-id to distribute the public key to the DataNode server (172.16.30.104)

    [yinzhengjie@s101 ~]$ ssh-copy-id yinzhengjie@s104
    The authenticity of host 's104 (172.16.30.104)' can't be established.
    ECDSA key fingerprint is fa:25:bc:03:7e:99:eb:12:1e:bc:a8:c9:ce:39:ba:7b.
    Are you sure you want to continue connecting (yes/no)? yes
    /usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
    /usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
    yinzhengjie@s104's password:

    Number of key(s) added: 1

    Now try logging into the machine, with: "ssh 'yinzhengjie@s104'"
    and check to make sure that only the key(s) you wanted were added.

    [yinzhengjie@s101 ~]$ ssh s104
    Last login: Fri May 25 18:35:47 2018 from 172.16.30.1
    [yinzhengjie@s104 ~]$ who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    yinzhengjie pts/1 2018-05-25 19:20 (s101)
    [yinzhengjie@s104 ~]$ exit
    logout
    Connection to s104 closed.
    [yinzhengjie@s101 ~]$ who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    [yinzhengjie@s101 ~]$

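Note: the steps above configure passwordless login for an ordinary user. The procedure for the root user is identical, and it is best to set it up for root as well, because I run shell scripts as root later in this post.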

    [yinzhengjie@s101 ~]$ su
    Password:
    [root@s101 yinzhengjie]# cd
    [root@s101 ~]#
    [root@s101 ~]# ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa
    Generating public/private rsa key pair.
    Created directory '/root/.ssh'.
    Your identification has been saved in /root/.ssh/id_rsa.
    Your public key has been saved in /root/.ssh/id_rsa.pub.
    The key fingerprint is:
    9b:47:9a:ca:d2:f9:a5:57:79:35:40:be:07:3a:ed:40 root@s101
    The key's randomart image is:
    +--[ RSA 2048]----+
    | .. |
    | .. |
    | E o. |
    | . o o..|
    | S .+ + o.|
    | * * o |
    | . .= o. o |
    | ..o. +. |
    | .o.o. |
    +-----------------+
    [root@s101 ~]#
    [root@s101 ~]# ssh-copy-id root@s101
    The authenticity of host 's101 (172.16.30.101)' can't be established.
    ECDSA key fingerprint is fa:25:bc:03:7e:99:eb:12:1e:bc:a8:c9:ce:39:ba:7b.
    Are you sure you want to continue connecting (yes/no)? yes
    /usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
    /usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
    root@s101's password:

    Number of key(s) added: 1

    Now try logging into the machine, with: "ssh 'root@s101'"
    and check to make sure that only the key(s) you wanted were added.

    [root@s101 ~]# ssh s101
    Last login: Fri May 25 19:44:37 2018
    [root@s101 ~]# who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    root pts/1 2018-05-25 19:49 (s101)
    [root@s101 ~]# exit
    logout
    Connection to s101 closed.
    [root@s101 ~]# who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    [root@s101 ~]#

    [root@s101 ~]# ssh-copy-id root@s102
    The authenticity of host 's102 (172.16.30.102)' can't be established.
    ECDSA key fingerprint is fa:25:bc:03:7e:99:eb:12:1e:bc:a8:c9:ce:39:ba:7b.
    Are you sure you want to continue connecting (yes/no)? yes
    /usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
    /usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
    root@s102's password:

    Number of key(s) added: 1

    Now try logging into the machine, with: "ssh 'root@s102'"
    and check to make sure that only the key(s) you wanted were added.

    [root@s101 ~]# ssh s102
    Last login: Fri May 25 09:28:59 2018
    [root@s102 ~]# who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    root pts/1 2018-05-25 19:50 (s101)
    [root@s102 ~]# exit
    logout
    Connection to s102 closed.
    [root@s101 ~]# who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    [root@s101 ~]#

    [root@s101 ~]# ssh-copy-id root@s103
    /usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
    /usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
    root@s103's password:

    Number of key(s) added: 1

    Now try logging into the machine, with: "ssh 'root@s103'"
    and check to make sure that only the key(s) you wanted were added.

    [root@s101 ~]# ssh s103
    Last login: Fri May 25 19:51:44 2018 from s101
    [root@s103 ~]# who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    root pts/1 2018-05-25 19:52 (s101)
    [root@s103 ~]# exit
    logout
    Connection to s103 closed.
    [root@s101 ~]# who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    [root@s101 ~]#

    [root@s101 ~]# ssh-copy-id root@s104
    The authenticity of host 's104 (172.16.30.104)' can't be established.
    ECDSA key fingerprint is fa:25:bc:03:7e:99:eb:12:1e:bc:a8:c9:ce:39:ba:7b.
    Are you sure you want to continue connecting (yes/no)? yes
    /usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
    /usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
    root@s104's password:

    Number of key(s) added: 1

    Now try logging into the machine, with: "ssh 'root@s104'"
    and check to make sure that only the key(s) you wanted were added.

    [root@s101 ~]# ssh s104
    Last login: Fri May 25 09:31:15 2018
    [root@s104 ~]# who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    root pts/1 2018-05-25 19:52 (s101)
    [root@s104 ~]# exit
    logout
    Connection to s104 closed.
    [root@s101 ~]# who
    yinzhengjie pts/0 2018-05-25 18:35 (172.16.30.1)
    [root@s101 ~]#
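The per-host ssh-copy-id steps in this section follow the same pattern for each node, so they could be collapsed into a loop. This is only a sketch: `copy_keys` and the `DRY_RUN` switch are hypothetical names, and by default the loop merely prints the commands it would run for the current user.

```shell
#!/bin/sh
# Sketch: distribute the current user's public key to every node in one loop.
# Assumptions: copy_keys and the DRY_RUN switch are hypothetical; with
# DRY_RUN=1 (the default here) the commands are only printed, not executed.
DRY_RUN="${DRY_RUN:-1}"

copy_keys() {
    user="$(id -un)"
    i=101
    while [ "$i" -le 104 ]; do
        if [ "$DRY_RUN" = "1" ]; then
            echo "ssh-copy-id $user@s$i"
        else
            # Normally prompts for that host's password, as in the sessions above.
            ssh-copy-id "$user@s$i"
        fi
        i=$((i + 1))
    done
}

copy_keys
```

Running it with `DRY_RUN=0` as the ordinary user and then again as root would cover both sets of logins shown above.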

IV. Custom Shell Scripts

1>. Write a script that runs a Linux command on every node (named xcall.sh)

    [yinzhengjie@s101 ~]$ su
    Password:
    [root@s101 yinzhengjie]#
    [root@s101 yinzhengjie]# ll
    total 390272
    drwxrwxr-x. 4 yinzhengjie yinzhengjie        35 May 25 19:08 hadoop
    -rw-r--r--. 1 root        root        214092195 Aug 26  2016 hadoop-2.7.3.tar.gz
    -rw-r--r--. 1 root        root        185540433 May 17  2017 jdk-8u131-linux-x64.tar.gz
    -rw-rw-r--. 1 yinzhengjie yinzhengjie       517 May 25 20:05 xcall.sh
    [root@s101 yinzhengjie]# more xcall.sh
    #!/bin/bash
    #@author :yinzhengjie
    #blog:http://www.cnblogs.com/yinzhengjie
    #EMAIL:[email protected]

    # Check whether the user passed any arguments
    if [ $# -lt 1 ];then
        echo "Please provide a command to run"
        exit 1
    fi

    # Capture the command entered by the user
    cmd=$@

    for (( i=101;i<=104;i++ ))
    do
        # Turn the terminal text green
        tput setaf 2
        echo "============= s$i $cmd ============"
        # Restore the terminal's original color (light gray)
        tput setaf 7
        # Run the command on the remote host
        ssh "s$i" "$cmd"
        # Check whether the command succeeded
        if [ $? -eq 0 ];then
            echo "Command succeeded"
        fi
    done
    [root@s101 yinzhengjie]#
    [root@s101 yinzhengjie]# sh xcall.sh "ln -s /soft/jdk/bin/jps /usr/local/bin/jps"
    ============= s101 ln -s /soft/jdk/bin/jps /usr/local/bin/jps ============
    Command succeeded
    ============= s102 ln -s /soft/jdk/bin/jps /usr/local/bin/jps ============
    Command succeeded
    ============= s103 ln -s /soft/jdk/bin/jps /usr/local/bin/jps ============
    Command succeeded
    ============= s104 ln -s /soft/jdk/bin/jps /usr/local/bin/jps ============
    Command succeeded
    [root@s101 yinzhengjie]# sh xcall.sh jps
    ============= s101 jps ============
    4977 Jps
    Command succeeded
    ============= s102 jps ============
    2854 Jps
    Command succeeded
    ============= s103 jps ============
    2822 Jps
    Command succeeded
    ============= s104 jps ============
    2788 Jps
    Command succeeded
    [root@s101 yinzhengjie]#
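xcall.sh only prints a message when a command succeeds, so a failing host is easy to overlook. Here is a sketch of a variant that reports failures too and returns a non-zero status when any host fails. The names `xcall_report` and `RUNNER` are hypothetical; `RUNNER` defaults to ssh and can be swapped for a stub function to try the loop without a cluster.

```shell
#!/bin/sh
# Sketch: run a command on every node and report failures as well as successes.
# Assumptions: xcall_report and the RUNNER variable are hypothetical; RUNNER
# defaults to ssh and can be replaced by a stub to try the loop without a cluster.
RUNNER="${RUNNER:-ssh}"

xcall_report() {
    failed=0
    i=101
    while [ "$i" -le 104 ]; do
        # ssh exits with the remote command's status, or 255 on connection errors.
        if "$RUNNER" "s$i" "$@"; then
            echo "s$i: ok"
        else
            echo "s$i: failed"
            failed=$((failed + 1))
        fi
        i=$((i + 1))
    done
    # Non-zero return value when at least one host failed.
    [ "$failed" -eq 0 ]
}
```

With the passwordless logins from the previous section in place, `xcall_report jps` would run jps on s101 through s104 and list which hosts succeeded.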

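2>. Write a remote synchronization script (named xrsync.sh)

Before writing the sync script, make sure rsync is installed on every node. Check whether each virtual machine can reach the Internet; if it can, install rsync with yum. Provided every VM has network access and a correctly configured yum repository, you can also use the script above (xcall.sh) to run the installation on all nodes in one go.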

    [root@s101 yinzhengjie]# sh xcall.sh "yum -y install rsync"
    ============= s101 yum -y install rsync ============
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
     * base: mirrors.aliyun.com
     * extras: mirrors.huaweicloud.com
     * updates: mirrors.huaweicloud.com
    Resolving Dependencies
    --> Running transaction check
    ---> Package rsync.x86_64 0:3.1.2-4.el7 will be installed
    --> Finished Dependency Resolution

    Dependencies Resolved

    ================================================================================
     Package        Arch            Version                Repository          Size
    ================================================================================
    Installing:
     rsync          x86_64          3.1.2-4.el7            base               403 k

    Transaction Summary
    ================================================================================
    Install  1 Package

    Total download size: 403 k
    Installed size: 815 k
    Downloading packages:
    Running transaction check
    Running transaction test
    Transaction test succeeded
    Running transaction
      Installing : rsync-3.1.2-4.el7.x86_64                                     1/1
      Verifying  : rsync-3.1.2-4.el7.x86_64                                     1/1

    Installed:
      rsync.x86_64 0:3.1.2-4.el7

    Complete!
    Command succeeded
    ============= s102 yum -y install rsync ============
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
     * base: mirrors.aliyun.com
     * extras: mirrors.huaweicloud.com
     * updates: mirrors.huaweicloud.com
    Resolving Dependencies
    --> Running transaction check
    ---> Package rsync.x86_64 0:3.1.2-4.el7 will be installed
    --> Finished Dependency Resolution

    Dependencies Resolved

    ================================================================================
     Package        Arch            Version                Repository          Size
    ================================================================================
    Installing:
     rsync          x86_64          3.1.2-4.el7            base               403 k

    Transaction Summary
    ================================================================================
    Install  1 Package

    Total download size: 403 k
    Installed size: 815 k
    Downloading packages:
    Running transaction check
    Running transaction test
    Transaction test succeeded
    Running transaction
      Installing : rsync-3.1.2-4.el7.x86_64                                     1/1
      Verifying  : rsync-3.1.2-4.el7.x86_64                                     1/1

    Installed:
      rsync.x86_64 0:3.1.2-4.el7

    Complete!
    Command succeeded
    ============= s103 yum -y install rsync ============
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
     * base: mirrors.aliyun.com
     * extras: mirrors.huaweicloud.com
     * updates: mirrors.huaweicloud.com
    Resolving Dependencies
    --> Running transaction check
    ---> Package rsync.x86_64 0:3.1.2-4.el7 will be installed
    --> Finished Dependency Resolution

    Dependencies Resolved

    ================================================================================
     Package        Arch            Version                Repository          Size
    ================================================================================
    Installing:
     rsync          x86_64          3.1.2-4.el7            base               403 k

    Transaction Summary
    ================================================================================
    Install  1 Package

    Total download size: 403 k
    Installed size: 815 k
    Downloading packages:
    Running transaction check
    Running transaction test
    Transaction test succeeded
    Running transaction
      Installing : rsync-3.1.2-4.el7.x86_64                                     1/1
      Verifying  : rsync-3.1.2-4.el7.x86_64                                     1/1

    Installed:
      rsync.x86_64 0:3.1.2-4.el7

    Complete!
    Command succeeded
    ============= s104 yum -y install rsync ============
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
     * base: mirrors.aliyun.com
     * extras: mirrors.huaweicloud.com
     * updates: mirrors.huaweicloud.com
    Resolving Dependencies
    --> Running transaction check
    ---> Package rsync.x86_64 0:3.1.2-4.el7 will be installed
    --> Finished Dependency Resolution

    Dependencies Resolved

    ================================================================================
     Package        Arch            Version                Repository          Size
    ================================================================================
    Installing:
     rsync          x86_64          3.1.2-4.el7            base               403 k

    Transaction Summary
    ================================================================================
    Install  1 Package

    Total download size: 403 k
    Installed size: 815 k
    Downloading packages:
    Running transaction check
    Running transaction test
    Transaction test succeeded
    Running transaction
      Installing : rsync-3.1.2-4.el7.x86_64                                     1/1
      Verifying  : rsync-3.1.2-4.el7.x86_64                                     1/1

    Installed:
      rsync.x86_64 0:3.1.2-4.el7

    Complete!
    Command succeeded
    [root@s101 yinzhengjie]#

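    #!/bin/bash
    #@author :yinzhengjie
    #blog:http://www.cnblogs.com/yinzhengjie
    #EMAIL:[email protected]

    # Check whether the user passed any arguments
    if [ $# -lt 1 ];then
        echo "Please provide a file or directory to sync"
        exit 1
    fi

    # Path of the file or directory to sync
    file=$1

    # Last path component (the file or directory name)
    filename=$(basename "$file")

    # Parent directory
    dirpath=$(dirname "$file")

    # Resolve the parent directory to its full physical path
    cd "$dirpath" || exit 1
    fullpath=$(pwd -P)

    # Sync the file to the DataNodes
    for (( i=102;i<=104;i++ ))
    do
        # Turn the terminal text green
        tput setaf 2
        echo "=========== s$i $file ==========="
        # Restore the terminal's original color (light gray)
        tput setaf 7
        # Copy into the same directory on the remote host
        # (-l copies symlinks as symlinks, -r recurses into directories)
        rsync -lr "$filename" "$(whoami)@s$i:$fullpath"
        # Check whether the command succeeded
        if [ $? -eq 0 ];then
            echo "Command succeeded"
        fi
    done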
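xrsync.sh derives the rsync source and destination from three pieces: the last path component, the parent directory, and the parent's physical path with symlinks resolved. The sketch below shows what each piece looks like. It builds a throwaway tree in a temporary directory whose layout (a `hadoop` symlink pointing at `hadoop-2.7.3`) only mimics /soft on the nodes, and `resolve` is a hypothetical helper.

```shell
#!/bin/sh
# Sketch: how basename, dirname and "pwd -P" split and resolve a path.
# Assumptions: the demo builds a throwaway tree in a temp directory; the layout
# (a "hadoop" symlink pointing at "hadoop-2.7.3") mimics /soft on the nodes.
root="$(cd "$(mktemp -d)" && pwd -P)"
mkdir -p "$root/hadoop-2.7.3/etc/full"
ln -s "$root/hadoop-2.7.3" "$root/hadoop"

resolve() {
    # Prints "<physical parent directory> <last path component>" for $1.
    name="$(basename "$1")"
    parent="$(cd "$(dirname "$1")" && pwd -P)"
    echo "$parent $name"
}

resolve "$root/hadoop/etc/full/"
```

Because `pwd -P` follows the symlink, the printed parent directory is the one under `hadoop-2.7.3`, which is the directory the files would be copied into on the remote side.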

3>. Make the custom scripts executable and copy them into a directory on the PATH

    [yinzhengjie@s101 ~]$ su
    Password:
    [root@s101 yinzhengjie]# ll
    total 390276
    drwxrwxr-x. 4 yinzhengjie yinzhengjie        35 May 25 19:08 hadoop
    -rw-r--r--. 1 root        root        214092195 Aug 26  2016 hadoop-2.7.3.tar.gz
    -rw-r--r--. 1 root        root        185540433 May 17  2017 jdk-8u131-linux-x64.tar.gz
    -rw-rw-r--. 1 yinzhengjie yinzhengjie       517 May 25 20:05 xcall.sh
    -rw-rw-r--. 1 yinzhengjie yinzhengjie       700 May 25 20:32 xrsync.sh
    [root@s101 yinzhengjie]# chmod 755 xcall.sh xrsync.sh
    [root@s101 yinzhengjie]# ll
    total 390276
    drwxrwxr-x. 4 yinzhengjie yinzhengjie        35 May 25 19:08 hadoop
    -rw-r--r--. 1 root        root        214092195 Aug 26  2016 hadoop-2.7.3.tar.gz
    -rw-r--r--. 1 root        root        185540433 May 17  2017 jdk-8u131-linux-x64.tar.gz
    -rwxr-xr-x. 1 yinzhengjie yinzhengjie       517 May 25 20:05 xcall.sh
    -rwxr-xr-x. 1 yinzhengjie yinzhengjie       700 May 25 20:32 xrsync.sh
    [root@s101 yinzhengjie]#
    [root@s101 yinzhengjie]# cp xcall.sh xrsync.sh /usr/local/bin/
    [root@s101 yinzhengjie]# xcall.sh "ip addr | grep global "
    ============= s101 ip addr | grep global ============
    inet 172.16.30.101/24 brd 172.16.30.255 scope global eno16777736
    Command succeeded
    ============= s102 ip addr | grep global ============
    inet 172.16.30.102/24 brd 172.16.30.255 scope global eno16777736
    Command succeeded
    ============= s103 ip addr | grep global ============
    inet 172.16.30.103/24 brd 172.16.30.255 scope global eno16777736
    Command succeeded
    ============= s104 ip addr | grep global ============
    inet 172.16.30.104/24 brd 172.16.30.255 scope global eno16777736
    Command succeeded
    [root@s101 yinzhengjie]#
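The reason the scripts can now be called by name is that they are executable and live in a directory listed in PATH. A small sketch of that mechanism, assuming a temporary directory stands in for /usr/local/bin and using a hypothetical script named `hello.sh`:

```shell
#!/bin/sh
# Sketch: a script becomes callable by name once it is executable and its
# directory is on PATH. Assumptions: a temp directory stands in for
# /usr/local/bin, and hello.sh is a hypothetical script.
bindir="$(mktemp -d)"
printf '#!/bin/sh\necho hello from hello.sh\n' > "$bindir/hello.sh"
chmod 755 "$bindir/hello.sh"

PATH="$bindir:$PATH"
# Show where the shell finds the script, then run it by name.
command -v hello.sh
hello.sh
```

On the nodes, `command -v xcall.sh` is a quick way to confirm that the copy in /usr/local/bin is the one being picked up.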

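V. Starting the Services and Verifying the Deployment

1>. Synchronize the configuration files with the custom script

    [yinzhengjie@s101 ~]$ xrsync.sh /soft/hadoop/etc/full/
    =========== s102 /soft/hadoop/etc/full/ ===========
    Command succeeded
    =========== s103 /soft/hadoop/etc/full/ ===========
    Command succeeded
    =========== s104 /soft/hadoop/etc/full/ ===========
    Command succeeded
    [yinzhengjie@s101 ~]$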

2>. Repoint the symbolic link on s102 through s104 (xcall.sh also re-applies it on s101)

    [yinzhengjie@s101 ~]$ xcall.sh "ln -sfT /soft/hadoop/etc/full/ /soft/hadoop/etc/hadoop"
    ============= s101 ln -sfT /soft/hadoop/etc/full/ /soft/hadoop/etc/hadoop ============
    Command succeeded
    ============= s102 ln -sfT /soft/hadoop/etc/full/ /soft/hadoop/etc/hadoop ============
    Command succeeded
    ============= s103 ln -sfT /soft/hadoop/etc/full/ /soft/hadoop/etc/hadoop ============
    Command succeeded
    ============= s104 ln -sfT /soft/hadoop/etc/full/ /soft/hadoop/etc/hadoop ============
    Command succeeded
    [yinzhengjie@s101 ~]$

3>. Format the file system

[yinzhengjie@s101 ~]$ hdfs namenode -format 18/05/25 21:04:21 INFO namenode.NameNode: STARTUP_MSG: /************************************************************ STARTUP_MSG: Starting NameNode STARTUP_MSG: host = s101/172.16.30.101 STARTUP_MSG: args = [-format] STARTUP_MSG: version = 2.7.3 STARTUP_MSG: classpath = /soft/hadoop-2.7.3/etc/hadoop:/soft/hadoop-2.7.3/share/hadoop/common/lib/jaxb-impl-2.2.3-1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jaxb-api-2.2.2.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/stax-api-1.0-2.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/activation-1.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jackson-core-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jackson-mapper-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jackson-jaxrs-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jackson-xc-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jersey-server-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/asm-3.2.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/log4j-1.2.17.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jets3t-0.9.0.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/httpclient-4.2.5.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/httpcore-4.2.5.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/java-xmlbuilder-0.4.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-lang-2.6.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-configuration-1.6.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-digester-1.8.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-beanutils-1.7.0.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-beanutils-core-1.8.0.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/slf4j-api-1.7.10.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/avro-1.7.4.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/paranamer-2.3.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/snappy-java-1.0.4.1.jar:/soft/hadoop-2.7.3/share
/hadoop/common/lib/commons-compress-1.4.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/xz-1.0.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/protobuf-java-2.5.0.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/gson-2.2.4.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/hadoop-auth-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/apacheds-kerberos-codec-2.0.0-M15.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/apacheds-i18n-2.0.0-M15.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/api-asn1-api-1.0.0-M20.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/api-util-1.0.0-M20.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/zookeeper-3.4.6.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/netty-3.6.2.Final.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/curator-framework-2.7.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/curator-client-2.7.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jsch-0.1.42.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/curator-recipes-2.7.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/htrace-core-3.1.0-incubating.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/junit-4.11.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/hamcrest-core-1.3.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/mockito-all-1.8.5.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/hadoop-annotations-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/guava-11.0.2.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jsr305-3.0.0.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-cli-1.2.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-math3-3.1.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/xmlenc-0.52.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-httpclient-3.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-logging-1.1.3.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-codec-1.4.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-io-2.4.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-net-3.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-co
llections-3.2.2.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/servlet-api-2.5.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jetty-6.1.26.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jetty-util-6.1.26.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jsp-api-2.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jersey-core-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jersey-json-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jettison-1.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3-tests.jar:/soft/hadoop-2.7.3/share/hadoop/common/hadoop-nfs-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-codec-1.4.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/log4j-1.2.17.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-logging-1.1.3.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/netty-3.6.2.Final.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/guava-11.0.2.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jsr305-3.0.0.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-cli-1.2.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/xmlenc-0.52.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-io-2.4.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/servlet-api-2.5.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jetty-6.1.26.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jetty-util-6.1.26.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jersey-core-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jackson-core-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jackson-mapper-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jersey-server-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/asm-3.2.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-lang-2.6.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/protobuf-java-2.5.0.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/htrace-core-3.1.0-incubating.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-daemon-1.0.13.jar:/soft/
hadoop-2.7.3/share/hadoop/hdfs/lib/netty-all-4.0.23.Final.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/xercesImpl-2.9.1.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/xml-apis-1.3.04.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/leveldbjni-all-1.8.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/hadoop-hdfs-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/hadoop-hdfs-2.7.3-tests.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/hadoop-hdfs-nfs-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/zookeeper-3.4.6-tests.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-lang-2.6.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/guava-11.0.2.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jsr305-3.0.0.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-logging-1.1.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/protobuf-java-2.5.0.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-cli-1.2.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/log4j-1.2.17.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jaxb-api-2.2.2.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/stax-api-1.0-2.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/activation-1.1.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-compress-1.4.1.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/xz-1.0.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/servlet-api-2.5.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-codec-1.4.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jetty-util-6.1.26.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-core-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-client-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-core-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-mapper-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-jaxrs-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-xc-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/guice-servlet-3.0.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/guice-3.0.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/javax.inject-1.jar:/soft/hado
op-2.7.3/share/hadoop/yarn/lib/aopalliance-1.0.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-io-2.4.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-server-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/asm-3.2.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-json-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jettison-1.1.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jaxb-impl-2.2.3-1.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-guice-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/zookeeper-3.4.6.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/netty-3.6.2.Final.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/leveldbjni-all-1.8.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-collections-3.2.2.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jetty-6.1.26.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-api-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-common-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-common-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-nodemanager-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-web-proxy-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-applicationhistoryservice-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-resourcemanager-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-tests-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-client-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-sharedcachemanager-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-applications-distributedshell-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-applications-unmanaged-am-launcher-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-registry-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/protobuf-java-2.5.0.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/avro-1.7.4.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/jackson-core-asl-1.9.13.jar:/so
ft/hadoop-2.7.3/share/hadoop/mapreduce/lib/jackson-mapper-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/paranamer-2.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/snappy-java-1.0.4.1.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/commons-compress-1.4.1.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/xz-1.0.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/hadoop-annotations-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/commons-io-2.4.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/jersey-core-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/jersey-server-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/asm-3.2.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/log4j-1.2.17.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/netty-3.6.2.Final.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/leveldbjni-all-1.8.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/guice-3.0.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/javax.inject-1.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/aopalliance-1.0.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/jersey-guice-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/guice-servlet-3.0.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/junit-4.11.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/hamcrest-core-1.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-core-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-common-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-shuffle-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-app-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-plugins-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar:/soft/hadoop-2.7.3
/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.3-tests.jar:/contrib/capacity-scheduler/*.jar STARTUP_MSG: build = https://git-wip-us.apache.org/repos/asf/hadoop.git -r baa91f7c6bc9cb92be5982de4719c1c8af91ccff; compiled by 'root' on 2016-08-18T01:41Z STARTUP_MSG: java = 1.8.0_131 ************************************************************/ 18/05/25 21:04:21 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT] 18/05/25 21:04:21 INFO namenode.NameNode: createNameNode [-format] Formatting using clusterid: CID-4be4a490-3e1b-4675-b613-d37bb9f15790 18/05/25 21:04:22 INFO namenode.FSNamesystem: No KeyProvider found. 18/05/25 21:04:22 INFO namenode.FSNamesystem: fsLock is fair:true 18/05/25 21:04:22 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=1000 18/05/25 21:04:22 INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true 18/05/25 21:04:22 INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000 18/05/25 21:04:22 INFO blockmanagement.BlockManager: The block deletion will start around 2018 May 25 21:04:22 18/05/25 21:04:22 INFO util.GSet: Computing capacity for map BlocksMap 18/05/25 21:04:22 INFO util.GSet: VM type = 64-bit 18/05/25 21:04:22 INFO util.GSet: 2.0% max memory 889 MB = 17.8 MB 18/05/25 21:04:22 INFO util.GSet: capacity = 2^21 = 2097152 entries 18/05/25 21:04:22 INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false 18/05/25 21:04:22 INFO blockmanagement.BlockManager: defaultReplication = 3 18/05/25 21:04:22 INFO blockmanagement.BlockManager: maxReplication = 512 18/05/25 21:04:22 INFO blockmanagement.BlockManager: minReplication = 1 18/05/25 21:04:22 INFO blockmanagement.BlockManager: maxReplicationStreams = 2 18/05/25 21:04:22 INFO blockmanagement.BlockManager: replicationRecheckInterval = 3000 18/05/25 21:04:22 INFO blockmanagement.BlockManager: encryptDataTransfer = false 18/05/25 21:04:22 INFO 
blockmanagement.BlockManager: maxNumBlocksToLog = 1000 18/05/25 21:04:22 INFO namenode.FSNamesystem: fsOwner = yinzhengjie (auth:SIMPLE) 18/05/25 21:04:22 INFO namenode.FSNamesystem: supergroup = supergroup 18/05/25 21:04:22 INFO namenode.FSNamesystem: isPermissionEnabled = true 18/05/25 21:04:22 INFO namenode.FSNamesystem: HA Enabled: false 18/05/25 21:04:22 INFO namenode.FSNamesystem: Append Enabled: true 18/05/25 21:04:22 INFO util.GSet: Computing capacity for map INodeMap 18/05/25 21:04:22 INFO util.GSet: VM type = 64-bit 18/05/25 21:04:22 INFO util.GSet: 1.0% max memory 889 MB = 8.9 MB 18/05/25 21:04:22 INFO util.GSet: capacity = 2^20 = 1048576 entries 18/05/25 21:04:22 INFO namenode.FSDirectory: ACLs enabled? false 18/05/25 21:04:22 INFO namenode.FSDirectory: XAttrs enabled? true 18/05/25 21:04:22 INFO namenode.FSDirectory: Maximum size of an xattr: 16384 18/05/25 21:04:22 INFO namenode.NameNode: Caching file names occuring more than 10 times 18/05/25 21:04:22 INFO util.GSet: Computing capacity for map cachedBlocks 18/05/25 21:04:22 INFO util.GSet: VM type = 64-bit 18/05/25 21:04:22 INFO util.GSet: 0.25% max memory 889 MB = 2.2 MB 18/05/25 21:04:22 INFO util.GSet: capacity = 2^18 = 262144 entries 18/05/25 21:04:22 INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033 18/05/25 21:04:22 INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes = 0 18/05/25 21:04:22 INFO namenode.FSNamesystem: dfs.namenode.safemode.extension = 30000 18/05/25 21:04:22 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets = 10 18/05/25 21:04:22 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users = 10 18/05/25 21:04:22 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = 1,5,25 18/05/25 21:04:22 INFO namenode.FSNamesystem: Retry cache on namenode is enabled 18/05/25 21:04:22 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis 
18/05/25 21:04:22 INFO util.GSet: Computing capacity for map NameNodeRetryCache 18/05/25 21:04:22 INFO util.GSet: VM type = 64-bit 18/05/25 21:04:22 INFO util.GSet: 0.029999999329447746% max memory 889 MB = 273.1 KB 18/05/25 21:04:22 INFO util.GSet: capacity = 2^15 = 32768 entries 18/05/25 21:04:22 INFO namenode.FSImage: Allocated new BlockPoolId: BP-692948037-172.16.30.101-1527307462569 18/05/25 21:04:22 INFO common.Storage: Storage directory /home/yinzhengjie/hadoop/dfs/name has been successfully formatted. 18/05/25 21:04:22 INFO namenode.FSImageFormatProtobuf: Saving image file /home/yinzhengjie/hadoop/dfs/name/current/fsimage.ckpt_0000000000000000000 using no compression 18/05/25 21:04:22 INFO namenode.FSImageFormatProtobuf: Image file /home/yinzhengjie/hadoop/dfs/name/current/fsimage.ckpt_0000000000000000000 of size 358 bytes saved in 0 seconds. 18/05/25 21:04:22 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0 18/05/25 21:04:22 INFO util.ExitUtil: Exiting with status 0 18/05/25 21:04:22 INFO namenode.NameNode: SHUTDOWN_MSG: /************************************************************ SHUTDOWN_MSG: Shutting down NameNode at s101/172.16.30.101 ************************************************************/ [yinzhengjie@s101 ~]$ echo $? 0 [yinzhengjie@s101 ~]$

4>.Start Hadoop

[yinzhengjie@s101 ~]$ start-all.sh
This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh
Starting namenodes on [s101]
s101: starting namenode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-namenode-s101.out
s102: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-s102.out
s104: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-s104.out
s103: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-s103.out
Starting secondary namenodes [0.0.0.0]
0.0.0.0: starting secondarynamenode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-secondarynamenode-s101.out
starting yarn daemons
starting resourcemanager, logging to /soft/hadoop-2.7.3/logs/yarn-yinzhengjie-resourcemanager-s101.out
s103: starting nodemanager, logging to /soft/hadoop-2.7.3/logs/yarn-yinzhengjie-nodemanager-s103.out
s104: starting nodemanager, logging to /soft/hadoop-2.7.3/logs/yarn-yinzhengjie-nodemanager-s104.out
s102: starting nodemanager, logging to /soft/hadoop-2.7.3/logs/yarn-yinzhengjie-nodemanager-s102.out
[yinzhengjie@s101 ~]$

5>.Use a custom script to verify that the NameNode and DataNodes have started correctly

[yinzhengjie@s101 ~]$ xcall.sh jps
============= s101 jps ============
7593 NameNode
7946 ResourceManager
8220 Jps
7789 SecondaryNameNode
命令执行成功
============= s102 jps ============
3344 NodeManager
3241 DataNode
3453 Jps
命令执行成功
============= s103 jps ============
3296 NodeManager
3193 DataNode
3406 Jps
命令执行成功
============= s104 jps ============
3395 Jps
3286 NodeManager
3183 DataNode
命令执行成功
[yinzhengjie@s101 ~]$
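Checking the jps output by eye gets tedious as the cluster grows. The following is a minimal Python sketch, not part of the original setup, that parses text in the same layout as the xcall.sh output above and reports any daemon that is missing on a host. The names `EXPECTED`, `parse_xcall_jps` and `missing_daemons` are hypothetical, and the header format is assumed to match the transcript shown here.

```python
import re

# Daemons expected on each host, following the layout used in this post:
# one NameNode host (s101) and three DataNode hosts (s102-s104).
EXPECTED = {
    "s101": {"NameNode", "SecondaryNameNode", "ResourceManager"},
    "s102": {"DataNode", "NodeManager"},
    "s103": {"DataNode", "NodeManager"},
    "s104": {"DataNode", "NodeManager"},
}

# Sample text copied from the transcript above.
SAMPLE = """\
============= s101 jps ============
7593 NameNode
7946 ResourceManager
8220 Jps
7789 SecondaryNameNode
命令执行成功
============= s102 jps ============
3344 NodeManager
3241 DataNode
3453 Jps
命令执行成功
============= s103 jps ============
3296 NodeManager
3193 DataNode
3406 Jps
命令执行成功
============= s104 jps ============
3395 Jps
3286 NodeManager
3183 DataNode
命令执行成功
"""


def parse_xcall_jps(text):
    """Return {host: set of Java process names}, ignoring the Jps tool itself."""
    daemons = {}
    host = None
    for raw in text.splitlines():
        line = raw.strip()
        header = re.match(r"^=+\s+(\S+)\s+jps\s+=+$", line)
        if header:
            host = header.group(1)
            daemons[host] = set()
            continue
        proc = re.match(r"^(\d+)\s+(\S+)$", line)
        if proc and host is not None and proc.group(2) != "Jps":
            daemons[host].add(proc.group(2))
    return daemons


def missing_daemons(daemons, expected=EXPECTED):
    """Return {host: sorted list of expected daemons that are not running}."""
    result = {}
    for host, wanted in expected.items():
        absent = wanted - daemons.get(host, set())
        if absent:
            result[host] = sorted(absent)
    return result


if __name__ == "__main__":
    problems = missing_daemons(parse_xcall_jps(SAMPLE))
    print(problems if problems else "all expected daemons are running")
```

In practice the sample text would be replaced by the captured output of `xcall.sh jps`; lines that are not of the form "pid name", such as the script's status message, are simply skipped.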

6>.Upload files to the cluster

[yinzhengjie@s101 ~]$ netstat -tnlp | grep "java"
(Not all processes could be identified, non-owned process info will not be shown, you would have to be root to see it all.)
tcp 0 0 0.0.0.0:50090 0.0.0.0:* LISTEN 7789/java
tcp 0 0 172.16.30.101:8020 0.0.0.0:* LISTEN 7593/java
tcp 0 0 0.0.0.0:50070 0.0.0.0:* LISTEN 7593/java
tcp6 0 0 172.16.30.101:8088 :::* LISTEN 7946/java
tcp6 0 0 172.16.30.101:8030 :::* LISTEN 7946/java
tcp6 0 0 172.16.30.101:8031 :::* LISTEN 7946/java
tcp6 0 0 172.16.30.101:8032 :::* LISTEN 7946/java
tcp6 0 0 172.16.30.101:8033 :::* LISTEN 7946/java
[yinzhengjie@s101 ~]$
[yinzhengjie@s101 ~]$ hdfs dfs -put xcall.sh xrsync.sh /
[yinzhengjie@s101 ~]$ hdfs dfs -ls /
Found 2 items
-rw-r--r-- 3 yinzhengjie supergroup 517 2018-05-25 21:17 /xcall.sh
-rw-r--r-- 3 yinzhengjie supergroup 700 2018-05-25 21:17 /xrsync.sh
[yinzhengjie@s101 ~]$
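The netstat listing above can also be checked mechanically. The sketch below is an illustration only: it parses netstat-style lines and confirms that the ports this cluster relies on are listening. The port-to-service mapping reflects the Hadoop 2.x defaults visible in the output (8020 comes from fs.defaultFS in core-site.xml); the names `REQUIRED_PORTS`, `listening_ports` and `missing_ports` are hypothetical.

```python
# Ports seen in the netstat output above and the services that own them
# under Hadoop 2.x defaults.
REQUIRED_PORTS = {
    8020: "NameNode RPC (fs.defaultFS)",
    50070: "NameNode web UI",
    50090: "SecondaryNameNode web UI",
    8088: "ResourceManager web UI",
}

# Sample text copied from the transcript above.
SAMPLE = """\
(Not all processes could be identified, non-owned process info will not be shown, you would have to be root to see it all.)
tcp 0 0 0.0.0.0:50090 0.0.0.0:* LISTEN 7789/java
tcp 0 0 172.16.30.101:8020 0.0.0.0:* LISTEN 7593/java
tcp 0 0 0.0.0.0:50070 0.0.0.0:* LISTEN 7593/java
tcp6 0 0 172.16.30.101:8088 :::* LISTEN 7946/java
tcp6 0 0 172.16.30.101:8030 :::* LISTEN 7946/java
tcp6 0 0 172.16.30.101:8031 :::* LISTEN 7946/java
tcp6 0 0 172.16.30.101:8032 :::* LISTEN 7946/java
tcp6 0 0 172.16.30.101:8033 :::* LISTEN 7946/java
"""


def listening_ports(text):
    """Return the set of TCP ports in LISTEN state found in netstat -tnlp output."""
    ports = set()
    for line in text.splitlines():
        fields = line.split()
        # Expected columns: proto, recv-q, send-q, local address, foreign address, state, pid/program
        if len(fields) >= 6 and fields[0].startswith("tcp") and fields[5] == "LISTEN":
            port_text = fields[3].rsplit(":", 1)[1]
            ports.add(int(port_text))
    return ports


def missing_ports(text, required=REQUIRED_PORTS):
    """Return the required ports that are not listening, in ascending order."""
    found = listening_ports(text)
    return sorted(port for port in required if port not in found)


if __name__ == "__main__":
    for port in missing_ports(SAMPLE):
        print("not listening:", port, REQUIRED_PORTS[port])
```

If port 50070 appears in the result, the NameNode web UI will not be reachable from a browser, which is worth ruling out before the UI check in the next step.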

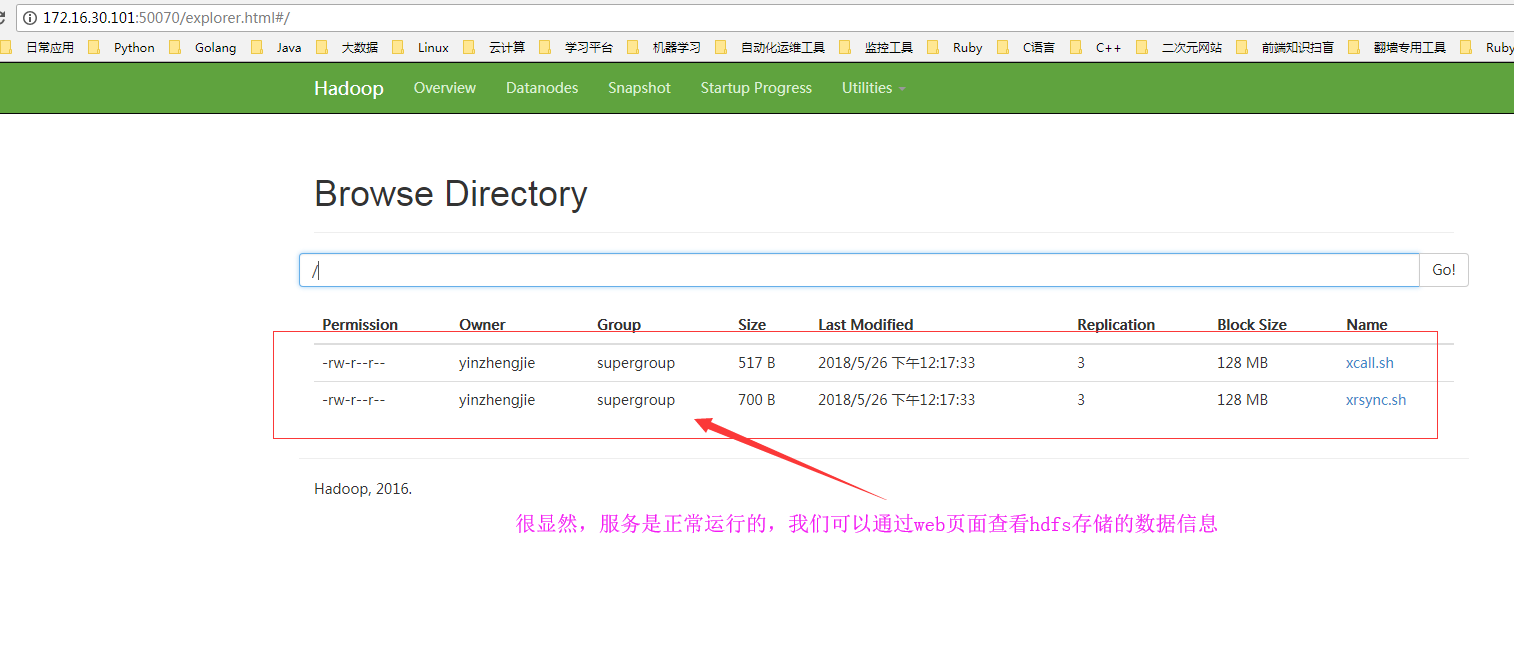

7>.Check from a client that the Hadoop web UI is reachable

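V. Common Hadoop parameters

Hadoop has a large number of parameters, and most of their defaults are only really suited to standalone mode. They usually also work in fully distributed mode, but they are far from optimal there. The parameters below are the ones most often tuned in production. They are listed under their Hadoop 1.x names; Hadoop 2.x still accepts most of them as deprecated aliases (for example, dfs.name.dir maps to dfs.namenode.name.dir and dfs.data.dir maps to dfs.datanode.data.dir).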

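1>.dfs.name.dir

Purpose: the local directory where the NameNode stores HDFS metadata; a suggested value is /home/hadoop/dfs/name.

2>.dfs.data.dir

Purpose: the local directory where each DataNode stores HDFS data blocks; a suggested value is /home/hadoop/dfs/data.

3>.mapred.system.dir

Purpose: the HDFS directory that holds shared MapReduce system files; a suggested value is /hadoop/mapred/system.

4>.mapred.local.dir

Purpose: the local directory where TaskTracker nodes store temporary data.

5>.mapred.tasktracker.{map|reduce}.tasks.maximum

Purpose: the maximum number of map or reduce tasks that may run concurrently on one TaskTracker.

6>.hadoop.tmp.dir

Purpose: the Hadoop temporary directory.

7>.mapred.child.java.opts

Purpose: the JVM options for each child task, normally used to set the heap size a task may request; a suggested value is -Xmx512m.

8>.mapred.reduce.tasks

Purpose: the number of reduce tasks per job.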

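Most of the directory parameters above accept several comma-separated directories. For dfs.name.dir in particular, multiple directories provide redundancy for the metadata. On a DataNode with several disks, giving dfs.data.dir multiple values spreads the data across the disks and speeds up I/O through parallelism. Specifying multiple directories for mapred.local.dir gives a similar speed-up.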
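As an illustration of the comma-separated form, the following Python sketch builds the corresponding `<property>` elements with the standard library's xml.etree.ElementTree. It is not taken from the original setup: the /data1 and /data2 mount points are hypothetical, and the helper names `build_property` and `build_configuration` are made up for this example.

```python
import xml.etree.ElementTree as ET

# Hypothetical directories on two separate disks; adjust to the real mount points.
SETTINGS = {
    "dfs.name.dir": ["/data1/dfs/name", "/data2/dfs/name"],
    "dfs.data.dir": ["/data1/dfs/data", "/data2/dfs/data"],
    "mapred.local.dir": ["/data1/mapred/local", "/data2/mapred/local"],
}


def build_property(name, directories):
    """Build one <property> element whose value is a comma-separated directory list."""
    prop = ET.Element("property")
    ET.SubElement(prop, "name").text = name
    # No spaces around the commas, so each entry is read as an exact path.
    ET.SubElement(prop, "value").text = ",".join(directories)
    return prop


def build_configuration(settings):
    """Wrap all properties in a <configuration> root, as in the *-site.xml files."""
    root = ET.Element("configuration")
    for name, directories in settings.items():
        root.append(build_property(name, directories))
    return root


config = build_configuration(SETTINGS)

if __name__ == "__main__":
    print(ET.tostring(config, encoding="unicode"))
```

The printed properties would be split between hdfs-site.xml (the dfs.* entries) and mapred-site.xml (the mapred.* entry) rather than placed in a single file.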

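In addition, the hadoop.tmp.dir path differs from user to user, because the default is /tmp/hadoop-${user.name}. It is better not to let this path depend on the user; for example, it can be placed in a shared location that every user can reach. On Linux the default lives under /tmp, which is indeed a shared location, but the file system holding /tmp is often subject to quotas and usually has limited space, so it is far from ideal. Point hadoop.tmp.dir at a directory on a file system with plenty of free space instead.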

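By default, Hadoop runs up to four tasks on each TaskTracker (two map tasks and two reduce tasks). This is configured through mapred.tasktracker.{map|reduce}.tasks.maximum. A common recommendation is to set the count equal to the number of CPU cores or to twice that number, but the best value depends on many factors, and for CPU-bound workloads the maximum should not be set too high. Besides CPU, also consider the heap each task is allowed to use: by default Hadoop caps the heap of each task at 200 MB, and because the tasks of a job may each request a large heap, an overly high setting can produce unexpected results.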
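The interaction between the CPU rule of thumb and the per-task heap can be made concrete with a small calculation. The Python sketch below is a rough heuristic written for this post, not a Hadoop formula: it takes the smaller of the CPU-based limit and the number of task heaps that fit into the memory set aside for tasks. The function name `suggest_task_slots` and the `task_memory_mb` budget are assumptions, and a real JVM uses more memory than its heap alone.

```python
def suggest_task_slots(cpu_cores, task_memory_mb, child_heap_mb=200, cores_factor=1.0):
    """Estimate how many tasks one TaskTracker can run at the same time.

    cpu_cores      -- number of CPU cores on the node
    task_memory_mb -- memory (MB) the operator is willing to give to task JVMs
    child_heap_mb  -- heap per task, i.e. the -Xmx value in mapred.child.java.opts
    cores_factor   -- 1.0 for "one task per core", 2.0 for "two tasks per core"
    """
    by_cpu = int(cpu_cores * cores_factor)
    by_memory = task_memory_mb // child_heap_mb
    # Never suggest fewer than one slot, even on a very small node.
    return max(1, min(by_cpu, by_memory))


if __name__ == "__main__":
    # 4 cores, 2 GB for tasks, default 200 MB heap: the CPU is the limit.
    print(suggest_task_slots(4, 2048))
    # 8 cores at two tasks per core, but only 1 GB for tasks at 512 MB each:
    # memory is the limit.
    print(suggest_task_slots(8, 1024, child_heap_mb=512, cores_factor=2.0))
```

The result is a total for the node; how it is divided between mapred.tasktracker.map.tasks.maximum and mapred.tasktracker.reduce.tasks.maximum still depends on the workload.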

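Each MapReduce job can set the number of reduce tasks it runs through mapred.reduce.tasks, and it is usually worth choosing a default that works well in most scenarios. Hadoop's own default is 1, which performs acceptably for many jobs. In practice, the usual advice is to set the value to 0.95 or 1.75 times the total number of reduce tasks the cluster can run at once. With a factor of 0.95, the cluster can launch all reduce tasks immediately, and they receive and process data as the map tasks finish. With a factor of 1.75, only part of the reduce tasks start in the first round; nodes that finish their reduces quickly then start another one, while slower nodes do not need to. This gives better load balancing.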
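The 0.95 and 1.75 factors translate into a simple calculation. The Python sketch below applies them to a cluster like the one in this post, assuming three worker nodes with the default of two reduce slots each; the function name `suggested_reduce_tasks` is hypothetical, and rounding down is a choice made here, not something the rule prescribes.

```python
def suggested_reduce_tasks(worker_nodes, reduce_slots_per_node, factor):
    """Return factor * (total reduce slots in the cluster), rounded down, at least 1.

    factor is 0.95 for a single wave of reduces, or 1.75 to let faster
    nodes run a second wave.
    """
    total_slots = worker_nodes * reduce_slots_per_node
    return max(1, int(total_slots * factor))


if __name__ == "__main__":
    # Three DataNode/TaskTracker hosts, two reduce slots on each (the default).
    single_wave = suggested_reduce_tasks(3, 2, 0.95)
    two_waves = suggested_reduce_tasks(3, 2, 1.75)
    print("mapred.reduce.tasks for one wave:", single_wave)
    print("mapred.reduce.tasks for two waves:", two_waves)
```

For this three-node cluster the two settings come out at 5 and 10 reduce tasks respectively, compared with the default of 1.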