Introduction

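In my previous post I covered custom input formats. This time let's look at the following problem.

This is the raw data.

We want to produce two files from it: one containing the news lines that mention bigdata, and another containing all the other news lines.

A custom output format makes this possible.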

Code

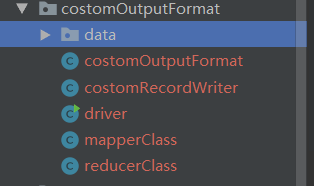

First, the directory structure:

mapperClass

package costomOutputFormat;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

/**

* @Author: Braylon

* @Date: 2020/1/29 11:48

* @Version: 1.0

*/

public class mapperClass extends Mapper<LongWritable, Text, Text, NullWritable> {

Text k = new Text();

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

String s = value.toString();

k.set(s);

context.write(k, NullWritable.get());

}

}

The logic here is simple: each input line is used as the key and passed on to the next stage.

reducerClass

package costomOutputFormat;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

/**

* @Author: Braylon

* @Date: 2020/1/29 11:52

* @Version: 1.0

*/

public class reducerClass extends Reducer<Text, NullWritable, Text, NullWritable> {

@Override

protected void reduce(Text key, Iterable<NullWritable> values, Context context) throws IOException, InterruptedException {

String s = key.toString();

s = s + "\r\n";

context.write(new Text(s), NullWritable.get());

}

}

The reducer appends a line break to each key. Nothing else is notable here; everything has been simple so far.
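One side effect of this mapper/reducer pair is worth spelling out. Each whole line is the key and the reducer writes once per key, so identical input lines collapse into a single output line, and within one reducer the keys arrive in sorted order. The sketch below simulates that behavior in plain Java. `ShuffleSketch` is a hypothetical name that is not part of the project, and a `TreeSet` only stands in for Hadoop's shuffle (the ordering matches for ASCII text).

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.TreeSet;

// Hypothetical stand-in for mapperClass + shuffle + reducerClass, without Hadoop.
class ShuffleSketch {

    // Returns what the reducer would emit for the given input lines.
    static List<String> run(List<String> inputLines) {
        // The mapper emits every line as a key; the shuffle groups equal keys
        // and sorts them. A TreeSet approximates both effects.
        TreeSet<String> distinctSortedKeys = new TreeSet<>(inputLines);

        List<String> output = new ArrayList<>();
        for (String key : distinctSortedKeys) {
            // reducerClass writes once per key and appends a line break.
            output.add(key + "\r\n");
        }
        return output;
    }

    public static void main(String[] args) {
        List<String> input = Arrays.asList("b news", "a bigdata news", "b news");
        for (String line : run(input)) {
            System.out.print(line);
        }
    }
}
```

If duplicate lines must be kept, the reducer would have to write once per element of `values` instead of once per key.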

costomOutputFormat:

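If you read my previous post, you may remember that we implemented custom input by extending FileInputFormat. The same idea applies here: we extend FileOutputFormat.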

package costomOutputFormat;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.RecordWriter;

import org.apache.hadoop.mapreduce.TaskAttemptContext;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/**

* @Author: Braylon

* @Date: 2020/1/29 11:54

* @Version: 1.0

*/

public class costomOutputFormat extends FileOutputFormat<Text, NullWritable> {

@Override

public RecordWriter<Text, NullWritable> getRecordWriter(TaskAttemptContext taskAttemptContext) throws IOException, InterruptedException {

return new costomRecordWriter(taskAttemptContext);

}

}

This brings us to the key piece: costomRecordWriter, a class of our own that extends RecordWriter. Just like the RecordReader in the custom input example, it is where most of the logic lives.

costomRecordWriter:

package costomOutputFormat;

import org.apache.hadoop.fs.FSDataInputStream;

import org.apache.hadoop.fs.FSDataOutputStream;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.RecordWriter;

import org.apache.hadoop.mapreduce.TaskAttemptContext;

import java.io.IOException;

/**

* @Author: Braylon

* @Date: 2020/1/29 11:56

* @Version: 1.0

*/

public class costomRecordWriter extends RecordWriter<Text, NullWritable> {

FSDataOutputStream bigdata = null;

FSDataOutputStream other = null;

public costomRecordWriter(TaskAttemptContext context) {

FileSystem fs;

try {

fs = FileSystem.get(context.getConfiguration());

Path path1 = new Path("D:\\idea\\HDFS\\src\\main\\java\\costomOutputFormat\\data\\out1");

Path path2 = new Path("D:\\idea\\HDFS\\src\\main\\java\\costomOutputFormat\\data\\out2");

bigdata = fs.create(path1);

other = fs.create(path2);

} catch (IOException e) {

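// Fail fast: otherwise the streams stay null and write() throws a NullPointerException later

throw new RuntimeException("Failed to open output files", e);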

}

}

@Override

public void write(Text text, NullWritable nullWritable) throws IOException, InterruptedException {

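// Check whether the line contains the target string

if (text.toString().contains("bigdata")) {

// Text stores UTF-8 bytes; only the first getLength() bytes of the backing array are valid

bigdata.write(text.getBytes(), 0, text.getLength());

} else {

other.write(text.getBytes(), 0, text.getLength());

}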

}

@Override

public void close(TaskAttemptContext taskAttemptContext) throws IOException, InterruptedException {

if (bigdata != null) {

bigdata.close();

}

if (other != null) {

other.close();

}

}

}

Key points

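- The constructor receives the context, which provides the Configuration used to obtain the FileSystem.

- The write method is overridden to check whether the line contains the target string and to write it to the corresponding file.

- Note the close method. I have not shown this pattern before: usually we close a stream right after the last fos.write call, so it may look unfamiliar. Here the framework calls close once the task has finished writing, and its job is to close the two output streams. The logic is still easy to follow.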
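To make the three points above concrete, here is a minimal plain-Java sketch of the same lifecycle: open the streams once, route every record in write, close once at the end. `LineRouter` and `LineRouterDemo` are hypothetical names that are not part of the project, and in-memory `java.io` streams replace Hadoop's FSDataOutputStream so that it runs without Hadoop.

```java
import java.io.ByteArrayOutputStream;
import java.io.Closeable;
import java.io.IOException;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

// Hypothetical plain-Java stand-in for costomRecordWriter.
class LineRouter implements Closeable {
    private final OutputStream bigdata;
    private final OutputStream other;

    // The two destinations are set up once, as in the constructor above.
    LineRouter(OutputStream bigdata, OutputStream other) {
        this.bigdata = bigdata;
        this.other = other;
    }

    // Called once per record, like RecordWriter.write.
    void write(String line) throws IOException {
        byte[] bytes = line.getBytes(StandardCharsets.UTF_8);
        if (line.contains("bigdata")) {
            bigdata.write(bytes);
        } else {
            other.write(bytes);
        }
    }

    // Called once after the last record, like RecordWriter.close.
    @Override
    public void close() throws IOException {
        try {
            bigdata.close();
        } finally {
            other.close();
        }
    }
}

class LineRouterDemo {
    // Routes the lines and returns { bigdata output, other output }.
    static String[] route(String... lines) {
        ByteArrayOutputStream big = new ByteArrayOutputStream();
        ByteArrayOutputStream rest = new ByteArrayOutputStream();
        try (LineRouter router = new LineRouter(big, rest)) {
            for (String line : lines) {
                router.write(line + "\r\n"); // the reducer appends the line break
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return new String[] {
            new String(big.toByteArray(), StandardCharsets.UTF_8),
            new String(rest.toByteArray(), StandardCharsets.UTF_8)
        };
    }

    public static void main(String[] args) {
        String[] out = route("bigdata news A", "sports news B", "bigdata news C");
        System.out.print(out[0]);
        System.out.print(out[1]);
    }
}
```

In the real job Hadoop plays the role of the try-with-resources block: it calls close for us when the task has written its last record.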

driver class:

package costomOutputFormat;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/**

* @Author: Braylon

* @Date: 2020/1/29 12:06

* @Version: 1.0

*/

public class driver {

public static void main(String[] args) throws IOException {

args = new String[]{"D:\\idea\\HDFS\\src\\main\\java\\costomOutputFormat\\data\\1.txt", "D:\\idea\\HDFS\\src\\main\\java\\costomOutputFormat\\out"};

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

job.setJarByClass(driver.class);

job.setMapperClass(mapperClass.class);

job.setReducerClass(reducerClass.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(NullWritable.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(NullWritable.class);

job.setOutputFormatClass(costomOutputFormat.class);

FileInputFormat.setInputPaths(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

/* Although a custom OutputFormat is set, costomOutputFormat extends FileOutputFormat,

* so a _SUCCESS file is still written and an output directory must be specified. */

try {

job.waitForCompletion(true);

System.out.println("done");

} catch (InterruptedException e) {

e.printStackTrace();

} catch (ClassNotFoundException e) {

e.printStackTrace();

}

}

}

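There is nothing new here.

Take care of your health, everyone, and don't add to the country's troubles.

Stay strong, Wuhan.

Let's keep encouraging each other~~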