First, let's briefly analyze the parameters sent when logging in to Renren (人人网).

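The part that matters most is the form data, that is, the parameters that must be sent with the login POST request to Renren. They are listed clearly in the request's form data, and several of them have fixed values.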

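email, origURL, domain, key_id and rkey also stay the same for a certain period of time. That leaves one field to deal with: the captcha, icode. To keep the demo simple, we extract the captcha image link, save the image locally, and type the code in by hand.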
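As a rough illustration of how these fields fit together, the sketch below separates the fixed values from the per-account and per-attempt ones and URL-encodes the result into a POST body. The helper name `build_login_form` and the placeholder credentials are assumptions made for this example; the field names and fixed values are the ones used by the spider later in this post.

```python
from urllib.parse import urlencode

# Values that do not change between requests (taken from the spider in this post).
FIXED_FIELDS = {
    "origURL": "http://www.renren.com/home",
    "domain": "renren.com",
    "key_id": "1",
    "captcha_type": "web_login",
}


def build_login_form(email, password_hash, rkey, icode=None):
    """Hypothetical helper: merge fixed, per-account and per-attempt fields."""
    form = dict(FIXED_FIELDS)
    form.update({"email": email, "password": password_hash, "rkey": rkey})
    if icode:
        # The captcha is only added when one was actually requested.
        form["icode"] = icode
    return form


# Placeholder values, not real credentials.
form = build_login_form("user@example.com", "hashed-password", "rkey-value", icode="abcd")
body = urlencode(form)  # the form-encoded string sent as the POST body
print(body)
```

Keeping the fixed fields in one place makes it obvious that only `icode` has to be obtained fresh for each login attempt.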

The captcha link can be found by inspecting the login page.
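The spider in this post locates the captcha with the XPath `//img[@id='verifyPic_login']/@src`. The same lookup can be sketched with only the standard library, which is handy for trying the idea without a Scrapy project. The HTML fragment and the `/captcha.jpg` path below are made up for illustration; only the `verifyPic_login` id comes from the spider code, and the real page markup may differ.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CaptchaFinder(HTMLParser):
    """Remembers the src of the <img> whose id is 'verifyPic_login'."""

    def __init__(self):
        super().__init__()
        self.src = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "img" and attrs.get("id") == "verifyPic_login":
            self.src = attrs.get("src")


# Hypothetical page fragment used only for this example.
SAMPLE_HTML = '<div><img id="verifyPic_login" src="/captcha.jpg?t=web_login"></div>'


def find_captcha_url(html, page_url):
    """Return the absolute captcha URL, or None when the page shows no captcha."""
    finder = CaptchaFinder()
    finder.feed(html)
    if finder.src is None:
        return None
    # Relative links are resolved against the URL of the page they came from.
    return urljoin(page_url, finder.src)


print(find_captcha_url(SAMPLE_HTML, "http://www.renren.com/"))
```

Returning `None` when no image is found mirrors the `if` check in the spider: the `icode` field is only needed when the page actually displays a captcha.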

Next, create a Scrapy project. The process is not covered in detail here; the commands are:

    scrapy startproject renren_login
    cd renren_login
    scrapy genspider renren "renren.com"

The settings can be configured along these lines:

    BOT_NAME = 'renren_login'

    SPIDER_MODULES = ['renren_login.spiders']
    NEWSPIDER_MODULE = 'renren_login.spiders'

    # Do not check robots.txt before sending requests
    ROBOTSTXT_OBEY = False

    # Headers sent with every request unless overridden
    DEFAULT_REQUEST_HEADERS = {
        'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
        'Accept-Language': 'en',
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/65.0.3325.181 Safari/537.36',
    }

    ITEM_PIPELINES = {
        'renren_login.pipelines.RenrenLoginPipeline': 300,
    }

Since there is no other business logic to handle here, we leave the item definitions unchanged for now and go straight to the login code:

    # -*- coding: utf-8 -*-
    import time
    from urllib import request

    import scrapy
    from PIL import Image


    class RenrenSpider(scrapy.Spider):
        name = 'renren'
        allowed_domains = ['renren.com']
        start_urls = ['http://renren.com/']

        def parse(self, response):
            # url = "http://www.renren.com/PLogin.do"
            # url = "http://www.renren.com/ajaxLogin/login"
            base_url = 'http://www.renren.com/ajaxLogin/login?1=1&uniqueTimestamp='
            s = time.strftime("%S")
            # Millisecond part of the current time (0..999)
            ms = int(time.time() * 1000) % 1000
            date_time = '20191001' + s + str(ms)
            login_url = base_url + date_time

            formdata = {
                "email": "13825511231",
                "origURL": "http://www.renren.com/home",
                "domain": "renren.com",
                "key_id": "1",
                "captcha_type": "web_login",
                "password": "7325db48b424e07cc1cda55e9a346d7913b44bfc6d7519cdca8ef944392e0",
                "rkey": "3f674e5c4940658971a514b5bdb19571",
                "f": "http%3A%2F%2Fwww.renren.com%2F972777765%2Fnewsfeed%2Fphoto",
            }

            # The captcha image is only present when the page asks for one
            captcha_image_url = response.xpath("//img[@id='verifyPic_login']/@src").get()
            if captcha_image_url:
                # urljoin turns a relative src into an absolute URL
                image_code = self.parse_image_code(response.urljoin(captcha_image_url))
                formdata['icode'] = image_code

            # Send the login request whether or not a captcha was required
            yield scrapy.FormRequest(url=login_url, formdata=formdata, callback=self.after_login)

        # Parse the image captcha
        def parse_image_code(self, image_url):
            # Save the captcha locally, display it, and read the code typed by the user
            request.urlretrieve(image_url, "captcha.png")
            image = Image.open("captcha.png")
            image.show()
            input_code = input("Enter the captcha: ")
            return input_code

        def after_login(self, response):
            pass

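Here the Image module from PIL is used only to open and display the captcha so that it can be typed in by hand. In a real project the captcha could be recognized automatically with a third-party service; Alibaba Cloud, for example, offers products of this kind, some of which are paid.

Run the program, enter the code shown in the captcha image that opens, and the login goes through.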
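The spider builds the `uniqueTimestamp` query value from a hard-coded date string, the current seconds, and the millisecond part of the current time. Below is a minimal sketch of that calculation as a standalone function. The name `unique_timestamp` and its parameters are made up for this example, and the site's own JavaScript may assemble the value differently.

```python
import time


def unique_timestamp(now=None, date_prefix="20191001"):
    """Rebuild the spider's value: date prefix + seconds + milliseconds."""
    now = time.time() if now is None else now
    # Two-digit seconds of the given moment, e.g. "07"
    seconds = time.strftime("%S", time.localtime(now))
    # Millisecond part of the given moment, 0..999 (not zero-padded, as in the spider)
    millis = int(now * 1000) % 1000
    return date_prefix + seconds + str(millis)


login_url = (
    "http://www.renren.com/ajaxLogin/login?1=1&uniqueTimestamp=" + unique_timestamp()
)
print(login_url)
```

Passing `now` explicitly makes the function easy to check with a fixed moment instead of the live clock.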

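Of course, the login can also be done with the plain requests library. Below is a short demonstration of logging in to Douban (豆瓣网) with requests.

Logging in to Douban is fairly simple: a username and password are all that is needed. As before, we only have to put the relevant parameters into the form data of the request. It is best to fill in the request headers as completely as possible.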

    import requests

    try:
        import cookielib  # Python 2
    except ImportError:
        import http.cookiejar as cookielib  # Python 3

    session = requests.Session()
    # Keep cookies in a jar that can be saved to and loaded from the file "cookies"
    session.cookies = cookielib.LWPCookieJar(filename="cookies")
    try:
        session.cookies.load(ignore_discard=True)
    except (IOError, cookielib.LoadError):
        print("Could not load cookies")

    agent = "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/65.0.3325.181 Safari/537.36"

    # Content-Length is left out: requests computes it from the body.
    # "br" is left out of Accept-Encoding: decoding it needs an extra brotli package.
    headers = {
        "Accept": "application/json",
        "Accept-Encoding": "gzip, deflate",
        "Accept-Language": "zh-CN,zh;q=0.9",
        "Connection": "keep-alive",
        "Content-Type": "application/x-www-form-urlencoded",
        "Host": "accounts.douban.com",
        "Origin": "https://accounts.douban.com",
        "Referer": "https://accounts.douban.com/passport/login?source=movie",
        "User-Agent": agent,
    }


    # Perform the login
    def douban_login():
        login_url = "https://accounts.douban.com/j/mobile/login/basic"
        post_data = {
            "name": "13825511231",
            "password": "your password",
        }
        # Post through the session so the returned cookies end up in session.cookies
        response_session = session.post(login_url, data=post_data, headers=headers)
        print(response_session.text)
        # ignore_discard=True also saves session cookies that have no expiry date
        session.cookies.save(ignore_discard=True)


    douban_login()

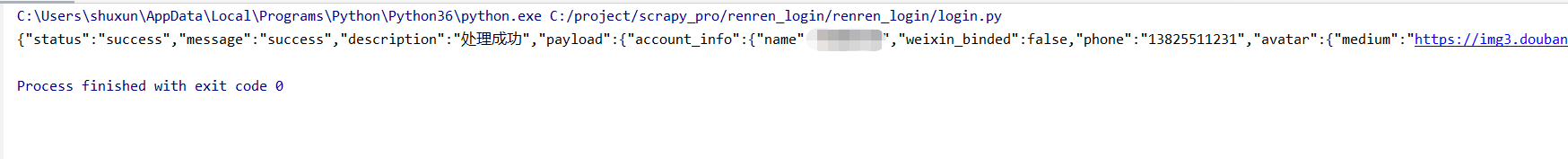

Running this script directly shows that the server has returned a login-success message.
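The script keeps its cookies in an `LWPCookieJar` so that a later run can reuse the logged-in state. The save and load round trip can be tried offline with the sketch below. The cookie name `sid`, its value, and the helper `make_session_cookie` are placeholders invented for this example, not what Douban actually sets.

```python
import os
import tempfile
from http.cookiejar import Cookie, LWPCookieJar


def make_session_cookie(name, value, domain):
    """Build a session cookie (no expiry date) by hand, for demonstration only."""
    return Cookie(
        version=0, name=name, value=value,
        port=None, port_specified=False,
        domain=domain, domain_specified=True,
        domain_initial_dot=domain.startswith("."),
        path="/", path_specified=True,
        secure=False, expires=None, discard=True,
        comment=None, comment_url=None, rest={},
    )


cookie_file = os.path.join(tempfile.mkdtemp(), "cookies")

# First run: pretend the login response set a session cookie, then persist it.
jar = LWPCookieJar(filename=cookie_file)
jar.set_cookie(make_session_cookie("sid", "example-token", ".douban.com"))
jar.save(ignore_discard=True)  # session cookies are skipped without ignore_discard

# Later run: a fresh jar reads the cookie back from disk.
restored = LWPCookieJar(filename=cookie_file)
restored.load(ignore_discard=True)
names = {c.name: c.value for c in restored}
print(names)
```

Both `save` and `load` need `ignore_discard=True` here, because a cookie without an expiry date is treated as a session cookie and is otherwise dropped.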

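That wraps up this short demonstration of simulating a login with Scrapy, along with a requests-based variant. It is meant only as a demo for reference and study, so please forgive any shortcomings. Thanks for reading!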